Quick answer

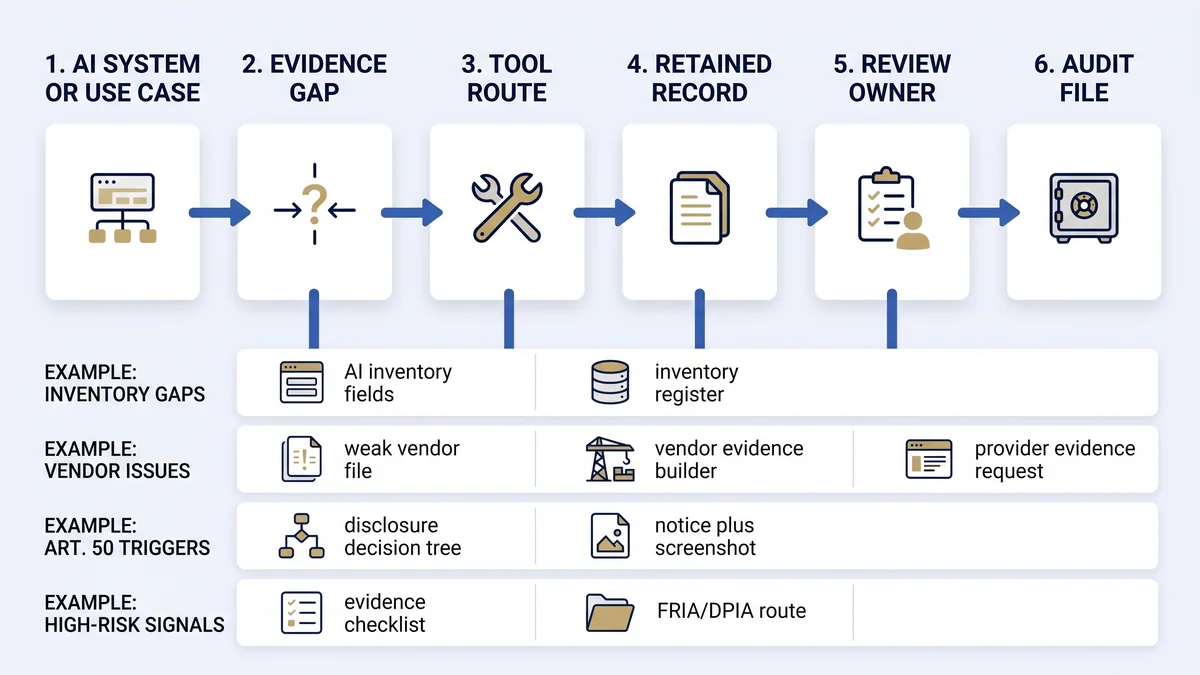

The EU AI Act deployer evidence router is a free browser-based tool that maps one AI system or use case to the records a deployer should prepare: inventory, role and risk rationale, vendor evidence, oversight, logs, Article 50 notices, FRIA/DPIA escalation, incident routes, and audit-readiness files. Start with the AI system inventory.

Current-law planning note

Article 113 of Regulation (EU) 2024/1689 sets 2 August 2026 as the general application date, with staged exceptions. Digital Omnibus material may change parts of the high-risk timeline if adopted, but proposal-stage material should not replace current-law readiness planning. Keep assumptions visible in the evidence file.

Choose your situation

No inventory exists

Create the controlled register before debating obligations.

Open inventory fields →

Vendor evidence is weak

Ask for provider instructions, limitations, logging, oversight, and security evidence.

Build vendor request →

Article 50 may apply

Screen interaction, synthetic content, deepfake, and biometric or emotion triggers.

Run disclosure tree →

AI agents are involved

Separate deployer evidence from runtime boundary and agent-control evidence.

Check agent evidence →

Map your deployer evidence gap

Local-only router

Build a first-pass evidence route

This browser form does not submit entries to EU AI Compass. Use working names only. Do not enter personal data, customer-confidential data, trade secrets, employee records, production credentials, or incident details.

Select the evidence gaps and generate a first-pass route to EU AI Compass tools, records, and escalation owners.

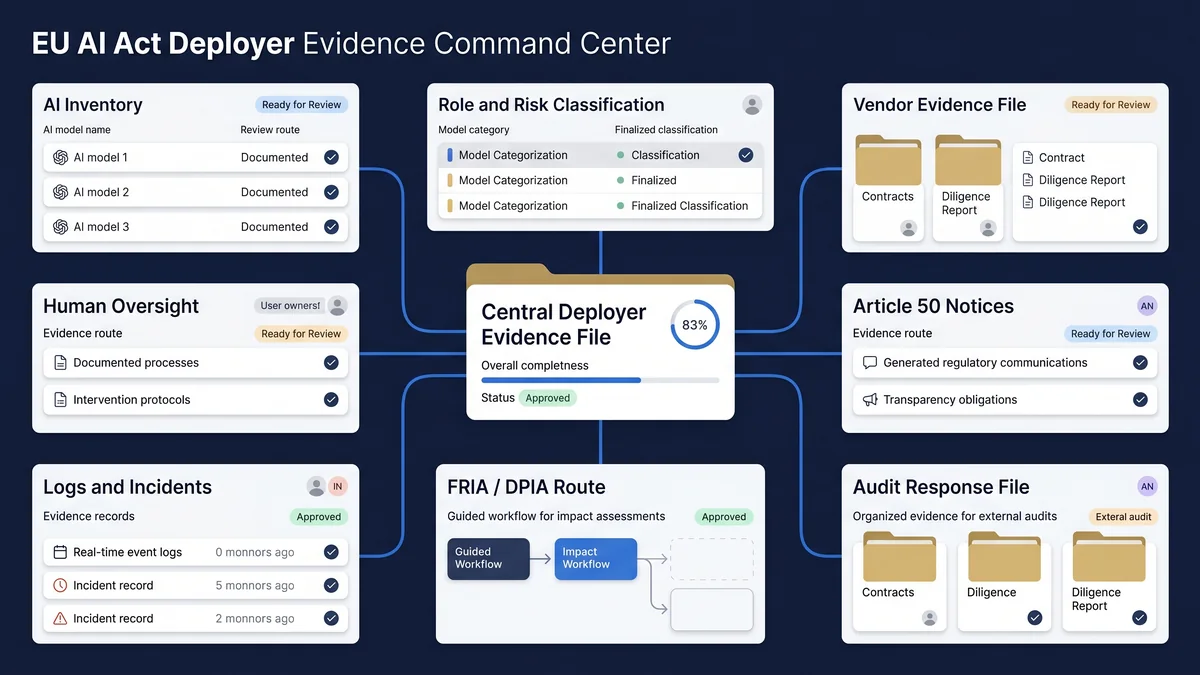

Deployer evidence stack

The deployer evidence stack is the minimum operating file that turns AI use into reviewable records. It should not depend on one vendor document or one policy paragraph.

| Evidence layer | Record to retain | Owner question |
|---|---|---|
| AI inventory | System name, purpose, owner, vendor, data category, deployment stage, users, geography. | Who owns the system and when was the record last reviewed? |
| Role and risk route | Provider/deployer assumption, Article 6/Annex III signals, high-risk rationale, uncertainty log. | Who approved the classification basis? |
| Vendor evidence | Instructions for use, limitations, oversight guidance, logging support, security and change notes. | Can the deployer explain reliance on provider information? |
| Oversight and monitoring | Human oversight assignment, training, intervention path, monitoring notes, review cadence. | Who can stop, escalate, or override the workflow? |
| Logs and incidents | Logs under deployer control, event review, suspected risk, serious-incident route, closure record. | Who checks the log or incident trail? |
| Article 50 transparency | Notice text, placement screenshot, first-exposure context, provider marking evidence, approval record. | Who approved what people see? |
| FRIA / DPIA route | FRIA screening note, DPIA linkage, affected groups, risks, oversight and mitigation evidence. | Who decides whether formal legal/privacy review is required? |
| Audit response file | Summary pack, evidence index, open gaps, accepted risks, unresolved legal questions. | Who can answer review questions without rebuilding the file? |
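
The AI inventory row can be held as a structured record so that the owner question becomes a check rather than a conversation. The sketch below is illustrative only: the class name, field names, and the 180-day review cadence are assumptions, not fields or rules defined by EU AI Compass or by the Regulation.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class InventoryRecord:
    """One AI inventory entry; field names are hypothetical."""
    system_name: str
    purpose: str
    owner: str
    vendor: str
    data_category: str
    deployment_stage: str
    users: str
    geography: str
    last_reviewed: Optional[date] = None

    def open_questions(self, today: date, max_age_days: int = 180) -> list[str]:
        """Return the owner questions this record cannot yet answer.

        max_age_days is an assumed internal review cadence, not a legal limit.
        """
        questions = []
        if not self.owner.strip():
            questions.append("Who owns the system?")
        if self.last_reviewed is None:
            questions.append("When was the record last reviewed?")
        elif (today - self.last_reviewed).days > max_age_days:
            questions.append("Record review is older than the chosen cadence.")
        return questions


# Working names only, in line with the data-entry caution above.
record = InventoryRecord(
    system_name="cv-screening-pilot",
    purpose="rank applicants",
    owner="",
    vendor="ExampleVendor",
    data_category="employment data",
    deployment_stage="pilot",
    users="HR team",
    geography="EU",
)
print(record.open_questions(today=date(2026, 5, 3)))
```

A record with an empty owner or no review date surfaces both parts of the owner question before any obligation mapping starts.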

Article 26 evidence map

Article 26 evidence should convert deployer obligations into records that can be checked. The table below is an operational map, not a legal determination.

| Article 26 area | Evidence artifact | Common gap |
|---|---|---|
| Use according to instructions | Provider instructions, approved operating procedure, version record. | Vendor instructions exist but are not connected to the actual deployment. |
| Human oversight | Named human oversight owner, competence record, intervention and stop path. | Oversight named in policy but not assigned to a trained person. |
| Input data under deployer control | Input-data relevance and representativeness check where the deployer controls input data. | Data owner assumes the provider handles all data-quality questions. |
| Monitoring and risk escalation | Monitoring cadence, trigger criteria, provider/distributor/authority escalation path. | Team has logs but no review rule or escalation owner. |
| Logs under deployer control | Log-retention decision, minimum retention basis, privacy review where needed. | Logs are available technically but not retained as evidence. |
| Workplace use | Worker and representative information record where workplace rules apply. | HR or works-council review is started too late. |
| Public-authority registration checks | Registration verification or provider escalation where database entry is missing. | Use begins before registration status is checked. |
| DPIA linkage | DPIA cross-reference using provider information under Article 13 where applicable. | DPIA and AI Act evidence files are maintained separately with inconsistent assumptions. |
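
Several rows in the map apply only under a condition ("where the deployer controls input data", "where workplace rules apply"). A minimal sketch of that filtering, followed by a gap check, might look like the code below. The fact keys (`controls_input_data`, `dpia_applies`, and so on) are hypothetical names chosen for illustration, and treating an unknown fact as applicable is a conservative design assumption, not a legal rule.

```python
from typing import Optional

# (area, condition key) in table order; None means the area is always checked.
AREAS: list[tuple[str, Optional[str]]] = [
    ("Use according to instructions", None),
    ("Human oversight", None),
    ("Input data under deployer control", "controls_input_data"),
    ("Monitoring and risk escalation", None),
    ("Logs under deployer control", "controls_logs"),
    ("Workplace use", "workplace_use"),
    ("Public-authority registration checks", "public_authority"),
    ("DPIA linkage", "dpia_applies"),
]


def applicable_areas(facts: dict[str, bool]) -> list[str]:
    """Keep every area whose condition is true or not yet known."""
    return [
        area
        for area, condition in AREAS
        if condition is None or facts.get(condition, True)
    ]


def missing_evidence(areas: list[str], retained: dict[str, list[str]]) -> list[str]:
    """List areas with no retained artifact at all."""
    return [area for area in areas if not retained.get(area)]


facts = {
    "controls_input_data": False,
    "controls_logs": True,
    "workplace_use": True,
    "public_authority": False,
    "dpia_applies": False,
}
retained = {"Human oversight": ["Named owner record"], "Workplace use": []}
for area in missing_evidence(applicable_areas(facts), retained):
    print("Gap:", area)
```

The output is a working list of gaps for the evidence file. Whether an area actually applies remains a question for qualified review.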

Tool routing table

| If the evidence gap is... | Use this EU AI Compass route | Expected output |
|---|---|---|
| No inventory | AI system inventory fields | Inventory structure and field-level evidence logic. |
| Evidence plan unclear | 30-day evidence planner | Week-by-week action path. |
| Audit response needed | Audit and regulator questions hub | Likely review questions and evidence prompts. |
| Evidence checklist needed | EU AI Act evidence checklist | Evidence areas to create, assign, and retain. |
| Vendor evidence missing | Vendor evidence request builder | Structured request language for provider/vendor evidence. |
| Article 50 trigger possible | Article 50 disclosure decision tree | Notice draft, evidence list, and escalation notes. |
| Article 50 implementation needed | Article 50 implementation pack | Implementation path for notice operations and evidence retention. |
| AI agent runtime involved | AI agent deployer evidence checklist | Agent boundary, runtime, tool-use, oversight, and evidence checks. |
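
The routing table is effectively a lookup from evidence gap to tool. The sketch below shows one way to express it; it is not the router's actual implementation, and the gap keys such as `no_inventory` are hypothetical identifiers invented for this example.

```python
# Gap key -> (EU AI Compass route, expected output), in table order.
ROUTES: dict[str, tuple[str, str]] = {
    "no_inventory": ("AI system inventory fields",
                     "Inventory structure and field-level evidence logic."),
    "plan_unclear": ("30-day evidence planner",
                     "Week-by-week action path."),
    "audit_response_needed": ("Audit and regulator questions hub",
                              "Likely review questions and evidence prompts."),
    "checklist_needed": ("EU AI Act evidence checklist",
                         "Evidence areas to create, assign, and retain."),
    "vendor_evidence_missing": ("Vendor evidence request builder",
                                "Structured request language for provider/vendor evidence."),
    "article_50_possible": ("Article 50 disclosure decision tree",
                            "Notice draft, evidence list, and escalation notes."),
    "article_50_implementation": ("Article 50 implementation pack",
                                  "Implementation path for notice operations and evidence retention."),
    "agent_runtime": ("AI agent deployer evidence checklist",
                      "Agent boundary, runtime, tool-use, oversight, and evidence checks."),
}


def build_route(gaps: list[str]) -> list[tuple[str, str]]:
    """Return the routes for the selected gaps, deduplicated, in table order."""
    unknown = [gap for gap in gaps if gap not in ROUTES]
    if unknown:
        raise ValueError(f"Unknown evidence gaps: {unknown}")
    selected = set(gaps)
    return [route for key, route in ROUTES.items() if key in selected]


for tool, output in build_route(["vendor_evidence_missing", "no_inventory"]):
    print(f"{tool}: {output}")
```

Returning routes in table order keeps the inventory first whenever it is selected, which matches the advice to start with the AI system inventory.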

30-day evidence path

Week 1: Inventory

Name systems, owners, vendors, users, purposes, and deployment stage.

Week 2: Role and risk

Record provider/deployer assumptions, high-risk signals, and uncertainty.

Week 3: Evidence requests

Collect vendor instructions, oversight, logs, limitation, and security evidence.

Week 4: Review file

Route Article 50, FRIA/DPIA, incident, legal, privacy, and audit questions.
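
To turn the four weeks into dated milestones, a small scheduling helper is enough. The sketch below assumes consecutive seven-day blocks from a chosen start date; the function name and the start date are illustrative.

```python
from datetime import date, timedelta

# Week titles and tasks as listed in the 30-day evidence path.
WEEKS = [
    ("Inventory",
     "Name systems, owners, vendors, users, purposes, and deployment stage."),
    ("Role and risk",
     "Record provider/deployer assumptions, high-risk signals, and uncertainty."),
    ("Evidence requests",
     "Collect vendor instructions, oversight, logs, limitation, and security evidence."),
    ("Review file",
     "Route Article 50, FRIA/DPIA, incident, legal, privacy, and audit questions."),
]


def evidence_plan(start: date) -> list[tuple[int, date, date, str]]:
    """Return (week number, first day, last day, title) for each week."""
    plan = []
    for index, (title, _task) in enumerate(WEEKS):
        first_day = start + timedelta(days=7 * index)
        last_day = first_day + timedelta(days=6)
        plan.append((index + 1, first_day, last_day, title))
    return plan


for week, first_day, last_day, title in evidence_plan(date(2026, 6, 1)):
    print(f"Week {week} ({first_day} to {last_day}): {title}")
```

Four seven-day blocks cover 28 days, so a 30-day window leaves two days of slack for sign-off or overdue vendor responses.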

Escalation triggers

| Trigger | Escalate to | Evidence to retain |
|---|---|---|
| High-risk classification is plausible or disputed. | Legal, compliance, product owner. | Classification rationale, assumptions, and unresolved questions. |
| Personal data, biometric data, employment data, or sensitive groups are involved. | DPO, privacy counsel, HR where relevant. | DPIA/FRIA screening note, data map, affected group analysis. |
| Deepfake, synthetic media, or public-interest text is published. | Legal, communications, content owner. | Article 50 notice, screenshot, approval and publication context. |
| Vendor cannot explain limitations, logs, oversight, or security controls. | Procurement, security, risk owner. | Vendor evidence request, response, residual-risk decision. |
| Operational monitoring finds risk, complaint, or incident signals. | Security, legal, product, competent authority path where relevant. | Incident intake, log extract, containment note, escalation timeline. |
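
When more than one trigger fires for the same system, the escalation owners overlap (Legal appears in three rows). A minimal sketch of merging them into one contact list and one evidence list follows; the trigger keys are hypothetical labels, and the owner names are shortened from the table.

```python
# Trigger key -> (owners to escalate to, evidence to retain).
ESCALATIONS: dict[str, tuple[list[str], list[str]]] = {
    "high_risk_plausible": (
        ["Legal", "Compliance", "Product owner"],
        ["Classification rationale", "Assumptions", "Unresolved questions"],
    ),
    "personal_or_sensitive_data": (
        ["DPO", "Privacy counsel", "HR where relevant"],
        ["DPIA/FRIA screening note", "Data map", "Affected group analysis"],
    ),
    "deepfake_or_public_text": (
        ["Legal", "Communications", "Content owner"],
        ["Article 50 notice", "Screenshot", "Approval and publication context"],
    ),
    "vendor_cannot_explain": (
        ["Procurement", "Security", "Risk owner"],
        ["Vendor evidence request", "Response", "Residual-risk decision"],
    ),
    "monitoring_signal": (
        ["Security", "Legal", "Product", "Competent authority path where relevant"],
        ["Incident intake", "Log extract", "Containment note", "Escalation timeline"],
    ),
}


def escalation_plan(triggers: list[str]) -> dict[str, list[str]]:
    """Merge owners and evidence for the given triggers, keeping first-seen order."""
    owners: list[str] = []
    evidence: list[str] = []
    for trigger in triggers:
        trigger_owners, trigger_evidence = ESCALATIONS[trigger]  # KeyError if unknown
        owners.extend(o for o in trigger_owners if o not in owners)
        evidence.extend(e for e in trigger_evidence if e not in evidence)
    return {"owners": owners, "evidence": evidence}


plan = escalation_plan(["high_risk_plausible", "deepfake_or_public_text"])
print(plan["owners"])
```

Each owner appears once however many triggers name them, and the merged evidence list can be copied into the evidence index.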

Common evidence mistakes

- Starting with a vendor PDF instead of an AI system inventory.

- Keeping classification logic in email instead of a retained record.

- Assigning human oversight to a role, not a named accountable owner.

- Assuming logs exist without checking who controls and retains them.

- Publishing Article 50 notices without screenshots or version dates.

- Treating FRIA and DPIA as the same artifact.

- Using proposal-stage deadlines as if adopted law.

- Waiting for audit questions before building the evidence index.

Frequently asked questions

Use these answers to check scope, evidence depth, escalation points, and limits before relying on the evidence hub.

EU AI Act deployer evidence should usually start with an AI system inventory. The inventory should name the system, owner, vendor, purpose, user group, deployment stage, data categories, role assumption, risk route, Article 50 trigger, and evidence status. Without the inventory, later vendor review, Article 26 mapping, FRIA/DPIA routing, and audit preparation become guesswork.

An AI inventory is necessary but not enough for EU AI Act readiness. The inventory tells the team what exists; the evidence file should also show instructions for use, human oversight assignment, input-data checks where controlled by the deployer, log retention where available, monitoring, vendor evidence, Article 50 notice records, and escalation decisions.

Article 26 deployer evidence commonly maps to use according to instructions, competent human oversight, input-data relevance where the deployer controls inputs, monitoring, risk or incident escalation, log retention where logs are under deployer control, workplace notification where applicable, registration checks for public authorities, DPIA linkage, and cooperation with authorities.

A deployer usually needs vendor evidence because provider instructions, technical documentation extracts, model limitations, log availability, human oversight guidance, cybersecurity information, and Article 50 marking support all affect the deployer evidence file. A vendor PDF is not enough if it does not answer the deployment-specific review questions.

Article 50 disclosure evidence should sit beside the AI system inventory and publication record. Keep the notice text, version date, screen placement, first-exposure timing, audience, workflow owner, provider marking evidence where relevant, editorial-control record where relied upon, and legal or communications review note.

FRIA or DPIA review should be escalated when the system may be high-risk, affects people in a sensitive context, processes personal data, supports consequential decisions, involves employment or public-service use, or creates rights-impact questions. Use the evidence route builder as triage only; the final FRIA, DPIA, legal, and privacy positions need qualified review.

The EU AI Act Deployer Evidence Route Builder does not prove compliance, certify an AI system, or provide legal advice. It helps structure evidence preparation and routes users to free EU AI Compass tools. Compliance depends on system facts, implementation, legal interpretation, governance operation, and continuing review.

Use the current-law deadline as the planning baseline until adopted amendments change it. The Digital Omnibus process may affect high-risk timing, but proposal-stage material should not replace operational readiness work. Keep a change log, record assumptions, and update the evidence plan when the legal position becomes final.

Source and review note

This page provides operational guidance, not legal advice. It was last reviewed on 3 May 2026 against the AI Act Service Desk text for Article 113, Article 26, Article 50, Article 27, and the European Parliament Legislative Train page for the Digital Omnibus on AI. Validate final legal, privacy, employment, procurement, security, tax, and sector-specific decisions with qualified professionals.

Build the evidence file before the review question arrives

Start with the evidence route, then use the planner or inventory fields to turn the result into a retained record.