Quick answer

Before deploying high-risk AI, ask the vendor for a deployer-facing evidence pack: intended purpose, role classification, instructions for use, limitations, accuracy and cybersecurity claims, input-data requirements, human oversight measures, logging access, change-control triggers, incident contacts, and conformity evidence. Keep gaps visible. Vendor claims do not replace deployer evidence.

“We are AI Act compliant” is not evidence. It is a claim. Procurement should ask for the file behind the claim.

Why the vendor handoff matters

A deployer is the organisation using an AI system under its authority. A provider is the party that develops or has an AI system developed and places it on the market or puts it into service under its own name or trademark. In procurement language both may be called “the vendor”, but the EU AI Act roles are not interchangeable.

For high-risk AI systems, the vendor handoff matters because the deployer’s Article 26 work depends on the information provided with the system. If your team cannot understand the intended purpose, limitations, oversight controls, log mechanisms, input-data assumptions, and escalation process, it cannot operate the system responsibly or defend its evidence trail.

Practical rule

Do not treat “we are EU AI Act compliant” as evidence. Treat it as a claim that must be supported by documents, roles, controls, and contractual obligations.

Minimum vendor evidence pack

This is the evidence set a deployer should request before signing or before production go-live. It is not a substitute for legal advice, and it is not a claim that every vendor must disclose every internal technical file. It is a practical handoff checklist for deployer due diligence.

| Evidence item | What to ask the vendor for | Why deployers need it |
|---|---|---|
| Role and intended-purpose statement | Provider/deployer/importer/distributor role statement, intended purpose, target users, prohibited or unsupported uses. | Prevents accidental scope drift and supports your internal role and use-case record. |
| High-risk classification basis | Article 6 / Annex III reasoning, including any documented basis for “not high-risk” treatment where the system appears to touch Annex III. | Stops the procurement team from accepting a classification conclusion without evidence. |
| Instructions for use | Clear operating instructions, limitations, expected conditions of use, maintenance needs, hardware/software dependencies, and user responsibilities. | Article 26 requires deployers to use high-risk systems according to the instructions for use. |
| Performance and limitation evidence | Accuracy metrics, robustness expectations, cybersecurity posture, known failure modes, group-specific performance where relevant. | Supports operational acceptance, monitoring design, and risk owner sign-off. |
| Input-data specifications | Required input fields, data quality assumptions, prohibited inputs, representativeness expectations, and data-preparation instructions. | If the deployer controls input data, Article 26 requires relevance and sufficient representativeness for the intended purpose. |
| Human oversight design | Required reviewer role, intervention points, override options, alert logic, training expectations, escalation path, and automation-bias warnings. | Human oversight must be operational, not just a sentence in a policy. |
| Logging and traceability | What logs are generated, where they live, who controls them, retention configuration, export method, interpretation guidance, and audit access. | Article 12 supports traceability, and Article 26 requires deployers to keep the automatically generated logs, to the extent those logs are under their control, for at least six months unless applicable Union or national law provides otherwise. |
| Conformity and compliance evidence | Conformity assessment status, declaration of conformity where applicable, CE marking status, registration evidence, and a deployer-facing technical documentation summary. | Lets procurement distinguish real compliance evidence from sales collateral. |
| Change-control process | Release notes, notification triggers, model/system update policy, re-testing support, and reassessment triggers for material changes. | Silent changes can break classification, oversight, logging, or FRIA/DPIA assumptions. |
| Incident and post-market handoff | Incident reporting contacts, severity thresholds, investigation support, post-market monitoring feedback channel, and response time commitments. | Deployers need a clear path when monitoring reveals risk or serious incidents. |
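
One way to keep gaps visible is to track the evidence pack as structured data instead of free-text notes. The sketch below is illustrative only: the item names mirror the table rows, while the `Status` values, the `location` and `note` fields, and the file path are hypothetical choices, not anything the EU AI Act prescribes.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    RECEIVED = "received"
    PARTIAL = "partial"
    MISSING = "missing"


# Item names mirror the rows of the evidence table.
EVIDENCE_ITEMS = [
    "Role and intended-purpose statement",
    "High-risk classification basis",
    "Instructions for use",
    "Performance and limitation evidence",
    "Input-data specifications",
    "Human oversight design",
    "Logging and traceability",
    "Conformity and compliance evidence",
    "Change-control process",
    "Incident and post-market handoff",
]


@dataclass
class EvidenceRecord:
    item: str
    status: Status = Status.MISSING
    location: str = ""  # hypothetical field: where the document is filed
    note: str = ""      # hypothetical field: what is still outstanding


def new_pack() -> dict[str, EvidenceRecord]:
    """Start every item as MISSING so gaps are visible by default."""
    return {name: EvidenceRecord(name) for name in EVIDENCE_ITEMS}


def open_gaps(pack: dict[str, EvidenceRecord]) -> list[EvidenceRecord]:
    """Return every item that is not fully received, in table order."""
    return [r for r in pack.values() if r.status is not Status.RECEIVED]


pack = new_pack()
pack["Instructions for use"].status = Status.RECEIVED
pack["Instructions for use"].location = "procurement-file/ifu.pdf"  # made-up path
pack["Logging and traceability"].status = Status.PARTIAL
pack["Logging and traceability"].note = "export method not yet documented"

for gap in open_gaps(pack):
    print(f"{gap.status.value:8} {gap.item} {gap.note}")
```

Defaulting every item to `MISSING` means an item nobody has looked at shows up as a gap rather than silently passing.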

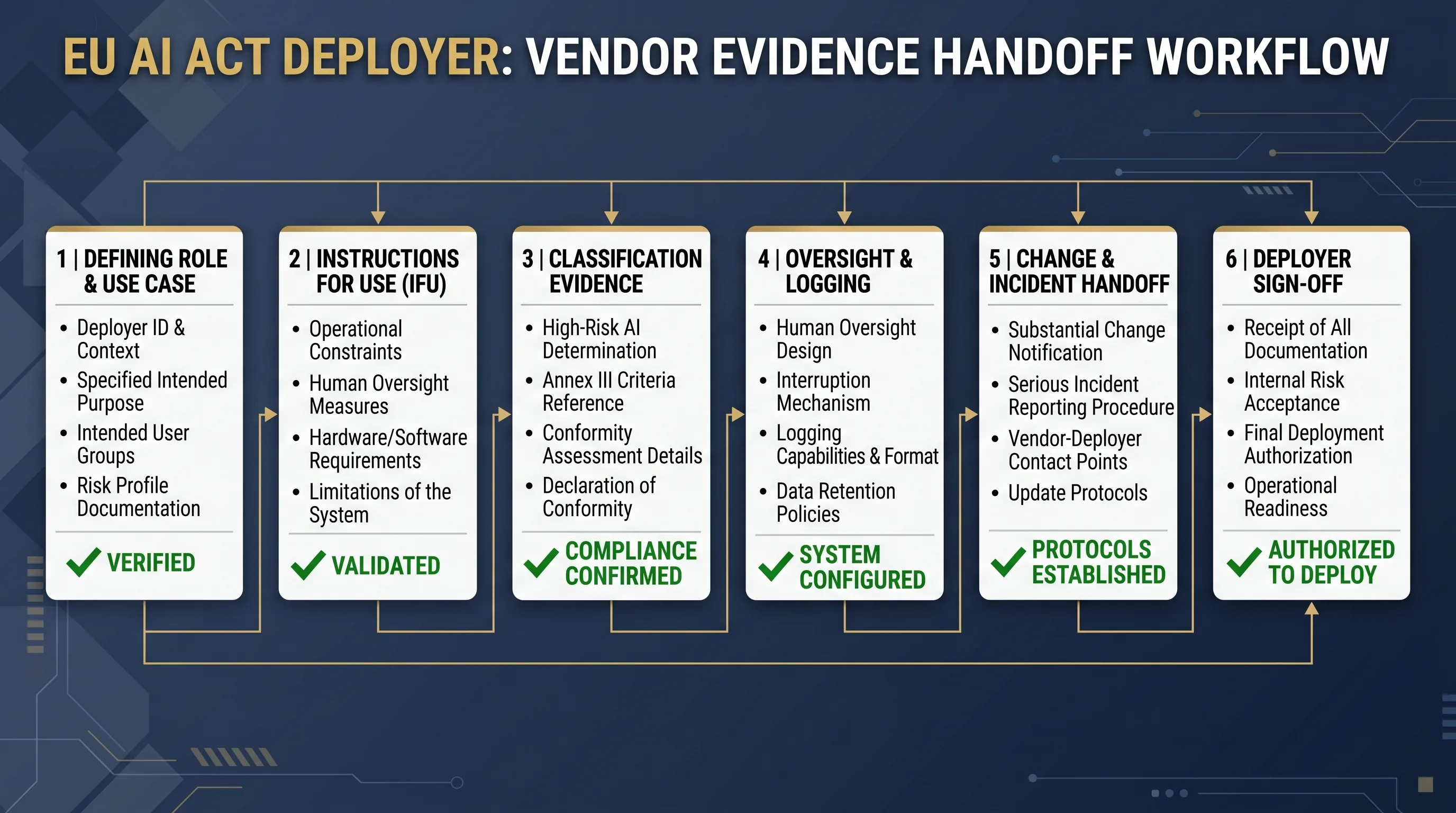

Six-step handoff process

Use this process before production deployment, not after the first exception. It gives procurement, legal, privacy, security, and business owners one evidence spine.

1. Confirm the role and use case. Record whether the vendor is provider, distributor, importer, or another supplier role, then map your deployment context.
2. Collect the instructions-for-use pack. Reject generic marketing PDFs. Ask for operating instructions tied to intended purpose, limitations, oversight, input data, logs, and maintenance.
3. Request classification and conformity evidence. Ask for the classification rationale and applicable conformity, declaration, CE marking, or registration evidence.
4. Validate oversight, logging, and input-data controls. Confirm that your team can operate the controls and retain the evidence in your environment.
5. Define update and incident handoff obligations. Put change triggers, incident contacts, response windows, and investigation support into the contract or procurement file.
6. Record internal sign-off and unresolved gaps. Use a risk acceptance decision when evidence is partial. Do not bury unresolved gaps inside procurement notes.
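
The six steps can be sketched as a simple go-live gate. This is a minimal illustration under stated assumptions: the `Gap` and `Handoff` types and the `accepted_by` field are hypothetical, and treating a gap with a named risk acceptance as non-blocking is one reading of the last step, not a legal rule.

```python
from dataclasses import dataclass, field

# Step names follow the six-step handoff process.
STEPS = [
    "Confirm the role and use case",
    "Collect the instructions-for-use pack",
    "Request classification and conformity evidence",
    "Validate oversight, logging, and input-data controls",
    "Define update and incident handoff obligations",
    "Record internal sign-off and unresolved gaps",
]


@dataclass
class Gap:
    description: str
    accepted_by: str = ""  # hypothetical: named owner of the risk acceptance decision


@dataclass
class Handoff:
    completed_steps: set[str] = field(default_factory=set)
    gaps: list[Gap] = field(default_factory=list)


def go_live_blockers(handoff: Handoff) -> list[str]:
    """List everything that should stop production deployment."""
    blockers = [
        f"step not done: {step}"
        for step in STEPS
        if step not in handoff.completed_steps
    ]
    blockers += [
        f"gap without risk acceptance: {gap.description}"
        for gap in handoff.gaps
        if not gap.accepted_by
    ]
    return blockers


handoff = Handoff(
    completed_steps=set(STEPS[:5]),  # sign-off step still open
    gaps=[
        Gap("no documented log export method"),
        Gap("group-level accuracy not provided", accepted_by="Head of Risk"),
    ],
)

for blocker in go_live_blockers(handoff):
    print(blocker)
```

An empty list from `go_live_blockers` means every step is recorded as done and every remaining gap has a named owner, which is the point at which the decision moves from procurement notes to an explicit sign-off.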

Vendor red flags before deployment

No intended-purpose boundary

If the vendor cannot define what the system is and is not designed to do, your risk classification and oversight design are unstable.

No log export or access model

If the deployer cannot access or interpret logs under its control, the monitoring evidence file is weak from day one.

No human override design

If reviewers cannot interpret, challenge, or override outputs, “human in the loop” is a slogan, not oversight.

Silent model or workflow updates

If updates happen without notice, your Article 26, DPIA, FRIA, and contractual assumptions can become stale without anyone noticing.

Contract controls to request

These are not legal clauses. They are procurement requirements your counsel can convert into contract language.

| Control | Business requirement | Owner to review |
|---|---|---|
| Evidence delivery | Vendor must deliver the deployer evidence pack before go-live and keep it current afterwards. | Procurement + legal + AI governance |
| Change notification | Vendor must give notice of material changes before they are rolled out, where the change affects intended purpose, performance, oversight, logs, or risk controls. | Product owner + legal |
| Incident support | Vendor must support incident investigation, causal analysis, and regulator-facing evidence where applicable. | Security + legal + compliance |
| Log access | Vendor must define generated logs, retention configuration, export rights, and interpretation support. | Security + AI governance |
| Audit support | Vendor must provide reasonable evidence support for audits, regulator questions, and internal governance reviews. | Compliance + procurement |
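
The change-notification requirement can be made operational with a small triage check on each vendor release note. The sketch below assumes the vendor states which areas a release affects. The `release` dictionary, its keys, and the version string are hypothetical, and the area labels are taken from the change notification row.

```python
# Areas named in the change notification requirement.
REASSESSMENT_AREAS = {
    "intended purpose",
    "performance",
    "oversight",
    "logs",
    "risk controls",
}


def needs_reassessment(affected_areas: set[str]) -> set[str]:
    """Return the affected areas that should trigger a deployer reassessment."""
    return affected_areas & REASSESSMENT_AREAS


# Hypothetical release note as the vendor might declare it.
release = {"version": "example-release", "affected_areas": {"logs", "ui theme"}}

hits = needs_reassessment(release["affected_areas"])
if hits:
    print("Reassess before accepting the release:", sorted(hits))
```

A check like this only works if the contract obliges the vendor to declare affected areas in the first place. It does not detect silent changes.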

Use the free EU AI Compass tools first

Start with the free evidence path. Do not buy a toolkit until you know what gap you are solving.

- Structure procurement questions and evidence requests.

- Deployer Obligation Self-Assessment: Check which deployer duties apply to your use case.

- AI System Inventory Template: Record vendor, owner, use case, classification, and evidence location.

- Serious Incident Register: Prepare the incident record before an incident occurs.

If the free tools reveal repeated evidence gaps across several systems, E1/E2 can be used later as the implementation layer for structured templates, control mapping, and board-ready evidence packs. Do not skip the diagnostic step.

FAQ: AI vendor due diligence under the EU AI Act

Is a deployer entitled to the vendor's full technical documentation?

Not always. Article 11 technical documentation is mainly a provider evidence file for authorities and conformity assessment, while Article 13 requires instructions for use that are clear, complete, correct, and comprehensible to deployers. A deployer should ask for a deployer-facing evidence pack, not assume it is entitled to every internal engineering record.

What is the most important document to request from an AI vendor?

The most important vendor document is the instructions-for-use pack because the deployer’s Article 26 duties depend on using the system according to those instructions. The pack should explain intended purpose, limitations, input data requirements, expected performance, oversight measures, logging mechanisms, maintenance, and incident contacts.

Can a vendor questionnaire replace legal review or other due diligence?

No. A vendor questionnaire can structure evidence collection, but it cannot replace legal review, procurement review, DPIA/FRIA assessment, or security due diligence. Use it to identify gaps before contract signature, then route unresolved questions to counsel, procurement, security, privacy, and the business owner.

What should a deployer do if the vendor says the system is not high-risk?

Ask for the documented basis for that classification. Article 6 allows some Annex III systems to be treated as not high-risk only under specific conditions, and providers who rely on that route must document the assessment before placing the system on the market or putting it into service. The deployer should still keep its own use-case record.

Should deployers ask vendors about logging?

Yes. Logging is not a back-office technical detail. Article 12 requires high-risk AI systems to allow automatic recording of events, and Article 26 requires deployers to keep those logs, to the extent the logs are under their control, for at least six months unless applicable Union or national law provides otherwise. If logs are unavailable, deployer evidence is weak.
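
The six-month floor can be checked against a planned deletion date with a few lines of date arithmetic. This is a sketch under assumptions: counting in calendar months and clamping to the end of shorter months is one reasonable convention, the function names are illustrative, and sector-specific law or the system's intended purpose may call for a longer period.

```python
import calendar
from datetime import date

# Minimum retention floor discussed above; other law may require longer.
MIN_RETENTION_MONTHS = 6


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping to the last day of a shorter month."""
    index = d.month - 1 + months
    year = d.year + index // 12
    month = index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def earliest_deletion_date(log_date: date, months: int = MIN_RETENTION_MONTHS) -> date:
    """First date on which a log written on log_date could be deleted."""
    return add_months(log_date, months)


def retention_is_sufficient(log_date: date, planned_deletion: date) -> bool:
    """True if the planned deletion date respects the minimum retention floor."""
    return planned_deletion >= earliest_deletion_date(log_date)


print(earliest_deletion_date(date(2026, 1, 15)))                     # 2026-07-15
print(retention_is_sufficient(date(2026, 1, 15), date(2026, 4, 15)))  # False
```

A vendor-configured 90-day rolling window would fail this check, which is why retention configuration belongs in the evidence pack and not only in the vendor's default settings.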

What should the contract say about model or system updates?

The contract should require prior notice for material changes, release notes, impact information, re-testing support, and a trigger for re-assessing intended purpose, limitations, performance, logging, and human oversight. Silent model changes can break the evidence file even when the user interface looks unchanged.

Can a deployer shift its EU AI Act obligations to the vendor through the contract?

No. Deployers can require vendor support, documentation, notifications, and technical controls, but they still need their own internal evidence for use according to instructions, human oversight assignment, relevant input data, monitoring, log retention, workplace notices, and serious incident escalation where applicable.

When should a deployer pause deployment?

A deployer should pause deployment when the vendor cannot explain intended purpose, limitations, oversight, input data requirements, logging, serious incident handling, classification basis, or change-control triggers. For high-impact use cases, missing evidence before go-live usually becomes a governance defect after go-live.

Source and review note

This page was reviewed against official EU AI Act Service Desk pages and Regulation (EU) 2024/1689 source material available as of 2026-04-30. It is operational guidance for evidence planning, not legal advice.

- AI Act Service Desk: Article 3 definitions

- AI Act Service Desk: Article 6 high-risk classification

- AI Act Service Desk: Article 11 technical documentation

- AI Act Service Desk: Article 12 record-keeping

- AI Act Service Desk: Article 13 information to deployers

- AI Act Service Desk: Article 14 human oversight

- AI Act Service Desk: Article 15 accuracy, robustness, cybersecurity

- AI Act Service Desk: Article 16 provider obligations

- AI Act Service Desk: Article 26 deployer obligations

- AI Act Service Desk: Article 73 serious incident reporting

Legal disclaimer: This page is educational and operational guidance only. It does not provide legal advice and does not guarantee EU AI Act compliance. Validate system-specific decisions with qualified legal, privacy, procurement, and security advisers.

About the author: Abhishek G Sharma is the founder of Move78 International Limited and holds ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, and CAIRO certifications.