Quick answer

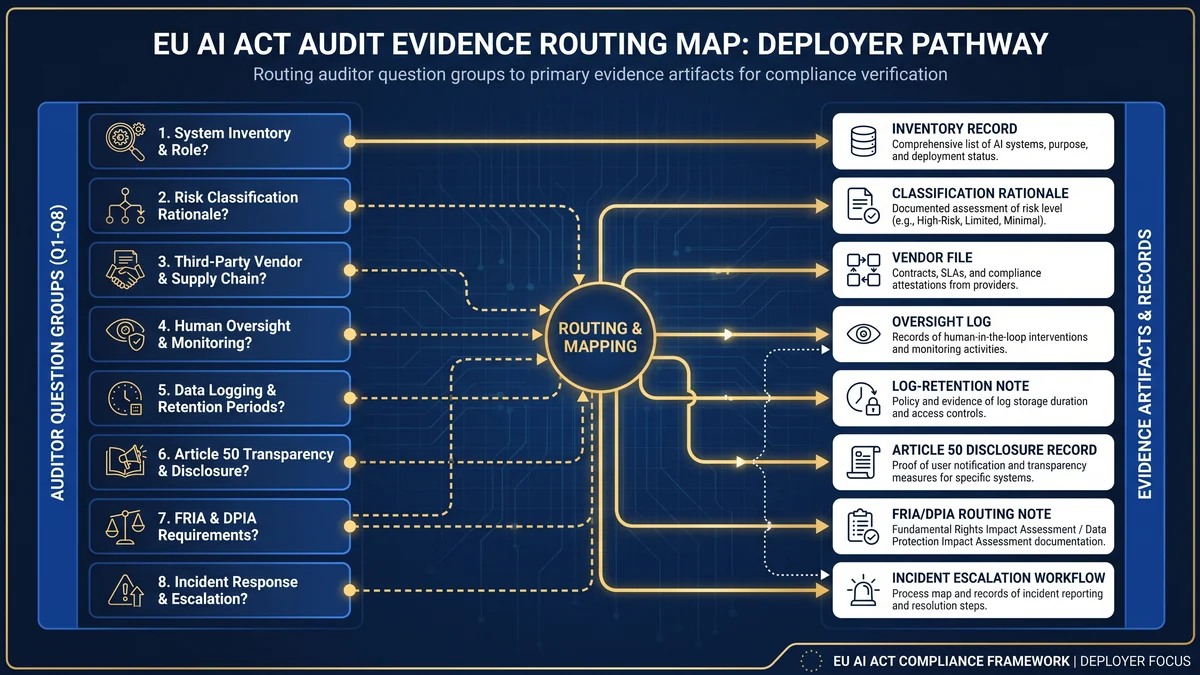

Under the EU AI Act, a deployer should be ready to explain which AI systems it uses, why each system is or is not high-risk, what provider instructions were reviewed, who owns human oversight, what logs are retained, whether Article 50 transparency triggers apply, whether FRIA or DPIA review is needed, and how risks or serious incidents are escalated.

This page is a routing hub. Use it to move from likely review questions to the exact EU AI Compass evidence page or free tool that helps build the record.

Who this hub is for

Deployers and business owners

Use this page to understand what evidence you may need before using AI systems in employment, education, essential services, customer-facing workflows, public content, or regulated operations.

CTOs, CISOs, DPOs and legal teams

Use the routing map to connect technical records, data-protection screening, vendor evidence, oversight controls, log retention, and transparency notices into one operating file.

Procurement and vendor-risk teams

Use the vendor evidence route before signing or renewing AI contracts. The weak point is usually not the questionnaire. It is missing instructions, limitations, logs, incident handoff, or update-control evidence.

Internal audit and governance reviewers

Use the eight question groups to test whether one real AI system can be traced from inventory to classification, provider evidence, oversight, operation, transparency, and escalation.

The eight question groups reviewers may test

| Question group | What the reviewer is testing | Primary evidence |
|---|---|---|
| 1. Which AI systems exist? | Whether the organisation has a controlled inventory rather than scattered tool names. | AI system inventory, owner, vendor, use case, affected people, evidence location. |
| 2. What is the role and classification rationale? | Whether the team can explain provider/deployer role and Article 6 / Annex III status. | Role decision, intended purpose, Annex III route, high-risk or non-high-risk rationale. |
| 3. What did the provider or vendor hand over? | Whether deployer use is grounded in instructions, limitations, controls, and incident handoff. | Instructions for use, limitations, logging note, oversight guidance, change-control record. |
| 4. Who oversees the system? | Whether a named person has competence, training, authority, and support to oversee the system. | Oversight owner, training/competence record, intervention route, escalation record. |
| 5. What logs and operating evidence exist? | Whether logs and operating records are retained where under the deployer’s control. | Log source, retention rule, access model, privacy review, review cadence. |
| 6. What Article 50 notices or labels apply? | Whether transparency triggers have been checked for users, exposed persons, and public content. | Disclosure trigger register, notice wording, placement evidence, screenshot, exception rationale. |
| 7. Is FRIA or DPIA routing needed? | Whether the system has been routed to fundamental-rights and data-protection assessment where relevant. | FRIA/DPIA routing note, affected groups, risk review, legal/privacy owner. |
| 8. What happens if risk or serious incident occurs? | Whether the organisation can suspend use, notify the right parties, and preserve incident records. | Risk escalation route, provider contact, authority route, serious incident log, decision timeline. |
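
The eight groups can double as a traceability test for a single system. The following is a minimal sketch in Python, assuming a hypothetical `evidence_links` dictionary kept alongside the inventory; the group keys and the function name are illustrative and do not come from the Regulation or from any EU AI Compass tool.

```python
# Hypothetical keys, one per question group in the table above.
QUESTION_GROUPS = [
    "inventory",
    "role_and_classification",
    "provider_handover",
    "oversight",
    "logs",
    "article_50",
    "fria_dpia_routing",
    "risk_and_incident",
]


def untraced_groups(evidence_links: dict[str, list[str]]) -> list[str]:
    """Return the question groups with no evidence link recorded for this system."""
    return [group for group in QUESTION_GROUPS if not evidence_links.get(group)]
```

An empty result means every group points at some record for that system. It says nothing about whether the record itself is adequate.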

Evidence routing map

Do not duplicate evidence work. Route each review question into the correct EU AI Compass page, then keep the evidence link in the AI system inventory.

EU AI Act Evidence Checklist

Use when you need one cross-cutting deployer evidence file: inventory, oversight, logs, vendor files, Article 50, FRIA/DPIA routing, and incident records.

Inventory: AI System Inventory Fields for EU AI Act Readiness

Use when the first problem is knowing which AI systems exist, who owns them, what they do, and which evidence route applies.

Vendor handoff: EU AI Act Vendor Due Diligence Checklist

Use before procurement, renewal, or go-live to request instructions, limitations, logs, oversight guidance, incident contacts, and change-control evidence.

Transparency: EU AI Act Article 50 Implementation Pack

Use when the system interacts with people, generates or manipulates content, supports emotion recognition or biometric categorisation, or publishes public-interest text.

Disclosure record: Article 50 Disclosure Checklist

Use to create scenario-specific notice, label, screenshot, exception, and approval evidence for Article 50 transparency triggers.

Deadline planning: EU AI Act August 2026 Deadline Tracker

Use when the question is what to classify, evidence, and review before the main August 2026 application wave.

AI agents: AI Agent Deployer Evidence Checklist

Use when the AI workflow can call tools, access data, trigger actions, generate outputs, or assist decisions.

Free self-assessment: Deployer Obligation Self-Assessment

Use first if you need a quick deployer triage before building the deeper evidence file.

Detailed reviewer questions and evidence records

1. Which AI systems exist?

The first test is traceability. A reviewer may ask for a list of AI systems, but the useful record is more specific: system name, owner, provider, intended purpose, affected persons, input data, outputs, business process, geography, status, and evidence location.

Evidence to prepare: AI system inventory, system owner, vendor, deployment status, use-case description, EU exposure, affected user groups, and next review date.
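
The inventory fields listed above could be held as one structured record per system. This is a minimal sketch in Python under that assumption. The class name `InventoryRecord`, the field names, and the helper `missing_fields` are hypothetical; they mirror the fields named in this section and are not prescribed by the Regulation.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class InventoryRecord:
    """One row of a hypothetical AI system inventory (field names are illustrative)."""
    system_name: str
    owner: str
    provider: str
    intended_purpose: str
    affected_persons: str
    input_data: str
    outputs: str
    business_process: str
    geography: str
    status: str
    evidence_location: str
    next_review_date: Optional[str] = None  # ISO date string; unset until scheduled


def missing_fields(record: InventoryRecord) -> list[str]:
    """Return the names of fields that are empty or unset, in declaration order."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]
```

A completeness check like `missing_fields` only shows that a value exists. Whether the intended-purpose note or the affected-persons entry is accurate still needs human review.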

2. What is your role and classification rationale?

Article 6 treats AI systems as high-risk where they meet the safety-component/product route in Article 6(1) or are listed in Annex III, subject to the non-high-risk route and profiling override in Article 6(3). A reviewer may test whether the organisation stored the actual classification logic, not just a “high-risk: yes/no” value.

Evidence to prepare: role decision, intended-purpose note, Article 6 route, Annex III category, non-high-risk rationale if used, profiling check, unresolved classification log, and sign-off owner.
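
One way to store the classification logic rather than a bare yes/no value is to record the inputs and derive a rationale string from them. The sketch below, in Python, follows only the routing described in this section: the Article 6(1) route, Annex III listing, the Article 6(3) non-high-risk route, and the profiling override. All names (`ClassificationInput`, `classification_rationale`) are hypothetical, and the output is a record of the team's reasoning, not a legal determination.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClassificationInput:
    safety_component_route: bool       # Article 6(1) safety-component/product route
    annex_iii_category: Optional[str]  # e.g. "employment"; None if not listed
    exemption_claimed: bool            # Article 6(3) non-high-risk route relied on
    performs_profiling: bool           # profiling of natural persons


def classification_rationale(c: ClassificationInput) -> tuple[bool, str]:
    """Return (high_risk, rationale) so the reasoning is stored with the flag."""
    if c.safety_component_route:
        return True, "High-risk via Article 6(1) safety-component/product route"
    if c.annex_iii_category is None:
        return False, "Not listed in Annex III and outside Article 6(1)"
    if c.performs_profiling:
        # Assumption reflected from the text above: profiling overrides the exemption.
        return True, f"Annex III ({c.annex_iii_category}); profiling override applies"
    if c.exemption_claimed:
        return False, f"Annex III ({c.annex_iii_category}); Article 6(3) route claimed, rationale required"
    return True, f"High-risk via Annex III ({c.annex_iii_category})"
```

A non-high-risk result here does not close the file: Article 50 triggers and the sign-off owner still need their own entries.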

3. What did the provider or vendor hand over?

For deployers, provider evidence matters because Article 26 requires use in accordance with the instructions for use. Vendor due diligence should therefore focus on the deployer-facing handoff: what the system is intended to do, its limitations, oversight requirements, logging features, input-data assumptions, update process, and incident contact route.

Evidence to prepare: instructions for use, vendor evidence pack, limitation register, technical support route, model or system update notice process, incident handoff path, and procurement sign-off record.

4. Who oversees the system?

Article 26 requires deployers of high-risk AI systems to assign human oversight to natural persons with necessary competence, training, authority, and support. A policy line saying “humans remain accountable” is weak evidence if no named owner can intervene.

Evidence to prepare: oversight owner, competence/training record, escalation route, stop/pause authority, review schedule, operating procedure, and exception log.

5. What logs and operating evidence exist?

Article 26 requires deployers of high-risk AI systems to keep automatically generated logs, to the extent those logs are under their control, for a period appropriate to the intended purpose and for at least six months unless other Union or national law provides otherwise. If logs include personal data, the retention model needs privacy, security, access-control, and purpose-limitation review.

Evidence to prepare: log source, log owner, retention period, access control, privacy review, export method, incident preservation rule, and evidence sample.
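
The six-month floor can be turned into a simple date check on a retention policy. The following Python sketch assumes that "six months" is counted in calendar months and that any shorter period is backed by a recorded legal basis. Both are assumptions for illustration, and the function names are hypothetical.

```python
import calendar
from datetime import date
from typing import Optional

MIN_RETENTION_MONTHS = 6  # floor described above for logs under the deployer's control


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping to the last day of a shorter month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def earliest_deletion_date(log_date: date, retention_months: int) -> date:
    """Date before which a log created on log_date should not be deleted."""
    return add_months(log_date, retention_months)


def retention_gap(retention_months: int, other_law_basis: Optional[str] = None) -> Optional[str]:
    """Return a gap note if the policy is under six months with no recorded legal basis."""
    if retention_months >= MIN_RETENTION_MONTHS or other_law_basis:
        return None
    return (
        f"Retention of {retention_months} months is below "
        f"{MIN_RETENTION_MONTHS} with no legal basis recorded"
    )
```

For example, `earliest_deletion_date(date(2025, 8, 31), 6)` returns `date(2026, 2, 28)`, because February 2026 has no 31st day. Whether a longer period is "appropriate to the intended purpose" remains a judgment the code does not make.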

6. What Article 50 notices or labels apply?

Article 50 is not limited to high-risk AI systems. It covers specified transparency scenarios, including direct interaction with AI systems, provider-side machine-readable marking for synthetic content, deployer notices for emotion recognition or biometric categorisation, deepfake disclosure, and certain AI-generated or manipulated public-interest text.

Evidence to prepare: Article 50 trigger register, notice wording, placement evidence, screenshot or channel proof, exception rationale, accessibility check, approval owner, and review date.
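
An Article 50 trigger register can be as small as one entry per scenario with its notice evidence attached. This Python sketch assumes a hypothetical `TriggerEntry` structure and a rule of thumb that an applicable trigger needs both notice wording and placement proof unless an exception rationale is recorded. That rule is an illustration for internal tracking, not a statement of what Article 50 requires in each scenario.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TriggerEntry:
    scenario: str                              # e.g. "direct interaction with an AI system"
    applies: bool                              # outcome of the trigger check
    notice_wording: Optional[str] = None
    placement_evidence: Optional[str] = None   # screenshot path or channel proof
    exception_rationale: Optional[str] = None  # filled only if an exception is relied on


def open_items(register: list[TriggerEntry]) -> list[str]:
    """List scenarios that apply but lack notice evidence or an exception rationale."""
    gaps = []
    for entry in register:
        if not entry.applies or entry.exception_rationale:
            continue
        if not (entry.notice_wording and entry.placement_evidence):
            gaps.append(entry.scenario)
    return gaps
```

Entries where `applies` is false are kept in the register on purpose, so the file shows the trigger was checked rather than overlooked.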

7. Is FRIA or DPIA routing needed?

Article 27 requires specified deployers to perform a fundamental rights impact assessment before deploying certain high-risk AI systems. It also states that the FRIA complements a DPIA where relevant obligations are already met through data-protection assessment. That is a coordination point, not a reason to collapse the two records into one vague “impact assessment.”

Evidence to prepare: FRIA routing note, DPIA screening record, affected groups, use frequency, risk and mitigation record, privacy/legal owner, and update trigger.

8. What happens if risk or serious incident occurs?

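Article 26 sets two escalation duties for deployers of high-risk AI systems. Where the deployer has reason to consider that use in accordance with the instructions may present a risk, it must inform the provider or distributor and the relevant market surveillance authority without undue delay and suspend use. Where a serious incident is identified, the deployer must immediately inform the provider first, then the importer or distributor and the relevant market surveillance authorities. If the provider cannot be reached, Article 73 applies mutatis mutandis.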

Evidence to prepare: risk escalation workflow, serious incident triage criteria, provider/importer/distributor contacts, market surveillance authority route, suspension authority, timeline log, and communications record.
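
The notification order for a serious incident and the timeline log can be sketched together. The Python below is a minimal illustration with hypothetical function names. It encodes only the order stated in this section and records a failed provider contact as its own step. What Article 73 then requires in practice is a question for legal review, not something the code decides.

```python
from datetime import datetime, timezone


def serious_incident_sequence(provider_reachable: bool) -> list[str]:
    """Return the notification steps in the order described above."""
    steps = []
    if provider_reachable:
        steps.append("inform provider")
    else:
        steps.append("record failed provider contact (Article 73 applies mutatis mutandis)")
    steps.append("inform importer or distributor")
    steps.append("inform relevant market surveillance authorities")
    return steps


def log_step(timeline: list[dict], step: str, owner: str) -> None:
    """Append a UTC-timestamped entry so the decision timeline can be reconstructed."""
    timeline.append({
        "step": step,
        "owner": owner,
        "at": datetime.now(timezone.utc).isoformat(),
    })
```

Logging each step as it happens, rather than reconstructing it afterwards, is what makes the timeline usable as evidence.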

30-day evidence baseline sprint

| Week | Workstream | Output |
|---|---|---|
| Week 1 | Inventory | List AI systems, owners, vendors, intended purposes, affected persons, data, outputs, status, and evidence locations. |
| Week 2 | Classification and vendor evidence | Record role decisions, Article 6 / Annex III routing, vendor instructions, limitations, logging information, and change-control gaps. |
| Week 3 | Oversight, logs, Article 50, FRIA/DPIA | Assign oversight owners, collect log-retention position, map transparency triggers, and route impact-assessment decisions. |
| Week 4 | Gap closure and sign-off | Record unresolved gaps, assign owners, approve interim controls, set review cadence, and prepare management-ready evidence summary. |

FAQ

Is there an official EU AI Act audit checklist for deployers?

No. Regulation (EU) 2024/1689 does not publish one universal deployer audit checklist. A reviewer may instead test whether your evidence connects the AI system inventory, role decision, classification rationale, provider instructions, oversight owner, logs, transparency notices, FRIA or DPIA routing, incident workflow, and review history.

Where should a deployer start?

Start with the AI system inventory. Without a controlled inventory, you cannot show which AI systems exist, who owns them, which vendors supply them, what each system is used for, whether Annex III or Article 50 may be triggered, and where the evidence file is stored.

What may reviewers ask about provider or vendor evidence?

They may ask how the deployer understood and followed the provider’s instructions for use. For high-risk systems, that means the deployer should retain a deployer-facing vendor evidence pack: intended purpose, limitations, oversight guidance, input-data requirements, logging information, incident contacts, and change-control notices.

How long must deployers keep logs?

For high-risk AI systems, Article 26 requires deployers to keep automatically generated logs to the extent those logs are under their control, for a period appropriate to the intended purpose and at least six months unless other Union or national law provides otherwise. If logs include personal data, privacy and retention review are needed.

Does Article 50 apply only to high-risk AI systems?

No. Article 50 sits in the transparency chapter and applies to specified transparency scenarios, not only to high-risk AI systems. It can matter for direct AI interaction, synthetic content marking, emotion recognition, biometric categorisation, deepfakes, and certain public-interest AI-generated or manipulated text.

Is a FRIA the same as a DPIA?

No. A fundamental rights impact assessment under Article 27 and a data protection impact assessment under GDPR answer related but different questions. Article 27 says the FRIA complements an existing DPIA where relevant obligations are already addressed through the DPIA. Do not treat one assessment label as proof that the other evidence is complete.

Who must a deployer inform about a serious incident?

For high-risk AI systems, Article 26 requires a deployer that identifies a serious incident to inform the provider first, then the importer or distributor and the relevant market surveillance authorities. If the deployer cannot reach the provider, Article 73 applies mutatis mutandis. The evidence file should show escalation owners, contact paths, timestamps, and decision records.

Is this page legal advice?

No. This hub is an operational evidence-planning resource. It does not provide legal advice, official regulatory interpretation, certification, or a guarantee of compliance. Use it to structure internal readiness work, then validate legal, privacy, employment, procurement, sector-specific, and national-law questions with qualified professionals.

Source and review note

This page was last reviewed against Regulation (EU) 2024/1689 on 2026-04-30. The page uses official EU AI Act source text and EU AI Act Service Desk references for Article 6 classification rules, Article 26 deployer obligations, Article 27 fundamental rights impact assessment, Article 50 transparency obligations, Article 73 serious incident reporting, and Article 113 application dates.

- Article 6: Classification rules for high-risk AI systems

- Article 26: Obligations of deployers of high-risk AI systems

- Article 27: Fundamental rights impact assessment for high-risk AI systems

- Article 50: Transparency obligations for providers and deployers of certain AI systems

- Article 73: Reporting of serious incidents

- Article 113: Entry into force and application

Important: this page is operational guidance for evidence planning. It does not provide legal advice, official regulatory interpretation, certification, or a guarantee of compliance.

Author

Abhishek G Sharma

Founder, Move78 International Limited. Cybersecurity, AI governance, risk management, EU AI Act readiness, ISO/IEC 42001, ISO/IEC 27001, CISA, CISM, CRISC, CEH, CCSK, CAIGO, CAIRO.