Quick answer

EU AI Act evidence work for an AI agent starts by treating the agent as an AI system or AI-enabled workflow that must be mapped to role, intended purpose, risk classification, deployer obligations, transparency triggers, logs, and incident routing. Do not start with agent hype. Start with the evidence file.

An AI agent is not automatically high-risk. It can become high-risk when the workflow falls within Article 6 and an Annex III area such as employment, education, biometrics, critical infrastructure, or access to essential services. A pilot is not a magic word. If the agent is acting inside a real workflow, keep the evidence.

This page uses “AI agent” as an operational term. It is not claiming that the EU AI Act creates a separate legal category called AI agents.

What counts as an AI agent for EU AI Act evidence planning?

For this checklist, an AI agent is an AI-enabled workflow that can do more than produce a static answer. It may call tools, retrieve files, write to business systems, trigger emails, create tickets, score records, recommend actions, escalate cases, or execute multi-step tasks with limited human input.

The legal analysis still starts with the AI Act’s existing terms. Article 3 defines an AI system, provider, deployer, putting into service, intended purpose, and instructions for use. That is the anchor. The hard question is not whether the system is called an agent. The hard question is what it can do.

Evidence planning triggers

- The agent touches a process involving natural persons.

- The agent assists decisions in an Annex III area.

- The agent writes to systems, not just reads them.

- The agent uses personal data or sensitive attributes.

- The agent interacts directly with users or workers.

Minimum record

- What the agent is intended to do.

- What it is prohibited from doing.

- Which tools and data it can access.

- Who reviews, approves, overrides, or stops it.

- Where logs and evidence are retained.
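
The minimum record above can be held as a small structured object so that gaps are visible before deployment. The following is a minimal sketch, not a prescribed format: the class name `AgentMinimumRecord` and every field name are hypothetical labels chosen to mirror the five bullets.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AgentMinimumRecord:
    """Hypothetical minimum record for one agent workflow (names are illustrative)."""

    intended_tasks: list[str] = field(default_factory=list)    # what the agent is intended to do
    prohibited_tasks: list[str] = field(default_factory=list)  # what it is prohibited from doing
    tools_and_data: list[str] = field(default_factory=list)    # tools and data it can access
    oversight_roles: dict[str, str] = field(default_factory=dict)  # who reviews, approves, overrides, stops
    evidence_location: str = ""                                 # where logs and evidence are retained

    def missing_fields(self) -> list[str]:
        """Return the names of fields that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


record = AgentMinimumRecord(
    intended_tasks=["draft ticket summaries"],
    prohibited_tasks=["close tickets without review"],
    tools_and_data=["ticketing API (read/write)", "knowledge base (read)"],
)
print(record.missing_fields())  # ['oversight_roles', 'evidence_location']
```

An empty result from `missing_fields()` only shows that each item has been filled in. It says nothing about whether the content is accurate or sufficient.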

EU AI Act trigger map for AI agents

AI agent workflows should be mapped through the same EU AI Act route as other AI systems: definition, role, intended purpose, high-risk classification, deployer obligations, transparency duties, and incident escalation. The label “agent” does not replace that route.

| Question | EU AI Act route | Evidence to keep |
|---|---|---|
| Does the workflow meet the AI system definition? | Article 3 | System description, autonomy note, input/output description, intended-purpose record. |
| Who is using, supplying, modifying, or branding the agent? | Article 3 role definitions; Article 25 where value-chain responsibility may change | Provider/deployer role decision, vendor file, modification review, sign-off. |
| Does the agent touch an Annex III use case? | Article 6 and Annex III | Classification decision, affected-person group, business process map, reviewer approval. |
| Is the agent deployed as high-risk? | Article 26 | Instructions-for-use file, oversight owner, input-data controls, monitoring record, logs, incident route. |
| Does the agent interact with people or generate content? | Article 50 | Disclosure decision, notice wording, screenshot proof, exception rationale, publication owner. |
| Could failure cause a serious incident? | Article 73 and internal escalation workflow | Incident intake path, provider contact, severity triggers, evidence preservation rule. |
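
One way to apply the trigger map is as a simple lookup from triage answers to the routes that need a reviewer. This is a sketch under stated assumptions: the flag names are hypothetical, and treating an unanswered question as "needs review" is a conservative design choice, not something the Act prescribes. The output is a routing aid, not a legal classification.

```python
# Hypothetical triage flags, one per question in the trigger map.
ROUTES = [
    ("meets_ai_system_definition", "Article 3"),
    ("role_may_shift", "Article 3 role definitions; Article 25"),
    ("touches_annex_iii", "Article 6 and Annex III"),
    ("deployed_as_high_risk", "Article 26"),
    ("interacts_or_generates_content", "Article 50"),
    ("serious_incident_possible", "Article 73"),
]


def routes_to_review(answers: dict[str, bool]) -> list[str]:
    """Return routes whose question was answered yes or was left unanswered."""
    # Assumption: a missing answer defaults to True so that gaps surface for review.
    return [route for flag, route in ROUTES if answers.get(flag, True)]


answers = {
    "meets_ai_system_definition": True,
    "role_may_shift": False,
    "touches_annex_iii": True,
    "deployed_as_high_risk": True,
    "interacts_or_generates_content": False,
    # "serious_incident_possible" deliberately left unanswered
}
print(routes_to_review(answers))
# ['Article 3', 'Article 6 and Annex III', 'Article 26', 'Article 73']
```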

Provider vs deployer evidence for agent workflows

Agent evidence breaks when teams confuse vendor capability with deployer operation. The provider may supply the system, instructions, logging capability, technical documentation, and change notices. The deployer still needs an internal file showing the use case, owner, local configuration, tool access, oversight assignment, logs under deployer control, disclosure decision, and escalation route.

Ask the provider for

- Instructions for use and intended-purpose boundary.

- Logging capability and export fields.

- Tool-call, action, and monitoring documentation.

- Accuracy, robustness, and cybersecurity information where relevant.

- Change notice, incident support, and post-market contact.

Create as deployer evidence

- Business use case and deployment owner.

- Autonomy boundary and permission map.

- Human review, approval, override, and stop controls.

- Input-data and output-use controls.

- Article 50 and incident escalation records.

Vendor dashboards are useful. They are not your deployer evidence file.

AI agent deployer evidence checklist

The evidence file should prove what the agent is, who controls it, what it can access, what it can do without approval, what must be reviewed by a human, what logs exist, and what happens if the workflow misfires.

| Evidence area | What to retain | Why it matters under the EU AI Act |

|---|---|---|

| Agent inventory entry | Name, owner, vendor, process, tools/actions, data access, user group, geography, deployment status. | Creates the controlled population for role, risk, Article 26 and Article 50 analysis. |

| Role decision | Provider/deployer/importer/distributor assessment, rebranding or substantial modification review, reviewer and date. | Prevents treating deployer evidence as if it were provider technical documentation. |

| Intended-purpose record | Allowed tasks, prohibited tasks, supported users, deployment context, and assumptions from instructions for use. | Connects Article 3 intended purpose to Article 26 use according to instructions. |

| Annex III triage | Assessment against employment, education, biometrics, essential services, critical infrastructure, and other Annex III routes. | Determines whether high-risk obligations may apply. |

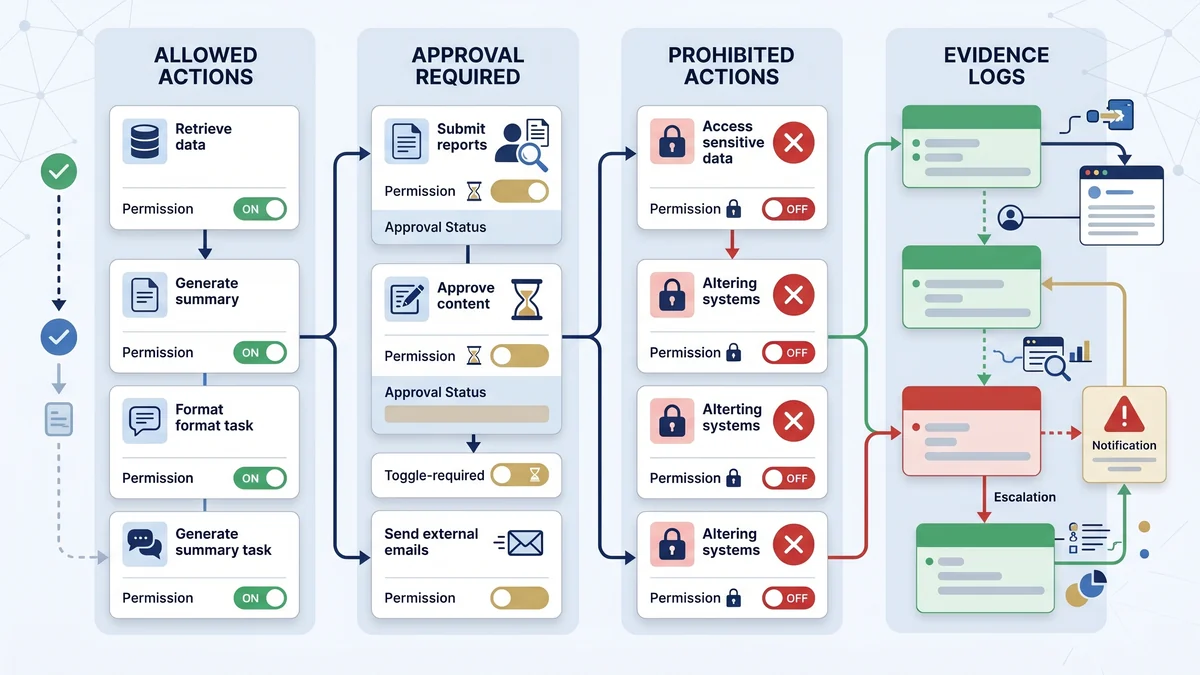

| Autonomy boundary | What the agent can execute alone, what needs approval, what must escalate, and what is blocked. | Turns human oversight into an operating design that can be tested. |

| Tool permission map | APIs, files, SaaS systems, write access, transaction limits, external communication rights, privileged actions. | Shows the real-world impact boundary of the agent workflow. |

| Human oversight record | Named reviewer, competence, authority, review cadence, override path, kill-switch owner, escalation contact. | Supports Article 26 oversight evidence for high-risk deployments. |

| Input-data control note | Data sources, excluded data, quality checks, representativeness review where relevant, personal-data routing. | Supports Article 26 input-data duties where the deployer controls input data. |

| Log and traceability file | Prompt logs, action logs, tool-call logs, approval logs, exception logs, access controls, retention rule. | Supports monitoring, reconstruction, and Article 26 log retention where logs are under deployer control. |

| Article 50 decision | Direct-interaction notice, content disclosure, deepfake/public-interest text review, biometric/emotion notice decision. | Prevents agent interfaces and content workflows from bypassing transparency review. |

| Incident escalation route | Incident trigger, owner, provider contact, evidence preservation, authority routing logic, closure record. | Supports serious-incident awareness and escalation discipline. |

| Review and sign-off | Approval date, unresolved gaps, next review, vendor update trigger, post-deployment change review. | Shows that the evidence file is maintained, not created once and forgotten. |
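
The retention rule in the log and traceability row can be turned into a date check. The sketch below assumes the six-month minimum that this page's FAQ cites for high-risk deployer logs under Article 26, and it assumes a calendar-month convention with clamping to the end of shorter months. Both the helper names and that convention are illustrative. Other Union or national law may require a longer period, so treat the result as a floor to validate, not a final answer.

```python
import calendar
from datetime import date

# Floor cited in this page's FAQ for high-risk deployer logs; other law may require longer.
MIN_RETENTION_MONTHS = 6


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the last day of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def earliest_deletion_date(log_date: date, policy_months: int) -> date:
    """Apply the longer of the internal policy period and the six-month floor."""
    return add_months(log_date, max(policy_months, MIN_RETENTION_MONTHS))


# An internal policy of 3 months is overridden by the 6-month floor.
print(earliest_deletion_date(date(2025, 8, 31), 3))   # 2026-02-28
# A longer internal policy is kept as-is.
print(earliest_deletion_date(date(2025, 1, 15), 12))  # 2026-01-15
```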

Runtime boundary register

The runtime boundary register is the evidence artifact that makes agent autonomy visible. It should show which actions the agent may perform, which actions require approval, which actions are prohibited, what the human reviewer sees, and which logs are retained. If nobody can show the tool-call log, the oversight story gets thin fast.

| Boundary item | Record | Evidence owner |
|---|---|---|
| Allowed actions | Read-only search, draft generation, ticket creation, data retrieval, internal summary, or other permitted actions. | System owner |
| Approval required | External communication, decision recommendation, system write action, financial action, HR action, or case escalation. | Business owner + compliance |
| Prohibited actions | Final employment decision, unsupervised credit decision, sensitive attribute inference, unsupported external disclosure, or unchecked deletion. | Legal + risk owner |
| Human intervention | Reviewer role, review screen, override action, halt control, escalation path, and training evidence. | Oversight owner |
| Log evidence | Tool call, input, output, approval, exception, override, error and incident records with retention model. | IT/security + system owner |
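
A runtime boundary register becomes testable when it is enforced at the point where the agent requests an action. The sketch below is one possible shape, not a reference implementation: the action names are hypothetical examples drawn from the table, and denying any action that is not listed is an assumed design choice. Each check also produces a log entry, so the approval trail and the tool-call trail come from the same place.

```python
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    BLOCK = "block"


# Hypothetical action names mirroring the register rows.
ALLOWED = {"read_only_search", "draft_generation", "ticket_creation"}
APPROVAL_REQUIRED = {"external_communication", "system_write", "financial_action", "hr_action"}
PROHIBITED = {"final_employment_decision", "unsupervised_credit_decision", "unchecked_deletion"}


def check_action(action: str, approved_by: Optional[str] = None) -> Decision:
    """Classify a requested action against the register."""
    if action in PROHIBITED:
        return Decision.BLOCK  # an approval cannot unlock a prohibited action
    if action in ALLOWED:
        return Decision.ALLOW
    if action in APPROVAL_REQUIRED:
        return Decision.ALLOW if approved_by else Decision.NEEDS_APPROVAL
    return Decision.BLOCK  # assumption: unknown actions are denied by default


def log_entry(action: str, approved_by: Optional[str] = None) -> dict:
    """Build a log record for the request, including the boundary decision."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "approved_by": approved_by,
        "decision": check_action(action, approved_by).value,
    }


print(log_entry("system_write")["decision"])               # needs_approval
print(log_entry("system_write", "j.doe")["decision"])      # allow
print(log_entry("unchecked_deletion", "j.doe")["decision"])  # block
```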

High-risk AI agent scenarios to triage first

Prioritize agent workflows that touch people, rights, access, employment, education, credit, biometric data, or public infrastructure. An internal productivity agent may need basic evidence. An employment screening or credit workflow needs deeper classification and oversight records.

| Agent workflow | Why it is sensitive | First evidence action |
|---|---|---|
| Recruitment or worker-management agent | Annex III includes recruitment, selection, task allocation, promotion, termination, monitoring and evaluation of workers. | Create HR owner record, worker-notice review, oversight log and vendor evidence file. |
| Education or proctoring agent | Annex III includes access, assessment, learning outcomes and prohibited-behaviour monitoring in education. | Record intended purpose, learner impact, review owner and appeal/escalation path. |
| Credit or essential-service triage agent | Annex III includes creditworthiness and access to essential public or private services in defined contexts. | Map decision role, affected persons, input data, human review and DPIA/FRIA routing. |
| Biometric or emotion-recognition agent | Annex III and Article 50 may both be relevant, with data-protection escalation. | Pause for legal/privacy review, exposed-person notice, authorization and evidence route. |
| Critical infrastructure workflow agent | Annex III includes safety components in the management and operation of listed critical infrastructure. | Define safety boundary, intervention authority, logging, change control and incident escalation. |
| Public-facing assistant or content agent | Article 50 may apply to direct AI interaction, generated content, deepfakes or public-interest text. | Run the Article 50 trigger review and retain notice / label proof. |

Article 50 transparency triggers for AI agents

AI agents often appear as chat interfaces, workflow assistants, content tools, or autonomous publication helpers. That makes Article 50 review practical, not theoretical. The deployer should record whether natural persons interact directly with AI, whether outputs are synthetic or manipulated, whether the content is deepfake or public-interest text, and whether biometric categorisation or emotion recognition is involved.

Do not use one blanket label for everything. Article 50 is trigger-based. Keep the decision record, the notice or label wording, the placement proof, and the exception rationale where an exception is used.
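
Because the review is trigger-based, it helps to keep one decision record per trigger and check each record for gaps. The sketch below assumes hypothetical field and trigger names that paraphrase the items in this section. It checks only whether the record is complete, not whether a notice or an exception is legally correct.

```python
from dataclasses import dataclass

# Illustrative labels for the triggers named in this section.
TRIGGERS = {
    "direct_interaction",
    "synthetic_or_manipulated_content",
    "deepfake",
    "public_interest_text",
    "emotion_recognition_or_biometric_categorisation",
}


@dataclass
class Article50Decision:
    trigger: str
    trigger_present: bool
    exception_used: bool = False
    notice_wording: str = ""
    placement_proof: str = ""
    exception_rationale: str = ""


def record_gaps(d: Article50Decision) -> list[str]:
    """List what is missing from one trigger decision record."""
    gaps = []
    if d.trigger not in TRIGGERS:
        gaps.append("unknown trigger")
    if d.exception_used and not d.exception_rationale:
        gaps.append("exception rationale")
    if d.trigger_present and not d.exception_used:
        # A live trigger without an exception needs the notice and proof of placement.
        if not d.notice_wording:
            gaps.append("notice wording")
        if not d.placement_proof:
            gaps.append("placement proof")
    return gaps


chatbot = Article50Decision(
    trigger="direct_interaction",
    trigger_present=True,
    notice_wording="You are chatting with an AI assistant.",
)
print(record_gaps(chatbot))  # ['placement proof']
```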

30-day AI agent evidence sprint

A 30-day sprint should create a baseline evidence file for priority agent workflows. Do not try to solve every governance problem. Prove you know which agents exist, what they can do, which legal routes are relevant, who controls them, what logs exist, and who can stop them.

| Week | Workstream | Output |
|---|---|---|
| Week 1 | Inventory and role file | Agent inventory, owner, vendor, intended purpose, role decision, tool/data access list. |
| Week 2 | EU AI Act triage | Article 3, Article 6, Annex III, Article 26, Article 50, Article 73 and DPIA/FRIA routing note. |
| Week 3 | Oversight, permissions and logs | Runtime boundary register, human oversight record, permission map, log source and retention decision. |
| Week 4 | Incident route and sign-off | Provider contact, severity triggers, authority-routing logic, evidence preservation, unresolved gaps, next review date. |

Common mistakes that weaken AI agent evidence

- Calling the workflow a pilot and keeping no file. If the agent acts inside a real workflow, retain proportionate evidence.

- Tracking tools but not actions. An agent inventory must show what the agent can do, not just what software is installed.

- Relying on vendor dashboards as the full evidence file. Vendor evidence is an input, not the deployer record.

- No runtime boundary. If nobody knows what requires approval, human oversight is not operational.

- No tool-call logs. Without action traceability, incident reconstruction becomes weak.

- No Article 50 review. Public-facing assistants and content agents can create transparency issues quickly.

- No owner for stopping the agent. Someone must have authority to suspend the workflow when risk appears.

Free EU AI Compass tools to use first

Start with the free diagnostic path. Do not buy implementation material before you know which agent workflows, roles, evidence gaps and legal triggers matter.

- Define what an AI agent can do, must escalate, or must not do.

- Evidence Starter Library: Find the core deployer evidence templates.

- Human Oversight Evidence Log: Record review, override, escalation and sign-off evidence.

- AI Vendor Intake Due Diligence Template: Request the provider-side evidence file before deployment.

- AI Content Marking Checker: Check Article 50 content and disclosure triggers.

- Serious Incident Register: Prepare the escalation record before an incident occurs.

Where E1/E2 fits after the free diagnostic path

Use the free tools first. If one agent workflow exposes a narrow gap, fix that gap locally. If several workflows show the same missing runtime boundary, weak oversight, poor logs, unclear vendor evidence or incomplete disclosure decisions, E1/E2 can help convert the findings into structured registers, control mapping and management-ready evidence packs.

Do not skip the diagnostic step. An implementation pack is only useful after you know which agents, roles and evidence gaps matter.

FAQ: AI agent deployer evidence under the EU AI Act

These answers are written for deployers who need EU AI Act evidence decisions, not generic AI governance theory.

Does the EU AI Act create a separate legal category for AI agents?

No. The EU AI Act does not create a separate legal category called AI agents. This checklist uses “AI agent” as an operational term for AI systems or workflows that can call tools, access data, trigger workflows, generate outputs, or assist decisions. The legal analysis still starts with the AI system definition, role, intended purpose, risk classification, and deployment context.

Can an AI agent be high-risk under the EU AI Act?

An AI agent can become part of a high-risk AI system where Article 6 and Annex III conditions are met. The name of the workflow is not decisive. The decisive issue is whether the agent is used in an Annex III area such as employment, education, biometrics, critical infrastructure, or access to essential services, and whether the use materially affects people.

What evidence should deployers keep for AI agents?

Deployers should keep an agent inventory entry, role decision, intended-purpose record, Annex III triage, autonomy boundary, tool permission map, human oversight record, input-data control note, log-retention decision, Article 50 disclosure review, incident route, and sign-off history. The file should show what the agent can do, who controls it, and who can stop it.

How long must deployers keep AI agent logs?

For high-risk AI systems, Article 26 requires deployers to keep automatically generated logs to the extent those logs are under their control, for a period appropriate to the intended purpose and at least six months unless other Union or national law provides otherwise. For AI agents, tool-call logs and action logs are often the first evidence auditors ask for.

Can vendor monitoring replace deployer oversight?

No. Vendor monitoring can support the evidence file, but it does not replace deployer oversight. The deployer still needs records showing use according to instructions, assigned human oversight, monitoring, log retention where under its control, input-data controls where relevant, and escalation routes for risks or serious incidents.

Does a pilot need an evidence file?

A pilot is not a magic word. If the AI agent is acting inside a real workflow, handling personal data, assisting decisions, producing public content, or touching Annex III use cases, the team should keep a proportionate evidence file. Label the pilot status, but do not use the label to avoid ownership, logs, oversight, or disclosure review.

When does Article 50 matter for AI agents?

Article 50 can matter when an AI agent directly interacts with natural persons, generates or manipulates synthetic content, supports deepfake workflows, publishes AI-generated text on matters of public interest, or uses emotion recognition or biometric categorisation. The deployer should record the trigger decision, notice wording, placement evidence, and exception rationale where relevant.

Is this checklist legal advice?

No. This checklist is operational guidance for EU AI Act evidence planning. It does not determine legal status, confirm compliance, or replace qualified legal, privacy, security, employment, procurement, sector, or regulatory advice. Use it to structure the evidence file, then validate high-impact or unclear scenarios with appropriate professionals.

Source and review note

This page was reviewed against official AI Act Service Desk pages and the official Regulation (EU) 2024/1689 text linked from the Service Desk. It is operational guidance for evidence planning. It is not legal advice, and it does not guarantee compliance.

- Article 3: definitions

- Article 6: classification rules for high-risk AI systems

- Annex III: high-risk AI systems

- Article 25: responsibilities along the AI value chain

- Article 26: obligations of deployers of high-risk AI systems

- Article 27: fundamental rights impact assessment

- Article 50: transparency obligations

- Article 73: reporting of serious incidents

- Article 113: entry into force and application

Disclaimer: Validate legal classification, provider/deployer role, Article 26 duties, Article 50 disclosures, GDPR/DPIA implications, FRIA routing, employment-law duties, sector-specific obligations and national-law questions with qualified counsel or a competent regulatory adviser.

About the author: Abhishek G Sharma is the founder of Move78 International Limited and holds ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, and CAIRO certifications.