Quick answer: Article 50 is a routing problem

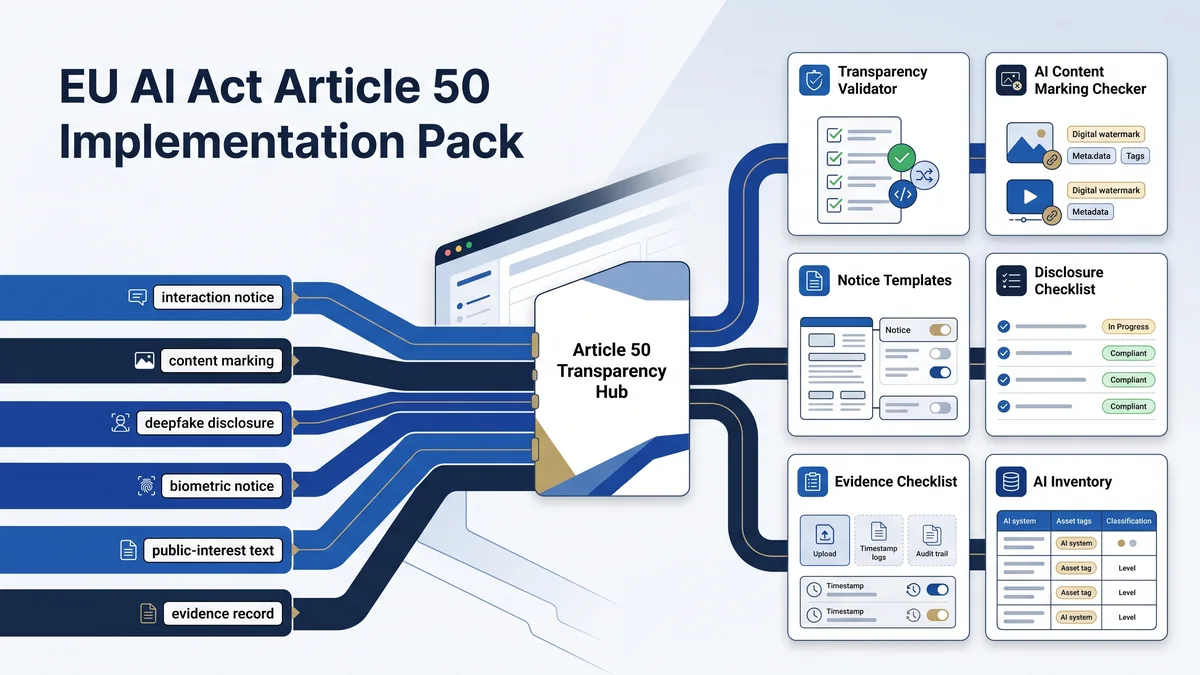

The EU AI Act Article 50 Implementation Pack routes transparency scenarios to the right operational action: AI interaction notice, machine-readable marking, biometric or emotion recognition notification, deepfake disclosure, public-interest text disclosure, and evidence retention. It is a free implementation hub, not legal advice or a compliance guarantee.

The output should be an Article 50 evidence record: scenario, owner, AI system inventory ID, content or interaction type, notice text, notice placement, marking method, review status, exception rationale if used, and change-control date.
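
The evidence record described above can be held as a small typed structure. The following is a minimal Python sketch, not a prescribed schema: the class name, field names, and sample values (including the inventory ID `AI-0001`) are illustrative assumptions. Only the list of fields comes from the paragraph above.

```python
from dataclasses import dataclass, asdict
from datetime import date
from typing import Optional
import json


@dataclass
class Article50EvidenceRecord:
    """One record per Article 50 scenario decision (field names are illustrative)."""
    scenario: str
    owner: str
    inventory_id: str
    content_or_interaction_type: str
    notice_text: str
    notice_placement: str
    marking_method: Optional[str]  # None when no machine-readable marking is involved
    review_status: str
    change_control_date: date
    exception_rationale: Optional[str] = None  # set only if an exception is relied on

    def to_json(self) -> str:
        """Serialise the record for storage in an evidence folder."""
        data = asdict(self)
        data["change_control_date"] = self.change_control_date.isoformat()
        return json.dumps(data, indent=2)


# Hypothetical example values, for illustration only.
record = Article50EvidenceRecord(
    scenario="direct_interaction",
    owner="Product",
    inventory_id="AI-0001",
    content_or_interaction_type="customer support chatbot",
    notice_text="You are chatting with an AI assistant.",
    notice_placement="first message in the chat window",
    marking_method=None,
    review_status="approved",
    change_control_date=date(2026, 5, 5),
)
print(record.to_json())
```

Keeping the exception rationale optional mirrors the "if used" wording: an empty value means no exception is being relied on, not that the field was forgotten.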

Current-law planning note

Article 50 is in Chapter IV of Regulation (EU) 2024/1689 and applies to specified transparency scenarios for providers and deployers of certain AI systems. Under Article 113, the Regulation generally applies from 2 August 2026; Article 113 sets different application dates for some other chapters and obligations.

The Commission is also running a Code of Practice process for marking and labelling AI-generated content. The official Commission materials describe the second draft and an expected finalisation window; treat the code status as time-sensitive and recheck it before formal reliance.

Route Article 50 work into available EU AI Compass resources

Use this hub as the front door. Do not make product, content, legal, privacy, or engineering teams guess whether they need a scenario decision, disclosure checklist, evidence record, inventory field, or vendor request.

Article 50 Disclosure Decision Tree

Use this first when the team needs to screen chatbot, AI-generated content, deepfake, biometric/emotion, or public-interest text disclosure triggers.

Checklist: Article 50 Disclosure Checklist

Use this to test whether the disclosure is clear, distinguishable, accessible, placed at the right moment, and supported by retained evidence.

Evidence: EU AI Act Evidence Checklist

Use this to retain proof: scenario classification, notice text, placement screenshots, marking checks, approvals, review dates, and change logs.

Inventory: AI System Inventory Fields

Use this to add Article 50 trigger fields to the inventory before a system, chatbot, content workflow, or publication route goes live.

Evidence command: Deployer Evidence Command Center

Use this when Article 50 evidence needs to be routed with deployer obligations, vendor evidence, audit records, and readiness planning.

Vendor handoff: Vendor Evidence Request Builder

Use this when a provider or vendor must confirm generated-content marking, user-facing notices, model capability limits, or evidence support.

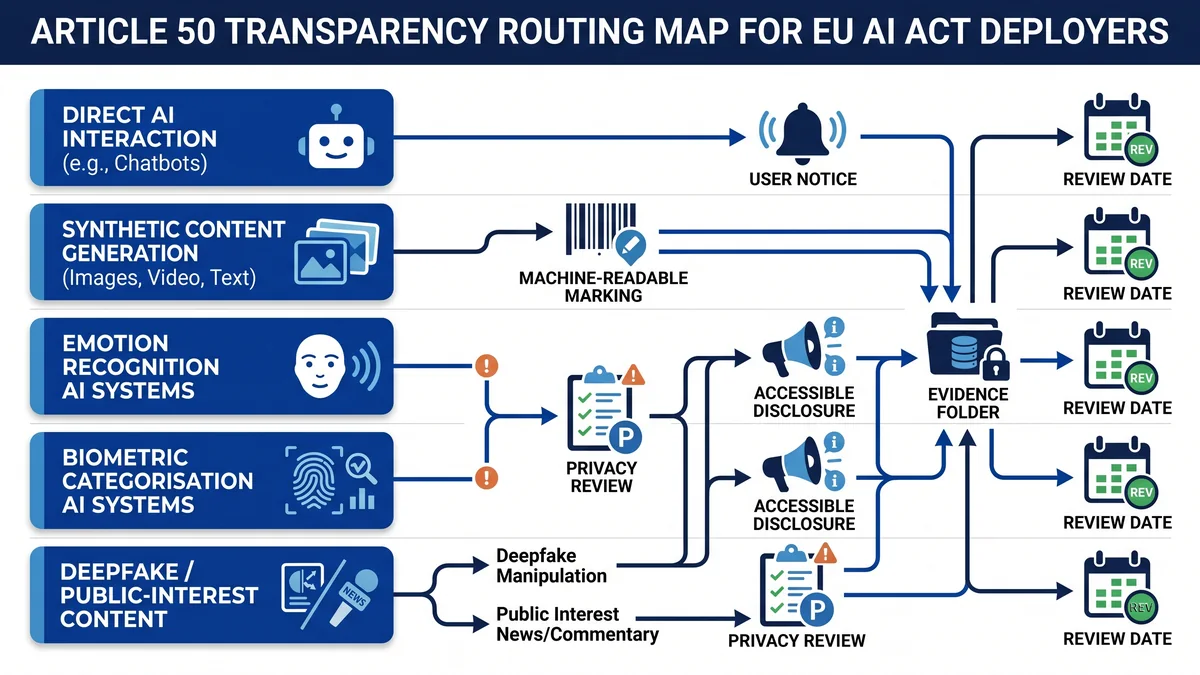

Article 50 scenario map

Separate the transparency scenario before writing a notice. The same AI workflow can trigger more than one Article 50 route.

| Scenario | Main actor route | Operational question | Evidence to retain |
|---|---|---|---|
| Direct interaction with natural persons | Provider design and notice route | Will a person know they are interacting with an AI system unless that fact is obvious from the context? | Interface screenshot, point-of-interaction notice, release note, owner, exception rationale if used. |
| Synthetic audio, image, video, or text generation | Provider marking and detectability route | Can the output be marked in a machine-readable format and detected as artificially generated or manipulated where required? | Metadata or watermark check, output sample, vendor statement, implementation note, version record. |
| Emotion recognition or biometric categorisation | Deployer notification and privacy route | Are exposed persons informed about the operation of the system, and has personal-data processing been routed to privacy review? | Notice wording, placement, DPIA route, lawful-basis note, deployment owner, review date. |
| Deepfake image, audio, or video content | Deployer disclosure route | Does published or distributed content need disclosure that it was artificially generated or manipulated? | Disclosure text, content sample, publication location, creative/satirical context review if relevant. |
| AI-generated or manipulated public-interest text | Deployer publication review route | Is the text published to inform the public on matters of public interest, and does a human review/editorial-control exception apply? | Editorial review note, responsible publisher, disclosure decision, human review record. |
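
Because one workflow can trigger several routes, the scenario map can be treated as a lookup that returns every matching route instead of a single answer. This is a rough Python sketch under stated assumptions: the `Triggers` flags and the `route_scenarios` function are hypothetical names, and the flags would be set by a human screening decision, not detected automatically. The route labels are taken from the table above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Triggers:
    """Screening answers for one workflow (hypothetical flag names)."""
    direct_interaction: bool = False
    generates_synthetic_content: bool = False
    emotion_or_biometric: bool = False
    deepfake_content: bool = False
    public_interest_text: bool = False


# Route labels copied from the scenario map; the keys match the Triggers flags.
ROUTES = {
    "direct_interaction": "Provider design and notice route",
    "generates_synthetic_content": "Provider marking and detectability route",
    "emotion_or_biometric": "Deployer notification and privacy route",
    "deepfake_content": "Deployer disclosure route",
    "public_interest_text": "Deployer publication review route",
}


def route_scenarios(triggers: Triggers) -> list[str]:
    """Return every route whose trigger flag is set, in scenario-map order."""
    return [route for name, route in ROUTES.items() if getattr(triggers, name)]


# A chatbot that also generates images triggers two routes at once.
chatbot_with_images = Triggers(direct_interaction=True, generates_synthetic_content=True)
for route in route_scenarios(chatbot_with_images):
    print(route)
```

An empty result means no trigger was flagged during screening; it does not establish that Article 50 is out of scope.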

Provider versus deployer split

Provider-facing Article 50 work

- Design direct-interaction AI systems so people are informed they are interacting with AI unless obvious from context.

- Mark synthetic audio, image, video, or text outputs in a machine-readable format and make them detectable as artificially generated or manipulated, as far as technically feasible.

- Provide implementation support that deployers can retain as evidence.

Deployer-facing Article 50 work

- Notify exposed persons when deploying emotion recognition or biometric categorisation systems.

- Disclose deepfake image, audio, or video content where Article 50 applies.

- Disclose certain AI-generated or manipulated public-interest text unless a relevant exception applies.

Minimum Article 50 evidence pack

A defensible Article 50 record should let a reviewer reconstruct the decision without interviewing the product, content, marketing, privacy, or engineering team six months later.

- AI system inventory ID and business owner.

- Article 50 scenario classification.

- Provider/deployer responsibility split.

- Notice, label, or disclosure text used.

- Placement screenshot or publication sample.

- Machine-readable marking or watermark check where relevant.

- Human review or editorial-control note where relevant.

- Exception rationale if an exception is being relied on.

- Approval owner and approval date.

- Review cadence and next review date.

- Change log for content, interface, audience, model, or channel changes.

- Linked DPIA or privacy review where biometric or personal-data processing is involved.
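
The evidence pack above mixes items that are always expected with items that apply only "where relevant". One way to check a pack for gaps is sketched below in Python. The key names, flag names, and sample values are assumptions made for illustration; they are not fields defined by the Regulation or by this pack.

```python
# Items expected in every pack (key names are illustrative).
ALWAYS_REQUIRED = [
    "inventory_id",
    "business_owner",
    "scenario_classification",
    "responsibility_split",
    "notice_text",
    "placement_sample",
    "approval_owner",
    "approval_date",
    "review_cadence",
    "next_review_date",
    "change_log",
]

# Items expected only when the matching condition flag is true.
CONDITIONAL = {
    "marking_check": "uses_machine_readable_marking",
    "human_review_note": "relies_on_human_review",
    "exception_rationale": "relies_on_exception",
    "privacy_review_link": "involves_biometric_or_personal_data",
}


def missing_items(pack: dict, flags: dict) -> list[str]:
    """List required items that are absent or empty in the pack."""
    required = list(ALWAYS_REQUIRED)
    required += [item for item, flag in CONDITIONAL.items() if flags.get(flag)]
    return [item for item in required if not pack.get(item)]


# Hypothetical, partially completed pack for a deepfake disclosure decision.
example_pack = {
    "inventory_id": "AI-0001",
    "business_owner": "Content lead",
    "scenario_classification": "deepfake_content",
    "responsibility_split": "deployer",
    "notice_text": "This video was generated with AI.",
    "placement_sample": "screenshots/video-label.png",
    "approval_owner": "Compliance",
    "approval_date": "2026-05-05",
}
example_flags = {"relies_on_exception": True}
print(missing_items(example_pack, example_flags))
```

A check like this only confirms that each item is present. Whether the content of an item is adequate remains a matter for human review.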

Build the Article 50 workflow in seven practical steps

1. Add Article 50 trigger fields to the inventory

Capture direct interaction, generated content type, biometric/emotion use, deepfake risk, public-interest text, publication channel, audience, and owner.

2. Run the Article 50 disclosure decision tree

Use the decision tree when the scenario is unclear or when several transparency routes may apply to the same workflow.

3. Ask vendors for marking and notice evidence

Use the vendor evidence request builder when the provider must confirm marking, detectability, interface notices, or disclosure-support details.

4. Draft the notice only after classification

Avoid generic policy-page wording that users never see at the relevant interaction, exposure, or publication moment.

5. Check disclosure placement

Use the Article 50 disclosure checklist to test whether the disclosure is clear, distinguishable, accessible, and available at the right time.

6. Attach evidence

Save decision-tree output, notice text, screenshots, marking checks, approvals, and review records in the evidence folder.

7. Review after material changes

Re-run the route when the model, channel, audience, publication purpose, content type, user interface, or vendor implementation changes.
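
Step 7 can be supported by comparing two inventory snapshots on the fields that count as material changes. The Python sketch below assumes each snapshot is a plain dictionary; the field names and the helpers `changed_material_fields` and `needs_rereview` are hypothetical, chosen to mirror the list in step 7.

```python
# Fields whose change should trigger a new pass through the route (names are illustrative).
MATERIAL_FIELDS = (
    "model",
    "channel",
    "audience",
    "publication_purpose",
    "content_type",
    "user_interface",
    "vendor_implementation",
)


def changed_material_fields(previous: dict, current: dict) -> list[str]:
    """Return the material fields whose values differ between two snapshots."""
    return [f for f in MATERIAL_FIELDS if previous.get(f) != current.get(f)]


def needs_rereview(previous: dict, current: dict) -> bool:
    """True when at least one material field has changed."""
    return bool(changed_material_fields(previous, current))


# Hypothetical snapshots: only the model version differs.
before = {"model": "vendor-model-v1", "channel": "web chat", "audience": "customers"}
after = {"model": "vendor-model-v2", "channel": "web chat", "audience": "customers"}

if needs_rereview(before, after):
    print("Re-run the Article 50 route; changed:", changed_material_fields(before, after))
```

Recording which fields changed, not just that something changed, gives the change log a concrete entry for the next review.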

Code of Practice note: useful, status-sensitive, and not a shortcut

The Commission describes the Code of Practice on marking and labelling of AI-generated content as a tool to support compliance with Article 50 transparency obligations. The Commission page says that, if approved, the final code will be a voluntary tool for providers and deployers to demonstrate compliance with their respective Article 50(2) and Article 50(4) obligations.

The current public Commission materials describe the second draft and timeline. Use the draft code as implementation intelligence, not as a substitute for Regulation (EU) 2024/1689, contract terms, accessibility review, privacy review, or formal legal analysis.

Notice wording is not the control

A notice is useful only if the workflow proves where, when, and how the notice appears. A good Article 50 notice record should answer five questions:

- Which Article 50 scenario triggered the notice?

- Who sees the notice or disclosure?

- When do they see it?

- Is the notice clear, distinguishable, accessible, and placed at the right moment?

- Where is the evidence retained?

Recommended internal routing table

| Team | Decision owned | Evidence artifact |
|---|---|---|
| Product / Engineering | Whether users interact directly with an AI system and where notices appear. | Interface screenshot, release note, inventory record. |
| Marketing / Content | Whether generated or manipulated content is published, distributed, labelled, or disclosed. | Publication sample, disclosure label, approval log. |
| Security / IT | Whether marking, metadata, watermarking, provenance, logging, or vendor evidence is supported. | Marking check, metadata screenshot, vendor statement. |
| Privacy / DPO | Whether biometric, emotion recognition, or personal-data processing requires GDPR routing. | DPIA route, notice record, lawful-basis note. |
| Legal / Compliance | Exception review, disclosure wording, accessibility expectations, and regulatory interpretation. | Reviewed notice, exception memo, legal sign-off if required. |

FAQ: EU AI Act Article 50 implementation

The EU AI Act Article 50 Implementation Pack is a routing hub for transparency decisions: AI interaction notices, AI-generated content marking, biometric or emotion recognition notices, deepfake disclosure, public-interest text disclosure, and evidence retention. It is operational guidance only; it does not provide legal advice, certification, or a compliance guarantee.

No. Article 50 includes provider-facing and deployer-facing transparency duties. Providers handle direct-interaction design and machine-readable marking for generated or manipulated content. Deployers handle notices for emotion recognition or biometric categorisation systems, deepfake disclosure, and certain public-interest text publications.

AI systems intended to interact directly with natural persons should be designed so people are informed that they are interacting with an AI system, unless that fact is obvious from the context. Record the interface notice, placement, timing, owner, and any exception rationale before launch.

AI-generated content may need technical marking, human-facing disclosure, or both. Article 50 separates provider marking duties for synthetic audio, image, video, or text from deployer disclosure duties for deepfakes and certain public-interest text publications. The evidence record should show the content type, channel, audience, decision, and review owner.

Deepfake disclosure under Article 50 is scenario-specific. Deployers of AI systems that generate or manipulate image, audio, or video content constituting a deepfake must disclose that the content was artificially generated or manipulated, with specific caveats for authorised law-enforcement uses and creative, satirical, fictional, or analogous works.

Deployers should document the AI system inventory ID, Article 50 scenario, provider/deployer responsibility split, notice or label text, placement screenshot, content sample, machine-readable marking check where relevant, human review or editorial-control note, approval owner, publication date, and change log.

The Commission describes the Article 50 Code of Practice process as a voluntary support route for marking and labelling AI-generated content. As of the 5 May 2026 review, official Commission materials describe the second draft published on 5 March 2026. Final approval had not been verified in the official source set used for this page. Treat the Code as draft implementation context until final approval is verified.

No. This pack provides operational guidance for Article 50 transparency evidence planning. It is not legal advice, official regulatory interpretation, certification, or a compliance guarantee. Use qualified legal, privacy, accessibility, and regulatory counsel for formal decisions.

Source and review note

This hub was reviewed against the AI Act Service Desk, Regulation (EU) 2024/1689 text linked from the Service Desk, and European Commission materials on the Code of Practice on marking and labelling AI-generated content. It is operational guidance for transparency evidence planning. It is not legal advice and does not guarantee compliance.

- Article 50: transparency obligations for providers and deployers of certain AI systems

- Article 113: entry into force and application

- European Commission: Code of Practice on marking and labelling of AI-generated content

- European Commission: second draft Code of Practice on marking and labelling AI-generated content

Last reviewed: 5 May 2026. Use qualified legal, privacy, accessibility, and regulatory counsel for formal Article 50 determinations. The Article 50 Code is draft/voluntary until final approval is verified.

Author

Abhishek G Sharma is the founder of Move78 International and EU AI Compass. He works on cybersecurity, AI governance, ISO 42001, EU AI Act readiness, and evidence-led risk management. This page is practitioner guidance, not legal advice.