Quick answer

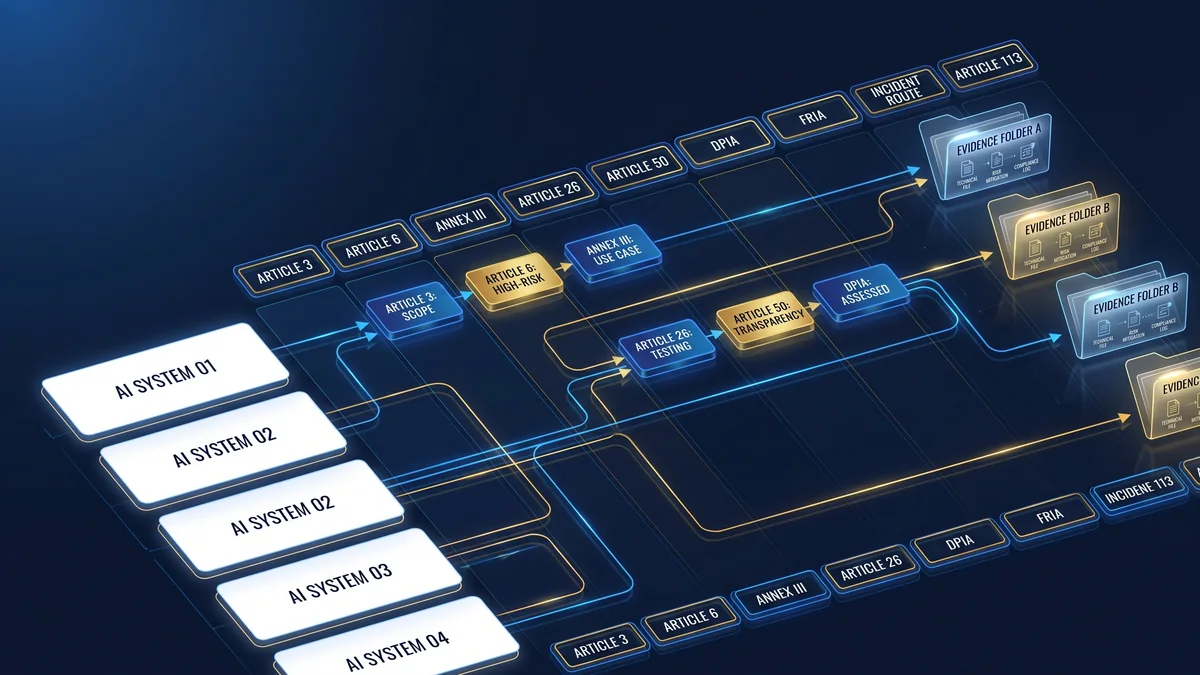

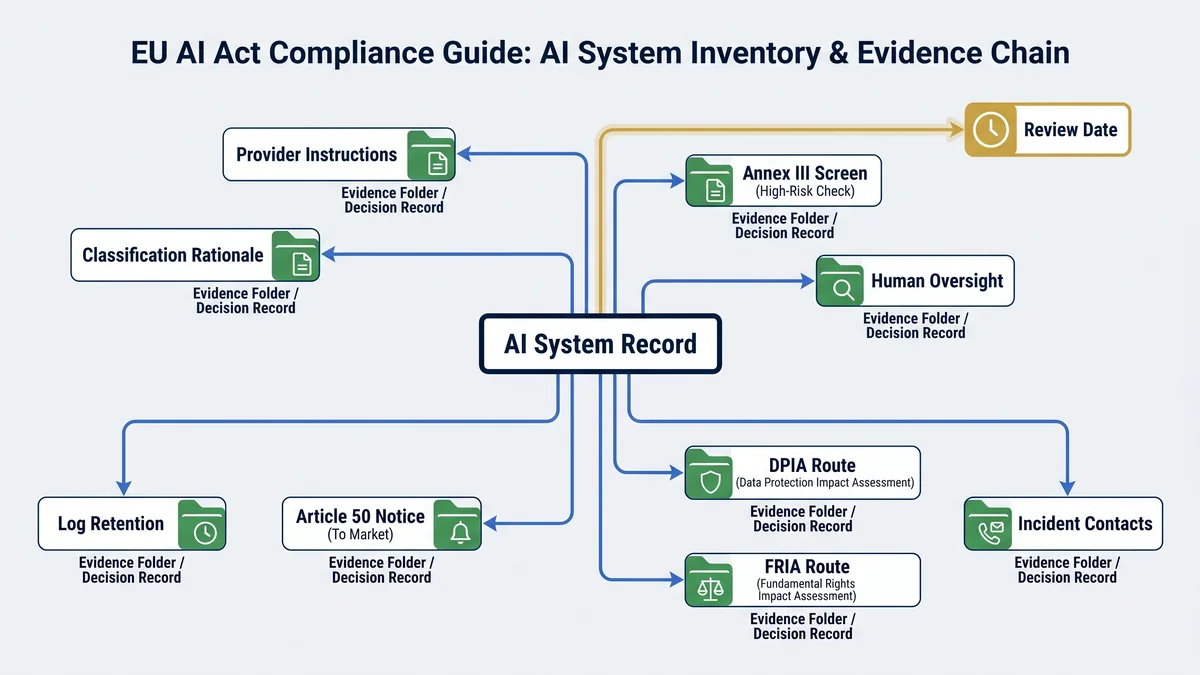

An EU AI Act-ready AI system inventory is a routing table, not a spreadsheet graveyard. Each record should show what the system is, who owns it, who provides it, how it is used, which people it affects, what data and outputs it handles, whether Article 6 and Annex III may apply, whether Article 26 or Article 50 duties may be triggered, and where DPIA, FRIA, incident, and deadline decisions are recorded.

The practical failure is predictable: teams collect tool names and vendor names, then discover too late that they cannot prove intended purpose, role, high-risk reasoning, oversight, log retention, worker notification, disclosure placement, or serious-incident escalation.

This page is written for deployers and AI adopters. It does not replace legal, privacy, employment, sector, procurement, or regulatory advice.

Why the inventory matters for EU AI Act readiness

Most internal AI inventories start as asset lists: application name, vendor, business owner, contract date. That is not enough for EU AI Act readiness. The AI Act forces decisions around definitions, role, intended purpose, high-risk classification, use context, instructions for use, human oversight, input data, logs, transparency, impact assessment, incidents, and application dates.

The inventory does not make a legal conclusion by itself. It makes the decision trail visible. That is the point. If a system is used in recruitment, credit scoring, life or health insurance pricing, education, biometrics, critical infrastructure, public services, law enforcement, migration, justice, or democratic processes, a weak inventory will hide the exact facts needed for classification and evidence planning.

- Classify: record the facts needed to apply Article 3, Article 6, and Annex III routing.

- Assign: separate provider, deployer, importer, distributor, internal builder, and operator responsibilities.

- Evidence: link instructions, notices, oversight logs, DPIA/FRIA records, incident paths, and review dates.

EU AI Act inventory fields: what to capture and why

Use the table below as the minimum practical field model. Do not treat every field as a public disclosure field. Some records will contain legal, security, procurement, employment, privacy, or commercially sensitive information and should be access-controlled.

| Field group | Inventory field | What to capture | EU AI Act routing |
|---|---|---|---|
| Identity | Inventory ID | Stable system record ID. Do not rely only on vendor product names. | Evidence traceability and change history. |
| Identity | System name | Business name, technical name, model or workflow name, and aliases used by teams. | Prevents duplicate records and shadow AI blind spots. |
| Ownership | Business owner | Process owner accountable for the use case and operating decision. | Deployer governance and sign-off path. |
| Ownership | Technical owner | IT, product, engineering, security, or vendor-management owner. | Monitoring, change control, and incident escalation. |
| Role | Legal role | Provider, deployer, importer, distributor, product manufacturer, authorised representative, or other operator. | Article 3 role definitions and obligation routing. |
| System fit | AI system assessment | Whether the record appears to meet the AI system definition, with rationale and reviewer. | Article 3 threshold screen. |
| Purpose | Intended purpose | Use case, decision supported, process context, conditions of use, and user group. | Article 3 intended purpose and Article 6 classification. |
| Scope | EU exposure | Whether the system is used in the EU, placed on the EU market, put into service in the EU, or affects EU persons or operations. | Scope and deployment route. |
| Vendor | Provider / supplier / integrator | Vendor, model provider, reseller, implementation partner, internal builder, and contract owner. | Provider-deployer handoff and evidence request path. |
| Documentation | Instructions for use | Link to provider instructions, internal use procedure, limitations, and prohibited uses. | Article 26 use according to instructions. |
| Classification | Article 6 route | Safety component route, Annex III route, Article 6(3) exception rationale, or unresolved status. | Article 6 high-risk classification. |
| Classification | Annex III area | Biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration/border, justice, or democratic processes. | Annex III high-risk screening. |
| Classification | Profiling flag | Whether the system profiles natural persons in a relevant Annex III context. | Article 6(3) override risk. |
| People | Affected persons or groups | Employees, applicants, students, customers, patients, citizens, vulnerable groups, or other affected groups. | FRIA, DPIA, transparency, and risk review. |
| Data | Input data categories | Personal data, special-category data, biometric data, operational data, public data, synthetic data, or vendor-supplied data. | Article 26 input-data control and DPIA routing. |
| Output | Output impact | Prediction, recommendation, ranking, decision support, content, alert, score, automated action, or workflow trigger. | Article 3 output type and high-risk relevance. |
| Oversight | Human oversight owner | Named role with competence, authority, training, and escalation route. | Article 26 deployer oversight duty. |
| Monitoring | Operational monitoring | What is monitored, review cadence, thresholds, reviewer, provider notification path, and suspension trigger. | Article 26 monitoring and risk escalation. |
| Logs | Log availability and retention | Whether logs are generated, who controls them, retention period, access control, and export path. | Article 26 log retention where logs are under deployer control. |
| Transparency | Direct human interaction | Whether natural persons interact directly with the AI system and where notice appears. | Article 50 interaction disclosure routing. |
| Transparency | Synthetic content / deepfake / public-interest text | Generated or manipulated image, audio, video, text, deepfake, or public-interest content use. | Article 50 disclosure and marking route. |
| Privacy | DPIA route | DPIA screening status, reviewer, trigger rationale, link to DPIA or no-DPIA rationale. | GDPR Article 35 route and Article 26 reference to DPIA information. |
| Fundamental rights | FRIA route | Article 27 trigger screen, affected groups, harm risks, oversight measures, mitigation and complaint route. | Article 27 FRIA routing. |
| Incident | Incident owner and provider contact | Internal incident owner, provider contact, importer/distributor contact, authority escalation owner, and evidence folder. | Article 26 deployer escalation and Article 73 reporting route. |
| Deadline | Application date | Which Article 113 date applies, review date, and whether deadline assumptions require re-checking. | Article 113 staggered application tracking. |
| Lifecycle | Status and material change | Planned, pilot, production, suspended, retired, materially changed, or under review. | Review, reclassification, and evidence maintenance. |
| Evidence | Evidence folder | Links to risk assessment, vendor pack, instructions, notices, DPIA, FRIA, oversight log, incident record, and approvals. | Audit-readiness and internal control evidence. |
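
As a rough illustration, the field model above could be held in a typed record instead of free-form spreadsheet columns. The sketch below is an assumption, not a prescribed schema: the class name `InventoryRecord`, the helper `missing_fields`, and the choice of fields are hypothetical and cover only a subset of the table.

```python
from dataclasses import dataclass, field, fields
from typing import Optional


@dataclass
class InventoryRecord:
    """Hypothetical record shape covering a subset of the field model."""

    inventory_id: str                      # stable ID, not a vendor product name
    system_name: str
    business_owner: Optional[str] = None
    technical_owner: Optional[str] = None
    legal_role: Optional[str] = None       # e.g. "deployer", "provider"
    intended_purpose: Optional[str] = None
    article_6_route: Optional[str] = None  # e.g. "annex_iii", "unresolved"
    annex_iii_area: Optional[str] = None
    human_oversight_owner: Optional[str] = None
    evidence_links: list[str] = field(default_factory=list)


def missing_fields(record: InventoryRecord) -> list[str]:
    """Return the names of fields that are still empty (None, "" or [])."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Example record with illustrative values only.
record = InventoryRecord(
    inventory_id="AI-0001",
    system_name="CV screening assistant",
    legal_role="deployer",
)
print(missing_fields(record))
```

A completeness check like this only shows which fields are empty. It says nothing about whether the recorded values are legally correct.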

How the inventory routes to specific AI Act articles

The inventory must preserve the reasoning path, not only the end label. A yes/no column called “EU AI Act risk” is too weak. The record should explain which facts caused the system to route toward or away from each article or annex.

Article 3: definitions become inventory fields

Article 3 defines “AI system,” “provider,” “deployer,” “operator,” “intended purpose,” “instructions for use,” “input data,” “substantial modification,” “serious incident,” “deep fake,” and related terms. These should not sit only in a legal memo. They should become record fields or reviewer prompts inside the inventory.

Article 6 and Annex III: classification must retain the route

For high-risk classification, the inventory should show whether the system is a safety component under Article 6(1), falls into an Annex III area under Article 6(2), has an Article 6(3) non-high-risk rationale, involves profiling of natural persons, or remains unresolved. That reasoning is the evidence, not the label alone.
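
One way to keep the route and its reasoning together is to store them in a single entry rather than a yes/no flag. This is a minimal sketch under assumptions: `Article6Route`, `ClassificationEntry`, and `review_flags` are hypothetical names, and the flags only queue a record for human review without reaching a legal conclusion.

```python
from dataclasses import dataclass
from enum import Enum


class Article6Route(Enum):
    SAFETY_COMPONENT = "Article 6(1) safety component"
    ANNEX_III = "Article 6(2) Annex III area"
    ARTICLE_6_3_RATIONALE = "Article 6(3) non-high-risk rationale"
    UNRESOLVED = "unresolved"


@dataclass
class ClassificationEntry:
    route: Article6Route
    facts: str                      # the facts that drove the routing
    reviewer: str
    evidence_link: str
    profiles_natural_persons: bool = False

    def review_flags(self) -> list[str]:
        """Return reasons this entry should go back to a reviewer."""
        flags = []
        if (
            self.route is Article6Route.ARTICLE_6_3_RATIONALE
            and self.profiles_natural_persons
        ):
            flags.append("Article 6(3) rationale recorded together with profiling")
        if self.route is Article6Route.UNRESOLVED:
            flags.append("classification unresolved")
        if not self.facts.strip() or not self.evidence_link.strip():
            flags.append("route stored without facts or evidence link")
        return flags
```

Storing the route as an enumerated value plus free-text facts keeps the label filterable while preserving the reasoning next to it.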

Article 26: deployer obligations need operating evidence

For high-risk systems, deployers need evidence that they use the system according to instructions, assign competent human oversight, manage input data where under their control, monitor operation, escalate risks and serious incidents, keep logs where under their control, inform workers where relevant, and use Article 13 information for DPIA obligations where applicable.
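
The duties listed above can be tracked as one evidence link per duty, so gaps show up before an audit does. The key names and the function `article_26_gaps` below are hypothetical labels chosen for this sketch, not terms from the Act.

```python
# Hypothetical evidence keys, one per deployer duty named in the paragraph above.
ARTICLE_26_EVIDENCE = (
    "instructions_for_use",
    "human_oversight_assignment",
    "input_data_controls",
    "operational_monitoring",
    "risk_and_incident_escalation",
    "log_retention",
    "worker_information",
    "article_13_information_for_dpia",
)


def article_26_gaps(
    evidence: dict[str, str],
    not_applicable: tuple[str, ...] = (),
) -> list[str]:
    """List duties with no evidence link that are not marked as not applicable."""
    return [
        key
        for key in ARTICLE_26_EVIDENCE
        if key not in not_applicable and not evidence.get(key, "").strip()
    ]
```

The `not_applicable` argument exists because several duties apply only "where relevant" or "where under their control". Marking a duty as not applicable should itself be backed by a recorded rationale.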

Article 50: transparency triggers need notice evidence

The inventory should flag direct human interaction, emotion recognition, biometric categorisation, deepfake generation or manipulation, and AI-generated text published to inform the public on matters of public interest. The evidence link should point to notice wording, placement, timing, accessibility, human review, editorial responsibility, and exception rationale where applicable.
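
A simple screen can record each trigger as its own flag and check that a notice evidence link exists whenever any flag is set. The class `Article50Screen` and its attribute names are assumptions made for illustration. Exceptions and their rationale would still need a human reviewer.

```python
from dataclasses import dataclass


@dataclass
class Article50Screen:
    """Hypothetical transparency screen; one flag per trigger named above."""

    direct_human_interaction: bool = False
    emotion_recognition: bool = False
    biometric_categorisation: bool = False
    deepfake_content: bool = False
    public_interest_text: bool = False
    notice_evidence_link: str = ""

    def triggers(self) -> list[str]:
        """Return the names of the triggers that are set."""
        flags = {
            "direct_human_interaction": self.direct_human_interaction,
            "emotion_recognition": self.emotion_recognition,
            "biometric_categorisation": self.biometric_categorisation,
            "deepfake_content": self.deepfake_content,
            "public_interest_text": self.public_interest_text,
        }
        return [name for name, is_set in flags.items() if is_set]

    def needs_notice_evidence(self) -> bool:
        """True when a trigger is set but no notice evidence is linked."""
        return bool(self.triggers()) and not self.notice_evidence_link.strip()
```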

Article 73: deployers need escalation fields, not a fake provider duty

Article 73 is framed around provider reporting of serious incidents. For deployers, the cleaner route is Article 26 incident escalation: inform the provider first, then importer or distributor and relevant market surveillance authorities. If the deployer cannot reach the provider, Article 73 applies mutatis mutandis. The inventory should capture contacts and escalation paths before an incident happens.
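
Capturing contacts ahead of time can be as simple as an ordered lookup that also reports which roles have no contact on file. In this sketch the role keys and `escalation_plan` are hypothetical. The relative order of the importer or distributor and the authority is a simplification made here, since the paragraph above only fixes the provider as the first contact.

```python
# Provider first, per the paragraph above. The order of the remaining two
# roles is an assumption made for this sketch.
ESCALATION_ORDER = (
    "provider",
    "importer_or_distributor",
    "market_surveillance_authority",
)


def escalation_plan(contacts: dict[str, str]) -> tuple[list[str], list[str]]:
    """Return (ordered contact steps, roles with no contact recorded)."""
    steps: list[str] = []
    missing: list[str] = []
    for role in ESCALATION_ORDER:
        contact = contacts.get(role, "").strip()
        if contact:
            steps.append(f"{role}: {contact}")
        else:
            missing.append(role)
    return steps, missing
```

Running this check at onboarding, rather than during triage, surfaces missing contacts while there is still time to request them from the vendor.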

Article 113: every record needs a deadline field

Do not assume every obligation applies on the same date. Article 113 creates staggered application dates. The inventory should store the applicable date, the basis for that date, and the next review point, because a lawfully adopted amendment could move the baseline dates.
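
A deadline field is more useful when it carries its own basis and review point. The sketch below assumes a hypothetical `DeadlineEntry` type. The example date of 2 August 2026 is the general application date in the adopted text of Article 113 and is used here only as an illustration. Which date applies to a given system has to be confirmed against the official text.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DeadlineEntry:
    """Hypothetical deadline field: the date, why it applies, when to re-check."""

    application_date: date
    basis: str
    next_review: date

    def review_due(self, today: date) -> bool:
        """True once the recorded review point has been reached."""
        return today >= self.next_review

    def days_until_application(self, today: date) -> int:
        """Days remaining before the application date (negative if passed)."""
        return (self.application_date - today).days


# Illustrative values; the review date is an arbitrary assumption.
entry = DeadlineEntry(
    application_date=date(2026, 8, 2),
    basis="Article 113 general application date (adopted baseline text)",
    next_review=date(2026, 1, 15),
)
```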

How the inventory routes systems to DPIA and FRIA review

Do not merge DPIA and FRIA into one vague “impact assessment” column. They overlap, but they answer different control questions. The inventory should route both separately and link to the relevant evidence.

| Route | Inventory trigger | Evidence link to keep | Owner to involve |
|---|---|---|---|
| DPIA screening | Personal data, special-category data, biometric data, employee monitoring, profiling, large-scale processing, vulnerable persons, new technology, or high-risk privacy indicators. | DPIA screen, completed DPIA, DPO advice where relevant, no-DPIA rationale, privacy controls, retention decision. | DPO, privacy, legal, security, system owner. |
| FRIA screening | High-risk AI system under Article 6(2) used by covered deployers, including bodies governed by public law, private entities providing public services, and specified Annex III 5(b) and 5(c) uses. | Process description, period and frequency of use, affected groups, harm risks, oversight measures, mitigation, complaint mechanism, authority notification record where applicable. | Legal, compliance, fundamental-rights owner, public-service owner, risk owner. |
| Combined review | The same system has personal-data risk and fundamental-rights risk. | Cross-reference between DPIA and FRIA, with clear indication of what each assessment covers and where one complements the other. | Privacy, legal, risk, process owner, affected function. |

The practical rule: an inventory record should not decide final legal status alone. It should trigger the right review queue early enough that the team can fix design, notices, oversight, data handling, procurement, and approval evidence before deployment.
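
The separate routing can be sketched as a function that places a record into review queues and leaves the decisions to the reviewers. Everything named here (`ImpactScreen`, `review_queues`, the queue labels) is hypothetical, and the conditions are deliberately coarse: they mirror the triggers in the table above, not the full legal tests.

```python
from dataclasses import dataclass, field


@dataclass
class ImpactScreen:
    """Hypothetical screening inputs taken from the inventory record."""

    processes_personal_data: bool = False
    privacy_risk_indicators: list[str] = field(default_factory=list)
    high_risk_under_article_6_2: bool = False
    covered_deployer_or_use: bool = False  # reviewer's Article 27 scope screen


def review_queues(screen: ImpactScreen) -> list[str]:
    """Route a record to review queues; this does not decide legal status."""
    queues = []
    if screen.processes_personal_data or screen.privacy_risk_indicators:
        queues.append("DPIA screening")
    if screen.high_risk_under_article_6_2 and screen.covered_deployer_or_use:
        queues.append("FRIA screening")
    if len(queues) == 2:
        queues.append("Combined review: cross-reference DPIA and FRIA")
    return queues
```

Keeping the two conditions independent means a record can land in one queue, both, or neither, which is the separation the table asks for.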

Build the inventory in seven steps

1. Start with use cases, not vendors. One vendor product can support several AI systems or workflows with different risk routes.

2. Assign business and technical owners. If nobody owns the record, nobody will update classification, notices, logs, or incidents.

3. Screen Article 3 fit. Record whether the system appears to meet the AI system definition and who reviewed that conclusion.

4. Map Article 6 and Annex III. Store the route, the facts, and the evidence link. Do not collapse it into a yes/no checkbox.

5. Add deployer operating fields. Instructions for use, human oversight, input data, monitoring, logs, workplace notice, and provider escalation are Article 26 evidence fields.

6. Add Article 50 and impact-assessment fields. Disclosure, DPIA, and FRIA routing should be visible before deployment, not discovered during audit preparation.

7. Set review dates. Review records when the system changes, the provider changes instructions, the use case expands, the legal baseline changes, or an incident occurs.
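
The review triggers in the last step can be checked mechanically against a log of events for each record. This is a sketch under assumptions: the trigger names and `review_reasons` are hypothetical, and how events get logged is left to the organisation's own tooling.

```python
from datetime import date

# Hypothetical event names, one per review trigger listed in the last step.
REVIEW_TRIGGERS = frozenset({
    "system_changed",
    "provider_instructions_changed",
    "use_case_expanded",
    "legal_baseline_changed",
    "incident_occurred",
})


def review_reasons(events: set[str], next_review: date, today: date) -> list[str]:
    """Return why a record needs review now; an empty list means no review."""
    reasons = sorted(events & REVIEW_TRIGGERS)
    if today >= next_review:
        reasons.append("scheduled_review_date_reached")
    return reasons
```

Combining event-driven and calendar-driven reasons in one result helps a record avoid going stale when nothing visibly changes.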

Common inventory failure modes

| Failure mode | Why it breaks | Fix |
|---|---|---|
| Only tracking SaaS vendors | Internal models, embedded models, AI features, spreadsheets, agents, and workflow automations disappear from view. | Track use cases and systems, not only vendors. |
| No intended-purpose field | Article 6, Article 26, and provider instructions become hard to apply. | Capture use context, decision supported, affected process, and limits. |
| No role field | The team cannot separate provider, deployer, importer, distributor, and internal builder duties. | Record role per system and update after material change. |
| No Annex III reasoning | High-risk classification becomes an unsupported label. | Store the Annex III area, facts, reviewer, and rationale. |
| No Article 50 field | Disclosure obligations are discovered after launch or after content is already published. | Screen interaction, biometric/emotion use, deepfake, synthetic content, and public-interest text. |
| No incident contacts | Teams lose time during serious-incident triage and provider escalation. | Store internal owner, provider contact, importer/distributor contact, and authority escalation path. |
| No review cadence | Systems drift, use cases expand, and evidence becomes stale. | Set review triggers for change, expansion, incident, new provider instructions, and legal updates. |

Free-tool-first next step

Do not buy a toolkit before you know where the evidence gaps are. Start by testing a small inventory sample: five production AI systems, two pilots, one employee-facing tool, one customer-facing tool, and one vendor-supplied AI feature embedded inside existing software.

Open the free inventory template →

Use the field model to start a practical AI system register for EU AI Act readiness.

Use the evidence starter library →

Connect inventory records to instructions, oversight logs, DPIA/FRIA records, notices, and incident evidence.

If the same gaps repeat across multiple systems, that is a buying signal for a more complete evidence pack or guided implementation. The inventory should reveal that need, not hide it.

FAQ: AI system inventory fields under the EU AI Act

An EU AI Act inventory is a structured register of AI systems, use cases, owners, roles, vendors, data, outputs, classification routes, deployer duties, transparency triggers, impact-assessment routing, incident contacts, and review dates. It is not only a procurement list. Its job is to route each system to the right legal, privacy, operational, and evidence questions before deployment or material change.

The AI Act does not impose a single, universal “inventory” obligation on every deployer. The practical need comes from the Act’s role definitions, high-risk classification rules, deployer obligations, transparency duties, FRIA routing, incident escalation, and staggered application dates. A field-level inventory is the cleanest way to keep those decisions traceable.

Start with system name, business owner, technical owner, provider or supplier, intended purpose, legal role, EU exposure, affected people, input data, output impact, Annex III area, Article 50 trigger, human oversight owner, log availability, DPIA/FRIA routing, incident contact, and review date. These fields usually decide the next evidence request faster than broad policy text.

The inventory should preserve the classification path: safety component under Article 6(1), Annex III use case under Article 6(2), possible non-high-risk rationale under Article 6(3), profiling override, or unresolved status. Do not store only “high-risk: yes/no.” Store the reasoning and the evidence link that explains why the classification was reached.

A vendor list tells you who sold or supplied a tool. An EU AI Act-ready inventory tells you what the system does, where it is used, which people are affected, whether Annex III or Article 50 may be triggered, which deployer obligations apply, what evidence exists, and who must act if the system changes or causes a serious incident.

The inventory should include Article 50 fields. It should record whether the system directly interacts with natural persons, generates or manipulates synthetic audio, image, video, or text, supports emotion recognition or biometric categorisation, or publishes AI-generated text on matters of public interest. The record should also point to notice wording, placement evidence, human review, editorial responsibility, and exception rationale where relevant.

A DPIA route is relevant where personal-data processing may create high risk under GDPR screening. A FRIA route is relevant for certain high-risk AI systems covered by Article 27, especially specified public-sector, public-service, creditworthiness, and life or health insurance uses. The inventory should not decide everything alone. It should flag the record for privacy, legal, and fundamental-rights review.

This page is not legal advice. It is an operational field model for EU AI Act readiness that helps teams structure inventory records and evidence trails. It does not determine legal status, confirm compliance, replace counsel, or resolve national-law, sector, employment, data-protection, procurement, or enforcement questions. Use it as a control and evidence design aid.

Source and review note

This page was reviewed against the AI Act Service Desk pages and the official Regulation (EU) 2024/1689 text linked from the Service Desk. It is operational guidance for evidence planning. It is not legal advice and does not guarantee compliance.

- Article 3: definitions

- Article 6: classification rules for high-risk AI systems

- Annex III: high-risk AI systems

- Article 26: obligations of deployers of high-risk AI systems

- Article 27: fundamental rights impact assessment

- Article 50: transparency obligations

- Article 73: reporting of serious incidents

- Article 113: entry into force and application

- Regulation (EU) 2016/679: GDPR official text

Deadline status: This page uses Article 113 of the adopted AI Act as the baseline. If a later enacted amendment changes application dates, update the inventory date field and this page’s review note.

Disclaimer: Validate legal classification, provider/deployer role, Article 26 duties, Article 50 disclosures, DPIA implications, FRIA routing, employment-law duties, procurement duties, sector-specific obligations, national-law questions, and reporting duties with qualified counsel or a competent regulatory adviser.

About the author: Abhishek G Sharma is the founder of Move78 International Limited and holds ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, and CAIRO certifications.