Quick answer

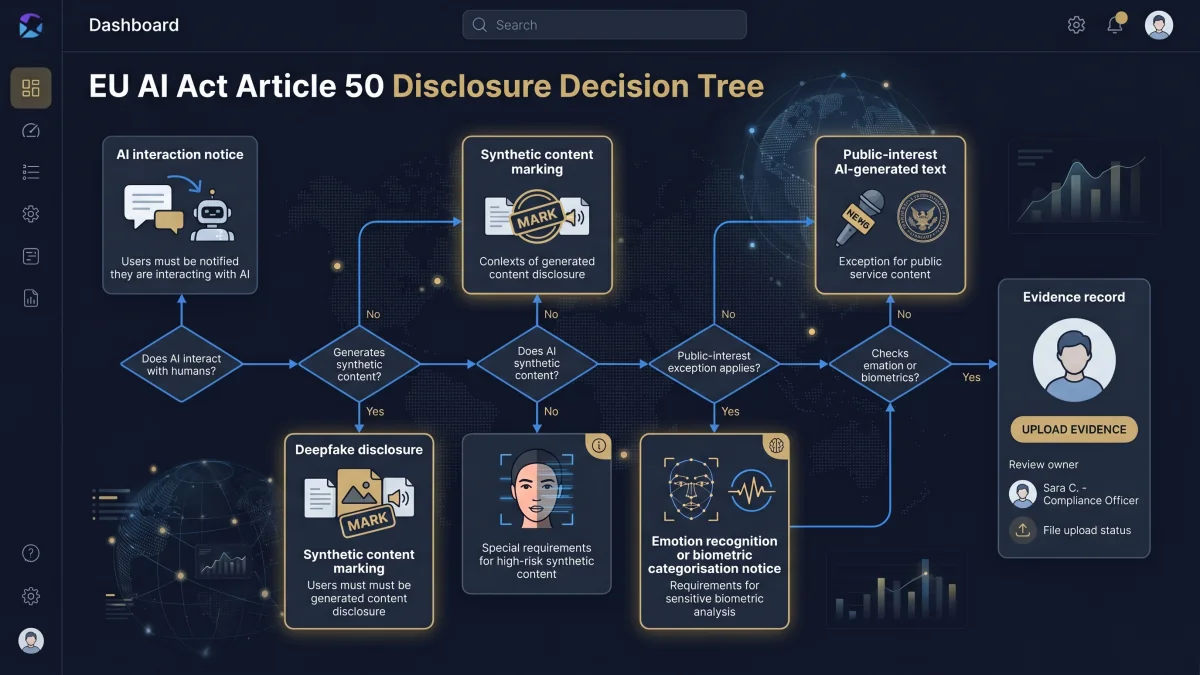

EU AI Act Article 50 disclosure work starts by identifying the transparency scenario: direct AI interaction, synthetic content marking, emotion recognition or biometric categorisation, deepfake disclosure, or public-interest AI-generated text. This local-only tool turns those inputs into conservative draft notices, evidence records, and escalation notes for legal, privacy, product, accessibility, or communications review.

Current-law planning note

Article 50 contains transparency obligations for providers and deployers of certain AI systems, and the AI Act Service Desk states that Article 50 transparency requirements apply as of 2 August 2026. Use this page as an operational screening aid only. Treat the Commission Code of Practice on marking and labelling AI-generated content as implementation context unless its final approval status is verified. Final notice design and legal interpretation should be reviewed by qualified counsel.

Run the Article 50 decision tree

Local-only builder

Screen the transparency trigger and draft the notice

Use a working name and select the scenario. The output is generated in this browser. Do not enter personal data, client content, prompts, logs, model outputs, credentials, trade secrets, or sensitive system details.

Run the decision tree to generate scenario tags, draft notice copy, evidence records, and escalation notes.

Article 50 trigger map

| Scenario | Operational question | Typical output |
|---|---|---|
| Direct AI interaction | Will a natural person interact directly with the AI system? | AI interaction notice or rationale if obvious in context. |
| Synthetic content | Does the system generate synthetic audio, image, video, or text? | Provider marking request and deployer publication check. |
| Emotion / biometric | Are people exposed to emotion recognition or biometric categorisation? | Notice plus privacy/legal escalation. |
| Deepfake | Could AI-generated or manipulated image, audio, or video falsely appear authentic? | Disclosure notice plus communications/legal review. |
| Public-interest text | Is AI-generated or manipulated text published to inform the public on matters of public interest? | Disclosure review, editorial-control check, and retained evidence. |
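The trigger map can be read as a simple lookup: each operational question answered "yes" adds a scenario tag and its typical output. The following is a minimal sketch of that reading, not the logic of the builder on this page. The tag names and the `screen` function are hypothetical, and only the output strings come from the table.

```python
# Hypothetical scenario tags; the output strings are copied from the trigger map table.
TRIGGER_MAP = {
    "direct_ai_interaction": "AI interaction notice or rationale if obvious in context.",
    "synthetic_content": "Provider marking request and deployer publication check.",
    "emotion_biometric": "Notice plus privacy/legal escalation.",
    "deepfake": "Disclosure notice plus communications/legal review.",
    "public_interest_text": "Disclosure review, editorial-control check, and retained evidence.",
}


def screen(answers: dict[str, bool]) -> list[tuple[str, str]]:
    """Return (scenario tag, typical output) for every question answered yes."""
    unknown = set(answers) - set(TRIGGER_MAP)
    if unknown:
        # Fail loudly rather than silently dropping a mistyped scenario.
        raise ValueError(f"Unknown scenario tags: {sorted(unknown)}")
    # Unanswered questions are treated as "no" in this sketch.
    return [(tag, out) for tag, out in TRIGGER_MAP.items() if answers.get(tag, False)]


result = screen({"direct_ai_interaction": True, "deepfake": True, "synthetic_content": False})
for tag, output in result:
    print(f"{tag}: {output}")
```

More than one scenario can apply to the same workflow, so the sketch returns a list rather than a single tag. Treating an unanswered question as "no" is a simplification; a more conservative screen might flag it for review instead.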

Provider vs deployer split

The Article 50 provider/deployer split matters because provider-side marking and deployer-side notice work are different evidence tasks. A provider may need to support machine-readable marking and detectability for synthetic outputs. A deployer may need to disclose deepfakes, public-interest AI-generated or manipulated text, or the operation of emotion recognition or biometric categorisation systems in the deployment context.

Provider-side evidence

Request machine-readable marking details, detection method, technical limitations, and change-control notes.

Deployer-side evidence

Retain notice copy, screenshot, placement, exposure timing, approval owner, publication context, and review rationale.
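Because an organisation can act as provider, deployer, or both for a given workflow, the two evidence lists can be combined per role. The sketch below assumes a hypothetical `evidence_checklist` helper. The item names are taken from the two lists above, and nothing here reflects how the builder on this page is implemented.

```python
# Evidence items copied from the provider-side and deployer-side lists above.
EVIDENCE_BY_ROLE = {
    "provider": [
        "machine-readable marking details",
        "detection method",
        "technical limitations",
        "change-control notes",
    ],
    "deployer": [
        "notice copy",
        "screenshot",
        "placement",
        "exposure timing",
        "approval owner",
        "publication context",
        "review rationale",
    ],
}


def evidence_checklist(roles: set[str]) -> dict[str, list[str]]:
    """Return the evidence items to request or retain for each role held."""
    unknown = set(roles) - set(EVIDENCE_BY_ROLE)
    if unknown:
        raise ValueError(f"Unknown roles: {sorted(unknown)}")
    # Copy each list so callers cannot mutate the shared definitions.
    return {role: list(EVIDENCE_BY_ROLE[role]) for role in sorted(roles)}


checklist = evidence_checklist({"provider", "deployer"})
for role, items in checklist.items():
    print(f"{role}: {', '.join(items)}")
```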

Conservative notice examples

AI interaction notice

“You are interacting with an AI system. Responses may be automated and should be checked before you rely on them for important decisions.”

AI-generated content notice

“This content was generated or manipulated by AI and reviewed before publication.”

Deepfake / manipulated media notice

“This image, audio, or video has been artificially generated or manipulated and should not be treated as an authentic recording of real events.”

Emotion / biometric notice

“This system uses biometric categorisation or emotion recognition functionality. Review the linked privacy notice before proceeding.”

Evidence to retain

Article 50 evidence should show what notice was used, where it appeared, when people saw it, who approved it, and which AI system or content workflow it covered.

- Notice copy and version date.

- Screenshot or screen recording of placement.

- System name, vendor/model, and workflow owner.

- Publication or first-exposure context.

- Approval owner and review date.

- Provider marking evidence where synthetic content is involved.

- Human review or editorial-control record where an exception or publication rationale relies on it, including for public-interest AI-generated text.

- Legal, privacy, product, accessibility, or communications escalation record.
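One way to keep this evidence consistent across workflows is a structured record with a completeness check. The sketch below is illustrative only: the `EvidenceRecord` class and its field names are hypothetical and cover the core items in the list above, and the values shown are placeholders. Keep personal data and client content out of such records.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class EvidenceRecord:
    """Hypothetical record covering the core evidence items listed above."""
    notice_copy: str = ""
    notice_version_date: Optional[date] = None
    placement_capture: str = ""   # reference to a screenshot or screen recording
    system_name: str = ""
    vendor_model: str = ""
    workflow_owner: str = ""
    exposure_context: str = ""    # publication or first-exposure context
    approval_owner: str = ""
    review_date: Optional[date] = None

    def missing(self) -> list[str]:
        """Names of fields that are still empty, in declaration order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Placeholder values only.
record = EvidenceRecord(
    notice_copy="You are interacting with an AI system.",
    notice_version_date=date(2026, 1, 1),
    system_name="example-assistant",
    approval_owner="Legal review lead",
)
print("Still to collect:", ", ".join(record.missing()))
```

Scenario-specific items, such as provider marking evidence or escalation records, could be added as further optional fields. A non-empty `missing()` result is a prompt for follow-up, not a compliance finding.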

Frequently asked questions

An Article 50 disclosure is information given to people when certain AI transparency scenarios apply, such as direct AI interaction, synthetic content, emotion recognition or biometric categorisation, deepfakes, or certain public-interest AI-generated text. The disclosure should be clear, distinguishable, timely, and accessible.

This Article 50 decision tree is for product owners, deployers, providers, compliance leads, DPOs, legal teams, communications owners, and procurement teams who need an operational first pass before approving an AI interaction or AI-generated content workflow.

Article 50 does not apply to every AI system. It targets specific transparency scenarios. A low-risk internal analytics tool may not trigger the same notice work as a public chatbot, a synthetic-media workflow, a deepfake-style video, or an emotion recognition system.

A chatbot or direct AI interaction should be reviewed under Article 50 because providers must ensure that people are informed they are interacting with an AI system unless that is obvious from the point of view of a reasonably well-informed, observant, and circumspect person in context. The decision tree records the context and generates a conservative notice draft where review is needed.

Provider marking normally concerns the provider-side obligation to mark the outputs of AI systems that generate synthetic audio, image, video, or text in a machine-readable format, so that they are detectable as artificially generated or manipulated. Deployer disclosure normally concerns deployment-facing notices, such as deepfake disclosure, public-interest AI-generated or manipulated text disclosure, or informing people exposed to emotion recognition or biometric categorisation.

Deepfake or manipulated image, audio, or video content should be escalated for legal and communications review before publication or use. The tool generates a conservative disclosure draft and an evidence checklist covering publication context, owner approval, notice text, screenshots, and retained source files.

Article 50 does not replace GDPR, ePrivacy, employment, biometric, sector, or national transparency duties. Emotion recognition and biometric categorisation especially need privacy review because Article 50 references personal-data processing under applicable EU data protection instruments.

This tool does not prove Article 50 compliance, provide legal advice, or certify a notice. It creates a structured draft output that should be reviewed by qualified legal, privacy, communications, product, or sector specialists before reliance.

Source and review note

This page provides operational guidance, not legal advice. It was last reviewed on 3 May 2026 against Article 50 and Article 113 of Regulation (EU) 2024/1689 using the AI Act Service Desk text, the AI Act Service Desk implementation FAQ, and the European Commission page on the Code of Practice on marking and labelling AI-generated content. The Code of Practice is treated as implementation context unless final approval status is verified. Final legal, privacy, regulatory, communications, accessibility, or sector-specific decisions should be validated with qualified professionals.