Quick answer

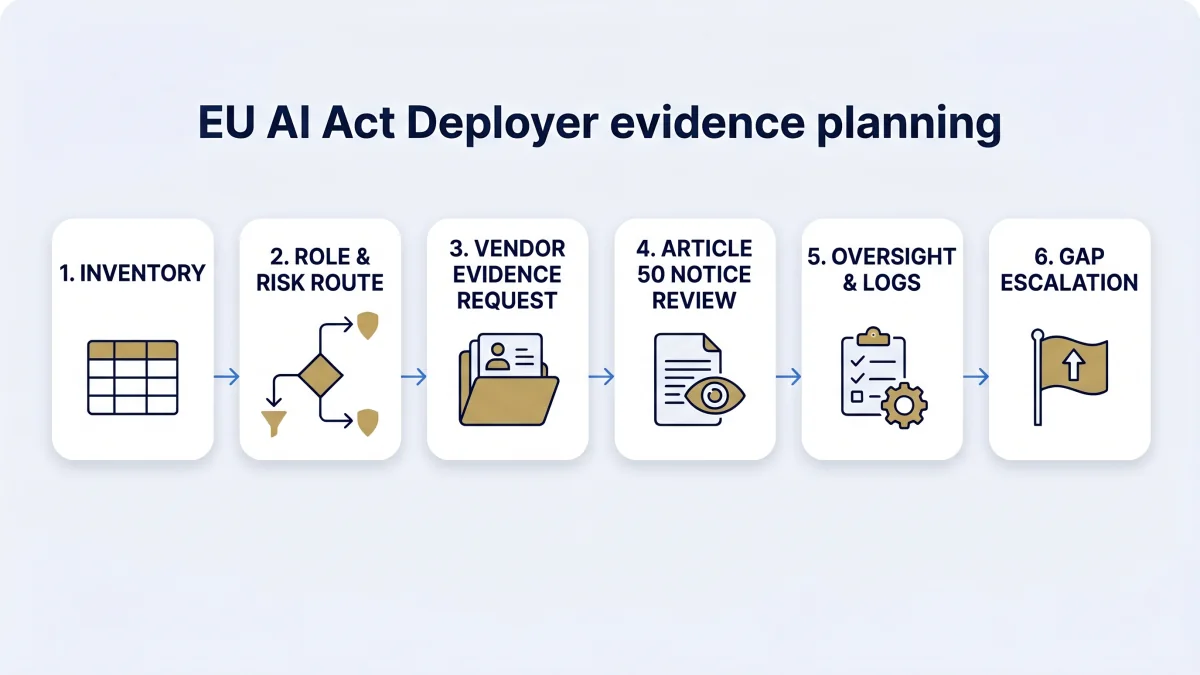

An EU AI Act evidence planner should create one reviewable evidence file per AI system. In 30 days, a deployer can build a baseline inventory, record role and risk decisions, request vendor documentation, prepare transparency evidence, assign oversight, check logs, and list unresolved gaps for legal, privacy, security, procurement, or sector review.

The planner does not prove compliance. It turns scattered AI governance work into a controlled evidence sequence.

Current-law baseline for this planner

Current-law planning assumption: Regulation (EU) 2024/1689 applies from 2 August 2026, with exceptions. Chapters I and II applied from 2 February 2025; Chapter III Section 4, Chapter V, Chapter VII, Chapter XII and Article 78 applied from 2 August 2025, with the Article 101 exception; Article 6(1) and corresponding obligations apply from 2 August 2027.

The European Commission also states that the AI Act entered into force on 1 August 2024, becomes fully applicable on 2 August 2026 with exceptions, and that high-risk rules apply in August 2026 and August 2027 depending on the category. Treat simplification or delay proposals as proposal-stage until enacted.
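To check which of these dates are already live on a given planning day, a small lookup over the baseline above is enough. This is a minimal Python sketch: the milestone labels and the function names `milestones_in_effect` and `next_milestone` are hypothetical, and the list encodes only the current-law baseline stated here, not any pending simplification or delay proposal.

```python
from __future__ import annotations

from datetime import date

# Dates as summarised in the planning baseline above (Article 113 of
# Regulation (EU) 2024/1689). Labels are planning shorthand, not legal text.
MILESTONES: list[tuple[date, str]] = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Chapters I and II apply"),
    (date(2025, 8, 2), "Chapter III Section 4, Chapters V, VII, XII and Article 78 apply (except Article 101)"),
    (date(2026, 8, 2), "General date of application"),
    (date(2027, 8, 2), "Article 6(1) and corresponding obligations apply"),
]


def milestones_in_effect(on: date) -> list[str]:
    """Labels of milestones dated on or before `on` (the list is kept in date order)."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> tuple[date, str] | None:
    """First milestone strictly after `on`, or None once every date has passed."""
    for start, label in MILESTONES:
        if start > on:
            return start, label
    return None
```

For example, on 15 January 2026 the first three entries are in effect and the next milestone is the general date of application on 2 August 2026. If a proposal is enacted, only the `MILESTONES` list needs to change.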

What the 30-day evidence planner should produce

The 30-day evidence planner should produce a small but usable pack. Keep it system-specific. A generic AI policy does not answer which tool was used, who owns it, what the vendor provided, what risk route was selected, or which notices and logs exist.

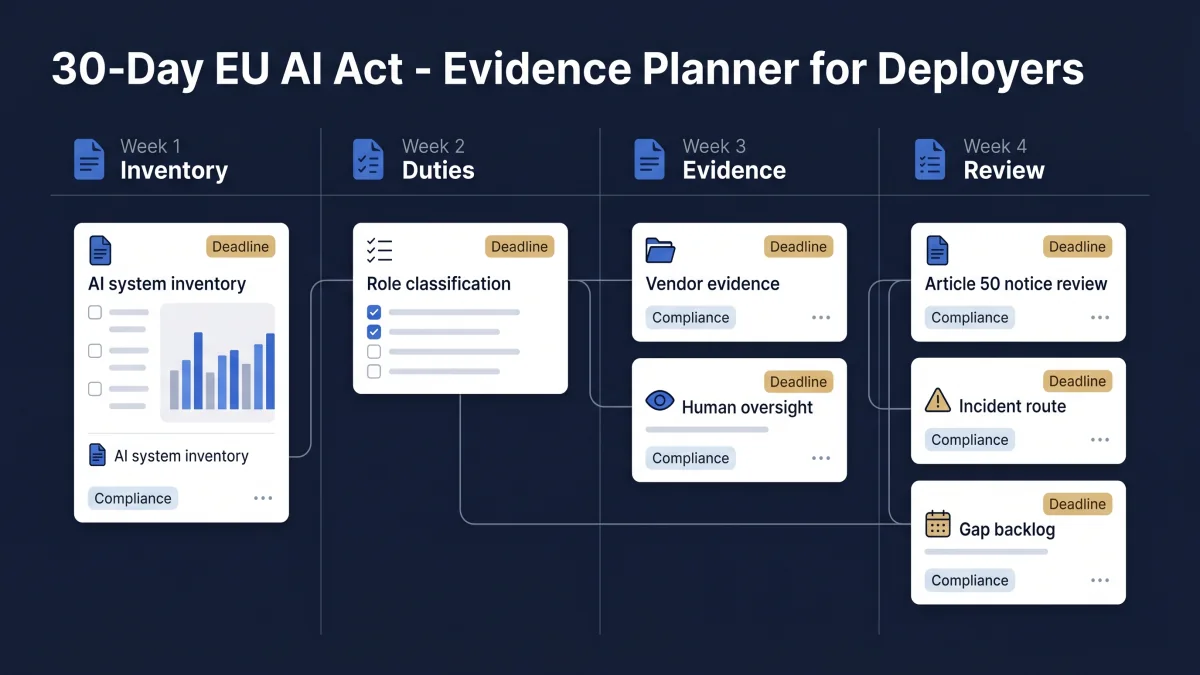

30-day EU AI Act evidence plan

Use the planner as a four-week sprint. The goal is not to settle every legal question; it is to stop guessing and create records a reviewer can inspect.

Week 1: Find and classify the systems

Build or clean the AI inventory. Record owner, vendor, intended purpose, users, data categories, region, role assumption, and obvious Article 50 or Annex III signals.

Output: first inventory register and unresolved classification questions.
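The Week 1 register can be kept as structured records so that blank fields surface as open questions automatically. A minimal Python sketch, assuming a hypothetical `InventoryEntry` record whose fields mirror the list above; the field names and the choice of required fields are illustrative, not a prescribed schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class InventoryEntry:
    """One row of the AI system inventory; field names are illustrative."""

    system: str
    owner: str = ""
    vendor: str = ""  # in-house systems might record the build team here instead
    intended_purpose: str = ""
    users: str = ""
    data_categories: list[str] = field(default_factory=list)
    region: str = ""
    role_assumption: str = ""  # working assumption such as "deployer", not a legal conclusion
    article_50_signals: list[str] = field(default_factory=list)
    annex_iii_signals: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)


# Fields that must be filled for a baseline record. The signal lists may
# legitimately be empty, so they are not required.
REQUIRED_FIELDS = (
    "owner", "vendor", "intended_purpose", "users",
    "data_categories", "region", "role_assumption",
)


def missing_fields(entry: InventoryEntry) -> list[str]:
    """Required fields that are still blank, in REQUIRED_FIELDS order."""
    return [name for name in REQUIRED_FIELDS if not getattr(entry, name)]


def unresolved_questions(entry: InventoryEntry) -> list[str]:
    """Week 1 output for one system: blank fields plus recorded open questions."""
    blanks = [f"{entry.system}: {name} not recorded" for name in missing_fields(entry)]
    return blanks + [f"{entry.system}: {q}" for q in entry.open_questions]
```

Running `unresolved_questions` across every entry gives the "unresolved classification questions" output without a separate spreadsheet.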

Week 2: Map duties and evidence gaps

Route each system to deployer obligations, vendor documentation needs, high-risk indicators, FRIA/DPIA review, input data controls, monitoring, and log-retention questions.

Output: obligation map and gap backlog.
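The routing step can be expressed as simple triage rules that decide which reviewers look at a system next. This is a minimal Python sketch under stated assumptions: the rules in `review_routes` are hypothetical placeholders chosen for illustration, and they decide only where a system goes for review, not how it is legally classified.

```python
from __future__ import annotations


def review_routes(system: dict) -> list[str]:
    """Return the review routes a system should enter (hypothetical triage rules).

    `system` is a plain dict with keys such as "vendor", "data_categories",
    "annex_iii_signals" and "article_50_signals". The rules only decide who
    looks at the system next; they do not classify it legally.
    """
    routes = ["deployer obligations", "monitoring and log retention"]
    if system.get("vendor"):
        routes.append("vendor documentation request")
    if system.get("annex_iii_signals"):
        routes.append("high-risk indicator review")
    if system.get("annex_iii_signals") or system.get("data_categories"):
        routes.append("FRIA/DPIA review")
    if system.get("article_50_signals"):
        routes.append("Article 50 review")
    if system.get("data_categories"):
        routes.append("input data controls")
    return routes


def gap_backlog(systems: list[dict]) -> list[tuple[str, str]]:
    """Flatten routes into (system name, route) pairs: the starting gap backlog."""
    return [(s["name"], route) for s in systems for route in review_routes(s)]
```

Every system gets the deployer-obligation and log-retention routes by default, so a system with no flags still lands in the backlog rather than silently dropping out.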

Week 3: Build operating records

Create or update oversight records, Article 50 notice evidence, vendor request files, literacy evidence, incident escalation path, and review-owner assignments.

Output: working evidence file per priority system.
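Article 50 notice evidence is easier to reconstruct when the record shows exactly which wording was approved. A minimal Python sketch, assuming a hypothetical `NoticeVersion` record built from the Article 50 notice file fields in the artifact table: it stores a SHA-256 fingerprint of the approved text so a later check can flag wording that changed after approval.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass
from datetime import date


def digest(text: str) -> str:
    """SHA-256 hex digest of the exact notice wording."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class NoticeVersion:
    """One approved version of an Article 50 notice (hypothetical record shape)."""

    system: str
    channel: str
    text: str
    version: str
    approved_by: str
    approved_on: date
    proof: str  # e.g. file name of a screenshot taken after deployment

    @property
    def fingerprint(self) -> str:
        return digest(self.text)


def text_changed_since_approval(approved: NoticeVersion, live_text: str) -> bool:
    """True when the wording users now see differs from the approved version."""
    return digest(live_text) != approved.fingerprint
```

A fingerprint lets the gap register reference an approved version without copying the full notice text into every review note.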

Week 4: Review and escalate decisions

Review gaps with legal, privacy, procurement, security, HR, or sector owners. Mark what is closed, open, blocked by vendor, blocked by guidance, or ready for management review.

Output: signed gap register and next 30-day action list.
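The five review states above map naturally onto an enumeration, which keeps status labels consistent across teams. A minimal Python sketch; `GapStatus`, `Gap`, and the helper functions are hypothetical names, and the rule that every non-closed gap carries forward is an assumption about how the next 30-day list is built.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from enum import Enum


class GapStatus(Enum):
    CLOSED = "closed"
    OPEN = "open"
    BLOCKED_BY_VENDOR = "blocked by vendor"
    BLOCKED_BY_GUIDANCE = "blocked by guidance"
    READY_FOR_MANAGEMENT = "ready for management review"


@dataclass
class Gap:
    description: str
    owner: str
    status: GapStatus


def status_summary(gaps: list[Gap]) -> dict[str, int]:
    """Count gaps per status; every status appears, even with a zero count."""
    counts = Counter(gap.status for gap in gaps)
    return {status.value: counts[status] for status in GapStatus}


def next_cycle_actions(gaps: list[Gap]) -> list[Gap]:
    """Every gap that is not closed carries into the next 30-day action list."""
    return [gap for gap in gaps if gap.status is not GapStatus.CLOSED]


def unowned(gaps: list[Gap]) -> list[Gap]:
    """Gaps with no named owner, which should be assigned before sign-off."""
    return [gap for gap in gaps if not gap.owner.strip()]
```

Checking `unowned` before sign-off is a cheap way to catch the "open issues disappear between teams" failure listed in the artifact table.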

| Day range | Main task | Evidence output | Suggested owner | EU AI Compass route |
|---|---|---|---|---|
| Days 1–3 | List AI systems and owners | Inventory entry for each known system | AI governance lead / IT owner | AI system inventory fields |
| Days 4–7 | Confirm role and risk route | Role assumption, high-risk notes, Article 50 flags | Compliance / legal / product owner | High-risk system classifier |
| Days 8–12 | Map deployer duties | Operating controls, oversight owner, log source, monitoring routine | Risk / compliance owner | Deployer obligation assessment |
| Days 13–16 | Request vendor records | Vendor evidence request and response tracker | Procurement / vendor owner | Vendor due diligence guide |
| Days 17–20 | Review transparency triggers | Article 50 scenario decision, notice record, screenshot or approval proof | Legal / product / communications owner | Article 50 implementation pack |
| Days 21–24 | Check oversight, logs, and incidents | Oversight log, retention note, incident escalation route | Security / risk / system owner | EU AI Act evidence checklist |
| Days 25–30 | Prepare the review pack | Gap register, decision owners, next review date, escalation list | AI governance lead / executive sponsor | Auditor and regulator questions hub |
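Teams that track the sprint in a script or a spreadsheet export can encode the day ranges as data and confirm that no day is left unassigned. A minimal Python sketch, assuming the day ranges and tasks in the table; `PLAN`, `task_for_day`, and `coverage_gaps` are hypothetical names, and owners are omitted for brevity.

```python
from __future__ import annotations

# Day ranges and tasks copied from the table above (owners omitted).
PLAN: list[tuple[int, int, str]] = [
    (1, 3, "List AI systems and owners"),
    (4, 7, "Confirm role and risk route"),
    (8, 12, "Map deployer duties"),
    (13, 16, "Request vendor records"),
    (17, 20, "Review transparency triggers"),
    (21, 24, "Check oversight, logs, and incidents"),
    (25, 30, "Prepare the review pack"),
]


def task_for_day(day: int) -> str:
    """Return the planned task for a sprint day (1 to 30 inclusive)."""
    for start, end, task in PLAN:
        if start <= day <= end:
            return task
    raise ValueError(f"day {day} is outside the 30-day plan")


def coverage_gaps(plan=PLAN, first: int = 1, last: int = 30) -> list[int]:
    """Sprint days that no range covers; an empty list means full coverage."""
    covered = {d for start, end, _ in plan for d in range(start, end + 1)}
    return [d for d in range(first, last + 1) if d not in covered]
```

If a team shortens or reorders a phase, rerunning `coverage_gaps` shows immediately whether any day has fallen out of the plan.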

Evidence artifact table

| Artifact | Why it matters | Minimum useful content | Risk if missing |
|---|---|---|---|
| AI system inventory | Controls scope and ownership. | System, owner, vendor, purpose, users, data, region, status. | Teams cannot prove what has been reviewed. |
| Role and risk record | Separates provider, deployer, and other operator assumptions. | Role rationale, Annex III indicators, Article 50 triggers, open legal questions. | Evidence requests and controls are aimed at the wrong party. |
| Vendor evidence file | Shows what the deployer received before use. | Instructions, limitations, logging, oversight support, security, changes, incident path. | Procurement relies on claims rather than reviewable records. |
| Oversight and monitoring record | Connects human responsibility to operating use. | Named owner, competence, review cadence, escalation threshold, stop-use route. | Oversight exists only in policy language. |
| Article 50 notice file | Supports transparency trigger decisions. | Scenario decision, notice text, channel, approval, version, screenshot or proof. | Disclosure decisions become hard to reconstruct. |
| Gap and escalation register | Turns uncertainty into owned follow-up work. | Gap, owner, severity, source, decision needed, due date, status. | Open issues disappear between teams. |
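The "minimum useful content" column can double as a completeness check for each system's evidence file. A minimal Python sketch, assuming an evidence file stored as a nested dict of artifact name to recorded items; `MINIMUM_CONTENT` and `missing_content` are hypothetical names, and the item labels are copied from the table rather than from any prescribed schema.

```python
from __future__ import annotations

# Minimum useful content per artifact, taken from the table above.
MINIMUM_CONTENT: dict[str, list[str]] = {
    "AI system inventory": ["system", "owner", "vendor", "purpose", "users", "data", "region", "status"],
    "Role and risk record": ["role rationale", "Annex III indicators", "Article 50 triggers", "open legal questions"],
    "Vendor evidence file": ["instructions", "limitations", "logging", "oversight support", "security", "changes", "incident path"],
    "Oversight and monitoring record": ["named owner", "competence", "review cadence", "escalation threshold", "stop-use route"],
    "Article 50 notice file": ["scenario decision", "notice text", "channel", "approval", "version", "screenshot or proof"],
    "Gap and escalation register": ["gap", "owner", "severity", "source", "decision needed", "due date", "status"],
}


def missing_content(evidence_file: dict[str, dict[str, str]]) -> dict[str, list[str]]:
    """Return, per artifact, the minimum items that are absent or blank.

    `evidence_file` maps artifact name -> {item name: recorded value}.
    An artifact missing entirely reports every one of its items.
    """
    report: dict[str, list[str]] = {}
    for artifact, items in MINIMUM_CONTENT.items():
        recorded = evidence_file.get(artifact, {})
        gaps = [item for item in items if not str(recorded.get(item, "")).strip()]
        if gaps:
            report[artifact] = gaps
    return report
```

Each entry in the report can be copied straight into the gap and escalation register as a new gap with an owner and due date.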

Common mistakes

- Starting with policy before inventory. A policy cannot identify which AI systems exist, who owns them, or which vendors need evidence requests.

- Treating vendor documentation as complete deployer evidence. Vendor files help, but the deployer still needs operating records, oversight assignments, notices, and gap decisions.

- Using “high-risk: no” without a rationale. Keep the classification logic, unresolved assumptions, and source documents.

- Leaving Article 50 to marketing or UX alone. Disclosure evidence should connect to system purpose, user interaction, content type, approval, and deployment proof.

- Ignoring privacy and retention constraints. Logs and records may contain personal data. Retention and access controls need GDPR and local-law review.
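Two of these mistakes, a classification without a rationale and logs kept without a retention review, can be caught with a simple record check. A minimal Python sketch, assuming a hypothetical `RiskRecord` shape; the warning rules are illustrative and do not replace the legal or GDPR review described above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class RiskRecord:
    """Hypothetical shape of a role and risk record for one system."""

    system: str
    high_risk: bool | None  # None means the classification is not yet decided
    rationale: str = ""
    assumptions: list[str] = field(default_factory=list)
    source_documents: list[str] = field(default_factory=list)
    logs_contain_personal_data: bool = False
    retention_reviewed: bool = False


def review_warnings(record: RiskRecord) -> list[str]:
    """List weaknesses a reviewer would challenge in this record."""
    warnings: list[str] = []
    if record.high_risk is None:
        warnings.append("classification not yet decided")
    elif not record.rationale.strip():
        # Applies to "yes" and "no" alike; "no" without reasons is the common case.
        warnings.append("classification has no rationale")
    if record.high_risk is not None and not record.source_documents:
        warnings.append("no source documents behind the classification")
    if record.logs_contain_personal_data and not record.retention_reviewed:
        warnings.append("log retention not reviewed for GDPR/local law")
    return warnings
```

A bare `RiskRecord("Chatbot", high_risk=False)` produces two warnings, which is exactly the "high-risk: no" pattern the list warns against.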

FAQ

What is a 30-day EU AI Act evidence planner?

A 30-day EU AI Act evidence planner is a short operating workflow for deployers. It helps a team identify AI systems, assign owners, classify risk, request vendor records, prepare Article 50 disclosure evidence, review oversight, and record open gaps. It is an evidence baseline, not a compliance certificate.

Who should use this planner?

Use this planner if your organisation deploys AI systems for internal operations, customer service, HR, education, finance, healthcare, public services, procurement, or regulated decision support. It is especially useful for CTOs, CISOs, DPOs, legal teams, procurement owners, and AI governance leads who need a first evidence file.

Do we need a complete AI inventory before starting?

The planner can start before a complete inventory exists, but the first week should create or update the inventory. Without a system register, a deployer cannot reliably map owners, vendors, roles, risk routes, Article 50 triggers, log sources, FRIA indicators, or open evidence gaps.

Does the planner prove EU AI Act compliance?

No. This planner does not prove compliance, certify readiness, or replace legal advice. It creates a practical evidence baseline that can be reviewed by legal, privacy, procurement, security, risk, or sector specialists before final decisions are made.

Which records should be created first?

Start with the AI system inventory, role classification, intended purpose, owner, vendor, risk route, instructions for use, oversight owner, log source, and known Article 50 triggers. Those records control the rest of the evidence work.

Can vendor documentation replace deployer evidence?

The planner treats vendor documentation as a necessary input, not a replacement for deployer evidence. The deployer still needs records showing how the system is used, who oversees it, what logs are retained, what notices were issued, and which gaps remain open.

When should Article 50 transparency be reviewed?

Article 50 transparency review belongs in Week 3, after the system inventory and role/risk route are clear. The team should record whether the system interacts directly with people, generates synthetic audio, image, video, or text content, generates or manipulates deepfakes, is used for emotion recognition or biometric categorisation, or generates or manipulates text published to inform the public on matters of public interest.

When should specialist counsel review the pack?

Legal, privacy, employment, procurement, sector, or regulatory counsel should review the pack before relying on it for a high-risk system, workplace deployment, biometric use, public-sector use, FRIA/DPIA decision, serious incident process, or regulator-facing response.

Source and review note

This page was reviewed against the AI Act Service Desk Article 113 page, the European Commission AI Act page, and the AI Act Service Desk pages for Article 26, Article 27, Article 50, and Article 73. It is operational guidance for evidence planning. It is not legal advice, and it does not guarantee compliance.

- Article 113: entry into force and application

- European Commission: AI Act

- Article 26: obligations of deployers of high-risk AI systems

- Article 27: fundamental rights impact assessment

- Article 50: transparency obligations

- Article 73: reporting of serious incidents

Disclaimer: Validate legal classification, GDPR/DPIA implications, FRIA applicability, employment-law duties, procurement reliance, sector-specific obligations, and national-law questions with qualified counsel or a competent regulatory adviser.

About the author: Abhishek G Sharma is the founder of Move78 International Limited and holds ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, and CAIRO certifications.