Quick answer

Deployers should use the EU AI Act August 2026 deadline to complete four practical jobs: classify deployed AI systems, confirm whether Article 26 duties apply, check Article 50 transparency triggers, and retain evidence. Do not wait for guidance to start the AI inventory, oversight log, vendor file, disclosure register, and decision record.

The August 2026 failure mode will not be “we had no policy.” It will be “we cannot open the file for one real AI system and show who owned, reviewed, monitored, and escalated it.”

What changes for deployers on 2 August 2026?

Under Article 113, Regulation (EU) 2024/1689 generally applies from 2 August 2026. Chapters I and II have applied since 2 February 2025 and selected governance and general-purpose AI provisions since 2 August 2025, while Article 6(1) and the corresponding obligations apply from 2 August 2027. For deployers, the August 2026 readiness problem is mainly Annex III high-risk use, Article 26 evidence, and Article 50 transparency.

Current-law baseline vs proposal-stage uncertainty

The Digital Omnibus proposal may adjust the implementation path for some high-risk obligations. Treat it as a change-tracker item, not as adopted law, unless the final amendment has been adopted and published. Until then, keep current-law dates in your controls, policies, and board reporting.

| Date | Current-law item | Deployer action |
|---|---|---|
| 2 February 2025 | Chapters I and II apply, including AI literacy and prohibited AI practices. | Keep AI literacy evidence and prohibited-practice screening live now. |
| 2 August 2025 | Selected governance, GPAI, penalties, and Article 78 provisions apply. | Track GPAI/vendor dependencies and update procurement evidence. |
| 2 August 2026 | General application date for most AI Act rules. | Complete deployer inventory, classification, Article 26 mapping, Article 50 review, and evidence ownership. |
| 2 August 2027 | Article 6(1) and corresponding obligations apply. | Separate Annex I/product-safety systems from Annex III deployer systems. |
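
For teams that keep these dates in a tool or dashboard, the table above can be held as data rather than hard-coded in several places. The following is a minimal sketch, not a definitive implementation: the names `MILESTONES`, `milestones_in_force`, and `days_until_next` are illustrative assumptions, and the dates are simply the current-law rows from the table.

```python
from datetime import date
from typing import List, Optional

# Dates and labels copied from the current-law table above (Article 113).
MILESTONES = [
    (date(2025, 2, 2), "Chapters I and II apply"),
    (date(2025, 8, 2), "Selected governance, GPAI, penalties, and Article 78 provisions apply"),
    (date(2026, 8, 2), "General application date for most AI Act rules"),
    (date(2027, 8, 2), "Article 6(1) and corresponding obligations apply"),
]


def milestones_in_force(as_of: date) -> List[str]:
    """Return labels of milestones whose date is on or before `as_of`."""
    return [label for start, label in MILESTONES if start <= as_of]


def days_until_next(as_of: date) -> Optional[int]:
    """Return days until the next milestone, or None if none remain."""
    upcoming = [start for start, _ in MILESTONES if start > as_of]
    return (min(upcoming) - as_of).days if upcoming else None
```

Keeping one list as the single source for dates makes it easier to leave current-law dates untouched while proposal-stage changes are tracked elsewhere.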

Which AI systems should deployers track first?

Start with AI systems that affect people, access to services, employment, education, credit, insurance, biometric data, or public-facing synthetic content. The practical question is not “do we use AI?” It is “which deployed systems can create legal, operational, privacy, or fundamental-rights exposure if nobody can show the evidence trail?”

| System type | Why it goes first | First evidence artifact |
|---|---|---|
| Recruitment, screening, promotion, task allocation, performance monitoring | Employment and worker-management systems are listed in Annex III. | AI inventory entry, HR owner, vendor file, worker-notice check, human oversight log. |
| Creditworthiness, credit scoring, life or health insurance pricing | Essential private service and insurance use cases are listed in Annex III. | Decision-flow map, model/vendor evidence, DPIA/FRIA routing, complaint/escalation path. |
| Education access, assessment, proctoring, student monitoring | Education and vocational training use cases are listed in Annex III. | Purpose statement, learner-impact review, oversight assignment, testing and appeal record. |
| Biometric categorisation, emotion recognition, remote biometric identification | Biometrics are listed in Annex III and may also trigger Article 50 notices. | Legal basis review, Article 50 notice check, DPIA/FRIA routing, approval record. |
| Public-facing AI-generated text, image, audio, or video | Article 50 may require disclosure or provider-side marking depending on the use case. | Disclosure decision register, editorial review note, label placement record. |
| AI agents or workflow automation that act on business systems | Agent workflows can hide provider/deployer boundaries and create monitoring gaps. | Role map, action boundary, escalation path, logs, vendor instruction file. |

Free tool path: start the AI system inventory, then check whether the system maps to Annex III high-risk use cases.
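
An inventory entry can start as a very small record. The sketch below is one possible shape, assuming the fields this page names for the register (owner, vendor, intended purpose, user groups, data categories, Annex III triage, evidence location). The class name `InventoryEntry` and the helper `missing_fields` are hypothetical and not part of any official template.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class InventoryEntry:
    """One deployed AI system in the register (illustrative field set)."""
    system_name: str
    business_owner: str = ""
    vendor: str = ""
    intended_purpose: str = ""
    user_groups: List[str] = field(default_factory=list)
    data_categories: List[str] = field(default_factory=list)
    annex_iii_triage: str = ""   # empty means "not yet triaged"
    evidence_location: str = ""  # where the evidence file lives


def missing_fields(entry: InventoryEntry) -> List[str]:
    """List the fields that are still empty, in declaration order."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name)]


entry = InventoryEntry(system_name="cv-screener", business_owner="HR lead")
print(missing_fields(entry))
```

Listing the empty fields per system gives a first gap list without any classification logic, which is usually enough for the first week of inventory work.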

Article 26 deployer readiness checklist

Article 26 is where deployer readiness becomes evidence work. A policy is not enough. The deployer needs records showing that the system is used according to instructions, human oversight is assigned to competent people, input data is controlled where relevant, operation is monitored, logs are kept, and incidents or risks can be escalated.

| Control question | Evidence to retain | Owner |
|---|---|---|
| Are provider instructions available and followed? | Provider instructions, configuration record, accepted-use note, deviation approvals. | System owner + legal/procurement |
| Has human oversight been assigned to a competent person? | Named oversight owner, training/competence note, escalation authority, review cadence. | Business owner + compliance |
| Does the deployer control input data? | Input-data relevance and representativeness check, data source list, exception log. | Data owner + system owner |
| Is operation monitored against instructions? | Monitoring log, exception review, vendor update review, risk notes. | System owner |
| Are logs kept where under deployer control? | Log-retention setting, access control, retention period, privacy review. | IT/security + data protection |
| Is incident and risk escalation defined? | Serious incident register, provider notification path, authority-escalation decision note. | Risk/compliance + legal |
| Is the system used at work? | Worker representative notice, affected worker notice, HR sign-off. | HR + legal |
| Does the system assist decisions about natural persons? | Affected-person notice review, DPIA/FRIA routing, appeal or review process. | Legal + business owner |

Free tool path: run the free Deployer Obligation Self-Assessment, then download the EU AI Act deployer obligations checklist.
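
The checklist above can also be run as a simple gap check per system. This is a hedged sketch only: `REQUIRED_EVIDENCE` holds a shortened, illustrative subset of the artifacts in the table, the control keys are made-up identifiers, and the result says which files are missing, not whether the deployer is compliant.

```python
from typing import Dict, List, Set

# Illustrative subset of the checklist table; extend it to match your own file.
REQUIRED_EVIDENCE: Dict[str, List[str]] = {
    "provider_instructions": ["provider instructions", "configuration record"],
    "human_oversight": ["named oversight owner", "training/competence note"],
    "monitoring": ["monitoring log"],
    "logs": ["log-retention setting"],
    "incident_escalation": ["serious incident register", "provider notification path"],
}


def evidence_gaps(held: Set[str]) -> Dict[str, List[str]]:
    """Return, per control, the artifacts that are not in `held`."""
    gaps: Dict[str, List[str]] = {}
    for control, artifacts in REQUIRED_EVIDENCE.items():
        missing = [a for a in artifacts if a not in held]
        if missing:
            gaps[control] = missing
    return gaps


held_for_system = {"provider instructions", "configuration record", "monitoring log"}
for control, missing in evidence_gaps(held_for_system).items():
    print(control, "->", missing)
```

Running this for one real system is a quick way to test whether the file can actually be opened and shown, which is the failure mode this page warns about.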

Article 50 transparency trigger table

Article 50 is not a generic “AI was used” slogan. It is a trigger-based disclosure obligation. For deployers, the highest-risk misses are emotion recognition, biometric categorisation, deepfake media, and AI-generated public-interest text. The evidence question is simple: when the trigger fires, can you show the disclosure decision, label wording, placement, and owner?

| Trigger | Likely deployer question | Evidence artifact |
|---|---|---|
| Emotion recognition or biometric categorisation | Have exposed people been informed of the system’s operation? | Notice wording, placement record, privacy review, DPIA route. |
| Deepfake image, audio, or video | Does the content need disclosure that it was artificially generated or manipulated? | Disclosure label, creative/editorial exception note if relevant. |
| AI-generated text published to inform the public on matters of public interest | Is disclosure needed, or was there human review and editorial responsibility? | Editorial review note, publication owner, disclosure decision register. |
| Direct interaction with an AI system | Is it obvious to a reasonably well-informed person that they are interacting with AI? | User-interface notice, chatbot/system wording, first-interaction screenshot. |
| Synthetic outputs generated by provider-side AI systems | Are machine-readable marking and detection obligations relevant upstream? | Vendor/provider evidence request, technical marking statement. |

Free tool path: review Article 50 AI content marking triggers, then read the Article 50 transparency guide.
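
Because Article 50 is trigger-based, a disclosure register can begin as a routing function that turns facts about a use case into review items. The sketch below assumes a hypothetical `UseCase` record with one flag per trigger in the table above. It routes work to a reviewer and does not decide whether a disclosure is legally required or whether an exception applies.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class UseCase:
    """Facts about one use case; flag names are illustrative assumptions."""
    name: str
    direct_interaction: bool = False
    emotion_or_biometric_categorisation: bool = False
    deepfake_media: bool = False
    public_interest_text: bool = False
    human_editorial_review: bool = False


def article_50_review_items(uc: UseCase) -> List[str]:
    """Return review items to open in the disclosure decision register."""
    items: List[str] = []
    if uc.direct_interaction:
        items.append("Check user-interface notice for direct AI interaction")
    if uc.emotion_or_biometric_categorisation:
        items.append("Check notice to exposed people")
    if uc.deepfake_media:
        items.append("Check disclosure label for generated or manipulated media")
    if uc.public_interest_text:
        if uc.human_editorial_review:
            items.append("Record editorial review note and publication owner")
        else:
            items.append("Check disclosure for AI-generated public-interest text")
    return items


newsletter = UseCase(name="policy newsletter", public_interest_text=True)
print(article_50_review_items(newsletter))
```

An empty result only means none of the listed flags was set for that use case. It is still worth recording that the check was run, by whom, and on what date.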

Annex III high-risk triage table

Annex III is the triage layer for many deployers. The goal is not to label everything high-risk. The goal is to record a defensible classification decision, route high-risk candidates to evidence owners, and keep non-high-risk decisions versioned with the facts used at the time.

| Annex III area | Examples to check | First action |
|---|---|---|
| Biometrics | Remote biometric identification, sensitive biometric categorisation, emotion recognition. | Pause deployment until legal/privacy review confirms lawful basis and Article 50 notice position. |
| Critical infrastructure | Safety components in digital infrastructure, traffic, water, gas, heating, electricity. | Map safety role, operator responsibility, and incident escalation. |
| Education and vocational training | Admission, assessment, learning-outcome evaluation, proctoring, student monitoring. | Record learner impact, oversight path, and challenge route. |
| Employment and worker management | Recruitment, candidate filtering, promotion, termination, task allocation, performance monitoring. | Assign HR/legal evidence owner and worker-notice review. |
| Essential services and benefits | Public benefits, creditworthiness, life/health insurance, emergency triage or dispatch. | Route to DPIA/FRIA, affected-person notice, and appeal path. |
| Law enforcement | Risk assessment, evidence reliability, profiling, polygraphs or similar tools. | Specialist legal review required. Do not use generic business templates. |
| Migration, asylum, border control | Risk assessment, application examination, identity detection or recognition. | Specialist legal review required. Maintain strict authority and purpose controls. |
| Justice and democratic processes | Judicial assistance, election or referendum influence. | Escalate to senior legal and governance review before use. |
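
Keeping classification decisions versioned with the facts used at the time can be as simple as an append-only log. The following is a minimal sketch under stated assumptions: `ClassificationDecision` and `DecisionLog` are hypothetical names, the log lives in memory only, and a real register would need persistence and access control.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class ClassificationDecision:
    """One dated triage decision; frozen so past entries are not edited."""
    system_name: str
    annex_iii_area: Optional[str]  # None = assessed as not matching an area
    facts_relied_on: str
    decided_on: date
    decided_by: str


class DecisionLog:
    """Append-only history of classification decisions."""

    def __init__(self) -> None:
        self._history: List[ClassificationDecision] = []

    def record(self, decision: ClassificationDecision) -> None:
        self._history.append(decision)

    def history(self, system_name: str) -> List[ClassificationDecision]:
        return [d for d in self._history if d.system_name == system_name]

    def current(self, system_name: str) -> Optional[ClassificationDecision]:
        """Return the most recently recorded decision, or None."""
        matches = self.history(system_name)
        return matches[-1] if matches else None
```

A reclassification is recorded as a new entry rather than an overwrite, so a non-high-risk decision made on earlier facts stays visible next to the later one.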

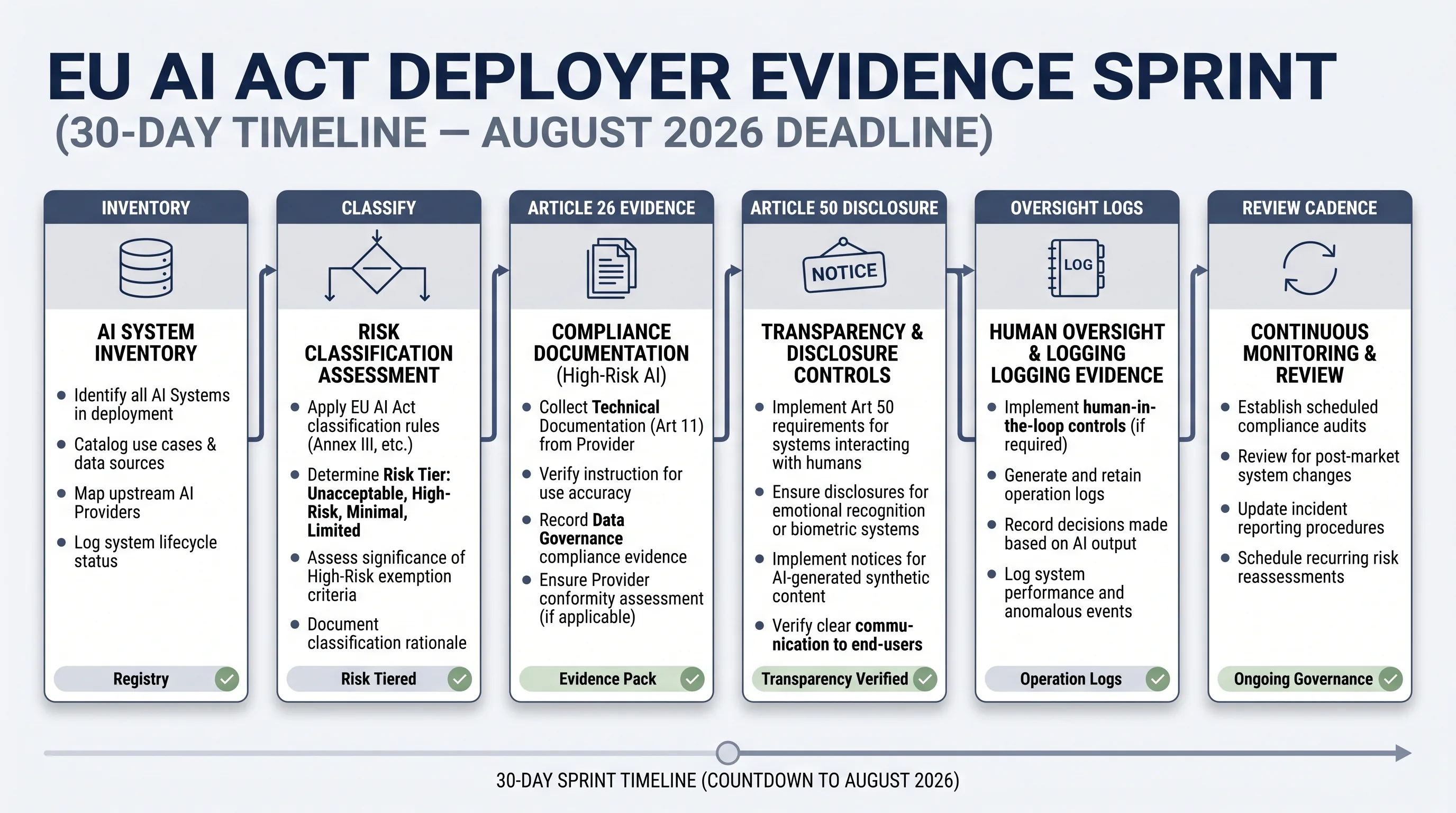

30-day deployer evidence sprint

A 30-day sprint will not make a weak AI governance programme complete. It can create the minimum operating file: inventory, classification, owner mapping, disclosure triggers, oversight logs, and a gap list that senior management can act on. That is a better starting point than waiting for perfect guidance.

| Week | Workstream | Output |
|---|---|---|
| Week 1 | Inventory and ownership | List AI systems, vendors, business owners, user groups, data categories, and evidence locations. |
| Week 2 | Classification and Article 26 scope | Annex III triage, deployer/provider role notes, Article 26 evidence owner assigned. |
| Week 3 | Article 50 and vendor evidence | Disclosure trigger register, label placement decision, vendor instruction/evidence request list. |
| Week 4 | Oversight, logs, and management sign-off | Oversight log, log-retention review, unresolved gaps, decision memo, next review date. |

Free tool path: use the Evidence Starter Library to build the folder structure before moving to paid implementation assets.

What to do if guidance or timelines change

Separate legal baseline, proposal tracking, and internal readiness work. The mistake is to mix them in one spreadsheet and let proposal-stage uncertainty stop practical evidence work. Guidance can change. The inventory, owner map, vendor file, disclosure register, and oversight log are still useful.

- Keep a current-law row. Record the rule and date as currently enacted.

- Add a proposal-stage row. Track Digital Omnibus or guidance changes separately.

- Version your decisions. Record which source and date the team relied on.

- Do not rewrite tool logic too early. Update calculators, notices, and board packs only after final legal change.

- Reconcile monthly. Assign a person to compare confirmed dates with proposal-stage timeline changes.

Reference path: compare confirmed dates with proposal-stage timeline changes.
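
The separation of current-law and proposal-stage rows can be sketched as a small tracker in which controls only ever read the current-law date unless a change is marked as adopted and published. The names `TrackedRule` and `date_for_controls` are illustrative assumptions. No proposal date is filled in here because none is confirmed by this page.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class TrackedRule:
    """One rule with its current-law date and an optional proposal-stage date."""
    rule: str
    current_law_date: date
    proposed_date: Optional[date] = None          # tracked, not relied on
    proposal_adopted_and_published: bool = False  # set only after final legal change
    source_note: str = ""                         # which source and date the team relied on


def date_for_controls(row: TrackedRule) -> date:
    """Return the date that policies, tools, and board packs should use."""
    if row.proposed_date is not None and row.proposal_adopted_and_published:
        return row.proposed_date
    return row.current_law_date


general_application = TrackedRule(
    rule="General application date for most AI Act rules",
    current_law_date=date(2026, 8, 2),
    source_note="Article 113, reviewed 2026-04-30",
)
print(date_for_controls(general_application))
```

The monthly reconciliation then becomes a review of rows where `proposed_date` is set but the adopted flag is still false.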

Common mistakes

Treating vendor paperwork as deployer evidence

Vendor documents help. They do not prove your team assigned oversight, checked input data, issued notices, or retained logs.

Running Article 50 review only in marketing

Article 50 may touch product UX, customer support, content operations, HR, and biometric workflows.

Forgetting workplace notification

If a high-risk AI system is used at work, Article 26 points to worker and worker-representative information duties.

Confusing DPIA and FRIA

A DPIA can be part of the evidence file. It does not automatically answer every fundamental-rights question.

No evidence owner

If nobody owns the file, the evidence will fragment across procurement, product, legal, HR, and IT.

Freezing because guidance is unsettled

Unsettled guidance is not a reason to ignore inventory, classification, notices, logs, and oversight records.

Next step: run the free deployer assessment

Use the self-assessment first. If it exposes repeated evidence gaps, missing owners, unclear Article 50 triggers, or weak board reporting, E1/E2 can become the implementation layer for templates, control mapping, and board-ready evidence packs. The free tracker comes first.

FAQ: EU AI Act August 2026 deadline for deployers

Deployers should build an AI inventory, classify systems against Annex III, confirm whether Article 26 duties apply, check Article 50 transparency triggers, assign evidence owners, and retain records showing oversight, input-data review, monitoring, logs, vendor instructions, and disclosure decisions.

Not all high-risk obligations follow the same date. Under Article 113, the AI Act generally applies from 2 August 2026, while Article 6(1) and corresponding obligations apply from 2 August 2027. Annex III high-risk rules are part of the August 2026 readiness concern. Any Digital Omnibus change should be tracked separately until adopted.

Do not treat the Digital Omnibus as adopted law. It remains proposal-stage unless it has been adopted and published in the Official Journal. The practical control is to keep the current-law baseline and a separate change tracker. Do not rewrite compliance deadlines inside policies or tools until the law changes.

Start with an AI system inventory. Without a register of deployed AI systems, owners, vendors, intended purposes, user groups, data categories, Annex III triggers, and evidence locations, the team cannot reliably scope Article 26, Article 50, FRIA, DPIA, or vendor obligations.

At minimum, retain the provider instructions, assigned human oversight owner, evidence of competence and training, input-data relevance checks where the deployer controls input data, monitoring records, risk or incident escalation notes, logs under deployer control, workplace notices where relevant, and authority-cooperation records where applicable.

Article 50 matters when people interact with AI systems, when deployers use emotion recognition or biometric categorisation, when deepfake image, audio, or video content is generated or manipulated, or when AI-generated text is published to inform the public on matters of public interest, subject to the article’s exceptions.

A DPIA and a FRIA can sometimes be run together, but neither replaces the other. A DPIA is a data protection assessment under GDPR. A FRIA is an AI Act fundamental rights impact assessment for certain high-risk deployments. The workflows can be coordinated, but the evidence questions are not identical. Treat combined assessment as a practical workflow, not as proof that one replaces the other.

EU AI Compass tools help teams structure classification, evidence planning, and readiness discussions. They do not provide legal advice, do not guarantee compliance, and do not replace review by qualified legal, privacy, regulatory, or sector-specific counsel.

Source and review note

This page was last reviewed against official sources on 2026-04-30. It is operational guidance for deployer readiness planning. It is not legal advice, does not guarantee compliance, and does not replace review by qualified legal, privacy, regulatory, or sector-specific counsel.

- Regulation (EU) 2024/1689 / EUR-Lex official text

- European Commission AI Act page

- AI Act Service Desk Article 113

- AI Act Service Desk FAQ

- AI Act Service Desk Article 26

- AI Act Service Desk Article 50

- AI Act Service Desk Article 6

- AI Act Service Desk Annex III

- European Commission Digital Package FAQ

- European Commission Digital Omnibus on AI Regulation Proposal

About the author: Abhishek G Sharma is the founder of Move78 International Limited and holds ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, and CAIRO certifications.