Scope reminder

Confirmed law: Annex III defines the main operational use cases that are treated as high-risk AI systems under the current AI Act framework.

Proposal watch: A live Omnibus proposal could change the application timeline if adopted. It has not changed the classification logic of Annex III today.

Removed: Weak percentage claims about “how much AI is high-risk” were stripped out because the evidence did not support them.

This page should do one job well: help readers decide whether a concrete use case falls into an Annex III area and what that classification means. It should not pad itself with unsupported market-share statistics.

The eight Annex III areas

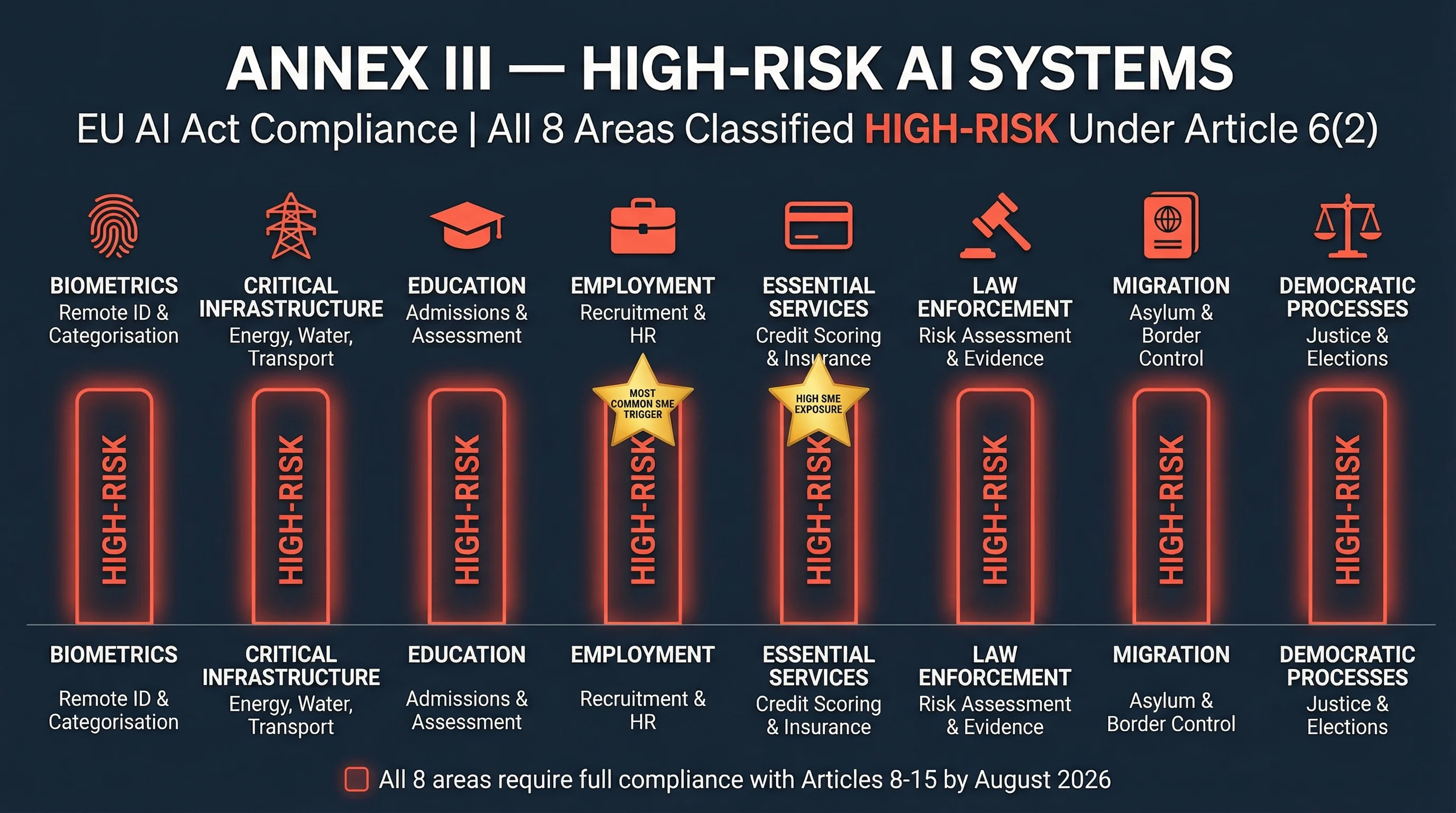

The AI Act identifies eight core operational areas where AI systems may be classified as high-risk under Annex III. These areas are:

- Biometrics

- Critical infrastructure

- Education and vocational training

- Employment, workers' management, and access to self-employment

- Access to essential private and public services

- Law enforcement

- Migration, asylum, and border control management

- Administration of justice and democratic processes

The point is not memorisation. The point is operational mapping. If your system affects access, ranking, eligibility, risk, or monitoring in one of these areas, you likely need deeper classification analysis rather than casual reassurance.
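The mapping step can be sketched as a simple first-pass triage. The Python below is a minimal illustration under stated assumptions, not a legal test: `AnnexIIIArea` uses short labels for the eight areas listed above, while `TRIGGER_EFFECTS`, `UseCase`, and `needs_deeper_analysis` are hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional, Set


class AnnexIIIArea(Enum):
    """Short labels for the eight Annex III areas named in this article."""
    BIOMETRICS = "biometrics"
    CRITICAL_INFRASTRUCTURE = "critical infrastructure"
    EDUCATION = "education and vocational training"
    EMPLOYMENT = "employment and worker management"
    ESSENTIAL_SERVICES = "essential private and public services"
    LAW_ENFORCEMENT = "law enforcement"
    MIGRATION = "migration, asylum, and border control"
    JUSTICE = "justice and democratic processes"


# Effects the article names as signals for deeper analysis (illustrative labels).
TRIGGER_EFFECTS = frozenset({"access", "ranking", "eligibility", "risk", "monitoring"})


@dataclass
class UseCase:
    name: str
    area: Optional[AnnexIIIArea]  # None when no Annex III area applies
    effects: Set[str] = field(default_factory=set)


def needs_deeper_analysis(use_case: UseCase) -> bool:
    """Flag a use case as a likely high-risk candidate.

    A False result only means this heuristic did not flag the system.
    It is not a determination that the system is outside Annex III.
    """
    if use_case.area is None:
        return False
    return bool(use_case.effects & TRIGGER_EFFECTS)


cv_screening = UseCase("CV screening", AnnexIIIArea.EMPLOYMENT, {"ranking"})
faq_bot = UseCase("Internal FAQ chatbot", None, {"access"})
print(needs_deeper_analysis(cv_screening), needs_deeper_analysis(faq_bot))
```

A flagged system is a candidate for documented classification analysis, not a confirmed high-risk system.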

Where mid-market firms usually get caught

- Employment: screening, ranking, performance monitoring, task allocation, or worker analytics.

- Essential services: credit scoring, lending, pricing, eligibility, and insurance-related decision support.

- Education: admissions, progression assessment, or AI-based proctoring.

- Biometrics: identification, categorisation, or emotion-recognition use cases outside the prohibited bucket.

Do not forget the Article 6(3) narrow carve-out

An Annex III system can fall outside the high-risk category under Article 6(3) if it does not pose a significant risk of harm to health, safety, or fundamental rights and meets one of the conditions set out in that provision. A system that profiles natural persons cannot rely on the carve-out. That is not a casual escape hatch. It requires a documented justification and should be handled as an exception analysis, not as a default assumption.
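The exception analysis can be sketched as a small decision record. This is a hedged illustration only: the four condition flags paraphrase the Article 6(3) conditions, the profiling flag reflects the rule that an Annex III system performing profiling of natural persons remains high-risk, and the requirement for a non-empty justification is an assumption of this sketch that stands in for the documented assessment. `Article63Assessment` and `carve_out_may_apply` are hypothetical names, and the legal text should be checked for exact wording.

```python
from dataclasses import dataclass


@dataclass
class Article63Assessment:
    # Paraphrased Article 6(3) conditions; consult the regulation for exact wording.
    narrow_procedural_task: bool = False
    improves_completed_human_activity: bool = False
    detects_patterns_without_replacing_human_review: bool = False
    preparatory_task_only: bool = False
    # Profiling of natural persons blocks the carve-out regardless of the flags above.
    performs_profiling: bool = False
    # Documented reasoning; this sketch treats an empty justification as a failed analysis.
    justification: str = ""


def carve_out_may_apply(a: Article63Assessment) -> bool:
    """Return True only when the exception is at least arguable and documented."""
    if a.performs_profiling:
        return False
    if not a.justification.strip():
        return False
    return any([
        a.narrow_procedural_task,
        a.improves_completed_human_activity,
        a.detects_patterns_without_replacing_human_review,
        a.preparatory_task_only,
    ])


doc_sorter = Article63Assessment(
    narrow_procedural_task=True,
    justification="Sorts incoming documents by type; no influence on decisions.",
)
print(carve_out_may_apply(doc_sorter))
```

The default outcome is False by design, which matches the idea that the carve-out is an exception to be argued, not an assumption to start from.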

What high-risk classification triggers

Once a system is treated as high-risk, the organisation needs to implement the requirements in Articles 8 through 15. In practice that means risk management, data governance, technical documentation, logging and record-keeping, information for deployers, human oversight, and accuracy, robustness, and cybersecurity controls that are defensible and evidenced.
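One way to keep those requirements evidenced is a per-system gap list. The sketch below is an assumption-laden illustration: the `REQUIREMENTS` mapping uses the article headings for Articles 9 through 15 (Article 8 is the general obligation to comply with them), while `open_gaps` and the shape of the `evidence` dictionary are hypothetical choices made here.

```python
from typing import Dict, List

# Articles 9-15 of the AI Act; Article 8 is the general duty to comply with them.
REQUIREMENTS: Dict[int, str] = {
    9: "Risk management system",
    10: "Data and data governance",
    11: "Technical documentation",
    12: "Record-keeping",
    13: "Transparency and provision of information to deployers",
    14: "Human oversight",
    15: "Accuracy, robustness and cybersecurity",
}


def open_gaps(evidence: Dict[int, List[str]]) -> List[str]:
    """List every requirement that has no evidence item recorded against it.

    `evidence` maps an article number to references such as document names
    or ticket IDs (a hypothetical format chosen for this sketch).
    """
    return [
        f"Article {number}: {title}"
        for number, title in sorted(REQUIREMENTS.items())
        if not evidence.get(number)
    ]


evidence = {9: ["risk register v2"], 12: []}
for gap in open_gaps(evidence):
    print(gap)
```

An empty evidence list counts as a gap here, so a placeholder entry does not pass as a control.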

Use this page correctly

Use this checklist to identify likely high-risk candidates. Then move those systems into a documented classification and control workflow. Do not use a blog post as your final legal determination.

For a portfolio-level first pass, use the 12-question Compliance Checker. For Article 6(3) scenarios, the local exemption framework is the better next step.

About the author: Abhishek G Sharma is the founder of Move78 International Limited. He holds ISO 42001 Lead Auditor, CISA, CISM, CRISC, and CEH certifications. He brings over 20 years of practitioner experience in cybersecurity, AI governance, and enterprise risk management.

Disclaimer: This analysis is for educational purposes only and does not constitute legal advice. Consult qualified counsel for binding compliance decisions. Last updated: March 2026.