What EU AI Act Article 50 requires in plain language

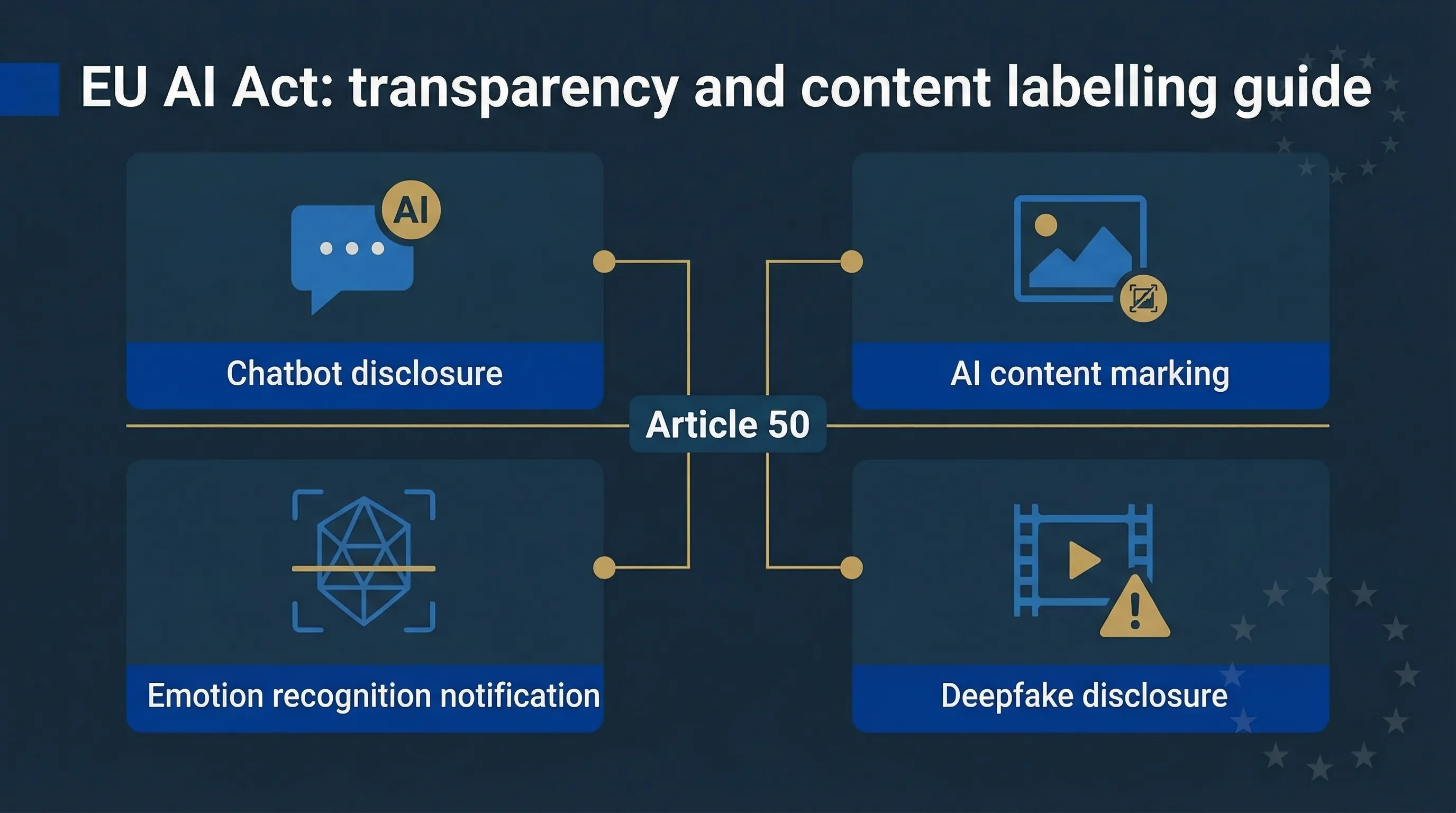

Article 50 is the EU AI Act's transparency layer. It applies to AI systems that aren't necessarily high-risk but still interact with people or produce content that could be mistaken for human-made. It sets out four distinct disclosure and labelling obligations, all of which apply from 2 August 2026.

1. Tell people when they're talking to AI

If your AI system interacts directly with a natural person — chatbots, voice assistants, AI companions, copilots — the provider must ensure the person is informed they're interacting with an AI system. Not buried in a terms page. At the point of interaction. The only exception is when it's "obvious from the circumstances and context of use" that the system is AI. That bar is higher than most product teams assume.

2. Mark AI-generated content in a machine-readable way

Providers of AI systems that generate synthetic audio, images, video, or text must ensure that outputs are marked as artificially generated or manipulated in a machine-readable format. This means metadata, watermarks, or cryptographic signatures — not just a visible label. The marking must be interoperable, detectable, and robust. GPAI model providers have an additional duty to support downstream content marking by their deployers.

3. Inform people exposed to emotion recognition or biometric categorisation

If your system performs emotion recognition or biometric categorisation, the people being analysed must be informed. This intersects with Article 5 prohibitions — emotion recognition in workplaces and schools is banned outright (with narrow exceptions). But where it's permitted — retail analytics, security screening, healthcare — the transparency duty applies.

4. Disclose deepfakes and AI-generated public-interest text

Deployers who publish AI-generated or AI-manipulated content that resembles real persons, places, or events must disclose it as artificially generated or manipulated. This applies particularly to deepfakes and to AI-generated text on matters of public interest (political content, news, policy). Exceptions exist for artistic, satirical, and fictional works — but those exceptions don't apply when the content depicts real events or real people in a way that could mislead.

What Article 50 does NOT do

It doesn't reclassify your system as high-risk. It doesn't replace GDPR transparency duties — it adds to them. And it doesn't cover every use of AI. If your system simply processes data internally without interacting with people or producing content, Article 50 probably doesn't apply. But the moment your AI talks to someone or creates something someone else will see, you're in scope.

Which obligations apply to providers and which to deployers?

Article 50 splits responsibility. Providers (who build the system) must design transparency features into the product. Deployers (who operate it) must actually use those features and disclose AI involvement to the people they serve. If you're both — you built it and you run it — you carry both sets of duties.

| Scenario | Provider obligation | Deployer obligation |
|---|---|---|
| Chatbot / AI assistant | Design the system so it can inform users they're interacting with AI (Art. 50(1)) | Configure and display the AI interaction notice to end users |
| AI-generated images, audio, video | Implement machine-readable marking (metadata, watermarks) in outputs (Art. 50(2)) | Preserve markings; add visible labels when publishing content |
| Emotion recognition / biometric categorisation | Design system to support notification of affected persons | Inform individuals being analysed before the system processes them (Art. 50(3)) |
| Deepfakes / AI public-interest text | Support content marking in outputs; provide disclosure capabilities | Disclose AI generation at point of publication; label content visibly (Art. 50(4)) |

Table 1: Article 50 obligation split between providers and deployers. For the full overview of deployer obligations, see our Complete EU AI Act Compliance Guide.

How to implement chatbot and AI assistant disclosure

This is the obligation most product teams will encounter first. If your product includes a chatbot, virtual assistant, copilot, or any conversational AI that interacts with users, you need an AI disclosure notice. Here's how to do it without destroying UX.

Design pattern: persistent + first-message

The most robust approach is a two-layer disclosure. First, a persistent visual indicator: a small badge or icon near the chat interface that reads "AI Assistant" or "Powered by AI." Second, a first-message notice: the chatbot's opening message explicitly states it's an AI system. Something like: "I'm an AI assistant. I can help with [topic]. For complex issues, I'll connect you with a human agent." This pattern fits Article 50(5), which requires the information to be given in a clear and distinguishable manner, at the latest at the time of the first interaction or exposure.
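The two-layer pattern can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `ChatSession` class, its field names, and the constant names are all hypothetical, and the notice wording is the example from the paragraph above.

```python
from dataclasses import dataclass, field

# Hypothetical wording; adapt to your product and have counsel review it.
AI_BADGE_TEXT = "AI Assistant"
AI_FIRST_MESSAGE = (
    "I'm an AI assistant. I can help with {topic}. "
    "For complex issues, I'll connect you with a human agent."
)


@dataclass
class ChatSession:
    """Minimal chat session that always opens with an AI disclosure."""

    topic: str
    badge_text: str = AI_BADGE_TEXT  # layer 1: persistent indicator for the UI
    messages: list[dict] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Layer 2: the disclosure is the first message, before any user input.
        self.messages.append(
            {
                "role": "assistant",
                "disclosure": True,
                "text": AI_FIRST_MESSAGE.format(topic=self.topic),
            }
        )

    def add_message(self, role: str, text: str) -> None:
        self.messages.append({"role": role, "disclosure": False, "text": text})


session = ChatSession(topic="billing questions")
session.add_message("user", "Why was I charged twice?")
print(session.badge_text)
print(session.messages[0]["text"])
```

Putting the notice in the constructor rather than in the UI layer means no code path can start a conversation without it, which is one way to keep the disclosure from being skipped by accident.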

Voice assistants

For voice-based systems, the disclosure must be audible. An opening statement at the start of each interaction: "You're speaking with an AI assistant." Don't bury it after 30 seconds of conversation. Front-load it.

The "obvious AI" exception

Article 50(1) exempts cases where AI use is "obvious from the circumstances and context of use." A robotic character in a video game might qualify. A customer service chatbot that uses natural language and human-like responses doesn't, even if it has a bot icon. The exception is narrow, and regulators haven't published detailed guidance on its boundaries yet. My recommendation: disclose anyway. The cost of a small notice is negligible. The cost of getting the "obvious" judgment wrong is a fine of up to €15 million or 3% of worldwide annual turnover, whichever is higher (Article 99(4)).

Marking AI-generated images, video, audio and text

Article 50(2) targets the output side of generative AI. Providers of systems that generate synthetic content must mark that content in a machine-readable format. This isn't about visible watermarks on images (though those help) — it's about embedded metadata that downstream systems and platforms can detect.

Technical marking options

Three main approaches are emerging. Metadata embedding (XMP, IPTC) — the most mature, already used in photography and publishing. Invisible watermarks — imperceptible to humans but detectable by specialised tools. Cryptographic provenance — systems like C2PA (Coalition for Content Provenance and Authenticity) that create a tamper-evident chain of custody for content. The regulation doesn't prescribe a specific standard, but the marking must be "effective, interoperable, robust, and reliable." Could your content pipeline implement any of these today? If the answer is no, that's the project to start.
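To make "machine-readable marking" concrete, here is a minimal sketch of a signed sidecar record that states content is AI-generated and binds that statement to the file's hash. It is not an implementation of C2PA, XMP, or IPTC; the field names, the `build_marking` and `verify_marking` functions, and the signing key are assumptions for illustration. A sidecar can also be separated from the file, so on its own it is unlikely to meet the robustness expectation.

```python
import hashlib
import hmac
import json

# Hypothetical signing key; in practice this would come from a key store.
SIGNING_KEY = b"replace-with-a-managed-secret"


def build_marking(content: bytes, generator: str) -> dict:
    """Return a machine-readable record stating the content is AI-generated."""
    record = {
        "ai_generated": True,
        "generator": generator,  # hypothetical field name
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return record


def verify_marking(content: bytes, record: dict) -> bool:
    """Check that the record matches the content and has not been altered."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(expected, record.get("signature", ""))
        and unsigned.get("sha256") == hashlib.sha256(content).hexdigest()
    )


image_bytes = b"example image bytes"
marking = build_marking(image_bytes, generator="example-image-model")
print(json.dumps(marking, indent=2))
print(verify_marking(image_bytes, marking))      # record matches the content
print(verify_marking(b"edited bytes", marking))  # content changed after marking
```

The same build-then-verify shape applies whichever standard you adopt: the marking is written at generation time, and every later pipeline stage can check that it is still present and still matches the content.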

Assistive edits: where's the line?

Not every AI touchpoint on a piece of content triggers Article 50. Spell-checking, grammar correction, auto-formatting — these are generally exempt because the human retains substantive control. The threshold isn't precisely defined in the law, so apply a practical test: did the AI change the meaning, structure, or substance of the content? If yes, that's generation or manipulation. If it only corrected typos, it isn't.

⚠️ GPAI model providers: additional duty

If you provide a general-purpose AI model (foundation model, LLM, image generator), you have an additional obligation under Article 50(2) to ensure your model supports downstream content marking. Your deployers can't label content you didn't design to be labelled. Build marking capabilities into your API outputs.

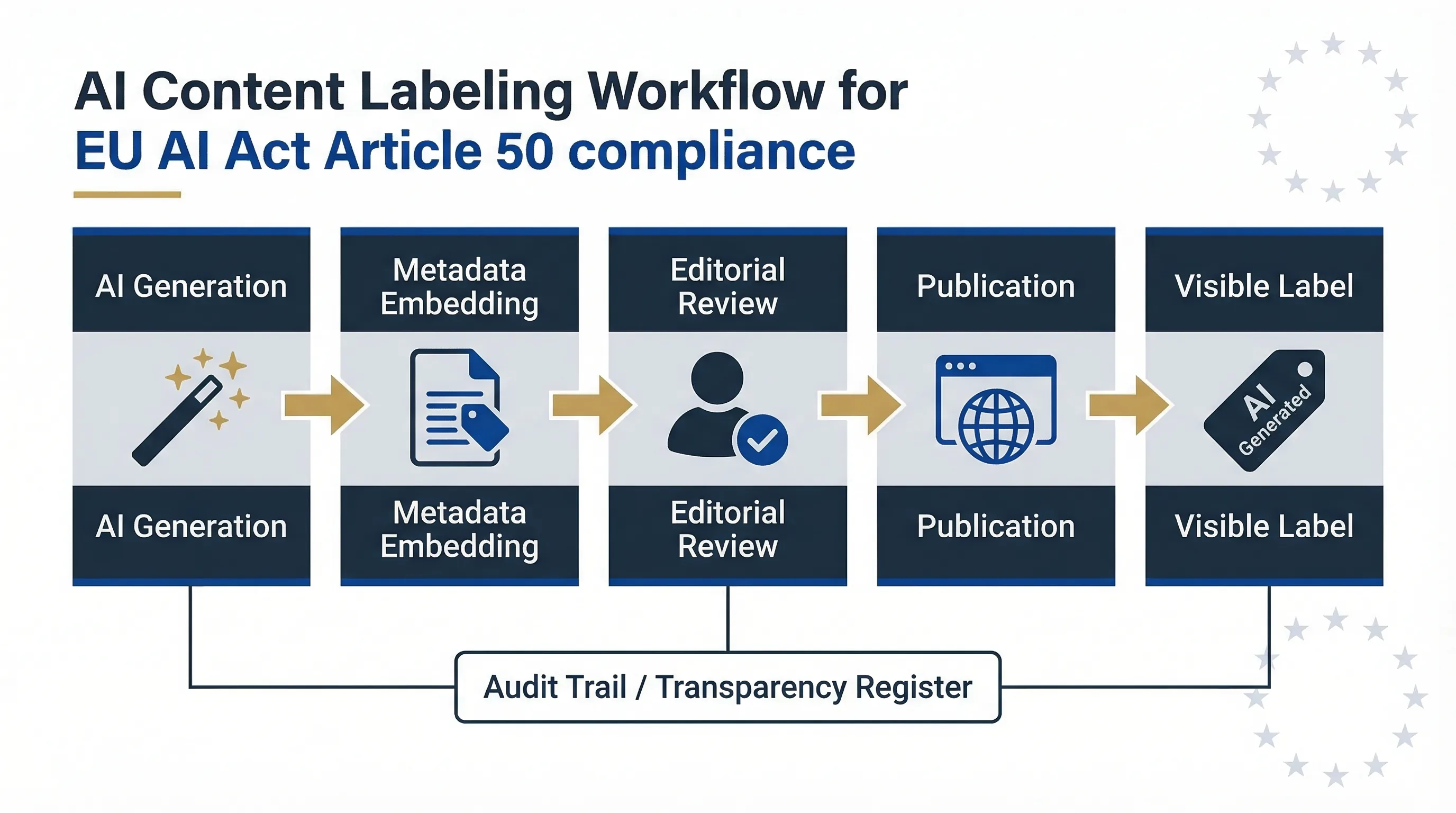

Figure: The content labelling workflow — from AI generation through metadata embedding, editorial review, and compliant publication.

Deepfakes and AI text on matters of public interest

The EU AI Act defines a deepfake as AI-generated or manipulated content — image, audio, or video — that resembles existing persons, objects, places, or events and would falsely appear authentic to a reasonable person. Article 50(4) requires deployers to disclose when they publish such content.

What triggers the deepfake disclosure duty

Three conditions must be met: the content depicts something that looks real, it was generated or substantially manipulated by AI, and it's published or distributed. The Grok deepfake crisis is a live example — AI-generated images of public figures that were indistinguishable from photographs, published on social media without any AI disclosure. That's exactly the scenario Article 50 targets.

AI-generated text on public interest topics

Article 50(4) also covers AI-generated text published to inform the public on matters of public interest. Political campaign content, AI-written news articles, policy analysis, corporate statements on public issues — all require disclosure if substantially AI-generated. The exception: content that has undergone human editorial review where a natural person holds editorial responsibility for the publication. That exception requires genuine editorial control, not a cursory glance before hitting publish.

Artistic and satirical exceptions

Works of art, satire, fiction, and creative expression using AI are exempt from the deepfake disclosure requirement — but only when the content doesn't depict real events or real people in a way likely to mislead. A clearly fictional AI-generated film is fine. An AI-generated video of a real politician making statements they never made is not, regardless of artistic framing.

Emotion recognition and biometric categorisation transparency

Article 50(3) requires that individuals exposed to emotion recognition or biometric categorisation systems are informed of the system's operation. But there's a critical sequencing issue most compliance teams miss: check Article 5 prohibitions before you worry about Article 50 transparency.

Article 5 bans emotion recognition in workplaces and educational settings outright, with narrow exceptions for medical or safety reasons. If your system falls under that ban, the transparency question is moot because the system itself can't operate. Use our EU AI Act Compliance Checker to determine whether your use case hits the Article 5 prohibition before planning Article 50 disclosures.

Where emotion recognition or biometric categorisation is permitted — retail analytics, security screening, healthcare monitoring — the transparency obligation means: inform individuals before the system processes them, explain what the system does, and provide information on how to object or opt out (which overlaps with GDPR data subject rights).
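The sequencing described above (Article 5 first, Article 50 second) can be written as a small triage helper. This is a rough sketch for emotion recognition only, not legal logic: the `Setting` enum, the function name, and the `exception_applies` flag are hypothetical, and whether an exception applies is a legal judgment the code simply takes as input.

```python
from enum import Enum


class Setting(Enum):
    WORKPLACE = "workplace"
    EDUCATION = "education"
    OTHER = "other"


def emotion_recognition_next_step(setting: Setting, exception_applies: bool) -> str:
    """Apply the Article 5 check before any Article 50(3) planning."""
    banned_setting = setting in (Setting.WORKPLACE, Setting.EDUCATION)
    if banned_setting and not exception_applies:
        # The system cannot operate, so there is nothing to disclose.
        return "stop: likely prohibited under Article 5"
    return "plan Article 50(3) notice: inform people before processing"


print(emotion_recognition_next_step(Setting.WORKPLACE, exception_applies=False))
print(emotion_recognition_next_step(Setting.OTHER, exception_applies=False))
```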

Design patterns and workflow changes for compliance

For product teams

Build disclosure into your design system, not as an afterthought. Create a standard AI disclosure component — badge, tooltip, or banner — that can be dropped into any interface where AI interacts with users. Define toggle defaults: AI disclosure should be on by default and require a documented justification to disable it. For generative features, route all AI outputs through a content marking pipeline before they reach the user. If you're using a third-party GPAI model, verify that the provider's API returns machine-readable provenance data you can preserve.
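One way to enforce the "on by default, documented justification to disable" rule is to make the configuration object reject an undocumented opt-out. The sketch below assumes a hypothetical `DisclosureSetting` class; the field names are illustrative and not taken from any framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DisclosureSetting:
    """Per-feature AI disclosure toggle that defaults to enabled."""

    feature: str
    enabled: bool = True
    justification: Optional[str] = None
    approved_by: Optional[str] = None

    def __post_init__(self) -> None:
        # Turning disclosure off is allowed only with a recorded reason and approver.
        if not self.enabled and not (self.justification and self.approved_by):
            raise ValueError(
                f"Disabling AI disclosure for '{self.feature}' requires "
                "a documented justification and an approver."
            )


default_setting = DisclosureSetting(feature="support-chat")
print(default_setting.enabled)

try:
    DisclosureSetting(feature="game-npc", enabled=False)
except ValueError as exc:
    print(exc)
```

Because the check runs at construction time, an undocumented opt-out fails in code review or CI rather than surfacing later in an audit.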

For editorial and marketing teams

Establish a clear policy: when can AI be used to draft, edit, or generate content? Who reviews it before publication? How is AI involvement disclosed — footnote, byline qualifier, metadata? The policy should distinguish between AI-assisted (human edits substantially) and AI-generated (AI produced the substance). For CMS workflows, add a mandatory field: "AI involvement level" — None / Assisted / Generated. This creates an audit trail that you can produce when a regulator or customer asks.
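The mandatory CMS field can be modelled as an enum plus a pre-publication check. This is a sketch under assumptions: `AIInvolvement`, `Article`, and `publish_blockers` are hypothetical names, and the rule that AI-touched content needs a named reviewer is one possible policy, not a requirement stated in the regulation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class AIInvolvement(Enum):
    NONE = "None"
    ASSISTED = "Assisted"
    GENERATED = "Generated"


@dataclass
class Article:
    title: str
    ai_involvement: Optional[AIInvolvement] = None  # mandatory before publishing
    reviewer: Optional[str] = None


def publish_blockers(article: Article) -> list[str]:
    """Return the reasons an article cannot be published yet (empty if none)."""
    problems = []
    if article.ai_involvement is None:
        problems.append("AI involvement level is not set")
    elif article.ai_involvement is not AIInvolvement.NONE and not article.reviewer:
        problems.append("AI-assisted or AI-generated content needs a named reviewer")
    return problems


draft = Article(title="Q3 policy update", ai_involvement=AIInvolvement.GENERATED)
print(publish_blockers(draft))
```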

Documentation for audits

Keep a transparency decisions register. For every AI system or generative tool your organisation uses, record: what disclosure mechanism is in place, when it was implemented, who approved it, and the rationale for any exception claims (e.g., "obvious AI" under Article 50(1)). This register becomes your compliance evidence if a national competent authority comes asking.
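A register like this does not need special tooling. As a minimal sketch, the fields listed above can be captured in a dataclass and exported to CSV for an auditor; the `RegisterEntry` class, its field names, and the sample values (including the date) are hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class RegisterEntry:
    system: str
    disclosure_mechanism: str
    implemented_on: date
    approved_by: str
    exception_rationale: str = ""  # e.g. an "obvious AI" claim under Article 50(1)


def register_to_csv(entries: list[RegisterEntry]) -> str:
    """Serialise the register to CSV text with one row per AI system."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(RegisterEntry)])
    writer.writeheader()
    for entry in entries:
        row = asdict(entry)
        row["implemented_on"] = entry.implemented_on.isoformat()
        writer.writerow(row)
    return buffer.getvalue()


register = [
    RegisterEntry(
        system="support-chat",
        disclosure_mechanism="badge and first-message notice",
        implemented_on=date(2026, 3, 1),  # illustrative date
        approved_by="Head of Product",
    )
]
print(register_to_csv(register))
```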

Use EU AI Compass tools to check your Article 50 readiness

We've built two free tools specifically for Article 50 compliance. They run in your browser — no login, no data collection.

Article 50 Transparency Validator

Classify your AI system against Article 50 categories and get a disclosure checklist. Covers chatbots, generative content, emotion recognition, and deepfakes.

Launch Validator →

AI Content Marking Checker

Check whether your content pipeline meets machine-readable marking requirements. Covers metadata, watermarks, and provenance standards.

Launch Checker →

Common mistakes and grey areas around Article 50

| Mistake | Why it's wrong | What to do instead |
|---|---|---|
| Assuming AI-assisted text is always exempt | If AI substantially changed the content's meaning or structure, it's "manipulated" under Article 50 | Apply the substance test: did the AI change what the text says? If yes, it's in scope |
| Assuming internal-only content needs no labelling | If internal AI content influences decisions about employees, deployer duties may apply | Label internal AI content anyway: zero cost, eliminates ambiguity |
| Relying on vendor defaults without internal policy | Deployers carry their own Article 50 obligations regardless of what the provider configured | Verify vendor marking works; create your own content policy and disclosure standards |
| Claiming "obvious AI" exception without documentation | If a regulator disagrees, you need evidence showing why the exception was reasonable | Document the rationale in your transparency register; default to disclosure |
| Stripping metadata from AI-generated images before publishing | Removes the machine-readable marking the provider embedded, potentially violating both provider and deployer duties | Preserve AI provenance metadata through your entire content pipeline |

Table 2: Five common Article 50 compliance mistakes. Further guidance from the Commission, AI Office, and the Article 50 Code of Practice is expected throughout 2026.

Guidance is still evolving. The Article 50 Code of Practice is being developed through multi-stakeholder consultation, but no officially published draft has been confirmed by the Commission or AI Office as of March 2026. Build your systems to the legal text today. Adapt to guidance as it arrives.


FAQ: EU AI Act Article 50 transparency

Do chatbots always need an explicit AI disclosure notice?

Almost always. Article 50(1) requires providers to ensure people are informed when interacting with AI, unless it's "obvious from the circumstances." That exception is narrow: a customer service chatbot that mimics human conversation doesn't qualify. The penalty for non-disclosure can reach €15 million or 3% of worldwide annual turnover, whichever is higher (Article 99(4)). When in doubt, disclose.

How do we label AI-generated blog posts or marketing copy?

Article 50(4) requires AI-generated text published on matters of public interest to be disclosed, unless it has undergone genuine human editorial review. For commercial content not touching public-interest topics, the obligation is lighter, but best practice is to label or disclose AI involvement regardless. Add an "AI involvement" field to your CMS workflow.

What if AI only slightly edits human-written text?

Minor assistive edits — spell-checking, grammar correction, formatting — are generally exempt. The practical test: did the AI change the meaning, structure, or substance? If yes, it's "manipulation" under Article 50. If it only corrected typos, it isn't.

How do we mark deepfakes on social media?

Deployers must disclose with a "clearly visible" label at the point of publication — visible text in the post or an on-screen label on the video/image. Machine-readable metadata (C2PA, IPTC) should also be embedded by the provider. Artistic and satirical exceptions don't apply when content depicts real people or events in a misleading way.

Does Article 50 apply to internal-only AI content?

The core obligations target content affecting natural persons or made available to the public. Purely internal documents that never leave the organisation sit in a grey area. But if internal AI content influences decisions about employees (performance reviews, hiring notes), deployer transparency duties may apply. Best practice: label it anyway.

What penalties apply for Article 50 non-compliance?

Fines of up to €15 million or 3% of worldwide annual turnover, whichever is higher (Article 99(4)). SME proportionality applies. No fines issued as of March 2026. Enforcement begins 2 August 2026.

What is the Article 50 Code of Practice for AI content marking?

The Commission is facilitating a multi-stakeholder Code of Practice on labelling and watermarking AI-generated content. As of March 2026, work is ongoing with no officially published draft. The Code provides practical guidance but is voluntary — it doesn't replace the binding Article 50 obligation. See our Code of Practice analysis.

Does Article 50 apply to emotion recognition in the workplace?

Article 50(3) requires transparency for emotion recognition. But Article 5 bans emotion recognition in workplaces and schools outright. Check Article 5 first — if the system is banned, the transparency question is moot. See our prohibited practices guide.

Further reading

- EU AI Act Compliance Guide: Full overview of all obligations, timelines, and penalties.
- AI Literacy Article 4 Guide: Train staff on transparency duties including Article 50.
- FRIA & DPIA Combo Guide: Combined impact assessments for high-risk AI deployers.
- Article 50 Code of Practice Analysis: Status of the multi-stakeholder Code of Practice.
- Grok Deepfake Crisis: Real-world case study of AI-generated deepfakes and regulatory response.
- Article 5 Prohibited Practices: What's banned outright, including workplace emotion recognition.

Abhishek G Sharma

Founder & CEO, Move78 International Limited. 20+ years in cybersecurity and AI risk management. Certifications: ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, CAIRO.


Disclaimer & educational purpose

This guide is published by Move78 International Limited for educational purposes only. It does not constitute legal advice. The EU AI Act (Regulation 2024/1689) is a complex legislative instrument with evolving guidance. The Article 50 Code of Practice is in development as of March 2026 — implementation standards may change. Organisations should consult qualified legal counsel for compliance decisions. No enforcement fines have been issued under Article 50 as of this publication date.