What is the EU AI Act?

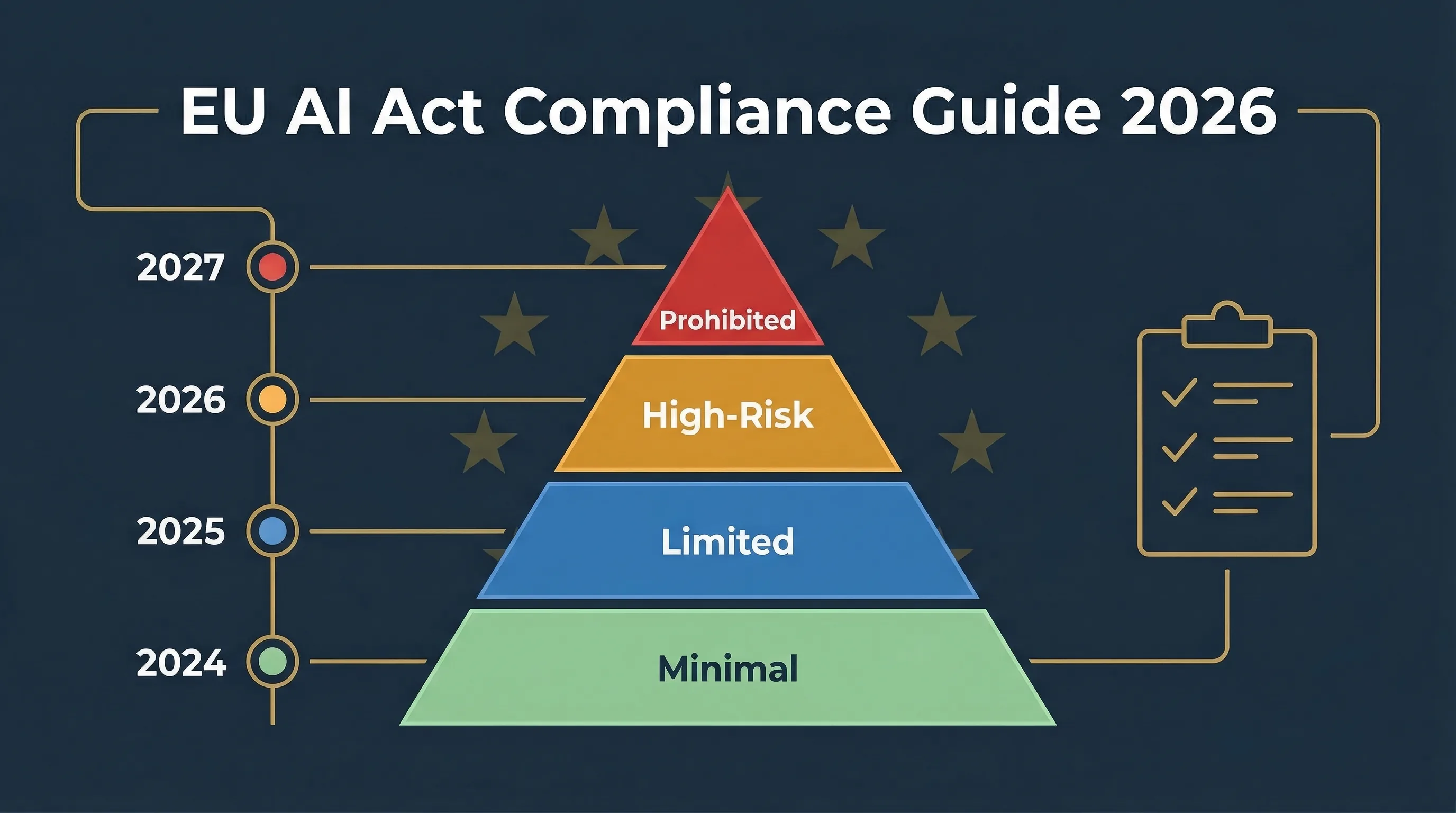

The EU AI Act (Regulation 2024/1689) is the world's first comprehensive law governing artificial intelligence. It was published in the Official Journal on July 12, 2024, entered into force on August 1, 2024, and is now rolling out in phases through 2027. This isn't a directive that each member state transposes differently. It's a regulation — directly applicable in all 27 EU member states from the moment each phase kicks in.

The law takes a risk-based approach. The EU AI Act requirements scale with the danger your AI system poses. If it does something harmless, like filtering spam, you won't face serious obligations. But if it makes decisions about who gets a loan, who passes an exam, or who gets hired, you're in high-risk territory with real documentation, oversight, and governance requirements.

The Act regulates several types of operators. The four you'll meet most often are providers (who build AI systems or have them built), deployers (who use them professionally), importers, and distributors. Most mid-market companies fall into the deployer category. And here's what trips people up: the Act has extraterritorial reach. If you're based outside the EU but your AI system's output is used within the EU, you're in scope. Full stop.

Across 113 articles and 13 annexes, the regulation covers everything from outright bans on certain AI practices to detailed technical documentation requirements for high-risk systems. If you want to start with the basics, our frequently asked questions about the EU AI Act cover the most common entry points. For a hands-on introduction, try the interactive EU AI Act training platform.

Does the EU AI Act apply to your organization?

Probably yes. If you're reading this, chances are you either build AI systems, use AI systems in your business, or sell them into the EU. Any of those puts you in scope.

Article 3 defines the key roles. Providers develop an AI system (or have one developed) and place it on the market or put it into service under their own name or trademark. They carry the heaviest obligations, including conformity assessment, technical documentation, and post-market monitoring. Deployers use AI systems in a professional capacity. Most SMEs are deployers: they buy AI-powered tools from vendors and use them in operations. Deployer obligations are lighter than provider obligations but still real: human oversight, monitoring, recordkeeping, and, in certain cases, a fundamental rights impact assessment (Article 27).

Then there's the trap nobody talks about until it's too late. Article 25 says that if you substantially modify a high-risk AI system or put your own name on it, you become a provider — with all the heavier obligations that come with it. I've seen this catch out at least three mid-market companies who thought they were just "customizing" a vendor tool. Use our Accidental Provider Classifier to check whether this applies to you.
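To make the Article 25 triggers concrete, here is a minimal Python sketch of the check an internal intake form might run. The `Deployment` fields and the `effective_role` function are hypothetical names, and the logic is a rough reading of Article 25(1) only: it ignores contractual allocation of obligations and leaves the question of what counts as a "substantial" modification to you. A "provider" result is a signal to get a proper legal review, not a conclusion.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Simplified, hypothetical facts about how you use a vendor's AI system."""
    originally_high_risk: bool                   # was the system high-risk as supplied?
    rebranded_under_own_name: bool = False       # your name or trademark on the system
    substantially_modified: bool = False         # substantial modification, still high-risk
    repurposed_into_high_risk_use: bool = False  # new intended purpose makes it high-risk


def effective_role(d: Deployment) -> str:
    """Return "provider" if an Article 25(1) trigger applies, otherwise "deployer"."""
    # Article 25(1)(a) and (b): rebranding or substantially modifying a high-risk system
    if d.originally_high_risk and (d.rebranded_under_own_name or d.substantially_modified):
        return "provider"
    # Article 25(1)(c): changing the intended purpose of a non-high-risk system
    # so that it becomes high-risk
    if not d.originally_high_risk and d.repurposed_into_high_risk_use:
        return "provider"
    return "deployer"


# A vendor recruitment tool relabelled under your own brand
print(effective_role(Deployment(originally_high_risk=True, rebranded_under_own_name=True)))  # provider
```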

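Exemptions exist but they're narrow: use by individuals in a purely personal, non-professional activity; military, defence, and national security purposes; AI systems developed and put into service solely for scientific research and development; and research, testing, and development before a system is placed on the market (real-world testing is not covered).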
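The territorial hooks and exemptions above can be screened with a simple rule set. This Python sketch is an illustration under stated assumptions: the `Organization` flags and the `in_scope` function are hypothetical, the exemptions are paraphrased from Article 2 rather than quoted, and in reality exemptions attach to specific systems or activities, not to an organization as a whole.

```python
from dataclasses import dataclass


@dataclass
class Organization:
    """Hypothetical, simplified facts for a first-pass Article 2 screen."""
    established_in_eu: bool = False
    places_ai_on_eu_market: bool = False   # placing on the market or putting into service in the EU
    output_used_in_eu: bool = False
    # Narrow exemptions (paraphrased from Article 2)
    purely_personal_use: bool = False
    military_defence_or_national_security: bool = False
    scientific_research_only: bool = False
    pre_market_rnd_without_real_world_testing: bool = False


def in_scope(org: Organization) -> bool:
    """Check the exemptions first, then the territorial hooks."""
    if (org.purely_personal_use
            or org.military_defence_or_national_security
            or org.scientific_research_only
            or org.pre_market_rnd_without_real_world_testing):
        return False
    return org.established_in_eu or org.places_ai_on_eu_market or org.output_used_in_eu


# A company outside the EU whose AI scores are used by an EU client
print(in_scope(Organization(output_used_in_eu=True)))  # True
```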

EU AI Act for SMEs: what's different?

The regulation includes specific provisions for small and medium-sized enterprises. SMEs and startups get proportionate fines (the lower of absolute or percentage amounts), access to regulatory sandboxes with priority consideration, and simplified technical documentation options for certain use cases. But the core obligations — risk classification, human oversight, incident reporting — apply equally. Being small doesn't exempt you. It just means the penalties scale down and some procedural requirements are lighter.


How the EU AI Act classifies AI systems by risk

The entire regulation hinges on one question: how risky is this AI system? The answer determines everything — what you must document, what oversight you need, whether you need a conformity assessment, and how much you can be fined.

| Risk level | Examples | Key obligations | Maximum penalty (EUR / % of worldwide turnover) |
|---|---|---|---|
| Unacceptable | Social scoring, subliminal manipulation, untargeted facial scraping | Banned (no compliance pathway) | €35M / 7% |
| High-risk | Recruitment AI, credit scoring, life and health insurance pricing, education, critical infrastructure | Conformity assessment, risk management, documentation, human oversight, CE marking | €15M / 3% |
| Limited | Chatbots, AI-generated content, deepfakes, emotion recognition | Transparency: disclose AI use, label outputs | €15M / 3% |
| Minimal | Spam filters, AI in games, basic recommendations | None (voluntary codes of conduct) | None |

Unacceptable risk — banned outright (Article 5)

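Some AI practices are simply prohibited. No compliance pathway exists. These include social scoring (by public or private actors), subliminal, manipulative, or deceptive techniques that cause significant harm, exploitation of vulnerabilities linked to age, disability, or social or economic situation, untargeted scraping of facial images to build facial recognition databases, emotion recognition in workplaces and educational institutions (except for medical or safety reasons), biometric categorization that infers sensitive attributes like race or political opinions, predicting whether an individual will commit a crime based solely on profiling or personality traits, and real-time remote biometric identification in publicly accessible spaces for law enforcement, subject to narrow exceptions. Penalties for using or supplying these systems reach up to €35 million or 7% of global annual turnover. For the full breakdown, read our guide on prohibited AI practices explained.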

High-risk — heavy obligations (Annex III + Annex I)

This is where most compliance work happens. High-risk AI includes the Annex III use cases, such as systems used in recruitment and employment decisions, creditworthiness assessment and credit scoring, risk assessment and pricing for life and health insurance, education admissions and assessment, critical infrastructure, law enforcement, migration and border control, and the administration of justice. It also includes AI that is a safety component of, or itself, a product covered by the EU product legislation listed in Annex I. Providers of these systems must run a risk management system, apply data governance, prepare technical documentation, enable logging and human oversight, and meet accuracy, robustness, and cybersecurity requirements, then complete a conformity assessment and affix the CE marking. The Annex III high-risk checklist maps every category in plain English. Use the Article 6(3) local exemption generator to check whether your specific system qualifies for an exemption.
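The Article 6(3) derogation has a clear structure: an Annex III system may fall outside the high-risk category if it meets at least one of four conditions, but never if it profiles natural persons. A minimal Python sketch of that structure, with hypothetical field and function names, could look like this. It does not assess the overarching "no significant risk of harm" test, and a provider relying on the derogation must still document its assessment (Article 6(4)) and register the system (Article 49(2)).

```python
from dataclasses import dataclass


@dataclass
class AnnexIIIUse:
    """Hypothetical description of one Annex III use case for a first-pass screen."""
    profiles_natural_persons: bool
    narrow_procedural_task: bool = False
    improves_completed_human_activity: bool = False
    detects_patterns_without_replacing_human_review: bool = False
    preparatory_task_only: bool = False


def may_qualify_for_article_6_3(use: AnnexIIIUse) -> bool:
    """True if no profiling is involved and at least one Article 6(3) condition is claimed."""
    # Profiling of natural persons keeps an Annex III system high-risk in every case.
    if use.profiles_natural_persons:
        return False
    # At least one of the four listed conditions must be met.
    return any((
        use.narrow_procedural_task,
        use.improves_completed_human_activity,
        use.detects_patterns_without_replacing_human_review,
        use.preparatory_task_only,
    ))


# A CV-ranking tool, assumed here to profile candidates, cannot use the derogation
print(may_qualify_for_article_6_3(AnnexIIIUse(profiles_natural_persons=True, preparatory_task_only=True)))  # False
```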

Limited risk — transparency obligations (Article 50)

Systems that interact with people must be transparent about what they are. Chatbots must disclose they're AI. AI-generated content must be labeled. Deepfakes must be marked — the Grok deepfake crisis is a live example of why this matters. Emotion recognition systems must inform users they're being analyzed.

Minimal risk — no obligations

Spam filters, AI in video games, basic recommender systems. No specific obligations, though voluntary codes of conduct are encouraged.


What deployers must do: EU AI Act obligations for organizations using AI

Here's something I keep repeating in every assessment I run: your vendor's compliance doesn't automatically make you compliant. Even if the AI system you purchased has a CE mark and a full conformity assessment, you still have independent deployer obligations under Article 26. This catches people off guard.

Article 26 — deployer obligations (enforceable August 2, 2026)

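If you deploy a high-risk AI system, you must use it in accordance with the provider's instructions for use; assign human oversight to people with the necessary competence, training, and authority; ensure that input data under your control is relevant and sufficiently representative; monitor the system's operation in production; keep the automatically generated logs under your control for at least six months (longer if sector law requires it); inform workers and their representatives before putting a high-risk system into service in the workplace; and, if you identify a serious incident, inform the provider and then the relevant market surveillance authority. Under Article 27, public bodies, private entities providing public services, and deployers using AI for credit scoring or for life and health insurance pricing must also conduct a fundamental rights impact assessment (FRIA) before first use.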
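Two parts of Articles 26 and 27 lend themselves to simple rules: when a FRIA is triggered, and how long logs must be kept. This Python sketch assumes hypothetical area labels such as `"credit_scoring"` and `"life_health_insurance"` (our names, not terms from the Act) and treats six calendar months as the log-retention floor. It is a checklist aid, not a legal determination.

```python
import calendar
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class HighRiskDeployment:
    """Hypothetical facts about one high-risk deployment."""
    annex_iii_area: str                   # illustrative labels, e.g. "employment", "credit_scoring"
    public_body_or_public_service: bool = False
    used_in_workplace: bool = False


def needs_fria(dep: HighRiskDeployment) -> bool:
    """Simplified Article 27 trigger."""
    if dep.annex_iii_area in {"credit_scoring", "life_health_insurance"}:
        return True
    # Public bodies and public-service providers, except for critical infrastructure (Annex III point 2)
    return dep.public_body_or_public_service and dep.annex_iii_area != "critical_infrastructure"


def deployer_obligations(dep: HighRiskDeployment) -> List[str]:
    """Baseline Article 26 duties plus the conditional ones."""
    obligations = [
        "use per the provider's instructions for use",
        "assign competent human oversight",
        "check relevance of input data you control",
        "monitor operation; report serious incidents to provider and authority",
        "keep logs for at least six months",
    ]
    if dep.used_in_workplace:
        obligations.append("inform workers and their representatives")
    if needs_fria(dep):
        obligations.append("conduct a FRIA (Article 27)")
    return obligations


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the last day of the target month."""
    index = d.month - 1 + months
    year, month = d.year + index // 12, index % 12 + 1
    return date(year, month, min(d.day, calendar.monthrange(year, month)[1]))


def earliest_log_deletion(created: date, sector_minimum_months: int = 0) -> date:
    """Six-month floor from Article 26(6), or longer if sector law requires it."""
    return add_months(created, max(6, sector_minimum_months))


print(earliest_log_deletion(date(2026, 8, 31)))  # 2027-02-28
```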

Article 4 — AI literacy (already enforceable since February 2, 2025)

This one is already live. Providers and deployers must take measures to ensure, to their best extent, a sufficient level of AI literacy among staff and others who operate or use AI systems on their behalf, taking into account their technical knowledge, experience, education, and training, and the context in which the systems are used. An LMS completion certificate on its own is unlikely to be enough. You need evidence that people understand what the system does, what it can't do, and how to exercise oversight. Our Article 4 AI literacy guide covers what "sufficient" actually looks like in practice.

Article 50 — transparency (enforceable August 2, 2026)

If your system generates synthetic content, interacts with people, or performs emotion recognition, you have disclosure obligations. Users must know they're dealing with AI.


For deadline-specific planning, see our August 2026 deadline guide for SMEs.

The deployer compliance workflow: from AI system discovery through ongoing monitoring and evidence collection.

EU AI Act deadlines 2026: enforcement timeline through 2027

The EU AI Act doesn't hit all at once. It rolls out in phases, and two of those phases are already live. Here's the full timeline based on current law:

- August 1, 2024 (in effect): EU AI Act enters into force.
- February 2, 2025 (in effect): Prohibited practices (Article 5) and AI literacy (Article 4) become enforceable.
- August 2, 2025 (in effect): GPAI model obligations (Articles 51-56) become enforceable.
- August 2, 2026 (next): High-risk AI (Annex III), transparency (Article 50), and regulatory sandboxes.
- August 2, 2027 (later): High-risk AI (Annex I, product safety), and the grace period ends for GPAI models placed on the market before August 2, 2025.
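If you track deadlines in code, keep confirmed dates separate from proposals. This minimal Python sketch hard-codes the milestones under current law and deliberately leaves out the Digital Omnibus dates; `MILESTONES`, `in_effect`, and `next_milestone` are illustrative names.

```python
from datetime import date
from typing import Optional, Tuple

# Milestones under current law only. The Digital Omnibus proposal is not modelled
# because it is not law.
MILESTONES = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Prohibited practices (Article 5) and AI literacy (Article 4)"),
    (date(2025, 8, 2), "GPAI model obligations (Articles 51-56)"),
    (date(2026, 8, 2), "High-risk AI (Annex III), transparency (Article 50), regulatory sandboxes"),
    (date(2027, 8, 2), "High-risk AI (Annex I) and end of the GPAI grace period"),
]


def in_effect(on: date) -> list:
    """Labels of milestones that apply on or before the given date."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(after: date) -> Optional[Tuple[date, str]]:
    """First milestone strictly after the given date, or None if all have passed."""
    return next(((start, label) for start, label in MILESTONES if start > after), None)


today = date(2026, 3, 1)
print(len(in_effect(today)))  # 3
print(next_milestone(today))
```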

⚠️ Digital Omnibus proposal — NOT YET LAW

The European Commission proposed the Digital Omnibus in November 2025, which may push certain Annex III high-risk deadlines to December 2027. The Council published its mandate (ST-7322-2026-INIT) in early 2026, and the European Parliament is reviewing it in committee. But this is a proposal still moving through the legislative process, not enacted law. The prudent approach is to plan for August 2, 2026 as the binding deadline and treat any extension as upside, not baseline. For details, see our Digital Omnibus tracker and confirmed vs proposed timeline.

Every EU member state must establish at least one AI regulatory sandbox by August 2, 2026. For the latest developments across all deadlines, follow our EU AI Act Weekly Intel.

EU AI Act penalties: fines up to €35 million or 7% of global turnover

The penalty structure is tiered under Article 99, and the numbers are not abstract:

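| Violation type | Maximum fine |
|---|---|
| Prohibited practices (Article 5) | €35M or 7% of worldwide annual turnover |
| Breaches of operator obligations, including high-risk requirements and Article 50 transparency | €15M or 3% of worldwide annual turnover |
| Incorrect, incomplete, or misleading information to notified bodies or national authorities | €7.5M or 1% of worldwide annual turnover |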

These are caps, not fixed amounts. For SMEs and startups, the cap is the lower of the two figures (absolute amount or percentage of turnover); for larger companies, it is the higher.
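The higher-versus-lower rule is easy to get backwards, so here is a small Python sketch of the arithmetic. It assumes the three Article 99 tiers in the table above and computes only the upper limit: actual fines are set case by case, taking into account factors such as the nature, gravity, and duration of the infringement. `TIERS` and `max_fine_eur` are hypothetical names.

```python
# Article 99 tiers: (absolute cap in EUR, percentage of worldwide annual turnover)
TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "operator_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool) -> float:
    """Upper limit of a fine: the higher of the two figures, or the lower one for SMEs and startups."""
    absolute_cap, pct = TIERS[tier]
    pct_amount = worldwide_turnover_eur * pct / 100
    return min(absolute_cap, pct_amount) if is_sme else max(absolute_cap, pct_amount)


print(max_fine_eur("prohibited_practice", 2_000_000_000, is_sme=False))  # 140000000.0
print(max_fine_eur("operator_obligation", 20_000_000, is_sme=True))      # 600000.0
```

For a company with €2 billion turnover, 7% (€140 million) exceeds the €35 million figure, so the percentage sets the cap. For an SME with €20 million turnover, 3% (€600,000) is below €15 million, so the lower figure applies.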

Enforcement sits with national market surveillance authorities in each member state plus the EU AI Office for GPAI models. As of March 2026, Finland's Traficom has been operational since January 1, 2026. Ireland published its AI Office bill on February 4, 2026. Spain's AESIA has published 16 compliance guides. No formal fines have been issued under the EU AI Act yet — but enforcement infrastructure is standing up fast. Track it in our national enforcement tracker.

AI governance frameworks: ISO 42001, NIST AI RMF, and the EU AI Act

The EU AI Act tells you what to do. It doesn't tell you how to build the management system to do it. That's where governance frameworks come in.

ISO/IEC 42001:2023 is the first international standard for AI management systems. It gives you a structured Plan-Do-Check-Act framework for AI governance that maps well to the EU AI Act's requirements around risk management (Article 9), data governance (Article 10), and quality management (Article 17). According to Sprinto's 2025 survey, 76% of organizations plan to use ISO 42001 as their AI governance backbone.

NIST AI RMF 1.0 is the US voluntary framework with four functions: Govern, Map, Measure, Manage. Not legally binding, but widely adopted internationally. It maps to EU AI Act risk management requirements and is particularly useful for transatlantic companies that need to satisfy both US and EU expectations.

Neither framework equals EU AI Act compliance by itself. They're supportive structures — they help you operationalize the obligations, but they don't substitute for meeting specific legal requirements under the regulation. For the detailed control-by-control mapping, see our EU AI Act vs ISO 42001 vs NIST AI RMF crosswalk.

And if you haven't addressed shadow AI yet, you should. Unauthorized use of AI tools by employees creates unmanaged compliance exposure that no governance framework can fix after the fact.

Article 50 transparency obligations: labeling AI-generated content

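Starting August 2, 2026, providers of AI systems that generate synthetic audio, images, video, or text must mark those outputs in a machine-readable format so they can be detected as AI-generated. Deployers must disclose deepfakes, as well as AI-generated text published to inform the public on matters of public interest unless it has undergone human review and someone holds editorial responsibility. A Commission-facilitated Code of Practice workstream is ongoing, but it is soft-law guidance and does not replace the binding Article 50 obligation.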

Chatbots and virtual assistants must disclose they're AI. Deepfakes must be disclosed. Emotion recognition systems must inform the people being analyzed. These aren't optional best practices. They're legal requirements with penalty backing.
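The Article 50 duties split between providers and deployers, which is easy to lose track of. This Python sketch maps a few hypothetical feature flags to the paragraph of Article 50 they trigger. It simplifies the text: for example, it ignores the exception for AI that only performs an assistive function for standard editing, and the specific rules for AI-generated text on matters of public interest. `SystemFeatures` and `article_50_duties` are illustrative names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SystemFeatures:
    """Hypothetical feature flags for a first-pass Article 50 screen."""
    interacts_with_people: bool = False
    ai_nature_obvious_from_context: bool = False
    generates_synthetic_content: bool = False
    produces_deepfakes: bool = False
    emotion_recognition_or_biometric_categorisation: bool = False


def article_50_duties(f: SystemFeatures) -> List[str]:
    """Map features to the Article 50 duty they trigger and who carries it (simplified)."""
    duties = []
    # Article 50(1): disclosure, unless obvious to a reasonably well-informed person
    if f.interacts_with_people and not f.ai_nature_obvious_from_context:
        duties.append("provider: inform people they are interacting with an AI system")
    # Article 50(2): machine-readable marking of synthetic audio, image, video, or text
    if f.generates_synthetic_content:
        duties.append("provider: mark outputs as AI-generated in a machine-readable format")
    # Article 50(3): inform people exposed to emotion recognition or biometric categorisation
    if f.emotion_recognition_or_biometric_categorisation:
        duties.append("deployer: inform the people exposed to the system")
    # Article 50(4): disclose deepfakes
    if f.produces_deepfakes:
        duties.append("deployer: disclose that the content is artificially generated or manipulated")
    return duties


# A customer-service chatbot that also generates marketing images
for duty in article_50_duties(SystemFeatures(interacts_with_people=True, generates_synthetic_content=True)):
    print(duty)
```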

Use our Article 50 Transparency Validator to check your disclosure status, and the AI Content Marking Compliance Checker for synthetic content labeling. For the regulatory context, read our analysis of the Article 50 Code of Practice and the distinction between soft law codes and hard law obligations.

How to start: a practical EU AI Act compliance roadmap for your team

Enough about what the law says. Here's what to do Monday morning. Treat the eight steps below as your EU AI Act compliance checklist — work through them in order, and you'll have a defensible baseline before the August 2, 2026 deadline.

1. Inventory your AI systems. Catalog every AI system in use, under development, or procured from vendors. Include embedded AI in SaaS tools: that marketing platform's "AI-powered lead scoring" counts. Most organizations I work with discover they have 5-10x more AI systems than they thought. Start with our Shadow AI Discovery Protocol.

2. Classify each system by role. For each system, determine whether you're a provider, deployer, importer, or distributor. The Accidental Provider Classifier catches the edge cases.

3. Classify each system by risk level. Use Annex III to determine if any systems are high-risk. Our sector-specific validators cover recruitment, credit scoring, insurance, education, biometrics, and industrial IoT.

4. Map your obligations. Deployer obligations differ from provider obligations. Run the Deployer Obligation Self-Assessment for a structured walkthrough.

5. Run a governance gap analysis. Compare your current AI governance against ISO 42001 and NIST AI RMF requirements. The ISO/NIST Gap Analyzer does this in your browser.

6. Build your evidence pack. Document your AI system inventory, risk classification decisions, human oversight arrangements, monitoring procedures, incident response plan, and vendor due diligence records. This is a recordkeeping problem before it becomes a tooling problem.

7. Train your team. AI literacy has been enforceable since February 2, 2025. Use our AI Literacy Training Planner to build a training program that produces evidence, not just attendance.

8. Monitor and iterate. EU AI Act compliance isn't one-and-done. Post-market monitoring, incident reporting, and regular re-assessment are ongoing obligations. If you're treating this as a project with an end date, you've misunderstood the regulation.
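Steps 1 to 6 produce one record per AI system, so the inventory is the natural backbone for the evidence pack. Here is a minimal Python sketch of such a record and a gap check. The field names, the `gaps` function, and the vendor `ExampleCRM` are hypothetical; adapt them to whatever register or GRC tool you already use.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"


class RiskLevel(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class AISystemRecord:
    """One inventory entry (step 1), filled in by later steps."""
    name: str
    vendor: Optional[str] = None            # None for internally built systems
    role: Optional[Role] = None              # step 2
    risk_level: Optional[RiskLevel] = None   # step 3
    oversight_owner: Optional[str] = None    # step 4: who exercises human oversight
    evidence: List[str] = field(default_factory=list)  # step 6: links to records


def gaps(record: AISystemRecord) -> List[str]:
    """List what is still missing for one system."""
    missing = []
    if record.role is None:
        missing.append("role not classified")
    if record.risk_level is None:
        missing.append("risk level not classified")
    elif record.risk_level is RiskLevel.UNACCEPTABLE:
        missing.append("prohibited practice: stop using the system")
    elif record.risk_level is RiskLevel.HIGH and record.oversight_owner is None:
        missing.append("no human oversight owner assigned")
    if not record.evidence:
        missing.append("no evidence collected")
    return missing


crm_scoring = AISystemRecord(name="AI lead scoring", vendor="ExampleCRM")  # hypothetical vendor
print(gaps(crm_scoring))
# ['role not classified', 'risk level not classified', 'no evidence collected']
```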


25+ free EU AI Act compliance tools — no login required

Every tool below runs entirely in your browser. No data leaves your device. No account needed. They're built for compliance officers, CTOs, CISOs, and DPOs who need fast, practical answers — not another SaaS demo.

Start here

- EU AI Act Compliance Checker: 12-question triage · 5 min
- Quick Risk Quiz: fast self-check · 2 min
- Detailed Applicability Scorer: weighted scoring · 10 min

Deep dive — specific obligations

- Article 6(3) Exemption Generator
- Accidental Provider Classifier
- Article 26 Operations Scorer
- Local FRIA Generator
- Input Data Validator
- Human Oversight Log
- Automation Complacency Assessor
- Article 50 Transparency Validator
- Material Influence Evaluator
- Deployer Obligation Self-Assessment
- AI Content Marking Checker

Governance & risk program

- AI Literacy Training Planner
- ISO/NIST Gap Analyzer
- Shadow AI Discovery Protocol
- AI Vendor Risk Screener
- Agentic AI Bounds Definer
- RAG Data Hygiene Screener
- Bias Testing Safe Harbor Protocol

Sector-specific Annex III validators

Learning & training

EU AI Act compliance FAQ

Does the EU AI Act apply to companies outside the EU?

Yes. The EU AI Act applies to any organization that places an AI system on the EU market or puts it into service in the EU, regardless of where the organization is established. It also applies to providers and deployers located outside the EU where the output of the AI system is used within the EU (Article 2).

What are the fines under the EU AI Act?

Up to EUR 35 million or 7% of worldwide annual turnover for prohibited practices (Article 99). Breaches of other operator obligations, including high-risk requirements and Article 50 transparency, carry fines up to EUR 15 million or 3%. Providing incorrect information to authorities: EUR 7.5 million or 1%. For most companies the cap is whichever figure is higher; for SMEs and startups it is whichever is lower.

Has the August 2026 deadline been postponed?

The Digital Omnibus proposal (November 2025) may push certain Annex III high-risk deadlines to December 2027, but this is not yet law. The Council published its mandate (ST-7322-2026-INIT) in early 2026. Plan for August 2, 2026 as the binding deadline.

Am I a provider or a deployer?

If you build AI systems and place them on the EU market under your name, you are a provider. If you use AI systems in a professional capacity, you are a deployer. Substantially modifying a high-risk system or putting your name on it can reclassify you as a provider under Article 25.

Is AI used in recruitment high-risk?

Yes. AI systems used for recruitment, screening, filtering, or evaluating candidates fall under Annex III Area 4 (employment, workers management, access to self-employment) and are classified as high-risk.

What does the Article 4 AI literacy requirement involve?

Article 4 requires that all staff operating or overseeing AI systems have sufficient AI literacy proportionate to the system context. This obligation has been enforceable since February 2, 2025.

How do I find all the AI systems my organization uses?

Start with a systematic discovery covering all SaaS tools, vendor-provided systems, internally built models, and embedded AI features in existing software. Most organizations discover 5 to 10 times more AI systems than they initially expected.

How does the EU AI Act relate to GDPR?

They are complementary regulations. GDPR governs personal data processing; the AI Act governs AI system safety, transparency, and accountability. Many AI systems process personal data, so both apply simultaneously. A DPIA under GDPR and an FRIA under the AI Act may overlap but serve different legal purposes.

About the author

Abhishek G Sharma is the founder of EU AI Compass and Move78 International Limited. He holds ISO/IEC 42001 LA, ISO/IEC 27001 LA, CISA, CISM, CRISC, CEH, and CCSK certifications, with 20+ years of experience in cybersecurity, cloud security, and AI governance across Asia, Europe, and the Middle East.


Educational disclaimer

This guide provides educational and operational guidance only. It is not legal advice. The content is current as of March 2026 and verified against official EU sources. For binding legal interpretation, consult qualified legal counsel. Published by Move78 International Limited, Hong Kong SAR.

Sources & legal basis

- EU AI Act official text: Eur-Lex Regulation 2024/1689
- European Commission AI Act page: EC Regulatory Framework for AI
- AI Act Service Desk: ai-act-service-desk.ec.europa.eu
- NIST AI RMF: nist.gov/ai-risk-management-framework