The right question is not “How many days are left?” The right question is “What do we need to complete now under current law, and what can be staged if the proposal track later changes?”

Current law first, proposal second

Confirmed current-law deadlines

- Article 5 prohibited practices: applied from 2 February 2025.

- Article 4 AI literacy: applied from 2 February 2025.

- GPAI obligations: applied from 2 August 2025.

- Article 50 transparency obligations and the main phase of Annex III high-risk obligations: apply from 2 August 2026.

Proposal track you should monitor

- The Omnibus proposal could shift some high-risk application dates if adopted.

- That proposal remains procedurally active, not final.

- You should track it, not bet your compliance posture on it.

- Build dual timelines into internal planning and customer communication.

The rational operating model is simple: run your programme against the confirmed baseline, and carry a proposal overlay for board-level planning and resource sequencing.

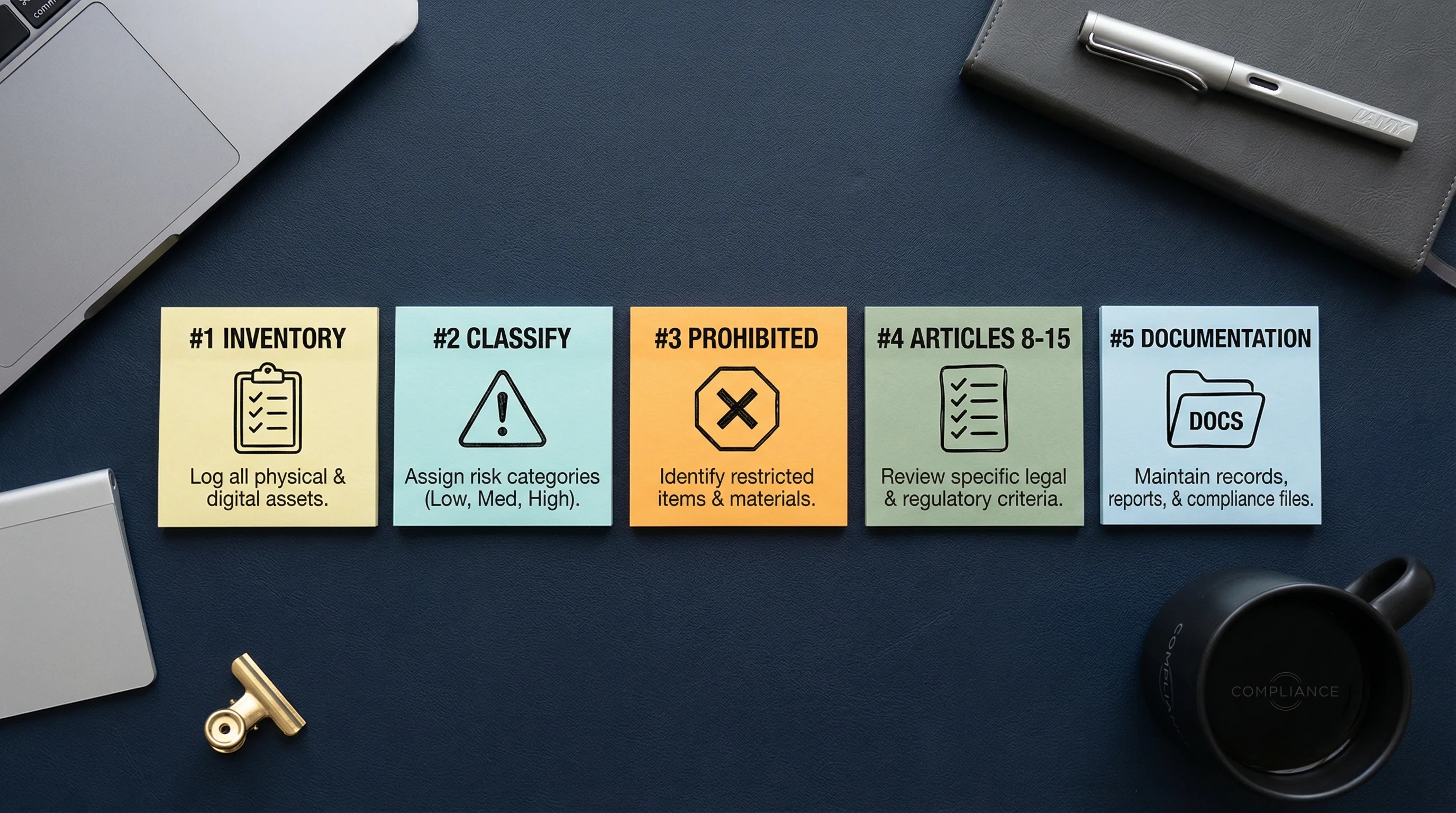
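The baseline-plus-overlay model can be sketched as a small data structure. This is a minimal illustration, not a compliance tool: the `Milestone` class, the `dual_timeline` function, and the field names are hypothetical, and the overlay dates are deliberately left empty because the proposal is not final. The confirmed dates are the ones listed under current law above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Milestone:
    label: str
    confirmed: date                  # application date under current law
    proposed: Optional[date] = None  # overlay date; stays None until taken from an official proposal text


# Confirmed current-law dates as listed in this article.
MILESTONES = [
    Milestone("Article 5 prohibited practices", date(2025, 2, 2)),
    Milestone("Article 4 AI literacy", date(2025, 2, 2)),
    Milestone("GPAI obligations", date(2025, 8, 2)),
    Milestone("Article 50 and Annex III main phase", date(2026, 8, 2)),
]


def dual_timeline(milestones, today):
    """Return one row per milestone with a baseline date and an overlay date.

    The programme runs against 'baseline'. 'overlay' is for planning only
    and falls back to the confirmed date when no proposed date is recorded.
    """
    rows = []
    for m in milestones:
        overlay = m.proposed if m.proposed is not None else m.confirmed
        rows.append({
            "label": m.label,
            "baseline": m.confirmed,
            "overlay": overlay,
            "status": "applicable" if today >= m.confirmed else "upcoming",
        })
    return rows


if __name__ == "__main__":
    for row in dual_timeline(MILESTONES, date.today()):
        print(row["status"].ljust(10), row["baseline"], row["overlay"], row["label"])
```

Keeping the overlay as a separate optional field means a change in the proposal track only updates planning views; the baseline your programme is measured against stays untouched.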

The 5-step action plan for SMEs

1. Build your AI inventory. Record every system you build, deploy, or procure, including embedded AI features inside third-party software. Shadow AI is still the easiest way to fail this law by accident.

2. Classify each system. Separate prohibited, high-risk, transparency-relevant, GPAI-related, and minimal-risk use cases. Do not dump everything into one “AI” bucket.

3. Screen Article 5 and Article 4 first. Both already apply, so gaps here create immediate legal exposure or avoidable operational weakness.

4. Prioritise Annex III and Article 50 workstreams. High-risk evidence, documentation, and transparency design should run as parallel tracks, not sequential afterthoughts.

5. Centralise documentation. Regulators will inspect evidence before they admire architecture diagrams. If a control is not evidenced, it is weak. If a decision is not documented, it will be attacked.
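The inventory, classification, and evidence steps above can be sketched as a simple register. This is a hedged illustration only: the `AISystem` record, the `RiskClass` labels, and the `PRIORITY` ordering are hypothetical names and assumptions (the relative order of GPAI-related and minimal-risk items in particular is a guess), and the example systems are invented, not legal classifications.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskClass(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high-risk"
    TRANSPARENCY = "transparency-relevant"
    GPAI = "GPAI-related"
    MINIMAL = "minimal-risk"


# Lower number = review sooner. Assumed ordering: prohibited-practice
# screening first, then high-risk and transparency work in parallel.
PRIORITY = {
    RiskClass.PROHIBITED: 0,
    RiskClass.HIGH_RISK: 1,
    RiskClass.TRANSPARENCY: 1,
    RiskClass.GPAI: 2,
    RiskClass.MINIMAL: 3,
}


@dataclass
class AISystem:
    name: str
    owner: str                    # accountable person or team
    source: str                   # "built", "deployed", or "procured"
    risk_classes: set[RiskClass]  # a system may fall into more than one class
    evidence: list[str] = field(default_factory=list)  # references to stored documents


def priority(system):
    # Unclassified systems (empty set) get priority 0 so they surface first.
    return min((PRIORITY[c] for c in system.risk_classes), default=0)


def triage(inventory):
    """Order the inventory by review priority, then by name."""
    return sorted(inventory, key=lambda s: (priority(s), s.name))


def evidence_gaps(inventory):
    """Names of systems with no recorded evidence at all."""
    return [s.name for s in inventory if not s.evidence]


if __name__ == "__main__":
    inventory = [
        AISystem("spam filter", "IT", "built", {RiskClass.MINIMAL}),
        AISystem("cv screener", "HR", "procured", {RiskClass.HIGH_RISK}),
        AISystem("chat widget", "Support", "procured",
                 {RiskClass.TRANSPARENCY}, ["notice-design.pdf"]),
    ]
    print([s.name for s in triage(inventory)])
    print(evidence_gaps(inventory))
```

A single register like this keeps classification and evidence in one place, so an undocumented system shows up as a gap instead of being discovered during an inspection.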

What regulators are likely to inspect first

- Visible prohibited-practice exposure.

- Whether you can classify your systems coherently.

- Whether documentation exists for systems you call high-risk or transparency-relevant.

- Whether staff dealing with AI have any defensible literacy measures at all.

What this means for SMEs with limited budget

Do not wait for perfect standards. Do not wait for final codes. Do not wait for the Omnibus to “save” you. Inventory, classification, literacy, prohibited-practice screening, ownership, and evidence collection are good investments under every scenario.

For a cleaner split between current law and the proposal track, read the confirmed vs proposed timeline page. For first-pass triage, use the 2-minute quick quiz and the 12-question Compliance Checker.

About the author: Abhishek G Sharma is the founder of Move78 International Limited. He holds ISO 42001 Lead Auditor, CISA, CISM, CRISC, and CEH certifications. He brings over 20 years of practitioner experience in cybersecurity, AI governance, and enterprise risk management.

Disclaimer: This analysis is for educational purposes only and does not constitute legal advice. Consult qualified counsel for binding compliance decisions. Last updated: 9 May 2026.