What does Article 4 AI literacy require from providers and deployers?

Article 4 of the EU AI Act imposes a single, blunt obligation: providers and deployers must ensure that their staff and other persons dealing with AI systems have a sufficient level of AI literacy. This isn't a suggestion. It's been enforceable since 2 February 2025 — making it one of the earliest live obligations under the entire regulation.

What counts as "sufficient"? The Act says you must consider the technical knowledge, experience, education and training of the people involved, plus the context in which the AI systems are used and the persons or groups on whom the AI system is intended to be used. That's deliberately broad. A receptionist who occasionally uses an AI chatbot needs different training than a data scientist fine-tuning a credit scoring model.

Article 3(56) defines AI literacy as the "skills, knowledge and understanding that allow providers, deployers and affected persons, taking into account their respective rights and obligations in the context of this Regulation, to make an informed deployment of AI systems, as well as to gain awareness about the opportunities and risks of AI and possible harm it can cause."

Could your compliance team articulate that definition today? Could they explain how it maps to the AI tools your organisation actually uses? If the answer is no, that's the gap Article 4 is designed to close.

⚠️ Already enforceable

Unlike the high-risk system rules (2 August 2026) or the Annex I obligations (2 August 2027), Article 4 AI literacy is enforceable right now. Organisations that haven't acted are already non-compliant. Penalties under Article 99 can reach €7.5 million or 1% of global annual turnover.

Why AI literacy is more than a box-ticking exercise

Treating AI literacy as a compliance checkbox is the fastest way to waste your training budget and still fail an audit. The real value sits in three places that directly affect operational risk.

Reducing shadow AI and misuse

Staff who don't understand what AI can and can't do will use it in ways you haven't sanctioned. They'll paste customer data into public LLMs. They'll trust a chatbot's output without verification. They'll build spreadsheet macros using AI tools that nobody in IT knows about. I've seen a European financial services firm discover 14 unapproved AI tools in active use across three departments — after they thought they'd completed their AI inventory. That's what happens without meaningful literacy. Training doesn't eliminate shadow AI, but it gives people the vocabulary to recognise when they're doing something that creates risk.

Enabling genuine human oversight

Article 14 of the EU AI Act requires human oversight for high-risk AI systems. But oversight without understanding is theatre. If the person monitoring a recruitment screening tool can't explain what the model's confidence score means, or what the false positive rate implies for rejected candidates, they aren't overseeing anything. They're watching a dashboard they don't understand. Article 4 AI literacy is the precondition for Article 14 human oversight to actually work. You can't separate them.

Supporting transparency obligations

Article 50 requires organisations to disclose when people are interacting with AI — chatbots, deepfakes, emotion recognition. Your staff need to understand when that duty triggers and how to implement it. An untrained marketing team won't know that an AI-generated product image might require labelling. A customer service manager won't flag that their new chatbot needs a disclosure notice. AI literacy closes that gap before it becomes an enforcement problem.

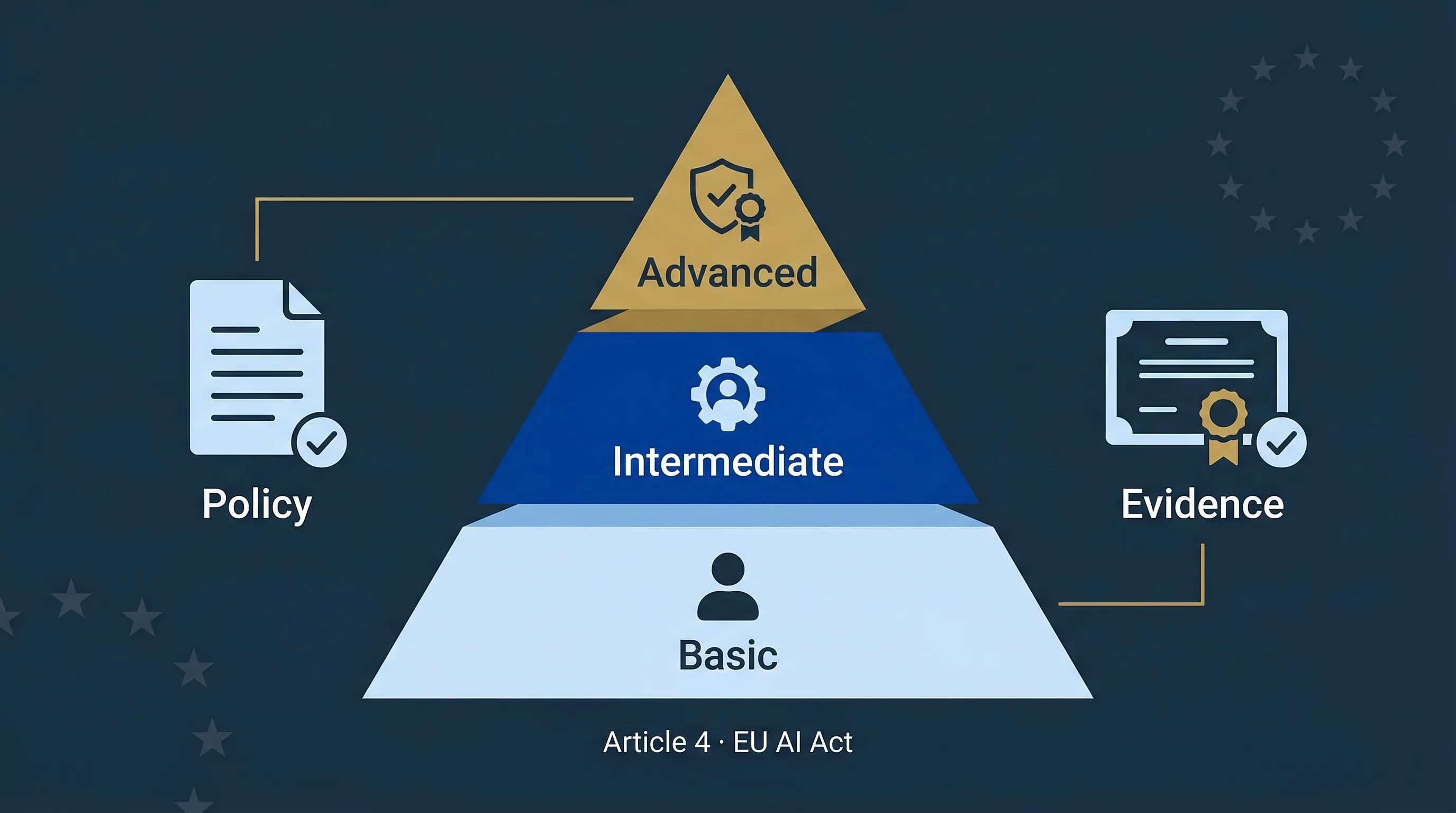

Designing AI literacy by role: basic, intermediate, advanced

A one-size-fits-all training programme won't satisfy Article 4. The Commission's AI Literacy Q&A makes clear that the level of training must be proportionate to the person's role, the AI systems they interact with, and the risk context. Here's a three-tier model that works for most mid-market organisations.

| Dimension | Basic | Intermediate | Advanced |
|---|---|---|---|
| Who | All employees who occasionally use AI-powered tools (email assistants, chatbots, search) | Staff who configure, integrate, or make decisions based on AI system outputs | AI system owners, risk/compliance officers, developers, data scientists |
| Core topics | What AI is, basic risks, acceptable use policy, data handling, when to escalate | Model outputs and confidence levels, bias awareness, oversight duties, incident reporting | EU AI Act obligations by role, risk classification, FRIA/DPIA, technical documentation, conformity assessment |
| Delivery format | 30-min e-learning module + quiz | 2-hour workshop + scenario exercises | Half-day intensive + certification assessment |
| Assessment | Pass/fail quiz (70% threshold) | Scenario-based assessment + written acknowledgement | Competency demonstration + peer review + signed attestation |
| Refresh cycle | Annually | Annually + on new AI system deployment | Twice a year + on regulatory change + on new AI system deployment |
| Article mapping | Article 4 | Articles 4, 14, 26 | Articles 4, 9, 11, 13, 14, 15, 26, 27, 50 |

Table 1: Three-tier AI literacy training model mapped to EU AI Act obligations. Organisations should assign every person who interacts with AI to one of these tiers.

How to build an AI literacy programme that meets Article 4

Don't overthink the structure. The EU AI Act doesn't prescribe a specific format, accreditation body, or curriculum. It prescribes an outcome: people who deal with AI systems must be literate enough to deploy them responsibly. Here's a 7-step method that gets you from zero to audit-ready.

Step 1: Inventory your AI touchpoints

Map every AI system in use — approved and unapproved. Include vendor-supplied SaaS with embedded AI features. Most organisations undercount by 40–60% because they don't classify tools like Grammarly, Copilot, or automated email filters as "AI." Use the Shadow AI Discovery Protocol to run a systematic scan.

Step 2: Map roles to literacy levels

For each AI system, identify who interacts with it and assign them to one of the three tiers: basic, intermediate, or advanced. A single person might be "basic" for the company chatbot but "intermediate" for a customer segmentation tool they use daily. Role-to-level mapping is the core artefact of your Article 4 compliance programme.
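The role-to-level mapping can be kept as plain data. Here is a minimal sketch, assuming a mapping keyed by person and AI system; the names, the systems, and the rule "train each person to the highest tier they hold" are illustrative assumptions, not something Article 4 prescribes.

```python
from __future__ import annotations

from enum import IntEnum


class Tier(IntEnum):
    """The three literacy tiers from Table 1, ordered so they can be compared."""
    BASIC = 1
    INTERMEDIATE = 2
    ADVANCED = 3


# Hypothetical mapping: (person, AI system) -> tier for that system.
role_map = {
    ("a.jones", "company chatbot"): Tier.BASIC,
    ("a.jones", "customer segmentation tool"): Tier.INTERMEDIATE,
    ("b.smith", "company chatbot"): Tier.BASIC,
}


def training_tier(person: str, mapping: dict[tuple[str, str], Tier]) -> Tier | None:
    """Return the highest tier a person holds across all systems, or None if unmapped."""
    tiers = [tier for (name, _system), tier in mapping.items() if name == person]
    return max(tiers) if tiers else None


print(training_tier("a.jones", role_map))
```

A `None` result is useful in its own right: it flags someone who appears in your HR list but not in the mapping.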

Step 3: Define the curriculum

Every tier needs specific learning objectives. For Basic, focus on: what AI is and isn't, your acceptable use policy, data handling rules, and escalation paths. For Intermediate, add: how to interpret model outputs, bias and fairness concepts, incident reporting, and deployer duties under Article 26. For Advanced, include: risk classification, FRIA requirements, technical documentation standards, and conformity assessment pathways.

Step 4: Choose delivery formats

E-learning works for Basic. It scales, it's trackable, and people can complete it on their own schedule. For Intermediate and Advanced, use live workshops or structured scenario exercises. The people who need to understand bias in a credit scoring model won't get there from a 20-minute video. They need worked examples and discussion.

Step 5: Plan the rollout

Phase by risk exposure. Start with Advanced-tier staff (AI system owners, compliance, and risk teams) — they need the training most urgently, and they'll become internal champions who reinforce the programme. Roll out Intermediate next for deployer-facing roles. Basic comes last because it's the widest audience and the lowest-risk group.

Step 6: Assess and certify

Article 4 doesn't require formal certification, but you need evidence that people completed training and understood the material. Quizzes for Basic, scenario assessments for Intermediate, competency demonstrations for Advanced. Store results in a central register. A national authority or customer auditor will ask for this.

Step 7: Set the refresh cycle

Annual refreshes as a minimum. Trigger additional refreshes when you deploy a new AI system, upgrade an existing one, or when new regulatory guidance drops. The Commission has signalled that AI literacy expectations will evolve as enforcement matures — your programme needs to evolve with them.
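The refresh rule is simple enough to automate. The sketch below assumes a 365-day maximum age and a list of trigger dates (new deployment, upgrade, new guidance) that you maintain yourself; the function name and parameters are hypothetical.

```python
from __future__ import annotations

from datetime import date, timedelta


def refresh_due(
    last_trained: date,
    today: date,
    trigger_dates: list[date],
    max_age_days: int = 365,  # assumed annual minimum
) -> bool:
    """True if training is older than max_age_days or a trigger occurred since it."""
    too_old = today - last_trained > timedelta(days=max_age_days)
    # Only triggers that happened after the last training session count.
    triggered = any(last_trained < t <= today for t in trigger_dates)
    return too_old or triggered


# Trained six months ago, but a new AI system went live in January.
print(refresh_due(date(2025, 9, 1), date(2026, 3, 1), [date(2026, 1, 15)]))
```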

Use the AI Literacy Planner to generate your programme and evidence

We built a free tool specifically for this. The AI Literacy Training Planner walks you through each of the 7 steps above. It generates a role-to-level mapping, a curriculum outline, and a training schedule you can take straight into your LMS or workshop planning.

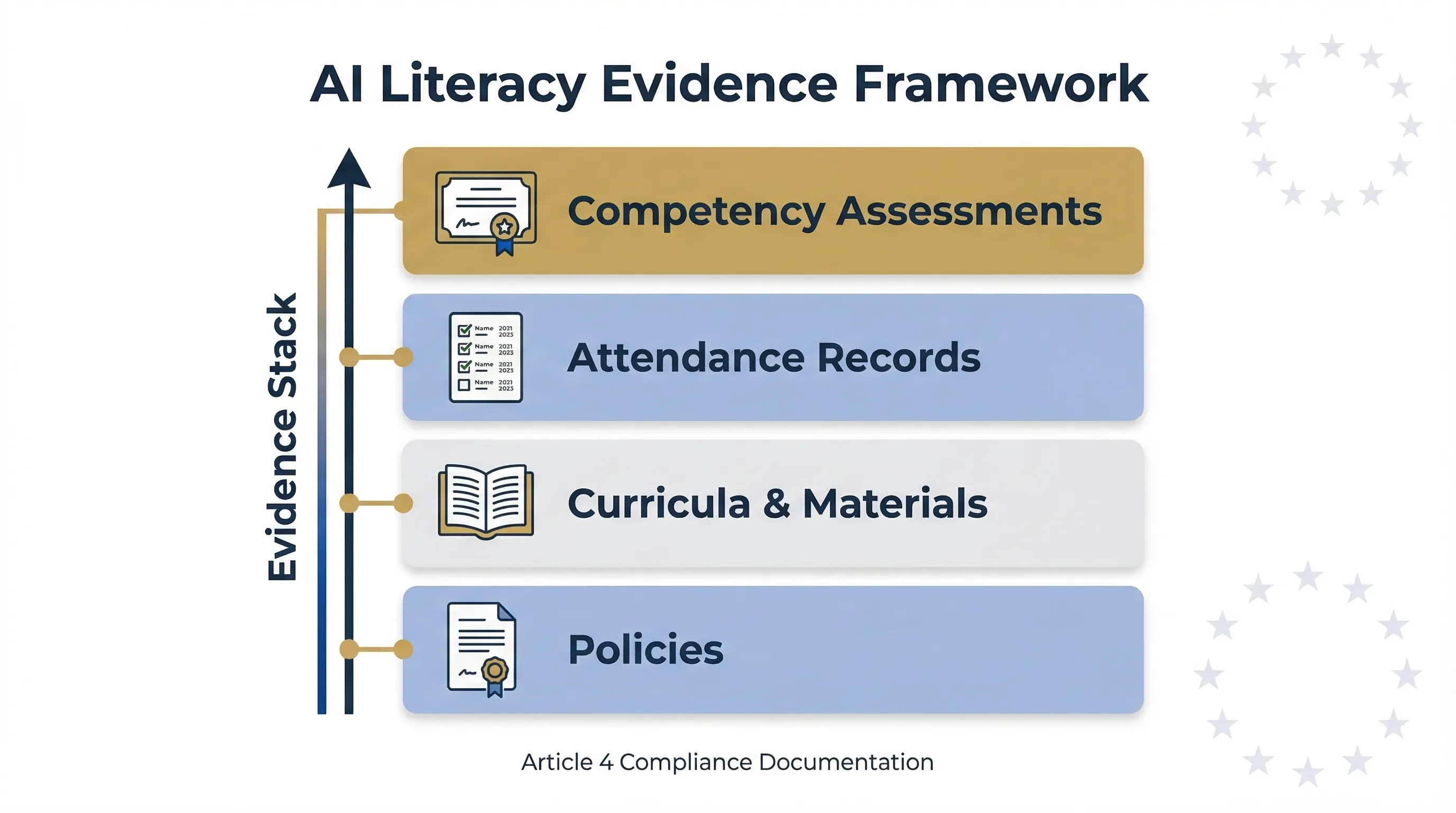

Figure: The four layers of an AI literacy evidence stack — policy, curriculum, attendance, and competency records.

How to prove AI literacy compliance to regulators and customers

Running training sessions isn't enough. You need an evidence trail. When a national competent authority asks "how have you ensured AI literacy?", your answer can't be "we sent a Slack message." Here's the documentation stack you should maintain.

Policies

Two documents minimum: an AI Acceptable Use Policy (what staff can and can't do with AI tools) and an AI Literacy Policy (how training is structured, who's responsible, what the refresh cycle is). These should be board-approved or signed by a senior executive. They form the governance layer.

Training materials and curricula

Retain copies of every training module, slide deck, scenario exercise, and job aid. Version them. If a regulator asks what you taught your staff in Q2 2026, you need the exact materials — not a summary you wrote after the fact.

Attendance and completion records

Name, date, module completed, score (if applicable). Export these from your LMS or maintain a structured register. Coverage should be traceable to every person in your role-to-level mapping.
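One way to test that traceability is to compare the register against the role-to-level mapping. This is a rough sketch with made-up names and a hypothetical record layout (name, date, module, score); adapt the field names to whatever your LMS exports.

```python
from __future__ import annotations

# Hypothetical role-to-level mapping: person -> required module.
required = {"a.jones": "intermediate", "b.smith": "basic", "c.wu": "advanced"}

# Hypothetical completion records, e.g. exported from an LMS.
completions = [
    {"name": "a.jones", "date": "2026-02-10", "module": "intermediate", "score": 82},
    {"name": "b.smith", "date": "2026-02-11", "module": "basic", "score": 75},
]


def missing_coverage(required: dict[str, str], completions: list[dict]) -> list[str]:
    """Return people whose required module has no completion record."""
    done = {(record["name"], record["module"]) for record in completions}
    return sorted(name for name, module in required.items() if (name, module) not in done)


print(missing_coverage(required, completions))
```

Anyone the function returns is a gap in your evidence trail, whether or not they actually sat the training.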

Competency assessments and surveys

Quiz results, scenario assessment outcomes, post-training confidence surveys. These demonstrate not just that training happened, but that it landed. A 95% completion rate with a 40% pass rate tells a regulator your programme isn't working.
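To make the difference between completion and pass rate concrete, here is a small sketch. The 70% pass mark is borrowed from the Basic tier in Table 1; the head count and scores are invented for illustration.

```python
from __future__ import annotations


def programme_rates(
    assigned: int, scores: list[float], pass_mark: float = 70.0
) -> tuple[float, float]:
    """Return (completion rate, pass rate among those who completed)."""
    completion = len(scores) / assigned
    passed = sum(1 for score in scores if score >= pass_mark)
    pass_rate = passed / len(scores) if scores else 0.0
    return completion, pass_rate


# 100 people assigned, 95 completed, only 38 of those reached the pass mark.
scores = [80.0] * 38 + [50.0] * 57
completion, pass_rate = programme_rates(assigned=100, scores=scores)
print(f"completion {completion:.0%}, pass rate {pass_rate:.0%}")
```

Reporting both numbers side by side is the point: a high completion rate alone says nothing about whether the material was understood.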

All of this integrates with your broader AI governance framework. Your FRIA documentation (see our FRIA & DPIA Combo Guide) should reference the literacy status of people involved in impact assessments. Your risk register should flag roles where literacy gaps create residual risk.

AI literacy as your first line of defence against shadow AI

Shadow AI — unapproved AI tools used without IT or compliance knowledge — is the fastest-growing governance gap in mid-market companies. And AI literacy is the single most cost-effective countermeasure. Why? Because people don't use unapproved tools maliciously. They use them because nobody told them the rules.

A practical AI literacy programme teaches three things that directly reduce shadow AI risk: what counts as an AI tool (most people don't realise their email spam filter qualifies), what data handling restrictions apply, and when to flag a new tool to IT or compliance before using it.

Pair your Article 4 training with the Shadow AI Discovery Protocol and the AI Vendor Risk Screener to close the loop: train people on the rules, discover what they're actually using, and screen new tools before they're adopted.

Two example AI literacy programme blueprints

What does this look like in practice? Here are two scenarios — a 30-person SME with minimal AI use, and a 200-person firm with multiple AI systems across departments.

| Dimension | SME (30 FTE, 2 AI tools) | Mid-Market (200 FTE, 8 AI systems) |
|---|---|---|
| AI inventory | Office AI assistant (Copilot) + 1 SaaS tool with AI features | 3 high-risk systems (credit scoring, recruitment screening, fraud detection) + 5 general-purpose tools |
| Tier split | 25 Basic, 4 Intermediate, 1 Advanced | 120 Basic, 55 Intermediate, 25 Advanced |
| Delivery | 1 × 30-min all-staff e-learning, 1 × 90-min management workshop | E-learning platform (Basic), quarterly workshops (Intermediate), annual intensive + external assessment (Advanced) |
| Timeline | 2 weeks to deploy | 6–8 weeks phased rollout |
| Evidence artefacts | AUP + quiz results spreadsheet + signed acknowledgement forms | AI Literacy Policy + LMS records + scenario assessment reports + competency attestations + board reporting |
| Annual cost estimate | €500–€2,000 (internal effort + e-learning platform) | €15,000–€40,000 (LMS licence + external trainers + internal time) |
| Key risk if skipped | Shadow AI incidents, €7.5M fine exposure | Human oversight failures on high-risk systems, procurement due diligence failures, €7.5M+ fine exposure |

Table 2: AI literacy programme comparison — SME vs mid-market. Cost estimates are indicative as of March 2026 and will vary by jurisdiction and delivery model.

The SME version takes 2 weeks and costs less than most organisations spend on a single team offsite. There's no excuse for not having something in place. The mid-market version requires more planning but should integrate with existing L&D infrastructure. Either way, the audit artefacts are non-negotiable — the programme only counts if you can prove it happened.

For SMEs specifically, our August 2026 Deadline Guide for SMEs covers AI literacy alongside the full timeline of obligations you're facing.

Digital Omnibus warning: Article 4 may change — but don't wait

⚠️ Proposed change — not yet law

The Digital Omnibus proposal has raised the possibility of a softer future Article 4 framing, but that proposal is not enacted law and the exact legislative outcome remains unsettled. For now, the current Article 4 duty still applies in full.

Should you wait to see if the Omnibus passes before investing in AI literacy? No. Three reasons.

First, the current law is enforceable today. The Omnibus is a proposal. It needs European Parliament and Council agreement, and that timeline isn't certain. You can't cite a proposed amendment as a defence for current non-compliance.

Second, even if Article 4 is softened, AI literacy remains a precondition for complying with Articles 14 (human oversight), 26 (deployer obligations), and 50 (transparency). Those aren't going anywhere. Training your staff on AI isn't optional just because the specific literacy mandate might relax.

Third, customers and procurement teams already ask for AI literacy evidence in due diligence questionnaires. That pressure won't vanish with a regulatory softening. If anything, the market expectation will persist even if the legal mandate loosens.

Related compliance tools

Every tool below runs 100% in your browser. No login, no data collection, no server-side processing.

Design your role-based training programme. Article 4 focused. ~3 min.

Find unapproved AI tools in your organisation. ~3 min.

12-question diagnostic across Articles 2, 3, 5, 6, 50, 51. ~5 min.

Check your deployer duties under Articles 26, 29, 50, 27. ~3 min.

Screen AI vendors for compliance readiness. Articles 25, 26, 28. ~3 min.

Full interactive training covering Articles 4, 5, 6, and 50. ~10–30 min.

Log Article 14 oversight decisions with timestamps. ~2 min per entry.

Assess whether your oversight roles are genuinely effective. Article 14. ~3 min.

FAQ: AI literacy and Article 4

Does Article 4 AI literacy apply if we only use off-the-shelf AI tools?

Yes. Article 4 applies to both providers and deployers regardless of whether the AI system is custom-built or commercial off-the-shelf. As a deployer, you must ensure staff who interact with or rely on the AI system's outputs have sufficient AI literacy to understand its capabilities, limitations, and risks. The obligation scales to the context of use, not the origin of the software.

Do we need formal certification for AI literacy under the EU AI Act?

No. Article 4 doesn't mandate any specific certification, examination, or accredited programme. The requirement is to ensure a "sufficient level of AI literacy" considering the technical knowledge, experience, education and training of the persons involved, and the context of use. You choose the delivery method. What matters is that you can demonstrate the effort and assess outcomes.

How often must AI literacy training be repeated?

The EU AI Act doesn't specify a fixed refresh cycle. Best practice is to refresh annually and whenever a material change occurs — deploying a new AI system, upgrading an existing one, or when new regulatory guidance is published. The Commission's Q&A guidance emphasises that literacy should reflect the evolving state of AI use in the organisation.

Does Article 4 AI literacy apply to contractors and third-party staff?

Yes. Article 4 covers "staff and other persons dealing with the operation and use of AI systems on their behalf." This includes employees, contractors, consultants, and outsourced teams. If a third party operates or makes decisions based on your AI system, their literacy is your responsibility under Article 4.

What are the penalties for failing to comply with Article 4?

Article 99 sets the framework. Non-compliance with Article 4 can attract fines of up to €7.5 million or 1% of global annual turnover, whichever is higher. For SMEs and start-ups, Article 99(6) reverses that rule: the cap is whichever of the two amounts is lower. As of March 2026, no fines have been issued under Article 4, but national competent authorities are being established across Member States.
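The "whichever is higher" arithmetic is easy to get backwards, so here is a rough sketch of it. It takes the €7.5 million and 1% figures quoted above at face value and assumes the Article 99(6) rule that SMEs face the lower of the two amounts; the function name and the turnover figures are hypothetical, and the result is only an upper bound, not a prediction of any actual fine.

```python
def fine_cap_eur(
    global_turnover_eur: float,
    is_sme: bool = False,
    fixed_cap_eur: float = 7_500_000,  # figure quoted in this guide
    turnover_percent: float = 1.0,     # figure quoted in this guide
) -> float:
    """Upper bound of the fine: higher of the two amounts, or lower for SMEs."""
    turnover_based = global_turnover_eur * turnover_percent / 100
    pick = min if is_sme else max
    return pick(fixed_cap_eur, turnover_based)


# A group with EUR 2 billion turnover: 1% of turnover exceeds the fixed amount.
print(fine_cap_eur(2_000_000_000))
# An SME with EUR 50 million turnover: the lower amount applies.
print(fine_cap_eur(50_000_000, is_sme=True))
```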

Could the Digital Omnibus proposal change Article 4 requirements?

Possibly, but not yet. Omnibus materials have discussed softening how Article 4 is framed, yet the legislative outcome is still unsettled and the proposal is not enacted law. Until any amendment is formally adopted, the current obligation remains fully enforceable. See our Digital Omnibus deep dive for status tracking.

Is there a standard curriculum for AI literacy under the EU AI Act?

No official curriculum exists. Article 3(56) defines AI literacy broadly as skills enabling informed deployment and risk awareness. The Commission's AI Literacy Q&A suggests topics including AI basics, risks, bias, transparency, and governance. Organisations design their own curriculum to fit their risk profile and AI deployment context.

How does Article 4 AI literacy relate to Article 14 human oversight?

They're directly linked. Article 14 requires that people overseeing high-risk AI systems have the competence, training, and authority to do so effectively. Article 4 literacy provides the foundation for that competence. Without adequate AI literacy, human oversight becomes a checkbox where the person monitoring the system doesn't understand what they're looking at — a condition known as automation complacency. Use our Automation Complacency Assessor to test for this.

Further reading on EU AI Act compliance

- EU AI Act Compliance Guide: Full overview of all obligations, timelines, and penalties.

- FRIA & DPIA Combo Guide: How to run fundamental rights and data protection impact assessments together.

- Annex III High-Risk AI Systems Checklist: Determine if your AI system falls under high-risk classification.

- ISO 42001 / NIST AI RMF Crosswalk: Map your existing framework controls to EU AI Act requirements.

- Article 5 Prohibited Practices Explained: What AI uses are banned outright under the EU AI Act.

Abhishek G Sharma

Founder & CEO, Move78 International Limited. 20+ years in cybersecurity and AI risk management. Certifications: ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, CAIRO.

Disclaimer & educational purpose

This guide is published by Move78 International Limited for educational purposes only. It does not constitute legal advice. The EU AI Act (Regulation 2024/1689) is a complex legislative instrument, and its interpretation may vary by jurisdiction and national implementation. Organisations should consult qualified legal counsel for compliance decisions specific to their circumstances. All regulatory information is current as of March 2026. The Digital Omnibus proposal is described as a proposal, not enacted law. No enforcement fines have been issued under Article 4 as of this publication date.

Sources and legal basis

- Regulation (EU) 2024/1689 — EU Artificial Intelligence Act (Eur-Lex full text)

- EU Commission — AI Literacy Questions and Answers (Article 4 guidance)

- EU Commission — Regulatory Framework for AI (risk levels and timeline)

- AI Act Service Desk — Article 99: Penalties (€35M/7%, €15M/3%, €7.5M/1%)

- AI Act Service Desk — Article 3: Definitions (including AI literacy, Article 3(56))