What is a DPIA under GDPR — and what is a FRIA under the EU AI Act?

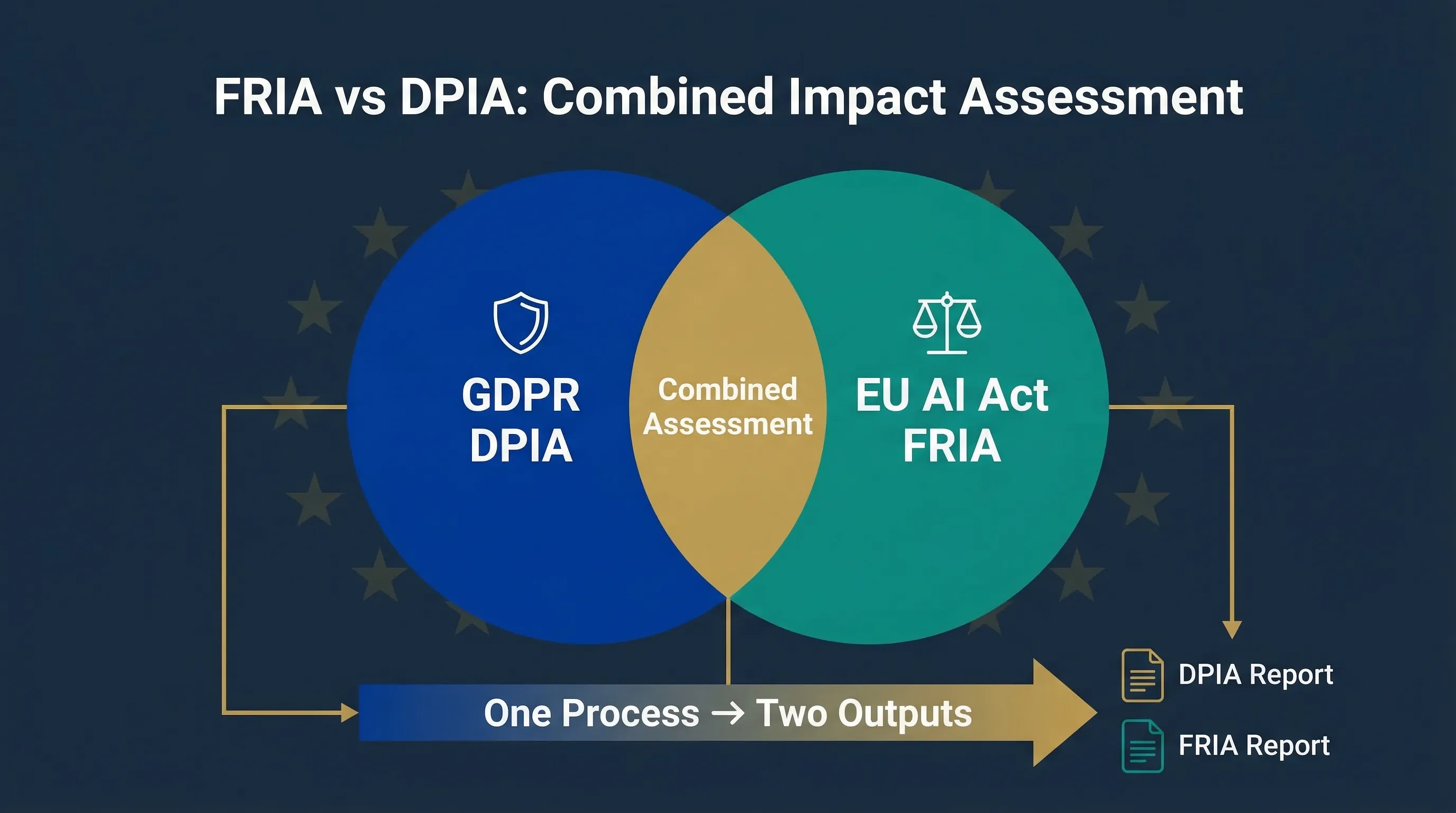

If you're deploying a high-risk AI system in the EU that processes personal data, you're staring at two separate impact assessment obligations from two separate laws. And nobody told you they overlap. That's the core problem this guide solves.

DPIA: Data Protection Impact Assessment (GDPR Article 35)

A DPIA is required under GDPR Article 35 whenever data processing is "likely to result in a high risk to the rights and freedoms of natural persons." For AI, this triggers in familiar scenarios: systematic profiling, large-scale processing of special category data, public area monitoring, and automated decision-making with legal or significant effects. The focus is narrow but deep: privacy rights, data minimisation, purpose limitation, and safeguards for data subjects. If you've been through a GDPR compliance cycle, you've probably run at least one DPIA already.

FRIA: Fundamental Rights Impact Assessment (EU AI Act Article 27)

A FRIA is a newer obligation under Article 27 of the EU AI Act. It applies to certain deployers of high-risk AI systems: bodies governed by public law and private entities providing public services, for any Annex III system except critical infrastructure (point 2), plus any deployer of a system used for creditworthiness assessment or credit scoring (point 5(b)) or for risk assessment and pricing in life and health insurance (point 5(c)). The scope is broader than a DPIA: it covers fundamental rights beyond privacy, including non-discrimination, access to education, employment, housing, social protection, and due process. The FRIA must be completed before the high-risk AI system is put into service, and the results must be notified to the relevant market surveillance authority under Article 27(3). This obligation applies from August 2, 2026.

| Dimension | DPIA | FRIA |
|---|---|---|
| Legal basis | GDPR Article 35 | EU AI Act Article 27 |
| Focus | Personal data rights and freedoms | Broader fundamental rights (non-discrimination, access, due process) |
| Trigger | High-risk data processing | Deploying high-risk AI in Annex III domains |

For the full context on EU AI Act obligations, see our Complete EU AI Act Compliance Guide.

DPIA vs FRIA: key differences, overlaps, and how they fit together

I've run combined assessments for three different mid-market deployers in the past six months, and the biggest misconception I keep hearing is "we already did a DPIA, so we're covered." You're not. Here's why.

| Dimension | DPIA (GDPR) | FRIA (EU AI Act) |
|---|---|---|
| Legal basis | GDPR Article 35 | EU AI Act Article 27 |
| Scope of rights | Privacy, data protection, rights of data subjects | Non-discrimination, access to services, education, employment, housing, due process, dignity |
| Who performs it | Data controller (any sector) | Deployer of high-risk AI (public bodies, private entities providing public services, and any deployer of Annex III point 5(b) or 5(c) systems) |
| Trigger | High-risk processing (profiling, special categories, monitoring) | Deploying an Annex III high-risk AI system as an in-scope deployer (Article 27(1)) |
| Timing | Before processing begins | Before AI system is put into service |
| Notification | Consult DPA if high residual risk (Article 36) | Notify market surveillance authority of results (Article 27(3)) |
| Documentation | Internal records, available to DPA on request | FRIA report, notified to authority |
| Enforceable from | Since May 2018 | August 2, 2026 |

Where they overlap (and why running two separate processes wastes time)

Both assessments require you to describe the system, map the context, identify affected persons, assess risks, and define mitigation measures. Running them separately means double interviews, double documentation cycles, and two governance committees reviewing essentially the same system. Article 27(4) of the AI Act addresses this directly: where a DPIA already meets any of the Article 27 obligations, the FRIA complements that DPIA. The Commission, Parliament, and EDPB have likewise indicated that FRIAs are meant to complement, not replace, existing DPIA practice. The efficient approach is a combined workflow that shares the heavy lifting and routes the output into two separate, legally compliant documents.

Do you need a DPIA, a FRIA, or both?

Step 1: Check DPIA triggers (GDPR Article 35)

You need a DPIA if your AI system involves systematic profiling with legal effects, large-scale processing of special category data (health, biometric, criminal), public area monitoring, or automated decision-making that significantly affects individuals. In AI terms: recruitment scoring, credit decisioning, health diagnostics, behavioural profiling, proctoring, and fraud detection almost always trigger a DPIA.

Step 2: Check FRIA triggers (EU AI Act Article 27 and Annex III)

You need a FRIA if you're deploying an Annex III high-risk AI system AND one of the following is true: you're a body governed by public law or a private entity providing public services (this covers every Annex III area except critical infrastructure, point 2), or the system is used for creditworthiness assessment or credit scoring (point 5(b)) or for risk assessment and pricing in life and health insurance (point 5(c)), in which case the obligation applies to any deployer. Not every deployer of high-risk AI needs a FRIA, but if you fall into one of these groups, you do.
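The eligibility logic in Steps 1 and 2 can be sketched as a small screening function. This is a minimal illustration, not a legal test: the class and field names are hypothetical, the Annex III point labels follow Article 27(1) as published in Regulation 2024/1689 and should be checked against the official text, and the DPIA flag simply records the outcome of your own Article 35 screening.

```python
from dataclasses import dataclass
from typing import List, Optional

# Annex III points where Article 27(1) applies to any deployer.
ANY_DEPLOYER_POINTS = {"5(b)", "5(c)"}  # creditworthiness; life/health insurance pricing
# Annex III point carved out of the FRIA obligation (critical infrastructure).
EXCLUDED_POINTS = {"2"}


@dataclass
class Deployment:
    annex_iii_point: Optional[str]       # e.g. "3", "5(b)"; None if not Annex III high-risk
    public_body_or_public_service: bool  # public-law body, or private entity providing public services
    dpia_screening_high_risk: bool       # result of your own GDPR Article 35 screening


def needs_fria(d: Deployment) -> bool:
    """Apply the Article 27(1) deployer test (simplified)."""
    if d.annex_iii_point is None or d.annex_iii_point in EXCLUDED_POINTS:
        return False
    if d.annex_iii_point in ANY_DEPLOYER_POINTS:
        return True
    return d.public_body_or_public_service


def required_assessments(d: Deployment) -> List[str]:
    """Return which assessments the screening suggests."""
    result = []
    if d.dpia_screening_high_risk:
        result.append("DPIA")
    if needs_fria(d):
        result.append("FRIA")
    return result


lender = Deployment("5(b)", public_body_or_public_service=False, dpia_screening_high_risk=True)
print(required_assessments(lender))  # ['DPIA', 'FRIA']
```

Treat the output as a prompt for legal review rather than a conclusion; borderline cases (for example, whether a private entity "provides public services") need a human decision.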

Step 3: Common scenarios where both apply

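In practice, most AI systems that trigger a FRIA also trigger a DPIA because they process personal data. Three examples, each worked through later in this guide: candidate scoring in a recruitment ATS, credit scoring in financial services, and remote exam proctoring in education.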

Not sure which applies to you? Our FRIA Generator and Deployer Obligation Self-Assessment walk you through the eligibility logic step by step.

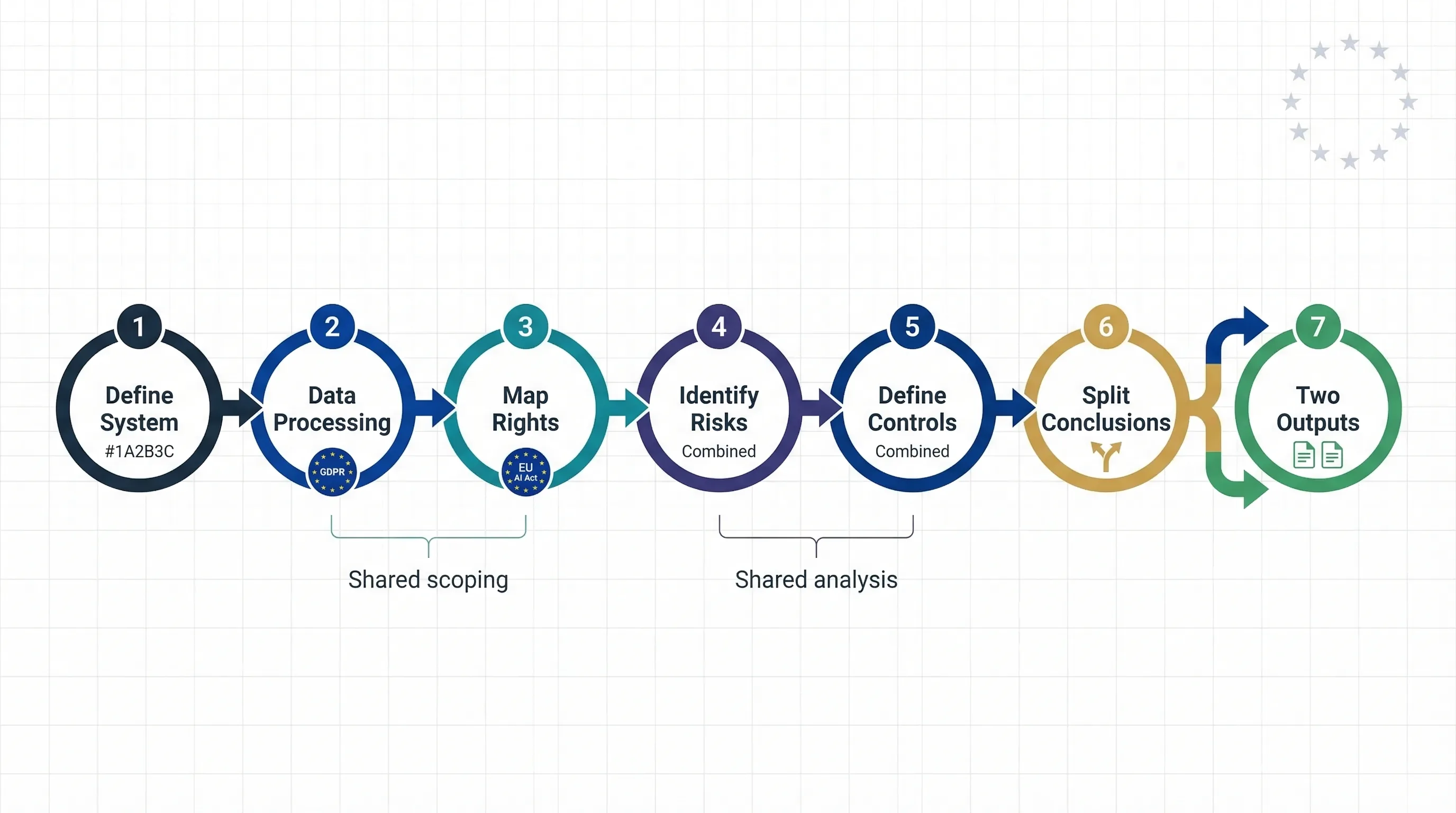

How to run a single combined FRIA + DPIA workflow

You can usually run FRIA and DPIA in one coordinated workflow, but don't collapse them into a single legal artifact. Share scoping, interviews, system description, and control mapping, then route that shared material into two separate outputs. Here's the seven-step process.

Step 1 — Define system and context (shared)

Describe the AI system: what it does, how it works at a high level, who built it, and how it's deployed. Map it to the relevant Annex III category. Identify the GDPR processing context — controller, processor, joint controllers. This step is identical for both assessments, so do it once.

Step 2 — Describe data processing (DPIA focus)

Document data categories processed, lawful basis, necessity and proportionality analysis, data recipients, international transfers, and retention periods. This feeds the DPIA output specifically.

Step 3 — Map affected persons and fundamental rights (FRIA focus)

Identify the groups affected: job applicants, borrowers, students, patients, citizens. Map the relevant fundamental rights beyond data protection: non-discrimination, access to work, education, housing, social protection, due process. This feeds the FRIA output specifically.

Step 4 — Identify risks and harms (combined)

Build a unified risk table covering both data protection risks and fundamental rights risks. Columns: risk ID, description, affected right(s), likelihood, severity, risk owner. This is where running a combined process saves the most time — you assess the same system once against both rights frameworks instead of doing two separate risk workshops.
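One way to hold the unified risk table is a single register where each row is tagged with the output document(s) it feeds. The sketch below uses the columns listed above; the `frameworks` tag, the 1 to 5 scales, and the likelihood × severity score are assumptions added for illustration, and the two rows are example data rather than findings from a real assessment.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Risk:
    risk_id: str
    description: str
    affected_rights: List[str]
    likelihood: int       # 1 (rare) to 5 (almost certain), assumed scale
    severity: int         # 1 (minor) to 5 (severe), assumed scale
    owner: str
    frameworks: Set[str]  # {"DPIA"}, {"FRIA"}, or both

    @property
    def score(self) -> int:
        return self.likelihood * self.severity


def risks_for(register: List[Risk], framework: str) -> List[Risk]:
    """Rows relevant to one output document, highest score first."""
    rows = [r for r in register if framework in r.frameworks]
    return sorted(rows, key=lambda r: r.score, reverse=True)


register = [
    Risk("R-01", "Training data under-represents some demographic groups",
         ["non-discrimination", "data protection"], 4, 4, "Model owner", {"DPIA", "FRIA"}),
    Risk("R-02", "Retention period longer than the stated purpose requires",
         ["data protection"], 3, 2, "DPO", {"DPIA"}),
]

print([r.risk_id for r in risks_for(register, "DPIA")])  # ['R-01', 'R-02']
print([r.risk_id for r in risks_for(register, "FRIA")])  # ['R-01']
```

Keeping one register and filtering per document means a risk such as R-01 is assessed once but appears in both reports.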

Step 5 — Define mitigation measures and oversight (combined)

Document the technical and organisational safeguards: human oversight arrangements (Article 14 AI Act), logging and monitoring, access controls, bias testing, appeals mechanisms, and any limitations on automated decision-making. These measures address risks identified in Step 4 and feed both output documents.

Step 6 — Document separate conclusions

This is where the process splits into two outputs. For the DPIA: state the residual risk level and whether to proceed, modify, or consult the DPA under Article 36. For the FRIA: summarise the residual rights impact and whether the conditions under Article 27 are met. Different legal conclusions, same evidence base.
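The split can be illustrated with two small functions that read the same residual scores but return different conclusions. This is a sketch under stated assumptions: the thresholds (`ACCEPTABLE`, `HIGH`) and the score scale are hypothetical, and neither GDPR nor the AI Act prescribes numeric cut-offs, so substitute your own risk appetite.

```python
from enum import Enum
from typing import Dict, List

ACCEPTABLE = 6  # assumed: residual scores at or below this need no further action
HIGH = 15       # assumed: residual scores at or above this count as high residual risk


class DpiaOutcome(Enum):
    PROCEED = "proceed"
    MODIFY = "modify and reassess"
    CONSULT_DPA = "consult the DPA (Article 36)"


def dpia_outcome(residual_scores: List[int]) -> DpiaOutcome:
    """DPIA conclusion driven by the worst residual data protection risk."""
    worst = max(residual_scores, default=0)
    if worst >= HIGH:
        return DpiaOutcome.CONSULT_DPA
    if worst > ACCEPTABLE:
        return DpiaOutcome.MODIFY
    return DpiaOutcome.PROCEED


def fria_summary(residual_by_right: Dict[str, int]) -> dict:
    """FRIA conclusion: which rights still carry a residual impact above the threshold."""
    open_rights = sorted(r for r, score in residual_by_right.items() if score > ACCEPTABLE)
    return {"rights_with_open_impact": open_rights,
            "residual_impact_acceptable": not open_rights}


print(dpia_outcome([4, 8]).value)  # modify and reassess
print(fria_summary({"non-discrimination": 9, "due process": 4}))
# {'rights_with_open_impact': ['non-discrimination'], 'residual_impact_acceptable': False}
```

The point of the design is that both functions consume the evidence gathered in Steps 4 and 5, while each conclusion stays traceable to its own legal basis.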

Step 7 — Generate two outputs from one process

The combined workflow produces a DPIA report (for GDPR documentation and DPA consultation if needed) and a FRIA report (aligned with Article 27 and future AI Office templates, notified to the market surveillance authority). Two documents. One integrated process. That's the whole point.

The combined FRIA + DPIA workflow: seven steps, one integrated process, two legally compliant outputs.

Use the Combined FRIA + DPIA Wizard (free tool)

The workflow above is exactly what our Combined FRIA + DPIA Wizard implements. It's a guided questionnaire that walks you through all seven steps, collects your answers, and generates separate DPIA and FRIA draft reports. No login, no data collection — everything runs in your browser.

🧭 Combined FRIA + DPIA Wizard

Answer 20-30 questions about your AI system and deployment context. Get a GDPR DPIA draft and an EU AI Act FRIA draft. Export as DOCX/PDF for internal review and legal counsel.

Download FRIA, DPIA, and combined templates

If you prefer to work offline or need to adapt the assessment to your existing governance framework, use these templates directly. The free versions cover the core structure and give you a practical starting point for FRIA and DPIA work.

📄 Templates

• DPIA for AI systems template (GDPR Article 35 aligned) — DOCX

• FRIA template structured on Article 27(1) points (a)-(f) — DOCX

• Combined FRIA + DPIA checklist summarising required fields — PDF

Free versions: core structure. For broader FRIA and DPIA implementation support, visit Move78 International.
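If you adapt the FRIA template to your own tooling, a simple completeness check against the Article 27(1) points (a)-(f) helps catch empty sections before review. The labels below paraphrase the Article 27(1) elements and the function name is hypothetical; this is an illustrative sketch, not the logic of the downloadable templates.

```python
from typing import Dict, List

# Paraphrased Article 27(1) elements; verify wording against the official text.
FRIA_FIELDS = {
    "a": "Deployer processes in which the system will be used",
    "b": "Period and frequency of intended use",
    "c": "Categories of persons and groups likely to be affected",
    "d": "Specific risks of harm to those categories",
    "e": "Implementation of human oversight measures",
    "f": "Measures if risks materialise, including governance and complaint mechanisms",
}


def missing_fria_fields(draft: Dict[str, str]) -> List[str]:
    """Return the Article 27(1) points that are absent or blank in a draft."""
    return [point for point in FRIA_FIELDS if not draft.get(point, "").strip()]


draft = {"a": "Loan pre-screening for retail customers", "b": "  ", "c": "Loan applicants"}
print(missing_fria_fields(draft))  # ['b', 'd', 'e', 'f']
```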

Worked examples: how a combined FRIA + DPIA looks in practice

Recruitment AI (ATS with candidate scoring)

A 120-person fintech uses an ATS that scores and ranks applicants based on CV parsing and predictive analytics. DPIA is triggered because the system performs automated profiling with significant effects on employment. On the AI Act side, recruitment falls under Annex III point 4 (employment), which makes the system high-risk; Article 27(1) makes the FRIA mandatory only where the deployer is a public body or a private entity providing public services, so confirm your status before treating it as a legal requirement. The combined assessment revealed that the vendor couldn't explain the scoring model's weighting, which became both a GDPR transparency issue and an AI Act human oversight issue, resolved in one process. See our HR/Recruitment Validator for classification.

Credit scoring model in financial services

A mid-market lender deploys a credit scoring model to assess loan eligibility for EU customers. DPIA triggered: systematic profiling affecting creditworthiness. FRIA triggered: Annex III point 5(b) (creditworthiness assessment), which Article 27(1) covers for any deployer. The combined process identified that the model's training data underrepresented certain demographics, a bias risk that's both a data quality issue under GDPR and a non-discrimination risk under the FRIA. One stakeholder workshop, two documented findings. See our Credit Scoring Delimiter for risk classification.

Remote exam proctoring in education

A university deploys AI-powered proctoring that monitors students via webcam, flagging suspicious behaviour during remote exams. DPIA triggered: large-scale monitoring with biometric processing. FRIA triggered: Annex III Area 3 (education and vocational training). The combined assessment surfaced that students with disabilities were disproportionately flagged by the movement-detection algorithm — a finding that required both a data protection safeguard (alternative assessment options) and a fundamental rights mitigation (non-discrimination review). See our EdTech Assessment Validator.

Where FRIA + DPIA sit in your AI governance framework

This combined assessment isn't a side quest. It's the central evidence mechanism of your AI governance system.

If you're using ISO 42001, the combined FRIA + DPIA slots into the Plan-Do-Check-Act cycle as your primary risk assessment artifact. It's how you operationalise Clause 6.1 (actions to address risks and opportunities) for AI-specific deployments.

If you're mapping to NIST AI RMF, the combined assessment lives in the MAP function (context and risk framing) and the MEASURE function (assessing identified risks against defined metrics). The unified risk table from Step 4 above is directly reusable as input to NIST's risk profiling process.

For the detailed framework mappings, see our EU AI Act vs ISO 42001 vs NIST AI RMF crosswalk. If your problem is that you don't know which AI systems you're even deploying, start with the Shadow AI Discovery Protocol before attempting any assessment.


FRIA vs DPIA: frequently asked questions

Can I combine a FRIA and a DPIA into one assessment?

You can run a single combined process that shares scoping, system description, stakeholder interviews, and risk identification. But you should produce two separate output documents: a DPIA report for GDPR compliance and a FRIA report for EU AI Act Article 27 compliance. This preserves legal accountability while eliminating duplicate work.

Do I still need a FRIA if I already have a DPIA?

Yes, if your deployment triggers Article 27 of the EU AI Act. A DPIA covers personal data processing risks under GDPR. A FRIA covers broader fundamental rights impacts including non-discrimination, access to services, and due process. They overlap but are not substitutes for each other.

Who is responsible for the FRIA?

The deployer of the high-risk AI system is responsible under Article 27. In practice, this typically falls to the DPO, compliance lead, or CISO, depending on organisational structure. The FRIA must be completed before the high-risk AI system is put into service.

Does Article 27 apply to private companies?

Article 27 applies to deployers that are public bodies or private entities providing public services (for Annex III systems other than critical infrastructure), and to any deployer of the systems listed in Annex III points 5(b) and 5(c): creditworthiness assessment and risk assessment and pricing in life and health insurance. A private employer using AI for recruitment is therefore covered only if it provides public services.

How often does a FRIA need to be updated?

Article 27(2) requires the deployer to update the FRIA when any of the assessed elements has changed or is no longer up to date. Best practice is to review the FRIA at least annually and after any material change in system functionality, target population, or regulatory guidance.

Do FRIA results have to be shared with a regulator or made public?

Article 27(3) requires deployers to notify the relevant market surveillance authority of the results of the FRIA. The full FRIA document does not need to be made public, but the notification obligation means you should assume a regulator will review it.

What is the difference between a DPIA and a FRIA?

A DPIA under GDPR Article 35 focuses on risks to personal data rights and freedoms arising from data processing. A FRIA under EU AI Act Article 27 focuses on broader fundamental rights risks arising from the deployment of a high-risk AI system, including non-discrimination, access to services, education, employment, and due process. Both may be required for the same AI system.

When is a FRIA required?

A FRIA is required under Article 27 when a deployer of an Annex III high-risk AI system is a public body or a private entity providing public services, or when any deployer uses a system listed in Annex III point 5(b) or 5(c) (creditworthiness assessment, life and health insurance pricing). The obligation applies from August 2, 2026.

About the author

Abhishek G Sharma is the founder of EU AI Compass and Move78 International Limited. He holds ISO/IEC 42001 LA, ISO/IEC 27001 LA, CISA, CISM, CRISC, CEH, and CCSK certifications, with 20+ years of experience in cybersecurity, cloud security, and AI governance across Asia, Europe, and the Middle East.

Need Broader AI Governance Support?

EU AI Compass focuses on free EU AI Act tools and guides. For broader cross-framework support covering governance frameworks and implementation approaches, visit Move78 International.

Important disclaimer: this guide is not legal advice

This guide provides educational and operational guidance only. It is not legal advice. The EU AI Act and GDPR are evolving — AI Office guidance, national authority interpretations, and case law will continue to refine how FRIAs and DPIAs are implemented in practice. National authorities may differ in how they interpret FRIA triggers and format requirements. Consult qualified legal counsel for borderline or high-sensitivity deployments. Content is current as of March 2026. Published by Move78 International Limited, Hong Kong SAR.

Sources & legal basis

EU AI Act Article 27 (FRIA): Eur-Lex Regulation 2024/1689

GDPR Article 35 (DPIA): Eur-Lex Regulation 2016/679

AI Act Service Desk: ai-act-service-desk.ec.europa.eu

EDPB-EDPS Joint Opinion 1/2026: Advisory opinion on the Digital Omnibus proposal

European Commission AI Act regulatory framework: EC Regulatory Framework for AI