What makes an AI system high-risk?

Under the EU AI Act, high-risk AI systems are those that can significantly affect people's health, safety, or fundamental rights. The regulation doesn't ban these systems — it requires anyone building or using them to meet strict accountability standards. If you're deploying AI for credit decisions, hiring, insurance pricing, or biometric identification, you're almost certainly in scope.

High-risk designation comes through two routes:

Annex I route: AI systems that serve as safety components of products already regulated under EU product safety law — medical devices, machinery, toys, vehicles, aviation equipment. These obligations become enforceable on August 2, 2027.

Annex III route: AI systems used in specific high-impact domains listed in Annex III of the regulation. This is the route that applies to most deployers, and it becomes enforceable on August 2, 2026. There are 8 high-risk areas covering everything from recruitment to critical infrastructure.

Article 6 defines the classification logic. Article 6(3) offers a narrow exemption — if the AI system doesn't pose a significant risk of harm to health, safety, or fundamental rights, it may be exempt. But the burden of proof sits squarely on the deployer or provider. You can't just assert the exemption; you need to document it using the Article 6(3) exemption generator.

The Material Influence Evaluator can help you assess whether your AI system's output materially affects decisions about natural persons — a key factor in classification.

One important caveat: the Digital Omnibus proposal may extend Annex III deadlines to December 2, 2027. But that isn't law yet. Plan for August 2, 2026. For the full picture across all risk levels, see our complete EU AI Act compliance guide.
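To make the two routes and the Article 6(3) carve-out concrete, here is a minimal Python sketch of the classification logic described above. The names `AISystem` and `classify` and all field names are hypothetical, invented for this illustration. The rules are deliberately simplified and are no substitute for a documented assessment.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from typing import Optional

# Dates as stated in this guide (current law, not the Omnibus proposal).
ANNEX_III_DEADLINE = date(2026, 8, 2)
ANNEX_I_DEADLINE = date(2027, 8, 2)


@dataclass
class AISystem:
    """Hypothetical record for one AI system; field names are illustrative."""
    name: str
    safety_component_of_regulated_product: bool = False  # Annex I route
    annex_iii_area: Optional[int] = None                 # 1..8, or None
    exemption_documented: bool = False                   # Article 6(3) file exists


def classify(system: AISystem) -> tuple[str, Optional[date]]:
    """Return (classification, enforcement date) under simplified rules."""
    if system.safety_component_of_regulated_product:
        return "high-risk (Annex I)", ANNEX_I_DEADLINE
    if system.annex_iii_area is not None:
        if not 1 <= system.annex_iii_area <= 8:
            raise ValueError("Annex III has 8 areas")
        # The exemption only counts if it is documented, not merely asserted.
        if system.exemption_documented:
            return "not high-risk (Article 6(3) exemption documented)", None
        return "high-risk (Annex III)", ANNEX_III_DEADLINE
    return "not high-risk", None
```

For example, `classify(AISystem("cv-screener", annex_iii_area=4))` returns the Annex III classification together with the August 2, 2026 date.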

Annex III: the 8 high-risk use case areas explained

Under the EU AI Act, a deployer is any natural or legal person that uses an AI system under its authority in a professional capacity, except for personal non-professional activity (Article 3(4)). High-risk AI systems under Annex III include AI used in recruitment and employment, creditworthiness assessment, insurance risk scoring, education, biometric identification, critical infrastructure, law enforcement, migration, and administration of justice.

Here's what each area covers and which of your AI systems might fall into it.

Area 1: Biometric identification and categorisation

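Remote biometric identification systems, biometric categorisation based on sensitive or protected attributes, and emotion recognition systems, in each case only where the use is not already prohibited under Article 5. Two uses sit on the prohibited side of that line rather than the high-risk side: emotion recognition in workplace and educational settings (outside medical or safety purposes), and biometric categorisation that infers race, political opinions, religion, or sexual orientation. Think facial recognition for access control, age estimation systems, or any tool that identifies individuals from biological data. Use the Biometric Identity Validator to check your systems.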

Area 2: Critical infrastructure management

AI systems used as safety components in managing road traffic, water, gas, heating, electricity supply, and digital infrastructure. If you're running AI-optimised grid load balancing or autonomous traffic management, this is your area. Use the IIoT Safety Component Validator for industrial applications.

Area 3: Education and vocational training

AI systems determining access to educational institutions, evaluating learning outcomes, monitoring behaviour during tests, or detecting prohibited conduct. AI-powered proctoring, automated essay grading, and admissions screening all fall here. The EdTech Assessment Validator covers this space.

Area 4: Employment, workers management, and self-employment access

AI systems for recruitment and selection — screening, filtering, assessing candidates — plus decisions on promotion, termination, task allocation, and performance monitoring. Resume screening tools, AI-powered video interview scoring, performance analytics that influence promotion decisions: all high-risk. The Promotion & Termination Validator is built for this.

Area 5: Essential private and public services

5a. Creditworthiness assessment: AI that determines access to financial services through credit scoring or automated lending decisions.

5b. Insurance risk and pricing: AI for life and health insurance underwriting, risk scoring, and claims assessment that affects coverage.

5c. Emergency services: AI evaluating and classifying emergency calls for prioritisation and dispatch.

Use the Fraud vs Credit Scoring Delimiter for financial services and the Insurance Underwriting Assessor for insurance.

Area 6: Law enforcement

Polygraphs, deception detection, evidence reliability assessment, crime prediction, and profiling during investigations. Most SMEs won't deploy these — included for completeness.

Area 7: Migration, asylum, and border control

Risk assessment tools, polygraphs, document authenticity verification, and residence permit evaluation. Again, primarily a government and large-agency concern.

Area 8: Administration of justice and democratic processes

AI assisting judicial fact-finding, law application, and dispute resolution, plus AI systems that could influence elections. Narrow applicability for most commercial deployers.

⚖ Which Annex III area applies to you?

Not sure where you fit? Start with the Compliance Checker →

For the full checklist of Annex III systems with examples, see our Annex III high-risk AI systems checklist.

Provider vs deployer: which obligations are yours?

This is where most organisations get confused — and where the most dangerous assumptions live. The EU AI Act draws a hard line between providers (who build or market AI systems) and deployers (who use them). Each role carries distinct obligations, and they don't overlap the way people expect.

The provider bears the heaviest burden: risk management system, data governance, technical documentation, logging design, transparency to users, human oversight design, accuracy and robustness testing, conformity assessment, CE marking, EU database registration, quality management, and post-market monitoring.

The deployer owns operational accountability under Article 26: use the system according to provider instructions, implement human oversight, monitor operations, retain logs for at least 6 months, conduct a FRIA where required (Article 27), inform employees about workplace AI, ensure input data quality, and maintain AI literacy for all staff involved in oversight (Article 4 — enforceable since February 2, 2025).

⚠ The "accidental provider" trap (Article 25)

If you modify a high-risk AI system substantially, put your name or trademark on it, or change its intended purpose — you become a provider and inherit all provider obligations. This catches: fine-tuning vendor models, rebranding white-label AI, and deploying a system for a use case the provider didn't intend. Check if you've accidentally become a provider →
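The three triggers named above can be expressed as a simple check. This is an illustrative sketch only: `DeploymentChanges`, `provider_triggers`, and `is_accidental_provider` are hypothetical names, and deciding whether a modification is "substantial" is a judgement the code cannot make for you.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class DeploymentChanges:
    """Hypothetical flags describing what a deployer did to a vendor system."""
    substantially_modified: bool = False    # e.g. fine-tuning a vendor model
    rebranded_under_own_name: bool = False  # e.g. white-label AI
    changed_intended_purpose: bool = False  # use case the provider didn't intend


def provider_triggers(changes: DeploymentChanges) -> list[str]:
    """List which of the three Article 25 triggers named above apply."""
    triggers = []
    if changes.substantially_modified:
        triggers.append("substantial modification")
    if changes.rebranded_under_own_name:
        triggers.append("own name or trademark")
    if changes.changed_intended_purpose:
        triggers.append("changed intended purpose")
    return triggers


def is_accidental_provider(changes: DeploymentChanges) -> bool:
    """Any single trigger is enough to inherit provider obligations."""
    return bool(provider_triggers(changes))
```

Returning the list of triggers, not just a yes or no, gives you something to record in your classification decision log.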

Your vendor being "EU AI Act compliant" does not make you compliant.

A SOC 2-compliant SaaS vendor doesn't make you SOC 2 compliant. Same principle here. The vendor provides components and documentation. You still own your operational controls, oversight, monitoring, incident handling, and evidence.

Use the Deployer Obligation Self-Assessment to map your gaps, and the Article 26 Operations Scorer to benchmark your readiness.

Article 26 obligations: what deployers must do, point by point

Article 26 is the operational core of what deployers owe. Here's each obligation with practical guidance on what "good" actually looks like. Are you confident you can demonstrate compliance for each one?

1. Use in accordance with instructions (Art. 26(1))

Obtain and retain the provider's instructions for use. Don't deploy the system for purposes outside the provider's stated intended purpose. If you change the intended purpose, you risk triggering Article 25 and becoming a provider yourself.

2. Human oversight (Art. 26(2))

Assign named individuals responsible for oversight. Those people need the competence, authority, and resources to override or intervene. Document everything: who reviews AI outputs, what triggers human review, how overrides are escalated. Use the Human Oversight Log to structure this.

3. Input data relevance (Art. 26(4))

Ensure data fed into the AI system is relevant to the intended purpose. If you control input data, it must be sufficiently representative. The Input Data Validator helps you assess this.

4. Monitoring and logging (Art. 26(5) and 26(6))

Monitor AI system operation for risks, anomalies, or incidents. Retain automatically generated logs for at least 6 months — or longer if sector-specific EU or national law requires it. If you detect a serious incident or malfunction, report to the provider AND the relevant national authority.

5. Fundamental Rights Impact Assessment (Art. 27)

Required before deployment by: (a) bodies governed by public law, (b) private entities providing public services, (c) deployers of credit scoring or insurance pricing/risk assessment systems. The FRIA must assess impact on affected persons, specific context of use, and risk of harm to fundamental rights. The EU AI Office is expected to publish a FRIA template, but hasn't done so as of March 2026. Use the FRIA Generator in the meantime.

6. Transparency to affected persons (Art. 26(11))

Inform natural persons that they're subject to a high-risk AI system decision. Limited exemptions exist for law enforcement contexts.

7. Workplace notification (Art. 26(7))

Inform employees or worker representatives before deploying a high-risk AI system in the workplace. This applies specifically to workplace management, task allocation, monitoring, and termination decisions.

8. AI literacy (Art. 4)

All personnel involved in AI system operation and oversight must have sufficient AI literacy. This has been enforceable since February 2, 2025 — it's already live. The AI Literacy Training Planner helps you structure a programme.
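The log retention rule in obligation 4 (at least 6 months, or longer where sector-specific law requires it) reduces to taking the strictest applicable period. Here is a minimal Python sketch; the function names are hypothetical, and the 24-month sector figure in the usage note is an invented example, not a real legal requirement.

```python
from __future__ import annotations

import calendar
from datetime import date

MIN_RETENTION_MONTHS = 6  # floor stated in this guide


def retention_months(sector_requirements: list[int]) -> int:
    """Strictest of the 6-month floor and any sector-specific periods."""
    return max([MIN_RETENTION_MONTHS, *sector_requirements])


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping to the last day of the target month."""
    index = start.month - 1 + months
    year = start.year + index // 12
    month = index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def earliest_deletion_date(log_date: date, sector_requirements: list[int]) -> date:
    """First date on which a log written on log_date may be deleted."""
    return add_months(log_date, retention_months(sector_requirements))
```

With no sector rule, a log written on August 2, 2026 can be deleted from February 2, 2027 at the earliest. With a hypothetical 24-month sector rule, `earliest_deletion_date(date(2026, 8, 2), [24])` moves that to August 2, 2028.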

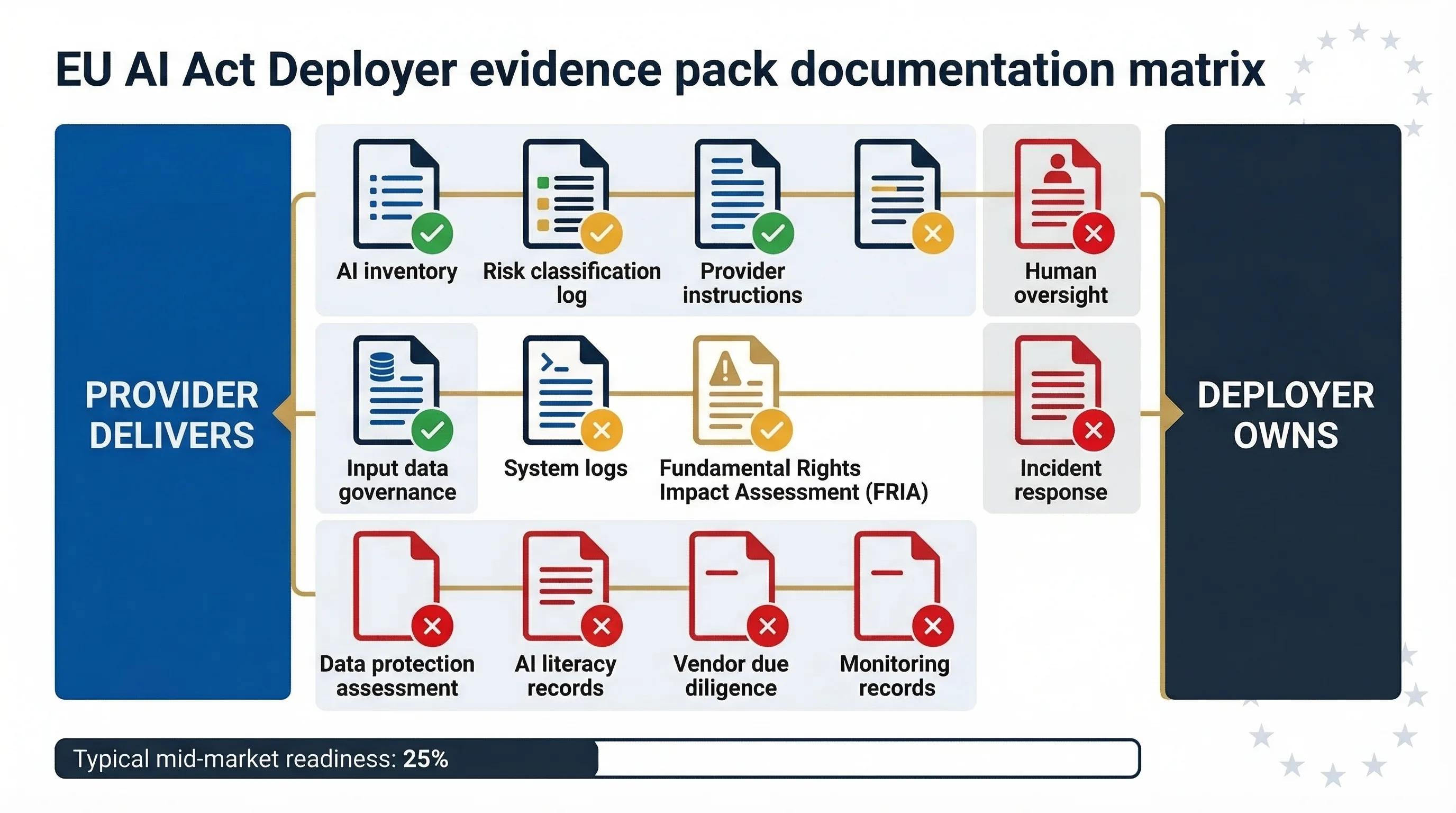

The deployer evidence pack: what documentation you need before August 2026

This is where theory meets reality. You may understand your obligations — but can you produce the evidence that proves you've met them? Most mid-market deployers I assess have 2-3 of these items partially in place and zero items in audit-ready condition. The gap isn't knowledge. It's packaging. You know what to do; you haven't produced the artifacts that prove you did it.

| Evidence Item | EU AI Act Source | What It Contains | Who Owns It |
|---|---|---|---|
| AI system inventory | Articles 26, 4 | Complete register of all AI systems in use, providers, intended purposes, risk classifications | Deployer |
| Risk classification decision log | Article 6, Annex III | Documented reasoning for each system's risk level, including Article 6(3) exemption analysis if claimed | Deployer (with provider input) |
| Provider instructions for use | Art. 13 (provider), Art. 26(1) (deployer) | Intended purpose, limitations, human oversight specs, performance metrics | Provider delivers; Deployer retains |
| Human oversight arrangements | Art. 14 (design), Art. 26(2) (implementation) | Named oversight persons, competence requirements, review triggers, escalation paths, override procedures | Deployer |
| Input data governance records | Article 26(4) | Data quality checks, representativeness assessment, bias review for deployer-controlled input data | Deployer |
| System operation logs | Art. 12 (design), Art. 26(6) (retention) | Automatically generated logs, retained minimum 6 months | Provider designs logging; Deployer retains logs |
| FRIA documentation | Article 27 | Impact assessment on fundamental rights, context analysis, risk mitigation measures | Deployer |
| Incident response plan | Art. 26(5), Art. 73 | Serious incident definition, reporting workflow (provider + national authority), timelines, responsible persons | Deployer |
| AI literacy training records | Article 4 | Training programme description, completion records, competence assessment results | Deployer |
| Vendor due diligence records | Articles 25, 26 | Provider's conformity declaration, CE marking, EU database registration confirmation, contract terms covering AI Act obligations | Deployer verifies; Provider supplies |
| Monitoring & performance records | Article 26(5) | Ongoing performance monitoring results, drift detection, accuracy checks, bias monitoring outputs | Deployer |

That's 11 evidence items. How many can you produce right now, in a format that would satisfy a regulator?
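One way to answer that question honestly is to track each item's status in a small structure. The sketch below is an assumption about how you might do this in Python. The `Status` levels and the `readiness_report` function are hypothetical; only the 11 item names come from the matrix above.

```python
from __future__ import annotations

from enum import Enum


class Status(Enum):
    MISSING = "missing"
    PARTIAL = "partial"
    AUDIT_READY = "audit-ready"


# The 11 evidence items from the matrix above.
EVIDENCE_ITEMS = [
    "AI system inventory",
    "Risk classification decision log",
    "Provider instructions for use",
    "Human oversight arrangements",
    "Input data governance records",
    "System operation logs",
    "FRIA documentation",
    "Incident response plan",
    "AI literacy training records",
    "Vendor due diligence records",
    "Monitoring & performance records",
]


def readiness_report(statuses: dict[str, Status]) -> dict[str, list[str]]:
    """Group the 11 items by status; anything unreported counts as missing."""
    unknown = set(statuses) - set(EVIDENCE_ITEMS)
    if unknown:
        raise KeyError(f"Not an evidence item: {sorted(unknown)}")
    report: dict[str, list[str]] = {s.value: [] for s in Status}
    for item in EVIDENCE_ITEMS:
        report[statuses.get(item, Status.MISSING).value].append(item)
    return report
```

Treating unreported items as missing is a deliberate choice here: an item nobody can vouch for shouldn't count towards readiness.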

📦 Need More Practical Guidance?

Explore the free EU AI Compass tools and guides to classify your use case, understand your obligations, and move to the next compliance step.

The 11-item deployer evidence pack: most mid-market organisations have fewer than 3 items in audit-ready condition.

What your AI vendor covers — and what they don't

This is the most dangerous misconception in the market right now. I've sat across from compliance teams who genuinely believed their vendor's "EU AI Act ready" badge covered their obligations. It doesn't. Here's the split.

| Your vendor (provider) should deliver | You (deployer) still own |
|---|---|
| Technical documentation describing the system | Your AI system inventory and classification decisions |
| Instructions for use (intended purpose, limitations) | Using the system per those instructions |
| Conformity assessment and CE marking | Verifying the vendor completed conformity assessment |
| Risk management system design | Implementing human oversight in YOUR context |
| Logging system design | Retaining logs for at least 6 months |
| Accuracy and robustness testing | Monitoring performance in YOUR production environment |
| Post-market monitoring system | Reporting serious incidents to vendor AND authority |
| EU database registration | FRIA (if you're in a triggering category) |
| | AI literacy training for YOUR staff |
| | Workplace notification to YOUR employees |
| | Vendor due diligence documentation |

The provider's conformity assessment covers the system as designed. It doesn't cover how you deploy it, what data you feed it, what decisions you make based on its output, or whether your staff has the competence to oversee it.

Contract review is critical: your vendor agreement should explicitly address which AI Act obligations the vendor fulfils and which fall to you. If the contract is silent on this, you've got a gap. And if the vendor updates the system, you need to assess whether the change triggers a re-classification or additional obligations.

Use the AI Vendor Risk Screener to evaluate your vendor's posture. And run the Accidental Provider Classifier to check if any modifications you've made have shifted your role.

High-risk AI by industry: what deployers in your sector need to know

The abstract categories in Annex III become concrete when you map them to your sector. Here's what matters for the four industries where most of the organisations we work with operate.

AI in recruitment and employment (Annex III Area 4)

AI systems that screen CVs, rank candidates, score video interviews, filter applicants, or automate early-stage rejection are all high-risk. So are systems monitoring employee performance, making promotion or termination recommendations, or allocating tasks. ATS platforms with AI-powered screening — Workday, iCIMS, SmartRecruiters, Eightfold, HireVue, and others — create deployer obligations for the employer. Not the ATS vendor. The employer is the deployer. Use the Promotion & Termination Validator to assess your HR AI systems.

AI in credit scoring and lending (Annex III Area 5a)

AI systems assessing creditworthiness of natural persons are explicitly high-risk. That includes credit scoring models, automated loan decisioning, credit limit assignment, and affordability checks. Fraud detection is NOT automatically high-risk — but the line matters, because fraud detection that blocks transactions can functionally deny access to financial services. FRIA is mandatory for deployers in this category. The Fraud vs Credit Scoring Delimiter helps draw that line.

AI in insurance pricing and underwriting (Annex III Area 5b)

AI for risk assessment and pricing in life and health insurance is high-risk. Automated underwriting, risk scoring for policy pricing, and claims assessment that affects coverage or renewal all fall in scope. Property and casualty insurance isn't explicitly listed — but if AI decisions affect access to essential services, classification may still apply. Run the Insurance Underwriting Assessor to check.

AI in education (Annex III Area 3)

AI systems determining access to education, evaluating learning outcomes, monitoring student behaviour during tests, or detecting prohibited conduct — all high-risk. AI proctoring, automated grading, admissions screening, and learning analytics that influence student placement are all in scope. The EdTech Assessment Validator covers this sector.

Conformity assessment: it's the provider's job, but you need to verify it

Conformity assessment is the provider's obligation under Article 43. For Annex III Areas 2 to 8, it's a self-assessment (internal control procedure under Annex VI). For biometric systems (Area 1), the provider can rely on internal control only where it has applied harmonised standards or common specifications; otherwise a third-party conformity assessment by a notified body is required.

After conformity assessment, the provider affixes the CE marking and registers the system in the EU database. Your role as deployer: verify the vendor has completed conformity assessment, has CE marking, and is registered. If any of these are missing, don't deploy the system.

Request the provider's EU Declaration of Conformity (Article 47). If they can't produce it, that's a red flag you can't ignore.
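The verification step above works as a deployment gate: all three proofs present, or no deployment. A minimal sketch in Python, where `VendorEvidence`, `missing_evidence`, and `may_deploy` are hypothetical names chosen for illustration:

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class VendorEvidence:
    """Hypothetical checklist of what the provider has shown you."""
    declaration_of_conformity: bool = False  # Article 47
    ce_marking: bool = False
    eu_database_registration: bool = False


def missing_evidence(evidence: VendorEvidence) -> list[str]:
    """Names of the proofs the vendor has not yet produced."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


def may_deploy(evidence: VendorEvidence) -> bool:
    """Gate from the text: if anything is missing, don't deploy."""
    return not missing_evidence(evidence)
```

The output of `missing_evidence` doubles as the list of follow-up requests to send your vendor.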

When do high-risk obligations become enforceable?

Here's the timeline that matters for deployers of Annex III high-risk AI systems:

February 2, 2025 — AI literacy (Article 4) enforceable

Already live. All organisations deploying AI must ensure sufficient AI literacy among staff involved in operations and oversight.

August 2, 2026 — Annex III high-risk obligations enforceable

Current law. All deployer obligations under Article 26, FRIA requirements (Article 27), and transparency obligations (Article 50) for high-risk and limited-risk systems kick in.

August 2, 2027 — Annex I high-risk obligations enforceable

For AI systems that are safety components of products regulated under EU product safety legislation.

December 2, 2027 — Proposed Omnibus extension (NOT YET LAW)

The Digital Omnibus proposal may push Annex III deadlines to this date. It hasn't been adopted. Don't plan around it.

⚠ Penalties for high-risk violations

Up to €15 million or 3% of worldwide annual turnover — whichever is higher (Article 99). SMEs pay the lower of the two amounts. No enforcement actions have been issued yet as of March 2026, but national competent authorities are being designated across member states.
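The "higher of" rule, and the "lower of" rule for SMEs, are easy to misread, so here is the arithmetic as a short Python sketch. The function name and the turnover figures in the usage note are illustrative assumptions; the result is the statutory ceiling as described above, not a prediction of any actual fine.

```python
FIXED_CAP_EUR = 15_000_000
TURNOVER_PERCENT = 3


def penalty_ceiling_eur(worldwide_turnover_eur: float, is_sme: bool = False) -> float:
    """Maximum fine for high-risk violations under the rule described above."""
    turnover_cap = worldwide_turnover_eur * TURNOVER_PERCENT / 100
    if is_sme:
        # SMEs face the lower of the two amounts.
        return min(FIXED_CAP_EUR, turnover_cap)
    # Everyone else faces the higher of the two amounts.
    return max(FIXED_CAP_EUR, turnover_cap)
```

A company with €1 billion in turnover faces a ceiling of €30 million, because 3% exceeds the fixed cap. A company with €200 million faces €15 million, because 3% is only €6 million. An SME with €20 million in turnover faces €600,000.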

For the full enforcement picture, see: Confirmed vs proposed timeline · National enforcement tracker · August 2026 deadline SME guide

How to start: a step-by-step roadmap for high-risk AI deployers

If you're reading this and realising you have gaps, here's the sequence that works. I've walked multiple organisations through this process, and the order matters more than most people think.

1. Inventory all AI systems.

Start with procurement records, SaaS subscriptions, and vendor contracts. Don't forget embedded AI in tools your team uses daily. Use the Shadow AI Discovery Protocol to find what's hidden.

2. Classify each system against Annex III.

Use the sector-specific validators above. Document your classification reasoning; that becomes evidence item #2.

3. Request provider documentation.

For every high-risk system: instructions for use, conformity declaration, CE marking confirmation, EU database registration ID. If your vendor can't produce these, that's a red flag.

4. Conduct a deployer obligation gap assessment.

Use the Deployer Obligation Self-Assessment to identify which obligations you've met and where the holes are.

5. Implement human oversight.

Name oversight individuals, define review triggers and escalation paths, document the arrangements. Use the Human Oversight Log.

6. Conduct FRIA (if required).

If you deploy high-risk AI in public services or credit/insurance decisions affecting natural persons, the FRIA is mandatory before deployment. Use the FRIA Generator.

7. Build your evidence pack.

Assemble all 11 evidence items from the matrix above. This is what an auditor, regulator, or procurement team will ask for.

8. Establish ongoing monitoring.

Post-deployment monitoring isn't optional. Define what you check, how often, and what triggers escalation.
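Whether the FRIA step in this roadmap applies to you can be written as a simple predicate over the three triggering categories this guide lists under Article 27. The enum and function names below are hypothetical, and the sketch assumes the system has already been classified as high-risk.

```python
from enum import Enum, auto


class DeployerType(Enum):
    PUBLIC_LAW_BODY = auto()
    PRIVATE_PROVIDING_PUBLIC_SERVICES = auto()
    OTHER_PRIVATE = auto()


class UseCase(Enum):
    CREDIT_SCORING = auto()
    INSURANCE_RISK_PRICING = auto()
    OTHER_HIGH_RISK = auto()


def fria_required(deployer: DeployerType, use_case: UseCase) -> bool:
    """True if any of the three triggering categories applies."""
    if deployer in {
        DeployerType.PUBLIC_LAW_BODY,
        DeployerType.PRIVATE_PROVIDING_PUBLIC_SERVICES,
    }:
        return True
    # Private deployers are caught only for credit and insurance use cases.
    return use_case in {UseCase.CREDIT_SCORING, UseCase.INSURANCE_RISK_PRICING}
```

For example, a private employer using an AI resume screener gets `False` here, while a private lender using a credit scoring model gets `True`.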

25+ free tools for high-risk AI deployers

Every tool below runs in your browser, collects zero data, and requires no login. They're organised by the stage of your EU AI Act compliance checklist — from initial classification through to evidence documentation.

Stage 1: Classify your systems

EU AI Act Compliance Checker: Quick classification against the full regulation

Quick Risk Quiz: 5-minute risk-level assessment

Detailed Applicability Scorer: In-depth applicability analysis

Accidental Provider Classifier: Check if modifications made you a provider

Stage 2: Validate sector classification

Biometric Identity Validator: Area 1, Biometric identification

EdTech Assessment Validator: Area 3, Education and vocational training

Promotion & Termination Validator: Area 4, Employment and workers management

Fraud vs Credit Scoring Delimiter: Area 5a, Creditworthiness assessment

Insurance Underwriting Assessor: Area 5b, Insurance risk and pricing

Article 6(3) Exemption Generator: Document exemption from high-risk classification

Stage 3: Assess and document obligations

Deployer Obligation Self-Assessment: Map your compliance gaps against Article 26

Article 26 Operations Scorer: Benchmark your operational readiness

Local FRIA Generator: Generate fundamental rights impact assessment

Human Oversight Log: Structure oversight arrangements

Input Data Validator: Assess data relevance and representativeness

Automation Complacency Assessor: Evaluate oversight effectiveness

AI Literacy Training Planner: Plan Article 4 literacy programme

FAQ: high-risk AI deployer compliance

Is AI used in recruitment and HR high-risk under the EU AI Act?

Yes. AI systems used for recruitment, screening, filtering, evaluating candidates, or making decisions about promotions, terminations, or task allocation fall under Annex III Area 4 (Employment, workers management, and access to self-employment). If your organisation uses an ATS with AI-powered features, you're a deployer of a high-risk system. The employer is the deployer, not the ATS vendor.

Does my vendor's EU AI Act compliance make me compliant?

No. Provider compliance covers the AI system as designed and tested. Deployer obligations (human oversight, monitoring, logging, FRIA, AI literacy, incident reporting, and vendor verification) remain your responsibility regardless of your vendor's compliance status. Think of it like SOC 2: your SaaS vendor's SOC 2 report doesn't make you SOC 2 compliant.

When is a Fundamental Rights Impact Assessment (FRIA) required?

A FRIA is required before deploying high-risk AI systems by bodies governed by public law, private entities providing public services, or deployers using credit scoring or insurance pricing and risk assessment systems (Article 27). The FRIA must assess the impact on specific affected persons in the specific deployment context.

Can a deployer become a provider?

If you substantially modify a high-risk AI system, change its intended purpose, or place it on the market under your name, you may become a provider under Article 25 and inherit all provider obligations, including conformity assessment and CE marking. This catches fine-tuning vendor models, rebranding white-label AI, and deploying a system for a purpose the provider didn't intend.

How long must deployers retain AI system logs?

At least 6 months, or longer if required by sector-specific EU or national law (Article 26(6)). Financial services, healthcare, and employment regulations may impose longer retention periods. Establish a log retention policy aligned with the strictest applicable requirement.

Is fraud detection classified the same way as credit scoring?

No. Fraud detection and creditworthiness assessment are different use cases with different risk classifications. Credit scoring that determines access to financial services is explicitly high-risk under Annex III Area 5a. Fraud detection may or may not be high-risk depending on whether it functionally denies service access to natural persons.

What if my AI system isn't listed in Annex III?

It's not classified as high-risk under the current text. However, you may still have transparency obligations under Article 50 and AI literacy obligations under Article 4. Voluntary compliance with high-risk standards is encouraged via codes of conduct (Article 95). Also monitor the Digital Omnibus, because the scope of Annex III may change.

Abhishek G Sharma

Founder & CEO, Move78 International Limited

20+ years in cybersecurity and risk management. Certifications: ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, CAIRO.

Disclaimer

This guide is for educational and informational purposes only. It does not constitute legal advice. The EU AI Act (Regulation 2024/1689) is a complex regulation and its interpretation may evolve as implementing acts, guidelines, and enforcement decisions are published. Consult qualified legal counsel for advice specific to your organisation's circumstances. Move78 International Limited makes no warranties regarding the completeness or accuracy of this content. All regulatory references are current as of March 2026.