Quick answer

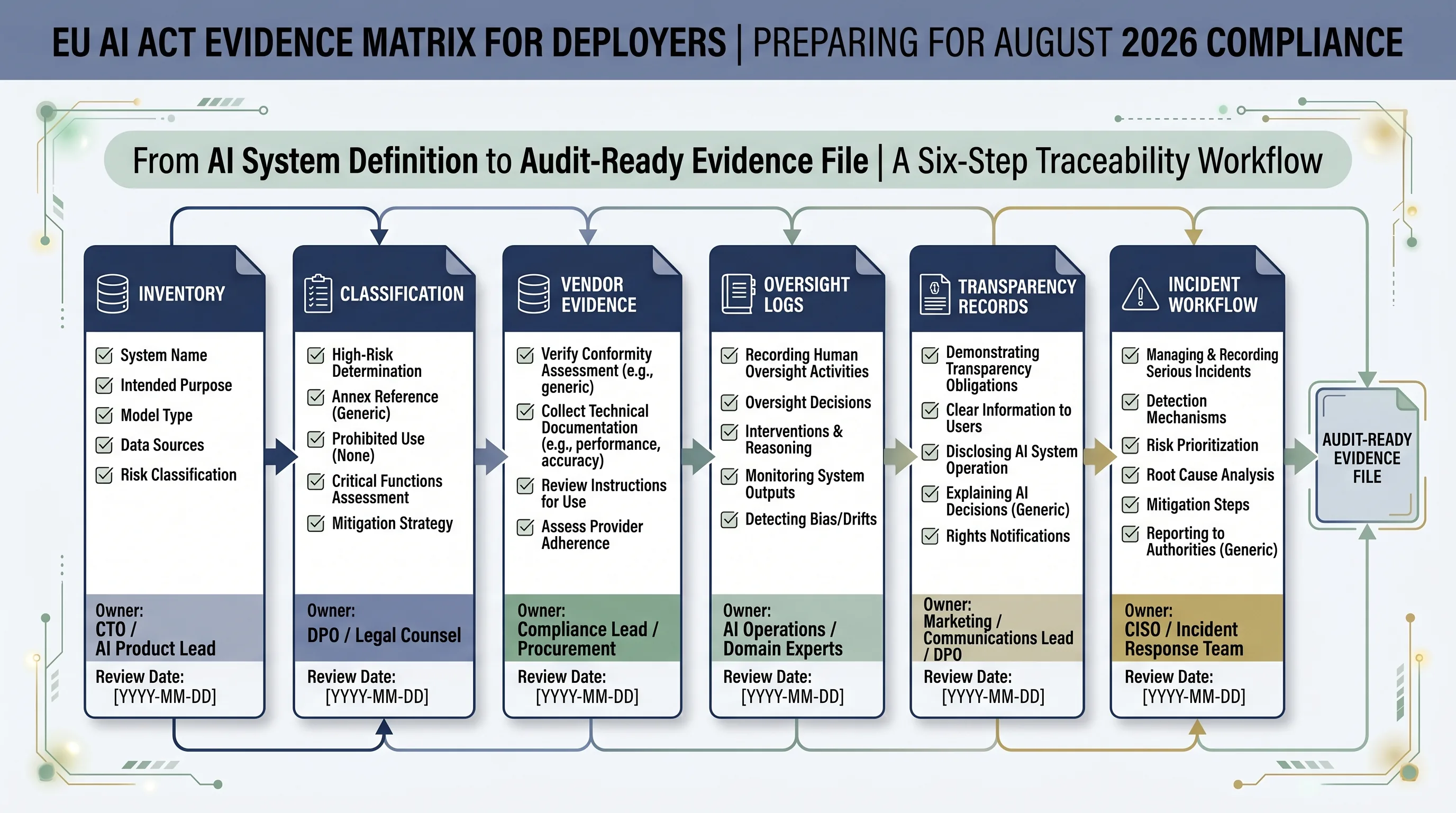

Work on an EU AI Act evidence checklist should start with one controlled evidence file per AI system. For deployers, that file should show what the system is, why it is or is not high-risk, who owns it, what the vendor provided, how humans oversee it, which logs are retained, what disclosures were made, and how serious incidents are escalated.

This is not an official regulator-issued checklist. It is an operational evidence model for teams preparing for August 2026 under current law.

Why August 2026 changes the evidence conversation

The AI Act applies generally from 2 August 2026, although some provisions apply earlier and others later. That date matters because many deployer teams will need to move from policy language to records that can survive review. A board slide saying “we are tracking AI risk” is not enough. Someone must be able to open the evidence file for a real system.

For high-risk AI systems, the evidence trail should connect current-law scope, Annex III classification, Article 26 deployer operation, provider instructions, human oversight, logging, transparency, data protection routing, and serious incident workflow. Some of these duties sit primarily with providers. Some sit with deployers. Audit failure often begins when nobody can separate the two.

Current-law discipline: track proposal-stage timeline changes separately. Until adopted law changes the position, build the evidence plan against the current Regulation (EU) 2024/1689 baseline and keep a visible source-review date.

What evidence should deployers be ready to show?

The evidence set below is designed for deployers, not providers. Use it as a gap map. For each AI system, the question is not “do we have a policy?” The question is “can we show the record, owner, decision, date, and review trail?”

Auditors usually test traceability before maturity. They pick one system and follow the chain: inventory entry, role decision, risk classification, vendor file, oversight owner, log source, disclosure decision, and incident route. If that chain breaks, the policy does not rescue the file.
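The chain test can be expressed as a short check. The sketch below is illustrative only: it assumes each link is stored as a reference on one evidence file, and the field names (`inventory_entry`, `role_decision`, and so on) and record IDs such as `INV-014` are hypothetical, not a prescribed schema. It walks the links in the order an auditor would follow them and reports the first one that is missing.

```python
from typing import Optional

# Hypothetical link names, in the order an auditor might follow them.
CHAIN = [
    "inventory_entry",
    "role_decision",
    "risk_classification",
    "vendor_file",
    "oversight_owner",
    "log_source",
    "disclosure_decision",
    "incident_route",
]


def first_broken_link(evidence_file: dict) -> Optional[str]:
    """Return the first chain link with no recorded reference, or None if intact."""
    for link in CHAIN:
        # A missing key, None, or an empty string all count as a break.
        if not evidence_file.get(link):
            return link
    return None


example_file = {
    "inventory_entry": "INV-014",
    "role_decision": "ROLE-014",
    "risk_classification": "CLS-014",
    "vendor_file": "VEN-014",
    "oversight_owner": "",  # nobody assigned yet
    "log_source": "LOG-014",
}

print(first_broken_link(example_file))  # oversight_owner
```

Stopping at the first break reflects the point above: once a link is missing, the later records are harder to tie back to the same system, however complete they look.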

| Evidence area | What to keep | Why it will be requested | Free EU AI Compass starting point |
|---|---|---|---|
| AI system inventory | System name, owner, vendor, business process, users, data categories, deployment status, geography, and review date. | Auditors need the controlled population before they can test classification, oversight, disclosures, and incident readiness. | Start the AI system inventory |
| Role and scope decision | Provider/deployer/importer/distributor role analysis, use case, affected persons, output use, and EU nexus. | Role errors create downstream evidence errors. A deployer file is not the same as a provider technical file. | Separate provider and deployer responsibilities |
| High-risk classification | Annex III mapping, Article 6 decision, rationale, reviewer, and approval date. | High-risk classification determines evidence depth, oversight, logging, vendor requests, and escalation needs. | Classify the AI system before deployment |
| Vendor evidence file | Instructions for use, intended purpose, limitations, accuracy/robustness statements, logging details, change notice process, and support contact. | Deployer teams must be able to show what the vendor handed over and how operational controls were built around it. | Request vendor evidence before deployment |
| Human oversight log | Oversight owner, training/competence record, intervention authority, review frequency, overrides, exceptions, and sign-off. | Human oversight must be operational. A named person must be able to monitor, interpret, intervene, or escalate. | Record human oversight decisions |
| Input data and use controls | Input data owner, permitted inputs, prohibited inputs, quality checks, relevance review, and misuse handling. | Article 26 makes the deployer responsible for ensuring input data is relevant and sufficiently representative for the intended purpose, to the extent the deployer controls that data. | Run the Deployer Obligation Self-Assessment |
| Logs and traceability | Available system logs, access logs, tool/action logs, prompt/output records where appropriate, retention rule, and access control. | Logs support monitoring, incident analysis, post-market evidence, and reconstruction of system operation. | Use the Evidence Starter Library |
| Article 50 transparency records | Disclosure trigger decision, notice copy, channel placement, screenshot proof, version history, and approval record. | Transparency evidence is needed when AI systems that interact with people, synthetic content, deepfakes, emotion recognition, biometric categorisation, or AI-generated text published on matters of public interest are in scope. | Review AI content marking triggers |
| FRIA / DPIA routing | Decision record explaining whether FRIA, DPIA, both, or neither is needed, plus reviewer and rationale. | Deployers need to show that fundamental-rights and data-protection impact assessment questions were considered, not guessed. | Use the FRIA starter worksheet |
| Serious incident workflow | Incident intake, severity trigger, provider notification path, authority escalation logic, investigation owner, and closure record. | If the system fails in a serious way, teams need a reporting path before the incident occurs. | Prepare the serious incident register |
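One way to use the table as a gap map is to test each evidence area for the five things named earlier: the record, owner, decision, date, and review trail. This Python sketch assumes that model; the `EvidenceItem` class, its field names, and the sample owner and record references are hypothetical illustrations, not a regulatory format.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List, Optional


@dataclass
class EvidenceItem:
    """One evidence area for one AI system (hypothetical fields, not a mandated schema)."""
    area: str                          # e.g. "Human oversight log"
    record_ref: Optional[str] = None   # pointer to the stored record
    owner: Optional[str] = None        # named accountable person
    decision: Optional[str] = None     # the recorded decision or conclusion
    decided_on: Optional[date] = None  # date of the decision
    review_trail: List[date] = field(default_factory=list)  # later review dates

    def missing(self) -> List[str]:
        """Return the elements an auditor could not find for this area."""
        gaps = []
        if not self.record_ref:
            gaps.append("record")
        if not self.owner:
            gaps.append("owner")
        if not self.decision:
            gaps.append("decision")
        if self.decided_on is None:
            gaps.append("date")
        if not self.review_trail:
            gaps.append("review trail")
        return gaps


def gap_map(items: List[EvidenceItem]) -> Dict[str, List[str]]:
    """Map each incomplete evidence area to its missing elements."""
    result = {}
    for item in items:
        gaps = item.missing()
        if gaps:
            result[item.area] = gaps
    return result


items = [
    EvidenceItem("AI system inventory", "INV-014", "J. Doe", "In scope",
                 date(2026, 3, 2), [date(2026, 6, 1)]),
    EvidenceItem("Human oversight log", "HOL-014", None, "Weekly review",
                 date(2026, 3, 9)),
]
print(gap_map(items))  # {'Human oversight log': ['owner', 'review trail']}
```

Complete areas drop out of the result, so the output reads directly as a gap list for that system.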

Which evidence belongs to the provider, and which evidence belongs to the deployer?

Provider evidence and deployer evidence overlap, but they are not interchangeable. Providers normally control technical documentation, system design details, conformity evidence, instructions for use, logging capability, and performance information. Deployers control the business use case, local configuration, user assignment, human oversight, input data under their control, operational logs under their control, workplace notifications, and incident escalation inside the organization.

Provider-side evidence to request

- Instructions for use and intended purpose

- Technical documentation summary appropriate for deployer use

- Accuracy, robustness, and cybersecurity information

- Logging capability and available export fields

- Change notice and post-market monitoring contact

Deployer-side evidence to create

- Inventory and business owner record

- Use-case and role classification decision

- Human oversight assignment and review log

- Input data control and monitoring record

- Disclosure, incident, and internal escalation files

The practical control is a handoff record: what the vendor gave you, what your team accepted, what gaps remain, who signed off, and what must be reviewed after deployment.
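A handoff record can be kept as structured data so that open items stay visible. The sketch below assumes a simple model of the five elements just listed; `VendorHandoff`, its fields, and the sample system and documents are hypothetical, and the `issues()` rule is one possible reading of "what gaps remain", not a legal test.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class VendorHandoff:
    """Hypothetical vendor handoff record for one AI system."""
    system: str
    received: List[str] = field(default_factory=list)   # what the vendor gave you
    accepted: List[str] = field(default_factory=list)   # what your team reviewed and accepted
    open_gaps: List[str] = field(default_factory=list)  # what gaps remain
    signed_off_by: Optional[str] = None                 # who signed off
    post_deployment_reviews: List[str] = field(default_factory=list)  # what to revisit after go-live

    def unreviewed(self) -> List[str]:
        """Documents received from the vendor but not yet accepted."""
        return [doc for doc in self.received if doc not in self.accepted]

    def issues(self) -> List[str]:
        """Collect items that stand in the way of a clean sign-off."""
        problems = [f"not reviewed: {doc}" for doc in self.unreviewed()]
        problems += [f"open gap: {gap}" for gap in self.open_gaps]
        if not self.signed_off_by:
            problems.append("no sign-off recorded")
        return problems


handoff = VendorHandoff(
    system="CV screening tool",
    received=["Instructions for use", "Logging export fields"],
    accepted=["Instructions for use"],
    open_gaps=["No change-notice contact"],
    post_deployment_reviews=["Re-check accuracy statement after first quarter"],
)
for issue in handoff.issues():
    print(issue)
```

This prints three lines: the unreviewed logging document, the open change-notice gap, and the missing sign-off. The post-deployment review list is recorded but deliberately not treated as a blocker.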

30-day evidence sprint before August 2026

A 30-day sprint should not try to solve every EU AI Act issue. It should create an evidence baseline that makes gaps visible. The output is a working evidence file, not a perfect governance programme.

| Week | Action | Output |
|---|---|---|
| Week 1 | Build or clean the AI inventory. Assign one owner per system. | Controlled inventory with owner, vendor, process, deployment status, and review date. |
| Week 2 | Classify systems and separate provider/deployer responsibilities. | Role and high-risk decision record for priority systems. |
| Week 3 | Collect vendor documents and create human oversight records. | Vendor handoff file, oversight owner, intervention rules, and log availability record. |
| Week 4 | Check disclosure, FRIA/DPIA routing, incident workflow, and unresolved gaps. | Evidence gap register and management sign-off path. |

Common mistakes that weaken audit evidence

- Treating vendor PDFs as complete compliance evidence. Vendor material is an input. It does not prove your team used the system correctly.

- Keeping an inventory without owners. An ownerless inventory is a spreadsheet, not a control.

- Recording human oversight as a policy statement. Oversight needs competence, authority, review cadence, and intervention evidence.

- Ignoring logs until after an incident. If logs are not available, accessible, retained, or privacy-reviewed, incident reconstruction becomes weak.

- Mixing current law with proposal-stage assumptions. Track proposals, but do not build evidence on an assumed delay unless adopted law confirms it.

- Forgetting Article 50 evidence. Disclosure decisions need records, not just visible labels.

Where E1/E2 fits after the free evidence path

Use the free tools first. If one system exposes a few gaps, fix those gaps locally. If repeated evidence gaps appear across multiple systems, the E1/E2 implementation layer can help convert the evidence starter work into structured templates, control mapping, management-ready registers, and repeatable review packs.

Do not buy implementation material before you know which systems, roles, and evidence gaps matter. Diagnose first. Package second.

FAQ: EU AI Act evidence checklist

Is there an official EU AI Act evidence checklist?

No. Regulation (EU) 2024/1689 does not publish a single deployer evidence checklist. This page translates current-law obligations into practical records a deployer should be ready to show: AI inventory, role classification, instructions for use, oversight records, logs, disclosure notices, incident workflow, vendor evidence, and review history.

Does every AI system need the same evidence file?

No. The evidence set depends on the AI system, role, use case, risk classification, sector, and national implementation details. A low-risk internal productivity tool will not need the same file as a high-risk employment, education, credit, biometric, or essential-services system. Start with classification, then scale evidence depth.

Where should a deployer start?

Start with the AI system inventory. Without a controlled inventory, a deployer cannot prove which systems exist, who owns them, which vendors supply them, whether Annex III is triggered, what data is used, or which obligations apply. Inventory is the control point for every later evidence file.

Are vendor documents enough evidence for a deployer?

No. Vendor documents help, but they do not replace the deployer’s own evidence. Deployers still need records showing the system was used according to instructions, human oversight was assigned, input data was managed where under their control, logs were retained where available, and incidents or risks were escalated.

What do auditors usually test first?

Auditors usually test traceability first: whether the organization can connect the AI system inventory, role classification, risk decision, vendor file, oversight owner, log source, and operating record. A polished policy is weak evidence if the team cannot show the operating trail behind one real AI system.

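How long should deployers retain AI logs?

Deployers should retain AI logs under an approved privacy and retention model. For high-risk AI systems, Article 26 requires deployers to keep automatically generated logs, to the extent those logs are under their control, for a period appropriate to the system's intended purpose and of at least six months, unless applicable Union or national law provides otherwise. If logs include personal data, align retention with GDPR, security, access control, and legal counsel guidance.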
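Where a retention policy is automated, the six-month floor can be checked when the policy is configured. This sketch assumes logs are counted in calendar months, with the day clamped to the end of shorter months (so 31 August 2026 plus six months gives 28 February 2027); that counting rule is an assumption, as is the choice to reject shorter policies outright. The function names are hypothetical, and where other Union or national law, including data protection law, provides otherwise, the floor would need to change.

```python
import calendar
from datetime import date

# Article 26 floor for deployer-controlled logs of high-risk AI systems.
# Applicable Union or national law may provide otherwise; adjust if so.
MIN_RETENTION_MONTHS = 6


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping the day to the last day of the target month."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def earliest_deletion(log_created: date, policy_months: int) -> date:
    """Return the earliest deletion date, rejecting policies shorter than the floor."""
    if policy_months < MIN_RETENTION_MONTHS:
        raise ValueError(
            f"{policy_months}-month policy is below the "
            f"{MIN_RETENTION_MONTHS}-month floor"
        )
    return add_months(log_created, policy_months)


print(earliest_deletion(date(2026, 8, 31), 6))  # 2027-02-28
```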

How should Article 50 disclosure evidence be stored?

Article 50 disclosure evidence should be stored as a versioned notice file, scenario decision record, channel inventory, approval record, and screenshot or deployment proof. For chatbots, synthetic content, deepfakes, emotion recognition, biometric categorisation, and public-interest AI-generated text, keep the disclosure trigger decision visible.
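A versioned notice file can be modelled as a version history that is append-only by convention, so earlier wording is kept rather than overwritten. This Python sketch is illustrative: `DisclosureFile`, `NoticeVersion`, the channel names, and proof references such as `SCR-001` are hypothetical, and the `unevidenced()` check simply flags versions that lack an approval or deployment proof.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class NoticeVersion:
    """One immutable version of a disclosure notice."""
    version: int
    text: str
    channel: str                # where the notice is shown
    approved_by: Optional[str]  # approver, if approved
    proof_ref: Optional[str]    # screenshot or deployment proof reference
    effective_from: date


@dataclass
class DisclosureFile:
    """Hypothetical Article 50 notice file: trigger decision plus version history."""
    system: str
    trigger_decision: str       # why disclosure is (or is not) required
    versions: List[NoticeVersion] = field(default_factory=list)

    def add_version(self, text: str, channel: str, approved_by: Optional[str],
                    proof_ref: Optional[str], effective_from: date) -> NoticeVersion:
        """Append a new version; earlier versions are kept and never edited."""
        new = NoticeVersion(len(self.versions) + 1, text, channel,
                            approved_by, proof_ref, effective_from)
        self.versions.append(new)
        return new

    def unevidenced(self) -> List[int]:
        """Return version numbers missing an approval or a deployment proof."""
        return [v.version for v in self.versions
                if not (v.approved_by and v.proof_ref)]


notice_file = DisclosureFile("Customer chatbot",
                             "Users interact directly with an AI system")
notice_file.add_version("You are chatting with an AI assistant.",
                        "web chat widget", "Legal", "SCR-001", date(2026, 7, 1))
notice_file.add_version("You are chatting with an AI assistant. Ask for a human at any time.",
                        "web chat widget", "Legal", None, date(2026, 9, 1))
print(notice_file.unevidenced())  # [2]
```

Making each version frozen means a wording change has to go through `add_version`, which keeps the version history intact for review.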

Is this page legal advice?

No. This page is operational guidance for evidence planning. It does not determine legal status, confirm compliance, or replace advice from qualified counsel. Use it to structure the evidence file, then validate high-risk, sector-specific, GDPR, employment, procurement, and national-law questions with appropriate professionals.

Source and review note

This page was reviewed against official AI Act Service Desk pages and the official Regulation (EU) 2024/1689 text linked from the Service Desk. It is operational guidance for evidence planning. It is not legal advice, and it does not guarantee compliance.

- Article 113: entry into force and application

- Annex III: high-risk AI systems

- Article 11: technical documentation

- Article 12: record-keeping

- Article 14: human oversight

- Article 15: accuracy, robustness and cybersecurity

- Article 26: obligations of deployers of high-risk AI systems

- Article 50: transparency obligations

- Article 73: reporting of serious incidents

Disclaimer: Validate legal classification, regulator-facing submissions, GDPR/DPIA implications, employment-law duties, sector-specific obligations, and national-law questions with qualified counsel or a competent regulatory adviser.

About the author: Abhishek G Sharma is the founder of Move78 International Limited and holds ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, and CAIRO certifications.