Four Roles Under the EU AI Act (Article 3)

The EU AI Act assigns obligations based on your role, not your industry. Get the role wrong and you're building compliance against the wrong set of requirements. Most mid-market companies are deployers — they use AI tools built by vendors. But there are four roles, and some organisations fall into more than one.

Provider (Article 3(3))

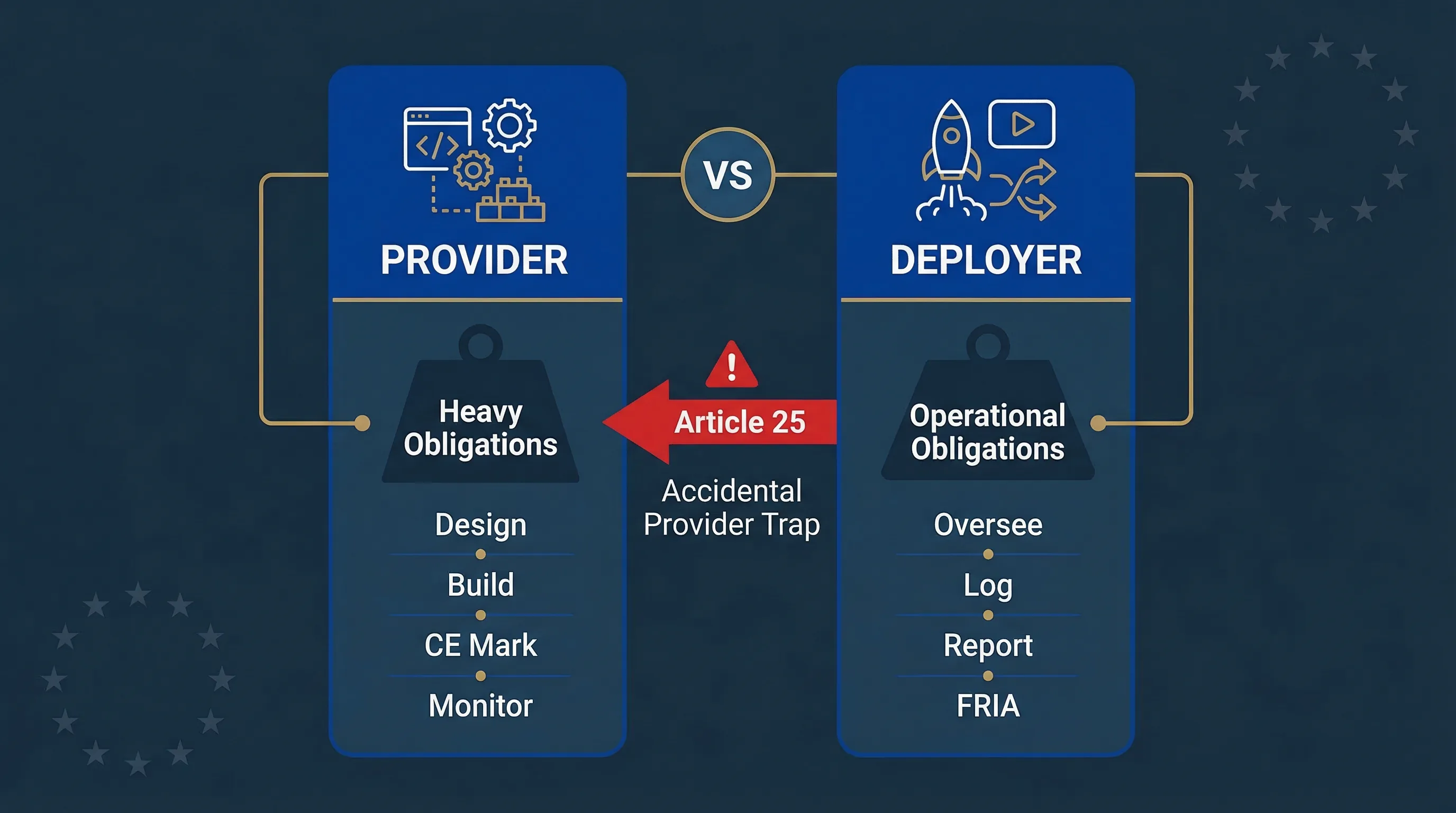

Any person or entity that develops an AI system (or has it developed) and places it on the EU market or puts it into service under its own name or trademark. If you build it and sell it under your name, you're a provider. Providers bear the heaviest obligations, which span the entire lifecycle from design through post-market monitoring.

Deployer (Article 3(4))

Any person or entity that uses an AI system under its authority in a professional capacity. If you use someone else's AI system in your business operations, you're a deployer. Deployer obligations are lighter than provider obligations but still substantial for high-risk systems: oversight, monitoring, logging, a fundamental rights impact assessment (FRIA), AI literacy, and incident reporting.

Importer (Article 3(6))

Brings an AI system from a non-EU provider onto the EU market. Must verify the provider completed conformity assessment before importing.

Distributor (Article 3(7))

Makes an AI system available on the EU market without being the provider or importer. Must verify CE marking and documentation before distributing.

The key insight for most readers:

Most mid-market companies are deployers. They use AI tools built by vendors — ATS platforms, credit scoring models, chatbots, analytics tools. The provider is the vendor. The deployer is you.

Provider vs Deployer: Obligation-by-Obligation Comparison

This is the reference table. 16 obligations, side by side. If you're a CISO or compliance lead who needs to brief your board on what's required, this is the slide you need.

| Obligation | Provider | Deployer |
|---|---|---|
| Risk management system | Must establish and maintain throughout AI lifecycle (Art. 9) | Must monitor system operation for risks (Art. 26(5)) |
| Data governance | Training/validation/testing data quality, bias examination (Art. 10) | Input data relevant and representative for intended purpose (Art. 26(4)) |
| Technical documentation | Full Annex IV documentation (Art. 11) | Obtain and follow provider's instructions for use (Art. 26(1)) |
| Record-keeping / logging | Design automatic logging into system (Art. 12) | Retain logs for minimum 6 months (Art. 26(6)) |
| Transparency to users | Provide information to deployers (Art. 13) | Inform affected persons they're subject to AI decision (Art. 26(11)) |
| Human oversight | Design oversight features into system (Art. 14) | Implement oversight using provider's specified measures (Art. 26(2)) |
| Accuracy, robustness, cybersecurity | Achieve appropriate levels through design and testing (Art. 15) | Implicitly covered through monitoring obligations |
| Conformity assessment | Complete before market placement (Art. 43) | Not required, but must verify provider completed it |
| CE marking | Affix after conformity assessment (Art. 48) | Verify CE marking exists |
| EU database registration | Register before market placement (Art. 49) | Register if deploying in public services context |
| Quality management system | Establish and maintain (Art. 17) | Not required, but evidence pack expected |
| Post-market monitoring | Systematic monitoring system (Art. 72) | Monitor in-use performance (Art. 26(5)) |
| Incident reporting | Report serious incidents to market surveillance authority (Art. 73) | Report to provider AND authority if serious incident detected (Art. 26(5)) |
| FRIA | Not required | Required for certain deployers (Art. 27) |
| AI literacy | Ensure own staff competence | Ensure all staff operating AI have sufficient literacy (Art. 4) |
| Workplace notification | Not applicable | Inform employees about AI use in workplace decisions (Art. 26(7)) |

Article 25: How Deployers Accidentally Become Providers

This is the section that catches people. Three specific actions can flip your status from deployer to provider overnight, and most organisations don't realise they've crossed the line until someone points it out.

Trigger 1: Putting Your Name or Trademark on It

If you take a vendor's AI system and market it under your own brand, you're a provider for that system. Common scenario: white-labelling an AI product, reselling AI under a different name. You inherit all provider obligations — conformity assessment, CE marking, technical documentation, post-market monitoring. That's not a paperwork exercise; it's a fundamental change in your legal position.

Trigger 2: Making a Substantial Modification

If you modify a high-risk AI system in a way that affects its compliance status or changes the originally assessed risk, you become the provider for the modified system. What counts as "substantial" is fact-specific, but includes: re-training on significantly different data, changing the model architecture, altering decision thresholds that affect risk classification, or adding new use cases the original provider didn't intend. Fine-tuning a vendor model on your proprietary data could cross this line depending on how significantly performance or behaviour changes.

Trigger 3: Changing the Intended Purpose

If you deploy a high-risk AI system for a purpose the provider didn't specify in their instructions for use, you become the provider. Example: using a fraud detection model for creditworthiness assessment. The provider designed it for fraud; you're using it for credit scoring — different Annex III area, different risk profile. That repurposing makes you the provider.

| Article 25 Trigger | What You Did | Result | Example |
|---|---|---|---|
| Rebranding | Put your name or trademark on vendor’s AI | YOU ARE PROVIDER | White-labelling an AI chatbot under your brand |
| Substantial modification | Changed compliance status or risk profile | YOU ARE PROVIDER | Re-training on significantly different data, changing decision thresholds |
| Changed intended purpose | Used system for purpose provider didn’t specify | YOU ARE PROVIDER | Using fraud detection model for credit scoring |

Before modifying any AI system:

Check whether the modification triggers Article 25. The difference between deployer and provider obligations is enormous — conformity assessment alone can add months and tens of thousands of euros to your compliance burden.
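The three triggers can be turned into a simple pre-change check. The following is a minimal sketch, not a legal test: `PlannedChange`, its field names, and both functions are hypothetical names invented for illustration, and whether a modification is "substantial" still has to be judged by a person on the facts before the flag is set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlannedChange:
    """Hypothetical record of what you plan to do with a vendor's high-risk AI system."""
    rebrands_under_own_name: bool    # Trigger 1: your name or trademark on it
    substantial_modification: bool   # Trigger 2: affects compliance status or assessed risk
    changes_intended_purpose: bool   # Trigger 3: use outside the provider's instructions for use


def article_25_triggers(change: PlannedChange) -> list[str]:
    """Return the names of the Article 25 triggers the planned change would hit."""
    triggers = []
    if change.rebrands_under_own_name:
        triggers.append("rebranding")
    if change.substantial_modification:
        triggers.append("substantial modification")
    if change.changes_intended_purpose:
        triggers.append("changed intended purpose")
    return triggers


def role_after_change(change: PlannedChange) -> str:
    """Any single trigger is enough to flip the role from deployer to provider."""
    return "provider" if article_25_triggers(change) else "deployer"


# Example from the text: a fraud detection model reused for credit scoring.
repurposed = PlannedChange(
    rebrands_under_own_name=False,
    substantial_modification=False,
    changes_intended_purpose=True,
)
print(role_after_change(repurposed), article_25_triggers(repurposed))
```

Returning the list of triggers, rather than only the resulting role, keeps a record of why a change was flagged, which is useful when the decision has to be explained later.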

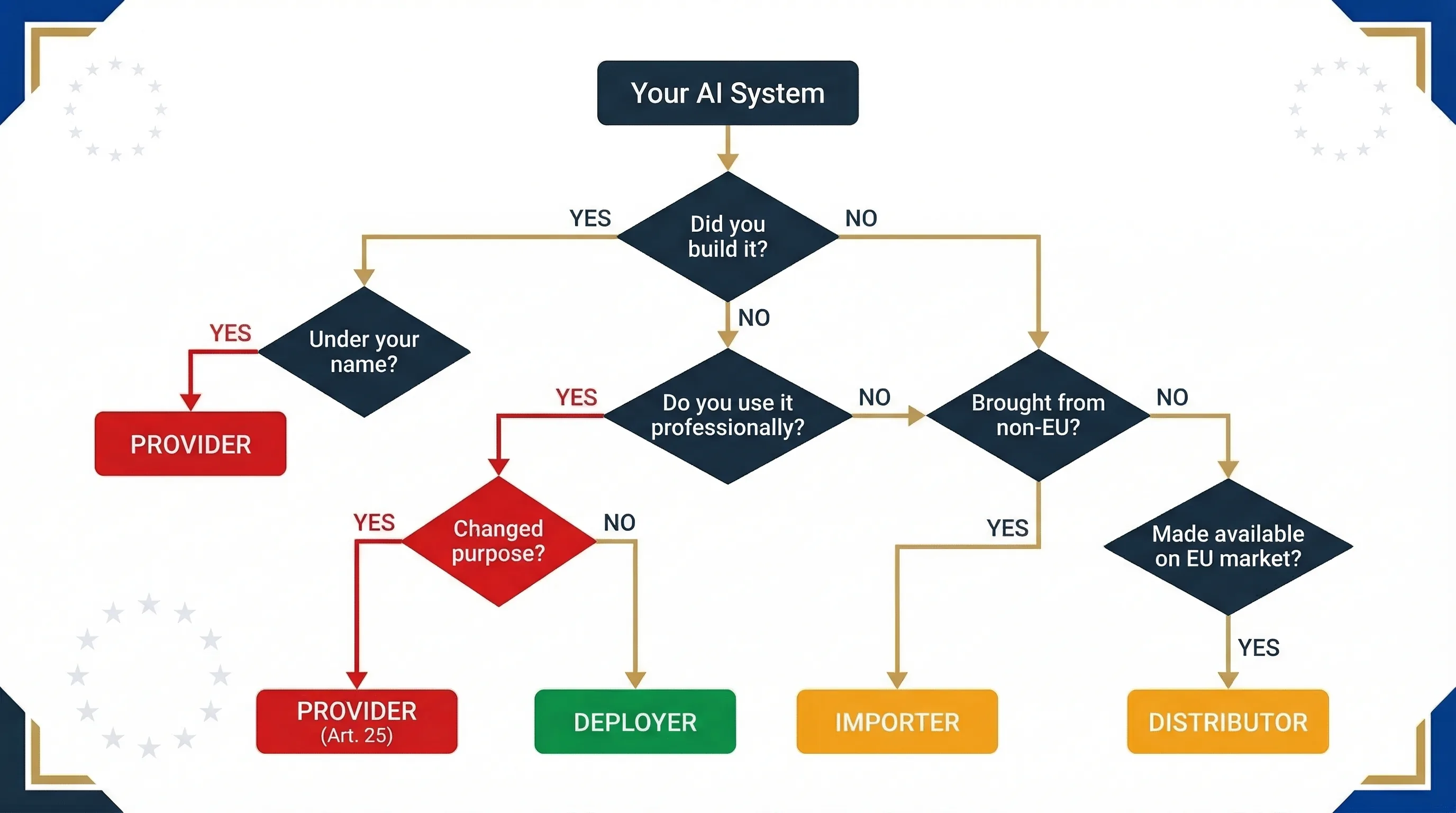

Decision tree: four questions to determine whether you're a provider, deployer, importer, or distributor under the EU AI Act.

Decision Tree: Determine Your EU AI Act Role in 4 Questions

Walk through this flowchart. Most organisations land on "deployer" within two questions.

Q1: Did you develop the AI system (or have it developed)?

YES → Did you place it on the EU market under your name or trademark? YES → You're a PROVIDER

YES, but under someone else's name → Did you make a substantial modification? YES → You're a PROVIDER (Article 25)

Q2: Do you use the AI system in a professional capacity?

YES → Did you change its intended purpose from what the provider specified? YES → You're a PROVIDER (Article 25)

YES, using as intended → You're a DEPLOYER

Q3: Did you bring a non-EU provider's system into the EU market?

YES → You're an IMPORTER

Q4: Did you make the system available on the EU market without being provider or importer?

YES → You're a DISTRIBUTOR

Most common result: Deployer. For your full deployer obligation checklist, see the High-Risk AI Deployer Guide. For role classification tools, use the Accidental Provider Classifier and Deployer Self-Assessment.
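The four questions above can also be written down as a small function. This is an illustrative sketch only: `RoleAnswers`, its field names, and `classify_role` are hypothetical, the function returns a single role per system, and it follows the question order of the decision tree rather than the full text of the Act. Because an organisation can hold different roles for different systems, it should be run once per system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleAnswers:
    """Hypothetical yes/no answers to the decision tree, for ONE AI system."""
    developed_system: bool = False
    placed_on_market_under_own_name: bool = False
    substantially_modified: bool = False
    uses_professionally: bool = False
    changed_intended_purpose: bool = False
    imported_from_non_eu_provider: bool = False
    makes_available_on_eu_market: bool = False


def classify_role(a: RoleAnswers) -> str:
    """Walk Q1 to Q4 in order and return the first role that matches."""
    # Q1: developed it (or had it developed)?
    if a.developed_system and (
        a.placed_on_market_under_own_name or a.substantially_modified
    ):
        return "provider"
    # Q2: professional use; a changed intended purpose triggers Article 25.
    if a.uses_professionally:
        return "provider" if a.changed_intended_purpose else "deployer"
    # Q3: brought a non-EU provider's system onto the EU market?
    if a.imported_from_non_eu_provider:
        return "importer"
    # Q4: made it available without being provider or importer?
    if a.makes_available_on_eu_market:
        return "distributor"
    return "unclassified"


# A company using a vendor's ATS platform as intended.
print(classify_role(RoleAnswers(uses_professionally=True)))
```

The "unclassified" result is a deliberate fallback for answer sets the tree does not cover; it signals that the case needs a closer look rather than implying the Act does not apply.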

FAQ: Provider vs Deployer Under the EU AI Act

If you use an AI system that a vendor built and marketed, you are a deployer. You use the system under your authority in a professional capacity, and the vendor is the provider. Your obligations focus on oversight, monitoring, logging, and evidence, not system design.

Yes, an organisation can be both a provider and a deployer. If you build one AI system (provider) and use a different vendor's AI system (deployer), you have provider obligations for the first and deployer obligations for the second. Classify each system independently.

Fine-tuning a vendor's model can possibly make you a provider. If the fine-tuning substantially changes the system's compliance status or risk profile, Article 25 applies and you become the provider for the modified system. The assessment depends on how significantly behaviour changed.

No, a compliant provider does not make you compliant as a deployer. Provider compliance covers system design. Deployer compliance covers operational use: oversight, monitoring, logging, input data quality, FRIA, AI literacy, incident reporting, and vendor verification. You need both.

The same penalty structure applies to both roles. For high-risk system violations: up to 15 million euros or 3 percent of total worldwide annual turnover, whichever is higher. For prohibited practices: up to 35 million euros or 7 percent, whichever is higher. The distinction is which obligations you violated, not which role you hold.

If you bring a non-EU provider's AI system onto the EU market, you are an importer under Article 3(6). You must verify the provider completed conformity assessment, the system has CE marking, and technical documentation is available before placing it on the EU market.
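As a worked example of the penalty ceilings quoted in this FAQ, the sketch below computes the maximum fine for a given turnover. It assumes the ceiling is the higher of the fixed amount and the turnover percentage, and it ignores the separate treatment the Act gives to SMEs. The function name and parameters are hypothetical, and the result is an upper bound, not a prediction of an actual fine.

```python
def fine_ceiling_eur(turnover_eur: int, prohibited_practice: bool = False) -> int:
    """Upper bound of the fine in euros, assuming the 'whichever is higher' rule.

    turnover_eur: total worldwide annual turnover in whole euros.
    prohibited_practice: True for prohibited practices (35M / 7 percent),
    False for high-risk system violations (15M / 3 percent).
    """
    if prohibited_practice:
        fixed_cap_eur, percent = 35_000_000, 7
    else:
        fixed_cap_eur, percent = 15_000_000, 3
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based_eur = turnover_eur * percent // 100
    return max(fixed_cap_eur, turnover_based_eur)


# 200M turnover: 3 percent is 6M, so the 15M fixed amount is the ceiling.
print(fine_ceiling_eur(200_000_000))
# 1bn turnover: 3 percent is 30M, which exceeds the 15M fixed amount.
print(fine_ceiling_eur(1_000_000_000))
# 1bn turnover, prohibited practice: 7 percent is 70M.
print(fine_ceiling_eur(1_000_000_000, prohibited_practice=True))
```

For mid-market companies the fixed amount is usually the binding figure: the percentage only takes over above 500 million euros of turnover in both tiers.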


Disclaimer & Limitations

This guide is for educational and informational purposes only. It does not constitute legal or regulatory advice. EU AI Compass tools are educational aids, not certified compliance instruments. Consult qualified legal counsel before making compliance decisions. Move78 International Limited is not a law firm. All regulatory references are accurate as of the publication date based on eu-ai-rules-engine v2.4. The Digital Omnibus is a proposal, not enacted law.