Quick answer

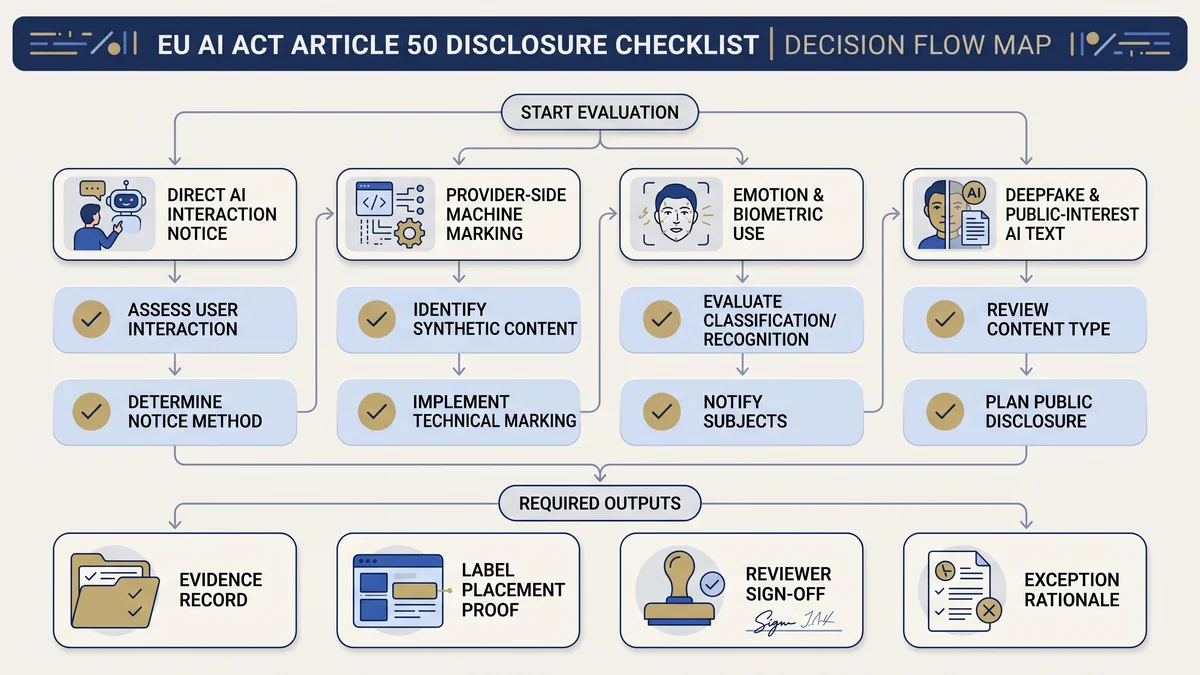

EU AI Act Article 50 disclosure work is trigger-based. Do not label every AI workflow by default. Check whether the system involves direct interaction with an AI system, synthetic output that must be marked, emotion recognition or biometric categorisation that requires a notice, a deepfake that requires disclosure, or AI-generated text on a matter of public interest that requires disclosure. Then retain the label decision, wording, placement, screenshot proof, reviewer, exception rationale, and next review date.

This page is an implementation checklist. For legal interpretation, use qualified counsel and official sources.

Who this checklist is for

This checklist is for deployers, product owners, marketing teams, communications teams, HR teams, DPOs, legal teams, compliance teams, and AI governance owners who need to decide whether Article 50 transparency evidence is required before an AI system or AI-generated content goes live.

Use it when

- Your team publishes AI-generated or AI-manipulated content.

- Your product includes chatbot or AI interaction flows.

- Your workflow may create deepfake image, audio, or video.

- Your system uses emotion recognition or biometric categorisation.

- Your organisation publishes AI-generated text about public-interest matters.

Do not use it as

- A legal opinion.

- A guarantee that a label is sufficient.

- A replacement for GDPR, employment-law, platform, copyright, or sector review.

- A substitute for provider evidence on machine-readable marking.

- A generic rule that every AI-assisted document must be labelled.

Article 50 trigger map

Article 50 transparency is not one control. It is a set of trigger-based duties split across providers and deployers. The first job is to identify the trigger. The second job is to record who owns the disclosure, marking, notice, or evidence record.

| Trigger | Primary operational question | Evidence to retain | Default owner |
|---|---|---|---|
| Direct interaction with an AI system | Will a natural person interact directly with an AI system, and is that obvious from context? | UX notice decision, notice wording, first-interaction screenshot, exception rationale. | Provider/system owner, with deployer UX evidence where deployed locally. |
| Synthetic audio, image, video, or text output | Does the provider’s system generate synthetic content that should be machine-readable and detectable as artificial or manipulated? | Provider marking statement, technical evidence request, vendor response, residual gap note. | Provider, with deployer vendor-evidence file. |
| Emotion recognition or biometric categorisation | Are exposed natural persons informed of the operation of the system, and has privacy review been completed? | Notice copy, placement, DPIA/privacy route, approval record, system owner, review date. | Deployer + DPO/legal/privacy. |
| Deepfake image, audio, or video | Does the content constitute a deepfake that needs disclosure unless an exception applies? | Disclosure label, publication proof, creative/satirical/fictional exception rationale if used. | Deployer/content owner + legal/comms. |
| Public-interest AI-generated or manipulated text | Is the text published to inform the public on a matter of public interest? | Editorial review note, disclosure decision, human review evidence, responsible editor or publisher record. | Deployer/editorial owner + legal/comms. |
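Teams that keep the register in a tool may find it useful to hold the trigger map as data, so each scenario is asked only for the evidence its triggers need. The sketch below is a minimal Python illustration under stated assumptions: the `Trigger` enum, `TRIGGER_MAP`, and `evidence_plan()` are hypothetical names, the evidence lists and owners are transcribed from the table, and the code does not decide whether a trigger legally applies.

```python
from enum import Enum


class Trigger(Enum):
    """Article 50 trigger categories from the trigger map (hypothetical names)."""

    DIRECT_INTERACTION = "direct_interaction"
    SYNTHETIC_OUTPUT = "synthetic_output"
    EMOTION_OR_BIOMETRIC = "emotion_or_biometric"
    DEEPFAKE = "deepfake"
    PUBLIC_INTEREST_TEXT = "public_interest_text"


# (evidence to retain, default owner), transcribed from the trigger map table.
TRIGGER_MAP = {
    Trigger.DIRECT_INTERACTION: (
        ["UX notice decision", "notice wording", "first-interaction screenshot", "exception rationale"],
        "Provider/system owner, with deployer UX evidence where deployed locally",
    ),
    Trigger.SYNTHETIC_OUTPUT: (
        ["provider marking statement", "technical evidence request", "vendor response", "residual gap note"],
        "Provider, with deployer vendor-evidence file",
    ),
    Trigger.EMOTION_OR_BIOMETRIC: (
        ["notice copy", "placement", "DPIA/privacy route", "approval record", "system owner", "review date"],
        "Deployer + DPO/legal/privacy",
    ),
    Trigger.DEEPFAKE: (
        ["disclosure label", "publication proof", "exception rationale if used"],
        "Deployer/content owner + legal/comms",
    ),
    Trigger.PUBLIC_INTEREST_TEXT: (
        ["editorial review note", "disclosure decision", "human review evidence",
         "responsible editor or publisher record"],
        "Deployer/editorial owner + legal/comms",
    ),
}


def evidence_plan(triggers: set[Trigger]) -> dict[str, tuple[list[str], str]]:
    """Map each identified trigger to its evidence list and default owner.

    An empty set returns an empty plan: no identified trigger, no Article 50 label by default.
    Output is sorted by trigger value so register exports are stable.
    """
    return {t.value: TRIGGER_MAP[t] for t in sorted(triggers, key=lambda t: t.value)}
```

For example, `evidence_plan({Trigger.DEEPFAKE, Trigger.PUBLIC_INTEREST_TEXT})` returns two entries, one per trigger, rather than a generic "AI use" item.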

Free tool path: run the AI Content Marking Checker, then add the output to your disclosure decision register.

Provider vs deployer responsibility split

The most common Article 50 mistake is treating provider-side marking and deployer-side disclosure as the same control. They are connected, but they are not interchangeable. A deployer may need vendor evidence that marking is available, but the deployer still needs its own publication, notice, label, exception, and review records where it controls the use case.

Provider-side evidence to request

- Direct-interaction notice design statement.

- Machine-readable marking statement for synthetic output.

- Technical limitations of marking and detection.

- Whether assistive editing or non-substantial alteration exceptions are claimed.

- Update/change notice for marking features.

Deployer-side evidence to create

- Disclosure trigger register.

- Notice or label wording.

- Placement screenshot or publication proof.

- Human review or editorial responsibility record.

- Exception rationale and legal/privacy escalation record.
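One way to keep provider-side requests and deployer-side records apart is to track them as two separate lists. This is a hedged sketch: `PROVIDER_EVIDENCE`, `DEPLOYER_CORE_RECORDS`, `outstanding_provider_evidence()`, and `file_gaps()` are hypothetical names, the items mirror the bullets above, and a recorded vendor response only shows that evidence was received, not that it is sufficient. Human review and exception records are left out of the core deployer list because they apply only in some scenarios.

```python
# Provider-side evidence to request, mirroring the checklist bullets (hypothetical keys).
PROVIDER_EVIDENCE = (
    "direct-interaction notice design statement",
    "machine-readable marking statement",
    "marking and detection limitations",
    "claimed assistive-editing or non-substantial-alteration exceptions",
    "marking feature update/change notice",
)

# Deployer-side records needed in most scenarios (hypothetical keys).
DEPLOYER_CORE_RECORDS = (
    "disclosure trigger register",
    "notice or label wording",
    "placement screenshot or publication proof",
)


def outstanding_provider_evidence(responses: dict[str, str]) -> list[str]:
    """Return requested items with no recorded vendor response.

    `responses` maps an item name to where the vendor's answer is filed;
    each outstanding item should become a residual gap note.
    """
    return [item for item in PROVIDER_EVIDENCE if not responses.get(item)]


def file_gaps(responses: dict[str, str], deployer_records: dict[str, str]) -> list[str]:
    """Combine both sides: complete provider evidence never fills deployer gaps."""
    gaps = [f"provider: {item}" for item in outstanding_provider_evidence(responses)]
    gaps += [f"deployer: {rec}" for rec in DEPLOYER_CORE_RECORDS if not deployer_records.get(rec)]
    return gaps
```

The design choice is deliberate: even when every provider item is filed, `file_gaps()` still reports missing deployer records, which is the distinction this section describes.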

Disclosure evidence checklist

Use this table as the operating file. The aim is not to create more paperwork. The aim is to make the disclosure decision reconstructable six months later when a buyer, auditor, board, regulator, journalist, customer, or affected person asks what happened.

| Evidence item | What to record | Why it matters |
|---|---|---|
| Scenario ID | System, content workflow, campaign, product feature, or publication channel. | Creates one controlled population for testing Article 50 decisions. |
| Article 50 trigger | Direct interaction, provider marking, emotion recognition, biometric categorisation, deepfake, or public-interest text. | Prevents vague “AI use” labels from replacing trigger analysis. |
| Role split | Provider duty, deployer duty, shared dependency, or vendor evidence request. | Stops deployer files from claiming provider-side controls without proof. |
| Label or notice wording | Exact disclosure text, language version, channel, and accessibility consideration. | Shows the information was clear, distinguishable, and accessible. |
| Placement proof | Screenshot, URL, app screen, content sample, notice page, or publication record. | Proves the label or notice was visible at first interaction or exposure. |
| Exception rationale | Human review/editorial responsibility, creative/satirical/fictional context, assistive editing, or authorised-law rationale where relevant. | Exception decisions are weak if they are not versioned and approved. |
| Reviewer and approver | Business owner, legal/privacy reviewer, DPO, comms owner, product owner, and approval date. | Creates accountability and review traceability. |
| Review trigger | Model update, workflow change, new channel, public-interest topic, biometric use, content republishing, or guidance update. | Stops stale disclosure decisions from surviving after the facts change. |
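The evidence checklist can be expressed as one record per scenario plus a completeness check, which makes the "reconstructable later" test concrete. The following is a minimal sketch, assuming a hypothetical `DisclosureRecord` dataclass and `evidence_gaps()` / `review_due()` helpers; the field names are illustrative and an empty gap list means the record is complete, not that the disclosure is legally sufficient.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class DisclosureRecord:
    """One row of the disclosure evidence file (hypothetical field names)."""

    scenario_id: str
    trigger: str
    role_split: str
    label_shown: bool = True
    wording: str = ""
    placement_proof: str = ""
    exception_rationale: str = ""
    reviewer: str = ""
    approver: str = ""
    approval_date: Optional[date] = None
    review_triggers: list[str] = field(default_factory=list)
    next_review: Optional[date] = None


def evidence_gaps(record: DisclosureRecord) -> list[str]:
    """Name the empty fields that would stop the decision being reconstructed later."""
    required = ["scenario_id", "trigger", "role_split", "reviewer", "approver",
                "approval_date", "review_triggers", "next_review"]
    # A shown label needs wording and placement proof; no label needs an exception rationale.
    required += ["wording", "placement_proof"] if record.label_shown else ["exception_rationale"]
    return [name for name in required if not getattr(record, name)]


def review_due(record: DisclosureRecord, today: date) -> bool:
    """True when no next review date is set or that date has arrived."""
    return record.next_review is None or today >= record.next_review
```

Treating an empty `review_triggers` list as a gap is a conservative choice that follows the table's point about stale decisions surviving after the facts change.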

Deepfake and public-interest text decision table

Deepfake and public-interest text decisions are where Article 50 becomes visible outside the compliance team. Marketing, communications, public policy, investor relations, customer education, HR, and product content owners need a routing path before publication.

| Scenario | Checklist question | Evidence to retain | Escalation |
|---|---|---|---|
| AI-generated or manipulated video resembling a real person, object, place, entity, or event | Could a person falsely perceive it as authentic or truthful? | Deepfake decision note, disclosure label, publication screenshot, creative exception rationale if used. | Legal + comms. |
| AI-generated image or audio used in product, marketing, or training material | Is it synthetic or manipulated in a way that changes meaning or authenticity? | Content generation record, label decision, reviewer, channel placement proof. | Content owner + legal if public-facing. |
| AI-generated report, article, explainer, policy note, or public update | Is the text published to inform the public on a matter of public interest? | Public-interest decision, human review evidence, editorial responsibility record, disclosure decision. | Legal + editor/comms. |
| Creative, satirical, fictional, or analogous work | Can the disclosure be limited without hampering display or enjoyment? | Creative-context rationale, limited disclosure wording, approval record. | Legal + creative owner. |
| Human-reviewed AI-generated publication | Was there meaningful human review or editorial control, and who holds responsibility? | Review log, accountable person, editorial responsibility statement, final approval date. | Editorial owner + legal. |
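The public-interest text rows reduce to a short routing sequence: scope first, then the authorised-law exception, then the editorial-control exception, which needs both human review or editorial control and a person holding editorial responsibility. The sketch below encodes that order as a routing aid for legal and editorial reviewers, not a legal test; `TextPublication` and `text_disclosure_route()` are hypothetical names, and "out of scope" here covers only this trigger, not deepfake or other Article 50 triggers.

```python
from dataclasses import dataclass


@dataclass
class TextPublication:
    """Facts about one AI-generated or manipulated text (hypothetical fields)."""

    ai_generated_or_manipulated: bool
    informs_public_on_public_interest: bool
    authorised_by_law_for_criminal_offences: bool = False
    human_review_or_editorial_control: bool = False
    editorial_responsibility_holder: str = ""  # named natural or legal person


def text_disclosure_route(pub: TextPublication) -> str:
    """Suggest a route for review; the output is an input to legal review, not a decision."""
    if not (pub.ai_generated_or_manipulated and pub.informs_public_on_public_interest):
        # Out of scope for the public-interest text trigger only; other triggers may still apply.
        return "out-of-scope: record the scoping rationale"
    if pub.authorised_by_law_for_criminal_offences:
        return "exception: qualified legal review required"
    # The editorial exception needs review/control AND a named responsible person.
    if pub.human_review_or_editorial_control and pub.editorial_responsibility_holder:
        return "exception: retain review log and editorial responsibility record"
    return "disclose: AI-generated or manipulated text"
```

Note that human review alone, with no named responsibility holder, still routes to disclosure in this sketch.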

Biometric categorisation and emotion recognition notices

Emotion recognition and biometric categorisation are not simple content-labelling issues. Article 50 requires deployers to inform exposed natural persons of the operation of those systems and process personal data under applicable data-protection law. Treat these use cases as legal, privacy, security, and governance escalation items.

Conservative rule: do not let product or HR teams self-approve biometric categorisation or emotion-recognition notices. Route to DPO/privacy, legal, security, and the business owner before deployment or exposure.

| Evidence area | What to keep |
|---|---|
| System purpose | Purpose statement, affected population, channel, geography, and lawful-use rationale. |
| Notice evidence | Notice wording, placement proof, timing, language accessibility, and exposed-person channel. |
| Privacy routing | DPIA route, data categories, retention, access control, vendor processor/controller analysis, and DPO review. |
| Governance approval | Legal/privacy/security sign-off, unresolved risks, review date, and deployment owner. |
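The conservative rule above can be enforced as a deployment gate in whatever tool tracks approvals. This is a minimal sketch, assuming hypothetical role keys and a hypothetical `deployment_blockers()` helper: only the four listed sign-offs count, so approvals recorded by other roles such as `"product"` or `"hr"` never clear the gate on their own.

```python
# Sign-offs required before an emotion-recognition or biometric-categorisation use goes live
# (hypothetical role keys, taken from the conservative rule above).
REQUIRED_SIGNOFFS = frozenset({"dpo_privacy", "legal", "security", "business_owner"})


def deployment_blockers(signoffs: set[str], notice_wording: str,
                        notice_placement: str, dpia_route: str) -> list[str]:
    """List what still blocks deployment; an empty list means the gate is clear.

    Sign-offs from roles outside REQUIRED_SIGNOFFS are ignored, so product or HR
    teams cannot self-approve.
    """
    blockers = [f"missing sign-off: {role}" for role in sorted(REQUIRED_SIGNOFFS - signoffs)]
    if not notice_wording:
        blockers.append("missing notice wording")
    if not notice_placement:
        blockers.append("missing notice placement")
    if not dpia_route:
        blockers.append("missing DPIA/privacy route")
    return blockers
```

A clear gate only shows that the routing happened; unresolved risks from the governance approval row still belong in the record.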

Label wording, placement, timing, and proof

Article 50 requires the relevant information to be clear, distinguishable, accessible, and provided no later than the first interaction or exposure. The practical test is simple: a reviewer should be able to see what the person saw, when they saw it, and why that disclosure route was chosen.

Usable wording patterns

- “This content was artificially generated or manipulated.”

- “You are interacting with an AI system.”

- “This image/audio/video includes AI-generated or AI-manipulated content.”

- “This publication includes AI-generated text reviewed by [role/team].”

Proof to retain

- Screenshot at first interaction or exposure.

- URL or app screen where the notice appears.

- Language version and accessibility review.

- Approver and publication date.

- Exception decision if no label is shown.
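The timing rule and the proof list can both be checked mechanically once timestamps and proof locations are recorded. The sketch below is illustrative only: `notice_in_time()`, `missing_proof()`, and the proof keys are hypothetical, and it assumes the notice and first-exposure times are logged in the same timezone so they can be compared directly.

```python
from datetime import datetime
from typing import Optional

# Proof items from the list above (hypothetical keys).
LABEL_PROOF = ("screenshot", "location", "language_version", "accessibility_review",
               "approver", "publication_date")
NO_LABEL_PROOF = ("exception_decision", "approver", "publication_date")


def notice_in_time(notice_shown_at: Optional[datetime], first_exposure_at: datetime) -> bool:
    """True only if the notice was shown at or before the first interaction or exposure."""
    return notice_shown_at is not None and notice_shown_at <= first_exposure_at


def missing_proof(proof: dict[str, str], label_shown: bool) -> list[str]:
    """List proof items that are absent or empty for this disclosure."""
    required = LABEL_PROOF if label_shown else NO_LABEL_PROOF
    return [item for item in required if not proof.get(item)]
```

Here "location" stands for the URL or app screen where the notice appears; a missing notice timestamp is treated as a failure rather than assumed to be on time.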

Exceptions and escalation rules

Do not treat exceptions as informal judgement calls. If the team relies on an exception, record the legal basis, facts, reviewer, approval date, and source version. Weak exception evidence creates more risk than a conservative label.

| Exception or narrower treatment | Do not decide without | Record |
|---|---|---|
| Obvious AI interaction from context | UX review and user-context assessment. | Why the interaction is obvious to a reasonably well-informed, observant, and circumspect person. |
| Assistive editing or non-substantial alteration | Provider evidence and content-owner review. | Why the output does not substantially alter input data or semantics. |
| Authorised-law use for criminal-offence purposes | Qualified legal review. | Legal authority, safeguards, owner, and approval reference. |
| Evidently artistic, creative, satirical, fictional, or analogous work | Legal/comms review. | Why limited disclosure is appropriate and how it avoids hampering the display or enjoyment of the work. |
| Human review/editorial control for public-interest text | A named natural or legal person who holds editorial responsibility. | Review evidence, editorial responsibility, final approval, and publication record. |
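The rule that exceptions are not informal judgement calls can be expressed as a check that every claimed exception carries its record fields and the review the table requires. This is a hedged sketch: `REQUIRED_REVIEWS`, `ExceptionClaim`, `exception_gaps()`, and the review keys are hypothetical, and an empty result only means the claim is documented, not that the exception applies.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

# Reviews each exception needs before it is relied on, keyed from the table (hypothetical keys).
REQUIRED_REVIEWS = {
    "obvious_interaction": {"ux_review"},
    "assistive_editing": {"provider_evidence", "content_owner_review"},
    "authorised_law": {"qualified_legal_review"},
    "creative_work": {"legal_comms_review"},
    "editorial_control": {"named_editorial_responsibility"},
}


@dataclass
class ExceptionClaim:
    """One exception decision (hypothetical fields)."""

    exception_type: str
    legal_basis: str = ""
    facts: str = ""
    reviewer: str = ""
    approval_date: Optional[date] = None
    source_version: str = ""  # version of the legal text or guidance relied on
    reviews_done: set[str] = field(default_factory=set)


def exception_gaps(claim: ExceptionClaim) -> list[str]:
    """List what is missing before the exception can be relied on."""
    if claim.exception_type not in REQUIRED_REVIEWS:
        return [f"unknown exception type: {claim.exception_type}"]
    gaps = [name for name in ("legal_basis", "facts", "reviewer", "approval_date", "source_version")
            if not getattr(claim, name)]
    missing_reviews = REQUIRED_REVIEWS[claim.exception_type] - claim.reviews_done
    gaps += [f"missing review: {r}" for r in sorted(missing_reviews)]
    return gaps
```

Unknown exception types are rejected outright, so a new exception route has to be added to the table (and reviewed) before it can be claimed.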

Escalate whenever the content could affect elections, financial decisions, employment, health, public safety, public policy, biometric data, children, vulnerable groups, or regulated sectors. This checklist does not replace legal review.

30-day Article 50 evidence sprint

A 30-day sprint should create a disclosure baseline, not a perfect transparency programme. Start with public-facing and people-facing workflows first, then extend to internal content, vendor-dependent marking evidence, and review triggers.

| Week | Workstream | Output |
|---|---|---|
| Week 1 | Inventory AI interaction and content channels. | List chatbots, AI assistants, synthetic content workflows, public publications, biometric/emotion systems, and owners. |
| Week 2 | Classify Article 50 triggers. | Trigger register split by provider marking, deployer notices, deepfake disclosure, public-interest text, and exceptions. |
| Week 3 | Draft notices, labels, and evidence records. | Label wording, screenshot plan, exception template, review routing, and vendor evidence requests. |
| Week 4 | Approve, publish, retain, and review. | Approved disclosure register, screenshot proof, owner sign-off, unresolved gap list, next review date. |

Free tools and guides to use first

- AI Content Marking Checker: check whether an Article 50 disclosure or marking trigger may apply.

- Article 50 Transparency Guide: read the deeper explanation of the obligation.

- EU AI Act Evidence Checklist: map disclosure evidence into the broader deployer evidence file.

- AI System Inventory Template: connect Article 50 decisions to controlled AI system records.

Common mistakes that weaken Article 50 evidence

- Labelling everything as AI-generated. Over-labelling can hide the actual Article 50 trigger and make evidence less precise.

- Confusing provider marking with deployer disclosure. Provider-side machine-readable marking evidence does not replace deployer publication records.

- No screenshot proof. If nobody can show what the user or exposed person saw, the disclosure record is weak.

- No exception rationale. Human review, editorial responsibility, creative context, and assistive editing need a record.

- Leaving biometric and emotion recognition to product teams alone. These workflows need privacy/legal escalation.

- Forgetting public-interest text. AI-generated public reports, policy updates, investor materials, or customer advisories may need a documented review path.

Where E1/E2 fits after the free Article 50 path

Use the free checker and this checklist first. If you find one isolated gap, fix the wording, screenshot, exception record, or vendor request locally. If repeated disclosure gaps appear across products, campaigns, HR systems, public publications, or biometric workflows, E1/E2 can become the implementation layer for templates, control mapping, registers, and board-ready evidence packs.

Do not buy implementation material before you know which disclosure triggers, owners, and evidence gaps matter. Diagnose first. Package second.

FAQ: EU AI Act Article 50 disclosure checklist

No. Article 50 is trigger-specific. It covers direct AI interaction notices, provider-side marking for synthetic audio, image, video or text outputs, deployer notices for emotion recognition or biometric categorisation, deepfake disclosure, and disclosure for certain AI-generated or manipulated public-interest text. Exceptions and context matter.

Start with a disclosure trigger register. Record each AI interaction, synthetic content workflow, biometric or emotion-recognition use, deepfake scenario, and public-interest text workflow. For each scenario, record the owner, channel, label decision, exception rationale, evidence location, and next review date.

Article 50 places machine-readable marking obligations on providers of AI systems that generate synthetic audio, image, video or text content. Deployers should still request provider evidence when they rely on those systems, but they should not treat provider marking as a replacement for their own disclosure and publication evidence.

Deployers of AI systems that generate or manipulate image, audio or video content constituting a deepfake must disclose that the content has been artificially generated or manipulated, unless a listed exception applies. Creative, satirical, fictional and analogous works receive a narrower disclosure treatment that should still be documented.

Not always. Article 50 targets AI-generated or manipulated text published to inform the public on matters of public interest. The obligation does not apply where the use is authorised by law for specified criminal-law purposes, or where the content has undergone human review or editorial control and a person holds editorial responsibility.

Store the scenario decision, label wording, placement record, screenshot or publication proof, reviewer, approval date, exception rationale, and source/version reviewed. For content channels, keep evidence by campaign, page, release, workflow, or system so the team can reconstruct the decision later.

Yes. Article 50 requires deployers of emotion recognition or biometric categorisation systems to inform exposed natural persons and process personal data according to applicable data-protection law. Treat these workflows as legal, privacy, security and governance escalation items, not simple website-label decisions.

No. This checklist is operational guidance for evidence planning. It does not determine legal status, confirm compliance, or replace advice from qualified counsel. Validate Article 50 interpretation, GDPR/DPIA implications, employment duties, sector rules, and national-law questions with appropriate professionals.

Source and review note

This page was reviewed against official AI Act Service Desk pages, the official Regulation (EU) 2024/1689 text linked from the Service Desk, and European Commission information on the Code of Practice on marking and labelling of AI-generated content. It is operational guidance for evidence planning. It is not legal advice, and it does not guarantee compliance.

- Article 50: transparency obligations for providers and deployers of certain AI systems

- Article 113: entry into force and application

- European Commission: Code of Practice on marking and labelling of AI-generated content

- Article 26: obligations of deployers of high-risk AI systems

- Article 27: fundamental rights impact assessment

Disclaimer: Validate legal classification, disclosure wording, GDPR/DPIA implications, employment-law duties, sector-specific obligations, public-interest publication questions, and national-law issues with qualified counsel or a competent regulatory adviser.

About the author: Abhishek G Sharma is the founder of Move78 International Limited and holds ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, and CAIRO certifications.