Current status note

Reviewed 30 April 2026. The EU AI Act baseline remains Regulation (EU) 2024/1689. Article 113 uses staged application dates. Colorado SB24-205 has been delayed by SB25B-004 to 30 June 2026, but litigation, rulemaking, and enforcement timing remain unsettled. Re-check Colorado status before publication, client reliance, legal sign-off, or board reporting.

Quick answer: EU AI Act vs Colorado AI Act

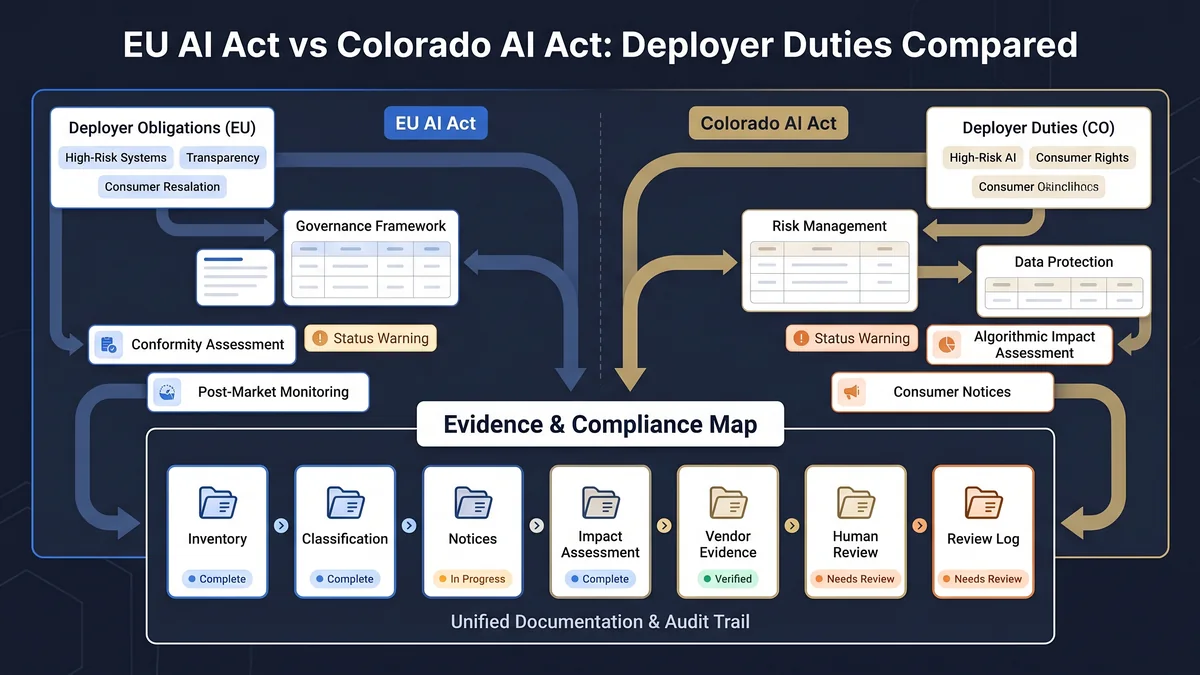

The EU AI Act and Colorado AI Act are not equivalent. The EU AI Act uses provider/deployer roles, Annex III high-risk classification, Article 26 deployer duties, Article 50 transparency obligations, and staged Article 113 dates. Colorado SB24-205 focuses on high-risk AI systems, algorithmic discrimination, consequential decisions, risk management programs, impact assessments, notices, correction, appeal, public statements, and attorney-general enforcement. The operational overlap lies in evidence: inventory, risk routing, notices, assessments, vendor records, review, and escalation.

Side-by-side comparison for deployers

This table compares operating implications, not legal equivalence. Use it to identify which evidence fields should exist in your inventory and control library.

| Dimension | EU AI Act | Colorado AI Act |
|---|---|---|
| Core legal instrument | Regulation (EU) 2024/1689. | Colorado SB24-205, with its effective date delayed by SB25B-004. |
| Core concern | Risk-based AI regulation across prohibited practices, high-risk systems, transparency, GPAI, governance, and market surveillance. | Known or reasonably foreseeable risks of algorithmic discrimination from high-risk AI systems in consequential-decision contexts. |
| Main actors | Provider, deployer, importer, distributor, product manufacturer, authorised representative, and operator. | Developer and deployer, plus persons doing business in Colorado where the statutory conditions are met. |
| High-risk route | Article 6 and Annex III, plus Annex I safety-component route and limited Article 6(3) non-high-risk logic. | High-risk artificial intelligence systems used to make or substantially influence consequential decisions. |
| Deployer duties | Article 26 duties for high-risk systems, plus Article 50 duties where specified transparency scenarios apply. | Reasonable care, risk management program, impact assessment, annual review, consumer notices, correction route, appeal or human review where technically feasible, public statements, and attorney-general disclosure when algorithmic discrimination is discovered. |
| Transparency | Article 50 covers direct AI interaction, synthetic content marking, emotion recognition / biometric categorisation notification, deepfake disclosure, and certain public-interest text disclosures. | Consumer disclosures and notices tied to AI interaction and consequential-decision contexts. |
| Impact assessment | Article 27 FRIA for specified high-risk deployments; GDPR DPIA may be relevant where personal-data processing creates high risk. | Impact assessment duties for high-risk AI systems, plus annual review logic. |
| Evidence focus | Inventory, instructions for use, logs, oversight, incident path, notices, FRIA/DPIA routing, classification rationale, and Article 113 timing. | Risk management policy, impact assessment, public statement, consumer notices, correction or appeal process, annual review, and algorithmic-discrimination escalation. |
| Enforcement route | EU and national competent authority / market surveillance structure. | Colorado Attorney General exclusive enforcement route under the act. |
| Current timing | Staggered Article 113 application dates. | SB25B-004 delayed the effective date to 30 June 2026; litigation and enforcement uncertainty remain active. |

Where the regimes overlap operationally

The overlap is not a legal shortcut. It is a practical control architecture. A single evidence system can support both regimes if each jurisdiction-specific decision is separately documented.

AI system inventory

Both regimes require enough system context to understand use, ownership, affected persons, vendor involvement, and decision impact.

Higher-risk routing

EU teams need Article 6 and Annex III routing. Colorado teams need high-risk AI and consequential-decision routing.

Role mapping

EU provider/deployer mapping and Colorado developer/deployer mapping should sit side by side in the inventory.

Vendor evidence

Vendor or developer documentation becomes the handoff layer for impact assessment, safe use, monitoring, and notices.

Impact assessment

Both regimes push teams toward a written assessment record for higher-risk uses, even though the required content and trigger logic differ.

Human review and escalation

Human oversight, appeal, correction, incident handling, and discrimination escalation should be assigned to named owners.
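
The idea of one record with separately documented jurisdiction decisions can be sketched in a few lines of Python. This is a minimal illustration, not a legal classification engine: the class names, field names, and the `review_queue` helper are hypothetical, and every flag is assumed to be entered by a human reviewer rather than inferred by code.

```python
from dataclasses import dataclass, field


@dataclass
class RouteDecision:
    """One jurisdiction-specific decision, documented on its own."""
    regime: str         # e.g. "EU AI Act" or "Colorado SB24-205"
    role: str           # e.g. "deployer", "provider", "developer"
    higher_risk: bool   # the reviewer's conclusion, not computed here
    rationale: str      # why the reviewer reached that conclusion
    owner: str = ""     # named person accountable for review and escalation


@dataclass
class SystemRecord:
    name: str
    decisions: list[RouteDecision] = field(default_factory=list)


def review_queue(record: SystemRecord) -> list[str]:
    """Return gaps: a missing rationale, or a higher-risk route with no owner."""
    gaps = []
    for d in record.decisions:
        if not d.rationale.strip():
            gaps.append(f"{d.regime}: classification rationale missing")
        if d.higher_risk and not d.owner.strip():
            gaps.append(f"{d.regime}: higher-risk route has no named owner")
    return gaps


record = SystemRecord(
    name="candidate-screening-tool",  # hypothetical system
    decisions=[
        RouteDecision("EU AI Act", "deployer", True,
                      "Reviewer mapped the use to an Annex III category", "J. Doe"),
        RouteDecision("Colorado SB24-205", "deployer", True, ""),
    ],
)

for gap in review_queue(record):
    print(gap)
```

Keeping each regime in its own `RouteDecision` means a conclusion reached for one jurisdiction is never silently reused for the other.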

Where they differ

| Difference | Practical implication |
|---|---|
| EU AI Act has a broader product-safety and market-access structure. | EU evidence may need to track provider, importer, distributor, CE marking, conformity assessment, and technical-documentation routes where relevant. |
| Colorado focuses on algorithmic discrimination and consequential decisions. | Colorado evidence should emphasise discrimination risk, consumer impact, notices, correction, appeal, public statements, and annual review. |
| EU uses Annex III categories and Article 6 routes. | EU inventory needs Article 6 reasoning and Annex III mapping, not only a generic “high-risk” label. |
| Colorado uses high-risk AI systems in consequential-decision contexts. | Colorado inventory needs fields for consequential decision, consumer impact, developer documentation, and impact assessment status. |
| EU Article 50 applies to specified transparency scenarios beyond high-risk systems. | Do not limit EU transparency checks to high-risk systems. Generated content and direct AI interaction may require separate Article 50 routing. |
| Colorado status is currently more volatile. | Add a publication-day status check before relying on Colorado timing, enforcement posture, or rulemaking assumptions. |

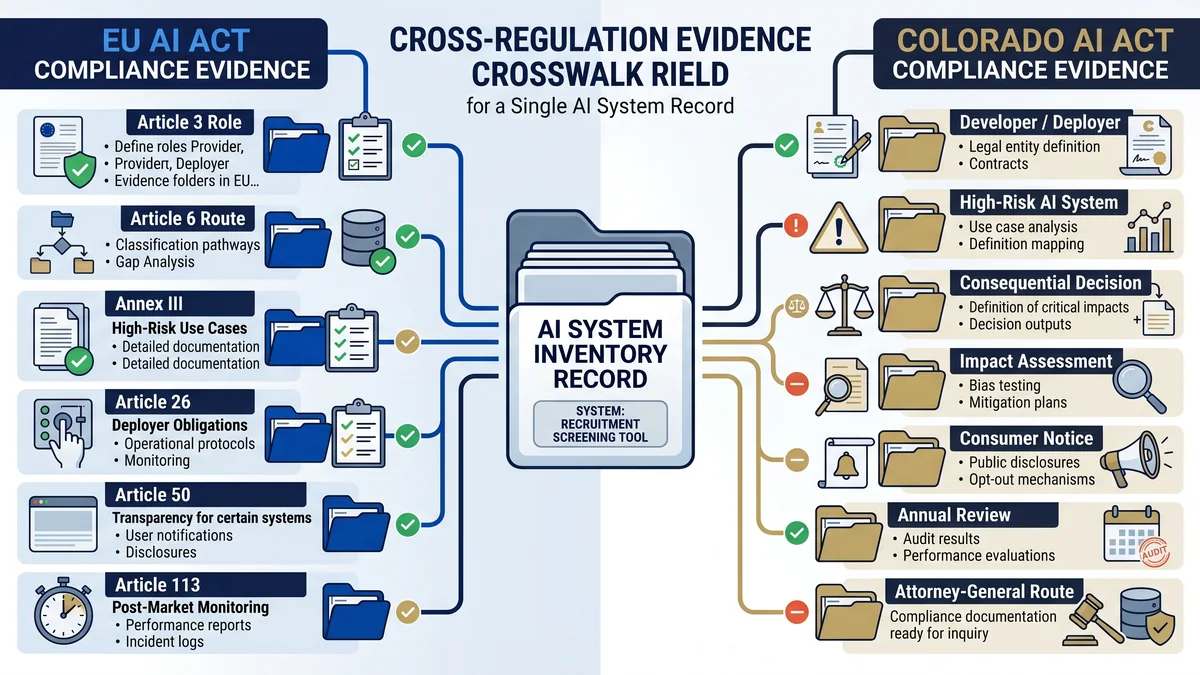

Cross-regulation evidence map

A deployer does not need two disconnected evidence stores. It needs one traceable record with separate legal routes.

| Evidence artifact | EU AI Act use | Colorado AI Act use | EU AI Compass route |
|---|---|---|---|
| AI system inventory | Article 3, Article 6, Annex III, Article 26, Article 50 routing. | High-risk system and consequential-decision mapping. | AI system inventory fields for EU AI Act readiness |
| Risk classification record | Article 6 / Annex III classification rationale. | High-risk AI system classification and consequential-decision context. | free EU AI Act tools |
| Vendor / developer documentation | Provider instructions, Article 26 use and monitoring evidence. | Developer statement and impact-assessment support. | AI vendor due diligence checklist |
| Impact assessment | FRIA / DPIA routing where relevant. | Impact assessment for high-risk systems. | EU AI Act evidence checklist |
| Notice text | Article 50 and Article 26 notice routes. | Consumer notice, AI interaction disclosure, and consequential-decision notice. | Article 50 implementation pack |
| Disclosure checklist | Article 50 clarity, timing, accessibility, and placement review. | Notice-quality support where Colorado notices are required. | Article 50 disclosure checklist |
| Human oversight / review log | Article 26 oversight evidence for high-risk systems. | Appeal or human review where technically feasible. | evidence checklist |
| Deadline and review record | Article 113 timing and periodic review. | Annual review and 30 June 2026 status tracking. | EU AI Act August 2026 deadline tracker |

Use this free-tool route before buying anything

The wrong move is buying a broad governance platform before knowing which systems, jurisdictions, roles, and evidence gaps you actually have. Start with routing.
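
A gap check against the cross-regulation evidence map is one way to make that routing step concrete. The sketch below is illustrative only: the artifact names are taken from the evidence map, while the `evidence_gaps` function, the regime labels, and the sample data are assumptions made for the example.

```python
# Artifact names follow the cross-regulation evidence map on this page.
ARTIFACTS = [
    "AI system inventory",
    "Risk classification record",
    "Vendor / developer documentation",
    "Impact assessment",
    "Notice text",
    "Disclosure checklist",
    "Human oversight / review log",
    "Deadline and review record",
]


def evidence_gaps(exposure, documented):
    """List (artifact, regime) pairs where an exposed regime has no documented route.

    exposure:   set of regimes relevant to this system, e.g. {"EU", "Colorado"}
    documented: dict mapping artifact name to the set of regimes it covers
    """
    gaps = []
    for artifact in ARTIFACTS:
        covered = documented.get(artifact, set())
        for regime in sorted(exposure - covered):
            gaps.append((artifact, regime))
    return gaps


# Hypothetical system with exposure to both regimes.
documented = {
    "AI system inventory": {"EU", "Colorado"},
    "Risk classification record": {"EU"},
    "Notice text": {"EU", "Colorado"},
}
gaps = evidence_gaps({"EU", "Colorado"}, documented)
for artifact, regime in gaps:
    print(f"{regime}: missing {artifact}")
```

The output is a list of missing artifacts per regime, which is usually enough to decide whether a spreadsheet, a checklist, or a platform is the proportionate next step.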

AI System Inventory Fields

Capture the fields needed to route EU Article 3, Article 6, Annex III, Article 26, Article 50, DPIA, FRIA, and deadline checks.

EU AI Act Evidence Checklist

Build the evidence folder: classification, oversight, notices, logs, vendor handoff, impact-assessment route, and review cadence.

Vendor Due Diligence Checklist

Ask the provider, vendor, developer, reseller, or integrator for the documentation needed before deployment.

Article 50 Implementation Pack

Route direct AI interaction, generated content, deepfake disclosure, biometric notices, public-interest text, and evidence records.

FAQ: EU AI Act vs Colorado AI Act

The two acts are not equivalent. They are different legal regimes with different structures, definitions, regulators, dates, and obligations. The useful overlap is operational: both push teams to maintain an AI inventory, classify higher-risk uses, retain impact-assessment evidence, manage notices, and keep review records.

The role models are not exactly the same. The EU AI Act uses provider, deployer, importer, distributor, and operator terminology. Colorado SB24-205 focuses on developers and deployers of high-risk AI systems. A crosswalk should map both role models instead of assuming the same party has the same duties under both regimes.

The main overlap is evidence discipline. A team needs to know what the AI system does, who controls it, whether it affects important decisions, what risk controls exist, what notices are provided, what review was performed, and where the evidence is stored.

Colorado has no direct one-for-one equivalent of EU AI Act Article 50. Article 50 contains specific transparency scenarios, including direct AI interaction, synthetic content marking, emotion recognition or biometric categorisation notices, deepfake disclosure, and public-interest text disclosure. Colorado has consumer-facing notice and disclosure duties, but the structure is different.

One inventory can support both regimes when the organisation has both EU and Colorado exposure, provided it includes jurisdiction, role, intended purpose, affected persons, consequential-decision context, Annex III route, Article 50 trigger, vendor documentation, impact-assessment status, notice status, and review date.
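
The field list above can be sketched as a single record type. This is a hedged illustration: the `InventoryEntry` class, its attribute names, and the `unfilled` helper are hypothetical, and a real inventory may need one role value per regime rather than the single `role` field shown here.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class InventoryEntry:
    """One shared inventory row; field names mirror the list in the text."""
    system_name: str
    jurisdictions: Optional[list[str]] = None   # e.g. ["EU", "Colorado"]
    role: Optional[str] = None
    intended_purpose: Optional[str] = None
    affected_persons: Optional[str] = None
    consequential_decision_context: Optional[str] = None
    annex_iii_route: Optional[str] = None
    article_50_trigger: Optional[str] = None
    vendor_documentation: Optional[str] = None
    impact_assessment_status: Optional[str] = None
    notice_status: Optional[str] = None
    review_date: Optional[date] = None

    def unfilled(self) -> list[str]:
        """Names of fields that are still None or empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


entry = InventoryEntry(
    system_name="loan-triage-model",  # hypothetical system
    jurisdictions=["EU", "Colorado"],
    role="deployer",
    intended_purpose="Prioritise loan applications for manual review",
    review_date=date(2026, 4, 30),
)
print(entry.unfilled())
```

Because an empty value counts as unfilled, a reviewer who decides a field does not apply should record that explicitly (for example "not applicable, with reason") instead of leaving it blank.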

Start with the system inventory and role map. Identify whether the organisation is a deployer under the EU AI Act, a deployer under Colorado law, or both. Then compare whether the system is high-risk, whether it supports a consequential decision, what notices are needed, and what evidence is missing.

Colorado timing is not settled. SB25B-004 delayed SB24-205 requirements to 30 June 2026, but litigation and policy uncertainty remain active. Any page, memo, or client deliverable relying on Colorado status should be checked again on the publication or decision date.
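
That publication-day check can be reduced to a simple date comparison. The sketch below is a minimal example under stated assumptions: the function name `status_check_needed` is hypothetical, and it only flags that a re-check is due; it does not look up the current legal status.

```python
from datetime import date


def status_check_needed(last_verified: date, decision_date: date) -> bool:
    """True when the Colorado status was last verified before the decision date."""
    return last_verified < decision_date


last_verified = date(2026, 4, 30)  # review date stated on this page

print(status_check_needed(last_verified, date(2026, 5, 15)))  # True: re-check first
print(status_check_needed(last_verified, date(2026, 4, 30)))  # False: verified same day
```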

This page is not legal advice. It is an operational comparison for evidence planning and does not provide official interpretation, certification, safe-harbor confirmation, or a compliance guarantee. Use qualified legal or regulatory counsel for formal determinations.

Source and review note

This page was reviewed against official EU AI Act Service Desk pages, Colorado General Assembly bill pages, and public DOJ litigation information.

- EU AI Act Article 3: definitions

- EU AI Act Article 6: high-risk classification rules

- EU AI Act Article 26: obligations of deployers of high-risk AI systems

- EU AI Act Article 50: transparency obligations

- EU AI Act Article 113: entry into force and application

- Colorado SB24-205: Consumer Protections for Artificial Intelligence

- Colorado SB25B-004: effective-date extension to 30 June 2026

- U.S. Department of Justice: xAI lawsuit intervention

Last reviewed: 30 April 2026. Re-check Colorado law, litigation, rulemaking, and enforcement posture before relying on this page for formal decisions.

Author

Abhishek G Sharma is the founder of Move78 International and EU AI Compass. He works on cybersecurity, AI governance, ISO 42001, EU AI Act readiness, and evidence-led risk management. This page is practitioner guidance, not legal advice.