Quick answer

An EU AI Act deployer evidence file is a controlled record for one AI system. It should show what the system does, who owns it, why the deployer role and risk route were selected, what vendor evidence was reviewed, how human oversight works, what logs are retained, whether Article 50 or FRIA/DPIA routing is triggered, and how incidents are escalated.

Why deployers need one file per AI system

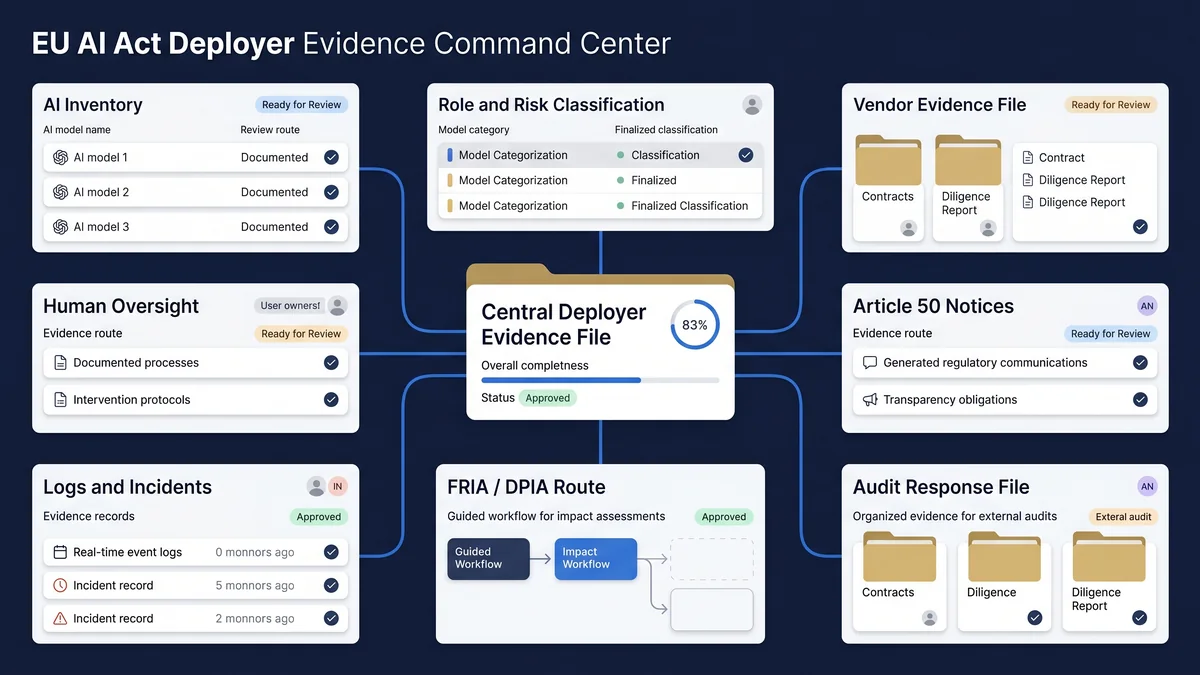

Evidence fails when it is scattered across procurement folders, policy drives, product tickets, privacy notes, vendor emails, and audit workpapers. A deployer evidence file gives one AI system a single control record: owner, use case, vendor dependency, risk route, oversight, logs, disclosure, impact-assessment routing, and escalation path.

This page does not determine legal compliance. It helps a team assemble the minimum operating record that a CISO, DPO, legal counsel, product owner, internal auditor, or procurement reviewer can inspect before the system is deployed, expanded, renewed, or questioned.

Local browser tool

Build your deployer evidence file outline

Enter non-confidential system descriptors only. The builder creates a copyable outline in your browser and does not send the content to EU AI Compass.

Evidence file sections to retain

The evidence file should be proportionate. A low-impact internal AI tool may need only a short record. A workflow that is high-impact, regulated, or vendor-dependent, or that touches employment, finance, public services, healthcare, education, or AI agents, needs a deeper file and clearer review ownership.

| Section | What to record | Why it matters |
|---|---|---|
| System identity | Name, owner, purpose, vendor, model source, users, affected persons, data categories, output type, deployment environment. | Prevents shadow AI and gives audit, privacy, legal, product, and security teams a stable object to review. |
| Role and risk rationale | Provider/deployer assumption, Annex III or non-high-risk route, uncertainty, reviewer, date, and next review trigger. | Shows that role and risk were not guessed or buried in an informal email thread. |
| Vendor/provider evidence | Instructions for use, technical documentation summaries, risk information, contractual commitments, support route, known limitations, change notices. | Deployer evidence depends heavily on what the provider or vendor hands over. |
| Instructions for use | Permitted use, prohibited use, human review point, model limitations, user training, escalation criteria, operating constraints. | Connects deployment to controlled use rather than generic procurement approval. |
| Human oversight | Oversight owner, reviewer skills, review point, override method, escalation path, sign-off evidence. | Shows how people remain accountable for review, challenge, escalation, or stopping use. |
| Monitoring and logs | Output checks, review cadence, logs under deployer control, issue records, performance review, retention decision. | Creates a traceable record for operating review, incident reconstruction, and audit questions. |
| Article 50 transparency | Trigger assessment, notice wording, placement decision, approver, retained screenshot or copy, review date. | Separates disclosure decisions from general policy language. |
| FRIA/DPIA routing | Whether FRIA, DPIA, privacy, employment, or sector review is required; reviewer; conclusion; open actions. | Keeps sensitive legal and rights-impact questions visible without letting the template pretend to answer them. |
| Incident and escalation route | Incident owner, internal notification path, provider notification path, authority-facing route, customer or affected-person route where applicable. | Prevents evidence failure after complaints, incidents, unexpected output, or regulator questions. |
| Audit-response index | Where each record sits, who owns it, last reviewed date, missing items, and escalation owner. | Makes the file usable under time pressure. |
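
For teams that track the file in a script or notebook rather than a spreadsheet, the section list above can be sketched as a small data structure that also produces the audit-response index. This is an illustrative sketch only: the class names, field names, example system name, and record path are assumptions, not part of any official template, and the check says nothing about whether a section's content is legally sufficient.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

# Section names follow the table above; everything else is a hypothetical layout.
SECTIONS = [
    "System identity",
    "Role and risk rationale",
    "Vendor/provider evidence",
    "Instructions for use",
    "Human oversight",
    "Monitoring and logs",
    "Article 50 transparency",
    "FRIA/DPIA routing",
    "Incident and escalation route",
    "Audit-response index",
]


@dataclass
class SectionRecord:
    location: str = ""                    # where the record sits (hypothetical path)
    owner: str = ""                       # who owns the record
    last_reviewed: Optional[date] = None  # last reviewed date


@dataclass
class EvidenceFile:
    system_name: str
    sections: dict = field(default_factory=dict)  # section name -> SectionRecord

    def audit_index(self):
        """One row per expected section, listing which index fields are empty."""
        rows = []
        for name in SECTIONS:
            rec = self.sections.get(name, SectionRecord())
            fields = (
                ("location", rec.location),
                ("owner", rec.owner),
                ("last_reviewed", rec.last_reviewed),
            )
            missing = [label for label, value in fields if not value]
            rows.append({"section": name, "owner": rec.owner, "missing": missing})
        return rows

    def open_gaps(self):
        """Sections that still lack a location, an owner, or a review date."""
        return [row["section"] for row in self.audit_index() if row["missing"]]


# Usage with made-up values: only one section has been filed so far.
evidence = EvidenceFile("example-chat-assistant")
evidence.sections["System identity"] = SectionRecord(
    location="records/system-identity.md",
    owner="product-owner",
    last_reviewed=date(2025, 1, 15),
)
gaps = evidence.open_gaps()
print(f"{len(gaps)} sections still need a location, owner, or review date")
```

Keeping the index as derived output, rather than a hand-maintained list, means a section without an owner shows up as a gap instead of silently disappearing.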

Decision table: what to include first

| Situation | Minimum evidence file focus | Escalation |
|---|---|---|
| Internal productivity AI with no sensitive decision impact | Owner, use case, data-use guardrails, vendor, training note, prohibited use, review cadence. | Security/privacy review if personal, confidential, client, or regulated data may be used. |
| Vendor AI embedded in a business process | Vendor evidence, instructions for use, contract record, known limitations, change notification path, oversight owner. | Procurement, legal, privacy, and security review before scale-up or renewal. |
| AI system affecting individuals or access to services | Role/risk rationale, data categories, human oversight, impact-assessment routing, logs, complaint route. | Legal, DPO/privacy, sector counsel, and executive risk owner review. |
| Potential Annex III high-risk AI use case | Risk classification rationale, Article 26 evidence categories, instructions for use, oversight, monitoring, logs, FRIA/DPIA route, incident route. | Qualified legal and sector review before deployment or material change. |
| AI-generated content, chatbot, deepfake, biometric or emotion workflow | Article 50 trigger assessment, notice wording, placement, retained screenshots or records, approver, review date. | Legal/privacy review where content, biometric, employment, education, healthcare, public-service, or rights-impact context exists. |
| AI agent or tool-using autonomous workflow | Autonomy boundaries, tool permissions, data access, kill switch, logs, human approval points, incident route. | Security, privacy, product, legal, and business owner review before production use. |
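
A system often matches more than one row, for example a vendor-supplied agent that also affects individuals. A minimal sketch of combining rows is shown below, assuming the table is held as a lookup. The short situation keys and the function name are hypothetical labels invented for this example; the focus items are copied from the table.

```python
# Keys are hypothetical short labels for the six rows of the decision table.
MINIMUM_FOCUS = {
    "internal_productivity": [
        "Owner", "Use case", "Data-use guardrails", "Vendor",
        "Training note", "Prohibited use", "Review cadence",
    ],
    "vendor_embedded": [
        "Vendor evidence", "Instructions for use", "Contract record",
        "Known limitations", "Change notification path", "Oversight owner",
    ],
    "affects_individuals": [
        "Role/risk rationale", "Data categories", "Human oversight",
        "Impact-assessment routing", "Logs", "Complaint route",
    ],
    "potential_annex_iii": [
        "Risk classification rationale", "Article 26 evidence categories",
        "Instructions for use", "Oversight", "Monitoring", "Logs",
        "FRIA/DPIA route", "Incident route",
    ],
    "article_50_content": [
        "Article 50 trigger assessment", "Notice wording", "Placement",
        "Retained screenshots or records", "Approver", "Review date",
    ],
    "ai_agent": [
        "Autonomy boundaries", "Tool permissions", "Data access", "Kill switch",
        "Logs", "Human approval points", "Incident route",
    ],
}


def first_focus(situations):
    """Merge the focus items for every matching row, keeping first-seen order."""
    seen = set()
    merged = []
    for situation in situations:
        for item in MINIMUM_FOCUS[situation]:  # raises KeyError on an unknown label
            key = item.lower()
            if key not in seen:
                seen.add(key)
                merged.append(item)
    return merged


# Usage: a tool-using agent that also affects access to services.
combined = first_focus(["affects_individuals", "ai_agent"])
print(len(combined), "focus items to record first")
```

The lookup only assembles a starting list. The escalation column still applies to every matching row, and the merged list does not replace the reviews named there.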

Common evidence file mistakes

Using one generic AI policy as evidence

A policy is not the same as a system file. Keep the policy, but attach system-specific owner, risk, vendor, oversight, and log records.

Leaving vendor evidence outside the file

If the deployer relies on a vendor, the file should reference the vendor material reviewed and the gaps still open.

Treating Article 50 as a marketing notice only

Article 50 review should record trigger logic, notice wording, placement, and proof. Do not leave it as an informal website copy decision.

Skipping review ownership

Every missing item needs an owner. Without an owner, the file becomes a static checklist rather than an operating record.

EU AI Act deployer evidence file FAQ

Use these answers to keep the evidence file practical, conservative, and auditable. The file supports review. It does not replace qualified legal, privacy, employment, cybersecurity, procurement, or sector advice.

What is an EU AI Act deployer evidence file?

An EU AI Act deployer evidence file is a controlled record for one AI system. It should show the system identity, owner, deployer role, risk rationale, vendor evidence reviewed, instructions for use, oversight model, monitoring records, logs, transparency checks, impact-assessment routing, incident route, and audit-response index.

Does an evidence file prove EU AI Act compliance?

No. An evidence file helps structure records for internal review, audit preparation, procurement review, and regulator-facing explanation. It does not prove compliance, replace legal advice, or certify that a system meets EU AI Act requirements.

Which AI systems should get an evidence file first?

Start with systems that affect people, business-critical decisions, regulated processes, employment, education, financial access, healthcare, safety, public services, biometric processing, or AI-generated content disclosure. Lower-risk systems may need a lighter file, but the owner, purpose, vendor, data, and review record should still be clear.

What should the first section of the evidence file contain?

The first section should identify the AI system: system name, business owner, technical owner, vendor or model source, use case, users, affected persons, input data categories, output type, deployment location, and the current review status.

When should vendor evidence be included?

Vendor evidence should be attached or referenced in the evidence file when the deployer depends on a third-party AI system, model, platform, or embedded feature. Include instructions for use, technical documentation summaries, contractual evidence, risk information, human oversight information, change notices, and known limitations where available.

When does the evidence file need an Article 50 section?

Include an Article 50 section when the system involves AI interaction, AI-generated or manipulated content, deepfakes, emotion recognition, biometric categorisation, or public-interest text that may trigger transparency review. The evidence file should record the trigger assessment, notice wording, placement decision, approver, date, and retained proof.

When should FRIA or DPIA routing notes be included?

Include FRIA or DPIA routing notes when the system may affect fundamental rights, personal data, employment, essential services, public services, law enforcement, education, finance, health, or similar sensitive contexts. The evidence file should not guess the legal answer; it should record whether qualified legal, privacy, or sector review is required.

When should the evidence file be reviewed?

Review the evidence file before deployment, after material model or vendor changes, after a new use case, after an incident or complaint, before procurement renewal, and before audit or regulator engagement. High-impact systems should also have a fixed review cadence owned by compliance, risk, privacy, or the business owner.
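
The review triggers above can be expressed as a simple check that returns the reasons a review is due. This is a sketch under stated assumptions: the trigger labels, the function name, and the 90-day cadence in the usage example are illustrative choices, not values taken from the Regulation or from this page.

```python
from datetime import date, timedelta

# Hypothetical labels for the event-based review triggers listed above.
REVIEW_TRIGGERS = {
    "pre_deployment",
    "model_or_vendor_change",
    "new_use_case",
    "incident_or_complaint",
    "procurement_renewal",
    "audit_or_regulator_engagement",
}


def review_reasons(events, last_review, today, cadence_days=None):
    """Return the reasons a review is due; an empty list means none apply.

    events: labels of things that happened since the last review.
    cadence_days: fixed review interval for high-impact systems, or None.
    """
    reasons = sorted(set(events) & REVIEW_TRIGGERS)  # ignore unrecognised events
    if cadence_days is not None:
        overdue = last_review is None or (
            today - last_review >= timedelta(days=cadence_days)
        )
        if overdue:
            reasons.append("cadence_elapsed")
    return reasons


# Usage with made-up dates and an assumed 90-day cadence.
due = review_reasons(
    events={"new_use_case", "ui_colour_change"},
    last_review=date(2025, 1, 1),
    today=date(2025, 6, 1),
    cadence_days=90,
)
print(due)
```

Returning the reasons, rather than a bare yes or no, gives the reviewer something to record in the file when the review is opened.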

Source and review note

This page is an operational evidence-structuring tool for EU AI Act readiness work. It is not legal advice, does not confirm that a system is high-risk or compliant, and does not replace qualified legal, privacy, employment, sector, cybersecurity, procurement, or regulatory advice.

Primary legal and regulatory references for final review should include Regulation (EU) 2024/1689, the European Commission AI Act Service Desk implementation timeline, and European Commission material on Article 50 transparency and AI-generated content.