Does the EU AI Act apply if you are outside the EU?

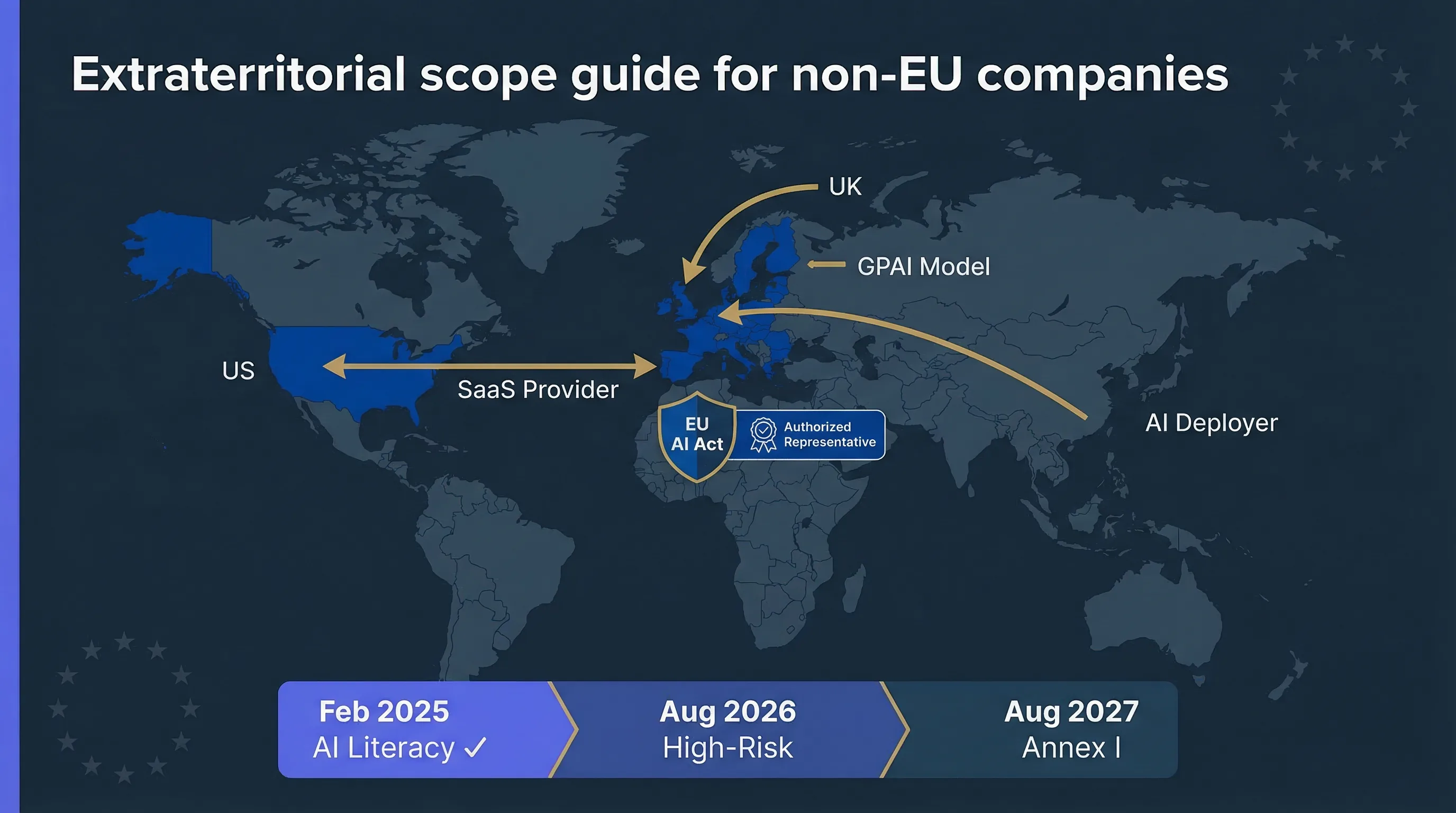

Short answer: yes, if your AI system reaches the EU market. The EU AI Act applies extraterritorially. Under Article 2, providers and deployers not established in the EU are in scope if they place AI systems on the EU market or if the output of their AI system is used within the EU. No EU entity is required to trigger obligations. Sound familiar? It's the same reach logic as GDPR.

Here's what that looks like in practice. A US SaaS company selling AI-powered analytics to EU enterprise customers? In scope. A UK HR platform using AI to screen candidates for roles in EU offices? In scope. An APAC startup exposing a GPAI model via API that EU developers embed in their products? In scope. A Canadian company whose AI chatbot answers questions from EU consumers? In scope.

The trigger isn't where your company sits. It's where your AI system's effects land. If those effects reach EU territory, the regulation follows. Use the EU AI Act Compliance Checker to assess your specific situation, and see our complete EU AI Act compliance guide for the full regulatory background.
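The scope test above reduces to two questions, and company location is not one of them. Here is a minimal sketch in Python, assuming a hypothetical `AISystem` record and `in_scope` helper (both invented for illustration, not taken from the regulation or any library). It models only the two triggers summarised in this guide and no edge cases, so treat it as a first-pass screen rather than a legal determination.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    """Hypothetical record for illustration; field names are assumptions."""
    name: str
    company_established_in_eu: bool
    placed_on_eu_market: bool
    output_used_in_eu: bool


def in_scope(system: AISystem) -> bool:
    """Rough Article 2 trigger as summarised in this guide.

    company_established_in_eu is deliberately ignored: only market
    placement or use of the output in the EU matters for this check.
    """
    return system.placed_on_eu_market or system.output_used_in_eu


examples = [
    AISystem("US SaaS analytics sold to EU enterprises", False, True, False),
    AISystem("Canadian chatbot answering EU consumers", False, False, True),
    AISystem("US tool sold and used only in the US", False, False, False),
]

for s in examples:
    print(f"{s.name}: {'in scope' if in_scope(s) else 'out of scope'}")
```

The first two examples come out in scope even though neither company is established in the EU, which is the point of the extraterritorial rule.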

What role are you playing: provider, deployer, or GPAI provider?

Your obligations depend on the role you play in the AI value chain. Most non-EU companies fall into one of four categories, and some hold multiple roles simultaneously.

| Role | Typical non-EU example | Authorized representative needed? |
|---|---|---|
| Provider of AI system | US company that develops and sells an AI-powered recruitment screening tool to EU clients | Yes, for high-risk AI systems (Article 22) |
| Deployer of AI system | UK company using AI credit scoring for EU borrowers via a third-party AI tool | Not required for deployers under current text, but deployer obligations (Art. 26) still apply |
| GPAI model provider | US/APAC company providing a foundation model or fine-tuned model via API to EU customers | Yes (Article 54) |
| Downstream integrator | Indian startup embedding OpenAI/Anthropic models into a product used by EU businesses | May become provider if substantially modifying the AI system (Article 25); check role carefully |

If you're unsure which role applies, the Accidental Provider Classifier can help. The distinction matters enormously: providers carry heavier obligations (risk management, conformity assessment, CE marking), while deployers own operational controls (human oversight, monitoring, and the fundamental rights impact assessment, or FRIA). Getting the role wrong means preparing for the wrong set of obligations.
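The "accidental provider" risk can be expressed as a simple rule. The sketch below assumes a hypothetical `effective_role` helper and two yes/no inputs that mirror the Article 25 triggers this guide mentions (substantial modification, or use for a purpose the original provider did not intend). Whether a change really counts as "substantial" is a legal judgment the code cannot make, so the booleans stand in for that assessment.

```python
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"


def effective_role(starting_role: Role,
                   substantially_modified: bool,
                   changed_intended_purpose: bool) -> Role:
    """Hypothetical helper: flags when a deployer should plan as a provider.

    Inputs are assumptions supplied by whoever reviewed the system; this
    function only encodes the reclassification rule described in the guide.
    """
    if starting_role is Role.DEPLOYER and (
        substantially_modified or changed_intended_purpose
    ):
        return Role.PROVIDER
    return starting_role


print(effective_role(Role.DEPLOYER, False, False).value)  # deployer
print(effective_role(Role.DEPLOYER, True, False).value)   # provider
```

A "provider" result here is a prompt to prepare for the heavier obligation set, not a final classification.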

When do you need an EU authorized representative under the AI Act?

If you're a non-EU provider of high-risk AI systems and you want to place those systems on the EU market, you must appoint an authorized representative (AR) established in the EU before doing so. Article 22 covers this for high-risk AI providers. Article 54 covers GPAI model providers.

What an AR does

At a high level, the AR acts as your EU point of contact. They hold relevant technical documentation, cooperate with national competent authorities and the AI Office, and can be reached for inspections and correspondence. They don't replace your compliance obligations — they represent you in the EU for regulatory purposes.

When an AR is NOT required

If you're a deployer (not a provider), the current text doesn't require an AR appointment. If your AI system isn't classified as high-risk and you're not a GPAI model provider, you may not need one. But you still have obligations — transparency (Article 50), AI literacy (Article 4), and potentially deployer obligations under Article 26 if you use AI systems for Annex III purposes.
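The AR rules above can be sketched as a small decision function. The helper name `needs_authorized_representative` and its parameters are assumptions made for illustration. The logic encodes only what this guide states: Article 22 for non-EU providers of high-risk systems, Article 54 for non-EU GPAI model providers, and no AR duty for deployers under the current text.

```python
from __future__ import annotations


def needs_authorized_representative(
    established_in_eu: bool,
    is_provider: bool,
    is_high_risk: bool,
    is_gpai_model_provider: bool,
) -> tuple[bool, str]:
    """Hypothetical helper returning (required, reason).

    A simplified reading of the rules summarised in this guide; it is
    not a substitute for legal advice on AR appointment.
    """
    if established_in_eu:
        return False, "established in the EU"
    if is_gpai_model_provider:
        return True, "non-EU GPAI model provider (Article 54)"
    if is_provider and is_high_risk:
        return True, "non-EU provider of a high-risk AI system (Article 22)"
    return False, "no AR duty, but Articles 4, 50 and 26 may still apply"


# Non-EU provider of a high-risk system
print(needs_authorized_representative(False, True, True, False))
# Non-EU deployer of a high-risk system
print(needs_authorized_representative(False, False, True, False))
```

Returning a reason alongside the boolean keeps the outcome traceable to the article that drives it, which is useful when the result goes into an evidence pack.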

⚠ This guide does not provide legal advice on AR selection

Authorized representative appointment involves contractual terms, liability allocation, and jurisdiction-specific considerations. Seek local legal counsel for AR selection and contract drafting. Our advisory service can help you prepare the compliance foundation the AR will need to reference.

Three typical non-EU scenarios and what they must do

Abstract rules become concrete when mapped to real situations. Here are three profiles I encounter regularly.

Scenario 1: US SaaS vendor with AI recruitment tool

A 60-person US company sells an AI-powered applicant screening platform to EU enterprise clients. The AI scores CVs, ranks candidates, and flags potential matches.

Role: Provider of a high-risk AI system (Annex III Area 4: employment).

AR required: Yes (Article 22).

Key obligations: Conformity assessment (Annex VI self-assessment), CE marking, EU database registration, technical documentation per Annex IV, risk management system, post-market monitoring.

Use the Compliance Checker and Deployer Self-Assessment.

Scenario 2: UK fintech using GPAI for credit scoring

A 40-person UK fintech integrates a foundation model (via API) into its credit decisioning workflow for EU borrowers.

Role: Deployer of a high-risk AI system (Annex III Area 5(b): creditworthiness) AND potentially a provider if it has substantially modified the model.

AR required: Not as a deployer; yes if it has become a provider through modification.

Key obligations: FRIA (mandatory for credit scoring deployers), human oversight, input data governance, logging, AI literacy.

Check the Accidental Provider Classifier and FRIA Generator.

Scenario 3: Indian startup with an AI chatbot platform

A 25-person Indian startup offers a white-label chatbot platform used by EU e-commerce sites. The chatbot handles customer enquiries, product recommendations, and complaint routing.

Role: Likely limited-risk (Article 50 transparency obligations). If the chatbot makes decisions affecting access to services, it may tip into Annex III.

AR required: Not if limited-risk only.

Key obligations: Article 50 transparency (disclose AI interaction to users), Article 4 AI literacy, and a review of whether any use cases touch Annex III areas.

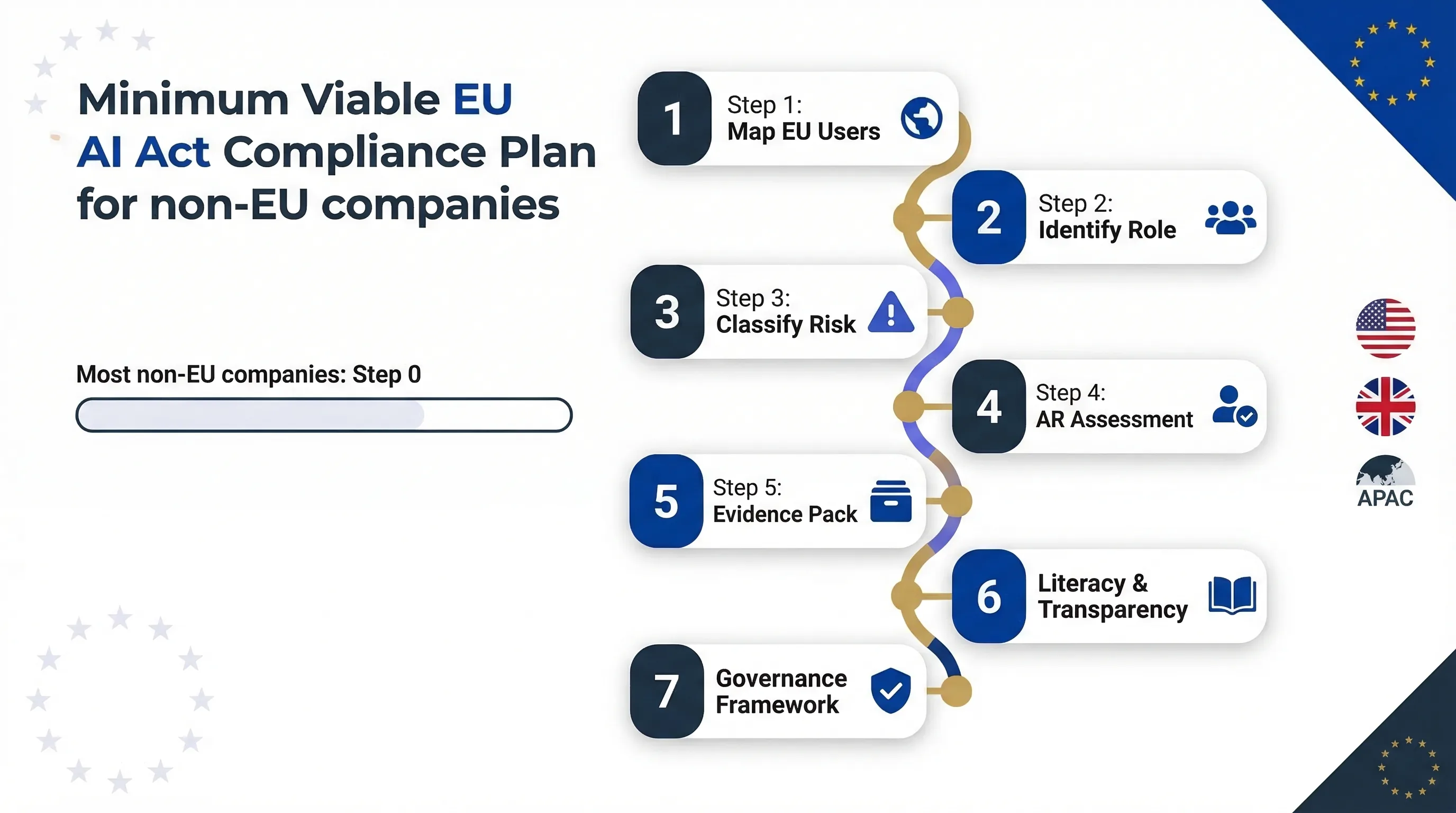

The 7-step minimum viable compliance plan: most non-EU companies haven't started step 1.

Minimum viable EU AI Act compliance for non-EU SMEs and mid-market

You don't need a 50-person compliance team. You need a structured sequence. Here's the minimum a 10-250 employee non-EU company should implement before August 2, 2026. I've walked companies through this process — the order matters.

1. Map your EU users and AI use cases.

Identify every product or feature that uses AI and serves EU customers. This is your scope baseline. Compliance Checker →

2. Identify your role: provider, deployer, or GPAI provider.

Your obligations are entirely different depending on the role. If you've modified a vendor's model, you may have crossed from deployer to provider. Accidental Provider Classifier →

3. Classify risk level against Annex III.

Determine whether your AI use cases are high-risk, limited-risk, or minimal-risk. Annex III Checklist →

4. Determine AR requirement and select if needed.

If you're a provider of high-risk AI or a GPAI model provider, you need an authorized representative in the EU. Start this process early, because it involves contractual negotiation and documentation handoff.

5. Build your core evidence pack.

Risk assessment documentation, technical documentation (Annex IV for providers), FRIA/DPIA where required, usage logs, human oversight arrangements. For the full deployer evidence matrix, see our High-Risk Deployer Guide. Deployer Self-Assessment →

6. Implement AI literacy and transparency basics.

Article 4 AI literacy has been enforceable since February 2, 2025, so it is already live. Article 50 transparency obligations kick in on August 2, 2026. AI Literacy Planner →

7. Choose a governance framework for ongoing compliance.

If you're already on ISO 27001 or NIST CSF, extending to ISO 42001 or NIST AI RMF is the natural path. See our Framework Mapping Guide. ISO/NIST Gap Analyzer →
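The AI literacy and transparency step above hinges on two dates, and a small date check makes it easy to see which obligations are already live. The sketch below uses only the dates stated in this guide. The `MILESTONES` table and the helper names `applicable_on` and `days_until` are assumptions for illustration, and the dates should be re-verified against the current legal text before anyone relies on them.

```python
from __future__ import annotations

from datetime import date

# Dates as stated in this guide; treat them as assumptions to re-verify.
MILESTONES = {
    "Article 4 AI literacy": date(2025, 2, 2),
    "Article 50 transparency": date(2026, 8, 2),
}


def applicable_on(day: date) -> list[str]:
    """Return the milestones whose start date is on or before `day`."""
    return [name for name, start in MILESTONES.items() if day >= start]


def days_until(name: str, today: date) -> int:
    """Days remaining before a milestone starts; 0 if it already has."""
    return max((MILESTONES[name] - today).days, 0)


today = date(2026, 3, 1)
print(applicable_on(today))
print(days_until("Article 50 transparency", today))
```

Keeping the dates in one table means a change in the timeline requires a single update rather than a hunt through planning documents.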

🌎 Need More Practical Guidance?

Explore the free EU AI Compass tools and guides to classify your use case, understand your obligations, and move to the next compliance step.

Which free tools non-EU companies should start with

Every tool runs in your browser, collects zero data, requires no login. Here's the recommended sequence for non-EU companies.

| Question you need answered | Tool |
|---|---|
| Does the EU AI Act apply to us? | EU AI Act Compliance Checker |
| Are our AI use cases high-risk? | Annex III High-Risk Checklist |
| Do we need a FRIA or DPIA? | FRIA Generator |
| What are our deployer obligations? | Deployer Obligation Self-Assessment |
| Have we accidentally become a provider? | Accidental Provider Classifier |
| Which governance framework should we use? | ISO/NIST Gap Analyzer |

Top 7 mistakes non-EU companies make with the EU AI Act

I've seen every one of these in real engagements. Don't be the company that discovers these the hard way.

1. Assuming "no EU entity = no EU AI Act"

The regulation applies based on market effect, not company location. Article 2 is explicit.

2. Thinking GDPR compliance alone covers the AI Act

Separate regulation, separate obligations. A GDPR data processing agreement (DPA) doesn't satisfy conformity assessment, human oversight, or AI literacy requirements.

3. Delaying AR appointment until after enforcement

If you need an AR, the appointment must be in place before you place your AI system on the EU market — not after a regulator asks for it.

4. Ignoring Article 4 (AI literacy) because it seems minor

It's been enforceable since February 2, 2025. It applies to providers and deployers of AI systems at every risk level, not just high-risk ones. Don't overlook it.

5. Not checking whether model fine-tuning makes you a provider

Article 25: substantially modifying an AI system or deploying it for a purpose the provider didn't intend can reclassify you from deployer to provider.

6. Treating a "few EU customers" as below threshold

There's no de minimis clause. One EU customer using your high-risk AI system triggers obligations.

7. Waiting for the Digital Omnibus to "simplify" things

The Omnibus is a proposal, not law. Plan for August 2, 2026 as the binding deadline.

EU AI Act and non-EU companies: frequently asked questions

Does the EU AI Act apply to companies outside the EU?

Yes, if they place AI systems on the EU market or the output of their AI system is used within the EU. The EU AI Act applies extraterritorially based on market effect, not company location. A US SaaS vendor selling AI-powered features to EU enterprise customers is in scope regardless of where the company is incorporated.

Do non-EU companies need an authorized representative?

Non-EU providers of high-risk AI systems must appoint an authorized representative established in the EU before placing those systems on the EU market (Article 22). Non-EU GPAI model providers must also designate an authorized representative under Article 54. The AR acts as the point of contact for EU authorities and must hold relevant technical documentation.

Does the EU AI Act apply if we only integrate third-party foundation models?

Potentially yes. If you integrate OpenAI, Google, or other foundation model outputs into a product or service used by EU customers, you may be a deployer of an AI system under the EU AI Act. If the use case falls under Annex III (high-risk), you carry deployer obligations under Article 26 regardless of where you are based.

Is there a minimum number of EU users before the EU AI Act applies?

The EU AI Act does not include a de minimis threshold for the number of EU users. If your AI system is placed on the EU market or its output is used in the EU, the regulation applies. Even a single EU customer using your high-risk AI product triggers obligations.

Who can serve as an authorized representative?

The EU AI Act does not restrict which types of entities can serve as authorized representatives, provided they are established in the EU. A law firm, consultancy, or specialised compliance firm could serve as AR. However, the AR must be able to fulfil specific obligations, including holding technical documentation, cooperating with authorities, and being reachable for inspections. Seek local legal counsel for AR selection and contract terms.

Does GDPR compliance cover the EU AI Act?

No. GDPR and the EU AI Act are separate regulations with different obligations. GDPR covers personal data processing. The EU AI Act covers AI system risk management, transparency, human oversight, technical documentation, conformity assessment, and more. You may need to comply with both, and a GDPR data processing agreement does not satisfy EU AI Act requirements.

What is the minimum a non-EU company should do?

At minimum: (1) determine if your AI systems are in scope, (2) classify risk level against Annex III, (3) identify your role (provider, deployer, GPAI provider), (4) determine if you need an authorized representative, (5) build a core evidence pack (risk assessment, technical documentation, usage logs), (6) implement Article 4 AI literacy training, and (7) meet Article 50 transparency obligations where applicable.

Abhishek G Sharma

Founder & CEO, Move78 International Limited

ISO 42001 LA · ISO 27001 LA · CISA · CISM · CRISC · CEH · CCSK · CAIGO · CAIRO

20+ years in cybersecurity and risk management. Based in Shanghai and Hong Kong — advises non-EU companies navigating EU AI Act compliance from an APAC perspective.


Disclaimer

This guide is for educational and informational purposes only. It does not constitute legal advice. The EU AI Act (Regulation (EU) 2024/1689) is a complex regulation and its interpretation may evolve. Authorized representative selection, contractual terms, and scope delimitation require local legal advice. Move78 International Limited is not a law firm and does not act as an authorized representative. All references current as of March 2026.