Why reading the EU AI Act isn't enough — and why frameworks matter

Regulation 2024/1689 contains 113 articles and 13 annexes. It defines requirements for risk management, data governance, technical documentation, human oversight, accuracy, robustness, cybersecurity, logging, transparency, post-market monitoring, and incident reporting. What it doesn't tell you is how to build the management system that satisfies those requirements.

That's what an AI governance framework provides: a structured approach to designing, implementing, operating, and continuously improving an AI management system. Without one, you're stitching together ad-hoc policies and hoping the regulator doesn't ask to see the thread.

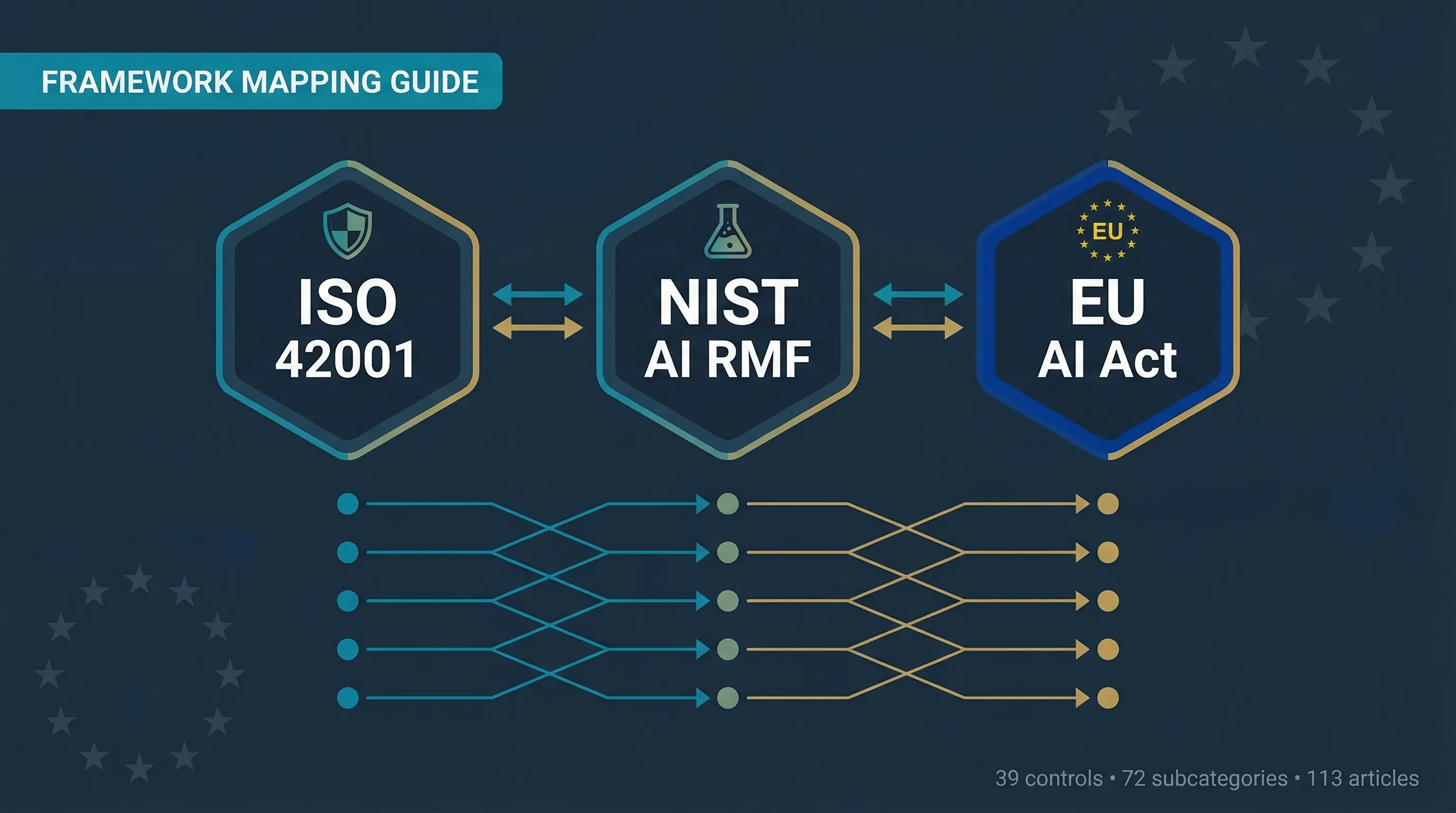

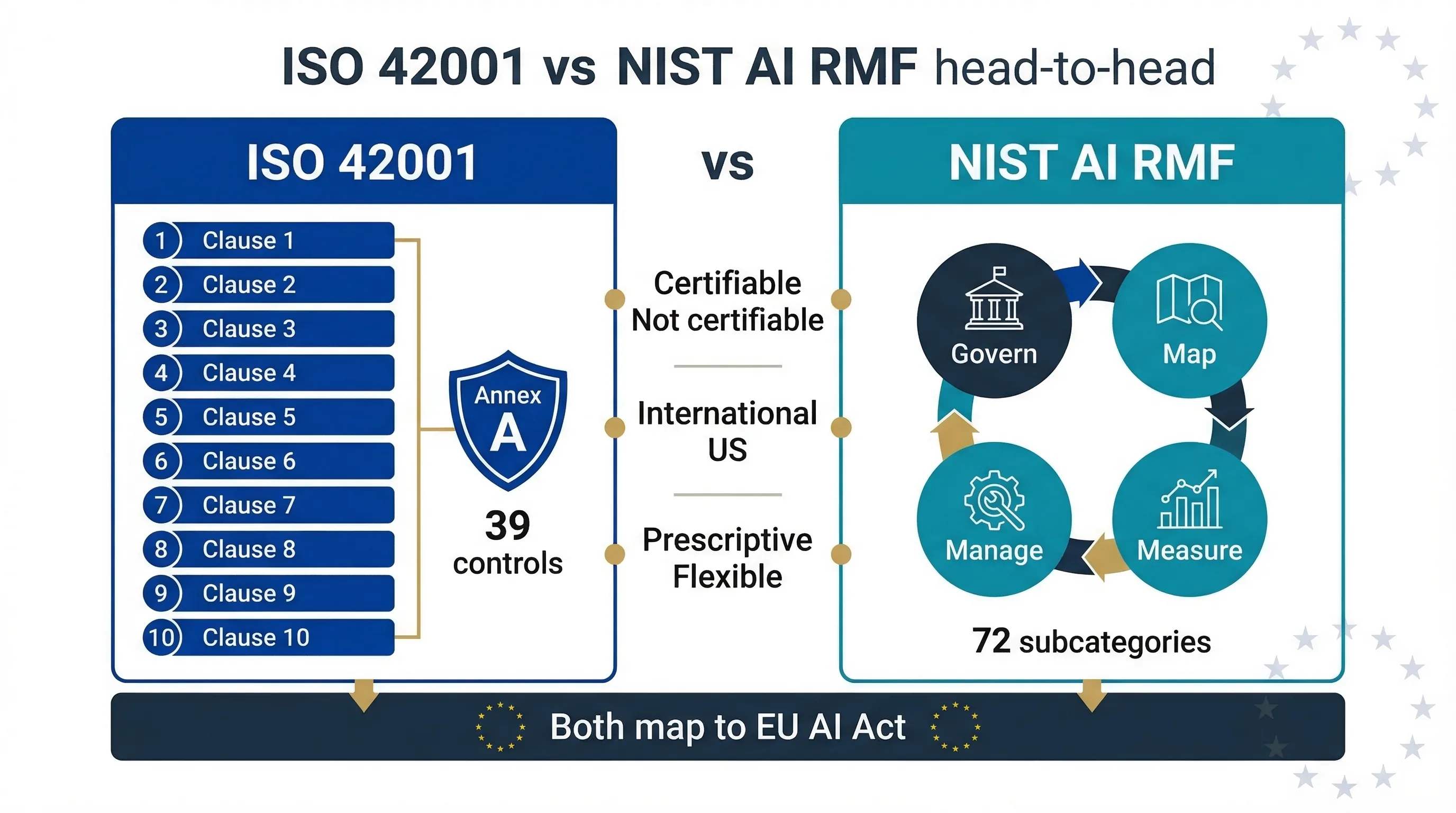

Two frameworks dominate: ISO/IEC 42001:2023 (international standard, certifiable) and NIST AI RMF 1.0 (US voluntary framework, not certifiable). Neither was designed specifically for the EU AI Act, but both map substantially to its requirements. The practical question: which framework do you use, and how does it connect to your legal obligations?

This guide answers that with clause-by-clause mapping tables, a head-to-head comparison, gap analysis, and an implementation roadmap. For the full picture of EU AI Act obligations before you get into frameworks, see our complete EU AI Act compliance guide. To run your own gap analysis right now, use the ISO/NIST Gap Analyzer.

ISO/IEC 42001: the first international standard for AI management systems

ISO/IEC 42001:2023 is the first international standard providing requirements for establishing, implementing, maintaining, and continually improving an AI management system (AIMS). Published in December 2023 by ISO/IEC JTC 1/SC 42, it follows the Annex SL high-level structure shared with ISO 27001, ISO 9001, and ISO 14001 — which means it integrates with existing management systems your organisation may already run.

It's certifiable. Organisations can achieve third-party ISO 42001 certification through accredited bodies. According to a 2025 Sprinto survey, 76% of organisations plan to adopt ISO 42001 as their AI governance backbone.

Structure

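The standard has 10 clauses in the Annex SL structure. Clauses 1 to 3 cover scope, normative references, and terms and definitions; the auditable requirements sit in Clauses 4 to 10: Context of the organisation (Clause 4), Leadership (Clause 5), Planning (Clause 6), Support (Clause 7), Operation (Clause 8), Performance evaluation (Clause 9), and Improvement (Clause 10). Annex A contains 38 controls grouped under 9 control objectives (A.2 to A.10). Annexes B, C, and D provide implementation guidance for the Annex A controls, potential AI-related organisational objectives and risk sources, and guidance on applying the AIMS across domains and sectors.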

What it doesn't cover

ISO 42001 is a management system standard, not a product certification standard. It doesn't test your AI system's performance, accuracy, or bias. It certifies that your organisation has a systematic approach to managing AI risks. And it doesn't automatically satisfy EU AI Act obligations. It provides the management backbone, but you still need to map specific regulatory requirements (conformity assessment, CE marking, fundamental rights impact assessment (FRIA), logging, incident reporting) to your controls.

Key facts: Published December 2023 · Standard: ISO/IEC 42001:2023 · 10 clauses (Annex SL) · 38 Annex A controls · Certifiable: Yes

NIST AI RMF: the US voluntary framework for AI risk management

NIST AI RMF 1.0 is a voluntary risk management framework published on January 26, 2023 by the US National Institute of Standards and Technology. It's organised around four core functions: Govern, Map, Measure, and Manage. It isn't legally binding and isn't certifiable — you can't get "NIST AI RMF certified," which is a common misconception. But it's widely adopted outside the US as a practical risk methodology.

The four core functions

GOVERN: Establish organisational AI risk governance (policies, roles, accountability, culture). This is the cross-cutting function.

MAP: Identify and contextualise AI risks. Understand the system's context, stakeholders, and potential impacts.

MEASURE: Assess and monitor AI risks through bias testing, performance evaluation, and drift detection.

MANAGE: Treat, respond to, and recover from AI risks through mitigation, incident response, and continuous monitoring.

Across these four functions there are 19 categories (GOVERN has 6, MAP has 5, MEASURE has 4, MANAGE has 4) and approximately 72 subcategories. The companion NIST AI RMF Playbook provides concrete implementation guidance for each subcategory.

What it doesn't cover

There's no certification mechanism: NIST doesn't certify organisations. The framework wasn't designed as a compliance tool for any specific regulation, and it doesn't prescribe documentation formats or specific technical controls. It's US in origin but regulation-agnostic in design. It's complemented by NIST AI 600-1 (Generative AI Profile, published July 2024), which describes 12 risks that are unique to or exacerbated by generative AI.

Key facts: Published January 26, 2023 · Document: NIST AI 100-1 · 4 functions, 19 categories, ~72 subcategories · Certifiable: No · GenAI Profile: NIST AI 600-1 (July 2024)

Framework crosswalk: how ISO 42001, NIST AI RMF, and EU AI Act map together

Neither ISO 42001 nor NIST AI RMF was designed specifically for the EU AI Act. But the overlap is substantial. The table below maps the key EU AI Act obligations to their closest equivalents in each framework. Where gaps exist — obligations that a framework doesn't cover — they're flagged explicitly. I've built this from clause-level analysis, not high-level category matching.

| EU AI Act Obligation | Article(s) | ISO 42001 Mapping | NIST AI RMF Mapping | Gap Notes |
|---|---|---|---|---|
| Risk management system | Art. 9 | Clause 6.1, Clause 8.2, Annex A Controls A.5.2-A.5.4 | MAP (full function), MANAGE MG-1, MG-2 | Strong coverage in both. ISO 42001 more prescriptive on management system structure. |
| Data governance | Art. 10 | Annex A Controls A.7.2-A.7.6 (data for AI systems) | MAP MP-2.3, MEASURE MS-2.6 | Art. 10 has specific training/validation/testing data and bias examination requirements that exceed both frameworks. |
| Technical documentation | Art. 11 | Clause 7.5, Annex A Control A.6.2.7 | GOVERN GV-1.3, MAP MP-5.2 | GAP: Annex IV documentation template not covered by either framework. |
| Record-keeping / logging | Art. 12 | Clause 9.1, Annex A Control A.6.2.8 | MEASURE MS-2, MANAGE MG-3.2 | GAP: Automatic logging design requirements are EU AI Act-specific. |
| Transparency to users | Art. 13 | Annex A Control A.8.2 | GOVERN GV-1.2, MAP MP-5.1 | Reasonable alignment. Art. 13 specifies particular information that must be disclosed. |
| Human oversight | Art. 14 | Annex A Controls A.9.2-A.9.4 (use of AI systems) | GOVERN GV-5, MAP MP-3.4 | GAP: Art. 14 requires specific measures designed into the system. Neither framework prescribes this at the same specificity. |
| Accuracy, robustness, cybersecurity | Art. 15 | Annex A Controls A.6.2.3, A.6.2.4, Clause 6.1.2 | MEASURE MS-2, MAP MP-2.6 | GAP: Art. 15 requires resilience against adversarial attacks such as data poisoning. Neither framework covers these cybersecurity-specific requirements at this granularity. |
| Quality management system | Art. 17 | Entire standard (Clauses 4-10) | GOVERN function (partially) | Strongest alignment. ISO 42001 is itself a management system standard and substantially satisfies Art. 17 QMS requirements. |
| Conformity assessment | Art. 43 | Clause 9.2 (internal audit), Clause 9.3 (management review) | Not covered | MAJOR GAP: Conformity assessment is EU AI Act-specific. ISO 42001 internal audit is not equivalent. |
| CE marking | Art. 48 | Not covered | Not covered | MAJOR GAP: EU product regulation-specific. |
| EU database registration | Art. 49 | Not covered | Not covered | MAJOR GAP: EU-specific regulatory requirement. |
| Post-market monitoring | Art. 72 | Clause 10.1, Clause 9.1 | MANAGE MG-3, MG-4 | GAP: EU AI Act requires a specific post-market monitoring system documented as part of the QMS. |
| Serious incident reporting | Art. 73 | Annex A Controls A.8.3, A.8.4 | MANAGE MG-4 | MAJOR GAP: Mandatory reporting to national authorities within specified timeframes. Neither framework mandates regulatory reporting. |
| AI literacy | Art. 4 | Clause 7.2, Clause 7.3 | GOVERN GV-2 | Good alignment. ISO 42001 Clauses 7.2 (competence) and 7.3 (awareness) map well to AI literacy. |
| Fundamental rights impact assessment (FRIA) | Art. 27 | Clause 6.1.4, Clause 8.4, Annex A Controls A.5.2-A.5.5 | MAP MP-3, MP-4 | GAP: FRIA has specific requirements (affected persons, fundamental rights focus) not fully covered by either. |
| Transparency / AI content labeling | Art. 50 | Annex A Control A.8.2 | Not specifically covered | GAP: Art. 50 labeling/watermarking requirements are EU AI Act-specific. |
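If you maintain the crosswalk as data rather than as a document, the rows that neither framework touches fall out of a simple filter. The sketch below is a minimal Python illustration, assuming a hand-transcribed subset of the table; the `CrosswalkRow` type and the function names are hypothetical and not part of any tool mentioned in this guide. It only detects cells marked "Not covered"; the partial gaps flagged in the Gap Notes column would need a separate field.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CrosswalkRow:
    obligation: str
    articles: str
    iso_42001: Optional[str]    # None = "Not covered" in the table
    nist_ai_rmf: Optional[str]  # None = "Not covered" / "Not specifically covered"


# Five rows transcribed from the crosswalk table; extend with the rest as needed.
CROSSWALK = [
    CrosswalkRow("Risk management system", "Art. 9",
                 "Clause 6.1, Clause 8.2, A.5.2-A.5.4", "MAP, MANAGE MG-1, MG-2"),
    CrosswalkRow("Conformity assessment", "Art. 43", "Clause 9.2, Clause 9.3", None),
    CrosswalkRow("CE marking", "Art. 48", None, None),
    CrosswalkRow("EU database registration", "Art. 49", None, None),
    CrosswalkRow("Transparency / AI content labeling", "Art. 50", "A.8.2", None),
]


def uncovered_by(rows: list[CrosswalkRow], framework: str) -> list[str]:
    """Obligations with no mapping at all in one framework ("iso" or "nist")."""
    attr = {"iso": "iso_42001", "nist": "nist_ai_rmf"}[framework]
    return [r.obligation for r in rows if getattr(r, attr) is None]


def uncovered_by_both(rows: list[CrosswalkRow]) -> list[str]:
    """Obligations neither framework maps to: pure EU regulatory overlay."""
    return [r.obligation for r in rows
            if r.iso_42001 is None and r.nist_ai_rmf is None]


if __name__ == "__main__":
    print(uncovered_by_both(CROSSWALK))
    # ['CE marking', 'EU database registration']
    print(uncovered_by(CROSSWALK, "nist"))
```

Keeping the mapping in one structure also means the Gap Analyzer output, the Statement of Applicability, and this guide can be regenerated from the same source instead of drifting apart.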

🔎 Run your own gap analysis

The ISO/NIST Gap Analyzer maps your current controls against both frameworks and flags EU AI Act gaps automatically.

For a narrative overview of the crosswalk, see our ISO/NIST crosswalk blog article.

What ISO 42001 and NIST AI RMF don't cover: EU AI Act-specific gaps

In my experience, implementing ISO 42001 or NIST AI RMF gets you roughly 60-70% of the way to EU AI Act compliance for the management system and risk governance requirements. The remaining 30-40% consists of EU-specific regulatory obligations that must be addressed separately. Here are the six gaps you can't ignore.

Gap 1: Conformity assessment and CE marking (Art. 43, 48)

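Neither framework provides a conformity assessment procedure. For high-risk systems under Annex III points 2 to 8 (which include critical infrastructure), providers follow the internal control procedure in Annex VI. For biometric systems under Annex III point 1, internal control is available only where the provider has applied harmonised standards or common specifications in full; otherwise a notified body must assess the system under Annex VII. High-risk AI in products covered by Annex I follows the conformity assessment of the relevant sectoral legislation. The provider then draws up the EU declaration of conformity and affixes the CE marking. This is pure EU product regulation with no framework equivalent.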

Gap 2: EU database registration (Art. 49)

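Providers must register Annex III high-risk AI systems in the EU database before placing them on the market or putting them into service, and deployers that are public authorities must register their use of such systems. This is an administrative requirement with no framework mapping.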

Gap 3: Mandatory incident reporting (Art. 73)

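Both frameworks recommend incident tracking and communication. Neither mandates reporting to a national regulatory authority within fixed timeframes. Article 73 requires providers of high-risk AI systems to report serious incidents to the market surveillance authority of the Member State where the incident occurred, immediately after establishing a causal link (or its reasonable likelihood) and in any event within 15 days of becoming aware of it. Shorter limits apply to a widespread infringement or a serious disruption of critical infrastructure (2 days) and to the death of a person (10 days).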
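A deadline tracker makes the reporting clock explicit in your incident procedure. This is a minimal sketch, assuming the outer limits as I read Article 73(2) to (4): 15 days after awareness in general, 2 days for a widespread infringement or serious disruption of critical infrastructure, and 10 days in the event of a death. The enum and function names are hypothetical. Treat the limits as configuration to verify against the regulation text, and remember they are backstops, not targets.

```python
from datetime import datetime, timedelta
from enum import Enum


class IncidentKind(Enum):
    GENERAL = "general"
    WIDESPREAD_OR_CRITICAL_INFRA = "widespread_or_critical_infrastructure"
    DEATH = "death"


# ASSUMPTION: outer limits in days after becoming aware of the incident, based on
# one reading of Art. 73(2)-(4). Verify against the regulation and any guidance.
MAX_DAYS_AFTER_AWARENESS = {
    IncidentKind.GENERAL: 15,
    IncidentKind.WIDESPREAD_OR_CRITICAL_INFRA: 2,
    IncidentKind.DEATH: 10,
}


def report_deadline(aware_at: datetime, kind: IncidentKind) -> datetime:
    """Latest acceptable report time. Reporting is still expected immediately once a
    causal link (or its reasonable likelihood) is established; this is the backstop."""
    return aware_at + timedelta(days=MAX_DAYS_AFTER_AWARENESS[kind])


def is_overdue(aware_at: datetime, kind: IncidentKind, now: datetime) -> bool:
    """True if the backstop deadline for this incident kind has already passed."""
    return now > report_deadline(aware_at, kind)


if __name__ == "__main__":
    aware = datetime(2026, 3, 2, 9, 0)
    for kind in IncidentKind:
        print(kind.value, report_deadline(aware, kind).isoformat())
```

In practice you would store `aware_at` at the moment the incident is triaged as potentially serious, so the clock can't silently start later than it should.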

Gap 4: Automatic logging requirements (Art. 12)

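High-risk AI systems must technically allow the automatic recording of events (logs) over their lifetime. Under Article 12(2), the logs must support identifying situations that may present a risk or lead to a substantial modification, post-market monitoring, and monitoring of the system's operation. This is a technical design requirement that goes beyond the operational monitoring covered by ISO 42001 Clause 9.1 or NIST MEASURE.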
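What automatic logging looks like depends on the system, but the core pattern is an append-only record written by the system itself rather than by an operator. The sketch below is one illustrative way to do it, assuming an in-memory store and a SHA-256 hash chain for tamper evidence. The hash chain is my design choice, not something Article 12 prescribes, and `EventLog` and the event names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


class EventLog:
    """Append-only, hash-chained event log (kept in memory for this sketch)."""

    def __init__(self, system_id: str):
        self.system_id = system_id
        self.records: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Hash a canonical JSON serialisation so any later edit is detectable.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, event_type: str, **details) -> dict:
        """Append one event, linking it to the hash of the previous record."""
        body = {
            "system_id": self.system_id,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event_type": event_type,
            "details": details,
            "prev_hash": self.records[-1]["hash"] if self.records else GENESIS,
        }
        entry = {**body, "hash": self._digest(body)}
        self.records.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link in the chain."""
        prev = GENESIS
        for entry in self.records:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

For example, `log = EventLog("credit-scoring-v3")` followed by `log.record("inference", input_ref="req-001", output="score=0.72")` writes one linked record; editing any stored record afterwards makes `log.verify()` return `False`. A production system would persist records to write-once storage and define event types from the Article 12(2) purposes.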

Gap 5: Annex IV technical documentation format (Art. 11)

Article 11 and Annex IV specify the exact content required in technical documentation. Neither framework prescribes documentation to this level of specificity.
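A completeness check against Annex IV is easy to automate once the technical file is organised by Annex IV point. The sketch assumes a draft stored as a mapping from point number to text; the point titles are my shortened paraphrases of Annex IV, so check them against the official text. It tests only that each point has content, not whether that content is adequate.

```python
# Annex IV points, paraphrased. ASSUMPTION: wording shortened; check the official text.
ANNEX_IV_POINTS = {
    1: "General description of the AI system",
    2: "Detailed description of the system's elements and development process",
    3: "Monitoring, functioning and control of the AI system",
    4: "Appropriateness of the performance metrics",
    5: "Risk management system (Art. 9)",
    6: "Relevant changes made through the life cycle",
    7: "Harmonised standards or other specifications applied",
    8: "Copy of the EU declaration of conformity (Art. 47)",
    9: "Post-market monitoring system and plan (Art. 72)",
}


def missing_points(draft: dict[int, str]) -> list[int]:
    """Annex IV point numbers with no non-blank content in the draft."""
    return [n for n in ANNEX_IV_POINTS if not draft.get(n, "").strip()]


def report(draft: dict[int, str]) -> str:
    """Human-readable summary of missing points (completeness only)."""
    missing = missing_points(draft)
    if not missing:
        return "All Annex IV points have content (completeness only, not adequacy)."
    return "Missing: " + "; ".join(f"{n}. {ANNEX_IV_POINTS[n]}" for n in missing)


if __name__ == "__main__":
    draft = {1: "Credit scoring model for consumer loans", 5: "See risk register"}
    print(report(draft))
```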

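Gap 6: AI-generated content labeling (Art. 50)

Article 50(2) requires providers of AI systems that generate synthetic audio, image, video, or text content to mark the outputs in a machine-readable format so they are detectable as artificially generated or manipulated. The Code of Practice on marking AI-generated content is meant to help providers implement this in practice, for example through metadata and watermarking. Neither framework addresses these requirements.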

The bottom line

ISO 42001 or NIST AI RMF gives you the governance foundation. The six gaps above are the regulatory overlay you bolt on top. Don't treat framework adoption as a substitute for EU AI Act compliance — treat it as 60-70% of the journey with a clear list of what's left.

ISO 42001 vs NIST AI RMF: certifiable management system vs flexible risk methodology — most organisations need both.

ISO 42001 vs NIST AI RMF: which framework should you implement?

This is the question I get asked most often by CISOs and GRC leads. The answer depends on your starting point, your regulatory exposure, and whether certification matters to you. Here's the head-to-head.

| Dimension | ISO/IEC 42001:2023 | NIST AI RMF 1.0 |
|---|---|---|
| Origin | ISO/IEC (international) | NIST (US federal) |
| Status | International standard | Voluntary framework |
| Certifiable | Yes (third-party audit) | No |
| Structure | Annex SL management system (Clauses 4-10) | 4 functions (Govern, Map, Measure, Manage) |
| Prescriptiveness | Higher: specifies "shall" requirements | Lower: descriptive, flexible |
| Integration | Integrates with ISO 27001, 9001, 14001 via Annex SL | Standalone; references NIST CSF, SP 800-53 |
| Controls | 38 Annex A controls (9 control objectives) | ~72 subcategories (19 categories) |
| Risk approach | AIMS-integrated risk treatment | Risk management lifecycle |
| Documentation | Extensive (management system + Statement of Applicability) | Less formal (profiles, playbooks) |
| Cost to implement | Higher (certification costs, formal audit) | Lower (no certification, flexible adoption) |
| EU AI Act alignment | Stronger for management system (Art. 17 QMS) | Stronger for risk identification and measurement |
| Best for | EU-facing organisations, regulated industries, procurement requirements | US-centric operations, flexible adoption, risk methodology focus |

So which one first? If you need certification (for customer trust, EU procurement, or regulatory positioning), choose ISO 42001. Of the two, it's the only one you can be certified against. If you need a practical risk methodology and flexibility matters more than certification, choose NIST AI RMF. It's faster to adopt, and the Playbook gives you concrete implementation guidance.

If you already have an ISO 27001 ISMS, ISO 42001 is the natural extension. Both share the Annex SL structure. You're extending your existing management system, not building a new one from scratch.

If you need both EU AI Act compliance AND US AI governance alignment: implement both. They complement rather than compete. Use the ISO/NIST Gap Analyzer to run a gap analysis across both frameworks simultaneously.

How to implement: a framework adoption roadmap for EU AI Act compliance

Here's the sequencing I recommend for mid-market organisations (50-250 employees) pursuing ISO 42001 implementation with EU AI Act compliance overlay. The total timeline runs 24-32 weeks. If you already have ISO 27001, you'll move faster — the Annex SL shared structure shaves 4-8 weeks off the early phases.

Phase 1: Scope and inventory (Weeks 1-4)

Define the AIMS scope: which AI systems, which business processes, which organisational units. Conduct an AI system inventory (maps to ISO 42001 Clause 4.3, NIST GOVERN GV-1.6, EU AI Act Art. 26). Classify systems by risk level against Annex III. Related tools: Shadow AI Discovery Protocol, Compliance Checker.
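For the inventory step, a structured record per system makes the Annex III screen repeatable. This minimal sketch assumes a hypothetical `AISystem` record tagged with the Annex III point numbers its use case touches; the area names are paraphrased headings. It produces a triage label for prioritising legal review, not a risk classification: it ignores the specific use cases listed within each point, the Article 6(3) derogation, and Annex I products.

```python
from dataclasses import dataclass, field

# Annex III high-risk areas by point number (headings paraphrased; each point
# lists specific use cases that must be checked individually).
ANNEX_III_AREAS = {
    1: "Biometrics",
    2: "Critical infrastructure",
    3: "Education and vocational training",
    4: "Employment, workers' management and access to self-employment",
    5: "Access to essential private and public services and benefits",
    6: "Law enforcement",
    7: "Migration, asylum and border control management",
    8: "Administration of justice and democratic processes",
}


@dataclass
class AISystem:
    """One inventory entry. Hypothetical minimal fields; real inventories need more."""
    name: str
    business_owner: str
    vendor: str = "internal"
    annex_iii_points: list[int] = field(default_factory=list)


def triage(system: AISystem) -> str:
    """First-pass label for prioritising review, not a legal classification."""
    unknown = [p for p in system.annex_iii_points if p not in ANNEX_III_AREAS]
    if unknown:
        raise ValueError(f"Unknown Annex III point(s): {unknown}")
    if system.annex_iii_points:
        # Still confirm the specific use case and whether Art. 6(3) applies.
        return "candidate-high-risk"
    # Outside Annex III: Art. 5 prohibitions, Annex I products and Art. 50 still apply.
    return "review-other-obligations"


if __name__ == "__main__":
    inventory = [
        AISystem("CV screening assistant", "HR", vendor="ExampleVendor", annex_iii_points=[4]),
        AISystem("Support chatbot", "Customer service"),
    ]
    for s in inventory:
        print(s.name, "->", triage(s))
```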

Phase 2: Risk assessment and gap analysis (Weeks 4-8)

Conduct AI risk assessment per ISO 42001 Clause 6.1 / NIST MAP and MEASURE. Map existing controls against ISO 42001 Annex A and NIST subcategories. Identify EU AI Act-specific gaps: conformity assessment, CE marking, logging, FRIA, incident reporting. Related tool: ISO/NIST Gap Analyzer.

Phase 3: Design controls and documentation (Weeks 8-16)

Develop the AI policy (ISO 42001 Clause 5.2). Implement the selected Annex A controls and record them, with justifications, in a Statement of Applicability (Clause 6.1.3). Build technical documentation per Annex IV. Establish human oversight arrangements (Art. 14 / ISO 42001 A.9.2-A.9.4). Design logging procedures (Art. 12). For deployer-specific obligations, see the High-Risk Deployer Guide. Related tools: Human Oversight Log, Deployer Self-Assessment.
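A Statement of Applicability is, at heart, a table of controls with an inclusion decision and a justification for each; as I read Clause 6.1.3, justifications are needed for exclusions as well as inclusions. The sketch below assumes a hypothetical `SoAEntry` record and runs a few sanity checks on a draft. The control IDs and titles come from Annex A; the justifications are illustrative.

```python
from dataclasses import dataclass


@dataclass
class SoAEntry:
    control_id: str       # ISO/IEC 42001 Annex A reference
    title: str
    applicable: bool      # included in the AIMS (True) or excluded (False)
    justification: str    # why it is included or excluded
    implemented: bool = False


def soa_issues(entries: list[SoAEntry]) -> list[str]:
    """Basic consistency checks on a draft Statement of Applicability."""
    issues: list[str] = []
    seen: set[str] = set()
    for e in entries:
        if e.control_id in seen:
            issues.append(f"{e.control_id}: duplicate entry")
        seen.add(e.control_id)
        if not e.justification.strip():
            issues.append(f"{e.control_id}: missing justification")
        if e.implemented and not e.applicable:
            issues.append(f"{e.control_id}: implemented but marked excluded")
    return issues


if __name__ == "__main__":
    draft = [
        SoAEntry("A.7.4", "Quality of data for AI systems", True,
                 "Supports Art. 10 data governance", implemented=True),
        SoAEntry("A.8.2", "System documentation and information for users", True,
                 "Supports Art. 13 transparency"),
        SoAEntry("A.10.3", "Suppliers", False, ""),
    ]
    print(soa_issues(draft))  # ['A.10.3: missing justification']
```

Linking each justification to the EU AI Act article it supports, as in the draft above, gives auditors and legal reviewers the same trail.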

Phase 4: Implement and train (Weeks 16-24)

Deploy controls in the operational environment. Conduct AI literacy training (Art. 4 / ISO 42001 Clauses 7.2-7.3). Establish incident response and reporting procedures (Art. 73). Document vendor due diligence (Art. 25-26 / ISO 42001 Clause 8.1 and Annex A Controls A.10.2-A.10.4). Related tools: AI Literacy Planner, AI Vendor Risk Screener.

Phase 5: Evaluate and certify (Weeks 24-32)

Conduct an internal audit (ISO 42001 Clause 9.2) and a management review (Clause 9.3). Address nonconformities and corrective actions (Clause 10.2). If pursuing certification, engage an accredited certification body. If self-assessing for the EU AI Act, conduct the conformity assessment per Annex VI.

🏗 Guided framework implementation

Move78 International provides broader cross-framework implementation support for teams aligning ISO 42001, NIST AI RMF, and EU AI Act governance work.

Three frameworks, one compliance programme: how they work together

I get a version of this question every week: "Do I need to choose between ISO 42001 and NIST AI RMF?" No. They're not competitors. They occupy different layers of your AI governance stack.

ISO 42001

Management system backbone — policies, roles, risk treatment, internal audit, management review, continual improvement. Satisfies Art. 17 QMS requirements.

NIST AI RMF

Risk methodology engine — MAP and MEASURE functions provide the operational playbook for risk assessment within the ISO 42001 management system.

EU AI Act

Legal obligations — conformity assessment, CE marking, FRIA, incident reporting, database registration, content labeling. Overlay requirements that neither framework fully covers.

Start with ISO 42001 as your structural foundation. Use NIST AI RMF MAP/MEASURE as your risk assessment methodology inside Clauses 6 and 8. Then gap-fill the EU AI Act-specific items as overlay requirements. That's how you build one coherent programme instead of three competing workstreams.

Free tools for AI governance framework implementation

Every tool runs in your browser, collects zero data, and requires no login. These are the tools most relevant to organisations implementing ISO 42001 or NIST AI RMF alongside EU AI Act compliance.

ISO/NIST Gap Analyzer

Map controls against both frameworks, flag EU AI Act gaps

Shadow AI Discovery Protocol

Find undocumented AI across your organisation

AI Vendor Risk Screener

Evaluate vendor AI governance posture

Bias Testing Safe Harbor Protocol

Structure bias assessment per NIST MEASURE

Agentic AI Bounds Definer

Set operational boundaries for autonomous AI

RAG Data Hygiene Screener

Assess data quality for retrieval-augmented systems

AI Literacy Training Planner

Build Article 4 literacy programme

EU AI Act Compliance Checker

Quick classification against full regulation

Deployer Obligation Self-Assessment

Map gaps against Article 26

Human Oversight Log

Structure oversight arrangements

FAQ: AI governance frameworks and EU AI Act compliance

Can you get NIST AI RMF certified?

No. NIST AI RMF is a voluntary framework published by the US National Institute of Standards and Technology. There is no NIST certification programme. NIST does not certify organisations. ISO/IEC 42001 is the certifiable international standard for AI management systems.

Does ISO 42001 certification make you EU AI Act compliant?

No. ISO 42001 provides the management system backbone that covers approximately 60-70% of EU AI Act management and governance requirements. The remaining obligations (conformity assessment, CE marking, database registration, mandatory incident reporting, and specific documentation formats) must be addressed separately as EU-specific regulatory gap-fill items.

Should I start with ISO 42001 or NIST AI RMF?

If you need certifiable AI governance (for EU procurement, customer trust, or regulatory positioning), start with ISO 42001. If you need a practical risk methodology with maximum flexibility, start with NIST AI RMF. If you already have ISO 27001, extend to ISO 42001: the shared Annex SL structure makes integration straightforward.

How does ISO 42001 relate to ISO 27001?

Both follow the Annex SL high-level structure. An organisation with an existing ISO 27001 ISMS can extend it to include AI management by adding ISO 42001-specific clauses and Annex A controls. The management system infrastructure (policies, internal audit, management review, risk treatment) is shared. Some organisations pursue an integrated audit.

What is NIST AI 600-1?

Published July 2024, NIST AI 600-1 is a companion to NIST AI RMF that specifically addresses risks from generative AI systems (LLMs, image generators, etc.). It describes 12 risks that are unique to or exacerbated by generative AI, including confabulation, data privacy, and environmental impacts, and maps them to the AI RMF functions. It's relevant for organisations deploying generative AI under EU AI Act transparency obligations (Article 50).

How long does ISO 42001 implementation take?

For a mid-market organisation (50-250 employees) with an existing ISO 27001 system: 4-6 months to implementation, plus 2-3 months for certification audit. Without an existing management system: 6-9 months to implementation. The certification audit itself takes 2-5 days depending on scope.

How does NIST AI RMF relate to the NIST Cybersecurity Framework (CSF)?

They are separate frameworks designed to work together. CSF covers cybersecurity risk management. AI RMF covers AI-specific risk management. The AI RMF GOVERN function parallels the CSF GOVERN function added in CSF 2.0. Organisations implementing both use CSF for cybersecurity controls and AI RMF for AI-specific risk identification, measurement, and management.

Abhishek G Sharma

Founder & CEO, Move78 International Limited

ISO 42001 Lead Auditor · ISO 27001 Lead Auditor · CISA · CISM · CRISC · CEH · CCSK · CAIGO · CAIRO

20+ years in cybersecurity and risk management. Builds AI governance tools and advisory programmes for mid-market organisations navigating the EU AI Act.

Ready to implement your AI governance framework?

From gap analysis tools to guided workshops and full advisory — we help you build a compliant AI management system.

Disclaimer

This guide is for educational and informational purposes only. It does not constitute legal or certification advice. ISO/IEC 42001:2023 is published by ISO and is subject to copyright. NIST AI RMF 1.0 is a US government publication. The EU AI Act (Regulation 2024/1689) interpretation may evolve as implementing acts and enforcement decisions are published. Consult qualified legal counsel and certification bodies for advice specific to your organisation. Move78 International Limited is not an accredited certification body. All references current as of March 2026.