What counts as a general-purpose AI model under the EU AI Act?

Chapter V of the EU AI Act creates a separate regulatory category for general-purpose AI models — or GPAI models. These are AI models that can perform a wide range of tasks across different domains and can be integrated into many downstream AI systems. Think GPT-4, Claude, Gemini, Llama, Mistral — but also smaller domain models that exhibit general-purpose capability.

The legal definition matters. Article 3(63) defines a GPAI model as an AI model that "displays significant generality and is capable of competently performing a wide range of distinct tasks" regardless of how it was placed on the market. The Commission's Q&A guidance clarifies that it's the capability and generality of the model that determines classification — not the training compute, not the parameter count, and not the business model.

This catches more companies than you'd expect. If you're training a model that can handle diverse tasks across your product suite and selling access to it, you're likely operating a GPAI model. If you're offering it as an API to third parties who integrate it into their own systems — that's almost certainly a GPAI model placement on the market.

GPAI model vs GPAI system vs ordinary AI system

Don't confuse these three. A GPAI model is the underlying model (the weights, the architecture). A GPAI system is a GPAI model integrated into an AI system that can serve a variety of purposes — your chatbot product built on top of GPT-4, for example. An ordinary AI system is purpose-built for a specific task and doesn't exhibit significant generality. The obligations differ for each. Chapter V (Articles 51–56) governs GPAI models specifically. If your GPAI system also qualifies as high-risk under Annex III, you carry both Chapter V and high-risk obligations.

When does a GPAI model have systemic risk?

Article 51 creates a second tier of GPAI obligations for models classified as having "systemic risk." Two paths to that classification exist.

First, the compute threshold: if the cumulative training compute exceeds 10²⁵ FLOPs, the model is presumed to have systemic risk. As of March 2026, only a handful of models from the largest labs (OpenAI, Google DeepMind, Anthropic, Meta) approach or exceed this threshold. If you're a mid-market provider, you're almost certainly below it.

Second, Commission designation: the European Commission can designate a model as having systemic risk based on criteria in Annex XIII, even below the compute threshold. This could capture models with outsized societal impact, unusually broad deployment, or capabilities that create novel risks. No such designations have been made as of March 2026, but the mechanism exists.
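As a rough illustration of the two paths, the sketch below estimates training compute with the widely used "6 × parameters × training tokens" heuristic for dense transformers and compares the result with the 10²⁵ FLOPs presumption. The heuristic, the function names, and the example figures are assumptions made for illustration, not a method prescribed by the Act, and the `commission_designated` flag simply stands in for a Commission decision.

```python
SYSTEMIC_RISK_FLOPS = 1e25  # compute presumption threshold discussed above


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rough estimate: about 6 FLOPs per parameter per training token (heuristic, an assumption)."""
    return 6.0 * parameters * training_tokens


def presumed_systemic_risk(training_flops: float, commission_designated: bool = False) -> bool:
    """True if either path applies: compute above the threshold, or Commission designation."""
    return training_flops > SYSTEMIC_RISK_FLOPS or commission_designated


# Hypothetical mid-market model: 7 billion parameters, 2 trillion training tokens.
flops = estimate_training_flops(7e9, 2e12)
print(f"Estimated training compute: {flops:.2e} FLOPs")
print("Presumed systemic risk:", presumed_systemic_risk(flops))
```

With these made-up figures the estimate lands more than two orders of magnitude below the threshold, which is the typical mid-market situation. Cumulative compute across all training runs is what counts, so a real estimate would need to sum them.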

For mid-market providers

You're unlikely to hit systemic-risk status today. But design your governance with future scaling in mind. If your model's training compute grows, or if the Commission adjusts the threshold or designates models by impact criteria, you don't want to be caught without the infrastructure to comply.

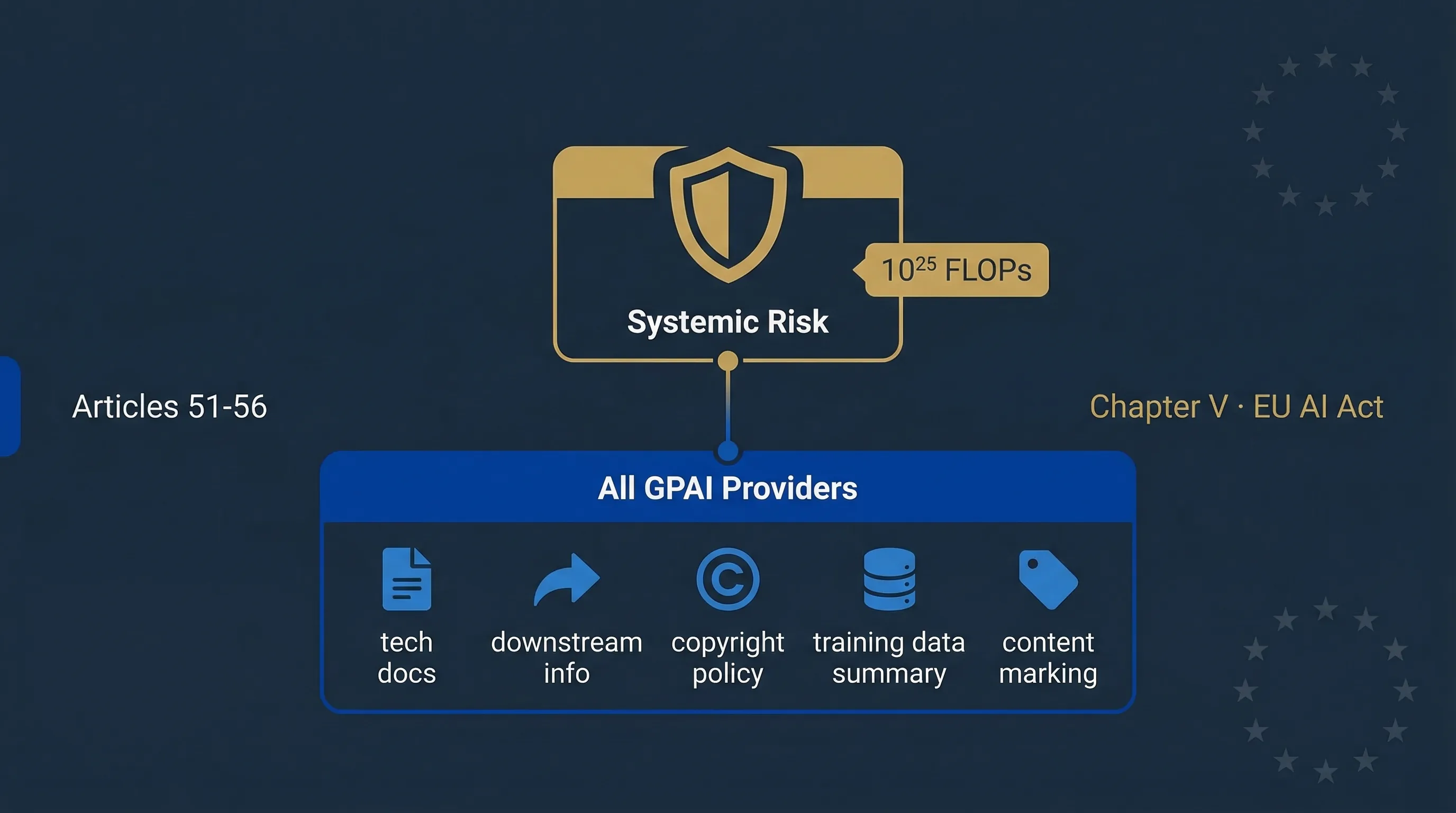

Core obligations for all general-purpose AI model providers

Article 53 applies to every GPAI provider, regardless of model size. These obligations have been enforceable since 2 August 2025. Pre-existing models (on the market before that date) have a grace period until 2 August 2027. Here's what's required.

| Obligation | What it means in practice | Article / Annex |
|---|---|---|
| Technical documentation | Maintain documentation on model training, testing methodology, evaluation results, and known limitations. Annex XI specifies the content requirements. | Art. 53(1)(a), Annex XI |
| Information to downstream providers | Provide downstream AI system providers with documentation on capabilities, limitations, intended uses, and integration requirements. This is a "downstream information pack." | Art. 53(1)(b) |
| Copyright policy | Establish and act on a policy to respect EU copyright law, including the text and data mining opt-out under the DSM Directive (2019/790). Document your approach. | Art. 53(1)(c) |
| Training data summary | Publish a "sufficiently detailed summary" of training data content. The AI Office has published a template for this. The summary must be informative without revealing trade secrets. | Art. 53(1)(d) |
| Content marking support | If your model generates synthetic content, ensure outputs can be marked as AI-generated in a machine-readable format. Support downstream deployers' Article 50 transparency duties. | Art. 50(2), Art. 53 |

Table 1: Article 53 obligations for all GPAI providers. These apply regardless of model size or systemic-risk classification.

For a mid-market provider, this translates to four concrete artefacts: a model card documenting training and evaluation, a downstream information pack for your customers, a copyright compliance policy, and a published training data summary. None of this requires hyperscaler resources. It requires discipline.
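One way to keep track of these four artefacts is a simple gap check that can run in a release pipeline. The sketch below is only an illustration: the dictionary keys and the `missing_artefacts` helper are hypothetical names, and the article references are copied from Table 1.

```python
# The four artefacts named above; keys are hypothetical labels chosen for this sketch.
REQUIRED_ARTEFACTS = {
    "model_card": "Technical documentation (Art. 53(1)(a), Annex XI)",
    "downstream_information_pack": "Information to downstream providers (Art. 53(1)(b))",
    "copyright_policy": "Copyright policy (Art. 53(1)(c))",
    "training_data_summary": "Published training data summary (Art. 53(1)(d))",
}


def missing_artefacts(available: set[str]) -> list[str]:
    """Return descriptions of required artefacts that are not in `available`."""
    return [desc for key, desc in REQUIRED_ARTEFACTS.items() if key not in available]


# Example: a team that has a model card and a copyright policy, but nothing else yet.
gaps = missing_artefacts({"model_card", "copyright_policy"})
for gap in gaps:
    print("Missing:", gap)
```

A check like this only confirms that an artefact exists, not that its content is adequate, so it complements rather than replaces a documentation review.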

Additional obligations if your model has systemic risk

Article 55 layers additional duties on top of Article 53 for systemic-risk GPAI models. If you don't currently exceed 10²⁵ FLOPs and haven't been designated by the Commission, this section is for awareness, not immediate action.

Model evaluations and adversarial testing

Perform state-of-the-art evaluations including adversarial (red-team) testing to identify and mitigate systemic risks. The AI Office may specify benchmarks and methodologies through codes of practice or implementing acts.

Systemic risk assessment and mitigation

Assess and mitigate possible systemic risks at Union level. This means risks that aren't just about individual harm but about aggregate societal impact — large-scale misinformation, critical infrastructure disruption, or widespread discrimination.

Incident tracking and reporting

Track serious incidents and report them to the EU AI Office and relevant national authorities. The reporting threshold is lower than for ordinary AI systems — systemic-risk models face heightened scrutiny.

Cybersecurity protections

Ensure adequate cybersecurity for the model and its physical infrastructure. This includes supply-chain security — who has access to model weights, training data, and deployment pipelines.

Figure: GPAI provider classification — where you sit in the value chain determines which obligations apply.

Non-EU GPAI providers: authorised representatives and scope

Article 54 is straightforward but non-negotiable: if you're a GPAI provider based outside the EU and you place your model on the EU market, you must appoint an authorised representative (AR) established in the Union. The AR acts as the compliance contact for the EU AI Office and national competent authorities.

This applies to every non-EU GPAI provider, not just providers of systemic-risk models. If you're a US-based startup selling API access to European customers, you need an EU-based AR in place before you place the model on the EU market, that is, before EU customers can start calling your API.

The AR must have a written mandate from the GPAI provider specifying their powers and responsibilities. They must be able to provide technical documentation to the AI Office on request. This isn't a PO box arrangement — it's a substantive compliance function.

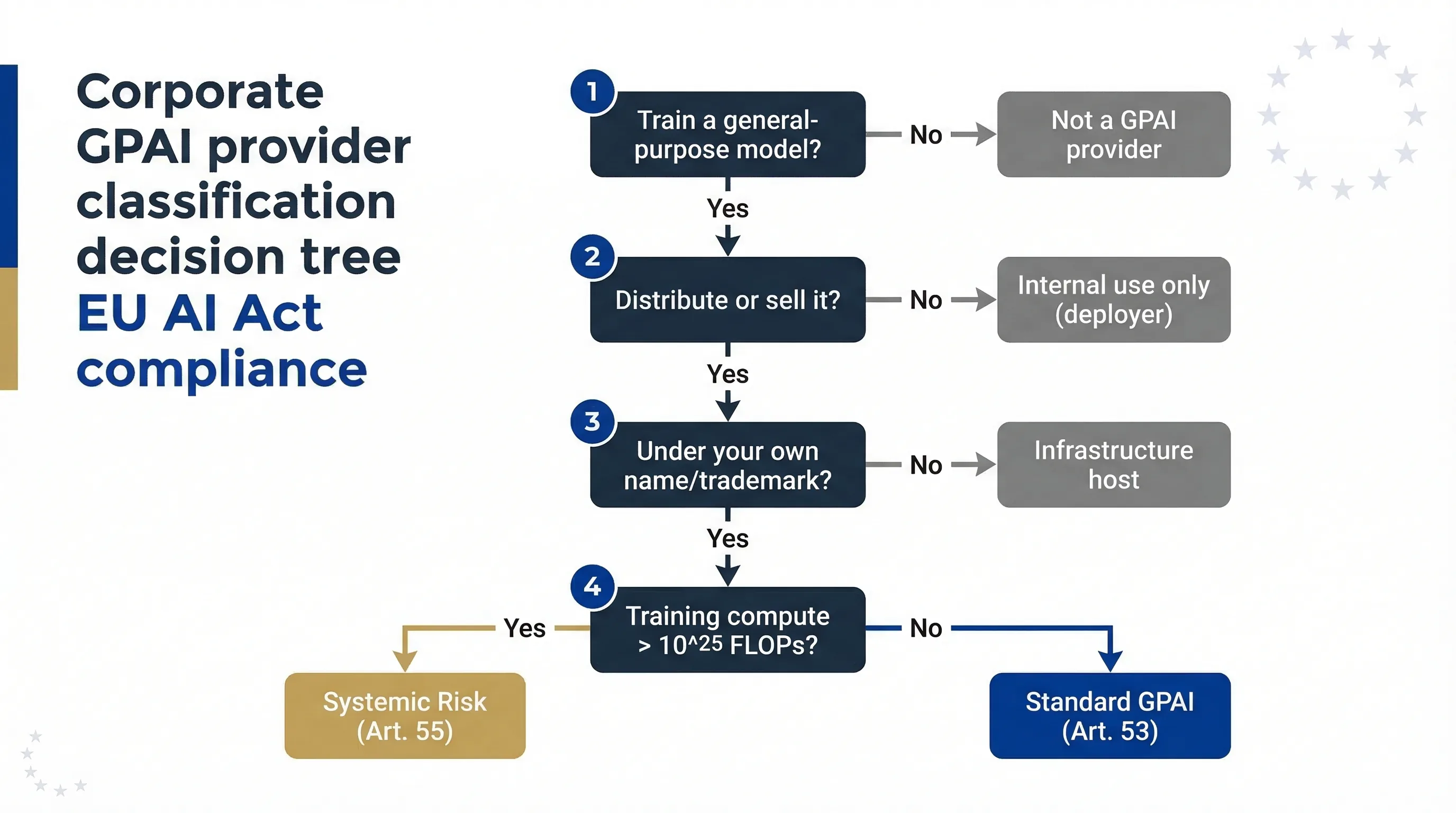

Are you a GPAI provider, downstream provider, or deployer?

This is the question that creates the most confusion. The answer determines which obligations you carry. Here's the decision logic.

| Role | What you do | Your obligations |
|---|---|---|
| GPAI model provider | You train a general-purpose model from scratch and distribute it (API, open-source, or embedded in products) | Full Article 53 obligations. Article 55 if systemic risk. Article 54 AR if non-EU. |
| Substantial fine-tuner / modifier | You substantially modify a GPAI model and redistribute it under your name | May become a GPAI provider. Use the Accidental Provider Classifier to check. |
| Downstream AI system provider | You integrate a GPAI model into a specific-purpose AI system and sell that system | AI system provider obligations (risk classification, documentation, CE marking if high-risk). Not Chapter V unless you also modify the model. |
| Deployer | You use a GPAI-powered AI system in your business operations | Deployer obligations (Article 26, Article 4 literacy, Article 50 transparency). No Chapter V duties. |
| Pure infrastructure host | You host third-party models without modification or configuration | Generally no GPAI provider obligations. But if you select, configure, or bundle models, you may be a system provider. |

Table 2: GPAI value chain roles and corresponding EU AI Act obligations. Classification depends on what you do, not what you call yourself.

The most dangerous grey zone is the "substantial fine-tuner." If you take Llama, fine-tune it on industry-specific data, and offer it as your product to EU customers, you're likely a GPAI provider — not just a deployer. The Commission's Q&A guidance uses the test of whether you've "substantially modified" the model's capabilities or safety characteristics. If your fine-tuning changes what the model can do in a material way, that's a modification. If you're just prompt-tuning or adding a RAG layer, it's probably not.
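The decision logic in Table 2 can be written down as a small function, which is a handy way to force a team to answer each question explicitly. This is a simplified sketch: the `Activity` fields and the `likely_role` helper are hypothetical, the yes/no inputs hide real legal judgment (especially "substantially modifies"), and the result is a starting point for discussion with counsel rather than a classification.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    trains_and_distributes_model: bool = False   # general-purpose model trained from scratch
    substantially_modifies_model: bool = False   # e.g. capability-changing fine-tuning
    redistributes_under_own_name: bool = False
    integrates_into_own_ai_system: bool = False  # and sells that system
    uses_ai_system_internally: bool = False


def likely_role(a: Activity) -> str:
    """Walk the rows of Table 2 from the heaviest obligations to the lightest."""
    if a.trains_and_distributes_model:
        return "GPAI model provider"
    if a.substantially_modifies_model and a.redistributes_under_own_name:
        return "Possible GPAI provider (substantial modifier)"
    if a.integrates_into_own_ai_system:
        return "Downstream AI system provider"
    if a.uses_ai_system_internally:
        return "Deployer"
    return "Pure infrastructure host or out of scope"


# Fine-tuned open-weight model sold under your own brand.
fine_tuner = Activity(substantially_modifies_model=True, redistributes_under_own_name=True)
# Product that only adds prompts and a RAG layer on top of a third-party model.
rag_product = Activity(integrates_into_own_ai_system=True)

print(likely_role(fine_tuner))
print(likely_role(rag_product))
```

The checks are ordered so that the role with the heaviest obligations wins when several flags are set, which mirrors the cautious reading suggested above.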

Practical governance for mid-market GPAI and foundation model teams

You don't need OpenAI's compliance budget to satisfy Chapter V. Here's a realistic checklist for a mid-market GPAI provider — the kind of company with 15–200 employees, training domain-specific models or fine-tuning open-source foundations.

Model card / system card

Document your model's architecture, training methodology, evaluation results, known limitations, and intended uses. Follow the Annex XI structure. This is your core technical documentation artefact — treat it as a living document that's updated with every material model change.

Safety evaluations

Run structured safety evaluations aligned with ISO 42001 risk assessment or NIST AI RMF MAP/MEASURE functions. For mid-market providers, this doesn't mean hiring a red-team lab. It means running standardised benchmarks (bias, toxicity, factual accuracy, safety refusal rates) and documenting the results. Use our ISO/NIST Gap Analyzer to identify governance gaps.

Downstream information pack

Your EU customers will need documentation to satisfy their own deployer obligations — Articles 26, 14, 50. Build a standard downstream information pack: model capabilities and limitations, recommended use conditions, known risks, integration guidance, and content marking support specifications. Ship this with every API contract.

Content marking in API outputs

If your model generates text, images, audio, or video, your API must support machine-readable content marking. This enables your downstream deployers to meet their Article 50 transparency obligations. Embed metadata (XMP, IPTC, C2PA) in generative outputs. If your API doesn't support this yet, that's a gap you need to close before your customers face enforcement in August 2026. See our Article 50 Transparency Guide for implementation patterns.
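To make "machine-readable content marking" concrete for a text API, the sketch below wraps a generated string in a response envelope that carries an explicit AI-generated flag and a content hash. The `provenance` schema, the field names, and the model identifier are all hypothetical; this is not the C2PA, IPTC, or XMP format, which have their own specifications and tooling for media files.

```python
import hashlib
import json
from datetime import datetime, timezone


def mark_output(content: str, model_id: str) -> dict:
    """Wrap generated text with a machine-readable 'AI-generated' marker."""
    return {
        "content": content,
        "provenance": {  # hypothetical schema, not a C2PA manifest
            "ai_generated": True,
            "generator": model_id,
            "generated_at": datetime.now(timezone.utc).isoformat(),
            "content_sha256": hashlib.sha256(content.encode("utf-8")).hexdigest(),
        },
    }


def is_marked_and_intact(response: dict) -> bool:
    """Downstream check: marker present and hash still matches the content."""
    prov = response.get("provenance", {})
    digest = hashlib.sha256(response.get("content", "").encode("utf-8")).hexdigest()
    return prov.get("ai_generated") is True and prov.get("content_sha256") == digest


response = mark_output("Draft summary of the quarterly report.", "example-model-v1")
print(json.dumps(response["provenance"], indent=2))
print("Marked and intact:", is_marked_and_intact(response))
```

A plain hash like this only detects edits to the envelope's content; it is not signed, so it does not prove who produced the output. Signed manifests are what standards such as C2PA add on top.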

Incident and red-team testing

Establish an incident tracking process — even if you're below the systemic-risk threshold. When your model produces harmful outputs, offensive content, or factually dangerous information in production, log it, assess the root cause, and fix it. This builds the muscle you'll need if you ever scale into systemic-risk territory, and it demonstrates governance maturity to enterprise customers who increasingly ask for it.
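The log, assess, fix loop described above needs very little tooling to get started. The following is a minimal in-memory sketch with hypothetical class and field names; a real process would persist records and add severity, ownership, and notification steps.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Incident:
    description: str
    category: str          # e.g. "harmful_output", "offensive_content"
    root_cause: str = ""   # filled in after assessment
    fix: str = ""          # filled in once remediated
    logged_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    @property
    def status(self) -> str:
        """Derive the stage from which fields have been filled in."""
        if self.fix:
            return "fixed"
        if self.root_cause:
            return "assessed"
        return "logged"


class IncidentLog:
    def __init__(self) -> None:
        self.incidents: list[Incident] = []

    def log(self, description: str, category: str) -> Incident:
        incident = Incident(description, category)
        self.incidents.append(incident)
        return incident

    def open_incidents(self) -> list[Incident]:
        """Everything that has not been fixed yet."""
        return [i for i in self.incidents if i.status != "fixed"]


log = IncidentLog()
incident = log.log("Model gave unsafe dosage advice", "harmful_output")
incident.root_cause = "No refusal behaviour for medical dosing questions"
print(incident.status, len(log.open_incidents()))
```

Deriving the status from the filled-in fields keeps the record honest: an incident cannot be marked fixed without someone writing down what the fix was.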

What EU enterprise customers will expect from GPAI vendors

Forget the regulation for a moment. Your EU enterprise customers will demand contractual assurances regardless of whether a regulator comes knocking. Here's what procurement teams are already asking for in due diligence questionnaires.

AI Act compliance statement. A written assertion that your model meets Article 53 requirements, with specific reference to technical documentation, downstream information, copyright policy, and training data summary.

Model card / documentation. Not a marketing white paper. A structured document covering capabilities, limitations, safety evaluation results, known failure modes, and intended use conditions.

Copyright and data governance disclosure. How was training data sourced? What's your policy on copyright compliance? Do you honour text-and-data-mining opt-outs under the DSM Directive? Enterprise legal teams will ask.

Security and incident notification. Cybersecurity posture, access controls on model weights, incident response SLA, and notification timeline for security events. I've seen three procurement due diligence questionnaires in the past quarter that specifically referenced Article 55 cybersecurity requirements — even when the vendor isn't systemic-risk. Customers are using the regulation as a governance benchmark, not just a legal checklist.

Content marking support. Can your API output content with machine-readable provenance metadata? If not, the customer can't meet their Article 50 duties. That's a deal-breaker for compliance-conscious buyers.


FAQ: GPAI models and foundation models under the EU AI Act

If we fine-tune an open-source GPAI model, are we a GPAI provider?

It depends on the extent of modification. If you fine-tune substantially and redistribute under your name, you may become a GPAI provider under Article 53. Minor adaptations (prompt tuning, RAG) generally don't trigger this. Use the Accidental Provider Classifier to check.

Does training compute below 10²³ FLOPs mean we're out of scope?

Not necessarily. The 10²⁵ FLOPs threshold triggers systemic risk presumption specifically. A model can still be classified as GPAI based on generality and capability, regardless of compute. Low compute doesn't exempt you from Article 53 if your model is general-purpose.

What if we only host third-party GPAI models without modifying them?

Pure hosting without modification generally isn't GPAI provision. But if you select, configure, or bundle models in ways that influence behaviour (system prompts, safety filters, routing logic), you may be creating a GPAI system with its own obligations. Document your role clearly.

When did GPAI obligations become enforceable?

Since August 2, 2025. Models already on the market before that date have a grace period until August 2, 2027. Post-August 2025 models must already comply.
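The date rule in this answer is easy to encode if you track several models. The sketch below is a simplified reading with hypothetical names: it treats the date a model was first placed on the market as the only input and ignores edge cases such as substantially modified versions of older models.

```python
from datetime import date

OBLIGATIONS_START = date(2025, 8, 2)  # GPAI obligations apply from this date
LEGACY_DEADLINE = date(2027, 8, 2)    # end of the grace period for earlier models


def compliance_deadline(placed_on_market: date) -> date:
    """Earlier models get until the legacy deadline; later models must comply from day one."""
    if placed_on_market < OBLIGATIONS_START:
        return LEGACY_DEADLINE
    return placed_on_market


print(compliance_deadline(date(2024, 11, 15)))
print(compliance_deadline(date(2026, 1, 10)))
```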

Do non-EU GPAI providers need an authorised representative?

Yes. Article 54 requires non-EU GPAI providers to appoint an EU-based AR before placing their model on the EU market. This applies to all GPAI providers, not just systemic-risk models.

What documentation must all GPAI providers maintain?

Article 53 requires: technical documentation per Annex XI, downstream information pack (capabilities, limitations, intended uses), copyright compliance policy, and a published training data summary. For mid-market: a model card, safety evaluations, downstream pack, and data summary.

What are the penalties for GPAI non-compliance?

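Up to €15 million or 3% of total worldwide annual turnover, whichever is higher. For GPAI model providers the relevant provision is Article 101, under which the Commission imposes the fines; Article 99 sets the penalty tiers for other operators, including €7.5 million or 1% for supplying incorrect information to notified bodies or national competent authorities. The EU AI Office is the primary enforcement body for GPAI. No fines issued as of March 2026.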
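As a worked example of how the cap scales with company size, the sketch below computes the maximum exposure on the assumption that the ceiling is the higher of the fixed amount and the turnover percentage. The function name and the turnover figures are hypothetical, and the result is an upper bound, not a prediction of any actual fine.

```python
FIXED_CAP_EUR = 15_000_000.0
TURNOVER_SHARE = 0.03  # 3% of global annual turnover


def max_gpai_fine_eur(global_annual_turnover_eur: float) -> float:
    """Upper bound on the fine, assuming the higher of the two caps applies."""
    return max(FIXED_CAP_EUR, TURNOVER_SHARE * global_annual_turnover_eur)


# Hypothetical mid-market provider (EUR 200 million) and a large provider (EUR 2 billion).
for turnover in (200e6, 2e9):
    print(f"Turnover EUR {turnover:,.0f}: cap EUR {max_gpai_fine_eur(turnover):,.0f}")
```

For a mid-market company the fixed €15 million figure is the binding one, since 3% of turnover only overtakes it above €500 million in annual turnover.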

How does the Digital Omnibus affect GPAI obligations?

The proposed Omnibus (Council mandate ST-7322-2026-INIT) primarily targets high-risk AI timelines and Article 4 literacy — not Chapter V GPAI obligations. Core Article 53 duties remain intact. Treat current GPAI obligations as stable. See our Digital Omnibus deep dive.

Further reading

- EU AI Act Compliance Guide: full overview of all obligations, timelines, and penalties.
- Article 50 Transparency Guide: content marking obligations GPAI providers must support.
- ISO 42001 / NIST AI RMF Crosswalk: map governance frameworks to EU AI Act requirements.
- AI Literacy Article 4 Guide: train staff on GPAI obligations and downstream duties.
- Digital Omnibus Tracker: status of proposed changes including GPAI-adjacent adjustments.

Abhishek G Sharma

Founder & CEO, Move78 International Limited. 20+ years in cybersecurity and AI risk management. Certifications: ISO 42001 LA, ISO 27001 LA, CISA, CISM, CRISC, CEH, CCSK, CAIGO, CAIRO.


Disclaimer & educational purpose

This guide is published by Move78 International Limited for educational purposes only. It does not constitute legal advice. GPAI classification and obligations are subject to evolving guidance from the EU AI Office. The Commission may adjust compute thresholds or designate models by impact criteria. Organisations should consult qualified legal counsel for compliance decisions. No enforcement fines have been issued under Chapter V as of this publication date. All information current as of March 2026.