Quick answer

An AI agent evidence file is a controlled record for one agent workflow. It should document the agent’s purpose, autonomy level, tool and data access, human approval points, vendor or model evidence, prompt and action logs, testing, disclosure review, override and rollback paths, incident escalation, and the owner responsible for keeping the evidence current.

Why AI agents need separate evidence

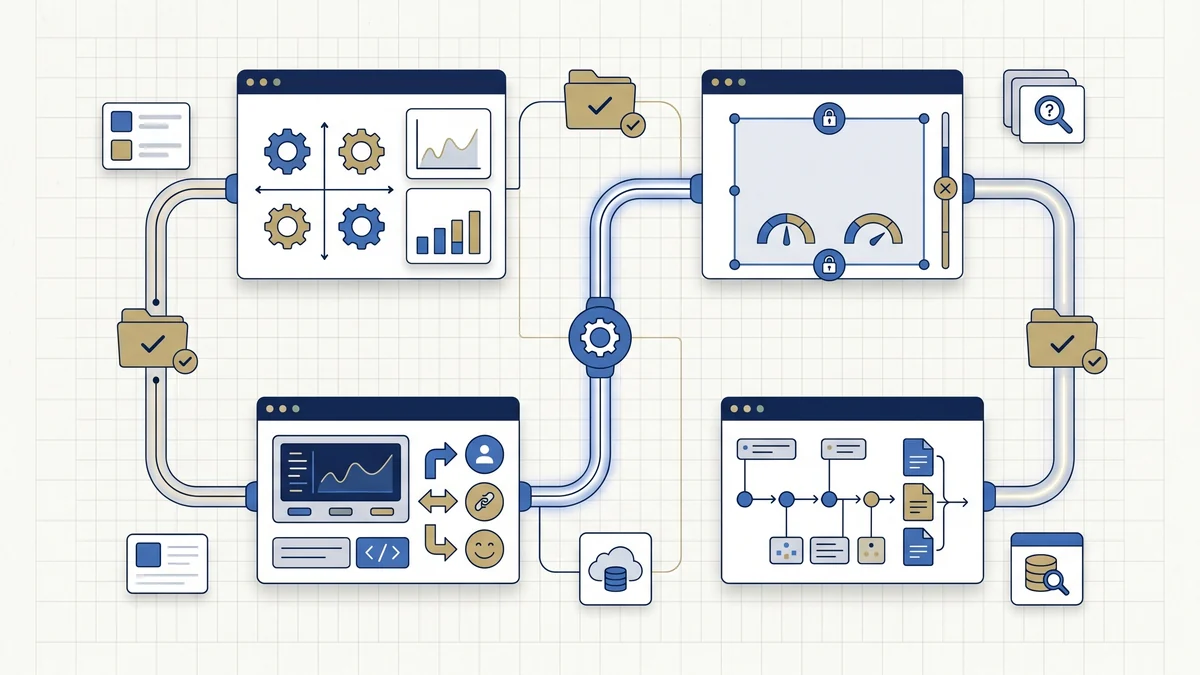

Agent workflows can combine reasoning, retrieval, tools, memory, plugins, permissions, human approvals and downstream actions. That makes the evidence question different from reviewing a simple chatbot or a static model. A deployer needs to know what the agent can do, which systems it can reach, who can stop it, what it records, and which gaps require legal, privacy, security or sector review.

This page does not decide whether an AI agent is high-risk. It helps a team assemble an operational record before deployment, pilot expansion, procurement renewal, security review, audit response or incident investigation.

Local browser tool

Build your AI agent evidence outline

Enter non-confidential workflow descriptors only. The builder creates a copyable outline in your browser and does not send the content to EU AI Compass.

Use summaries. Do not paste confidential prompts, logs, datasets, secrets, client material, or personal data.

What the AI agent evidence outline should contain

| Evidence section | What to record | Why it matters |
|---|---|---|
| Agent identity and owner | Name, use case, business owner, technical owner, vendor/model source and review status. | Prevents anonymous agent workflows and unclear accountability. |
| Purpose and users | What the agent is meant to do, who uses it, who may be affected and where it is deployed. | Supports role, risk, privacy and disclosure routing. |
| Autonomy boundary | What the agent may do alone, what needs approval and what is prohibited. | Separates controlled automation from unmanaged delegation. |
| Tool, API and data permissions | Connected systems, datasets, knowledge bases, permission levels and access owner. | Shows the agent’s operational blast radius. |
| Human approval model | Approval points, reviewer authority, override route, escalation triggers and stop conditions. | Documents whether oversight can actually change outcomes. |
| Vendor or model evidence | Provider documents, model cards, instructions, limitations, change notices and support contacts. | Connects deployment risk to the supplier evidence file. |
| Prompt and action logs | Prompt categories, tool calls, actions taken, exceptions, human decisions and retention position. | Supports incident review and audit-response reconstruction. |
| Testing and red-team evidence | Pre-deployment tests, misuse tests, tool-misuse tests, refusal tests and defect closure. | Shows the agent was tested before broader use. |
| Disclosure and privacy routing | Article 50 review, DPIA route, personal data indicators, user notice and sensitive-context triggers. | Routes legal and privacy review without guessing the answer. |
| Incident, override and audit index | Rollback plan, incident owner, emergency stop path, audit question index and open gaps. | Gives reviewers one place to inspect readiness and unresolved risks. |
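
If the evidence file is kept as structured data rather than a free-form document, a simple check can list which sections are still empty before a review. The sketch below is a minimal illustration, assuming a plain dict keyed by one hypothetical identifier per row of the table above; `REQUIRED_SECTIONS`, `missing_sections` and the example draft are illustrative names and values, not a prescribed schema.

```python
# Hypothetical section keys, one per row of the evidence table.
REQUIRED_SECTIONS = (
    "agent_identity_and_owner",
    "purpose_and_users",
    "autonomy_boundary",
    "tool_api_and_data_permissions",
    "human_approval_model",
    "vendor_or_model_evidence",
    "prompt_and_action_logs",
    "testing_and_red_team_evidence",
    "disclosure_and_privacy_routing",
    "incident_override_and_audit_index",
)


def missing_sections(evidence_file: dict) -> list[str]:
    """Return required sections that are absent or empty, in table order."""
    # A missing key, None, "" or an empty list/dict all count as a gap.
    return [name for name in REQUIRED_SECTIONS if not evidence_file.get(name)]


# Hypothetical draft file for a supplier-email drafting agent.
draft = {
    "agent_identity_and_owner": {"business_owner": "Procurement", "technical_owner": "Platform team"},
    "purpose_and_users": "Drafts replies to supplier emails for staff review.",
    "autonomy_boundary": "",
}
print(missing_sections(draft))
```

An empty result only shows that every section has some content; it says nothing about whether that content is accurate or sufficient.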

Capability decision table

| If the agent can... | Add this evidence | Escalate to |
|---|---|---|
| Call tools, APIs or scripts | Permission register, action allow-list, tool owner, test cases, error handling and rollback route. | Security, product owner, platform owner. |
| Access business data or knowledge bases | Data-source list, sensitivity rating, access approval, provenance, freshness and retrieval logging. | Data owner, security, privacy. |
| Process personal data | Purpose, data categories, legal/privacy assessment route, retention position and DPIA trigger review. | DPO/privacy counsel. |
| Interact with people | User-facing disclosure review, escalation route, complaint route and human contact path. | Legal, UX, customer operations. |
| Support employment, education, credit, insurance, healthcare, public services or safety workflows | Sector review, role/risk rationale, human oversight record, impact-assessment route and audit-response index. | Legal, compliance, sector owner. |
| Generate public-facing content | Article 50 review, labelling/notice decision, content review workflow and retained proof. | Legal, communications, product. |
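
The decision table can also be applied mechanically: declare the agent's capabilities and take the union of the reviewers each one routes to. This is a minimal sketch assuming hypothetical capability keys (`calls_tools`, `processes_personal_data` and so on) and an illustrative function name, `escalation_route`; the reviewer lists are copied from the "Escalate to" column.

```python
# Hypothetical capability keys mapped to the "Escalate to" column of the table.
ESCALATION = {
    "calls_tools": {"Security", "Product owner", "Platform owner"},
    "accesses_business_data": {"Data owner", "Security", "Privacy"},
    "processes_personal_data": {"DPO/privacy counsel"},
    "interacts_with_people": {"Legal", "UX", "Customer operations"},
    "sensitive_sector_workflow": {"Legal", "Compliance", "Sector owner"},
    "generates_public_content": {"Legal", "Communications", "Product"},
}


def escalation_route(capabilities: set[str]) -> list[str]:
    """Union of reviewers for every declared capability, sorted for stable output."""
    unknown = capabilities - set(ESCALATION)
    if unknown:
        # Fail loudly rather than silently skipping a capability nobody has mapped yet.
        raise ValueError(f"unmapped capabilities: {sorted(unknown)}")
    reviewers: set[str] = set()
    for capability in capabilities:
        reviewers |= ESCALATION[capability]
    return sorted(reviewers)


route = escalation_route({"calls_tools", "processes_personal_data"})
print(route)
```

Raising an error for an unmapped capability is a deliberate choice: a new capability should force someone to extend the table rather than pass through with no reviewer.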

Common AI agent evidence mistakes

Documenting the model, not the workflow

An agent evidence file should cover actions, tools, data, approvals and logs. A model card alone does not describe operational use.

No permission register

If the agent can call tools or access systems, keep a controlled register of permissions, owners, restrictions and change approvals.

Oversight that cannot intervene

Reviewers need authority, instructions, escalation thresholds and a record of decisions. Passive monitoring is weak evidence.
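
One way to make oversight able to intervene is to put the reviewer's decision in the execution path, so a rejection actually stops the action and every decision leaves a record. The sketch below assumes a hypothetical `ApprovalGate` class with a `run` method and invented reviewer and action names; a real agent platform would wire something similar into its own tool-call handling.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class ApprovalRecord:
    action: str
    reviewer: str
    approved: bool
    reason: str
    decided_at: str  # UTC timestamp in ISO 8601 format


@dataclass
class ApprovalGate:
    """Hypothetical gate: a proposed action runs only if an authorised reviewer approves it."""

    authorised_reviewers: set[str]
    records: list[ApprovalRecord] = field(default_factory=list)

    def run(self, action: str, execute: Callable[[], None], reviewer: str,
            approved: bool, reason: str) -> bool:
        if reviewer not in self.authorised_reviewers:
            raise PermissionError(f"{reviewer} has no approval authority for {action}")
        # Record the decision whether it allows or blocks the action.
        self.records.append(ApprovalRecord(
            action, reviewer, approved, reason, datetime.now(timezone.utc).isoformat()))
        if approved:
            execute()
        return approved


executed: list[str] = []
gate = ApprovalGate(authorised_reviewers={"j.doe"})
gate.run("issue_refund", lambda: executed.append("refund"), "j.doe",
         approved=False, reason="Amount exceeds refund policy")
```

Because the rejected refund never reaches `execute`, the record shows a decision that changed the outcome, which is the kind of evidence passive monitoring cannot provide.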

Copying logs without sensitivity review

Prompt and action logs can contain personal data, secrets and privileged information. Retention needs privacy and security review.
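
A redaction pass before logs are retained can reduce obvious exposure, although it does not replace the sensitivity review itself. The sketch below assumes two illustrative regular expressions (email addresses and simple `key=value` secrets) and a hypothetical `redact_for_retention` function; real logs would need patterns agreed with privacy and security owners.

```python
import re

# Illustrative patterns only (an assumption, not a complete sensitivity review):
# they catch email addresses and simple "key=value" or "key: value" secrets, nothing more.
REDACTIONS = [
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[EMAIL]"),
    (re.compile(r"(?i)\b(api[_-]?key|token|password)\s*[:=]\s*\S+"), r"\1=[SECRET]"),
]


def redact_for_retention(log_line: str) -> str:
    """Apply each pattern in turn and return the line with matches replaced."""
    for pattern, replacement in REDACTIONS:
        log_line = pattern.sub(replacement, log_line)
    return log_line


print(redact_for_retention("tool=crm_lookup user=ana@example.com api_key=sk-123"))
```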

Ignoring vendor dependency

Third-party agent platforms, model providers and plugins should be tied to vendor evidence, change notices and support contacts.

No rollback or incident route

Autonomous or semi-autonomous workflows need a documented way to pause, override, roll back, investigate and escalate.
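
A pause flag checked before every action, plus a record of how to undo each completed action, is one minimal way to express the pause and roll-back route in code. `ControlledRunner` and its methods are hypothetical names; the sketch assumes each action can supply its own undo step, which many real actions (sent emails, external payments) cannot.

```python
from typing import Callable


class ControlledRunner:
    """Hypothetical wrapper: checks a pause flag before every action and keeps undo steps."""

    def __init__(self) -> None:
        self.paused = False
        self.undo_stack: list[tuple[str, Callable[[], None]]] = []

    def pause(self) -> None:
        self.paused = True  # emergency stop: later actions are refused, not queued

    def act(self, name: str, do: Callable[[], None], undo: Callable[[], None]) -> bool:
        if self.paused:
            return False
        do()
        self.undo_stack.append((name, undo))
        return True

    def roll_back(self) -> list[str]:
        """Undo completed actions in reverse order and return their names."""
        undone = []
        while self.undo_stack:
            name, undo = self.undo_stack.pop()
            undo()
            undone.append(name)
        return undone


records: list[str] = []
runner = ControlledRunner()
runner.act("create_ticket", lambda: records.append("ticket"), lambda: records.remove("ticket"))
runner.act("tag_ticket", lambda: records.append("tag"), lambda: records.remove("tag"))
runner.pause()
blocked = runner.act("close_account", lambda: records.append("closed"), lambda: None)
undone = runner.roll_back()
```

Actions with no undo step are exactly the ones the evidence file should flag for human approval or a separate incident procedure.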

FAQ

What is an AI agent evidence file?

An AI agent evidence file is a controlled record for one agent workflow. It documents the agent purpose, autonomy level, tool and data permissions, human approval points, vendor or model evidence, prompt and action logs, testing records, disclosure review, override and rollback paths, incident escalation, and the owner responsible for keeping the record current.

Does the builder prove EU AI Act compliance?

No. The builder helps structure evidence for internal review, audit preparation, procurement review and risk governance. It does not decide legal status, prove EU AI Act compliance, certify a system, or replace qualified legal, privacy, cybersecurity, employment, procurement or sector-specific review.

Which AI agents should be documented first?

Start with agents that can call tools, access business data, trigger workflow actions, support decisions, interact with users, generate public-facing content, process personal data, or operate in regulated contexts. Lower-risk agent experiments may need a lighter file, but purpose, owner, boundaries, access and review status should still be clear.

How should the autonomy boundary be documented?

Document what the agent may do without approval, what requires human approval, what it must never do, which tools or systems it can call, who can change those boundaries, and which conditions trigger escalation, override, pause, rollback or shutdown.
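
The three-way boundary described here (act alone, require approval, never do) maps naturally onto an explicit lookup with a default. This sketch assumes a hypothetical support agent with invented action names; treating any unlisted action as prohibited is a design choice that forces every new tool through an explicit boundary decision.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "may act alone"
    NEEDS_APPROVAL = "requires human approval"
    PROHIBITED = "must never do"


# Hypothetical boundary for a support agent; changes should go through change control.
BOUNDARY = {
    "search_knowledge_base": Decision.ALLOW,
    "draft_reply": Decision.ALLOW,
    "issue_refund": Decision.NEEDS_APPROVAL,
    "delete_customer_record": Decision.PROHIBITED,
}


def classify(action: str) -> Decision:
    # Anything not listed is prohibited by default, so new tools need an explicit decision.
    return BOUNDARY.get(action, Decision.PROHIBITED)


print(classify("issue_refund").value)
```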

What should the tool and data permission register record?

Record each connected tool, API, dataset, knowledge base, database, file repository, workflow system or external service. For each access path, capture the owner, permission level, data sensitivity, approval route, logging method, change control and known restrictions.
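
Each access path can be held as one structured register row, which makes blank fields easy to find during review. `AccessPath` and `incomplete_fields` are hypothetical names, the field list mirrors the items in the answer above, and the CRM example values are invented.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AccessPath:
    """One row of a hypothetical permission register."""

    system: str
    owner: str
    permission_level: str   # e.g. "read", "read-write", "admin"
    data_sensitivity: str   # e.g. "public", "internal", "personal data"
    approval_route: str
    logging_method: str
    change_control: str
    known_restrictions: str


def incomplete_fields(entry: AccessPath) -> list[str]:
    """Names of register fields left blank for this access path, in declaration order."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name).strip()]


crm = AccessPath(
    system="CRM API", owner="Sales operations", permission_level="read",
    data_sensitivity="personal data", approval_route="", logging_method="gateway audit log",
    change_control="", known_restrictions="No export endpoints",
)
print(incomplete_fields(crm))
```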

What counts as useful human oversight evidence?

Useful oversight evidence includes named reviewers, approval points, escalation thresholds, override authority, review instructions, training records, sample review records, incident handoff, and proof that reviewers can stop or challenge agent output or actions when needed.

Should prompt and action logs be retained?

Prompt logs, action logs and tool-call records can be useful for review, incident analysis and audit response, but they may contain personal data, secrets, privileged material or confidential business information. Retention decisions should be risk-based and reviewed with privacy, legal and security owners.

When should an AI agent trigger legal, privacy or sector review?

Trigger review when the agent processes personal data, supports employment, education, credit, insurance, healthcare, public services, biometric or safety-relevant workflows, interacts with users, generates public-facing content, or can cause material operational, legal or security impact.

Source and review note

This page is an operational evidence-structuring tool for AI agent governance and EU AI Act readiness work. It is not legal advice, does not determine whether an AI system is high-risk or compliant, and does not replace qualified legal, privacy, cybersecurity, employment, procurement, or sector-specific review.

Primary references for final review should include Regulation (EU) 2024/1689, the European Commission AI Act Service Desk implementation timeline, NIST AI RMF Core, and OWASP Top 10 for Agentic Applications. Use technical risk frameworks as context, not as legal authority.