What is shadow AI — and why is it different from shadow IT?

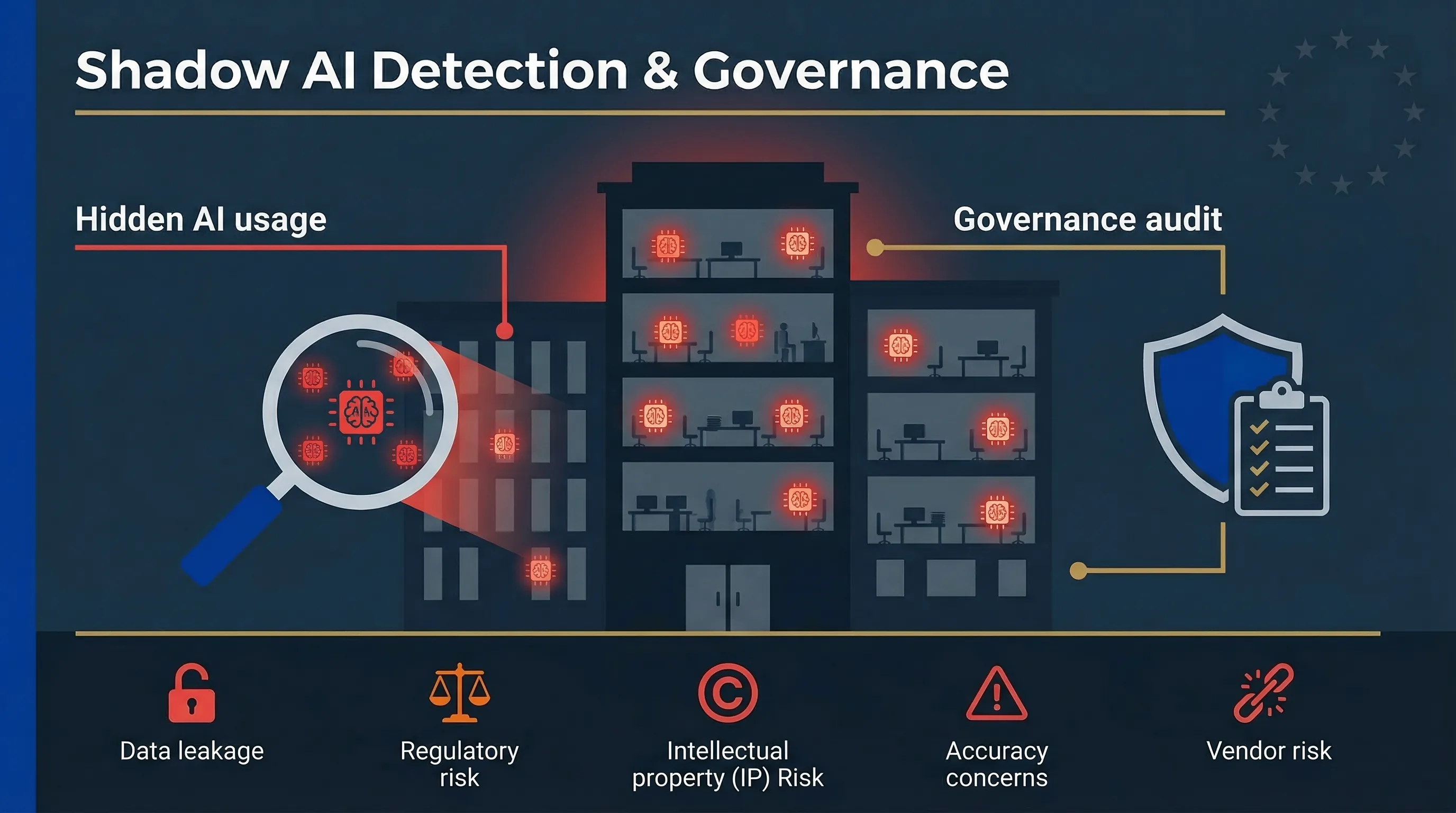

Shadow AI is the use of AI-powered tools, services, or models by employees without organisational approval, oversight, or governance. It differs from traditional shadow IT in three ways that make it materially more dangerous.

Data risk magnitude is higher. Employees paste confidential data — customer records, source code, financial projections, legal documents — into AI chat interfaces. The data leaves your perimeter and may be used for model training. Samsung engineers leaking proprietary semiconductor source code via ChatGPT in 2023 wasn't a one-off; it was the incident that happened to go public.

Output liability is new. AI-generated outputs — reports, code, hiring decisions, customer communications — carry accuracy, bias, IP, and regulatory risks that traditional SaaS shadow IT never did. When an employee uses ChatGPT to draft a credit assessment, that's not just a data risk. It's a decision risk.

Detection is harder. Shadow AI runs through browser-based tools (ChatGPT, Claude, Gemini) that look like normal web traffic. No software is installed. No procurement record exists. Free tiers leave no financial trail.

Common shadow AI includes: ChatGPT/Claude/Gemini via browser, Copilot features auto-enabled in Microsoft 365, AI-powered browser extensions (Grammarly AI, Jasper), image generators (Midjourney, DALL-E), coding assistants (GitHub Copilot on personal accounts), AI meeting summarisers, and AI-powered CRM features.

| Dimension | Traditional Shadow IT | Shadow AI |
|---|---|---|
| Data risk | Data stored in unapproved SaaS | Data pasted into AI models, potentially used for training |
| Output risk | Minimal: tool produces standard outputs | High: AI outputs carry accuracy, bias, and IP liability |
| Detection | Visible via procurement, endpoint agents | Browser-based, no install, free tiers leave no trail |
| Regulatory exposure | Data protection (GDPR) | GDPR + EU AI Act deployer obligations + IP risk |
| User intent | Productivity workaround | Productivity acceleration, often with business-critical outputs |

How widespread is shadow AI?

Shadow AI adoption accelerated after ChatGPT's launch in November 2022 and hasn't slowed down through the subsequent wave of enterprise AI tools in 2024-2025. Multiple enterprise surveys report that the majority of employees using AI tools at work do so without IT or compliance approval. SaaS management platforms consistently find 3-5x more AI tools in organisational environments than IT teams expected.

The gap between what security teams know about and what employees actually use is widening, not closing. And the problem is worst in mid-market organisations (50-500 employees) where formal AI governance doesn't exist, IT teams are small and stretched, there's no dedicated compliance function, and employees adopt tools faster than policy can keep up.

Here's what most CISOs miss: shadow AI isn't malicious. Employees use unauthorised AI because it makes them more productive and nobody told them not to — or the approved alternative is worse. The goal isn't to ban AI. It's to bring it under governance. Organisations that try to prohibit all AI tool usage drive adoption further underground and lose all visibility.

The five categories of shadow AI risk

Unlike traditional shadow IT, shadow AI introduces three additional risk dimensions: magnified data leakage, output liability, and detection difficulty. Here's the full taxonomy I use when assessing shadow AI exposure in organisations.

1. Data leakage and confidentiality breach

Employees input sensitive data into AI services: customer PII, financial records, source code, trade secrets, legal documents, M&A materials, board presentations. Many consumer AI tools may retain input data and use it for model training unless the user opts out or an enterprise agreement is in place. A single paste of customer data into ChatGPT can constitute a personal data breach under GDPR (Article 4(12)) and trigger the Article 33 notification duty if personal data of EU residents is involved.

2. Regulatory and compliance exposure

Under the EU AI Act, if an employee uses an AI tool to screen job applicants, score credit risk, or make insurance decisions — even without organisational approval — the organisation is deploying a high-risk AI system under Annex III. Under GDPR, using AI to process personal data without a lawful basis, DPIA, or data processing agreement creates separate liability. The organisation is responsible for the employee's AI use regardless of whether it was authorised.

3. Intellectual property contamination

AI-generated code or content may infringe third-party IP — training data copyright issues are unresolved in most jurisdictions. Confidential information entered into AI tools may lose trade secret protection. And AI outputs may be uncopyrightable in some jurisdictions (the US Copyright Office has taken this position for purely AI-generated works).

4. Accuracy and decision quality risk

AI hallucinations surface in business-critical outputs: financial analyses, legal advice, medical information, compliance assessments, customer communications. With shadow AI there is no quality control, no validation, and no human review of those outputs. The Automation Complacency Assessor evaluates how vulnerable your teams are to over-trusting AI output.

5. Vendor and supply chain risk

Unauthorised AI tools are unsigned vendors in your supply chain. No data processing agreements, no security assessments, no SLA, no incident notification obligations. If the AI vendor has a breach, you may not even know your data was involved. Screen your AI vendors with the AI Vendor Risk Screener.

| Risk Category | Primary Impact | Regulatory Trigger |
|---|---|---|
| Data leakage | Confidential data exits organisation perimeter | GDPR Art. 33 breach notification |
| Regulatory exposure | Unmanaged deployer obligations | EU AI Act Art. 26, Annex III |
| IP contamination | Trade secret loss, copyright infringement | National IP law, US Copyright Office guidance |
| Decision quality | Hallucinations in business-critical outputs | Professional liability, fiduciary duty |
| Vendor/supply chain | Unsigned vendors with no contractual controls | GDPR Art. 28 (DPA), EU AI Act Art. 25-26 |

Shadow AI creates EU AI Act obligations you haven't scoped

This is the part that catches most CISOs off guard. Here's the legal logic chain:

1. An employee uses an AI tool for recruitment screening, credit assessment, or insurance pricing without organisational approval.

2. Under the EU AI Act, the organisation is the deployer, whether or not it authorised the use. Article 3(4) defines a deployer as "a natural or legal person, public authority, agency or other body using an AI system under its authority except where the AI system is used in the course of a personal non-professional activity."

3. If the use case falls under Annex III, the organisation has high-risk deployer obligations it isn't meeting: no human oversight, no monitoring, no logging, no FRIA, no vendor due diligence.

4. Article 4 (AI literacy) requires deployers to ensure a sufficient level of AI literacy among staff who operate or use AI systems on their behalf. Shadow AI users almost certainly don't meet this bar. This obligation has applied since February 2, 2025.

5. Annex III high-risk obligations take effect August 2, 2026. Penalties: up to €15 million or 3% of worldwide annual turnover.

You can't comply with the EU AI Act if you don't know what AI your organisation is using. Shadow AI inventory is step zero of compliance.

GDPR stacks on top. If shadow AI processes personal data of EU residents without a lawful basis under Article 6, without a DPIA under Article 35, or without a data processing agreement under Article 28 — that's a separate violation. GDPR fines reach €20 million or 4% of turnover. And the 72-hour breach notification clock starts the moment you become aware of a data breach, not when you discover the shadow AI tool that caused it.
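The two penalty ceilings can be compared for a given turnover. The sketch below is illustrative arithmetic only, assuming the "whichever is higher" rule that Article 99(4) of the EU AI Act and Article 83(5) of the GDPR apply to undertakings, and the "whichever is lower" rule that Article 99(6) of the EU AI Act applies to SMEs. The function name, parameter names, and turnover figures are hypothetical.

```python
def fine_ceiling(turnover_eur: int, fixed_cap_eur: int, percent: int,
                 use_lower: bool = False) -> int:
    """Return the maximum fine in euros for a given worldwide annual turnover.

    use_lower=False models "whichever is higher" (assumed default rule).
    use_lower=True models the EU AI Act SME rule ("whichever is lower").
    """
    percent_cap = turnover_eur * percent // 100  # integer maths avoids float rounding
    if use_lower:
        return min(fixed_cap_eur, percent_cap)
    return max(fixed_cap_eur, percent_cap)


# Hypothetical organisation with EUR 800 million worldwide annual turnover.
turnover = 800_000_000
ai_act_ceiling = fine_ceiling(turnover, 15_000_000, 3)  # high-risk tier
gdpr_ceiling = fine_ceiling(turnover, 20_000_000, 4)

print(f"EU AI Act ceiling: EUR {ai_act_ceiling:,}")
print(f"GDPR ceiling:      EUR {gdpr_ceiling:,}")
```

These are ceilings, not predictions: the actual fine in any case depends on the factors the regulator weighs.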

For the full picture of deployer obligations, see our High-Risk Deployer Guide. To check whether your AI use cases are high-risk, use the EU AI Act Compliance Checker and the Deployer Obligation Self-Assessment. For the broader compliance context, see our complete EU AI Act compliance guide.

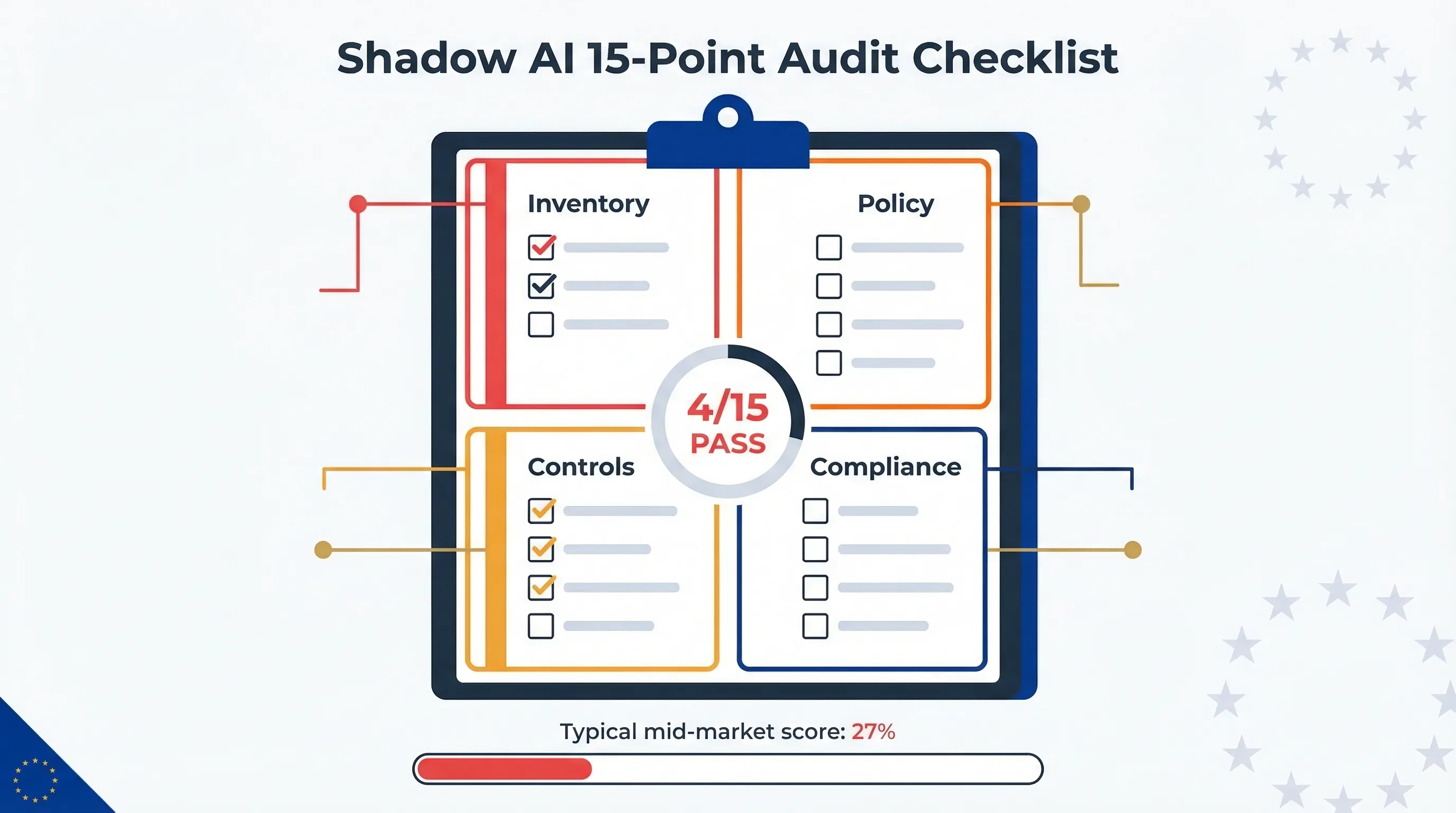

The 15-point shadow AI audit checklist: most mid-market organisations pass fewer than 4 items.

Shadow AI detection: four methods that work

How do you find what you can't see? You attack from four angles simultaneously. No single method catches everything — combined, they give you 70-80% visibility.

Method 1: Network and endpoint analysis

Monitor DNS queries and web traffic for known AI service domains (api.openai.com, claude.ai, gemini.google.com, midjourney.com). Check browser extension inventories for AI-powered add-ons. Review CASB (Cloud Access Security Broker) logs for AI SaaS usage. Limitation: encrypted traffic and personal devices reduce visibility.
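As a rough illustration of the DNS part of this method, the sketch below counts queries to watchlisted AI domains per client. It assumes a hypothetical log format of `<timestamp> <client-ip> <queried-domain>` per line; real resolver and CASB exports differ, so the parsing would need adapting. The watchlist holds only the domains named above.

```python
from collections import Counter

# Domains named in the text above; extend this set for your own environment.
AI_DOMAINS = {"api.openai.com", "claude.ai", "gemini.google.com", "midjourney.com"}


def normalise(domain: str) -> str:
    """Lower-case the name and drop the trailing dot of a fully qualified name."""
    return domain.strip().rstrip(".").lower()


def is_ai_domain(domain: str, watchlist=AI_DOMAINS) -> bool:
    """True if the name equals a watchlisted domain or is a subdomain of one."""
    name = normalise(domain)
    return any(name == d or name.endswith("." + d) for d in watchlist)


def count_ai_queries(log_lines):
    """Count watchlist hits per (client, domain) pair.

    Assumed (hypothetical) line format: '<timestamp> <client-ip> <domain>'.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines
        client, domain = parts[1], normalise(parts[2])
        if is_ai_domain(domain):
            hits[(client, domain)] += 1
    return hits


sample_log = [
    "2025-03-01T09:00:01Z 10.0.0.12 claude.ai.",
    "2025-03-01T09:00:05Z 10.0.0.12 intranet.example.com",
    "2025-03-01T09:01:17Z 10.0.0.40 API.OpenAI.com",
    "2025-03-01T09:02:30Z 10.0.0.12 claude.ai",
]
for (client, domain), n in sorted(count_ai_queries(sample_log).items()):
    print(client, domain, n)
```

Suffix matching on a leading dot avoids false positives such as a lookalike domain that merely ends in the same characters.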

Method 2: Procurement and financial audit

Search expense reports and credit card transactions for AI tool subscriptions. Review SaaS management platform data for AI tool licences. Check for free-tier usage that doesn't show in procurement (ChatGPT free, Claude free, Gemini free). Limitation: free-tier usage leaves no financial trail.
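A first pass over expense data can be as simple as a keyword match on merchant names. The sketch below assumes a hypothetical CSV export with `date`, `employee`, `merchant`, and `amount` columns; the keyword list is an illustrative assumption and will need tuning, since card statements abbreviate merchant names inconsistently.

```python
import csv
import io

# Illustrative keywords only; not an exhaustive or authoritative vendor list.
AI_VENDOR_KEYWORDS = (
    "openai", "chatgpt", "anthropic", "claude",
    "midjourney", "jasper", "grammarly", "copilot",
)


def flag_ai_expenses(csv_text: str):
    """Return expense rows whose merchant name contains an AI vendor keyword."""
    reader = csv.DictReader(io.StringIO(csv_text))
    flagged = []
    for row in reader:
        merchant = row["merchant"].lower()
        if any(keyword in merchant for keyword in AI_VENDOR_KEYWORDS):
            flagged.append(row)
    return flagged


# Hypothetical export from an expense system.
sample_csv = """date,employee,merchant,amount
2025-03-01,a.lee,OPENAI *CHATGPT SUBSCR,20.00
2025-03-02,b.khan,City Taxi,14.50
2025-03-05,c.diaz,Midjourney Inc,10.00
"""

for row in flag_ai_expenses(sample_csv):
    print(row["date"], row["employee"], row["merchant"], row["amount"])
```

As the limitation above notes, this only surfaces paid usage; free tiers never reach the expense system.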

Method 3: Employee survey and self-declaration

Run a direct survey asking which AI tools employees use for work; an anonymous option increases honesty. Questions: which tools, what data types are entered, which outputs feed decisions, and how often. Combine with an amnesty period: "declare now, no consequences; detected later, policy applies." Limitation: surveys typically underreport usage by 30-50%.

Method 4: Application and API inventory

Review OAuth grants and API connections to organisational accounts (Google Workspace, Microsoft 365, Slack, GitHub). Many AI tools connect via OAuth — these leave discoverable traces. Check GitHub for personal Copilot usage vs organisational licences. Review Slack/Teams app installations for AI bots.
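Once OAuth grants have been exported from an admin console, grouping them by application makes AI integrations easier to spot. The sketch below assumes a hypothetical record shape (`user`, `app`, `scopes`) and hypothetical scope labels; real exports from Google Workspace, Microsoft 365, Slack, or GitHub each use their own field names and would need mapping first. Keyword matching on app names is a noisy heuristic, not a classifier.

```python
from collections import defaultdict

# Illustrative name fragments; expect both false positives and misses.
AI_APP_KEYWORDS = ("chatgpt", "gpt", "copilot", "claude", "gemini", "summaris")


def ai_oauth_grants(grants, keywords=AI_APP_KEYWORDS):
    """Group grants for AI-looking apps: app name -> users and scopes granted."""
    users = defaultdict(set)
    scopes = defaultdict(set)
    for grant in grants:
        app = grant["app"]
        if any(keyword in app.lower() for keyword in keywords):
            users[app].add(grant["user"])
            scopes[app].update(grant["scopes"])
    return {
        app: {"users": sorted(users[app]), "scopes": sorted(scopes[app])}
        for app in users
    }


# Hypothetical export; field names and scope labels are assumptions.
sample_grants = [
    {"user": "a.lee@example.com", "app": "Meeting Summariser AI", "scopes": ["calendar.read"]},
    {"user": "b.khan@example.com", "app": "Meeting Summariser AI", "scopes": ["calendar.read", "drive.read"]},
    {"user": "c.diaz@example.com", "app": "Expense Tracker", "scopes": ["mail.read"]},
]

for app, info in ai_oauth_grants(sample_grants).items():
    print(app, info["users"], info["scopes"])
```

Listing the granted scopes alongside the users helps prioritise review: an app with read access to files or mail deserves attention before one that only reads calendars.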

🔎 Free tool: Shadow AI Discovery Protocol

Structured discovery workflow covering all four detection methods. Identifies unmanaged AI systems and maps them to EU AI Act classification.

Start Discovery Protocol →

How to govern shadow AI without killing productivity

Good governance makes AI adoption faster, not slower. Teams that know what's approved and how to use it responsibly adopt AI more aggressively than teams operating in a policy vacuum. Here are the six components.

1. AI acceptable use policy

Define approved AI tools with specified use cases and data classification restrictions. Define prohibited uses — customer PII, source code, financial data, legal documents never go into unapproved tools. Define "grey zone" procedures: how to request approval for a new AI tool. The policy must be proportionate — overly restrictive policies drive usage underground.

2. Approved AI tool registry

Maintain a register of AI tools approved for organisational use. For each tool: vendor name, data processing agreement status, security assessment status, permitted data classification levels, permitted use cases. Review quarterly — the AI tool environment shifts fast.
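The registry fields listed above map naturally onto a small record type. The following is a minimal sketch, not a prescribed schema: the class name, field names, the example tool, and the 92-day approximation of "quarterly" are all assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ApprovedTool:
    """One row of a hypothetical approved AI tool registry."""
    name: str
    vendor: str
    dpa_signed: bool                      # data processing agreement status
    security_assessed: bool               # security assessment status
    permitted_classifications: frozenset  # e.g. {"public", "internal"}
    permitted_use_cases: frozenset
    last_reviewed: date

    def review_overdue(self, today: date, max_age_days: int = 92) -> bool:
        """True if the entry has not been reviewed within roughly one quarter."""
        return (today - self.last_reviewed).days > max_age_days

    def permits(self, classification: str, use_case: str) -> bool:
        """True only if both the data level and the use case are registered."""
        return (classification in self.permitted_classifications
                and use_case in self.permitted_use_cases)


# Hypothetical entry; "ExampleChat" is not a real product.
tool = ApprovedTool(
    name="ExampleChat Enterprise",
    vendor="Example Vendor Ltd",
    dpa_signed=True,
    security_assessed=True,
    permitted_classifications=frozenset({"public", "internal"}),
    permitted_use_cases=frozenset({"drafting", "summarisation"}),
    last_reviewed=date(2025, 1, 15),
)

print(tool.permits("internal", "drafting"))
print(tool.permits("confidential", "drafting"))
print(tool.review_overdue(today=date(2025, 6, 1)))
```

Keeping the review date on each entry lets the quarterly review be checked mechanically rather than remembered.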

3. Data classification enforcement

Classify organisational data (public, internal, confidential, restricted). Define which classification levels can be processed by AI tools and under what conditions. Implement DLP rules blocking paste of classified data into unapproved tools where technically feasible.
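The four classification levels form an ordered scale, so a policy check can compare a data item's level against a per-tool ceiling. This is a sketch under stated assumptions: the tool names and ceilings are hypothetical, and treating unapproved tools as "public data only" is one possible policy choice, not something the regulations prescribe.

```python
from enum import IntEnum


class Classification(IntEnum):
    """The four levels from the text, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical ceilings: the highest level each approved tool may process.
MAX_LEVEL_BY_TOOL = {
    "approved-enterprise-assistant": Classification.CONFIDENTIAL,
    "approved-meeting-summariser": Classification.INTERNAL,
}


def may_process(tool: str, level: Classification) -> bool:
    """Return True if policy allows this data level in this tool.

    Assumption: tools missing from the registry may only receive PUBLIC data.
    """
    ceiling = MAX_LEVEL_BY_TOOL.get(tool)
    if ceiling is None:
        return level == Classification.PUBLIC
    return level <= ceiling


print(may_process("approved-enterprise-assistant", Classification.CONFIDENTIAL))
print(may_process("approved-meeting-summariser", Classification.CONFIDENTIAL))
print(may_process("unknown-browser-extension", Classification.INTERNAL))
```

A check like this expresses the policy decision; enforcing it at paste time still depends on what your DLP tooling can technically intercept.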

4. Monitoring cadence

Monthly: review CASB/network logs for new AI tool usage. Quarterly: re-run employee survey, update approved tool registry. Annually: full shadow AI audit (see checklist below).
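The cadence above can be tracked with a simple overdue check. In this sketch the task names are hypothetical labels, and the day counts (31, 92, 366) are assumed approximations of monthly, quarterly, and annual intervals.

```python
from datetime import date, timedelta

# Assumed maximum gap, in days, between runs of each recurring task.
CADENCE_DAYS = {
    "log_review": 31,        # monthly CASB/network log review
    "employee_survey": 92,   # quarterly survey
    "registry_update": 92,   # quarterly registry update
    "full_audit": 366,       # annual shadow AI audit
}


def overdue_tasks(last_done: dict, today: date) -> list:
    """Return tasks never run, or last run longer ago than their cadence allows."""
    return sorted(
        task
        for task, days in CADENCE_DAYS.items()
        if task not in last_done or today - last_done[task] > timedelta(days=days)
    )


# Hypothetical history; "registry_update" has never been recorded.
history = {
    "log_review": date(2025, 5, 20),
    "employee_survey": date(2025, 1, 10),
    "full_audit": date(2024, 9, 1),
}
print(overdue_tasks(history, today=date(2025, 6, 1)))
```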

5. Training and awareness (Article 4)

All employees must understand the AI acceptable use policy. Training covers: what's approved, what's prohibited, how to request new tools, what data is never permitted, how to verify AI outputs. This supports compliance with the EU AI Act Article 4 AI literacy obligation, which has applied since February 2, 2025. Use the AI Literacy Training Planner to structure your programme.

6. Incident response for shadow AI

Define what constitutes a shadow AI incident: unauthorised processing of restricted data, AI-generated output used in a high-risk decision without oversight, data breach via AI tool. Escalation path: employee reports → IT/security triage → compliance assessment → regulatory reporting if required (GDPR 72-hour notification, EU AI Act serious incident reporting).
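Because the GDPR notification window runs from the moment of awareness, it helps to record that timestamp during triage and derive the deadline from it. A minimal sketch, assuming timezone-aware timestamps; the function names and example times are hypothetical, and whether an event is a notifiable breach remains a legal judgement.

```python
from datetime import datetime, timedelta, timezone

GDPR_NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(aware_at: datetime) -> datetime:
    """Latest notification time, counted from when the organisation became aware."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware")
    return aware_at + GDPR_NOTIFICATION_WINDOW


def hours_remaining(aware_at: datetime, now: datetime) -> float:
    """Hours left before the deadline; negative once it has passed."""
    return (notification_deadline(aware_at) - now).total_seconds() / 3600


# Hypothetical incident: security triage confirms the breach at 09:00 UTC.
aware = datetime(2025, 3, 3, 9, 0, tzinfo=timezone.utc)
now = datetime(2025, 3, 4, 9, 0, tzinfo=timezone.utc)

print(notification_deadline(aware).isoformat())
print(hours_remaining(aware, now))
```

Rejecting naive timestamps avoids silent errors when incident reports arrive from teams in different time zones.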

For framework-level governance alignment (ISO 42001, NIST AI RMF), see our Framework Mapping Guide and the ISO/NIST Gap Analyzer.

Shadow AI audit: a 15-point checklist

Run this against your organisation. How many of these 15 items can you pass right now? In my experience, most mid-market organisations pass fewer than 4.

Inventory and discovery

1. Complete AI tool inventory conducted (all four detection methods)

2. All discovered AI tools classified by risk level (EU AI Act Annex III check)

3. Shadow AI usage quantified (number of tools, number of users, data types processed)

Policy and governance

4. AI acceptable use policy published and communicated to all employees

5. Approved AI tool registry exists and is current (reviewed within last quarter)

6. Data classification scheme applied to AI usage (what data types permitted per tool)

7. AI tool procurement process includes security assessment and DPA verification

Controls and monitoring

8. Network/CASB monitoring covers known AI service domains

9. DLP rules configured for AI tool paste detection (where technically feasible)

10. Monthly review of AI tool usage logs conducted

11. OAuth and API connection inventory includes AI tool integrations

Compliance and training

12. AI literacy training completed by all staff operating AI systems (Article 4)

13. High-risk AI use cases identified and deployer obligations assessed

14. GDPR DPIAs conducted for AI tools processing personal data

15. Shadow AI incident response procedure documented and tested
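To track progress against the checklist over time, the pass/fail answers can be tallied per section. This is a simple sketch: the section names and item counts come from the checklist above, while the function name and the example answers are hypothetical.

```python
# Number of checklist items in each section, as listed above (3 + 4 + 4 + 4 = 15).
SECTIONS = {
    "Inventory and discovery": 3,
    "Policy and governance": 4,
    "Controls and monitoring": 4,
    "Compliance and training": 4,
}


def score_audit(results: dict):
    """Return (total passed, passed per section) for a set of pass/fail answers."""
    per_section = {}
    for section, expected in SECTIONS.items():
        answers = results.get(section, [])
        if len(answers) != expected:
            raise ValueError(f"{section}: expected {expected} answers, got {len(answers)}")
        per_section[section] = sum(bool(a) for a in answers)
    return sum(per_section.values()), per_section


# Hypothetical self-assessment.
answers = {
    "Inventory and discovery": [True, False, False],
    "Policy and governance": [True, False, False, False],
    "Controls and monitoring": [True, False, False, False],
    "Compliance and training": [False, False, False, False],
}
total, breakdown = score_audit(answers)
print(f"{total} of {sum(SECTIONS.values())} items passed")
for section, passed in breakdown.items():
    print(f"  {section}: {passed}/{SECTIONS[section]}")
```

The per-section breakdown shows where to focus first; a low overall score concentrated in one section is a different problem from the same score spread evenly.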

📋 Need a structured audit?

Move78 International provides broader AI governance support for organisations that need additional implementation help beyond the free EU AI Compass shadow AI tools and guides.

Shadow AI policy template: what to include

Your AI acceptable use policy needs these 12 sections. I'm giving you the structure — enough to start drafting and recognise the complexity involved. Use this outline together with your internal governance documentation and the free EU AI Compass tools as you build the final policy.

1. Purpose and scope (who the policy covers, what AI tools are in scope)

2. Definitions (AI system, approved AI tool, shadow AI, data classification levels)

3. Approved AI tools and permitted use cases (registry reference)

4. Prohibited AI uses (specific prohibitions by data type and use case)

5. New tool request and approval process

6. Data handling requirements (what data can/cannot be inputted into AI tools)

7. Output verification requirements (human review mandates for AI-generated content/decisions)

8. Monitoring and enforcement (how compliance is monitored, what happens on violation)

9. Training requirements (Article 4 AI literacy link)

10. Incident reporting (what to report, to whom, within what timeframe)

11. Policy review and update schedule

12. Exceptions and waivers process

A shadow AI policy nobody reads is worse than no policy.

It creates a false sense of governance. Keep it under 5 pages, write it in plain language, and require annual acknowledgment with a test.

FAQ: shadow AI detection and governance

What is shadow AI and how is it different from shadow IT?

Shadow AI is the use of AI-powered tools by employees without organisational approval or governance. It differs from traditional shadow IT in three ways: the data risk is higher (employees paste sensitive information into AI interfaces), the output creates new liability (AI-generated decisions carry accuracy and bias risk), and detection is harder (browser-based AI tools leave minimal footprints).

Should we ban AI tools outright?

No. Banning AI tools drives usage underground and eliminates all visibility. The effective approach is governed adoption: define approved tools, set data handling rules, train employees, and monitor compliance. Organisations with clear AI governance policies report higher AI adoption AND fewer incidents than those with blanket bans.

Does shadow AI create EU AI Act obligations?

Yes. If an employee uses an AI tool for a use case covered by Annex III (recruitment screening, credit assessment, insurance pricing), the organisation is a deployer under the EU AI Act regardless of whether it authorised the use. Deployer obligations apply from August 2, 2026. AI literacy obligations under Article 4 have applied since February 2, 2025.

What happens if an employee pastes customer data into ChatGPT?

Potentially a GDPR violation. If the data includes personal data of EU residents and no lawful basis, DPIA, or data processing agreement exists, it constitutes unauthorised data transfer. Depending on the data type and volume, it may trigger the GDPR 72-hour breach notification requirement. The organisation is liable, not the individual employee.

How do I detect shadow AI if employees use personal devices?

Personal devices limit direct monitoring. Focus on: network-level detection (DNS/proxy logs for AI service domains on corporate WiFi), OAuth grant audits, employee self-declaration surveys with amnesty periods, and financial trail analysis. Accept that detection on personal devices will always be partial — governance and training are more effective than surveillance.

How often should we audit for shadow AI?

Review CASB/network logs monthly. Run employee surveys and update the approved tool registry quarterly. Run a full audit annually. The AI tool environment shifts rapidly: a tool that didn't exist 6 months ago may have 50% employee adoption today.

What is the cost of NOT governing shadow AI?

GDPR fines (up to €20M or 4% of turnover), EU AI Act penalties (up to €15M or 3% of turnover for high-risk violations), IP contamination (loss of trade secret protection, copyright infringement claims), reputational damage (customer data exposed via AI tool breach), and decision quality failures (AI hallucinations in business-critical outputs with no quality control).

Abhishek G Sharma

Founder & CEO, Move78 International Limited

CEH · CISM · CISA · CCSK · ISO 42001 LA · ISO 27001 LA · CRISC · CAIGO · CAIRO

20+ years in cybersecurity and risk management. Builds AI governance tools and advisory for mid-market organisations.


Disclaimer

This guide is for educational and informational purposes only. It does not constitute legal advice. The EU AI Act (Regulation 2024/1689) and GDPR (Regulation 2016/679) are complex regulations and their interpretation may evolve. Consult qualified legal counsel for advice specific to your organisation. Move78 International Limited makes no warranties regarding the completeness or accuracy of this content. All references current as of March 2026.