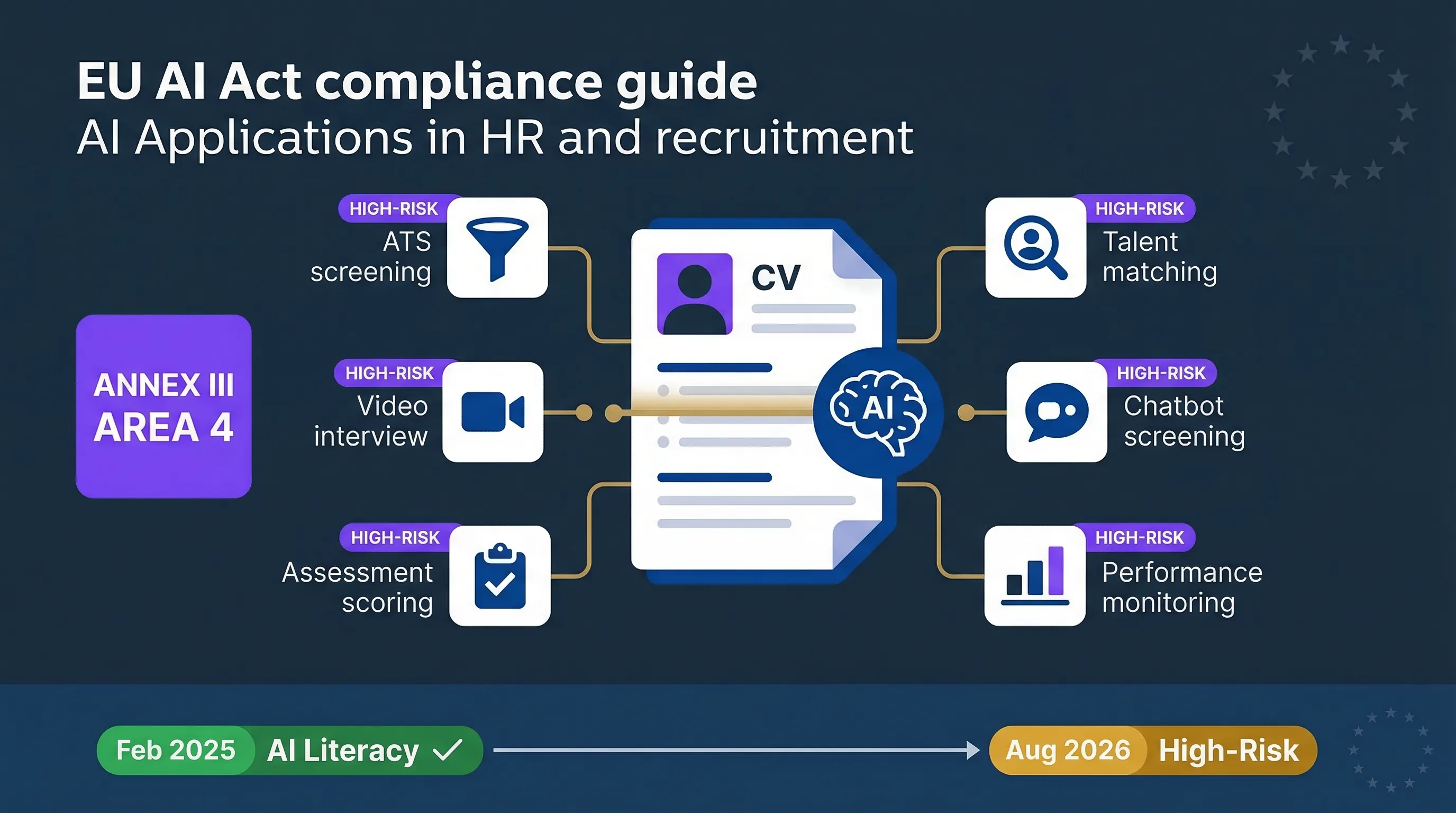

Annex III Area 4: AI in employment is classified high-risk

Annex III Area 4 of the EU AI Act explicitly covers AI systems used for recruitment and selection of candidates — screening, filtering, assessing applications, and ranking. It also covers AI for decisions on promotion, termination, task allocation based on individual behaviour, and performance monitoring and evaluation. There's no ambiguity here. If you use AI in hiring, you're in scope.

That means the following tools and features trigger high-risk classification when used in the EU or affecting EU individuals:

| Tool category | Examples | High-risk trigger |
|---|---|---|
| ATS with AI screening/ranking | Workday Recruiting, iCIMS, SmartRecruiters, Greenhouse AI features | CV screening, candidate ranking, automated shortlisting |
| Talent intelligence platforms | Eightfold AI, Beamery, Phenom | Candidate matching, predictive sourcing, talent scoring |
| AI video interview scoring | HireVue, Modern Hire | Automated assessment of video responses |
| Assessment platforms with AI | SHL, Pymetrics/Harver | Automated scoring of psychometric/cognitive tests |
| Conversational AI for screening | Paradox/Olivia | Automated candidate qualification via chatbot |
| AI sourcing tools | Various | Ranking or filtering candidates from talent pools |

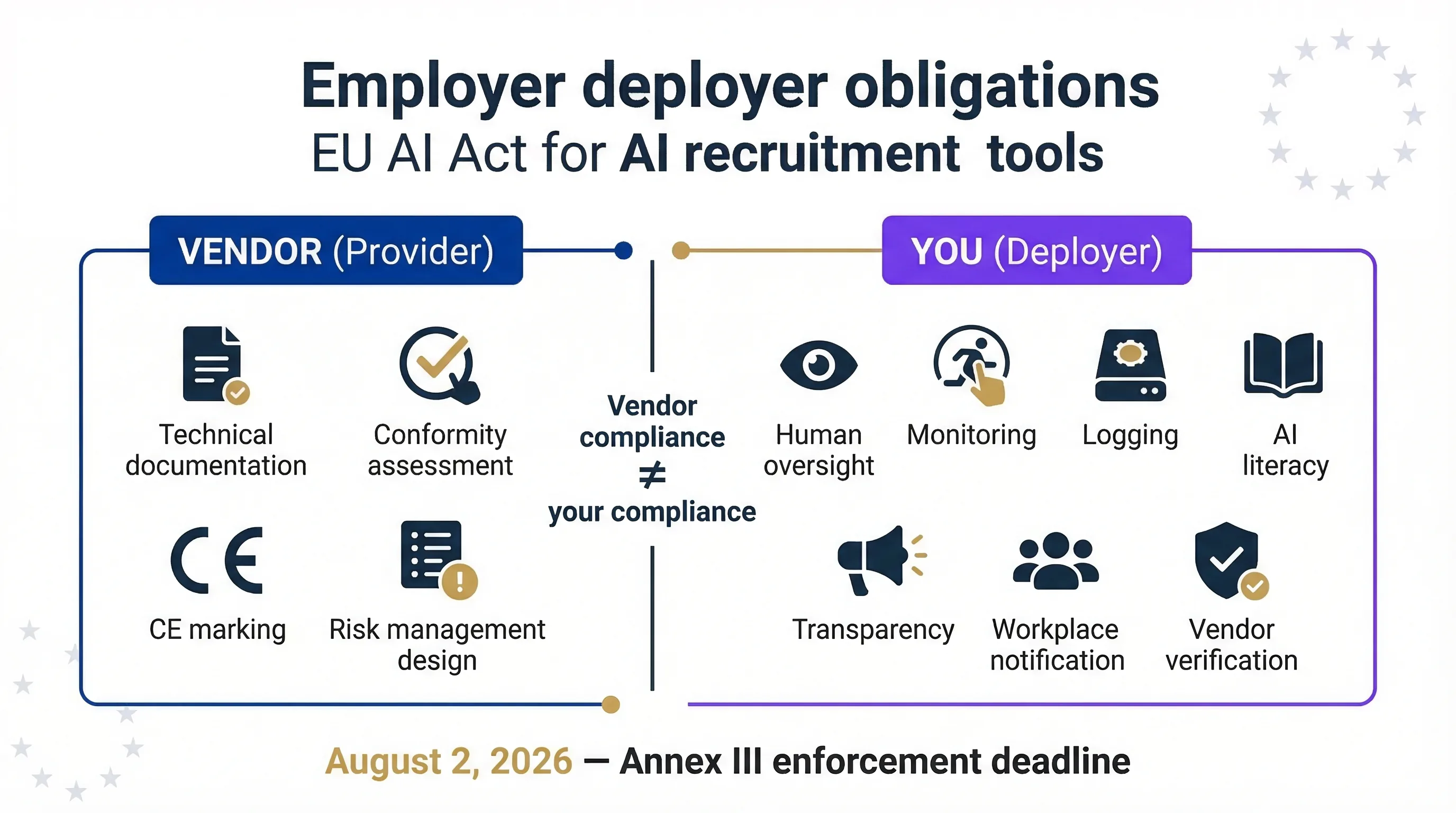

The employer using these tools is the deployer. The vendor building them is the provider. Both have obligations, but they're different. And here's the part most HR teams miss: even if the tool vendor says they're "EU AI Act compliant," the employer still has independent deployer obligations — human oversight, monitoring, logging, AI literacy, vendor verification. Your vendor's compliance doesn't make you compliant. I've made this analogy before: a SOC 2-compliant SaaS vendor doesn't make your organisation SOC 2 compliant.

Use the Promotion & Termination Validator to assess your specific HR AI tools. If you've customised or fine-tuned a vendor's model, check the Accidental Provider Classifier — you may have crossed from deployer to provider. For the full deployer obligations picture, see our High-Risk Deployer Guide.

Deployer obligations for recruitment AI: the vendor provides the system; you own the operational controls.

What employers must do: deployer obligations for AI hiring tools

Human oversight

Name the person responsible for reviewing AI-generated candidate shortlists, scores, or rejection recommendations before they become final decisions. The recruiter or hiring manager reviewing AI output IS the oversight mechanism — but it must be documented, not assumed. If your ATS auto-rejects candidates without human review, that's a compliance gap you need to close before August 2026.
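
One way to find that gap is to export decisions from your ATS and look for rejections with no named reviewer. The sketch below assumes a hypothetical export schema (`candidate_id`, `ai_recommendation`, `final_status`, `reviewed_by`). Your ATS will use different field names, so treat it as an illustration rather than a vendor integration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    """One candidate outcome exported from the ATS (hypothetical schema)."""
    candidate_id: str
    ai_recommendation: str             # e.g. "reject" or "advance"
    final_status: str                  # e.g. "rejected" or "advanced"
    reviewed_by: Optional[str] = None  # named human reviewer, if any


def unreviewed_rejections(decisions: list[Decision]) -> list[str]:
    """Return candidate IDs that were rejected with no human reviewer on record."""
    return [
        d.candidate_id
        for d in decisions
        if d.final_status == "rejected" and not d.reviewed_by
    ]


# Illustrative data only, not real candidates.
decisions = [
    Decision("c-001", "reject", "rejected", reviewed_by="recruiter.a"),
    Decision("c-002", "reject", "rejected"),  # auto-rejected, nobody signed off
    Decision("c-003", "advance", "advanced", reviewed_by="recruiter.b"),
]

gaps = unreviewed_rejections(decisions)
print(gaps)  # ['c-002']
```

An empty list is what you want to see. Anything else is a rejection that became final without documented oversight.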

Use per vendor instructions

Obtain and follow the vendor's instructions for use. If the vendor says "this tool is designed for initial screening, not final selection," don't use it for final selection. If you deploy it outside the stated purpose, you risk becoming a provider under Article 25 — and inheriting all provider obligations including conformity assessment and CE marking.

Monitoring

Track the AI system's performance over time. Is the shortlist quality degrading? Are certain demographic groups systematically under-represented? Is the auto-reject rate changing? This doesn't require a data science team — it requires someone looking at the numbers monthly.
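
A monthly check can be as simple as comparing shortlist rates across groups. The sketch below is a minimal illustration under stated assumptions: the group labels are placeholders, and the 0.8 threshold is borrowed from the "four-fifths" rule of thumb used in US adverse-impact analysis. The EU AI Act does not prescribe that number, so pick a threshold with your legal and DPO teams.

```python
from collections import Counter


def selection_rates(records):
    """Shortlist rate per group, from (group, shortlisted) pairs."""
    totals, selected = Counter(), Counter()
    for group, shortlisted in records:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's rate divided by the highest group's rate."""
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {g: 0.0 for g in rates}
    return {g: r / best for g, r in rates.items()}


def flag_groups(records, threshold=0.8):
    """Groups whose impact ratio falls below the (assumed) threshold."""
    ratios = impact_ratios(selection_rates(records))
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Illustrative month of data: group A shortlisted 5 of 10, group B 2 of 10.
records = (
    [("A", True)] * 5 + [("A", False)] * 5
    + [("B", True)] * 2 + [("B", False)] * 8
)

print(selection_rates(records))
print(flag_groups(records))
```

A flagged group is a prompt for a human to investigate, not proof of bias. Small applicant pools in particular will produce noisy ratios.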

Logging

Retain the logs generated by the AI system for at least 6 months (Article 26(6)), to the extent those logs are under your control. Most ATS platforms generate audit logs: verify yours does, confirm what's logged, and make sure you retain them. Employment-sector regulations may require longer retention.
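
If you run your own log purge job, guard it with a retention floor. The sketch below is an illustration, not a legal calculation: it assumes six months can be approximated as 183 days (`MIN_RETENTION_DAYS` is a made-up constant) and ignores any longer period that national employment law may impose.

```python
from datetime import date, timedelta

# Assumption: "at least 6 months" approximated as 183 days.
# Raise this if sector or national rules require longer retention.
MIN_RETENTION_DAYS = 183


def earliest_deletion_date(log_date: date,
                           retention_days: int = MIN_RETENTION_DAYS) -> date:
    """First day on which a log entry created on log_date may be purged."""
    return log_date + timedelta(days=retention_days)


def may_delete(log_date: date, today: date,
               retention_days: int = MIN_RETENTION_DAYS) -> bool:
    """True only once the retention floor has fully elapsed."""
    return today >= earliest_deletion_date(log_date, retention_days)


created = date(2026, 8, 2)
print(earliest_deletion_date(created))
print(may_delete(created, today=date(2026, 12, 1)))
```

Keeping the floor in one constant makes it easy to audit and easy to lengthen.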

AI literacy (in force since Feb 2, 2025)

Every recruiter and hiring manager using the AI tool must understand how it works, what it can and can't do, how to override it, and what data it uses. A focused 1-hour training session covers this. It's not optional — Article 4 has been enforceable since February 2, 2025.

Transparency to candidates

Inform candidates that AI is used in the hiring process. This is a deployer obligation under the EU AI Act (Article 26(11)), and it ties into GDPR transparency duties around automated individual decision-making (Articles 13-14 and 22). A clear statement in your application process is the minimum.

Workplace notification

If AI is used for internal decisions (promotions, terminations, performance monitoring, task allocation), inform the affected workers and their representatives before deployment. Article 26(7) is specific about this.

Five questions to ask your recruitment AI vendor today

If your vendor can't answer all five, they're not ready — and neither are you.

1. "Is your AI screening/ranking feature classified as high-risk under EU AI Act Annex III Area 4? What's your classification rationale?"

2. "Can you provide your EU Declaration of Conformity and CE marking documentation for the AI components?"

3. "What does your instructions-for-use document specify about human oversight requirements for our use case?"

4. "What automatic logging does your system provide, and how do we access and retain those logs?"

5. "What deployer obligations does your documentation assign to us?"

Use the AI Vendor Risk Screener for a structured vendor assessment.

GDPR Article 22 and the EU AI Act: double compliance for AI hiring

GDPR Article 22 already restricts "solely automated individual decision-making" that produces legal or similarly significant effects. Hiring decisions qualify. The EU AI Act adds on top: risk management, technical documentation, human oversight design, accuracy and robustness requirements, transparency, logging, and post-market monitoring.

| Requirement | GDPR | EU AI Act |
|---|---|---|
| Human involvement in decisions | Required (Art. 22) | Required (Art. 14, 26) |
| Transparency to individuals | Required (Art. 13-14, 22) | Required (Art. 13, 26, 50) |
| Data quality controls | Required (Art. 5(1)(d)) | Required (Art. 10, 26(4)) |
| Impact assessment | DPIA (Art. 35) | FRIA (Art. 27), different scope |
| Risk management system | Not required | Required (Art. 9) |
| Technical documentation | Not required | Required (Art. 11, Annex IV) |
| Conformity assessment | Not required | Required for providers (Art. 43) |

A DPIA under GDPR and a FRIA under the EU AI Act may cover overlapping ground, but they're not the same assessment. You may need both. For the full compliance picture, see our complete EU AI Act compliance guide.

FAQ: AI in recruitment under the EU AI Act

Yes. Annex III Area 4 explicitly covers AI systems used for recruitment, selection, screening, filtering, assessing, and ranking candidates. It also covers AI for decisions on promotion, termination, task allocation, and performance monitoring. If your organisation uses an ATS with AI-powered screening features, you're deploying a high-risk AI system.

You're the deployer (you use the system). The ATS vendor is the provider (they built it). Both have obligations, but yours focus on human oversight, monitoring, logging, AI literacy, and operational controls. The vendor's compliance does not cover your deployer obligations.

Yes. Under both the EU AI Act (deployer transparency obligation) and GDPR Article 22 (right not to be subject to solely automated decisions), candidates must be informed that AI is used in the recruitment process.

Auto-rejecting candidates without human review is a compliance gap under both the EU AI Act (Article 14 human oversight requirement) and GDPR Article 22. Implement a human review step before any AI-driven rejection becomes final. The recruiter or hiring manager reviewing AI output is the oversight mechanism, but it must be documented, not assumed.

Yes. Annex III Area 4 covers AI for decisions on promotion and termination, task allocation based on individual behaviour or characteristics, and monitoring and evaluating performance. Internal workforce management AI carries the same high-risk classification as recruitment AI.

High-risk Annex III obligations apply from August 2, 2026. AI literacy under Article 4 has been enforceable since February 2, 2025, and GDPR Article 22 restrictions on automated decision-making already apply. Penalties for high-risk violations: up to 15 million euros or 3% of worldwide annual turnover, whichever is higher.
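
To see what that penalty ceiling means for your organisation, the arithmetic looks like this. The sketch assumes the general rule that the higher of the two figures applies. It does not model the separate treatment of SMEs and start-ups, and the function name is just a label for this example. The result is a statutory maximum, not a predicted fine.

```python
def high_risk_fine_ceiling_eur(worldwide_turnover_eur: int) -> float:
    """Upper bound for high-risk violations: EUR 15M or 3% of turnover.

    Assumption: the higher of the two applies (general, non-SME case).
    """
    fixed_cap = 15_000_000
    turnover_cap = worldwide_turnover_eur * 3 / 100
    return float(max(fixed_cap, turnover_cap))


# EUR 200M turnover: 3% is 6M, so the 15M figure is the ceiling.
print(high_risk_fine_ceiling_eur(200_000_000))

# EUR 1B turnover: 3% is 30M, which exceeds 15M.
print(high_risk_fine_ceiling_eur(1_000_000_000))
```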

Abhishek G Sharma

Founder & CEO, Move78 International Limited

ISO 42001 LA · ISO 27001 LA · CISA · CISM · CRISC · CEH · CCSK · CAIGO · CAIRO

20+ years in cybersecurity and risk management. Builds AI governance tools and advisory for mid-market organisations.

Need More Practical Guidance?

Use the free EU AI Compass tools and guides to structure oversight, monitoring, vendor due diligence, and AI literacy planning for HR teams.

Disclaimer

This guide is for educational and informational purposes only and does not constitute legal advice. The EU AI Act (Regulation 2024/1689) and GDPR (Regulation 2016/679) are complex regulations. Consult qualified legal counsel for advice specific to your organisation. Vendor names are listed for illustrative purposes only and do not constitute endorsement or classification determination. All references current as of March 2026.