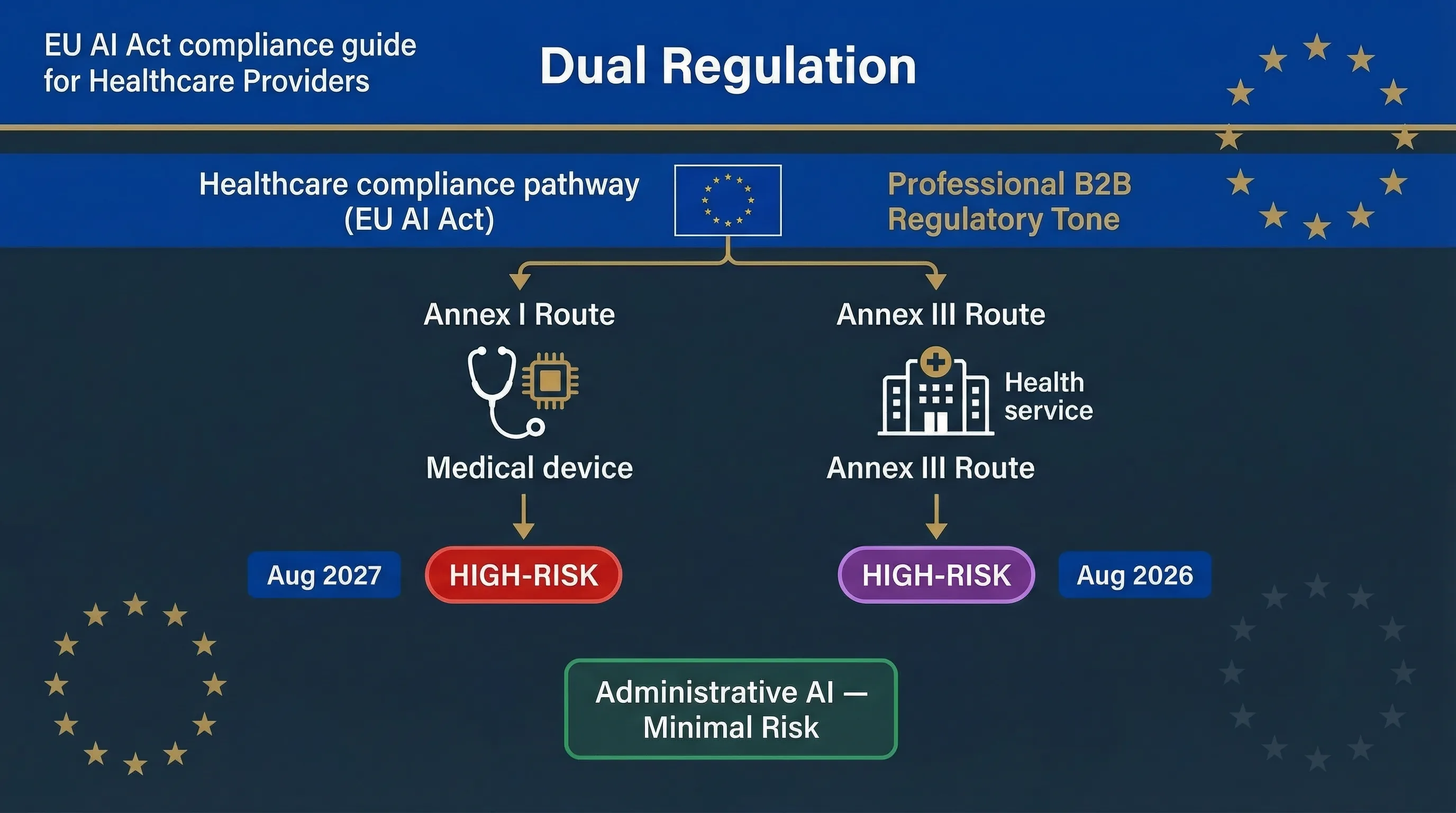

Two Paths to High-Risk: Annex I (Medical Devices) and Annex III (Health Services)

Healthcare AI can be classified as high-risk through two completely independent routes under the EU AI Act. Getting this wrong means you're either building compliance against the wrong deadline or missing obligations entirely. Can your regulatory affairs team explain right now which route each of your AI systems falls under?

Route 1: Annex I — AI as a Safety Component of a Medical Device

If your AI system is a medical device, or a safety component of one, regulated under the Medical Devices Regulation (MDR, Regulation (EU) 2017/745) or the In Vitro Diagnostic Medical Devices Regulation (IVDR, Regulation (EU) 2017/746), it is high-risk under Article 6(1) and Annex I of the EU AI Act, provided the device requires third-party conformity assessment by a notified body. The AI Act conformity assessment integrates with the existing MDR/IVDR process: you don't run two separate assessments; you extend the MDR process to cover AI-specific requirements. This route becomes enforceable August 2, 2027, one year later than Annex III.

Examples include AI-powered diagnostic imaging (radiology AI), AI clinical decision support classified as SaMD (Software as a Medical Device), and AI-driven patient monitoring devices. If your AI is classified as a Class IIa, IIb, or III medical device under MDR, it almost certainly falls under this route, because those classes require notified body involvement.

Route 2: Annex III — AI Affecting Access to Healthcare Services

Annex III Area 5 covers AI systems used to evaluate access to essential private and public services, including healthcare. This captures AI that isn't a medical device but still affects patient access: AI triage systems, patient risk scoring for resource allocation, AI-powered appointment prioritisation, and AI used for risk assessment and pricing in health insurance (Area 5(c)). Annex III Area 5(d) also covers AI for evaluating and classifying emergency calls, dispatch prioritisation, and emergency healthcare patient triage. This route becomes enforceable August 2, 2026.

The Boundary Matters

AI classified as a medical device (Annex I): MDR conformity assessment plus AI Act requirements, enforceable August 2027. AI not classified as a medical device but affecting health service access (Annex III): AI Act conformity assessment only, enforceable August 2026. AI that's neither — hospital administrative AI, scheduling without patient impact — is likely minimal or limited risk with transparency obligations only.

| Healthcare AI Type | Regulatory Route | Enforcement Date | Assessment Type |
|---|---|---|---|
| AI diagnostic imaging (SaMD) | Annex I: medical device | 2 Aug 2027 | Extended MDR conformity assessment |
| AI clinical decision support (SaMD) | Annex I: medical device | 2 Aug 2027 | Extended MDR conformity assessment |
| Patient triage / resource allocation AI | Annex III: health service access | 2 Aug 2026 | AI Act conformity assessment |
| Emergency call classification / dispatch AI | Annex III Area 5(d) | 2 Aug 2026 | AI Act conformity assessment |
| Health insurance coverage AI | Annex III Area 5(c) | 2 Aug 2026 | AI Act + FRIA |
| Hospital admin AI (staff scheduling, supply chain) | Minimal or limited risk | Not applicable (transparency obligations only) | No conformity assessment |
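The two-route split can be sketched as a small lookup. This is an illustrative sketch only, not a classification tool: the `AISystem` fields and function names are hypothetical, the two boolean flags stand in for a proper regulatory analysis, and the dates are the ones stated in this guide.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import List, Optional


class Route(Enum):
    ANNEX_I = "Annex I (medical device)"
    ANNEX_III = "Annex III (health service access)"


# Enforcement dates as stated in this guide.
ENFORCEMENT_DATES = {
    Route.ANNEX_I: date(2027, 8, 2),
    Route.ANNEX_III: date(2026, 8, 2),
}


@dataclass
class AISystem:
    name: str
    is_medical_device: bool              # device or safety component under MDR/IVDR
    affects_health_service_access: bool  # triage, risk scoring, dispatch, insurance


def applicable_routes(system: AISystem) -> List[Route]:
    """Return every high-risk route the flags point to (may be empty)."""
    routes = []
    if system.is_medical_device:
        routes.append(Route.ANNEX_I)
    if system.affects_health_service_access:
        routes.append(Route.ANNEX_III)
    return routes


def earliest_enforcement_date(system: AISystem) -> Optional[date]:
    """Earliest date among the applicable routes, or None if not high-risk."""
    dates = [ENFORCEMENT_DATES[route] for route in applicable_routes(system)]
    return min(dates) if dates else None


triage = AISystem("ED triage scoring", is_medical_device=False,
                  affects_health_service_access=True)
print(applicable_routes(triage), earliest_enforcement_date(triage))
```

The sketch returns a list rather than a single route because the two routes are independent: an inventory entry that trips both flags should be reviewed under both, not silently assigned to one.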

Regulatory signal: France's CNIL and HAS (Haute Autorité de Santé) published a joint draft guide on AI in healthcare for consultation on March 5, 2026. It provides sectoral interpretation of AI Act and GDPR obligations in the medical context. Currently non-binding, but signals regulatory direction. Referenced in eu-ai-rules-engine v2.4.

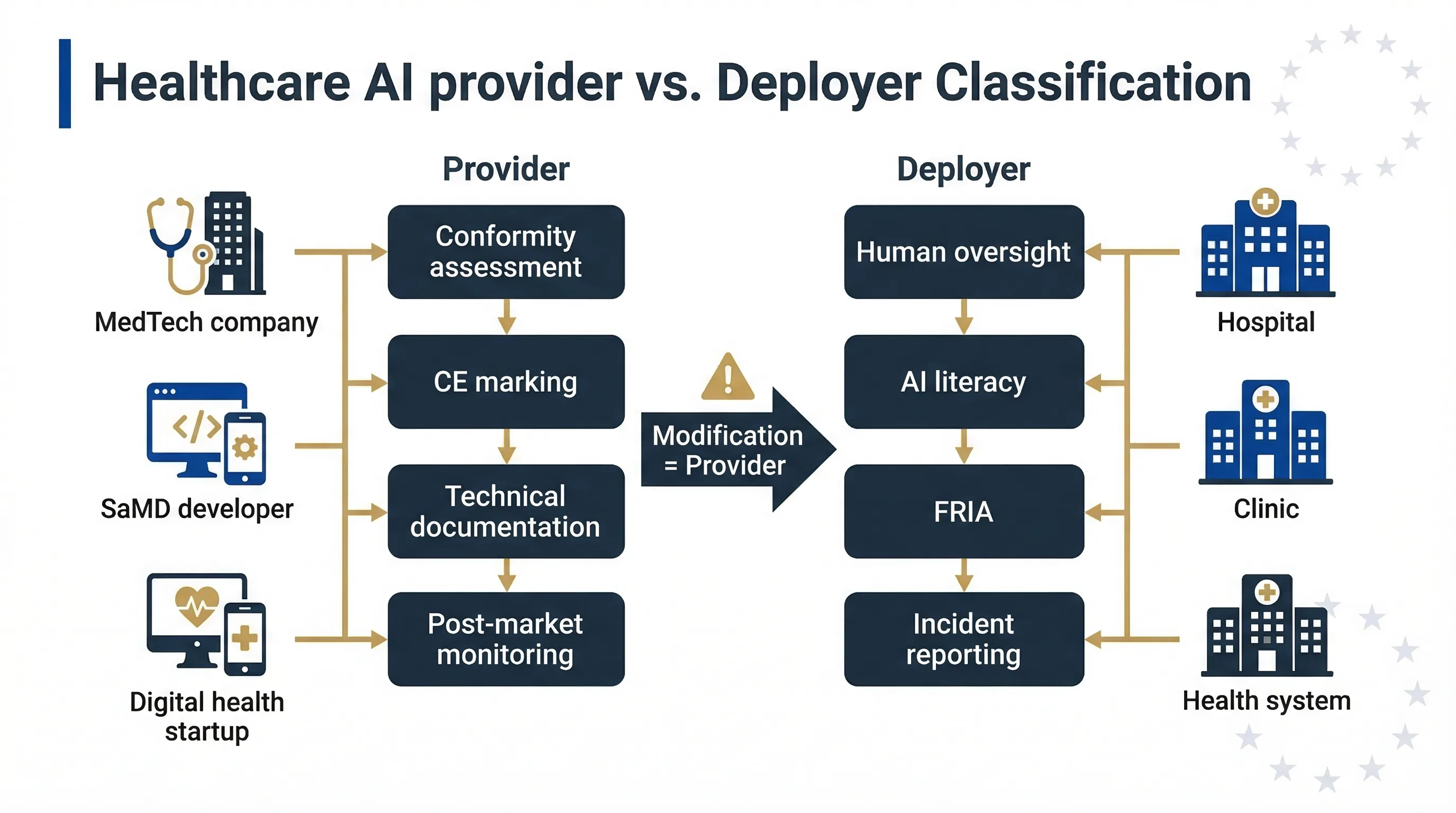

Who's the Provider and Who's the Deployer in Healthcare AI?

The provider/deployer distinction is critical in healthcare because the obligations are dramatically different, and getting it wrong can flip your entire compliance programme upside down. I've seen MedTech startups assume they're deployers when they're actually providers — that's not a minor error, it's a Category 1 misclassification.

MedTech / Digital Health Company Building the AI

You're the provider. You bear the heaviest obligations: risk management system (Article 9), data governance (Article 10), technical documentation per Annex IV, human oversight design (Article 14), conformity assessment, CE marking, EU database registration, and post-market monitoring. For medical device AI, your existing MDR Quality Management System under Article 10 MDR provides a foundation, but the AI Act adds specific requirements around bias testing, data governance, and cybersecurity under Article 15.

Hospital / Health System / Clinic Deploying the AI

You're the deployer. Article 26 obligations apply: use per instructions, human oversight in the clinical context, monitoring, logging, AI literacy for clinical staff, and incident reporting. Clinical staff using AI diagnostic tools must understand the tool's limitations and be competent to override its recommendations. That's both an AI Act requirement and a patient safety imperative.

Trap: If a hospital modifies the AI — fine-tunes on local patient data, changes its intended use, retrains on local datasets — it may become a provider under Article 25. Use the Accidental Provider Classifier to check if your modifications cross the line.
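One way to make this trap visible internally is to record each local change and flag the ones named above for legal review. The sketch below is an assumption-laden illustration: `LocalModification` and both functions are hypothetical names, it is not the Accidental Provider Classifier, and a flag means "ask counsel", not "you are a provider".

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LocalModification:
    """Changes a deployer has made to a vendor-supplied AI system."""
    fine_tuned_on_local_patient_data: bool = False
    changed_intended_use: bool = False
    retrained_on_local_datasets: bool = False


def provider_review_triggers(mod: LocalModification) -> List[str]:
    """List which of the three modifications named in this guide are present."""
    triggers = []
    if mod.fine_tuned_on_local_patient_data:
        triggers.append("fine-tuned on local patient data")
    if mod.changed_intended_use:
        triggers.append("changed intended use")
    if mod.retrained_on_local_datasets:
        triggers.append("retrained on local datasets")
    return triggers


def needs_article_25_review(mod: LocalModification) -> bool:
    """True if any trigger is present, so the change should go to legal review."""
    return bool(provider_review_triggers(mod))


change = LocalModification(fine_tuned_on_local_patient_data=True)
print(needs_article_25_review(change), provider_review_triggers(change))
```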

| Role | Who | Key Obligations | Risk of Misclassification |
|---|---|---|---|
| Provider | MedTech company, SaMD developer, digital health startup | Art. 9–15, Annex IV documentation, conformity assessment, CE marking, post-market monitoring | Assuming you're a deployer when you're building the system |
| Deployer | Hospital, clinic, health system | Art. 26: use per instructions, human oversight, monitoring, AI literacy, incident reporting; FRIA (Art. 27) where applicable | Modifying AI and inadvertently becoming a provider |
| Health insurer | Insurer using vendor AI for coverage/pricing | Art. 26 deployer duties + mandatory FRIA (Art. 27) for health service access | Not recognising health AI as high-risk |

Decision map: how healthcare AI systems split between Annex I (medical device) and Annex III (health service access) classification routes.

Healthcare-Specific Deployer Obligations

If you're a hospital or health system deploying high-risk AI, here's what Article 26 actually means in a clinical setting. These aren't abstract requirements — they translate into specific operational changes that affect clinical workflows.

Human Oversight in Clinical Context

Clinical AI must support, not replace, clinical judgement. The clinician must be the final decision-maker. Document which clinician reviews AI output, what training they've received on the specific tool, how they override it, and what happens when AI and clinician disagree. Automation complacency is acute in healthcare — clinicians may over-trust AI recommendations under time pressure. That's the real risk, and regulators know it.
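The documentation points above map naturally onto a per-decision record. The following is a minimal sketch under stated assumptions: the `OversightRecord` fields and the `override_rate` helper are hypothetical, not a prescribed log format, and the Act does not define a threshold for what override rate is acceptable.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class OversightRecord:
    """One clinician review of one AI output."""
    clinician_id: str
    tool_name: str
    tool_training_completed: bool  # training on this specific tool
    ai_recommendation: str
    clinician_decision: str
    override_reason: str = ""
    timestamp: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    @property
    def overridden(self) -> bool:
        """True when the clinician's decision differs from the AI output."""
        return self.ai_recommendation != self.clinician_decision


def override_rate(records: List[OversightRecord]) -> float:
    """Share of reviews in which the clinician departed from the AI output."""
    if not records:
        return 0.0
    return sum(r.overridden for r in records) / len(records)


log = [
    OversightRecord("dr-01", "chest-xray-ai", True, "no finding", "no finding"),
    OversightRecord("dr-02", "chest-xray-ai", True, "no finding", "nodule",
                    override_reason="visible lesion in upper right lobe"),
]
print(override_rate(log))
```

An override rate that stays near zero over a long period is not proof of complacency, but it is a reasonable prompt to check whether reviews are happening in substance.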

Data Governance for Health Data

Health data is "special category data" under GDPR Article 9. Processing requires explicit consent or another Article 9(2) legal basis. The AI Act's data governance requirements under Article 10 apply on top of GDPR: the provider's training, validation, and testing data must be relevant, sufficiently representative, and as error-free as possible. On the deployer side, Article 26(4) requires that input data under your control is relevant and sufficiently representative for the system's intended purpose. Patient data used as AI input must therefore satisfy both GDPR and AI Act data quality requirements simultaneously.

Triple Incident Reporting Burden

Serious incidents must be reported to the national market surveillance authority (AI Act Article 73 for providers, with deployers informing the provider and the authority under Article 26(5)), potentially the national competent authority for medical devices (MDR), and the data protection authority (GDPR, if a personal data breach). A single AI failure involving patient data can trigger three separate notification obligations. Does your incident response playbook cover all three channels?
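A playbook can encode the three channels as a simple routing step. This is a hedged sketch: the function name, flags, and channel labels are hypothetical, and it deliberately says nothing about deadlines or who files each report, which depend on your role and national rules.

```python
from typing import List


def notification_channels(
    *,
    serious_incident: bool,
    involves_medical_device: bool,
    personal_data_breach: bool,
) -> List[str]:
    """Return the notification channels this guide names for an AI failure."""
    channels = []
    if serious_incident:
        channels.append("market surveillance authority (AI Act)")
        if involves_medical_device:
            channels.append("medical device competent authority (MDR)")
    if personal_data_breach:
        channels.append("data protection authority (GDPR)")
    return channels


# A failure of a device-classified AI that also exposed patient data.
print(notification_channels(
    serious_incident=True,
    involves_medical_device=True,
    personal_data_breach=True,
))
```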

AI Literacy for Clinical Staff

Already enforceable since February 2, 2025. Clinical staff using AI tools need training specific to what the tool does, what data it uses, its accuracy and limitations, how to interpret and override outputs, and how to report issues. Generic "AI awareness" training doesn't satisfy Article 4. The training must be tool-specific.

Healthcare Deployer Tools

Human Oversight Log: Track clinical override decisions

Automation Complacency Assessor: Evaluate over-trust risk

AI Literacy Planner: Build staff training programme

Input Data Validator: Check data governance compliance

RAG Data Hygiene Screener: Assess data quality for AI systems

EU AI Act Compliance Checker: General system classification

Related guides: For the full deployer framework, see the High-Risk AI Deployer Guide. For health insurance AI specifically, see EU AI Act for Insurance.

FAQ: EU AI Act for Healthcare Organisations

Is AI diagnostics high-risk under the EU AI Act?

Do I need separate conformity assessments under MDR and AI Act?

What about clinical decision support tools that aren't medical devices?

Is hospital scheduling or administrative AI covered?

What is the CNIL-HAS healthcare AI guide?

When do healthcare AI obligations take effect?

All Healthcare AI Compliance Tools

EU AI Act Compliance Checker: General system classification

Accidental Provider Classifier: Check if modifications make you a provider

Deployer Self-Assessment: Check your deployer duties

Human Oversight Log: Track clinical override decisions

Automation Complacency Assessor: Evaluate over-trust risk

AI Literacy Planner: Build staff training programme

Input Data Validator: Check data governance compliance

RAG Data Hygiene Screener: Assess data quality

Disclaimer & Limitations

This guide is for educational and informational purposes only. It does not constitute legal, medical, or regulatory advice. EU AI Compass tools are educational aids, not certified compliance instruments. Consult qualified legal counsel, your notified body, and national competent authority before making compliance decisions. Move78 International Limited is not a law firm, medical device manufacturer, or authorised compliance service provider. All regulatory references are accurate as of the publication date based on eu-ai-rules-engine v2.4. The Digital Omnibus is a proposal, not enacted law. The CNIL-HAS guide is draft and non-binding.