Annex III Area 5c and 5d: Which Insurance AI Is High-Risk?

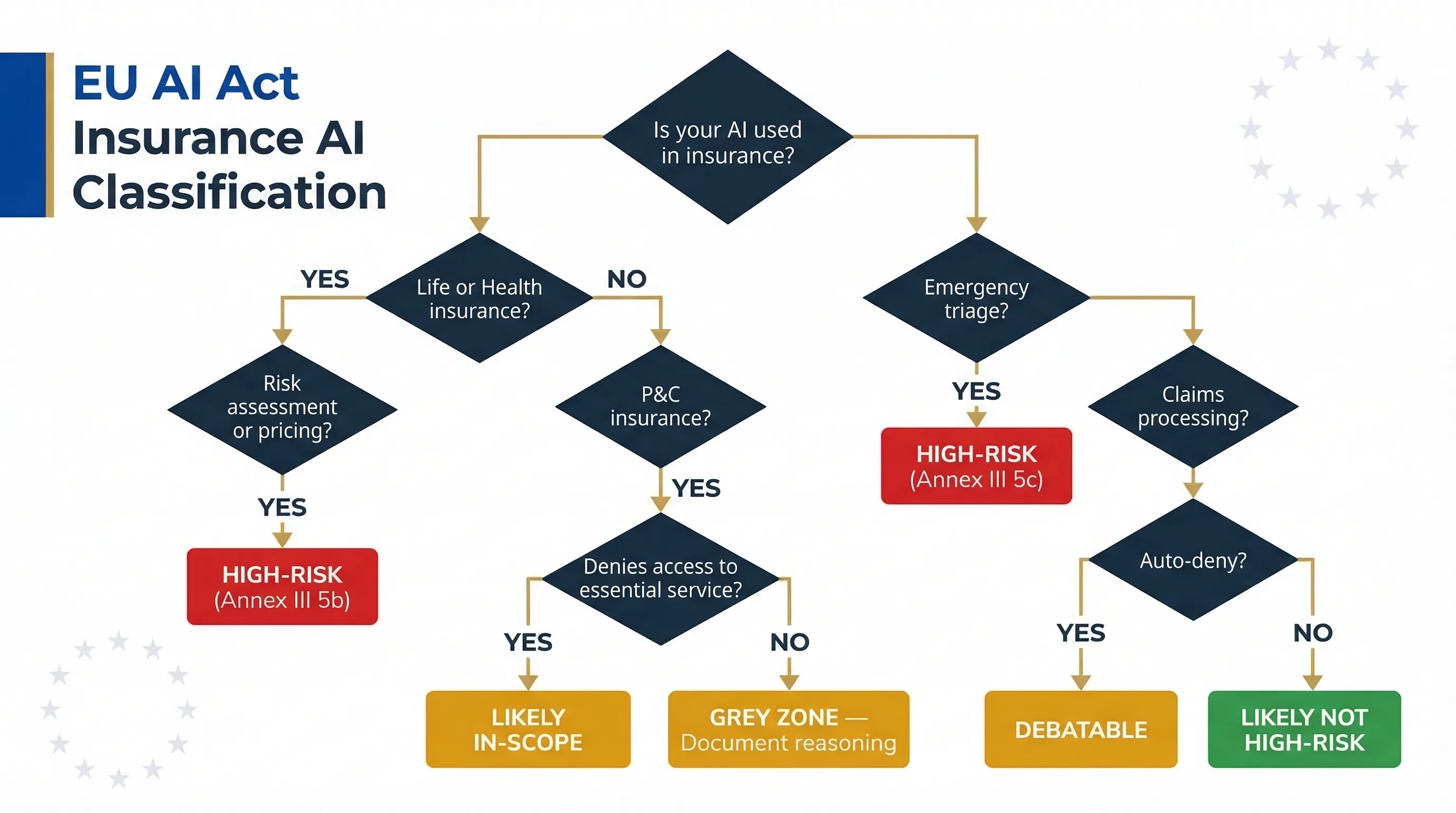

The EU AI Act doesn't treat all insurance AI the same way. Life and health insurance underwriting AI is explicitly high-risk. Property and casualty isn't mentioned. Claims processing is a grey zone. If you're an insurer or InsurTech deploying AI anywhere in the underwriting-to-claims pipeline, can your team articulate exactly where the line falls for each of your systems?

Explicitly High-Risk: Life and Health Insurance (Area 5c)

Under Annex III Area 5(c), AI systems intended to be used for risk assessment and pricing in relation to natural persons in life and health insurance are classified as high-risk. That's not ambiguous. If your AI influences what premium someone pays, whether they get accepted for coverage, or how their risk profile is scored for life or health products, it's high-risk. This includes automated underwriting for life insurance, AI-driven premium calculation for health insurance, and risk scoring models that determine policy acceptance or rejection. The obligations apply from August 2, 2026. Breaching them can draw fines of up to €15 million or 3% of global annual turnover, whichever is higher (Article 99(4)); the €35 million or 7% tier is reserved for prohibited practices under Article 5.

The Grey Zone: Property and Casualty (P&C)

Property and casualty insurance (home, auto, commercial property, liability) isn't explicitly listed in Annex III Area 5(c). But here's where it gets complicated. If your P&C AI functionally denies access to an essential service (for example, motor third-party liability cover, which is compulsory in every EU member state, or home insurance required for a mortgage), the "access to essential services" framing in Annex III may still apply. I'd take the conservative approach: if your AI prices or accepts/rejects policies for any insurance product where the policyholder is in the EU, treat it as potentially in-scope and document your classification reasoning. Being wrong on the safe side costs paperwork. Being wrong on the other side can cost up to 3% of turnover.

Emergency Triage AI (Area 5d)

Annex III Area 5(d) covers AI systems used to evaluate and classify emergency calls, to dispatch or establish priority in dispatching emergency first response services (including medical aid), and emergency healthcare patient triage systems. This is relevant for health insurers operating emergency triage AI or telemedicine platforms that route patients based on AI-assessed urgency.

Claims Processing: Not Listed, But Not Safe

AI for claims assessment — approve, deny, or adjust claims — isn't explicitly listed in Annex III. However, claims decisions directly affect access to insurance benefits a policyholder has already paid for. If your AI autonomously denies or reduces claims, classification may be debatable. The conservative approach: document your reasoning for classifying claims AI as non-high-risk. If you can't write a convincing two-page justification, it probably is high-risk.

| Insurance AI Use Case | Annex III Classification | Confidence Level |
|---|---|---|
| Life insurance underwriting & pricing | HIGH-RISK Area 5(c) | Explicit, no ambiguity |
| Health insurance underwriting & pricing | HIGH-RISK Area 5(c) | Explicit, no ambiguity |
| P&C insurance (auto, home, commercial) | GREY ZONE | Not listed; may apply if it gates access to an essential service |
| Emergency triage / dispatch AI | HIGH-RISK Area 5(d) | Explicit, no ambiguity |
| Claims processing (auto-approve/deny) | GREY ZONE | Not listed; debatable where the AI auto-denies |
| Fraud detection (supporting investigators) | LIKELY NOT HIGH-RISK | If a human makes the final decision |
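To apply the table across a whole system inventory, a first-pass triage can be encoded as a small function. The sketch below is hypothetical: `InsuranceAISystem`, `classify` and the category strings are illustrative names, not part of any tool mentioned here. It mirrors the table row by row using the final text's numbering (life and health insurance is Annex III point 5(c), emergency triage is 5(d)). Treat it as a screening aid for deciding where to spend documentation effort, not a legal determination.

```python
from dataclasses import dataclass

LIFE_HEALTH = {"life", "health"}
PRICING_FUNCTIONS = {"pricing", "underwriting"}


@dataclass(frozen=True)
class InsuranceAISystem:
    """Hypothetical inventory record for one AI system."""
    name: str
    product_line: str                  # e.g. "life", "health", "motor", "home"
    function: str                      # "pricing", "underwriting", "claims", "fraud", "triage"
    autonomous_adverse_decision: bool  # can it deny, reduce or cancel without a human deciding?


def classify(system: InsuranceAISystem) -> str:
    """First-pass triage that mirrors the classification table; not legal advice."""
    if system.function == "triage":
        # Emergency call evaluation, dispatch priority, emergency patient triage.
        return "HIGH-RISK: Annex III 5(d)"
    if system.function in PRICING_FUNCTIONS:
        if system.product_line in LIFE_HEALTH:
            # Explicitly listed; a human approving the final price does not change this.
            return "HIGH-RISK: Annex III 5(c)"
        # P&C pricing and acceptance: not listed, so document the reasoning.
        return "GREY ZONE: document classification reasoning"
    if system.function == "claims":
        return "GREY ZONE: document classification reasoning"
    if system.function == "fraud":
        if system.autonomous_adverse_decision:
            return "GREY ZONE: AI is the effective decision-maker"
        return "LIKELY NOT HIGH-RISK: human makes final decision"
    return "UNCLASSIFIED: assess manually"
```

For example, a motor fraud model that only flags claims for investigators lands in the "likely not high-risk" bucket, while setting `autonomous_adverse_decision=True` on the same system moves it into the grey zone, matching the fraud discussion later in this guide.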

Insurance Deployer Obligations Under the EU AI Act

If your insurance AI is classified as high-risk, Article 26 deployer obligations kick in. These aren't theoretical requirements. They're specific evidence expectations that a market surveillance authority will ask for if they come knocking. Here's what that looks like for an insurer.

Human Oversight That Actually Means Something

Underwriters reviewing AI-generated risk scores must have the authority to override and the competence to evaluate the AI's reasoning. "The model says reject" isn't sufficient oversight under Article 14, and Article 26(2) requires deployers to assign oversight to people with the necessary competence, training and authority. The human must understand why the AI scored a risk in a particular way and be able to disagree. From what I've seen at mid-market insurers, the most common failure mode here is rubber-stamping: an underwriter who clicks "approve" on every AI recommendation because they trust the model and don't have time to review each case. That won't satisfy the Article 14 requirement.
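One way to turn "log override rates" into evidence is to compute them per reviewer and flag people who almost never disagree with the model. This sketch assumes a hypothetical review log in which each entry records the underwriter, the AI recommendation and the final decision. The 2% floor and the 30-review minimum are placeholder values you would calibrate yourself, not figures from the AI Act, and a low rate is a prompt to look closer rather than proof of rubber-stamping.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class ReviewEvent(NamedTuple):
    """Hypothetical log entry for one human review of an AI recommendation."""
    underwriter: str
    ai_recommendation: str  # e.g. "accept" or "reject"
    final_decision: str     # what the underwriter actually decided


def override_stats(events: Iterable[ReviewEvent]) -> dict[str, tuple[int, float]]:
    """Return {underwriter: (number_of_reviews, override_rate)}."""
    reviewed: dict[str, int] = defaultdict(int)
    overridden: dict[str, int] = defaultdict(int)
    for e in events:
        reviewed[e.underwriter] += 1
        if e.final_decision != e.ai_recommendation:
            overridden[e.underwriter] += 1
    return {u: (n, overridden[u] / n) for u, n in reviewed.items()}


def flag_low_override(events: Iterable[ReviewEvent],
                      min_rate: float = 0.02,
                      min_reviews: int = 30) -> list[str]:
    """Underwriters with enough reviews whose override rate is below min_rate."""
    stats = override_stats(events)
    return sorted(u for u, (n, rate) in stats.items()
                  if n >= min_reviews and rate < min_rate)
```

The minimum-review filter keeps new or part-time reviewers from being flagged on a handful of cases.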

FRIA: Mandatory Before Deployment

Deployers using AI for life and health insurance risk assessment and pricing must conduct a Fundamental Rights Impact Assessment under Article 27 before first deploying the system. Insurance pricing AI can discriminate on prohibited grounds through proxies: health status as a proxy for race, postal code as a proxy for ethnicity, claims history correlating with socioeconomic status. The FRIA must assess these specific discrimination risks in your specific deployment context, not generically.
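Assessing proxy discrimination in a specific deployment context usually needs some quantitative screening. As a hedged sketch, assuming you hold a sensitive attribute (or a lawful estimate of it) for testing purposes, Cramér's V measures how strongly a categorical rating factor such as postal code is associated with that attribute: 0 means no association, 1 means the factor fully reveals it. The `flag_proxies` helper and its 0.3 cut-off are hypothetical choices for illustration.

```python
from collections import Counter
from collections.abc import Hashable, Sequence
from math import sqrt


def cramers_v(feature: Sequence[Hashable], sensitive: Sequence[Hashable]) -> float:
    """Cramér's V between two categorical variables (0 = none, 1 = perfect)."""
    if len(feature) != len(sensitive) or not feature:
        raise ValueError("inputs must be non-empty and the same length")
    n = len(feature)
    joint = Counter(zip(feature, sensitive))
    f_counts = Counter(feature)
    s_counts = Counter(sensitive)
    k = min(len(f_counts), len(s_counts))
    if k < 2:
        return 0.0  # one variable is constant, so no association is measurable
    chi2 = 0.0
    for f, fc in f_counts.items():
        for s, sc in s_counts.items():
            expected = fc * sc / n  # count expected if the two were independent
            chi2 += (joint[(f, s)] - expected) ** 2 / expected
    return sqrt(chi2 / (n * (k - 1)))


def flag_proxies(features: dict[str, Sequence[Hashable]],
                 sensitive: Sequence[Hashable],
                 threshold: float = 0.3) -> list[str]:
    """Rating factors whose association with the sensitive attribute exceeds threshold."""
    return sorted(name for name, values in features.items()
                  if cramers_v(values, sensitive) > threshold)
```

A high value warrants investigation; a low one does not prove the absence of indirect discrimination. Cramér's V is also biased upward when a factor has many categories relative to the sample size, which matters for fine-grained postal codes.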

Input Data Governance and Proxy Discrimination

Insurance AI is particularly vulnerable to proxy discrimination. Training data reflecting historical pricing patterns may embed geographic, demographic and socioeconomic biases. Training data governance is primarily the provider's duty under Article 10, but Article 26(4) requires deployers, to the extent they control the input data, to ensure it is relevant and sufficiently representative in view of the system's intended purpose. If your model was trained on a decade of pricing data from a single EU market, deploying it across five markets without revalidation is a risk you need to document.
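Before rolling a model out to a new market, a simple first check is whether the categories in the new market's input data were actually seen in training. The sketch below is hypothetical: it takes one categorical input field (say, an occupation or region code) from the training set and from the new market, and reports what share of new-market records fall into categories with adequate training support. The 30-record minimum is an arbitrary placeholder.

```python
from collections import Counter
from collections.abc import Hashable, Iterable


def coverage_report(train_values: Iterable[Hashable],
                    market_values: Iterable[Hashable],
                    min_train_count: int = 30) -> tuple[float, list]:
    """Share of new-market records in well-supported categories, plus the thin categories."""
    train_counts = Counter(train_values)
    market_counts = Counter(market_values)
    n_market = sum(market_counts.values())
    if n_market == 0:
        raise ValueError("no records for the new market")
    # Categories that were absent from training or seen fewer than min_train_count times.
    thin = [v for v in market_counts if train_counts[v] < min_train_count]
    thin_records = sum(market_counts[v] for v in thin)
    return 1 - thin_records / n_market, sorted(thin, key=str)
```

A low covered share is a documented reason to revalidate before deployment; a full revalidation would also compare model performance segment by segment.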

Ongoing Monitoring and Model Drift

Track model drift in risk scoring. If acceptance rates, pricing distributions, or claims ratios shift over time, that's a signal the model is degrading or the underlying population has changed. Document your monitoring methodology, cadence, and escalation thresholds. Quarterly isn't unreasonable for a high-risk system; monthly is better for new deployments.
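For drift tracking, the Population Stability Index (PSI) is a widely used way to compare the current distribution of risk scores or premiums against a baseline: it sums (current share minus baseline share) times the log of their ratio across bins. This sketch assumes fixed bin edges chosen up front. The 0.1 and 0.25 escalation levels are a common industry rule of thumb, not values set by the AI Act, so treat them as starting points for your own documented thresholds.

```python
import bisect
import math
from collections.abc import Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float]) -> list[float]:
    """Share of values in each bin; edges must be sorted ascending."""
    if not values:
        raise ValueError("no values to bin")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index of current vs baseline over the same bins."""
    total = 0.0
    for e, a in zip(bin_shares(baseline, edges), bin_shares(current, edges)):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


def drift_status(value: float, warn: float = 0.1, escalate: float = 0.25) -> str:
    """Map a PSI value onto hypothetical escalation levels."""
    if value >= escalate:
        return "escalate"
    if value >= warn:
        return "investigate"
    return "stable"
```

Run it once per monitoring period on the same bins and record each value together with the action taken, so the cadence and escalation thresholds leave an audit trail.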

Solvency II Integration

For EU-regulated insurers, AI governance intersects with Solvency II Pillar 2 risk management requirements. Your AI risk management can integrate into your existing Own Risk and Solvency Assessment (ORSA) process rather than creating a parallel governance structure. That's not just efficient — it's what EIOPA will expect. Building a separate "AI governance programme" that doesn't connect to your ORSA is a red flag that suggests the two teams aren't talking to each other.

| Obligation | AI Act Article | Insurance-Specific Considerations |
|---|---|---|
| Human oversight | Articles 14, 26(2) | Underwriters must be able to override, not just rubber-stamp. Log override rates. |
| FRIA | Article 27 | Assess proxy discrimination: postal code, claims history, health data correlations. |
| Input data governance | Article 26(4) | Ensure input data you control is relevant and representative in every deployment market; training data governance sits with the provider (Article 10). |
| Monitoring | Article 26(5) | Track drift in acceptance rates, pricing distributions, claims ratios. |
| Incident reporting | Articles 26(5), 73 | Systemic mispricing or mass denial events may constitute serious incidents requiring notification. |

Deployer Compliance Tools

Deep dive: For the full deployer obligation framework, see the High-Risk AI Deployer Guide. For the broader financial services picture including DORA and GDPR, see EU AI Act for Financial Services.

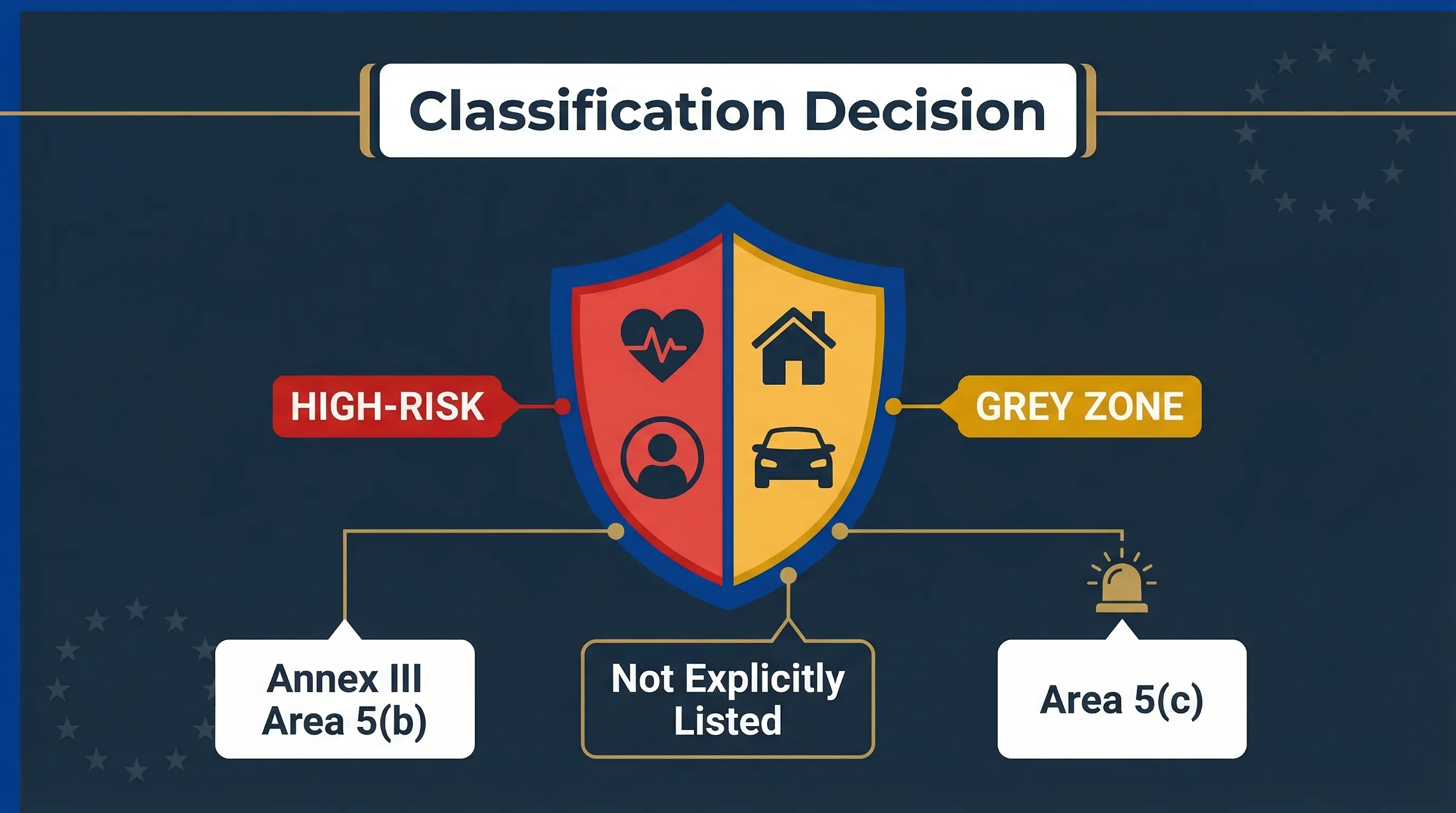

Classification decision tree: how insurance AI use cases map to Annex III Areas 5(c) and 5(d).

Insurance Fraud Detection vs Risk Pricing: Different Classifications

This distinction catches people out. Insurance fraud detection and risk pricing are different AI use cases with potentially different risk classifications under the EU AI Act. You can't blanket-classify your "insurance AI" — you must classify each system independently.

Fraud detection supporting human investigators — flagging suspicious claims for manual review — is likely not high-risk if the human investigator makes the final decision. The AI is an assistive tool, not a decision-maker.

Fraud detection that autonomously denies claims or triggers policy cancellation moves much closer to the high-risk boundary. If the AI is the effective decision-maker rather than a human, classification changes.

Risk pricing that determines premium levels or coverage acceptance for life and health insurance is explicitly high-risk under Annex III(5)(c), regardless of whether a human approves the final price.

Action: Use the Fraud vs Credit Scoring Delimiter to determine the boundary for your specific systems. Don't assume fraud detection is automatically safe.

FAQ: EU AI Act for Insurance Companies

Is AI insurance underwriting high-risk under the EU AI Act?

Do I need a FRIA for insurance pricing AI?

Is claims processing AI high-risk under the EU AI Act?

How does EU AI Act compliance interact with Solvency II?

Does the ECB opinion on credit scoring affect insurance AI classification?

All Insurance AI Compliance Tools

Insurance Underwriting Assessor: check underwriting obligations.

Fraud vs Credit Scoring Delimiter: distinguish fraud from pricing AI.

Deployer Self-Assessment: check your deployer duties.

FRIA Generator: build your impact assessment.

Human Oversight Log: track override decisions.

EU AI Act Compliance Checker: general system classification.

Need More Practical Guidance?

Explore the free EU AI Compass tools and guides to classify your use case, understand your obligations, and move to the next compliance step.

Disclaimer & Limitations

This guide is for educational and informational purposes only. It does not constitute legal, financial, or regulatory advice. EU AI Compass tools are educational aids, not certified compliance instruments. Consult qualified legal counsel and your insurance regulator before making compliance decisions. Move78 International Limited is not a law firm, regulated financial advisor, or authorised compliance service provider. All regulatory references are accurate as of the publication date based on eu-ai-rules-engine v2.4. The Digital Omnibus is a proposal, not enacted law.