Annex III Area 5: which financial AI systems are high-risk?

Explicitly high-risk: creditworthiness assessment (Area 5(b))

AI systems used to evaluate the creditworthiness of natural persons or establish their credit score are explicitly classified as high-risk under the EU AI Act. That includes automated credit scoring models, AI-driven loan approval/denial, credit limit assignment algorithms, affordability assessment tools, and automated underwriting for consumer lending. The key phrase is "creditworthiness of natural persons" — B2B credit assessment (scoring a company, not a person) isn't explicitly listed, but may still be captured if natural persons are directly affected, such as SME lending where the owner is personally assessed.

FRIA is mandatory for deployers in this category (Article 27). No exceptions.

Explicitly high-risk: insurance risk and pricing (Area 5(c))

AI for risk assessment and pricing in life and health insurance is also explicitly high-risk. If your firm operates in insurance, see our dedicated insurance coverage (eu-ai-act-for-insurance.html, once published).

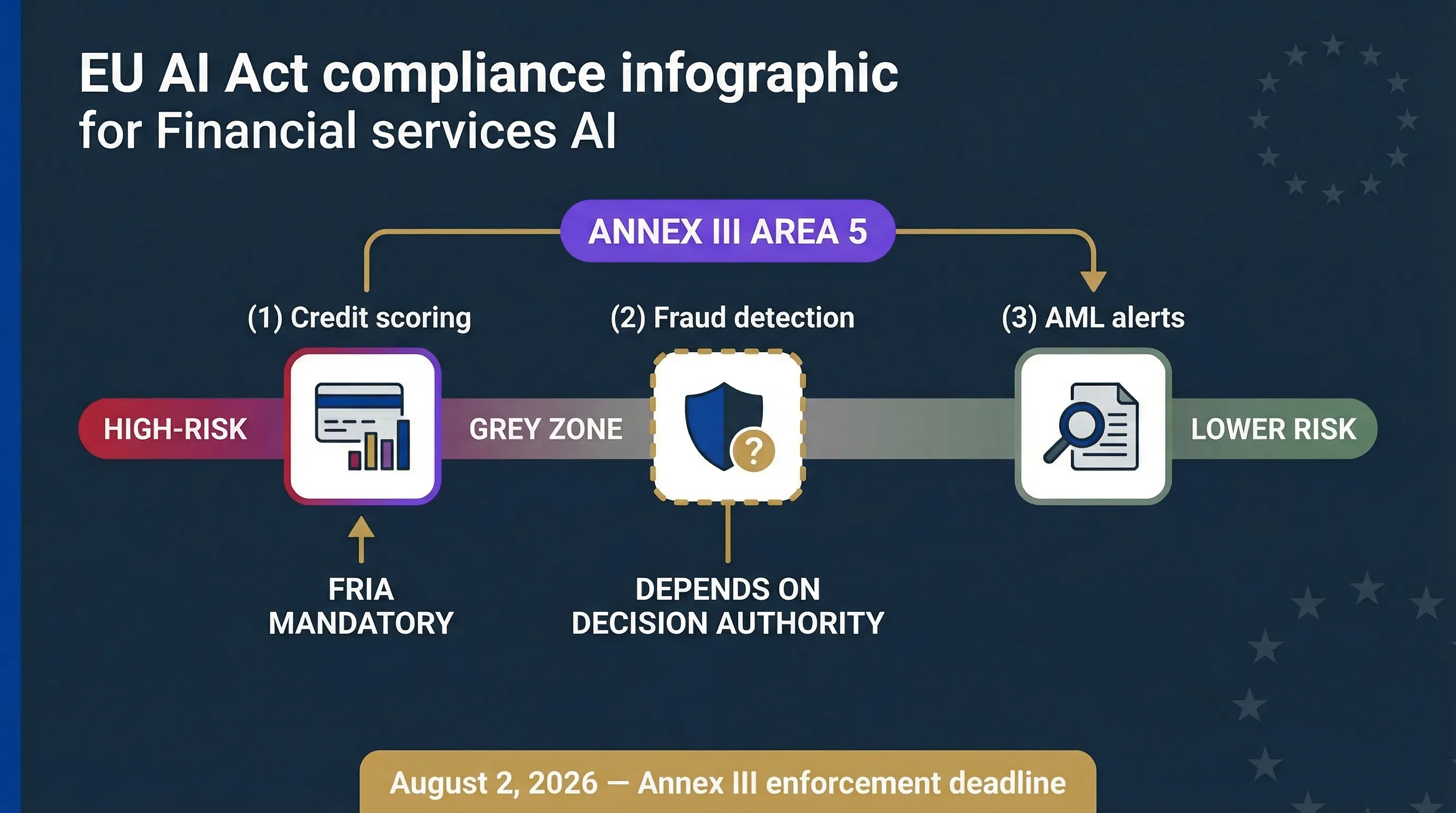

The grey zone: fraud detection, AML, and KYC

This is where most fintech compliance teams get stuck. Fraud detection is not automatically high-risk under Annex III; the credit scoring entry in point 5(b) expressly excludes AI systems used to detect financial fraud. However, if a fraud detection system functionally denies access to financial services (blocks a transaction, freezes an account, rejects an onboarding application), it may cross into "access to essential services" territory and trigger high-risk classification.

AML transaction monitoring that generates alerts for human review is likely not high-risk — it's decision support, not decision-making. But AML systems that automatically file suspicious activity reports or freeze accounts are closer to the high-risk line. KYC/KYB onboarding that auto-rejects applicants directly affects access to financial services and may trigger classification.

The distinction matters enormously.

If your AI is high-risk, you have the full deployer obligation stack: oversight, monitoring, logging, FRIA, vendor verification, incident reporting. If it isn't, you have lighter obligations: transparency and AI literacy. Misclassification in either direction creates risk — over-classification wastes resources; under-classification creates enforcement exposure.

ECB Opinion CON/2026/10 (March 13, 2026)

The ECB recommended excluding linear/logistic regression models from Annex III(5)(b) credit scoring classification when adequate human supervision exists. This is an advisory opinion, not binding — the Digital Omnibus negotiations may or may not adopt it. Current law doesn't distinguish by model type. All AI credit scoring is high-risk regardless of model complexity. Don't plan around the ECB opinion becoming law.

Financial AI classification spectrum: credit scoring is explicitly high-risk; fraud detection and AML sit in a grey zone that depends on decision-making authority.

What financial services deployers must do under the EU AI Act

Human oversight

For credit decisioning, a human must be able to review and override AI-generated credit decisions before they affect applicants. Fully automated rejection without human review is a compliance gap under both the EU AI Act (Articles 14/26) and GDPR (Article 22). The oversight person needs competence in credit risk — not just a rubber stamp on an algorithm's output.
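One way to picture the oversight requirement is as a gate that stops a model-proposed rejection from taking effect until a named reviewer has looked at it. The sketch below is illustrative only: `CreditDecision`, `finalise`, and all field names are hypothetical, and requiring review specifically for rejections is a design assumption, not wording from the Act.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CreditDecision:
    applicant_id: str
    model_outcome: str                    # "approve" or "reject", as proposed by the model
    reviewer_id: Optional[str] = None     # set once a human has reviewed the case
    final_outcome: Optional[str] = None   # the outcome actually communicated


def finalise(decision: CreditDecision,
             reviewer_id: Optional[str] = None,
             override: Optional[str] = None) -> CreditDecision:
    """Finalise a decision; in this sketch, rejections need a named human reviewer."""
    if decision.model_outcome == "reject" and reviewer_id is None:
        raise PermissionError("rejection needs human review before it takes effect")
    decision.reviewer_id = reviewer_id
    # The reviewer may override the model; otherwise the model outcome stands.
    decision.final_outcome = override or decision.model_outcome
    return decision
```

Recording `reviewer_id` alongside the outcome also gives you an audit trail showing who exercised oversight on each case.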

FRIA (mandatory for credit scoring deployers)

Article 27 requires a Fundamental Rights Impact Assessment before deploying high-risk AI for creditworthiness assessment. The FRIA must assess: impact on specific affected persons (applicants), context of use (consumer lending vs SME lending vs credit cards), and risk of harm to fundamental rights (access to financial services, non-discrimination). Use the FRIA Generator to build yours.

Monitoring and logging

Retain AI system logs for at least 6 months (Article 26(6)). For financial services, sector-specific regulations such as the record-keeping requirements under PSD2 and MiFID II may demand longer retention. Apply the stricter requirement. Track performance drift, bias emergence, and false positive/negative rates monthly.
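The "apply the stricter requirement" rule and the monthly error-rate tracking can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function names are hypothetical, six months is approximated as 183 days, and any sector retention period you pass in must come from your own legal analysis rather than from this example.

```python
AI_ACT_MIN_RETENTION_DAYS = 183  # "at least 6 months", approximated in days (assumption)


def required_retention_days(sector_requirements_days: list[int]) -> int:
    """Return the strictest (longest) retention period that applies."""
    return max([AI_ACT_MIN_RETENTION_DAYS, *sector_requirements_days])


def error_rates(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """False positive and false negative rates from one month's confusion counts."""
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    return {"false_positive_rate": fpr, "false_negative_rate": fnr}


# Illustrative usage: a placeholder 5-year sector requirement wins over 6 months.
retention = required_retention_days([5 * 365])
monthly = error_rates(tp=80, fp=10, tn=90, fn=20)
```

Comparing `monthly` against the previous month's values is one simple way to spot drift before it becomes an incident.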

Input data governance

If you control the data fed into credit models — applicant data, bureau data, alternative data — you must ensure it's relevant and sufficiently representative (Article 26(4)). Bias in input data leads to bias in output, which leads to discriminatory credit decisions, which leads to regulatory and legal exposure. The Input Data Validator helps assess this.
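A first-pass representativeness check can compare each group's share in your input data with its share in a reference population. The sketch below is an assumption-laden illustration: the function names, the group labels, and the 5-percentage-point tolerance are all hypothetical, and the Act does not prescribe any particular metric or threshold.

```python
from collections import Counter


def share_gaps(sample_groups: list[str],
               reference_shares: dict[str, float]) -> dict[str, float]:
    """Difference between each group's share in the sample and in the reference population."""
    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {
        group: (counts.get(group, 0) / total if total else 0.0) - expected
        for group, expected in reference_shares.items()
    }


def under_represented(gaps: dict[str, float], tolerance: float = 0.05) -> list[str]:
    """Groups whose sample share falls short of the reference by more than `tolerance`."""
    return sorted(g for g, gap in gaps.items() if gap < -tolerance)
```

A flagged group is a prompt for investigation, not proof of bias; the appropriate reference population depends on the product and market.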

Incident reporting

Report serious incidents — AI system causing significant harm or systematic failure — to the national market surveillance authority AND the AI system provider. For financial services firms, this sits alongside existing incident reporting to financial regulators (ECB, NCAs, FCA for UK-exposed firms).

For the complete deployer obligation breakdown, see our High-Risk Deployer Guide.

Financial services cross-regulation: EU AI Act + PSD2 + DORA + GDPR

Financial services firms don't comply with the EU AI Act in isolation. You comply with a stack. Here's how they intersect — and why a single AI governance programme can satisfy most of them simultaneously.

| Regulation | AI-relevant obligations | Overlap with EU AI Act |
|---|---|---|
| GDPR | Automated decision-making restrictions (Art. 22), DPIA (Art. 35), data quality/minimisation | Human involvement, transparency, impact assessment, data governance |
| PSD2 | Strong customer authentication, transaction monitoring, fraud prevention | AI in fraud detection may trigger AI Act classification depending on decision authority |
| DORA | ICT risk management, incident reporting, third-party risk management (from Jan 17, 2025) | AI vendors are ICT third-party providers; vendor DD satisfies both DORA and AI Act |
| National regulators | CBI (Ireland), BaFin (Germany), AMF (France), CNMV (Spain), FCA (UK) | May add sector-specific AI requirements on top of EU AI Act |

A robust AI governance programme built to EU AI Act standards will substantially satisfy DORA ICT risk management, GDPR DPIA/Article 22, and PSD2 monitoring requirements — because they share overlapping concerns: risk management, oversight, logging, incident response, and vendor management. Build once, satisfy multiple regulators. For the framework implementation path, see our complete EU AI Act compliance guide.

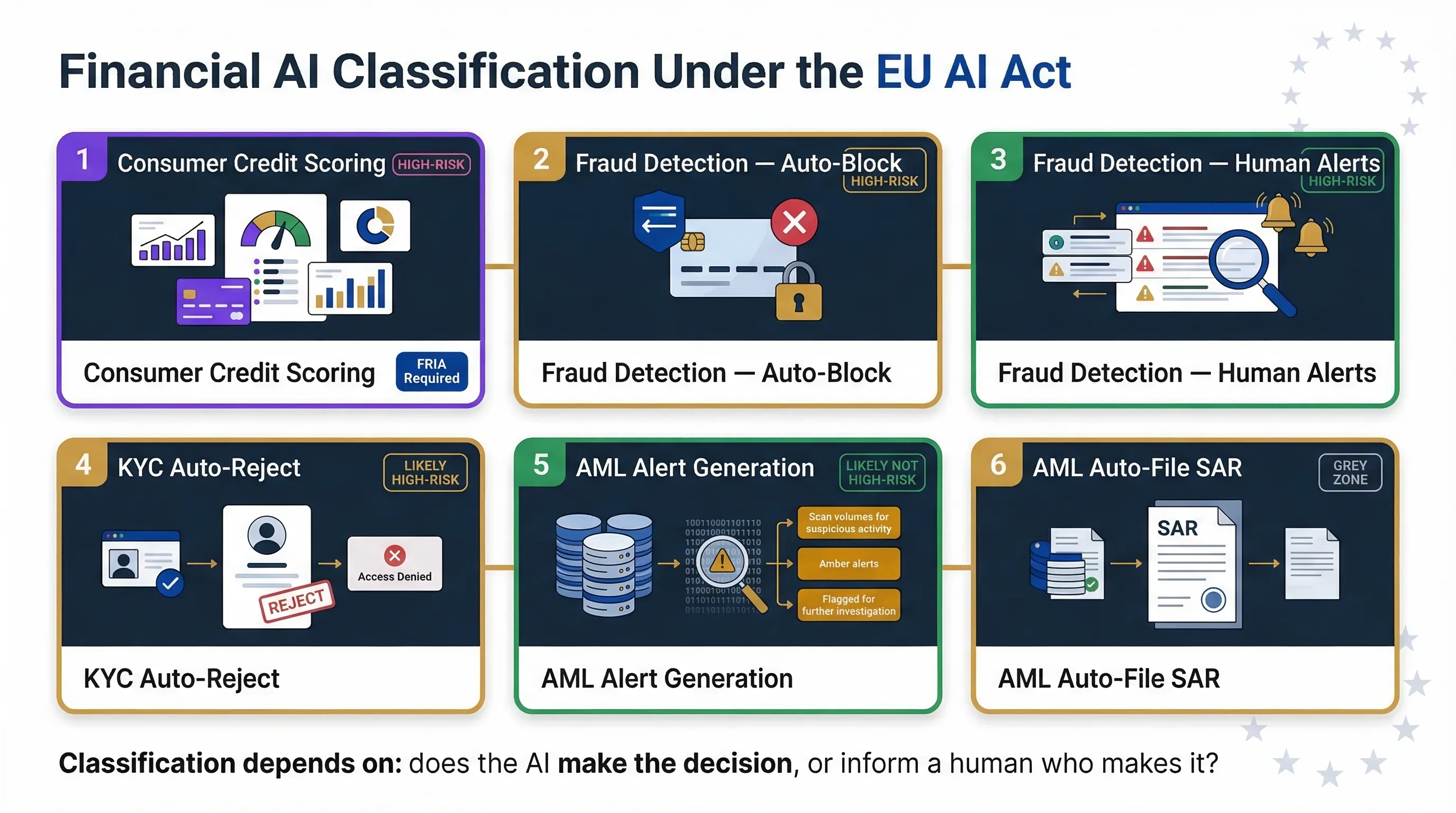

Common scenarios: how the EU AI Act applies to your financial AI

Consumer lending platform using AI credit scoring

Classification: Annex III Area 5(b), explicitly high-risk. Full deployer obligations apply. FRIA mandatory. No ambiguity. Use the Fraud vs Credit Delimiter to document your classification.

Payment processor using AI fraud detection

Classification: depends on decision authority. If the system autonomously blocks transactions, it likely qualifies as high-risk because it denies access to services. If it flags for human review, it likely doesn't. Document the distinction carefully.

Neo-bank using AI for KYC/onboarding

Classification: if the AI auto-rejects applicants (denying account access), likely high-risk. If it flags for human review, lower risk. The functional effect on the applicant is what matters, not the technical architecture.

AML compliance using AI transaction monitoring

Classification: AI generating alerts for human investigation is likely not high-risk. AI automatically filing SARs or freezing accounts is closer to the high-risk boundary. The key question: does the AI make the decision, or does it inform a human who makes the decision?

| Financial AI use case | Likely classification | FRIA required? | Key factor |
|---|---|---|---|
| Consumer credit scoring | High-risk (Annex III 5(b)) | Yes (Art. 27) | Explicitly listed |
| Fraud detection: auto-block | Likely high-risk | Assess case-by-case | Denies access to services |
| Fraud detection: human-reviewed alerts | Likely not high-risk | No | Decision support only |
| KYC/KYB: auto-reject | Likely high-risk | Assess case-by-case | Denies account access |
| AML: alerts for investigation | Likely not high-risk | No | Human makes final decision |
| AML: auto-file SAR/freeze | Grey zone, closer to high-risk | Assess case-by-case | Autonomous action on accounts |
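The table's logic can be expressed as a small triage function for an internal AI inventory. This is a sketch for first-pass sorting, not a legal determination: `Classification`, `triage`, and the use-case labels are hypothetical names, and treating human-reviewed KYC as "likely not high-risk" is an assumption (the text above only calls it lower risk).

```python
from enum import Enum


class Classification(Enum):
    HIGH_RISK = "high-risk (explicitly listed)"
    LIKELY_HIGH_RISK = "likely high-risk"
    GREY_ZONE = "grey zone, closer to high-risk"
    LIKELY_NOT_HIGH_RISK = "likely not high-risk"


def triage(use_case: str, acts_autonomously: bool) -> Classification:
    """First-pass triage mirroring the table; the key factor is decision authority."""
    if use_case == "consumer_credit_scoring":
        return Classification.HIGH_RISK
    if use_case in {"fraud_detection", "kyc_kyb", "aml"}:
        if not acts_autonomously:
            # The system only informs a human, who makes the decision.
            return Classification.LIKELY_NOT_HIGH_RISK
        # Autonomous AML actions sit in the grey zone; auto-block and auto-reject
        # are treated as likely high-risk because they deny access to services.
        if use_case == "aml":
            return Classification.GREY_ZONE
        return Classification.LIKELY_HIGH_RISK
    raise ValueError(f"use case not covered by this sketch: {use_case}")
```

Storing the `acts_autonomously` answer with its justification gives you the documented distinction the scenarios above call for.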

FAQ: EU AI Act for financial services

Is AI credit scoring high-risk under the EU AI Act?

Yes. Annex III Area 5(b) explicitly classifies AI systems evaluating the creditworthiness of natural persons as high-risk. This includes automated credit scoring models, AI-driven loan approval/denial, credit limit assignment, and affordability assessment tools. FRIA is mandatory for deployers of credit scoring AI.

Is AI fraud detection high-risk under the EU AI Act?

Not automatically. The classification depends on whether the fraud detection system autonomously denies access to financial services (blocks transactions, freezes accounts) or supports human decision-making (generates alerts for review). Autonomous blocking may cross into high-risk territory because it functionally denies access to essential services.

Is a FRIA mandatory for credit scoring AI?

Yes. Article 27 requires deployers of credit scoring AI systems affecting natural persons to conduct a Fundamental Rights Impact Assessment before deployment. The FRIA must assess impact on specific affected persons, context of use, and risk of harm to fundamental rights including access to financial services and non-discrimination.

How does DORA interact with the EU AI Act?

DORA (Digital Operational Resilience Act) requires ICT third-party risk management. AI vendors are ICT third-party service providers under DORA. Your AI vendor due diligence should satisfy both DORA and EU AI Act requirements simultaneously. A robust AI governance programme built to EU AI Act standards will substantially satisfy DORA ICT risk management requirements.

Does the ECB opinion exempt simple credit scoring models?

ECB Opinion CON/2026/10 (March 13, 2026) recommended excluding simple linear and logistic regression models from high-risk classification when adequate human supervision exists. This is an advisory opinion, not binding. Current law does not distinguish by model type: all AI credit scoring is high-risk regardless of model complexity.

Does the EU AI Act cover B2B credit assessment?

Annex III Area 5(b) covers creditworthiness of natural persons. Pure B2B credit assessment (scoring a company, not a person) is not explicitly listed. However, if a natural person is personally affected, for example an SME owner who is personally assessed or guaranteed, the boundary may shift toward high-risk classification.

Abhishek G Sharma

Founder & CEO, Move78 International Limited

ISO 42001 LA · ISO 27001 LA · CISA · CISM · CRISC · CEH · CCSK · CAIGO · CAIRO

20+ years in cybersecurity and risk management. Advises fintechs and financial services firms on AI governance and EU AI Act compliance.

Disclaimer

This guide is for educational and informational purposes only and does not constitute legal or financial advice. The EU AI Act (Regulation 2024/1689) is a complex regulation. ECB Opinion CON/2026/10 is advisory. Consult qualified legal counsel for advice specific to your organisation. All references current as of March 2026.