Blog · Reviewed 9 May 2026 · 8 min read

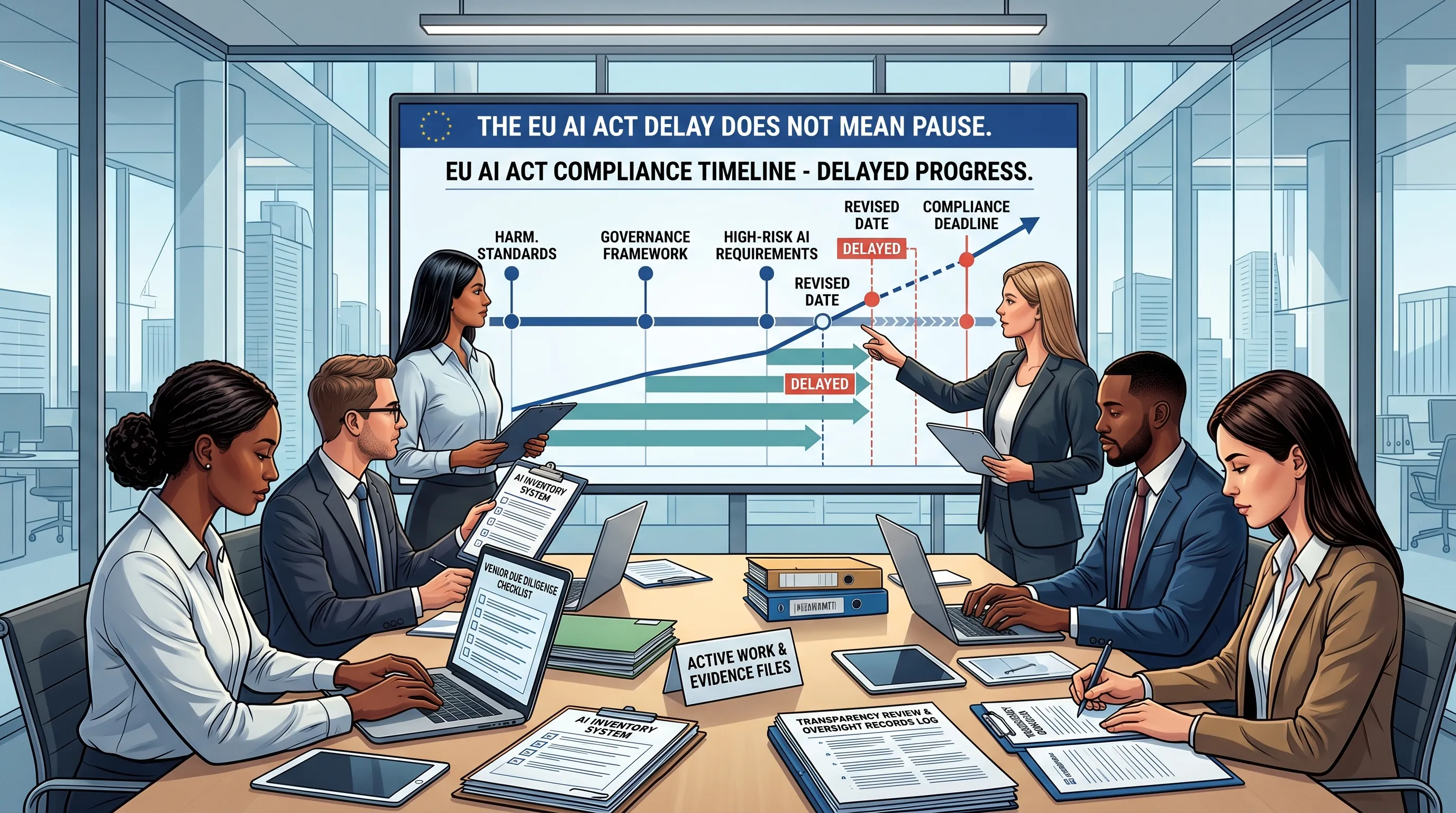

The EU AI Act Delay Does Not Mean Pause

A practical evidence-work article for deployers who are trying to separate current law from provisional Digital Omnibus planning dates.


Source basis: Regulation (EU) 2024/1689, the European Commission 7 May 2026 Digital Omnibus announcement, the Council 7 May 2026 provisional-agreement release, and the European Commission AI Act FAQ. This page is educational and does not provide legal advice or compliance guarantees.

Quick answer: The Omnibus agreement changed the planning conversation, but it did not create a "do nothing until 2027" outcome. Under the current published AI Act baseline, prohibitions, AI literacy, and GPAI obligations already apply, Article 50 transparency still needs review for 2 August 2026, and high-risk AI embedded in products covered by Annex I still points to 2 August 2027 unless changed through the formal Omnibus route.

What moved

The 7 May 2026 political agreement introduces later application dates that take effect only if the text is formally adopted and published: 2 December 2027 for certain stand-alone high-risk AI systems and 2 August 2028 for high-risk AI systems embedded in products. It also pushes the date for national AI regulatory sandboxes to 2 August 2027 and sets a 2 December 2026 planning date for some transparency solutions for artificially generated content.

Planning rule: treat these as provisional-agreement planning dates until endorsement, legal-linguistic revision, formal adoption, and publication are complete.
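One way to make the planning rule concrete is to keep both dates side by side and switch only when every adoption step is complete. The sketch below is illustrative rather than a prescribed method: the `Milestone` class, the `date_to_rely_on` function, and the step names written as strings are assumptions made for this example, and the dates are the ones quoted in this article.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional, Tuple

# The four steps named in the planning rule above.
REQUIRED_STEPS = (
    "endorsement",
    "legal-linguistic revision",
    "formal adoption",
    "publication",
)


@dataclass(frozen=True)
class Milestone:
    topic: str
    baseline: Optional[date]     # date under the current published text, as quoted in this article
    provisional: Optional[date]  # provisional-agreement planning date, if one was announced


def date_to_rely_on(m: Milestone, completed_steps: Iterable[str]) -> Tuple[Optional[date], str]:
    """Return the date to plan against and a label naming the track it comes from."""
    done = set(completed_steps)
    omnibus_final = all(step in done for step in REQUIRED_STEPS)
    if omnibus_final and m.provisional is not None:
        return m.provisional, "Omnibus (adopted and published)"
    return m.baseline, "current law baseline"


embedded = Milestone(
    topic="High-risk AI embedded in products",
    baseline=date(2027, 8, 2),
    provisional=date(2028, 8, 2),
)

# Hypothetical state: only endorsement has happened, so the baseline still governs.
print(date_to_rely_on(embedded, ["endorsement"]))
```

Returning the label together with the date keeps the "which track did we rely on" answer attached to every planning date that gets copied into a project plan.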

What did not disappear

Several obligations and workstreams did not vanish. The current AI Act baseline remains the published legal text until the Omnibus text is formally adopted. Prohibited practices and AI literacy already apply. GPAI governance obligations already apply. Article 50 transparency still needs active review for 2 August 2026.

| Workstream | Why it still matters |
|---|---|
| AI system inventory | You cannot classify, assign ownership, review vendors, or plan evidence without a controlled system list. |
| Role mapping | A team still needs to know whether it is acting as deployer, provider, importer, distributor, or a mix. |
| Article 50 review | Transparency obligations remain a separate review track and should not be assumed to move with all high-risk dates. |
| AI literacy records | Article 4 is already applicable. Training evidence is not a 2027 task. |
| Decision log | A dated log shows whether a decision relied on current law, guidance, or provisional-agreement planning. |

Why deployers still need evidence work in 2026

A delay in one part of the timeline does not remove the need to identify systems, map roles, review vendor claims, understand transparency triggers, document human oversight, retain logs, and prove AI literacy action. Buyers, boards, procurement teams, and auditors may ask for records before a regulator does.

The extra complexity is the reason to keep records. When dates move, the file should explain which date the organisation used and why. Otherwise the organisation buys time but loses the audit trail.
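A dated decision log can be as small as one record per planning decision. The sketch below assumes a hypothetical `DecisionLogEntry` structure whose fields follow what this article asks a log to show: the status relied on, the date used, the evidence available, the approving owner, and a review date. The owner title and the review date in the example are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Iterable, List


class LegalStatus(Enum):
    CURRENT_LAW = "current law"
    GUIDANCE = "Commission guidance"
    OMNIBUS_PROVISIONAL = "Omnibus provisional agreement"


@dataclass(frozen=True)
class DecisionLogEntry:
    decided_on: date
    decision: str
    status_relied_on: LegalStatus
    date_used: date              # the application date the decision was planned against
    evidence: tuple[str, ...]    # sources available when the decision was made
    approved_by: str
    review_by: date              # when the assumption should be revisited


def due_for_review(log: Iterable[DecisionLogEntry], today: date) -> List[DecisionLogEntry]:
    """Return entries whose review date has been reached."""
    return [entry for entry in log if entry.review_by <= today]


entry = DecisionLogEntry(
    decided_on=date(2026, 5, 9),
    decision="Keep product-embedded high-risk planning on the current baseline",
    status_relied_on=LegalStatus.CURRENT_LAW,
    date_used=date(2027, 8, 2),
    evidence=(
        "Regulation (EU) 2024/1689",
        "Council 7 May 2026 provisional-agreement release",
    ),
    approved_by="Head of Compliance",  # hypothetical owner
    review_by=date(2026, 9, 1),        # hypothetical review date
)

print(len(due_for_review([entry], today=date(2026, 8, 1))))
```

Making the entry immutable (`frozen=True`) is a design choice for this sketch: a changed assumption becomes a new dated entry instead of an overwrite, which preserves the audit trail.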

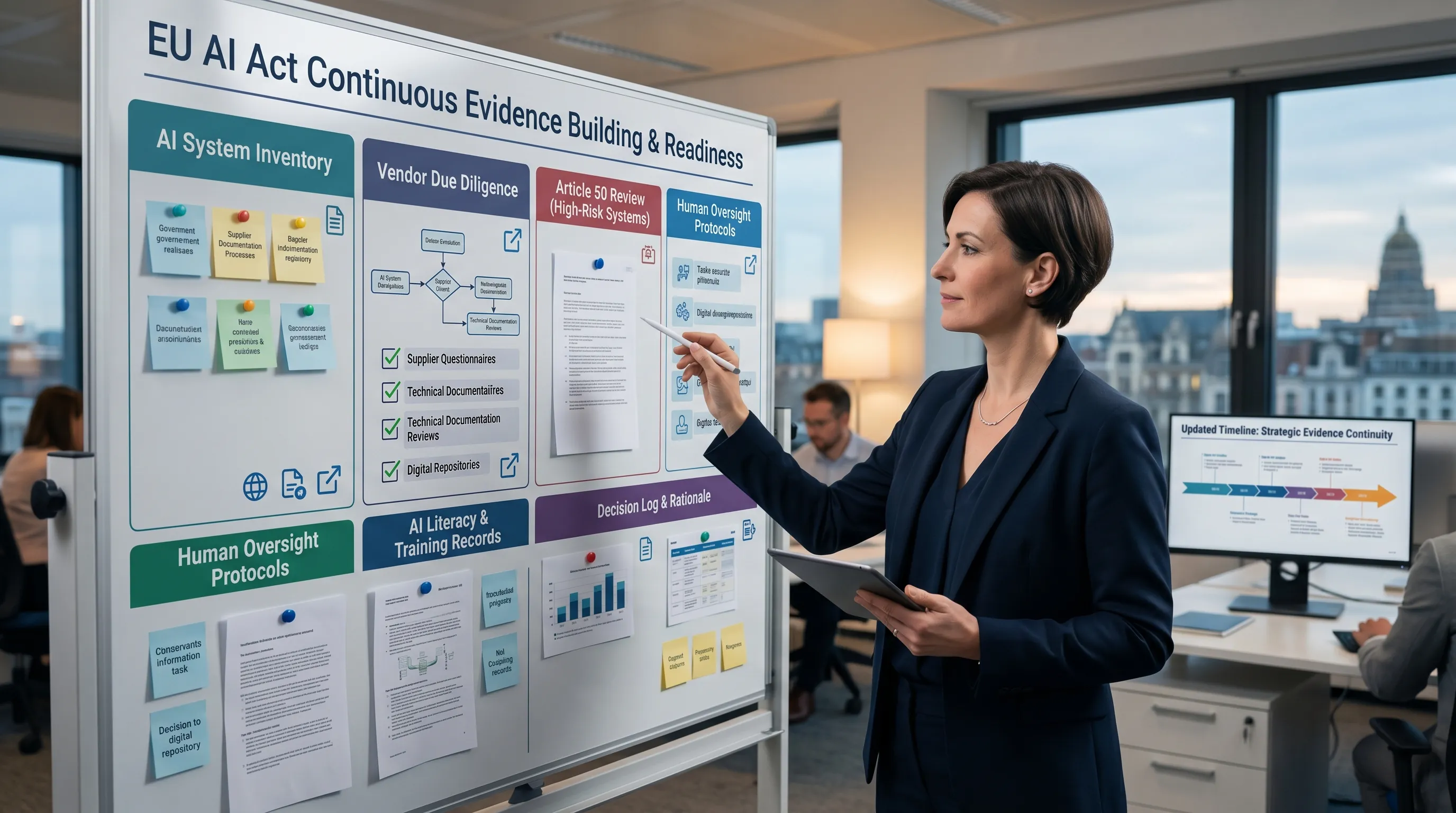

Evidence work that still makes sense now

- AI system inventory: list systems, owners, vendors, purposes, data types, users, affected processes, and business-criticality.

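- Role and legal-status mapping: record the role the organisation holds for each system (deployer, provider, importer, distributor, or a mix) and whether each decision relied on current law, draft guidance, or the Digital Omnibus provisional agreement.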

- Vendor due diligence: request model capability, limitation, logging, oversight, content-marking, and safety-control evidence.

- Article 50 trigger review: check chatbot disclosure, deep fake, public-interest text, biometric categorisation, and emotion-recognition scenarios.

- Human oversight and use instructions: document what users can do, when they must escalate, and when AI outputs cannot be used alone.

- AI literacy records: keep training, attendance, curriculum, role mapping, and refresher evidence.

- Decision log: preserve the regulatory status used for each planning decision.
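The inventory item in the list above can be sketched as a simple record with a completeness check. This is a minimal illustration, not a required schema: the `AISystemRecord` class, its field names, and the example values are assumptions derived from the inventory bullet (systems, owners, vendors, purposes, data types, users, affected processes, business-criticality).

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class AISystemRecord:
    name: str
    owner: str = ""
    vendor: str = ""
    purpose: str = ""
    data_types: List[str] = field(default_factory=list)
    users: List[str] = field(default_factory=list)
    affected_processes: List[str] = field(default_factory=list)
    business_criticality: str = ""

    def missing_fields(self) -> List[str]:
        """List the fields that are still empty, in declaration order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical entry that has only been partly filled in.
record = AISystemRecord(
    name="Support chatbot",
    owner="Customer Operations",
    vendor="Example Vendor Ltd",
)

print(record.missing_fields())
```

Running the check across the whole inventory gives a quick list of systems whose records are too thin to support classification or vendor review.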

The real risk if teams pause

The real risk is loss of internal control. A paused team usually creates five avoidable gaps:

- no clean AI system inventory

- no vendor evidence trail

- no Article 50 trigger review

- no human oversight documentation

- no dated explanation for why one legal-status assumption was used over another

Better operating model: maintain a three-column tracker that covers the current law baseline, Omnibus provisional-agreement watch items, and evidence useful under either track.
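A three-column tracker does not need special tooling. The sketch below assumes hypothetical column keys and a hypothetical `build_tracker` helper, sorts a few items taken from this article into the three columns, and renders them as a table that can be pasted into a wiki page.

```python
from itertools import zip_longest
from typing import Dict, Iterable, List, Tuple

# Column keys are illustrative names for the three tracks described above.
COLUMNS = ("current_law_baseline", "omnibus_watch", "useful_either_way")


def build_tracker(items: Iterable[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Group (title, column) pairs by column, rejecting unknown columns."""
    tracker: Dict[str, List[str]] = {column: [] for column in COLUMNS}
    for title, column in items:
        if column not in tracker:
            raise ValueError(f"unknown column: {column}")
        tracker[column].append(title)
    return tracker


def to_markdown(tracker: Dict[str, List[str]]) -> str:
    """Render the tracker as a markdown table, padding shorter columns with blanks."""
    header = "| " + " | ".join(COLUMNS) + " |"
    divider = "|" + "---|" * len(COLUMNS)
    rows = [
        "| " + " | ".join(cells) + " |"
        for cells in zip_longest(*(tracker[c] for c in COLUMNS), fillvalue="")
    ]
    return "\n".join([header, divider, *rows])


tracker = build_tracker([
    ("AI literacy records (Article 4)", "current_law_baseline"),
    ("Article 50 review for 2 August 2026", "current_law_baseline"),
    ("Stand-alone high-risk date: 2 December 2027", "omnibus_watch"),
    ("Product-embedded high-risk date: 2 August 2028", "omnibus_watch"),
    ("AI system inventory", "useful_either_way"),
    ("Decision log", "useful_either_way"),
])

print(to_markdown(tracker))
```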

What to do next

Start with the timeline page. Then run the evidence checklist. Then check Article 50 triggers, vendor due diligence, and AI literacy status. The point is not to overbuild. The point is to avoid wasting the extra time.

FAQ

Direct answers on what the Digital Omnibus timeline shift changes, what still needs review, and which evidence remains useful.

No, the delay does not mean deployers can pause. It changed parts of the timeline discussion, but it did not remove the need for inventory, vendor due diligence, Article 50 review, oversight records, and AI literacy evidence. Evidence work should be sequenced, not paused.

Under the current AI Act baseline, prohibited practices, AI literacy, and GPAI obligations already apply, Article 50 still needs review for 2 August 2026, and high-risk AI embedded in Annex I regulated products still points to 2 August 2027 unless changed through the formal Omnibus route.

A decision log matters because the regulatory picture now includes current law, Commission guidance, and Omnibus provisional-agreement planning. A dated log shows which status your team relied on, which evidence was available, which owner approved the decision, and when the assumption should be reviewed.

The most valuable evidence now is the material that stays useful under either timeline track: AI system inventory, role mapping, vendor evidence, human-oversight steps, Article 50 trigger review, AI literacy records, and a status note that explains current-law versus Omnibus planning assumptions.

No, the delay is not a reason to stop preparing. It matters, but it should change sequencing rather than halt the work. The practical risk is losing inventory discipline, vendor evidence, Article 50 review records, oversight documentation, and the audit trail for legal-status assumptions.