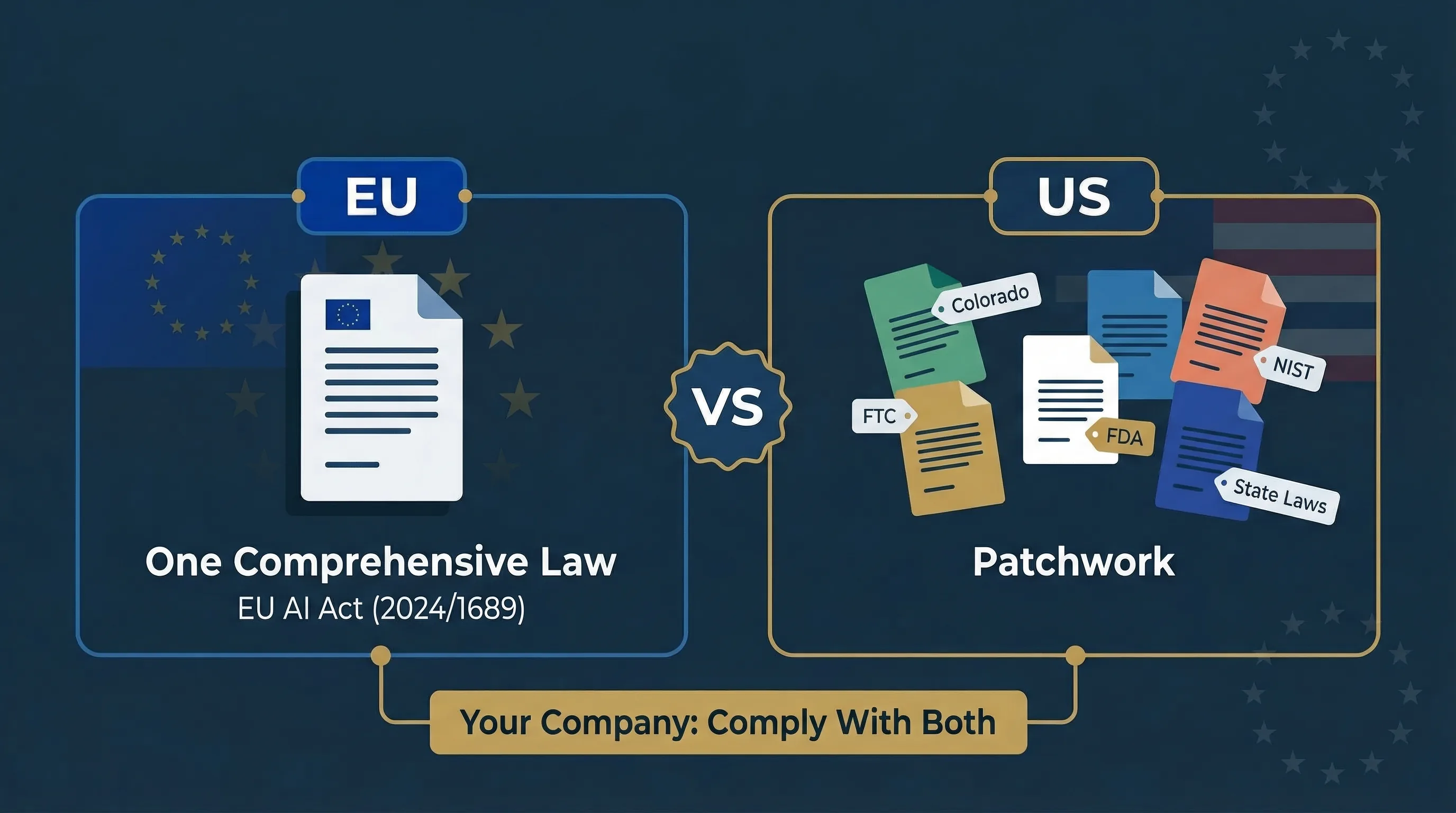

Comprehensive Law vs Regulatory Patchwork: The EU and US Approaches Diverge

The EU Approach: One Comprehensive Regulation

The EU AI Act (Regulation (EU) 2024/1689) is a single, horizontal regulation governing AI systems across all sectors. Risk-based classification. Binding obligations. Enforceable penalties of up to €35 million or 7% of worldwide annual turnover. Applies directly in all 27 member states without national transposition. The philosophy: regulate the technology itself based on risk, regardless of sector.
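To make the penalty ceiling concrete, here is a minimal arithmetic sketch. It assumes the top tier is read as the greater of the fixed amount and the turnover-based amount, which is how the Act's penalty article is generally understood for undertakings. The function name, parameter names, and example turnover figures are hypothetical, and actual fines depend on the infringement tier and the authority's discretion.

```python
def max_penalty_eur(worldwide_turnover_eur: int,
                    fixed_cap_eur: int = 35_000_000,
                    turnover_percent: int = 7) -> int:
    """Illustrative upper bound of the top penalty tier, in whole euros.

    Assumption: the ceiling is the greater of the fixed cap and the
    given percentage of worldwide annual turnover. Not legal advice.
    """
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = worldwide_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_based)


# Hypothetical companies: EUR 1 billion and EUR 100 million turnover.
large = max_penalty_eur(1_000_000_000)   # turnover-based amount dominates
small = max_penalty_eur(100_000_000)     # fixed cap dominates
```

Under this reading, the fixed cap is the binding figure for any company with turnover below €500 million, and the percentage takes over above that.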

The US Approach: Sector-Specific, State-Level, Enforcement-Driven

No single federal AI law exists as of March 2026. Instead, the US relies on federal guidance (NIST AI RMF, Executive Orders, agency-specific guidance from FTC, FDA, EEOC, CFPB), sector-specific regulation (FDA for AI medical devices, EEOC for AI in employment), state-level legislation (Colorado AI Act, NYC Local Law 144, Illinois AI Video Interview Act), and enforcement actions (FTC using Section 5 unfair/deceptive practices authority). The philosophy: regulate AI harms within existing frameworks; legislate at the state level where federal action stalls.

Key implication for global companies:

If you serve both EU and US markets, you don't choose one regime. You comply with both. The EU AI Act's extraterritorial reach under Article 2 means US companies with EU customers are already in scope.

EU AI Act vs US AI Regulatory Environment: Structured Comparison

15 dimensions compared. When someone asks "how does EU AI regulation compare to the US?" — this is the reference table.

| Dimension | EU AI Act | US AI Regulation (composite) |
|---|---|---|
| Legal instrument | Single comprehensive regulation (2024/1689) | No single federal law. Patchwork: state laws + federal guidance + agency enforcement |
| Scope | All AI systems regardless of sector | Varies. Colorado: high-risk in listed sectors. FTC: consumer harm. FDA: medical devices. |
| Risk classification | 4 tiers: unacceptable, high-risk, limited, minimal | Colorado: “high-risk” + “consequential decisions.” No universal federal classification. |
| Binding obligations | Yes: mandatory requirements with penalties | Colorado: yes. NIST AI RMF: voluntary. FTC: enforceable via existing authority. |
| Penalties | Up to €35M or 7% of worldwide turnover | Colorado: $20K per violation (AG enforcement). FTC: varies. No single federal penalty structure. |
| Impact assessment | FRIA (Article 27) for certain deployers | Colorado: required for high-risk. NIST: recommended, not mandatory. |
| Human oversight | Mandatory for high-risk (Article 14) | Colorado: “reasonable care” including oversight options. Not universally mandated. |
| Transparency | Article 50: mandatory AI content labelling | Colorado: disclosure for consequential decisions. NYC LL144: bias audit disclosure. No federal labelling. |
| Documentation | Technical documentation (Annex IV) mandatory | Colorado: documentation and records. No federal documentation standard. |
| Conformity assessment | Mandatory for high-risk AI | Not required under any US instrument |
| CE marking | Required for high-risk on EU market | No US equivalent |
| Extraterritorial scope | Yes (Article 2): applies to non-EU companies | Colorado: entities doing business in Colorado. No general US extraterritorial AI statute. |
| AI literacy | Mandatory (Article 4, since Feb 2025) | Not mandated federally or by any state |
| Enforcement body | National market surveillance authorities + EU AI Office | FTC, state AGs, FDA, EEOC, CFPB, SEC |
| Effective dates | Phased: Feb 2025 → Aug 2025 → Aug 2026 → Aug 2027 | Colorado: June 30, 2026 (delayed from Feb 1). Others: varies. |

Colorado AI Act (SB 24-205): The Closest US Equivalent to the EU AI Act

Colorado SB 24-205 is the most comprehensive US state AI law. Originally set for February 1, 2026, enforcement was postponed to June 30, 2026 when Governor Polis signed SB 25B-004 on August 28, 2025. A repeal-and-replace bill is being negotiated as of March 2026, so the final form of this law may change. This is the fastest-moving regulatory area in this entire guide series.

What It Covers

"High-risk AI systems" used to make or substantially influence "consequential decisions" about consumers in employment, education, financial and lending services, essential government services, healthcare, housing, insurance, and legal services. Applies to both "developers" (roughly equivalent to EU AI Act providers) and "deployers" (same term).

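The scope test described above can be sketched as a first-pass screen. This is only an illustration of the two-part test (a listed decision area, plus making or substantially influencing the decision). The area labels and the function name are hypothetical, and the statute's actual definitions and exemptions need to be checked directly.

```python
# Decision areas listed in this guide for the Colorado AI Act.
# The string labels are hypothetical identifiers, not statutory terms.
CONSEQUENTIAL_AREAS = frozenset({
    "employment",
    "education",
    "financial_and_lending_services",
    "essential_government_services",
    "healthcare",
    "housing",
    "insurance",
    "legal_services",
})


def is_potentially_high_risk(decision_area: str,
                             influences_decision: bool) -> bool:
    """Return True when a system makes or substantially influences a
    decision in one of the listed areas. A screening aid only."""
    return influences_decision and decision_area in CONSEQUENTIAL_AREAS


# Example: a tenant-screening model vs. an ad-ranking model.
tenant_screening = is_potentially_high_risk("housing", True)
ad_ranking = is_potentially_high_risk("marketing", True)
```

A positive result here means the system deserves a full legal review, not that it is definitively in scope.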
Key Obligations

Developers must provide deployers with documentation, known limitations, intended use, and risk mitigation guidance. Deployers must implement a risk management policy, complete impact assessments, notify consumers when AI is used in consequential decisions, and provide opt-out or human appeal mechanisms. Both must exercise "reasonable care" to protect consumers from algorithmic discrimination.

| Dimension | Colorado AI Act | EU AI Act |
|---|---|---|
| Scope | Consequential decisions in listed sectors | All AI systems by risk level, all sectors |
| Conformity assessment | Not required | Mandatory for high-risk |
| CE marking | No equivalent | Required for high-risk |
| Maximum penalty | $20K per violation | €35M or 7% of turnover |
| AI literacy | Not required | Mandatory since Feb 2025 |
| AI content labelling | Not required | Mandatory (Article 50) |
| Enforcement date | June 30, 2026 (delayed) | Phased through Aug 2027 |

Data verification warning: US state AI legislation is evolving rapidly. The Colorado AI Act may be amended or replaced before its June 30, 2026 enforcement date. A repeal-and-replace bill is under negotiation. Verify current status before relying on any specific provision for compliance planning.

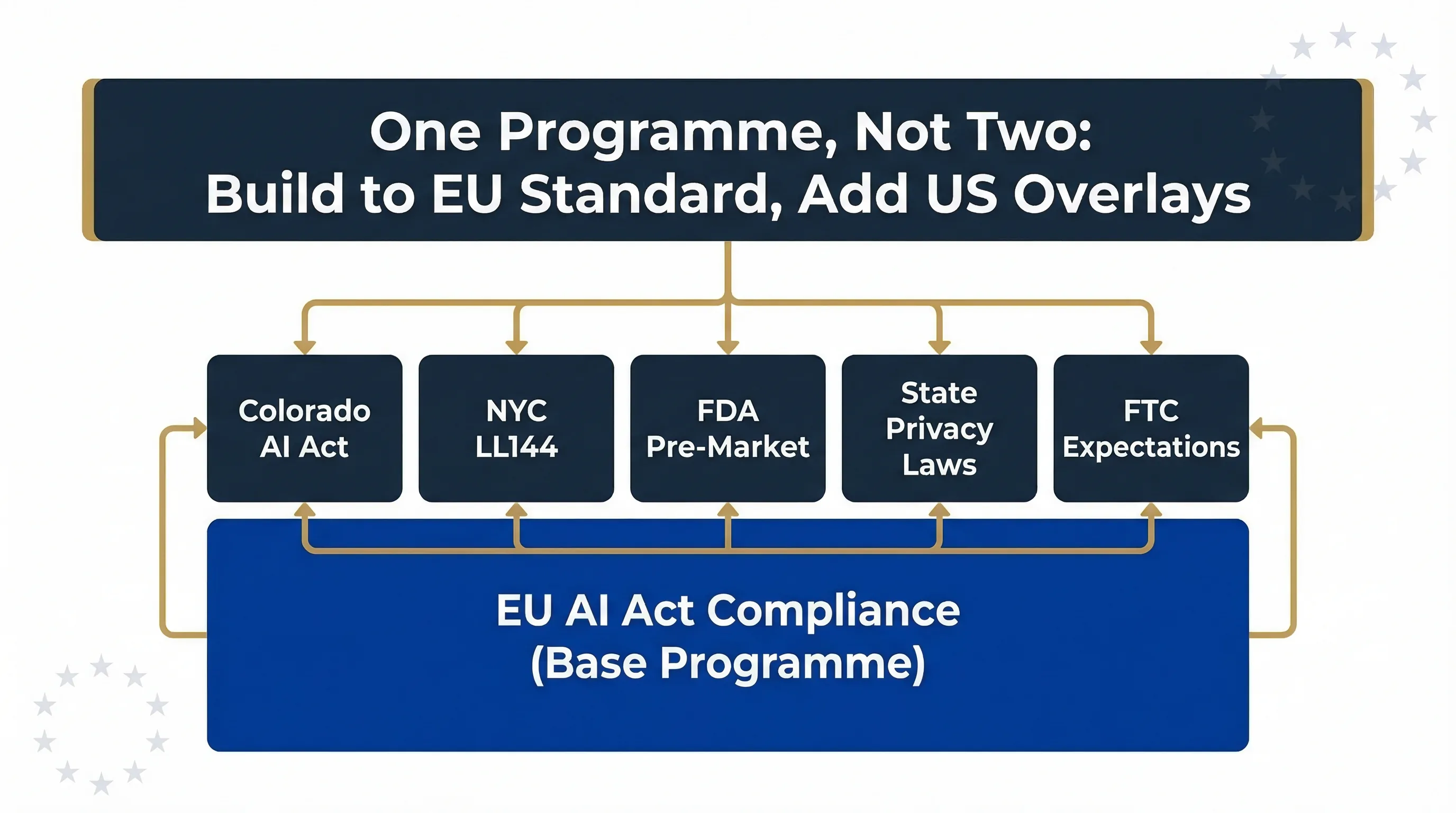

Compliance strategy: build to the EU AI Act standard, then add US-specific overlays. One programme, not two.

Operating in Both Markets: A Practical Compliance Strategy

Don't build two separate governance programmes. Build one programme to the higher standard — the EU AI Act — and add US-specific overlays where needed. This reduces cost, eliminates duplication, and ensures consistency.

What EU AI Act Compliance Already Covers for the US

A programme built to EU AI Act standards substantially satisfies Colorado AI Act requirements (which are lighter across every dimension), FTC "reasonable care" expectations (which look for documented risk management and transparency), NIST AI RMF alignment (which your governance programme inherently provides), and sector-specific US requirements (which focus on specific harms already addressed by EU AI Act risk management).

What US-Specific Requirements Add on Top

Colorado requires specific consumer notification language. NYC Local Law 144 requires annual bias audits for automated employment tools plus public posting of an audit summary. FDA requires pre-market review for AI medical devices (separate from EU MDR). EEOC guidance on AI in employment may require a disparate impact testing methodology that differs from EU expectations. State privacy laws (CCPA/CPRA, Virginia's VCDPA) add opt-out and data deletion rights that apply on top of GDPR where both regimes apply.
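The "one programme plus overlays" idea can be sketched as a simple data structure. This is an illustrative model, not a complete control catalogue: the baseline items are EU AI Act topics named in this guide, the overlay entries paraphrase the paragraph above, and every class, constant, and function name is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Overlay:
    """A US-specific requirement layered on top of the EU baseline."""
    jurisdiction: str
    requirement: str


# Baseline controls, taken from EU AI Act topics mentioned in this guide.
EU_BASELINE = (
    "risk management",
    "technical documentation",
    "human oversight",
    "transparency",
    "impact assessment",
)

# Overlays paraphrased from the paragraph above (not exhaustive).
US_OVERLAYS = (
    Overlay("Colorado", "consumer notification language"),
    Overlay("NYC", "annual bias audit and public summary"),
    Overlay("FDA", "pre-market review for AI medical devices"),
    Overlay("EEOC", "disparate impact testing methodology"),
    Overlay("State privacy", "opt-out and data deletion rights"),
)


def build_programme(jurisdictions: set[str]) -> list[str]:
    """Start from the EU baseline, then append only the overlays
    that match the jurisdictions the company is exposed to."""
    programme = list(EU_BASELINE)
    programme += [
        f"{o.jurisdiction}: {o.requirement}"
        for o in US_OVERLAYS
        if o.jurisdiction in jurisdictions
    ]
    return programme


# Example: an HR tech vendor with Colorado and New York City exposure.
hr_vendor_programme = build_programme({"Colorado", "NYC"})
```

Keeping the baseline fixed and varying only the overlay list is what keeps this a single programme: adding a new state means adding entries, not a second set of controls.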


Related guides: For framework integration, see ISO 42001 / NIST AI RMF / EU AI Act Mapping. For non-EU companies specifically, see EU AI Act for Non-EU Companies.

FAQ: EU AI Act and US AI Regulation

Does the EU AI Act apply to US companies?

Yes, if the AI system is placed on the EU market or its output is used in the EU. Article 2 provides extraterritorial reach. A US SaaS company serving EU customers, a US fintech with EU users, or a US HR tech company whose clients hire EU candidates are all potentially in scope.

Does EU AI Act compliance cover US requirements?

Substantially but not completely. EU AI Act compliance exceeds Colorado AI Act and FTC expectations. But US-specific requirements like NYC LL144 bias audits, FDA pre-market review, and state privacy law opt-outs add obligations the EU AI Act does not cover. Build to the EU standard, then add US overlays.

Does the US have a federal AI law?

No, as of March 2026. The US relies on agency enforcement (FTC, FDA, EEOC), federal guidance (NIST AI RMF, Executive Orders), and state legislation (Colorado AI Act, NYC LL144). Multiple federal bills have been proposed but none has passed.

What is the closest US equivalent to the EU AI Act?

Colorado SB 24-205, the most comprehensive US state AI law. Originally set for February 1, 2026, enforcement was postponed to June 30, 2026 by SB 25B-004. It covers high-risk AI in employment, finance, insurance, healthcare, education, housing, legal services, and government. It requires impact assessments, consumer notification, and reasonable care against algorithmic discrimination.

Which regime should we prioritise?

If you operate in both markets, EU AI Act first. It is the strictest and most comprehensive. A programme built to EU AI Act standards substantially exceeds US requirements. Add US-specific requirements as overlays rather than building a parallel programme.

When will the US pass a federal AI law?

Unpredictable. Multiple bills have been proposed. The regulatory trend is toward more governance, not less. But US political dynamics make timing uncertain. Do not wait for federal legislation. State requirements and EU AI Act extraterritorial reach already create binding obligations.

Operating Across EU and US Markets?

Move78 International provides broader cross-framework support for teams coordinating EU AI Act obligations with US governance, risk, and implementation work.

Visit Move78 International

Disclaimer & Limitations

This guide is for educational and informational purposes only. It does not constitute legal or regulatory advice. US state AI legislation is evolving rapidly and may change between publication and reading. Verify all US regulatory references against official sources before relying on them. Move78 International Limited is not a law firm. EU regulatory references are based on eu-ai-rules-engine v2.4. The Digital Omnibus is a proposal, not enacted law.