GDPR Protects Personal Data. The AI Act Governs AI System Safety. They're Not the Same Problem.

GDPR (Regulation 2016/679) regulates the processing of personal data. Its concern: protecting individuals' data rights — access, rectification, erasure, portability, consent, lawful basis, minimisation. The EU AI Act (Regulation 2024/1689) regulates AI systems. Its concern: ensuring AI systems are safe, transparent, non-discriminatory, and subject to human oversight.

Many AI systems process personal data, so both regulations apply simultaneously. But they address different aspects of the same system. Think of it this way: GDPR asks "are you handling this person's data lawfully?" The AI Act asks "is this AI system safe and is someone watching it?"

A system can be fully GDPR-compliant — lawful basis established, DPIA completed, data minimised, consent obtained — and still violate the AI Act because nobody designed human oversight, nobody ran a conformity assessment, nobody set up incident reporting to AI authorities. The reverse is also true: a system can meet all AI Act requirements but process personal data unlawfully under GDPR.

The key takeaway for DPOs:

GDPR compliance does not equal AI Act compliance. If you've just inherited AI governance responsibilities, you need to build a separate compliance workstream. But you can leverage your existing GDPR infrastructure as a foundation — roughly 30–40% of what you need is already in place.

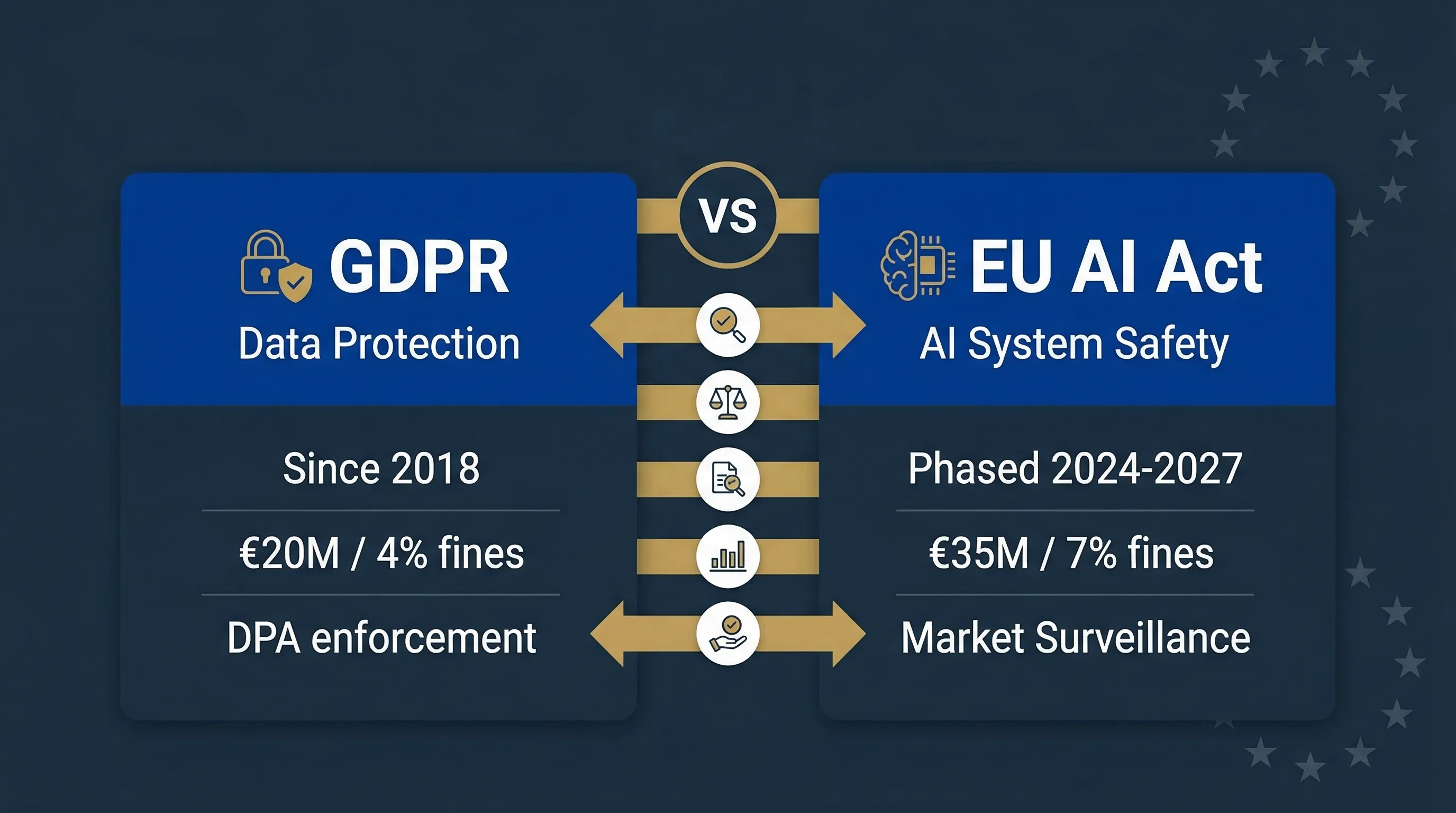

EU AI Act vs GDPR: Head-to-Head Comparison

This table covers 14 dimensions. It's designed as a reference document — bookmark it, share it with your legal team, use it in board presentations. Can your compliance team explain each row?

| Dimension | GDPR (Regulation 2016/679) | EU AI Act (Regulation 2024/1689) |
|---|---|---|
| What it regulates | Processing of personal data | AI systems (regardless of whether they process personal data) |
| In force since | May 24, 2016 (applicable since May 25, 2018) | August 1, 2024 (phased application through 2027) |
| Scope trigger | Processing personal data in the context of an EU establishment, or of individuals in the EU when offering them goods or services or monitoring their behaviour | Placing AI on EU market, putting into service, or output used in EU |
| Risk approach | Risk-based (DPIA for high-risk processing) | Risk-based (4 tiers: unacceptable, high, limited, minimal) |
| Applies to | Data controllers and processors | AI providers, deployers, importers, distributors |
| Key obligations | Lawful basis, consent, transparency, data rights, DPIA, DPO appointment, breach notification | Risk management, data governance, technical documentation, human oversight, conformity assessment, CE marking, logging, incident reporting |
| Impact assessment | DPIA (Article 35): data protection risks | FRIA (Article 27): fundamental rights impacts |
| Automated decisions | Article 22: right not to be subject to solely automated decisions with legal or similarly significant effects | Articles 14, 26: human oversight design + deployer monitoring obligations |
| Transparency | Articles 13–14: inform data subjects about processing | Article 13 (to deployers), Article 50 (AI content labelling), Article 26 (to affected persons) |
| Documentation | Records of processing activities (Article 30) | Technical documentation (Article 11, Annex IV), quality management system (Article 17) |
| Supervisory authority | National data protection authorities | National market surveillance authorities + EU AI Office (for GPAI) |
| Maximum fines | €20M or 4% of worldwide annual turnover, whichever is higher | €35M or 7% of worldwide annual turnover, whichever is higher (prohibited practices) |
| Incident reporting | 72 hours to DPA for personal data breaches (Article 33) | Serious AI incidents to the market surveillance authority immediately, and no later than 15 days after becoming aware (Article 73) |
| Certification | Optional (Articles 42–43) | Mandatory conformity assessment for high-risk AI (Article 43) |
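
The maximum fines row reduces to simple arithmetic, which the following Python sketch illustrates. It assumes the top fine tiers only (GDPR Article 83(5) and AI Act Article 99(3)) and uses integer euro amounts; the function names are hypothetical, and the `sme` flag reflects AI Act Article 99(6), under which the lower of the two amounts applies to SMEs and start-ups. Actual fines are set case by case and are usually well below these ceilings.

```python
def fine_ceiling(fixed_cap_eur: int, turnover_percent: int,
                 worldwide_turnover_eur: int, sme: bool = False) -> int:
    """Upper bound of an administrative fine for a single infringement."""
    percent_cap = worldwide_turnover_eur * turnover_percent // 100
    # Default rule in both regulations: whichever amount is higher.
    # For SMEs under the AI Act, the lower amount applies instead.
    if sme:
        return min(fixed_cap_eur, percent_cap)
    return max(fixed_cap_eur, percent_cap)


def gdpr_max_fine(worldwide_turnover_eur: int) -> int:
    # Top GDPR tier: EUR 20M or 4% of worldwide annual turnover.
    return fine_ceiling(20_000_000, 4, worldwide_turnover_eur)


def ai_act_prohibited_max_fine(worldwide_turnover_eur: int, sme: bool = False) -> int:
    # Top AI Act tier (prohibited practices): EUR 35M or 7%.
    return fine_ceiling(35_000_000, 7, worldwide_turnover_eur, sme)


if __name__ == "__main__":
    turnover = 1_000_000_000  # hypothetical company with EUR 1bn turnover
    print("GDPR ceiling:  ", gdpr_max_fine(turnover))
    print("AI Act ceiling:", ai_act_prohibited_max_fine(turnover))
```

For a company below roughly €500M turnover, the fixed amounts (€20M and €35M) are the binding ceilings; above that, the percentages take over.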

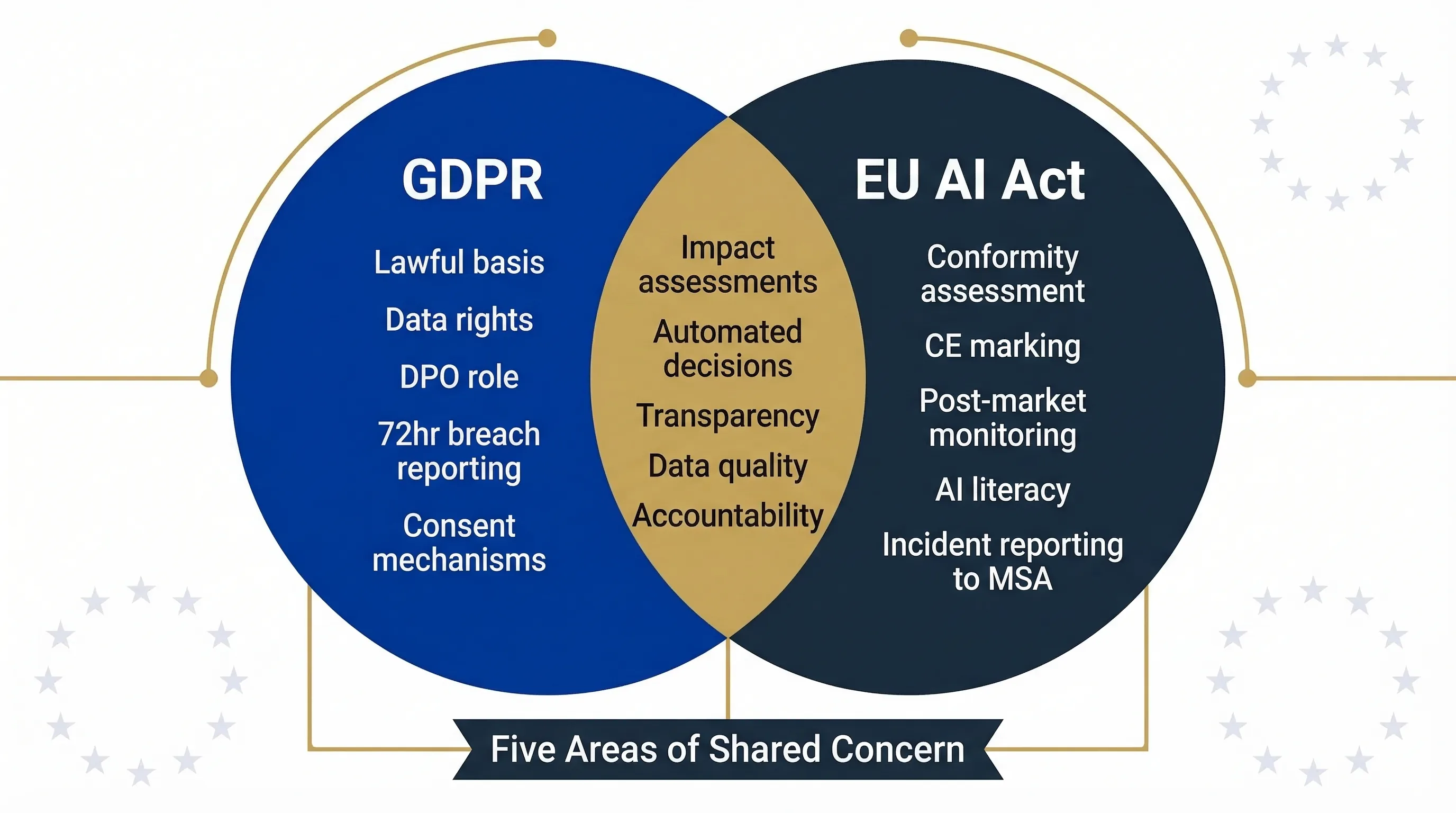

Where GDPR and the AI Act Overlap: Five Areas of Shared Concern

Overlap 1: Impact Assessments (DPIA vs FRIA)

GDPR Article 35 requires a DPIA for high-risk data processing. AI Act Article 27 requires a FRIA for certain deployers of high-risk AI. They're not the same assessment — DPIA focuses on data protection risks, FRIA on broader fundamental rights impacts including discrimination, access to services, and safety. But they share methodology: identify risks, assess likelihood and severity, define mitigations, document and review. The practical approach: run them together as a combined assessment with separate sections for each. The EDPB/EDPS Joint Opinion 1/2026 supports this integration.
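
One way to picture a combined assessment with separate sections is a single risk register in which every entry is tagged as DPIA or FRIA. The Python sketch below is illustrative only: the class names, the 1 to 5 scales, and the likelihood × severity score are assumptions made for this example, not a format prescribed by either regulation.

```python
from dataclasses import dataclass, field
from enum import Enum


class Section(Enum):
    DPIA = "GDPR Article 35: data protection risks"
    FRIA = "AI Act Article 27: fundamental rights impacts"


@dataclass
class Risk:
    description: str
    section: Section
    likelihood: int  # hypothetical scale: 1 (rare) to 5 (almost certain)
    severity: int    # hypothetical scale: 1 (minor) to 5 (critical)
    mitigations: list[str] = field(default_factory=list)

    @property
    def score(self) -> int:
        # Simple likelihood x severity scoring, assumed for illustration.
        return self.likelihood * self.severity


@dataclass
class CombinedAssessment:
    system_name: str
    risks: list[Risk] = field(default_factory=list)

    def risks_for(self, section: Section) -> list[Risk]:
        """Risks belonging to one section, highest score first."""
        selected = [r for r in self.risks if r.section is section]
        return sorted(selected, key=lambda r: r.score, reverse=True)

    def unmitigated(self) -> list[Risk]:
        """Risks that still have no documented mitigation."""
        return [r for r in self.risks if not r.mitigations]


assessment = CombinedAssessment("cv-screening", [
    Risk("Excessive retention of applicant CVs", Section.DPIA, 3, 3,
         ["Delete after 6 months"]),
    Risk("Indirect discrimination against older applicants", Section.FRIA, 3, 5),
])
for risk in assessment.unmitigated():
    print(f"[{risk.section.name}] {risk.description} (score {risk.score})")
```

The shared steps (identify, score, mitigate, review) run once over the whole register, while `risks_for` lets each section be reported separately.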

Overlap 2: Automated Decision-Making (Article 22 vs Articles 14/26)

GDPR Article 22 gives individuals the right not to be subject to solely automated decisions with legal or similarly significant effects. Where such decisions are permitted, the individual must be able to obtain meaningful human intervention. AI Act Article 14 requires human oversight designed into high-risk systems. Article 26 requires deployers to implement that oversight. If your AI makes decisions about people (credit, hiring, insurance), both apply. GDPR demands human involvement in the decision. The AI Act demands structured oversight of the system itself.

Overlap 3: Transparency

GDPR Articles 13–14 require informing data subjects about automated processing and profiling. AI Act Article 13 requires providers to make high-risk systems transparent to deployers. Article 50 requires labelling AI-generated content. Result: you must inform people about both the data processing and the AI system. Two separate transparency obligations, often fulfilled through a single expanded notice.

Overlap 4: Data Quality

GDPR Article 5(1)(d) requires data accuracy. AI Act Article 10 requires training, validation, and testing data to be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete. The AI Act goes further: it mandates examination of data for biases and documentation of data governance measures. GDPR doesn't require bias testing. If your AI processes personal data, the work done for Article 10 supports Article 5(1)(d) compliance, but GDPR accuracy still has to be assessed for each data subject's data; Article 5(1)(d) compliance on its own does not satisfy Article 10.

Overlap 5: Documentation and Accountability

GDPR Article 5(2) requires demonstrable accountability. Article 30 requires records of processing. AI Act Articles 11–12 require technical documentation per Annex IV and automatic logging. Both demand demonstrable compliance — "we're doing the right thing" isn't enough; you must prove it with evidence.

Five areas where GDPR and the AI Act overlap: impact assessments, automated decisions, transparency, data quality, and accountability documentation.

What the AI Act Requires That GDPR Doesn't

This is the section that matters most if you're a DPO who just inherited AI governance. These are obligations you've never dealt with under GDPR. They require new processes, new documentation, and potentially new skills on your team.

| AI Act-Only Obligation | Article | Why GDPR Doesn't Cover This |
|---|---|---|
| Conformity assessment & CE marking | Articles 43, 48 | GDPR has no product certification requirement |
| Continuous risk management system | Article 9 | GDPR requires risk assessment for DPIAs; AI Act requires lifecycle-long system |
| Human oversight system design | Article 14 | GDPR requires human involvement; AI Act requires the system itself to have oversight features |
| Accuracy, robustness & adversarial resilience | Article 15 | GDPR Article 32 requires "appropriate technical and organisational measures"; AI Act specifies resilience against data poisoning, adversarial attacks |
| Post-market monitoring | Article 72 | No GDPR equivalent at all |
| Serious incident reporting to AI authorities | Article 73 | GDPR reports to DPAs; AI Act reports to different authorities with different thresholds |
| AI literacy | Article 4 | No GDPR equivalent. Applicable since February 2, 2025 |
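
Because one event can be both a personal data breach and a serious AI incident, the two reporting clocks have to be tracked side by side. The sketch below is a simplified illustration, assuming the 72-hour window of GDPR Article 33 and the outer limits in Article 73 of Regulation 2024/1689 (15 days by default, 10 days in the event of a death, 2 days for a widespread infringement or serious disruption of critical infrastructure). The function and key names are hypothetical. The AI Act deadlines are ceilings: the report is due immediately once a causal link is established, and the duty sits primarily with the provider.

```python
from datetime import datetime, timedelta, timezone

GDPR_BREACH_WINDOW = timedelta(hours=72)  # GDPR Article 33(1)

# Outer limits under AI Act Article 73; keys are hypothetical labels.
AI_ACT_WINDOWS = {
    "default": timedelta(days=15),
    "death": timedelta(days=10),
    "widespread_or_critical_infrastructure": timedelta(days=2),
}


def reporting_deadlines(aware_at: datetime,
                        personal_data_breach: bool,
                        serious_ai_incident: bool,
                        ai_incident_kind: str = "default") -> dict[str, datetime]:
    """Latest notification time per authority for a single event."""
    deadlines: dict[str, datetime] = {}
    if personal_data_breach:
        deadlines["data protection authority"] = aware_at + GDPR_BREACH_WINDOW
    if serious_ai_incident:
        window = AI_ACT_WINDOWS[ai_incident_kind]
        deadlines["market surveillance authority"] = aware_at + window
    return deadlines


aware = datetime(2025, 3, 1, 9, 0, tzinfo=timezone.utc)
for authority, due in reporting_deadlines(aware, True, True).items():
    print(f"{authority}: report by {due.isoformat()}")
```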

How to Build AI Act Compliance on Top of Your Existing GDPR Programme

You're not starting from zero. A mature GDPR programme provides roughly 30–40% of the infrastructure you need. Here's what you can reuse and what you must build new.

What You Can Reuse From GDPR

Your data processing register → extend to become an AI system inventory.

Your DPIA process → extend to include FRIA elements.

Your data subject rights procedures → extend to include AI-specific transparency.

Your breach notification workflow → add parallel serious incident reporting to AI authorities.

Your vendor management process → extend to include AI-specific vendor due diligence.

Your accountability documentation → extend to include AI technical documentation.
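
As an illustration of the first item, a record of processing can be extended into an AI system inventory entry by adding AI-specific fields. This is a minimal Python sketch; every field name here is an assumption chosen for the example, and the base record is a simplified subset of what Article 30 requires.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProcessingRecord:
    """Simplified subset of a GDPR Article 30 record."""
    name: str
    purpose: str
    data_categories: list[str]
    processor: Optional[str] = None


@dataclass
class AISystemEntry(ProcessingRecord):
    """The same record, extended with hypothetical AI Act fields."""
    role: str = "deployer"                 # provider, deployer, importer, distributor
    risk_tier: Optional[str] = None        # None means not yet classified
    human_oversight_owner: Optional[str] = None
    technical_docs_location: Optional[str] = None


def open_items(entry: AISystemEntry) -> list[str]:
    """List the AI Act gaps still open for one inventory entry."""
    items = []
    if entry.risk_tier is None:
        items.append("classify risk tier")
    if entry.risk_tier == "high" and entry.human_oversight_owner is None:
        items.append("assign human oversight owner")
    return items


entry = AISystemEntry(
    name="cv-screening",
    purpose="Shortlisting job applicants",
    data_categories=["CVs", "assessment scores"],
)
print(open_items(entry))
```

Keeping the AI fields on the same record means one register can serve both regimes, and entries that are not AI systems simply stay plain `ProcessingRecord` objects.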

What You Must Build New

AI system classification (risk levels per Articles 5–6, Annex I/III)

Human oversight arrangements per system (Article 14)

Conformity assessment process (if you're a provider, Article 43)

Post-market monitoring system (Article 72)

AI literacy training programme (Article 4, applicable since February 2, 2025)

AI-specific incident reporting to market surveillance authority (Article 73)
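
The classification step at the top of this list can be sketched as a simple decision order over the four risk tiers. The Python example below is a deliberate simplification: the boolean inputs are hypothetical flags that legal analysis would have to set, the Article 6(3) derogation is ignored, and a high-risk system can also carry Article 50 transparency duties.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "prohibited practice (Article 5)"
    HIGH = "high-risk (Article 6, Annex I or III)"
    LIMITED = "transparency obligations (Article 50)"
    MINIMAL = "minimal risk"


def classify(prohibited_practice: bool,
             annex_i_safety_component: bool,
             annex_iii_use_case: bool,
             article_50_transparency_case: bool) -> RiskTier:
    """Return the strictest tier that applies, checked from the top down."""
    if prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if annex_i_safety_component or annex_iii_use_case:
        return RiskTier.HIGH
    if article_50_transparency_case:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# Hypothetical example: a recruitment screening tool (an Annex III use case).
tier = classify(False, False, True, False)
print(tier.name, "-", tier.value)
```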

Related guides: For the full deployer framework, see the High-Risk AI Deployer Guide. For framework mapping, see ISO 42001 / NIST AI RMF / EU AI Act Mapping.

FAQ: EU AI Act and GDPR

Does GDPR compliance cover AI Act compliance?

No. GDPR covers data protection. The AI Act covers AI system safety, oversight, and product certification. Conformity assessment, CE marking, human oversight design, post-market monitoring, AI literacy, and incident reporting to AI authorities have no GDPR equivalent. You need both.

Can the DPIA and the FRIA be combined?

Yes, practically. They assess different risks (data protection versus fundamental rights) but share methodology. Conduct a combined assessment with separate sections for each. The EDPB/EDPS Joint Opinion 1/2026 supports integration.

Is the DPO responsible for AI Act compliance?

Possibly. 55 percent of privacy professionals have acquired AI governance duties according to IAPP data. The AI Act does not require a specific officer role like the GDPR DPO, but many organisations assign AI governance to the existing DPO or compliance function. Clarify accountability with your management.

Are GDPR and the AI Act enforced by the same authority?

No. GDPR is enforced by national data protection authorities. The AI Act is enforced by national market surveillance authorities, which are different bodies, plus the EU AI Office for GPAI models. You may need to report the same incident to two different authorities.

Which regulation carries the higher fines?

The AI Act. Maximum fine for prohibited practices is 35 million euros or 7 percent of worldwide annual turnover. GDPR maximum is 20 million euros or 4 percent. They stack: a single AI system could trigger fines under both regulations simultaneously.

Does GDPR Article 22 compliance satisfy the AI Act's human oversight requirements?

Partially. GDPR Article 22 requires meaningful human involvement in automated decisions. The AI Act goes further: Article 14 requires the system to be designed with specific oversight features, and Article 26 requires deployers to implement structured oversight arrangements. Article 22 compliance is necessary but not sufficient.

Disclaimer & Limitations

This guide is for educational and informational purposes only. It does not constitute legal or regulatory advice. EU AI Compass tools are educational aids, not certified compliance instruments. Consult qualified legal counsel before making compliance decisions. Move78 International Limited is not a law firm. All regulatory references are accurate as of the publication date based on eu-ai-rules-engine v2.4. The Digital Omnibus is a proposal, not enacted law.