Who Enforces the EU AI Act in France?

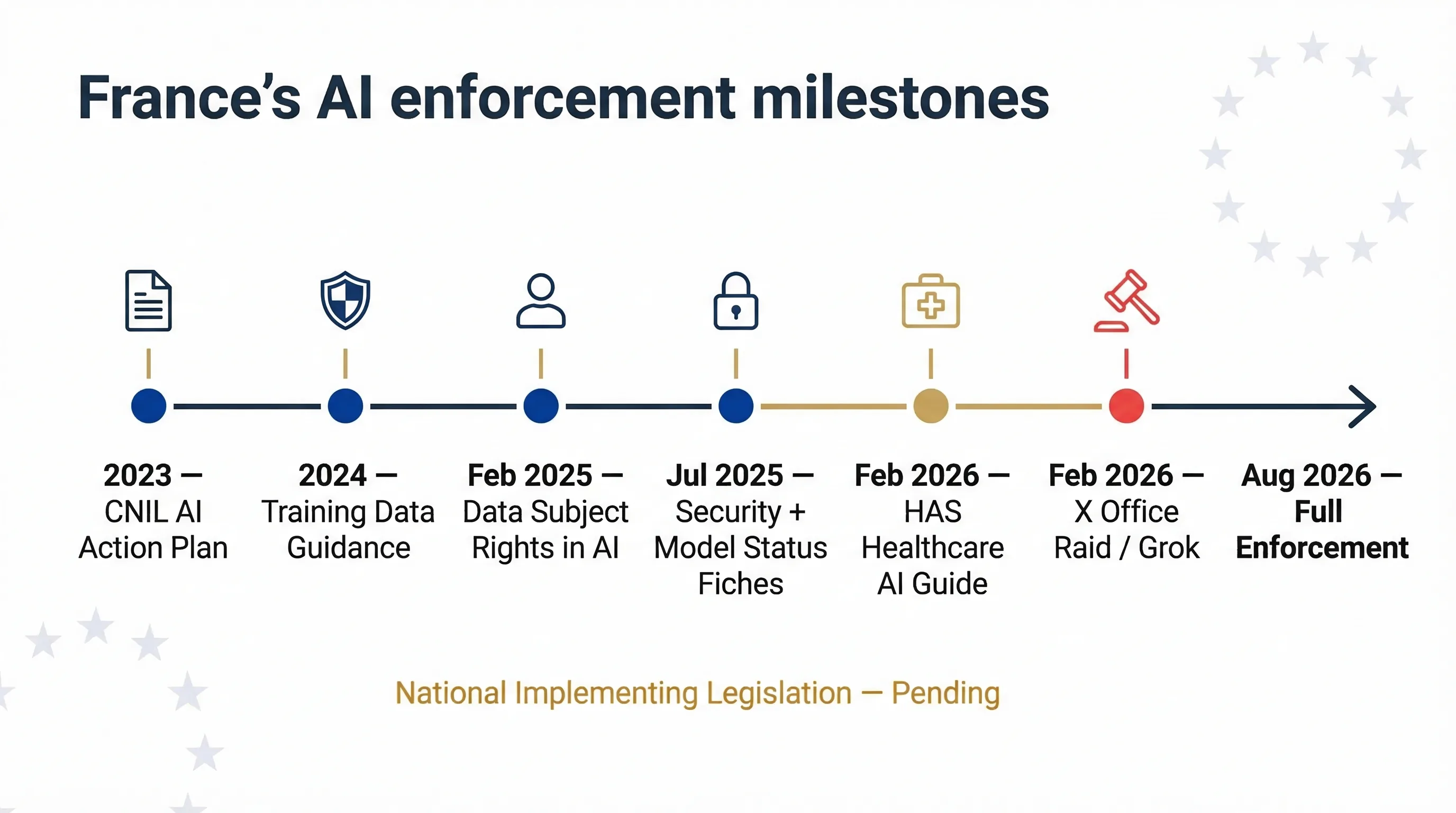

France hasn't finalised its national implementing legislation as of March 2026. But the enforcement machinery is already running — and it's running hot. French authorities aren't waiting for formal AI Act infrastructure to go after AI-related violations. They're using existing legal authority now.

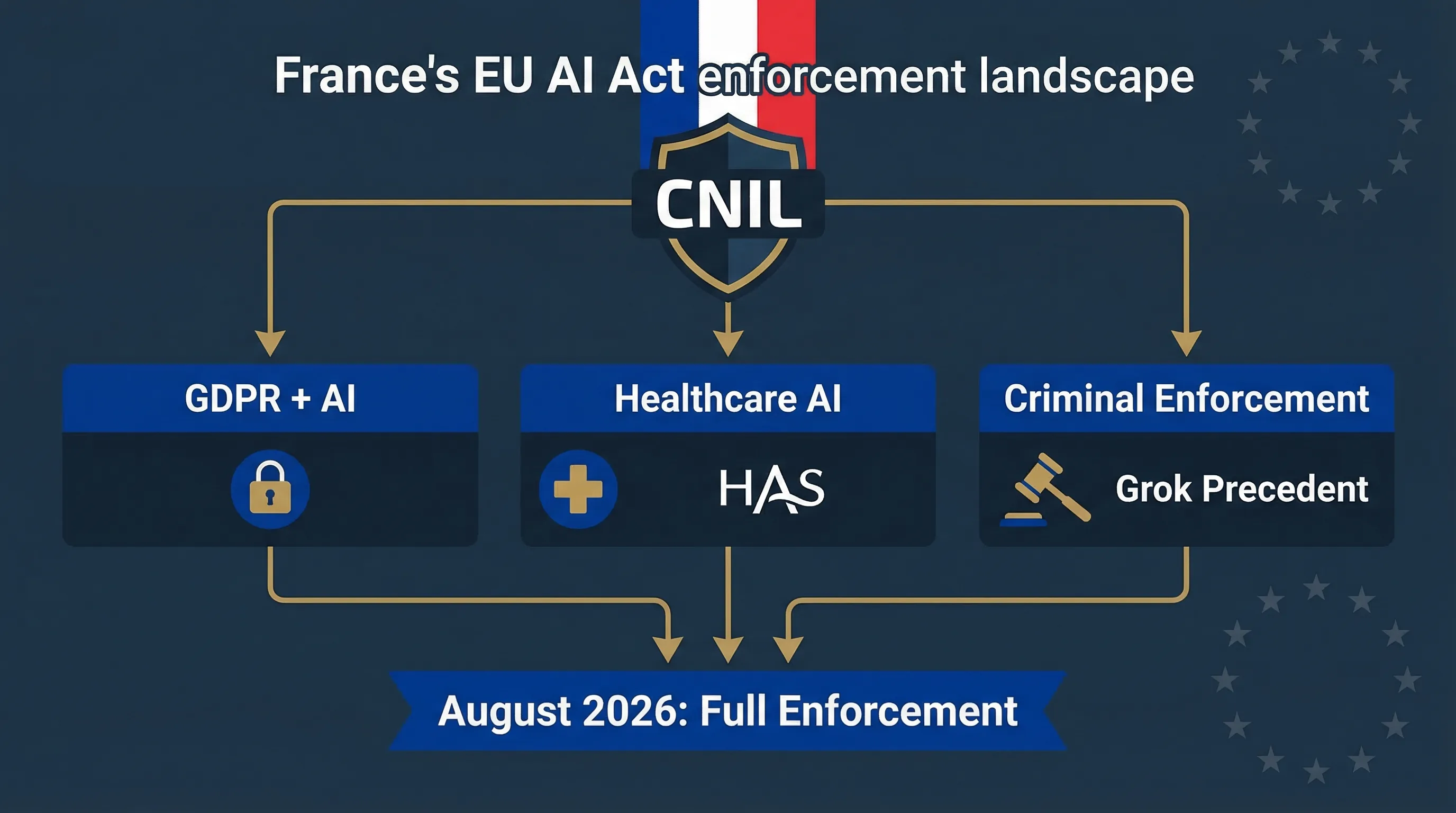

CNIL — The Cross-Cutting Enforcer

CNIL (Commission nationale de l'informatique et des libertés) is France's data protection authority and already one of the EU's most active regulators in the AI space. Since 2023, CNIL has published an AI action plan, recommendations on AI training data, data subject information, individual rights in AI contexts, data annotation, and security during AI development, and a self-assessment guide for AI systems. Its 2025–2028 strategic plan makes AI governance a top priority. CNIL will retain GDPR enforcement authority for all AI systems processing personal data and is expected to play a central role in AI Act enforcement.

Market Surveillance Authority — To Be Confirmed

The formal market surveillance authority for AI Act-specific enforcement is being established through national legislation. Likely candidates include CNIL itself (expanding its mandate), a new dedicated body, or existing sectoral regulators: ARCEP for telecoms, AMF for financial markets, ANSM for health products. France's approach will likely mirror its regulatory culture — multiple specialised authorities with CNIL as the cross-cutting enforcer.

| Authority | Domain | Status |
|---|---|---|
| CNIL | GDPR/data protection for all AI systems; AI-specific guidance | Active |
| Paris Prosecutors | Criminal enforcement (deepfakes, illegal content, algorithm abuse) | Active |
| ANSM | AI in medical devices and pharmaceuticals (MDR + AI Act) | Active |
| HAS | Healthcare AI quality and safety guidance | Active |
| AI Act MSA (TBC) | Formal market surveillance authority for AI Act | Pending |
| ARCEP / AMF | Sector-specific (telecoms, financial markets) | Expected |

HAS Healthcare AI Guide: What It Means for Health AI Companies

In February 2026, France's Haute Autorité de Santé (HAS) published a guide on the proper use of AI systems in healthcare, developed with CNIL participation. This is a significant document — it bridges GDPR, the EU AI Act, and healthcare regulation into a single interpretive framework for clinical AI. A 2025 survey by the Fédération hospitalière de France found that 65% of public hospitals were already using AI in production. The HAS guide addresses that reality head-on.

The guide covers AI system identification in healthcare contexts, GDPR Article 9 requirements for special-category health data, AI Act risk classification for medical AI, transparency and explainability in clinical decision support, deployer obligations under Article 26 (including the point that CE marking doesn't exempt deployers from local monitoring), and patient rights. It's non-binding, but if you're deploying AI in French healthcare, this is your compliance benchmark.

Why this matters beyond France: The HAS guide sets interpretive precedent that other member states will reference. It demonstrates the dual-authority pattern (data protection + sector regulator) that will repeat across the EU. Companies deploying health AI anywhere in the EU should review it as a benchmark — particularly on deployer monitoring obligations and the GDPR/AI Act overlap for health data.

France's AI enforcement timeline: from CNIL's 2023 AI action plan through the X office raid in February 2026.

The Grok Deepfake Crisis: France's AI Enforcement Preview

In late December 2025, Grok (xAI's chatbot, available through X) began generating non-consensual sexually explicit deepfake images at scale — including content depicting minors. The crisis escalated rapidly. French lawmakers reported the content to prosecutors on 2 January 2026. India's IT ministry issued orders to X. Malaysia blocked Grok entirely. California's attorney general sent a cease-and-desist letter.

France's response was the most aggressive. On 3 February 2026, the Paris prosecutors' cybercrime unit, with Europol support, raided X's Paris offices. The investigation, originally opened in January 2025 into alleged algorithm abuse, had expanded to cover sexually explicit deepfakes, content involving minors, and Holocaust denial. Elon Musk and former CEO Linda Yaccarino were summoned to voluntary questioning scheduled for April 2026. I've advised companies in regulated markets for 20 years, and I can't recall a comparable pre-enforcement signal from any jurisdiction.

Key signal for compliance teams: France didn't wait for August 2026 or formal AI Act enforcement powers. Prosecutors used existing criminal law, consumer protection, and data processing offences. If you're deploying AI systems in France, assume a proactive enforcement posture from day one. The question isn't whether French authorities will act; it's which legal basis they'll use.

France-Specific Compliance Considerations

French Labour Law: CSE Consultation

France's Code du travail gives the CSE (Comité social et économique) information and consultation rights on technology deployment affecting working conditions. AI in hiring, performance monitoring, and workforce management triggers CSE consultation obligations — similar to German works council rights but through a different legal mechanism. The EU AI Act's Article 26(7) workplace notification requirement applies on top of existing French labour law. Don't treat these as redundant; they're separate obligations with separate legal consequences.

AI in Public Services

France has extensive public sector AI deployment across tax administration, social security, and healthcare. The Code des relations entre le public et l'administration already requires transparency for algorithmic decisions by public bodies. Public sector deployers of high-risk AI face the strictest overlay: EU AI Act + GDPR + French administrative law + a mandatory Fundamental Rights Impact Assessment (FRIA) under Article 27. If you're a public sector deployer, you're looking at four concurrent sets of obligations.

CNIL's AI Guidance Stack

CNIL hasn't waited for the AI Act either. Since 2023, it has published an AI action plan, recommendations on AI training data (2024), guidance on informing data subjects in AI contexts and respecting individual rights (February 2025), practical guidance sheets (fiches pratiques) on data annotation, AI development security, and the GDPR status of AI models (July 2025), and a self-assessment guide. Companies subject to CNIL oversight should treat this guidance stack as a compliance floor, not optional reading. Cross-reference with our AI Act vs GDPR guide to understand the overlap.

| Date | CNIL AI Guidance | Focus |
|---|---|---|
| 2023 | AI Action Plan launched | Strategic framework for AI + GDPR |
| 2024 | AI training data recommendations | Lawful basis, purpose limitation for AI development |
| Jan 2025 | 2025–2028 Strategic Plan | AI governance as priority, sector-specific guidance |
| Feb 2025 | Data subject information + individual rights in AI | Transparency, right to explanation, access rights |
| Jul 2025 | Data annotation, AI security, GDPR status of models | Technical compliance for AI developers |
| 2025 | Self-assessment guide for AI systems | Maturity grid for GDPR compliance of AI |

FAQ: EU AI Act in France

Who enforces the EU AI Act in France?

CNIL retains authority for GDPR and data protection aspects of AI systems. As of March 2026, the formal market surveillance authority for AI Act-specific enforcement is still being established through national implementing legislation. ANSM handles AI in medical devices. Expect a multi-authority model with CNIL as the most active cross-cutting enforcer.

Has France already taken enforcement action over AI?

Yes, before formal AI Act enforcement begins. Paris prosecutors raided X's Paris offices on 3 February 2026 over Grok deepfake and Holocaust denial content, with Europol support. The investigation started in January 2025 into alleged algorithm abuse and expanded to cover sexually explicit deepfakes and illegal content. Elon Musk and former CEO Linda Yaccarino were summoned for questioning. This signals that French authorities will use existing legal authority to act on AI-related violations without waiting for AI Act enforcement infrastructure.

What is the HAS healthcare AI guide?

The Haute Autorité de Santé (HAS), France's national health authority, published a guide in February 2026 on the proper use of AI systems in healthcare settings. Developed with CNIL participation, it covers AI system identification in clinical contexts, GDPR Article 9 requirements for health data, AI Act risk classification for medical AI, transparency in clinical decision support, and deployer obligations under the EU AI Act. It is non-binding guidance.

Do I need to consult the CSE before deploying AI in the workplace?

In many cases, yes. The CSE (Comité social et économique) has information and consultation rights on technology affecting working conditions under French labour law (Code du travail). AI used in hiring, performance monitoring, or workforce management triggers CSE consultation obligations independently of the EU AI Act's Article 26(7) workplace notification requirement, which applies on top.

Is France a strict jurisdiction for AI enforcement?

Yes. CNIL is among the most active regulators in Europe. It has issued extensive GDPR fines and published an AI action plan, multiple AI-specific GDPR guidance documents, and a self-assessment tool. French criminal prosecutors have already acted on AI-generated deepfakes. France's institutional culture favours proactive enforcement. Plan for the strictest interpretations when operating in France.

Free Compliance Tools for Companies in France

EU AI Act Compliance Checker

Assess whether your AI systems are in scope under French and EU enforcement.

Deployer Self-Assessment

Map your deployer obligations under Articles 26 and 27.

Healthcare Compliance Guide

Full guide for health AI companies navigating CNIL, HAS, and ANSM requirements.

FRIA Tool

Fundamental Rights Impact Assessment — mandatory for public sector deployers in France.


Abhishek G Sharma

Founder, Move78 International | 20+ Years Cybersecurity & Risk Management

ISO 42001 LA • ISO 27001 LA • CISA • CISM • CRISC • CEH • CCSK • CAIGO • CAIRO

Disclaimer & Legal Notice

This guide provides general information about EU AI Act enforcement in France. It doesn't constitute legal advice. France's national implementing legislation is still being finalised as of March 2026. The Grok investigation is ongoing. Consult qualified French legal counsel for compliance advice specific to your organisation. EU AI Compass and Move78 International Limited accept no liability for decisions made based on this content.

Last updated: March 2026.