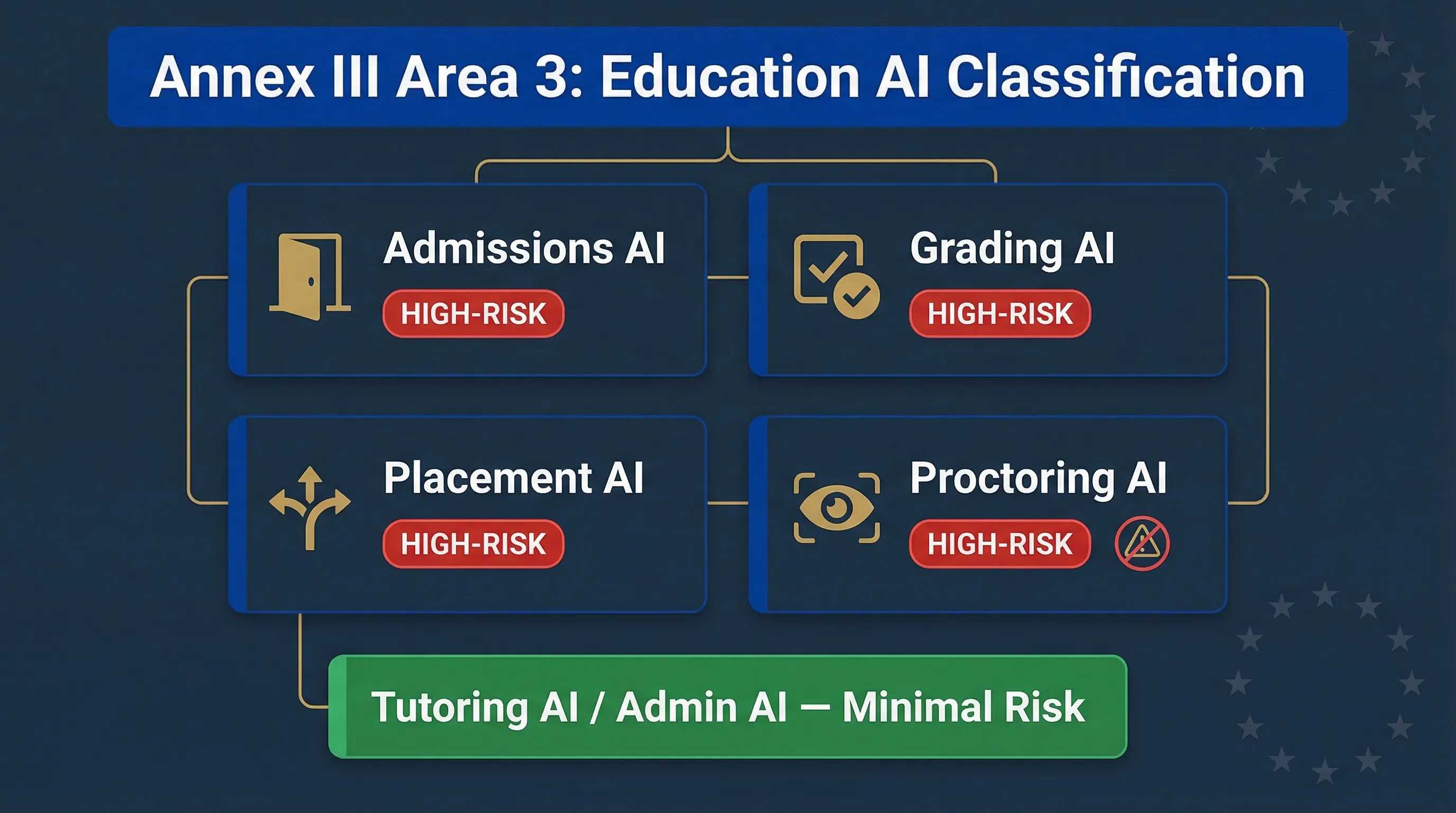

Annex III Area 3: Which Education AI Is High-Risk?

The EU AI Act doesn't treat education AI as a single category. Annex III Area 3 covers four distinct use cases, each with different implications. If you're an EdTech company or a university deploying AI assessment tools, the first question isn't "is AI in education regulated?" — it's "which of our specific systems trigger which classification?"

Category 1: AI Determining Access to Education

This category covers AI for admissions screening, applicant ranking, and acceptance or rejection recommendations. If AI influences who gets in and who doesn't, it's high-risk. This includes automated application scoring, AI that ranks applicants by predicted academic success, and algorithmic admissions filters. High-risk obligations for Annex III systems, including all four categories described here, apply from August 2, 2026.

Category 2: AI Evaluating Learning Outcomes

Automated grading, essay scoring, and assessment evaluation. The trigger is "evaluating learning outcomes" — if the AI output contributes to a student's grade or certification, it's likely high-risk. AI-powered exam marking, automated essay grading systems, and AI that assigns grades or pass/fail determinations all fall here.

Category 3: AI Assessing Appropriate Level of Education

AI that determines student placement, course recommendations based on assessed ability, or vocational training assignments. Adaptive learning platforms that determine content difficulty and AI that recommends academic tracks based on performance data both fall under this category.

Category 4: AI Monitoring Prohibited Behaviour During Exams

AI proctoring systems that monitor students during tests. This is the most contested category. Webcam-based proctoring AI using eye tracking, face detection, or behavioural analysis combines biometric processing with behavioural monitoring, creating GDPR plus AI Act dual compliance requirements. Browser lockdown tools with AI monitoring and AI detecting phone use, impersonation, or unauthorised materials all fall here.

| Education AI Use Case | Annex III Classification | Additional Concerns |
|---|---|---|
| Admissions screening / ranking | HIGH-RISK Area 3 | Discrimination risk in applicant selection |
| Automated grading / essay scoring | HIGH-RISK Area 3 | Bias against non-native speakers |
| Student placement / level assessment | HIGH-RISK Area 3 | May entrench socioeconomic sorting |
| AI proctoring (exam monitoring) | HIGH-RISK Area 3 | Biometric data (Area 1) + possible Art. 5 prohibition |
| AI tutoring chatbot (no grading) | LIKELY NOT HIGH-RISK | Transparency obligations under Art. 50 |
| Plagiarism detection | DEBATABLE | High-risk if it directly influences grades |
| Administrative AI (scheduling) | MINIMAL RISK | No student access or outcome impact |

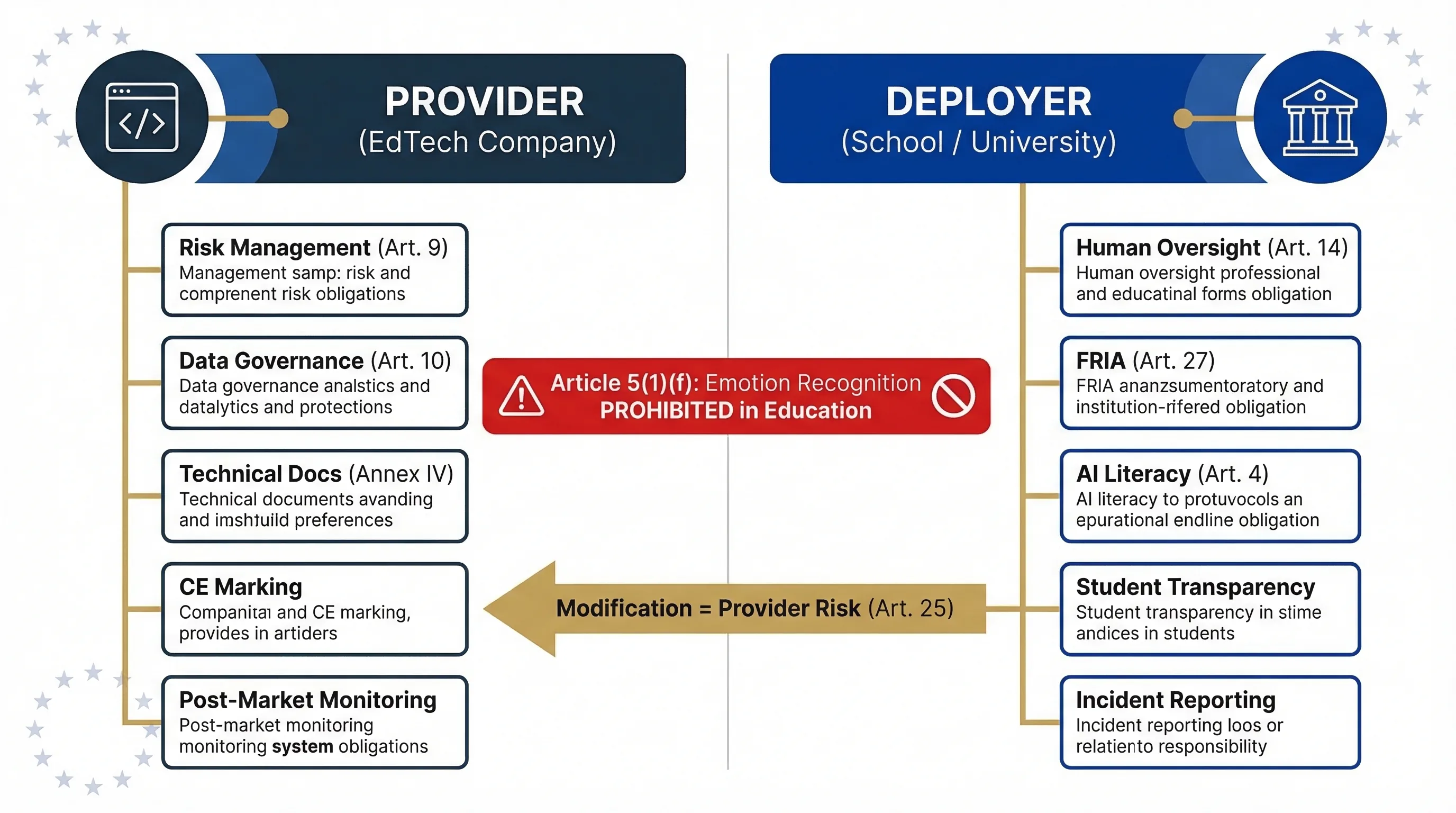

EdTech Company or Educational Institution: Who Has Which Obligations?

An EdTech company building an AI proctoring tool has completely different obligations from a university licensing that same tool. Getting this wrong means either missing provider obligations entirely or doing unnecessary work as a deployer. Which side of the line are you on?

EdTech Company Building the AI Tool

You're the provider. The full obligation stack applies: risk management system (Article 9), data governance (Article 10, particularly sensitive for student data), technical documentation (Article 11 and Annex IV), human oversight design (Article 14), accuracy and robustness (Article 15), conformity assessment (Article 43), CE marking (Article 48), EU database registration (Article 49), and post-market monitoring (Article 72). If your tool processes biometric data, such as facial recognition in proctoring, you also face Annex III Area 1 requirements. And if it uses emotion recognition in an educational setting, you're potentially crossing into prohibited territory under Article 5(1)(f).

University, School, or Training Provider Deploying the AI

You're the deployer. Article 26 obligations apply: use per provider instructions, human oversight (teachers and examiners reviewing AI assessments before they become final), monitoring, logging, and transparency to students. AI literacy for teaching staff is a separate duty under Article 4. Public educational institutions deploying high-risk AI must conduct a Fundamental Rights Impact Assessment (FRIA) under Article 27, and most schools and universities are public bodies. If you modify the vendor's tool (custom rubrics, fine-tuning on local data, changing intended purpose), you risk becoming a provider under Article 25.

Emotion Recognition Prohibition in Education

Article 5(1)(f) prohibits emotion recognition systems in education institutions, except where used for medical or safety reasons. The prohibition has been enforceable since February 2, 2025. AI proctoring that detects "suspicious behaviour" via facial expression analysis may cross this line. The boundary between "detecting prohibited behaviour" (permitted as high-risk under Annex III) and "emotion recognition" (prohibited under Article 5) is a live regulatory question. Conservative recommendation: audit your proctoring tool's features. If it analyses facial expressions, eye movement patterns as emotional signals, or "stress indicators", get legal advice before deploying in the EU.
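The feature audit recommended above can start as a simple triage over a vendor's feature list. The following is a minimal sketch, assuming you have collected feature descriptions as plain strings; the keyword list and the function name `flag_for_legal_review` are hypothetical, and a keyword match is only a prompt for legal review, not a finding that a feature is prohibited.

```python
# Terms drawn from the warning signs named in the text. The list is an
# assumption for illustration and is not an official or exhaustive test.
EMOTION_RELATED_TERMS = ("facial expression", "emotion", "stress")


def flag_for_legal_review(features):
    """Return the feature descriptions that mention an emotion-related term."""
    return [
        feature
        for feature in features
        if any(term in feature.lower() for term in EMOTION_RELATED_TERMS)
    ]


if __name__ == "__main__":
    # Hypothetical feature list for a proctoring tool.
    vendor_features = [
        "Face detection for identity check",
        "Facial expression analysis",
        "Stress indicator scoring",
        "Browser lockdown",
    ]
    for feature in flag_for_legal_review(vendor_features):
        print(f"Review before EU deployment: {feature}")
```

Substring matching will miss features described in other words, so treat an empty result as "nothing obvious found" rather than as clearance.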

| Role | Who | Key Obligations | Watch Out For |
|---|---|---|---|
| Provider | EdTech company, assessment platform developer | Art. 9–15, Annex IV documentation, conformity assessment, CE marking, post-market monitoring | Emotion recognition features in proctoring tools |
| Deployer | University, school, training provider | Art. 26: use per instructions, human oversight, monitoring, logging, transparency to students; Art. 27: FRIA (public bodies); Art. 4: AI literacy | Modifying vendor tools and inadvertently becoming a provider |
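The provider/deployer split can be captured as a first-pass screening rule. This is a minimal sketch under stated assumptions: the tool in question is already classified as high-risk, and every class, field, and function name below is hypothetical. It flags situations for review and does not decide legal status.

```python
from dataclasses import dataclass, field


@dataclass
class Organisation:
    name: str
    builds_tool: bool   # develops the AI system
    deploys_tool: bool  # uses the AI system under its own authority
    is_public_body: bool = False
    # Modifications the text names as risking provider status (Article 25).
    custom_rubrics: bool = False
    fine_tuned_on_local_data: bool = False
    changed_intended_purpose: bool = False


@dataclass
class RoleAssessment:
    role: str
    fria_required: bool      # Article 27, assuming a high-risk tool
    article_25_review: bool  # deployer modifications need a closer look
    modifications: list = field(default_factory=list)


def assess_role(org):
    """First-pass role screening; a prompt for review, not a legal conclusion."""
    if org.builds_tool:
        role = "provider"
    elif org.deploys_tool:
        role = "deployer"
    else:
        role = "unclear"
    candidates = [
        (org.custom_rubrics, "custom rubrics"),
        (org.fine_tuned_on_local_data, "fine-tuning on local data"),
        (org.changed_intended_purpose, "changed intended purpose"),
    ]
    modifications = [label for flag, label in candidates if flag]
    return RoleAssessment(
        role=role,
        fria_required=org.deploys_tool and org.is_public_body,
        article_25_review=role == "deployer" and bool(modifications),
        modifications=modifications,
    )


if __name__ == "__main__":
    university = Organisation(
        "Example University",
        builds_tool=False,
        deploys_tool=True,
        is_public_body=True,
        fine_tuned_on_local_data=True,
    )
    print(assess_role(university))
```

The sketch treats any listed modification as a reason to review Article 25 exposure rather than as an automatic switch to provider status, since the text describes these changes as a risk, not a certainty.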

Role Classification Tools

Deep dive: For the full deployer framework, see the High-Risk AI Deployer Guide.

Classification map: how education AI use cases map to Annex III Area 3 categories and the Article 5(1)(f) emotion recognition prohibition.

Student Data Under GDPR and the EU AI Act: Dual Compliance

Student data, especially for minors, receives heightened protection under GDPR. Where consent is the legal basis, GDPR Article 8 requires parental consent for information society services offered directly to children below the age of digital consent, which each member state sets between 13 and 16. AI processing of student biometric data in proctoring requires a GDPR Article 9(2) legal basis for special category data in addition to the AI Act classification assessment.

Data Protection Impact Assessments (DPIAs) under GDPR Article 35 are almost certainly required for AI proctoring and automated grading systems, because this is high-risk processing affecting a vulnerable group. The AI Act's data governance requirements under Article 10 add a further layer: training, validation, and testing data must be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete. For education, that raises specific questions. Does the training data represent diverse student populations? Are non-native speakers disadvantaged by language model assumptions in essay grading?

Education AI compliance means satisfying both GDPR student data protections and EU AI Act system safety requirements. The two overlap on transparency, human oversight, and data quality, but each adds requirements the other doesn't cover.

What Educational Institutions Should Do Now

Five steps. No theory. Start this week.

Audit all AI tools in academic use

Cover assessment platforms, proctoring systems, admissions tools, adaptive learning platforms, and plagiarism detectors. Ask each department what it uses. Shadow AI is common in education because departments often adopt tools without central IT approval. → Shadow AI Discovery Protocol

Classify each against Annex III Area 3

Does the tool determine access to education, evaluate learning outcomes, assess educational level, or monitor exam behaviour? If yes to any, it's likely high-risk. → EdTech Assessment Validator
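The four screening questions above translate directly into a checklist. Here is a minimal sketch, assuming you answer each question per tool during the audit; `ToolProfile`, `area3_triggers`, and the field names are hypothetical, and a positive result means "likely high-risk, confirm with counsel" rather than a final classification.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Area3Category(Enum):
    ACCESS = "determines access to education"
    OUTCOMES = "evaluates learning outcomes"
    LEVEL = "assesses appropriate level of education"
    EXAM_MONITORING = "monitors prohibited behaviour during exams"


@dataclass
class ToolProfile:
    # One yes/no answer per screening question; all names are illustrative.
    name: str
    determines_access: bool = False
    evaluates_outcomes: bool = False
    assesses_level: bool = False
    monitors_exams: bool = False


def area3_triggers(tool: ToolProfile) -> list[Area3Category]:
    """Return the Area 3 categories the tool appears to trigger."""
    checks = [
        (tool.determines_access, Area3Category.ACCESS),
        (tool.evaluates_outcomes, Area3Category.OUTCOMES),
        (tool.assesses_level, Area3Category.LEVEL),
        (tool.monitors_exams, Area3Category.EXAM_MONITORING),
    ]
    return [category for flag, category in checks if flag]


def is_likely_high_risk(tool: ToolProfile) -> bool:
    """A yes to any of the four questions makes the tool likely high-risk."""
    return bool(area3_triggers(tool))


if __name__ == "__main__":
    grader = ToolProfile("Essay grader", evaluates_outcomes=True)
    for category in area3_triggers(grader):
        print(f"{grader.name}: {category.value}")
```

Returning the list of triggered categories, not just a boolean, keeps a record of why a tool was flagged, which is useful when the inventory is reviewed later.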

Check proctoring tools for emotion recognition

If any proctoring tool analyses facial expressions or "stress" indicators, it may violate Article 5(1)(f). This has been prohibited since February 2, 2025. This is your highest-risk item. Get legal review.

Implement human oversight for automated grading

No AI-generated grade should become final without teacher or examiner review. Document the oversight arrangement, including who reviews, their competence, and how they override. → Human Oversight Log
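One way to enforce "no final grade without review" is to make the review record the only path to a final grade. The sketch below is an illustration under assumptions, not a description of the Human Oversight Log tool: the record fields mirror the items named above (who reviews, their competence, how they override), and all names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class OversightRecord:
    student_ref: str
    ai_grade: str
    final_grade: str
    reviewer: str
    reviewer_competence: str
    reviewed_at: datetime
    override_reason: Optional[str] = None

    @property
    def overridden(self) -> bool:
        """True when the reviewer changed the AI-generated grade."""
        return self.final_grade != self.ai_grade


def finalise_grade(student_ref, ai_grade, reviewer, reviewer_competence,
                   final_grade=None, override_reason=None):
    """Create the final grade only together with a documented human review."""
    if not reviewer:
        raise ValueError("A named reviewer is required before a grade is final")
    # With no replacement grade, the reviewer confirms the AI grade as-is.
    final = ai_grade if final_grade is None else final_grade
    if final != ai_grade and not override_reason:
        raise ValueError("An override needs a documented reason")
    return OversightRecord(
        student_ref=student_ref,
        ai_grade=ai_grade,
        final_grade=final,
        reviewer=reviewer,
        reviewer_competence=reviewer_competence,
        reviewed_at=datetime.now(timezone.utc),
        override_reason=override_reason,
    )


if __name__ == "__main__":
    record = finalise_grade("s-001", "B", "Dr. Example", "Subject examiner",
                            final_grade="A", override_reason="Rubric misapplied")
    print(record.overridden, record.final_grade)
```

Requiring a reason for every override keeps the log useful as evidence that oversight was meaningful and not a rubber stamp.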

Conduct FRIA (public institutions)

Public schools and universities deploying high-risk AI must complete a Fundamental Rights Impact Assessment under Article 27 before deployment. → FRIA Generator


All Education AI Compliance Tools

EdTech Assessment Validator: check education AI classification

Biometric Identity Validator: for proctoring with facial recognition

EU AI Act Compliance Checker: general system classification

Accidental Provider Classifier: check if modifications make you a provider

Deployer Self-Assessment: check your deployer duties

Shadow AI Discovery Protocol: find undisclosed AI tools in use

FRIA Generator: build your impact assessment

Human Oversight Log: track teacher override decisions

AI Literacy Planner: build a staff training programme


Disclaimer & Limitations

This guide is for educational and informational purposes only. It does not constitute legal or regulatory advice. EU AI Compass tools are educational aids, not certified compliance instruments. Consult qualified legal counsel before making compliance decisions. Move78 International Limited is not a law firm or authorised compliance service provider. All regulatory references are accurate as of the publication date based on eu-ai-rules-engine v2.4. The Digital Omnibus is a proposal, not enacted law.