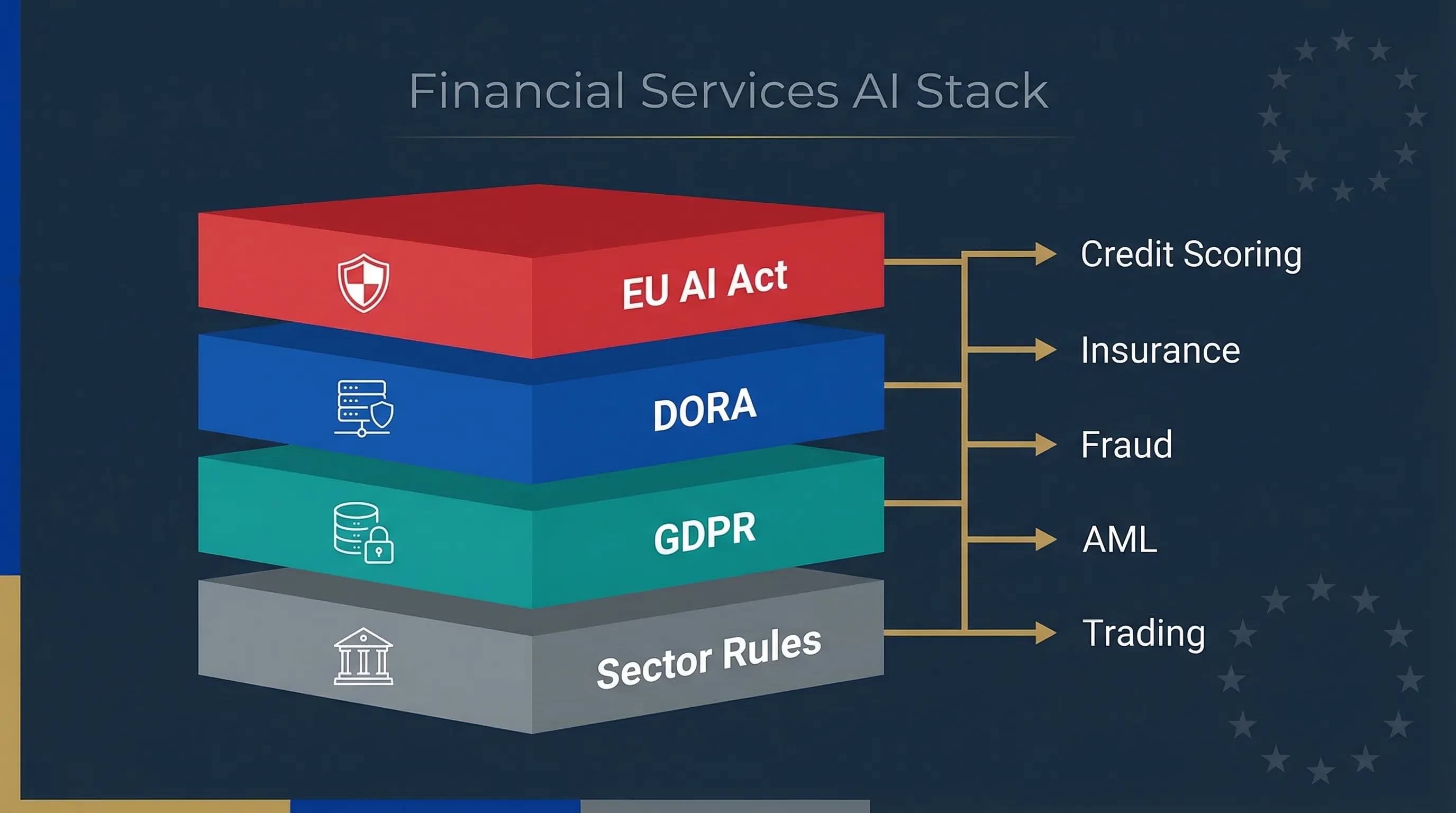

Four Regulatory Pillars You Must Align for AI in Finance

If you're running AI systems in a bank, insurer or fintech operating in the EU, you don't face one regulation. You face four, stacked on top of each other, each with its own enforcement body, penalty structure, and evidence expectations. Could your compliance team explain right now how these four interact for a single credit scoring model?

EU AI Act (Regulation 2024/1689)

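The EU AI Act classifies AI systems used to assess the creditworthiness of natural persons or establish their credit score as high-risk under Annex III(5)(b). It also classifies AI systems used for risk assessment and pricing in life and health insurance as high-risk under Annex III(5)(c). Providers of these systems must operate a risk management system under Article 9 and design the systems for effective human oversight under Article 14. Deployers must assign that oversight to staff with the necessary competence under Article 26 and, for Annex III(5)(b) and (c) systems, conduct a Fundamental Rights Impact Assessment (FRIA) under Article 27. These obligations apply from August 2, 2026. Penalties reach up to €35 million or 7% of global annual turnover for prohibited practices, and up to €15 million or 3% for breaches of high-risk obligations.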

DORA (Regulation 2022/2554)

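The Digital Operational Resilience Act has applied since January 17, 2025. It requires financial entities to maintain ICT risk management frameworks, report major ICT-related incidents, conduct digital operational resilience testing, and manage ICT third-party risk. If your AI runs on cloud infrastructure supplied by a provider designated as a critical ICT third-party provider (CTPP), that vendor falls under direct oversight by one of the ESAs acting as Lead Overseer. DORA leaves administrative penalties for financial entities to Member States (Article 50), so maximum fines vary by jurisdiction. CTPPs face periodic penalty payments of up to 1% of their average daily worldwide turnover (Article 35(8)).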

GDPR (Regulation 2016/679)

The General Data Protection Regulation has applied since May 25, 2018. For financial AI, the critical provisions are Article 22 (automated individual decision-making), Article 35 (Data Protection Impact Assessment for high-risk processing), and Articles 13–14 (transparency). Every AI system processing personal data to make or support decisions about creditworthiness, insurance pricing, or fraud status triggers GDPR obligations. Fines reach €20 million or 4% of global annual turnover.

Sector-Specific Rules

Beyond the three regulations above, financial AI must comply with sector-specific requirements: EBA Guidelines on internal governance, EIOPA guidance on algorithmic underwriting, ESMA rules on algorithmic trading (MiFID II), Consumer Credit Directive requirements for credit decisions, and AML directives governing transaction monitoring. The ECB's Opinion CON/2026/10 (March 13, 2026) on credit scoring classification adds a further advisory layer, though it isn't binding.

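| Regulation | Applies From | Max Penalty | Enforcing Body |
|---|---|---|---|
| EU AI Act | 2 Aug 2026 (Annex III) | €35M or 7% turnover (prohibited practices); €15M or 3% (high-risk obligations) | National Market Surveillance Authorities (financial supervisors for regulated financial institutions) |
| DORA | 17 Jan 2025 | Set by Member States; CTPPs: periodic penalties up to 1% of average daily worldwide turnover | National Competent Authorities; EBA / EIOPA / ESMA as Lead Overseers for CTPPs |
| GDPR | 25 May 2018 | €20M or 4% turnover | National Data Protection Authorities |
| MiFID II / CCD / AML | Various (in force) | Varies by jurisdiction | National Financial Regulators |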
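Where a cap reads "€X or Y% of turnover", the binding ceiling for an undertaking is whichever figure is higher, so it depends on the size of the institution. The following is a minimal sketch of that arithmetic using the caps in AI Act Article 99(3) and 99(4) and GDPR Article 83(5). The tier keys, the `max_fine_eur` helper and the example turnover are hypothetical. DORA is left out because penalties for financial entities are largely set in national law. The result is a statutory ceiling, not an estimate of what a regulator would actually impose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    """A fixed euro cap paired with a percentage-of-turnover cap."""
    source: str
    fixed_eur: int
    turnover_bps: int  # basis points of total worldwide annual turnover (700 = 7%)


# Statutory ceilings for undertakings. AI Act Art. 99(6) gives SMEs the LOWER
# of the two figures instead; that rule is not modelled in this sketch.
TIERS = {
    "ai_act_prohibited": PenaltyTier("EU AI Act Art. 99(3)", 35_000_000, 700),
    "ai_act_high_risk": PenaltyTier("EU AI Act Art. 99(4)", 15_000_000, 300),
    "gdpr_upper": PenaltyTier("GDPR Art. 83(5)", 20_000_000, 400),
}


def max_fine_eur(tier_key: str, worldwide_turnover_eur: int) -> int:
    """Theoretical maximum for an undertaking: whichever cap is higher."""
    tier = TIERS[tier_key]
    # Integer arithmetic avoids floating-point rounding on large turnovers.
    turnover_cap = worldwide_turnover_eur * tier.turnover_bps // 10_000
    return max(tier.fixed_eur, turnover_cap)


if __name__ == "__main__":
    turnover = 2_000_000_000  # hypothetical bank with EUR 2bn worldwide turnover
    for key, tier in TIERS.items():
        print(f"{tier.source}: EUR {max_fine_eur(key, turnover):,}")
```

For the hypothetical €2bn institution, the percentage caps dominate in every tier (€140M, €60M and €80M). The fixed amounts mainly bite for smaller firms.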

How Key Financial AI Use Cases Map Across the Stack

Most compliance teams I've worked with treat each regulation as a separate project. That's how you end up with three different risk assessments for the same credit scoring model, done by three different teams, none of whom talk to each other. The table below maps five common financial AI use cases against all four regulatory layers in a single view.

| Use Case | EU AI Act | DORA | GDPR | Sector Rules |
|---|---|---|---|---|
| Credit Scoring | HIGH-RISK Annex III(5)(b). FRIA required. Art. 9–15 apply. | ICT risk framework for model infrastructure. Incident reporting if a model failure is a major ICT incident. | DPIA required (Art. 35). Art. 22 ADM rights. Transparency under Art. 13–14. | Consumer Credit Directive. EBA model risk guidelines. |
| Insurance Underwriting | HIGH-RISK Annex III(5)(c) for risk assessment and pricing in life and health insurance. FRIA required. | Third-party AI vendor oversight. Resilience testing of pricing engine. | DPIA for profiling. Legitimate interest or consent basis. Special category data rules. | EIOPA guidance on algorithmic underwriting. Solvency II. |
| Fraud Detection | CASE-BY-CASE Systems used to detect financial fraud are expressly excluded from Annex III(5)(b). Reassess if outputs also drive credit decisions. | Mandatory incident reporting for major ICT failures. Business continuity testing. | DPIA likely required. Profiling rules. Transparency to affected individuals. | PSD2 strong customer authentication. AML Directive monitoring. |
| AML / Sanctions Screening | CASE-BY-CASE Not explicitly listed in Annex III. May qualify if it determines access to services. | ICT dependency mapping. Incident reporting for screening system failure. | DPIA recommended. Automated processing of personal data for compliance purposes. | 6th AML Directive. National FIU reporting requirements. |
| Algorithmic Trading | NOT ANNEX III Not currently listed. Document governance anyway. | Full ICT risk framework. Threat-led penetration testing (TLPT) where required. Third-party oversight. | Limited personal data involvement. May apply if client profiling is embedded. | MiFID II algorithmic trading rules. ESMA guidelines on market abuse. |
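One way to stop three teams producing three assessments is to derive the in-scope regimes from a single inventory entry. The sketch below encodes the use-case table as data. The `USE_CASES` keys, the `regimes_in_scope` helper and the shortened sector-rule labels are hypothetical. A CASE-BY-CASE status still needs a legal assessment rather than a lookup.

```python
from dataclasses import dataclass
from enum import Enum


class AIActStatus(Enum):
    HIGH_RISK = "high-risk"
    CASE_BY_CASE = "case-by-case"
    NOT_ANNEX_III = "not listed in Annex III"


@dataclass(frozen=True)
class UseCaseProfile:
    ai_act: AIActStatus
    sector_rules: tuple[str, ...]


# Illustrative encoding of the use-case table; keys and labels are hypothetical.
# Insurance underwriting is high-risk only for life and health insurance (Annex III(5)(c)).
USE_CASES = {
    "credit_scoring": UseCaseProfile(AIActStatus.HIGH_RISK, ("Consumer Credit Directive", "EBA model risk guidelines")),
    "insurance_underwriting": UseCaseProfile(AIActStatus.HIGH_RISK, ("EIOPA guidance", "Solvency II")),
    "fraud_detection": UseCaseProfile(AIActStatus.CASE_BY_CASE, ("PSD2", "AML Directive")),
    "aml_screening": UseCaseProfile(AIActStatus.CASE_BY_CASE, ("6th AML Directive", "FIU reporting")),
    "algorithmic_trading": UseCaseProfile(AIActStatus.NOT_ANNEX_III, ("MiFID II", "ESMA guidelines")),
}


def regimes_in_scope(use_case: str, processes_personal_data: bool) -> list[str]:
    """Return every regulatory layer one AI system should be assessed against."""
    profile = USE_CASES[use_case]
    regimes = [f"EU AI Act ({profile.ai_act.value})"]
    # DORA is included for every row because each use case runs on a financial
    # entity's ICT systems; it attaches to the entity, not the model category.
    regimes.append("DORA")
    if processes_personal_data:
        regimes.append("GDPR")
    regimes.extend(profile.sector_rules)
    return regimes


if __name__ == "__main__":
    print(regimes_in_scope("credit_scoring", processes_personal_data=True))
```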

High-Risk AI in Finance: Evidence Expectations Beyond Policy Slides

Here's the blunt truth: a PowerPoint deck describing your "AI governance framework" won't survive regulatory scrutiny. Financial regulators have decades of experience auditing model risk. They'll want operational evidence, not strategy slides.

Risk Registers and Impact Assessments

For every high-risk AI system, you'll need a risk register documenting identified risks, mitigation measures, residual risk levels, and review dates. The EU AI Act requires this under Article 9. GDPR requires a DPIA under Article 35. And DORA requires ICT risk assessment as part of the broader resilience framework. The practical question is whether you run three separate assessments or build one integrated workflow that satisfies all three. I'd strongly recommend the latter.
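Here is a minimal sketch of the integrated approach. It assumes a single register where each risk is recorded once and tagged with every assessment it feeds. The `RiskEntry` schema, the three-level scale and the regime tags are illustrative choices. None of the AI Act, GDPR or DORA prescribes this format.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class RiskEntry:
    """One risk recorded once, tagged with every assessment it feeds (hypothetical schema)."""
    system: str
    description: str
    mitigation: str
    inherent: Level
    residual: Level
    review_date: date
    regimes: set[str] = field(default_factory=set)  # e.g. {"AI Act Art. 9", "GDPR Art. 35"}


def validate(entry: RiskEntry, today: date) -> list[str]:
    """Return basic quality problems a reviewer would expect to see fixed."""
    problems = []
    if entry.residual > entry.inherent:
        problems.append("residual risk exceeds inherent risk")
    if not entry.regimes:
        problems.append("not linked to any regulatory assessment")
    if entry.review_date < today:
        problems.append("review overdue")
    return problems


def view_for(register: list[RiskEntry], regime: str) -> list[RiskEntry]:
    """Filter the single register into the view one regulator expects to see."""
    return [entry for entry in register if regime in entry.regimes]


if __name__ == "__main__":
    register = [
        RiskEntry(
            system="retail-credit-score",  # hypothetical system name
            description="Proxy discrimination via postcode feature",
            mitigation="Remove feature; quarterly fairness testing",
            inherent=Level.HIGH,
            residual=Level.MEDIUM,
            review_date=date(2026, 12, 31),
            regimes={"AI Act Art. 9", "GDPR Art. 35"},
        ),
    ]
    print(len(view_for(register, "GDPR Art. 35")), validate(register[0], date(2026, 6, 1)))
```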

Technical Documentation (Articles 10–13)

The AI Act's documentation requirements under Articles 11 and 12 overlap substantially with what EBA and EIOPA already expect for model documentation. You need records of training data governance (Article 10), system architecture and design choices (Article 11), automatic logging capabilities (Article 12), and transparency information for deployers (Article 13). Financial institutions that already maintain model inventory documentation under SR 11-7 or equivalent will find perhaps 60–70% of the AI Act requirements already covered.
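Article 12 requires automatic recording of events, but it only fixes a minimum field list for the remote biometric identification systems covered by Article 12(3). The sketch below assumes a JSON-lines decision log that records a model version, an input hash and the human reviewer. The `log_decision` function and every field name are hypothetical. Hashing the inputs avoids duplicating personal data in the log. However, a hash of low-entropy personal data is not anonymisation, so the log stays in GDPR scope.

```python
import hashlib
import io
import json
from datetime import datetime, timezone
from typing import Optional, TextIO


def log_decision(
    stream: TextIO,
    model_id: str,
    model_version: str,
    features: dict,
    score: float,
    decision: str,
    reviewer: Optional[str] = None,
) -> dict:
    """Append one decision event as a JSON line (hypothetical schema)."""
    # Canonical JSON so the same inputs always produce the same hash.
    canonical = json.dumps(features, sort_keys=True, separators=(",", ":"))
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "model_version": model_version,
        # Ties the event to a stored input snapshot without copying personal
        # data into the log. This is NOT anonymisation.
        "input_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "score": score,
        "decision": decision,
        "human_reviewer": reviewer,
    }
    stream.write(json.dumps(record) + "\n")
    return record


if __name__ == "__main__":
    buf = io.StringIO()  # stand-in for an append-only log sink
    log_decision(buf, "retail-credit-score", "3.1.0",
                 {"income": 42000, "tenure_months": 18}, 0.71, "refer", reviewer="analyst-17")
    print(buf.getvalue())
```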

Governance Committees and Model Risk Oversight

The AI Act doesn't prescribe specific committee structures. However, Article 26(2) requires deployers to assign human oversight to people with the necessary competence, training and authority, and Article 4 requires a sufficient level of AI literacy among staff. DORA adds that the management body bears ultimate responsibility for ICT risk (Article 5). Taken together, this means your board or executive committee can't delegate AI governance to a single compliance officer. You need a defined escalation path with documented terms of reference and meeting records.

Governance framework mapping: how a single AI risk management system covers EU AI Act, DORA, GDPR and sector-specific requirements.

When AI Problems in Finance Become Regulatory Incidents

A credit scoring model that systematically misprices risk for a particular demographic isn't just a model performance issue. It's potentially a GDPR complaint over unfair automated decisions, an AI Act Article 73 serious incident report, a DORA major ICT incident report, and a prudential supervisory concern, all from the same root cause. Does your incident response playbook cover all four channels?
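Routing can be made explicit at triage so that no channel is missed. The sketch below assumes your teams record six yes/no judgements per incident. The `Incident` fields and channel strings are hypothetical, and each flag still requires a legal or risk assessment. Article references follow the final AI Act text, which places serious-incident reporting in Article 73.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Incident:
    """Triage facts; each flag is a judgement your teams record (hypothetical fields)."""
    dora_major: bool               # meets DORA major ICT-related incident criteria
    ai_act_high_risk: bool         # system is high-risk under Annex III
    ai_act_serious: bool           # meets the serious-incident definition in Art. 3(49)
    personal_data_breach: bool     # a GDPR personal data breach (Art. 4(12))
    automated_decisions_hit: bool  # Art. 22 decisions affected individuals
    prudential_impact: bool        # capital, provisioning or conduct impact


def notification_channels(inc: Incident) -> list[str]:
    """Map one root cause to every regulatory workflow it may open."""
    channels = []
    if inc.dora_major:
        channels.append("DORA Art. 19: report to competent authority")
    if inc.ai_act_high_risk and inc.ai_act_serious:
        # Providers report; deployers must inform the provider (Art. 26(5)).
        channels.append("AI Act Art. 73: serious incident report")
    if inc.personal_data_breach:
        # Art. 33 applies unless the breach is unlikely to result in a risk.
        channels.append("GDPR Art. 33: notify data protection authority")
    if inc.automated_decisions_hit:
        channels.append("GDPR Art. 22: human review of contested decisions")
    if inc.prudential_impact:
        channels.append("Prudential supervisor: escalate via supervisory contact")
    return channels


if __name__ == "__main__":
    biased_model = Incident(False, True, True, False, True, True)
    for channel in notification_channels(biased_model):
        print(channel)
```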

Example Scenarios

Scenario 1: Systemic Scoring Model Failure

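Your credit scoring model receives corrupted input data from a third-party provider, causing mass mispricing for 48 hours. Under DORA, if the incident meets the major-incident classification criteria, you must send an initial notification to your NCA within 4 hours of classifying it as major and no later than 24 hours after becoming aware of it. Under the AI Act, because the system is high-risk, the event may also be a serious incident under Article 73 if it meets the definition in Article 3(49). In that case the provider reports it, and as deployer you must inform the provider. Under GDPR, affected individuals may exercise their Article 22 rights to contest the automated decisions. The scoring model failure is one event, but it can open three separate regulatory workflows.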
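The deadlines in this scenario run on different clocks. The following is a minimal sketch of the outer limits. It assumes the initial-notification timing in the Commission's technical standards on DORA incident reporting (4 hours from classification as major, no later than 24 hours from awareness). It also assumes the AI Act Article 73 limits: 15 days by default, 10 days for a death, and 2 days for a widespread infringement or critical-infrastructure disruption. The function names are hypothetical, weekend and entity-specific relief is ignored, and both regimes expect reporting as soon as possible rather than at the last moment.

```python
from datetime import datetime, timedelta, timezone


def dora_initial_deadline(aware_at: datetime, classified_major_at: datetime) -> datetime:
    """DORA initial notification: 4h after classification as major, capped at 24h after awareness."""
    return min(classified_major_at + timedelta(hours=4), aware_at + timedelta(hours=24))


def ai_act_serious_incident_deadline(
    aware_at: datetime, *, widespread_or_critical_infra: bool = False, death: bool = False
) -> datetime:
    """AI Act Art. 73 outer limit; the primary duty is to report immediately."""
    days = 15                    # Art. 73(2) default
    if death:
        days = min(days, 10)     # Art. 73(4)
    if widespread_or_critical_infra:
        days = min(days, 2)      # Art. 73(3)
    return aware_at + timedelta(days=days)


if __name__ == "__main__":
    aware = datetime(2026, 9, 1, 8, 0, tzinfo=timezone.utc)  # hypothetical detection time
    classified = aware + timedelta(hours=21)                  # classified as major late
    print("DORA initial notification due:", dora_initial_deadline(aware, classified))
    print("AI Act serious incident report due:", ai_act_serious_incident_deadline(aware))
```

In the example, the late classification means the 24-hour backstop, not the 4-hour window, sets the DORA deadline.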

Scenario 2: AI-Driven Fraud System Misclassification

A fraud detection model flags thousands of legitimate transactions as suspicious, freezing customer accounts. Under DORA, the operational disruption to customers triggers incident reporting if it meets the major-incident classification criteria. Under GDPR, automated decisions that significantly affect individuals require transparency and the right to contest. Under the AI Act, systems used to detect financial fraud are excluded from Annex III(5)(b). However, if the model in practice also determines creditworthiness, it may be high-risk after all, bringing post-market monitoring obligations under Article 72.

Related guides: For deployer obligations in detail, see the High-Risk AI Deployer Guide. For a deeper look at incident reporting across frameworks, see Pillar 7: Financial Services AI.

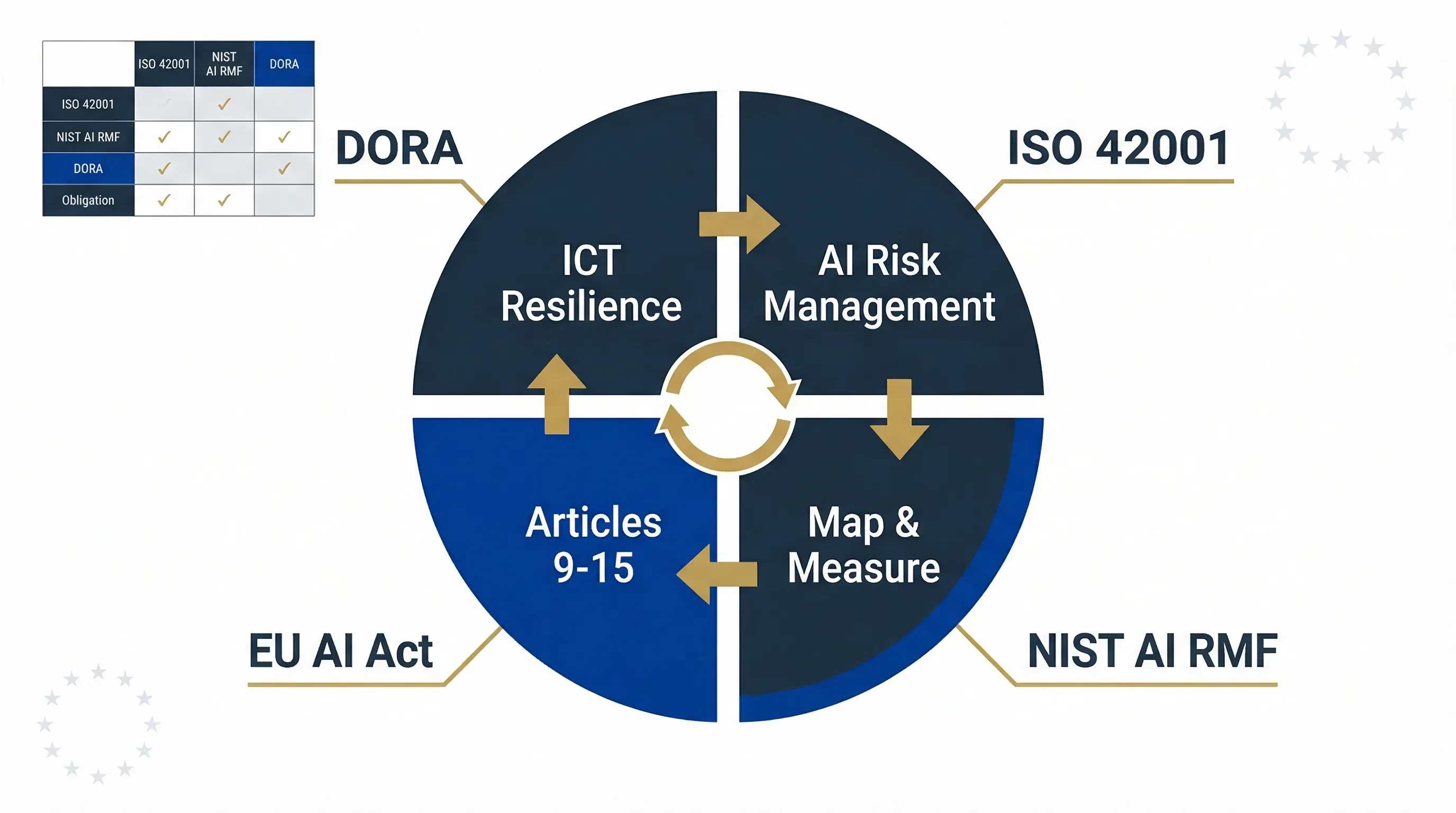

Building an AI Governance Framework for Financial Services

Financial institutions that already operate ISO 27001 information security management systems have a structural advantage. ISO 42001 (AI Management Systems) shares the same harmonized management-system structure and the Plan-Do-Check-Act cycle your ISMS team already understands. The NIST AI RMF's Govern, Map, Measure and Manage functions can be aligned with that cycle. The challenge isn't building governance from scratch; it's extending what you have to cover AI-specific risks without creating a parallel bureaucracy.

How Frameworks Map to Financial AI Obligations

| Obligation Area | ISO 42001 | NIST AI RMF | EU AI Act Article | DORA Requirement |
|---|---|---|---|---|
| Risk Management | Clause 6.1 (Risk assessment) | Map & Measure functions | Article 9 | Art. 6 (ICT risk framework) |
| Data Governance | Annex B (Data management) | Map function (data provenance) | Article 10 | Art. 9 (data integrity) |
| Documentation | Clause 7.5 (Documented info) | Govern function (documentation) | Articles 11–12 | Art. 6(8) (documentation) |
| Incident Response | Clause 10 (Improvement) | Manage function | Article 73 | Art. 19 (incident reporting) |
| Human Oversight | Annex B (Human involvement) | Govern function (roles) | Article 14 | Art. 5 (management body) |
| Third-Party Management | Annex B (Supply chain) | Govern & Map functions | Article 25 (value chain) | Art. 28–30 (ICT third parties) |
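If each control record cites the frameworks it serves, the mapping table turns into a gap check. The sketch below encodes the table rows as a dictionary. The area keys and the `missing_citations` helper are hypothetical, and the clause labels are abbreviated. The AI Act incident row uses Article 73, where the final text places serious-incident reporting.

```python
# Illustrative encoding of the framework mapping table; area keys are hypothetical.
CROSSWALK = {
    "risk_management": {"ISO 42001": "Clause 6.1", "NIST AI RMF": "Map, Measure",
                        "EU AI Act": "Art. 9", "DORA": "Art. 6"},
    "data_governance": {"ISO 42001": "Annex B (data)", "NIST AI RMF": "Map",
                        "EU AI Act": "Art. 10", "DORA": "Art. 9"},
    "documentation": {"ISO 42001": "Clause 7.5", "NIST AI RMF": "Govern",
                      "EU AI Act": "Arts. 11-12", "DORA": "Art. 6"},
    "incident_response": {"ISO 42001": "Clause 10", "NIST AI RMF": "Manage",
                          "EU AI Act": "Art. 73", "DORA": "Art. 19"},
    "human_oversight": {"ISO 42001": "Annex B (human involvement)", "NIST AI RMF": "Govern",
                        "EU AI Act": "Art. 14", "DORA": "Art. 5"},
    "third_party": {"ISO 42001": "Annex B (supply chain)", "NIST AI RMF": "Govern, Map",
                    "EU AI Act": "Art. 25", "DORA": "Arts. 28-30"},
}


def missing_citations(area: str, cited_frameworks: set[str]) -> dict[str, str]:
    """Frameworks a control record for `area` does not yet cite, with the clause to add."""
    return {fw: ref for fw, ref in CROSSWALK[area].items() if fw not in cited_frameworks}


if __name__ == "__main__":
    # A hypothetical incident-response procedure that only cites DORA so far.
    print(missing_citations("incident_response", {"DORA"}))
```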

Deep dive: For the full framework-by-framework comparison, see the ISO 42001 / NIST AI RMF / EU AI Act Mapping Guide.

Which EU AI Compass Tools to Use for Each Financial AI Use Case

Every tool on EU AI Compass runs entirely in your browser. Nothing is sent to a server. That's not a marketing claim — it's an architectural decision specifically designed for financial institutions that can't push compliance data to third-party APIs. Here's what to use for each use case.

| Use Case | Tool(s) | Purpose |
|---|---|---|
| Credit Scoring | Fraud vs Credit Delimiter, Deployer Obligations | Clarify Annex III scope. Check deployer duties. |
| Insurance Underwriting | Insurance Underwriting Assessor | Check obligations and evidence requirements. |
| Fraud / AML AI | Fraud vs Credit Delimiter, AI Risk Register | Distinguish high-risk from exemptions. Track risks. |
| Trading Models | AI Risk Register | Document governance even if not Annex III. |
| All Use Cases | FRIA + DPIA Wizard, AI Incident Log | Combined impact assessment and incident tracking. |

12–18 Month Roadmap: Aligning the Full Regulatory Stack

DORA is already enforceable. GDPR has been enforceable for years. The only element still approaching is the EU AI Act's Annex III high-risk deadline on August 2, 2026. That gives you roughly five months from the date of this guide. If you haven't started your AI inventory yet, that timeline is tight. Here's a phased approach.

Phase 1: Months 0–3 (Inventory and Baseline)

Actions: Complete AI system inventory. Map each system to Annex III risk classes. Identify DORA ICT dependencies for each AI system. Assess GDPR legal basis for each processing activity. Establish cross-regulatory governance committee with terms of reference.

Tools: Fraud vs Credit Delimiter, Insurance Underwriting Assessor, AI Risk Register
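The Phase 1 actions amount to a completeness check on each inventory record. The following is a minimal sketch that assumes a hypothetical `InventoryRecord` schema. `None` means "not yet assessed", which the sketch keeps distinct from an assessed "no".

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InventoryRecord:
    """One AI system in the Phase 1 inventory (hypothetical schema)."""
    name: str
    annex_iii_class: Optional[str] = None  # e.g. "5(b)", or "not listed" once assessed
    ict_dependencies: list[str] = field(default_factory=list)  # links to DORA ICT assets
    processes_personal_data: Optional[bool] = None
    gdpr_legal_basis: Optional[str] = None  # e.g. "Art. 6(1)(b) contract"


def baseline_gaps(rec: InventoryRecord) -> list[str]:
    """List the Phase 1 baseline items still missing for one system."""
    gaps = []
    if rec.annex_iii_class is None:
        gaps.append("Annex III classification not assessed")
    if not rec.ict_dependencies:
        gaps.append("DORA ICT dependencies not mapped")
    if rec.processes_personal_data is None:
        gaps.append("personal data use not assessed")
    elif rec.processes_personal_data and not rec.gdpr_legal_basis:
        gaps.append("GDPR legal basis missing")
    return gaps


if __name__ == "__main__":
    inventory = [
        InventoryRecord("retail-credit-score", annex_iii_class="5(b)",
                        ict_dependencies=["cloud-ml-platform"], processes_personal_data=True),
        InventoryRecord("trade-signal-model"),
    ]
    for rec in inventory:
        print(rec.name, baseline_gaps(rec))
```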

Phase 2: Months 3–9 (Evidence and Process)

Actions: Run combined FRIA+DPIA for every high-risk system. Document technical specifications under Articles 10–13. Integrate AI incident scenarios into DORA incident response playbook. Update DORA registers of information to include AI vendor dependencies. Conduct first round of AI-specific operational resilience testing.
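Article 27(4) of the AI Act lets the FRIA complement an existing DPIA rather than duplicate it. The sketch below paraphrases the FRIA elements in Article 27(1) and the DPIA elements in GDPR Article 35(7), then checks that a combined template covers every one. The section names and the grouping are a hypothetical design, not a regulator-approved form.

```python
# Paraphrased statutory elements; check the official texts before relying on them.
FRIA_ELEMENTS = {
    "AI Act 27(1)(a)": "Deployer processes in which the system is used, per its intended purpose",
    "AI Act 27(1)(b)": "Period of time and frequency of intended use",
    "AI Act 27(1)(c)": "Categories of natural persons and groups likely to be affected",
    "AI Act 27(1)(d)": "Specific risks of harm to those persons or groups",
    "AI Act 27(1)(e)": "Implementation of human oversight measures",
    "AI Act 27(1)(f)": "Measures if risks materialise, incl. internal governance and complaints",
}
DPIA_ELEMENTS = {
    "GDPR 35(7)(a)": "Systematic description of the processing and its purposes",
    "GDPR 35(7)(b)": "Necessity and proportionality of the processing",
    "GDPR 35(7)(c)": "Risks to the rights and freedoms of data subjects",
    "GDPR 35(7)(d)": "Measures envisaged to address those risks",
}

# Hypothetical combined template: one section can answer several elements at once.
COMBINED_SECTIONS = {
    "1. Context and purpose": ["AI Act 27(1)(a)", "AI Act 27(1)(b)", "GDPR 35(7)(a)"],
    "2. Affected people": ["AI Act 27(1)(c)"],
    "3. Necessity and proportionality": ["GDPR 35(7)(b)"],
    "4. Risks to rights": ["AI Act 27(1)(d)", "GDPR 35(7)(c)"],
    "5. Oversight and mitigation": ["AI Act 27(1)(e)", "AI Act 27(1)(f)", "GDPR 35(7)(d)"],
}


def unmapped_elements() -> list[str]:
    """Statutory elements that no combined section answers yet."""
    covered = {ref for refs in COMBINED_SECTIONS.values() for ref in refs}
    return sorted((FRIA_ELEMENTS.keys() | DPIA_ELEMENTS.keys()) - covered)


def render_template() -> str:
    """Plain-text questionnaire skeleton showing the source of each prompt."""
    lines = []
    for section, refs in COMBINED_SECTIONS.items():
        lines.append(section)
        for ref in refs:
            prompt = FRIA_ELEMENTS.get(ref) or DPIA_ELEMENTS[ref]
            lines.append(f"  [{ref}] {prompt}")
    return "\n".join(lines)


if __name__ == "__main__":
    assert not unmapped_elements(), unmapped_elements()
    print(render_template())
```

Five sections cover ten statutory elements, and the reference tag on each prompt keeps the audit trail back to both laws.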

Phase 3: Months 9–18 (Sustain and Audit)

Actions: Embed AI governance into BAU risk reporting. Run annual FRIA reviews. Update DORA registers quarterly. Conduct periodic AI model validation and performance monitoring. Prepare for potential supervisory examination. Track Digital Omnibus adoption status for any Annex III changes.
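The Phase 3 cadences translate into simple due-date arithmetic. The sketch below assumes the roadmap's intervals: 12 months for FRIA reviews and 3 months for DORA register updates. These are this guide's suggestions rather than statutory minimums. `add_months`, `next_due` and `overdue` are hypothetical helpers that clamp to month-end.

```python
import calendar
from datetime import date

# Review intervals suggested in the Phase 3 roadmap, not statutory minimums.
CADENCE_MONTHS = {
    "FRIA review": 12,
    "DORA register of information update": 3,
}


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def next_due(last_done: date, activity: str) -> date:
    return add_months(last_done, CADENCE_MONTHS[activity])


def overdue(last_done: dict[str, date], today: date) -> list[str]:
    """Activities whose next due date has already passed."""
    return sorted(a for a, d in last_done.items() if next_due(d, a) < today)


if __name__ == "__main__":
    history = {"FRIA review": date(2026, 8, 2),
               "DORA register of information update": date(2026, 9, 30)}
    for activity, done in history.items():
        print(activity, "next due", next_due(done, activity))
    print("Overdue on 2027-01-15:", overdue(history, date(2027, 1, 15)))
```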

Broader governance support: Visit Move78 International for broader cross-framework implementation information.

FAQ: AI Regulation Stack for Financial Services

Is credit scoring always high-risk under the EU AI Act?

Does DORA apply to our AI vendor as a critical third party?

Do we need separate DPIA and FRIA for every AI model?

How do we satisfy both AI Act and EBA model risk guidance?

What fines apply for non-compliance with AI Act, DORA and GDPR?

Does the EU AI Act apply to fraud detection systems in banks?

When do these regulations apply to financial institutions?

All Related EU AI Compass Tools

Fraud vs Credit Scoring Delimiter: Clarify Annex III(5)(b) scope

Insurance Underwriting Assessor: Check underwriting obligations

AI Risk Register: Track and manage AI risks

FRIA + DPIA Wizard: Combined impact assessment

Deployer Obligations Assessment: Deployer duties checklist

AI Incident Log: Log and track AI incidents

Need Broader AI Governance Support?

EU AI Compass focuses on free EU AI Act tools and guides. For broader cross-framework support covering governance frameworks and implementation approaches, visit Move78 International.

Disclaimer & Limitations

This guide is for educational and informational purposes only. It does not constitute legal, financial, or regulatory advice. EU AI Compass tools are educational aids, not certified compliance instruments. Consult qualified legal counsel before making compliance decisions. Move78 International Limited is not a law firm, regulated financial advisor, or authorised compliance service provider. All regulatory references are accurate as of the publication date based on eu-ai-rules-engine v2.4. Regulations may change. The Digital Omnibus is a proposal, not enacted law.